ASBIS Tackles Regional IT Challenges with Smart Solutions

At the forefront of IT infrastructure advancements, ASBIS has been making significant strides in providing high-performance solutions, particularly in the transition from traditional hard drives to solid-state drives (SSDs). This shift, driven by the growing demand for speed, reliability and energy efficiency, has seen ASBIS collaborate closely with Solidigm, a leader in the SSD industry, to deliver cutting-edge storage solutions. Eduards Lazdins, business development manager at ASBIS, shared insights into the company’s approach during a recent discussion with Steve Gutierrez, director of sales at Solidigm, and TechArena.

ASBIS, a value-added distributor and solution provider, has an expansive footprint that spans 28 countries, with over 20,000 active customers across 60 nations. Its reach is impressive, but it’s the company’s ability to address the unique needs of diverse markets that really stands out.

ASBIS serves a mix of established and emerging markets, each with its own opportunities and challenges for IT infrastructure development. While Western Europe leads in cloud adoption and AI-driven workloads, regions like Central and Eastern Europe, the Caucasus and parts of the Middle East and Africa are catching up rapidly. The challenge is striking the right balance between affordability and performance. This is where ASBIS excels: it offers high-performance solutions at scalable price points, making cutting-edge technology accessible to a wide range of customers.

ASBIS’ approach to staying ahead in the competitive IT landscape involves constantly expanding its product portfolio and geographical reach. The company is not just a traditional distributor — it operates its own server assembly line in the European Union, with the capacity to produce thousands of servers per month. This vertical integration, combined with strong partnerships with leading brands, such as Solidigm, enables ASBIS to deliver tailored solutions that meet the specific needs of its diverse customer base.

A key focus of ASBIS’ strategy has been the transition to SSDs, and it has leveraged its collaboration with Solidigm to accelerate this shift. SSDs provide significant advantages over traditional hard drives, such as faster speeds, improved reliability and lower power consumption. As businesses increasingly turn to SSDs for their storage needs, ASBIS is helping customers adopt this next-generation technology with specialized services, including pre-sales consultancy, robotic solutions, technical support and custom solutions.

One example of ASBIS’ impact is its work with cloud service providers and data centers. By integrating Solidigm’s SSDs into their infrastructure, ASBIS has helped optimize storage solutions, significantly reducing latency and improving workload efficiency. For industries handling vast amounts of data, these improvements in speed and reliability are essential. ASBIS’ solutions have not only enhanced performance, but also minimized power consumption, a critical factor for organizations aiming to reduce operational costs and carbon footprints.

What’s the TechArena take? In a world where technology is rapidly advancing, ASBIS’ commitment to providing innovative, high-performance solutions is a prime example of how the right partnerships and a focus on customer needs can drive real-world results. Looking ahead, ASBIS is well-positioned to continue leading the charge in IT infrastructure solutions, offering both the expertise and technology to meet the evolving needs of its customers.

To learn more about ASBIS’ products and solutions, visit ASBIS.com, or follow the company on LinkedIn and X for the latest updates.

Supermicro Powers AI Innovation at CloudFest 2025

At CloudFest 2025, Supermicro showcased innovations that are driving the future of AI, cloud infrastructure and storage. As AI technology continues to evolve, Supermicro’s ability to deliver cutting-edge hardware has become a game-changer, and its booth at CloudFest served as a testament to that progress. Solidigm’s Hayley Corell spoke with Thomas Jorgensen, senior director in the Technology Enabling Group at Supermicro, to dive deeper into how Supermicro is powering AI advancements and meeting the growing demands of modern infrastructure.

Thomas highlighted the rapid growth in AI, noting that the demand for powerful AI infrastructure is being driven by large-scale model training and, increasingly, AI inferencing. But what's crucial for this advancement? A central element of the answer is storage. Supermicro understands that AI models require fast, reliable storage to keep GPUs from idling, ensuring that the entire infrastructure is working in concert to deliver results as quickly as possible. As Thomas bluntly put it, “AI doesn't work without storage,” and Supermicro is delivering solutions to meet this growing demand.

Over the past few years, AI’s exponential growth has shifted the way companies approach infrastructure. As Supermicro and the rest of the industry ride the wave from training-centric infrastructure demand to a demand curve that also reflects inference, new kinds of infrastructure are required for a wider range of environments. As AI is increasingly deployed in edge environments, Supermicro is positioning itself at the forefront, enabling AI at the edge with small, fanless servers that run inference directly where data is generated. This localized approach reduces latency and speeds up data processing, helping AI workloads perform seamlessly.

A key part of Supermicro’s success lies in its commitment to delivering high-performance, low-latency infrastructure. Thomas discussed how AI clusters require not only powerful GPUs, but also fast network communication and efficient storage systems. The infrastructure design has evolved significantly to meet the demands of AI, particularly with the rise of high-density petascale storage solutions. Supermicro’s focus on providing multi-tiered storage setups ensures that data is delivered at optimal speeds for any given AI workload, enabling seamless performance across AI applications.

The collaboration between Solidigm and Supermicro has been crucial in driving these advancements, particularly in the realm of high-speed storage. Solidigm’s cutting-edge storage solutions, such as their high-capacity SSDs, perfectly complement Supermicro’s AI infrastructure. By combining Solidigm’s innovative storage technology with Supermicro’s powerful hardware, they deliver the performance and reliability required to handle the intense data demands of AI workloads.

This collaboration helps ensure that AI models can access and process data quickly, making it an essential part of AI-driven infrastructure.

Supermicro's petascale storage supports SSDs of up to 122 terabytes (TB) each. This massive capacity allows AI workloads to scale up and manage vast amounts of data with ease.

So, what’s the TechArena take? For on-prem AI deployments, tapping large volumes of data locally for AI integration across business functions is becoming increasingly critical, especially as many businesses shift away from the cloud due to rising costs and data privacy concerns. Supermicro’s petascale storage delivers the speed and bandwidth needed to support the growing demands of AI models, ensuring that organizations can keep up with both the scale and complexity of modern AI workloads right from their own data centers. Solidigm’s leading 122 TB drives are a perfect match for these large-scale deployments.

For those looking to learn more, Supermicro offers an abundance of resources on their website (supermicro.com) and social media channels (X, LinkedIn and YouTube).

Tech Innovations Help Consumers Say Goodbye to EV Range Anxiety

Financial incentives and future legislation are pushing EV adoption, but advanced power electronics and battery technologies are crucial to addressing range anxiety and driving broader acceptance.

Range anxiety, the feeling of concern over the distance an electric vehicle (EV) can travel on a charge, is typically cited as the primary reason that EVs continue to see modest adoption. While both the carrot, in the form of financial incentives, and the stick, in the form of future legislation banning internal combustion engine (ICE) vehicles from entering major cities, are being employed to incentivize consumer adoption of EVs, extending the range of EVs is one of the most important factors to address to drive broader adoption.

One theme that I have highlighted in every blog to date is that the automobile has moved far beyond its historical reliance on trailing-edge electronic technologies. Today’s vehicles, and those on the drawing board (or, more likely now, in the CAD tool), push the boundaries of state-of-the-art technology. Power electronics, specifically those used to power the electric motors of the EV and the associated control electronics and architectures, are no exception.

In this blog, I’ll provide a very high-level overview of some of the advanced technologies and architectures associated with powering the EV drivetrain and where these are being pushed to further extend the range of EVs. Each of these topics could easily warrant its own blog. Suffice it to say, this will be a light treatment of these topics.

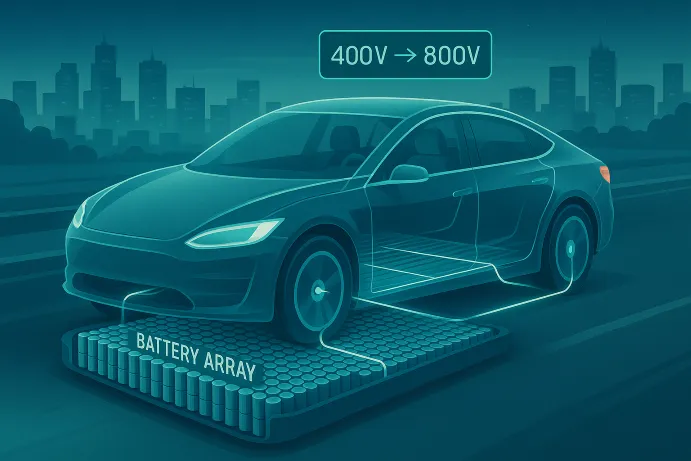

A very simplified block diagram of the powertrain of an EV is shown below.

While this block diagram hardly does justice to the complexity of the EV powertrain, it provides an adequate framework to highlight some of the major building blocks and the key technologies that are employed in the EV powertrain, the impact they have on the range of the EV, and the innovations that we can expect to see on the horizon to further extend that range.

Starting with the battery pack: the pack in a typical EV on the market today consists of a total of 7,104 rechargeable lithium-ion batteries, each roughly the size of an AA battery and rated at about 3.6 volts nominal. These batteries are connected in series and parallel combinations to generate about 400 volts of direct current (DC), with a capacity of about 90 kilowatt-hours (kWh), which serves as the power source for the traction inverter. 90 kWh means that the battery pack can supply 90 kW of power for 1 hour, which is a lot of energy! The average US household uses roughly 30 kWh of electricity in a day.
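The pack arithmetic above can be checked in a few lines of Python. This is a minimal sketch under stated assumptions: the 96-series by 74-parallel layout is simply one arrangement that multiplies out to 7,104 cells, and the 3.6 V nominal and 4.2 V full-charge cell voltages are typical lithium-ion figures, not values given in the article. The household comparison uses the article's 30 kWh per day.

```python
# Minimal sketch of the battery-pack arithmetic described above.
# Assumptions (not from the article): a 96-series x 74-parallel layout and
# typical lithium-ion cell voltages of 3.6 V nominal and 4.2 V at full charge.

CELLS_IN_SERIES = 96    # assumed number of series groups
CELLS_IN_PARALLEL = 74  # assumed number of cells in each parallel group


def total_cells(series: int, parallel: int) -> int:
    """Each series group holds `parallel` cells wired side by side."""
    return series * parallel


def pack_voltage(series: int, cell_voltage: float) -> float:
    """Voltages add along the series string; parallel cells add capacity, not voltage."""
    return series * cell_voltage


def hours_at_constant_power(energy_kwh: float, power_kw: float) -> float:
    """Energy in kWh divided by a steady draw in kW gives run time in hours."""
    return energy_kwh / power_kw


if __name__ == "__main__":
    print("cells:", total_cells(CELLS_IN_SERIES, CELLS_IN_PARALLEL))
    print(f"nominal pack voltage: {pack_voltage(CELLS_IN_SERIES, 3.6):.0f} V")
    print(f"full-charge pack voltage: {pack_voltage(CELLS_IN_SERIES, 4.2):.0f} V")
    print(f"90 kWh at 90 kW lasts {hours_at_constant_power(90, 90):.1f} h")
    print(f"90 kWh equals {90 / 30:.1f} days of 30 kWh/day household use")
```

With these assumptions the script reports 7,104 cells, a nominal pack voltage of about 346 V and a full-charge voltage of about 403 V, in line with the "about 400 volts" figure, and shows that 90 kWh covers three days of average household use.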

To further improve power efficiency, the industry trend is for the battery pack voltage to move from 400V to 800V. Doubling the voltage halves the current needed to deliver the same power, and because resistive losses scale with the square of the current, losses in the same cabling drop by a factor of four. Less current means less heat, and hence less weight to address system-level cooling, as well as lighter cabling. The move to 800V does, however, require the charging infrastructure and the traction inverter to support these higher voltages.
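The benefit of a higher pack voltage follows from two textbook relations: current I = P / V, and resistive loss P_loss = I²R. The sketch below compares 400V and 800V through identical cabling; the 100 kW load and the 0.01-ohm cable resistance are hypothetical values chosen only for illustration.

```python
# Minimal sketch: the same power delivered at 400 V vs 800 V through the same cable.
# The 100 kW load and 0.01 ohm cable resistance are illustrative assumptions.


def current_amps(power_w: float, voltage_v: float) -> float:
    """P = V * I, so I = P / V."""
    return power_w / voltage_v


def cable_loss_w(power_w: float, voltage_v: float, resistance_ohm: float) -> float:
    """Resistive (I^2 * R) loss in the cabling for a given delivered power."""
    i = current_amps(power_w, voltage_v)
    return i * i * resistance_ohm


if __name__ == "__main__":
    power = 100_000.0   # hypothetical 100 kW load
    r_cable = 0.01      # hypothetical round-trip cable resistance in ohms
    for v in (400.0, 800.0):
        print(f"{v:.0f} V: {current_amps(power, v):.0f} A, "
              f"{cable_loss_w(power, v, r_cable):.0f} W lost in cabling")
```

At 400V the load draws 250 A and loses 625 W in the cable; at 800V it draws 125 A and loses about 156 W, one quarter as much. Designers can instead keep losses similar and use thinner, lighter conductors, which is where the weight savings come from.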

The traction inverter is responsible for supplying power to the brushless DC motors, as controlled by the accelerator. Historically, traction inverters have employed relatively mature power semiconductors called insulated-gate bipolar transistors (IGBTs). Semiconductors generally struggle to operate at such high power levels and voltages, especially when high-speed switching is required. While IGBTs can handle the high-power demands, they switch relatively slowly, which makes them less practical for the most efficient traction inverters, whose switched topologies benefit from fast switching.

Recent advances in manufacturing processes have made a relatively new class of power electronics, wide bandgap semiconductors, viable for volume production. Wide bandgap semiconductors can operate at very high voltages and power levels, and at high switching frequencies, with very low on-resistance, which keeps the energy lost in the device low. There are two types of wide bandgap technologies: one based on silicon carbide (SiC) and another based on gallium nitride (GaN). SiC can operate at higher power levels but at somewhat lower switching frequencies than GaN, which supports higher switching frequencies at lower power levels. Both technologies offer significant improvements over traditional IGBT devices.
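Why faster switching matters can be seen from a common first-order loss estimate: conduction loss I²·R_on while the device is on, plus a hard-switching loss of roughly ½·V·I·(t_rise + t_fall)·f_sw. The sketch compares two hypothetical devices that differ only in transition time. The operating point and device parameters are assumptions, not measurements of any real IGBT, SiC or GaN part, and the on-resistance conduction model suits MOSFET-type devices (IGBT conduction loss is usually modeled with a saturation voltage instead).

```python
# First-order power-loss estimate for a single switching device.
# The formulas are textbook approximations; every device parameter
# below is hypothetical and chosen only for illustration.


def conduction_loss_w(i_a: float, r_on_ohm: float) -> float:
    """Loss while the switch is on: I^2 * R_on (MOSFET-style model)."""
    return i_a ** 2 * r_on_ohm


def switching_loss_w(v_bus: float, i_a: float, t_transition_s: float, f_sw_hz: float) -> float:
    """Hard-switching estimate: 0.5 * V * I * (t_rise + t_fall) * f_sw.

    `t_transition_s` is the combined rise + fall time per switching cycle.
    """
    return 0.5 * v_bus * i_a * t_transition_s * f_sw_hz


def total_loss_w(v_bus: float, i_a: float, r_on: float, t_transition: float, f_sw: float) -> float:
    return conduction_loss_w(i_a, r_on) + switching_loss_w(v_bus, i_a, t_transition, f_sw)


if __name__ == "__main__":
    v_bus, i_load, f_sw = 800.0, 100.0, 20_000.0  # hypothetical operating point
    slow = total_loss_w(v_bus, i_load, r_on=0.010, t_transition=400e-9, f_sw=f_sw)
    fast = total_loss_w(v_bus, i_load, r_on=0.010, t_transition=50e-9, f_sw=f_sw)
    print(f"slower-switching device: {slow:.0f} W, faster-switching device: {fast:.0f} W")
```

With these made-up numbers both devices lose 100 W in conduction, but switching loss falls from 320 W to 40 W when transitions are eight times faster. That headroom can be spent on a higher switching frequency or simply on less heat, which is the kind of advantage the paragraph attributes to wide bandgap devices.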

Wide bandgap devices are one of the fastest-growing segments in the power electronics market, driven by EVs, the EV charging infrastructure, renewable energy, and a host of other applications requiring high-power, high-efficiency semiconductors. They demonstrate how automotive now drives demand for leading-edge technologies. Efficient operation at 400V, and especially the shift to 800V, is made practical largely by wide bandgap semiconductors.

The traction inverter also employs some of the industry’s leading-edge motor control techniques, including space vector modulation (SVM) and field-oriented control (FOC), to deliver maximum inverter performance, efficiency and control over state-of-the-art brushless DC motors. The switching circuit topologies employed in the traction inverter are also among the most advanced in use, again focused on achieving the highest energy efficiency when driving the EV motor. With the objective of addressing range anxiety, no stone is left unturned: innovative technologies are employed wherever they are applicable, viable and beneficial.
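At the heart of field-oriented control are two coordinate transforms. The Clarke transform maps the three sinusoidal phase currents onto a two-axis stationary frame, and the Park transform rotates that frame with the rotor so the currents appear as two steady values, d (flux-producing) and q (torque-producing), that ordinary PI controllers can regulate. The sketch below shows only these transforms, using the amplitude-invariant convention; the PI loops, the inverse transforms and the space vector modulation stage are omitted, and the function names are illustrative.

```python
import math


def clarke(i_a: float, i_b: float, i_c: float) -> tuple[float, float]:
    """Amplitude-invariant Clarke transform: three phase currents -> (alpha, beta)."""
    alpha = (2.0 / 3.0) * (i_a - 0.5 * i_b - 0.5 * i_c)
    beta = (i_b - i_c) / math.sqrt(3.0)
    return alpha, beta


def park(alpha: float, beta: float, theta: float) -> tuple[float, float]:
    """Park transform: rotate (alpha, beta) into the rotor frame at electrical angle theta."""
    d = alpha * math.cos(theta) + beta * math.sin(theta)
    q = -alpha * math.sin(theta) + beta * math.cos(theta)
    return d, q


def balanced_phase_currents(amplitude: float, angle: float) -> tuple[float, float, float]:
    """Ideal sinusoidal three-phase currents, 120 electrical degrees apart."""
    return (
        amplitude * math.cos(angle),
        amplitude * math.cos(angle - 2.0 * math.pi / 3.0),
        amplitude * math.cos(angle + 2.0 * math.pi / 3.0),
    )


if __name__ == "__main__":
    # The current vector leads the rotor d-axis by 90 degrees (pure torque-producing current).
    for step in range(4):
        theta = step * math.pi / 4  # rotor electrical angle
        i_abc = balanced_phase_currents(10.0, theta + math.pi / 2)
        d, q = park(*clarke(*i_abc), theta)
        print(f"theta={theta:.2f} rad  d={d:+.3f} A  q={q:+.3f} A")
```

Although the phase currents keep oscillating as the rotor turns, d stays at about 0 A and q at about 10 A at every angle, which is what turns motor control into the regulation of two steady quantities.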

The last building block in this discussion of the EV powertrain is the battery management system (BMS), which also profoundly affects the range of the vehicle. As previously mentioned, the battery pack of an EV is based on thousands of rechargeable batteries that are connected in various series and parallel combinations to generate 400 volts. One of the key challenges that the BMS addresses is that each of those batteries has a slightly different charge and discharge rate, and those rates need to be monitored and controlled to ensure batteries are neither undercharged nor overcharged. Undercharging directly reduces range, because the pack never reaches a full charge, whereas overcharging can lead to catastrophic results, including fires and explosions.
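Two of the BMS duties described above, keeping every battery inside a safe voltage window and evening out differences between them, can be sketched as follows. This is a generic illustration, not any vendor's BMS: because cells wired in parallel share one voltage, the readings represent series groups, and the voltage limits and 10 mV balancing threshold are assumptions. The example uses passive balancing, which bleeds energy from the highest groups through resistors; active balancing, which moves charge between cells, is the main alternative.

```python
# Minimal sketch of two BMS duties: flagging out-of-range voltages and
# choosing which series groups to bleed during passive balancing.
# Voltage limits and the balancing threshold are illustrative assumptions.

MAX_CELL_V = 4.20            # assumed overcharge limit
MIN_CELL_V = 3.00            # assumed undercharge / deep-discharge limit
BALANCE_THRESHOLD_V = 0.010  # bleed groups more than 10 mV above the lowest one


def out_of_range(cell_voltages: list[float]) -> dict[int, str]:
    """Return {index: 'over' | 'under'} for groups outside the safe window."""
    faults = {}
    for idx, v in enumerate(cell_voltages):
        if v > MAX_CELL_V:
            faults[idx] = "over"
        elif v < MIN_CELL_V:
            faults[idx] = "under"
    return faults


def cells_to_bleed(cell_voltages: list[float]) -> list[int]:
    """Passive balancing: discharge groups that sit noticeably above the weakest group."""
    lowest = min(cell_voltages)
    return [i for i, v in enumerate(cell_voltages) if v - lowest > BALANCE_THRESHOLD_V]


if __name__ == "__main__":
    readings = [4.05, 4.12, 4.07, 4.21, 4.05]  # hypothetical series-group voltages
    print("faults:", out_of_range(readings))
    print("bleed:", cells_to_bleed(readings))
```

For the sample readings, group 3 is flagged as over the limit and groups 1, 2 and 3 are selected for bleeding, so the pack converges toward its weakest groups.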

The BMS uses advanced charging algorithms to achieve the maximum charge on a battery-by-battery basis while minimizing the time to full charge, without overcharging any battery or overheating the battery pack. Again, this is a non-trivial problem that also leverages state-of-the-art technologies, including wireless connectivity to communicate charge status to the electronic control units (ECUs) that monitor the overall charging of the battery pack. Beyond advanced charging algorithms, AI is also employed for preventative maintenance, flagging when a battery or battery pack is starting to fail. Over-the-air (OTA) update technology is employed as well, so that new, more advanced algorithms that lead to more effective or faster charging can be deployed as they are identified.
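The article does not say which charging algorithms are used. The widely used baseline for lithium-ion cells is constant-current / constant-voltage (CC-CV) charging, which more advanced algorithms typically refine. The sketch below is a minimal state machine for one cell or series group under assumed limits; a real BMS adds temperature checks, per-cell supervision and pack-level coordination.

```python
# Minimal sketch of constant-current / constant-voltage (CC-CV) charging for
# one lithium-ion cell or series group. All limits are illustrative assumptions.

V_LIMIT = 4.20    # assumed per-cell voltage ceiling, never to be exceeded
I_CC = 3.0        # assumed constant-current setpoint in amps
I_CUTOFF = 0.15   # assumed termination current (I_CC / 20)


class CcCvCharger:
    """Three-state controller: CC -> CV -> DONE, never moving backwards."""

    def __init__(self) -> None:
        self.mode = "CC"

    def step(self, cell_v: float, measured_i: float) -> tuple[str, float]:
        """Return (mode, setpoint): amps in CC, volts in CV, 0.0 when done."""
        if self.mode == "CC" and cell_v >= V_LIMIT:
            self.mode = "CV"    # voltage ceiling reached: stop pushing a fixed current
        if self.mode == "CV" and measured_i < I_CUTOFF:
            self.mode = "DONE"  # current has tapered off: the cell is full
        if self.mode == "CC":
            return "CC", I_CC
        if self.mode == "CV":
            return "CV", V_LIMIT
        return "DONE", 0.0


if __name__ == "__main__":
    charger = CcCvCharger()
    # Hypothetical (voltage, current) readings over one charge session.
    for v, i in [(3.70, 3.0), (4.10, 3.0), (4.20, 3.0), (4.20, 1.2), (4.20, 0.4), (4.20, 0.1)]:
        print(v, i, "->", charger.step(v, i))
```

Keeping the mode as explicit state, rather than recomputing it from each voltage reading, prevents the charger from flipping back into constant-current mode when measurement noise dips the voltage slightly below the ceiling.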

Lastly, while not shown in the simplified block diagram, significant consideration is also given to the vehicle architecture as it applies to power distribution. Similar to the changes in automotive E/E architecture that support advanced driver assistance and in-vehicle infotainment systems, the power architecture of the EV is evolving in the interest of power efficiency and robustness. In a nutshell, the industry is moving from a functional architecture, where power is delivered over long cables from the power source to the EV motor, to an architecture based on spatial positioning, where the shortest possible reach is achieved from the traction inverter to the EV motor. Each approach has its benefits and complications, but given the importance of addressing range anxiety, expect the spatial positioning architecture to win out.
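One reason shorter power runs help is that conductor resistance grows linearly with length (R = ρL / A), and resistive loss grows with it. The sketch below illustrates only that length effect; the current, cross-section and the two run lengths are hypothetical, and the comparison ignores the many other trade-offs between functional and spatial architectures.

```python
# Minimal sketch: why shorter power runs help. Conductor resistance grows
# linearly with length (R = rho * L / A), and so does I^2 * R loss.
# Cable lengths, cross-section and current are illustrative assumptions.

RHO_COPPER = 1.68e-8  # ohm-metres, resistivity of copper near 20 degC


def conductor_resistance(length_m: float, area_mm2: float) -> float:
    """R = rho * L / A, with the area converted from mm^2 to m^2."""
    return RHO_COPPER * length_m / (area_mm2 * 1e-6)


def run_loss_w(current_a: float, length_m: float, area_mm2: float) -> float:
    """Resistive loss in one conductor run carrying `current_a`."""
    return current_a ** 2 * conductor_resistance(length_m, area_mm2)


if __name__ == "__main__":
    current, area = 250.0, 50.0  # hypothetical 250 A through 50 mm^2 copper
    for length in (5.0, 1.0):    # hypothetical long vs short run
        print(f"{length:.0f} m run: {run_loss_w(current, length, area):.1f} W")
```

Under these assumptions a 5 m run dissipates about 105 W and a 1 m run about 21 W: five times shorter, five times less loss, plus less copper to carry around.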

In summary, while 50 years ago the average vehicle used few electronics, even a quick look at the EV powertrain demonstrates the complexity of the technologies being employed today, and where this application area is headed. In the past, computers were considered the driver of electronics technologies, then it was computer networking, and then cellular technologies/smart phones. Today, the electronics industry acknowledges that automotive is now driving leading-edge technologies across many fronts. We live in amazing times.

Powering Agentic AI at Scale

From eight-way GPU racks to liquid cooling breakthroughs, Giga Computing and Solidigm explore what it takes to support AI, HPC, and cloud workloads in a power-constrained world.

Simplify Data Infrastructure at Any Scale with VergeIO

1. What are some of the key challenges your customers face related to generating, storing, and processing high volumes of data?

Our customers, particularly those in data-intensive sectors like telecommunications, financial services, education, and healthcare, encounter several significant challenges when managing large volumes of data. These include:

- Escalating storage costs as data volumes continuously grow.

- Performance bottlenecks due to aging infrastructure and increasing capacity and performance demands from workloads.

- Complex scalability issues, where scaling resources efficiently becomes difficult.

- Maintaining legacy infrastructure that wasn't designed for today's data-intensive workloads.

- Extending hardware lifespans to avoid costly and premature hardware refreshes.

Additionally, they must consistently ensure uptime, data resilience, regulatory compliance, and energy efficiency amid growing data demands.

2. How does VergeIO help customers overcome these challenges with its products and services?

VergeIO provides a comprehensive solution through our software-defined platform, VergeOS, which seamlessly integrates virtualization, storage, and networking into a single, efficient operating system. The key advantages include:

- Built-in data optimization: Advanced deduplication and live VM tiering.

- Unified infrastructure management: Simplifying operations across virtualization, storage, and networking.

- Significantly reduced hardware footprint and operational complexity.

- Seamless scalability: Easily scale performance from dozens to hundreds of nodes without complexity.

- Elimination of vendor lock-in and hardware-refresh dependency, maximizing ROI and flexibility.
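VergeIO does not describe how its deduplication works, so the following is only a generic Python sketch of one common technique, fixed-size block deduplication with content hashing: each block is identified by its SHA-256 digest and stored once, while each object keeps an ordered list of digests. The class and method names are hypothetical, not VergeOS interfaces.

```python
import hashlib


class DedupStore:
    """Toy content-addressed block store with fixed-size block deduplication."""

    def __init__(self, block_size: int = 4096) -> None:
        self.block_size = block_size
        self.blocks: dict[str, bytes] = {}       # digest -> unique block data
        self.objects: dict[str, list[str]] = {}  # object name -> ordered block digests

    def write(self, name: str, data: bytes) -> None:
        hashes = []
        for offset in range(0, len(data), self.block_size):
            block = data[offset:offset + self.block_size]
            digest = hashlib.sha256(block).hexdigest()
            self.blocks.setdefault(digest, block)  # store each distinct block only once
            hashes.append(digest)
        self.objects[name] = hashes

    def read(self, name: str) -> bytes:
        return b"".join(self.blocks[h] for h in self.objects[name])

    def logical_bytes(self) -> int:
        """Bytes as seen by the objects, counting shared blocks every time."""
        return sum(len(self.blocks[h]) for hs in self.objects.values() for h in hs)

    def physical_bytes(self) -> int:
        """Bytes actually stored after deduplication."""
        return sum(len(b) for b in self.blocks.values())


if __name__ == "__main__":
    base = b"A" * 8192 + b"B" * 4096  # shared "base image" content
    store = DedupStore(block_size=4096)
    store.write("vm1", base + b"X" * 4096)
    store.write("vm2", base + b"Y" * 4096)
    print("logical bytes:", store.logical_bytes())
    print("physical bytes:", store.physical_bytes())
```

Two VM images that share a common base write eight logical blocks but only four unique ones, so physical usage is half the logical size. Production systems add reference counting, variable-size chunking and persistence, all omitted here.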

3. How has the shift to high-density SSDs enhanced VergeIO’s ability to manage massive-scale virtual environments, especially in data-intensive sectors like telecommunications and financial services?

The adoption of high-density SSDs has greatly amplified the effectiveness of VergeOS. When paired with our high-performance distributed file system, these ultra-dense flash storage technologies enable:

- Unmatched VM density, dramatically increasing infrastructure efficiency.

- Per-node storage capacities approaching 3PB within compact 2U servers.

- Exceptional IOPS and throughput, vital for performance-sensitive environments.

- Reduced infrastructure footprint, cutting rack space, power consumption, and cooling costs.

- Improved scalability and reliability, essential for critical sectors like telecom and financial services.

This powerful combination ensures exceptional performance, efficiency, and cost-effectiveness for data-intensive, mission-critical workloads.
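The "approaching 3PB" per-node figure is easy to sanity-check. This is back-of-the-envelope arithmetic under assumptions that do not come from VergeIO: a 2U node with 24 drive bays, each holding a 122.88 TB SSD.

```python
# Back-of-the-envelope raw capacity for one storage node.
# Assumptions (not from VergeIO): 24 drive bays per 2U node and 122.88 TB SSDs.


def raw_capacity_pb(drive_bays: int, drive_capacity_tb: float) -> float:
    """Raw capacity in decimal petabytes (1 PB = 1,000 TB), before any data protection."""
    return drive_bays * drive_capacity_tb / 1000.0


if __name__ == "__main__":
    print(f"{raw_capacity_pb(24, 122.88):.2f} PB raw per node")
```

That works out to roughly 2.95 PB raw, consistent with "approaching 3PB"; usable capacity will be lower once redundancy or other protection overhead is applied.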

4. How does VergeOS contribute to energy efficiency and extend hardware lifespan?

VergeOS is purpose-built to optimize efficiency throughout your infrastructure lifecycle:

- Lightweight, resource-efficient architecture reduces CPU and memory overhead.

- Higher VM density allows more workloads to run effectively on fewer physical servers.

- Lower overall energy consumption, significantly reducing power and cooling expenses.

- Intelligent resource optimization, such as workload balancing and live VM tiering, maximizes hardware utilization.

- Extended hardware lifecycle, reducing the frequency and cost associated with hardware refreshes.

The result is a sustainable, cost-effective infrastructure designed for long-term growth and efficiency.

5. What new possibilities do VergeOS and advanced storage technologies unlock for your customers in terms of real-time virtualization, streamlined data management, and scalable infrastructure?

Together, VergeOS and next-generation storage solutions offer unprecedented infrastructure agility, enabling organizations to:

- Accelerate real-time virtualization with instantaneous VM spin-up, cloning, and replication.

- Simplify data management through a unified control plane covering virtualization, storage, and networking.

- Effortlessly scale infrastructure with Virtual Data Centers (VDCs) that provide secure, isolated multi-tenant environments.

- Enhance disaster recovery and automation with built-in capabilities for automated backup, fast recovery, and comprehensive observability.

- Ensure future-proof growth, enabling dynamic scalability without complexity or disruptions.

Specifically, VergeIO's Virtual Data Center (VDC) technology creates completely isolated, software-defined data centers within a single infrastructure, each with independent compute, storage, and networking resources. This isolation enables customers to:

- Rapidly provision secure, dedicated environments for tenants, departments, or projects.

- Implement granular resource allocation and control, ensuring efficient use of infrastructure.

- Automate and streamline disaster recovery processes through easy replication of entire VDCs.

- Achieve higher resource utilization, reducing operational costs and infrastructure overhead.

By leveraging this unified, software-defined approach, our customers achieve greater productivity, resilience, and innovation—all while simplifying operations and reducing costs.

How Scaleway Is Scaling AI Sustainably in Europe

Scaleway’s Yann-Guirec Manac'h shares how the company is simplifying complex AI pipelines, maximizing SSD performance, and driving sustainable innovation in European cloud infrastructure.

How Ocient Tackles Big Data Challenges

1. What are some of the key challenges your customers face related to generating, storing and processing high volumes of data?

- Cost and Unpredictable Spending: The cost associated with traditional data warehousing solutions, especially in cloud environments, has become unpredictable and is leading to budgets spiraling out of control.

- Data Movement: Moving data between disparate systems is inefficient, introduces vulnerabilities and security risks, and increases operational complexity.

- Increased Energy Consumption: Running compute-intensive workloads on legacy hardware and software architectures leads to excessive energy consumption and a large environmental footprint. This restricts an organization’s ability to innovate sustainably and impacts the overall operational burden associated with large, complex data workloads.

- Data Preparation: Preparing data for AI and machine learning is a time-consuming and resource-intensive process, with much of that complexity stemming from data pipelines.

- Operational Burden: Maintaining and managing complex data environments and pipelines poses a significant operational burden on enterprise teams who may already be constrained for time and resources.

- Data Sprawl: Enterprises with data spread across many different systems struggle with inefficient data ecosystems and sprawl.

2. How are you helping to address these challenges with the products and services you provide?

- Unified Data Platform: Ocient’s data platform consolidates various analytics capabilities into a single, efficient system, built for sustainable data performance with compute-intensive workloads.

- Compute-Adjacent Storage Architecture (CASA): Ocient's innovative architecture brings compute directly to the storage layer, minimizing data movement and maximizing processing efficiency.

- SSD-Based Infrastructure: With hardware partners like Solidigm, Ocient leverages an all-NVMe SSD architecture for high performance and efficiency.

- In-Database Machine Learning (OcientML): Ocient’s in-database machine learning capabilities enable customers to train and deploy models directly on their data.

- Customer Solutions and Workload Services: Ocient offers comprehensive customer support, including pre-purchase workload analysis and post-purchase optimization, ensuring successful deployments and sustained customer success.

- Built for Efficiency: Ocient enables organizations to reduce the total cost of ownership, operational burden, and environmental footprint of their analytics and AI use cases.

- Streamlined & Consolidated Analytics Stack: Ocient helps organizations consolidate and streamline their analytics stack, eliminating the need for disparate ETL, real-time streaming, and orchestration solutions in many implementations.
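Ocient does not detail CASA's internals here, so the sketch below only illustrates the general principle of pushing computation to where data lives (often called aggregation pushdown): aggregating inside each storage partition first means only small partial results cross the network. The partitions, keys and function names are hypothetical.

```python
from collections import defaultdict

Partition = list[tuple[str, int]]  # hypothetical (key, value) rows on one storage node


def ship_then_aggregate(partitions: list[Partition]) -> tuple[dict[str, int], int]:
    """Move every row to a central compute tier, then aggregate there."""
    totals: dict[str, int] = defaultdict(int)
    rows_moved = 0
    for part in partitions:
        for key, value in part:  # every row crosses the network
            rows_moved += 1
            totals[key] += value
    return dict(totals), rows_moved


def aggregate_near_storage(partitions: list[Partition]) -> tuple[dict[str, int], int]:
    """Aggregate inside each storage node first, then move only the partial sums."""
    totals: dict[str, int] = defaultdict(int)
    rows_moved = 0
    for part in partitions:
        partial: dict[str, int] = defaultdict(int)
        for key, value in part:  # runs where the data lives
            partial[key] += value
        for key, value in partial.items():  # only one row per key leaves the node
            rows_moved += 1
            totals[key] += value
    return dict(totals), rows_moved


if __name__ == "__main__":
    partitions = [
        [("us", 3), ("eu", 5), ("us", 2)],  # hypothetical rows on storage node 1
        [("eu", 1), ("us", 4), ("eu", 7)],  # hypothetical rows on storage node 2
    ]
    print(ship_then_aggregate(partitions))
    print(aggregate_near_storage(partitions))
```

Both approaches return the same totals, but the near-storage version moves four rows instead of six here; with billions of rows and a handful of keys, the gap becomes orders of magnitude.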

3. How has the shift to high-density SSDs impacted your ability to handle massive-scale workloads, particularly in industries like telecommunications, geospatial analytics, and financial services?

- High-density SSDs, like those delivered by Solidigm, are foundational to Ocient's architecture and our CASA-based approach to large, complex, costly data workloads.

- With an architecture underpinned by SSDs, Ocient is able to deliver extremely fast data access and processing, which is essential for the following industry verticals:

  - Telcos (e.g., data retention and disclosure; Internet Connection Records)

  - AdTechs (e.g., real-time bidding and reporting)

  - Public sector organizations (e.g., geospatial analytics; network operations and security; search and analysis)

- The speed and efficiency of SSDs enable Ocient to deliver real-time analytics and data processing capabilities that would be nearly impossible with traditional hard disk drives.

4. Can you elaborate on the role of high-capacity SSDs in enabling energy efficiency and sustainability within your data centers?

- With hardware-aware software and innovations delivered via Ocient’s Hyperscale Data Warehouse, Ocient can reduce the cost, energy, and system footprint for data and AI workloads by up to 90%.

- Using SSDs with far higher IOPS than HDDs means that fewer drives are needed to handle real-time data, which cuts the carbon footprint.
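The drive-count argument can be made concrete with a ceiling division. The IOPS figures below are round, assumed orders of magnitude (a few hundred random IOPS for a 7,200 rpm HDD, hundreds of thousands for a data center NVMe SSD), not Ocient or Solidigm specifications.

```python
# Rough drive-count estimate driven purely by random-I/O demand.
# The IOPS figures are round, assumed numbers for illustration only.


def drives_needed(required_iops: int, iops_per_drive: int) -> int:
    """Smallest whole number of drives whose combined IOPS meets the target (ceiling division)."""
    return (required_iops + iops_per_drive - 1) // iops_per_drive


if __name__ == "__main__":
    target = 1_000_000  # hypothetical random-read IOPS requirement
    hdd_iops = 200      # assumed order of magnitude for one 7,200 rpm HDD
    ssd_iops = 500_000  # assumed order of magnitude for one data center NVMe SSD
    print("HDDs needed:", drives_needed(target, hdd_iops))
    print("SSDs needed:", drives_needed(target, ssd_iops))
```

Under these assumptions the same target needs 5,000 HDDs or 2 SSDs. In practice capacity, throughput and redundancy also set the drive count, so this isolates only the IOPS argument the bullet makes.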

5. What opportunities do these advanced storage solutions unlock for your clients in terms of real-time analytics, data accessibility, and scalability?

- Cost Reduction: Cost efficiencies delivered via the hardware and software layer translate to an overall efficient system capable of delivering powerful data performance at a significant cost reduction.

- Scalability: Ocient's platform is designed for sustainable growth and data performance, allowing customers to handle massive-scale workloads today while remaining ready for future ones.

- Faster Machine Learning: The ability to run machine learning directly on the data within Ocient's platform accelerates the deployment of AI models.

- Reduced Complexity: Consolidating data environments and simplifying data pipelines reduces operational complexity and frees up resources for innovation.

Arm Weighs in on AI’s Evolution

At GTC 2025, Arm’s Chloe Ma explains how AI is shifting from compute to full-system optimization — and why storage, inference, and the edge are becoming central to tomorrow’s intelligent infrastructure.

Unpacking the Power of Alluxio: A Game-Changer in AI Infrastructure

With NVIDIA CEO Jensen Huang’s headline-grabbing reference to GTC as the “Super Bowl of AI,” expectations for this year’s conference were sky-high, and key players delivered. Among the standout innovations was Alluxio’s contribution to transforming how data is managed and accelerated in AI workloads. Scott Shadley, Director of Leadership Narrative and Evangelist at Solidigm, joined Bin Fan, Founding Engineer and VP of Technology at Alluxio, to discuss how their team has been pushing the envelope in AI data acceleration and efficient storage management, and how that work is quickly having a tangible impact on how AI models are trained and deployed across industries.

At the heart of Alluxio’s innovation is its ability to decouple storage and compute. Traditionally, data storage has been tightly coupled with compute resources, limiting the scalability and speed of AI workloads. But with Alluxio’s technology, data scientists and AI modelers no longer need to worry about the complexities of storage management. Instead, Alluxio introduces an abstraction layer between applications and storage, making data access seamless and efficient.

One of the most compelling aspects of Alluxio is its ability to accelerate data access. By positioning Alluxio close to GPU applications, the technology significantly reduces the time it takes to access large datasets, especially in geographically dispersed environments. This is particularly important for AI workloads that require massive amounts of data across different regions or clouds. With Alluxio’s caching layer, repeated fetches from remote storage are minimized, helping applications run at peak performance without the usual latency or overhead.
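Alluxio's own implementation and APIs are not described here. The generic sketch below only illustrates why a cache placed near the GPUs cuts repeated remote fetches: a least-recently-used (LRU) read-through cache serves rereads locally and goes to remote storage only on a miss. The class name, the dictionary standing in for remote storage and the shard keys are all hypothetical.

```python
from collections import OrderedDict
from typing import Callable


class ReadThroughCache:
    """Toy LRU read-through cache placed in front of a slow remote store."""

    def __init__(self, fetch: Callable[[str], bytes], capacity: int) -> None:
        self.fetch = fetch        # function that reads from remote storage
        self.capacity = capacity  # maximum number of cached objects
        self.entries: OrderedDict[str, bytes] = OrderedDict()
        self.remote_reads = 0

    def get(self, key: str) -> bytes:
        if key in self.entries:
            self.entries.move_to_end(key)     # mark as most recently used
            return self.entries[key]
        data = self.fetch(key)                # cache miss: go to remote storage
        self.remote_reads += 1
        self.entries[key] = data
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)  # evict the least recently used entry
        return data


if __name__ == "__main__":
    remote = {f"shard-{i}": bytes([i]) * 1024 for i in range(4)}  # stand-in for remote storage
    cache = ReadThroughCache(remote.__getitem__, capacity=4)
    for epoch in range(3):  # each training epoch rereads every shard
        for key in remote:
            cache.get(key)
    print("remote reads:", cache.remote_reads)  # shards are fetched remotely only in the first epoch
```

Three epochs over four shards trigger only four remote reads when the cache can hold the working set. If the cache is smaller than the working set, eviction forces refetches, which is why cache capacity, and thus high-capacity local SSDs, matters for this design.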

But Alluxio isn’t just about speeding things up – it also brings simplicity and flexibility to the table. By abstracting storage into a unified structure, their solution enables organizations to seamlessly manage their data across multiple on-prem and cloud deployments without the hassle of manual configuration or inconsistent access. Whether it's scaling up GPUs in one region or shifting workloads to another, Alluxio’s virtualization and abstraction layers provide a seamless experience for both data engineers and end users.

To meet these varied and demanding workloads, Alluxio has partnered with Solidigm to provide reliable, high-capacity storage solutions. While Alluxio serves as the software layer for managing data storage, Solidigm brings its experience as a leading supplier of SSDs to offer the ideal hardware for Alluxio’s caching layer. Together, this collaboration ensures that AI workloads are running on the fastest, most reliable infrastructure possible. The ability to store and retrieve data efficiently is essential in today’s fast-paced AI landscape, and Alluxio’s integration with Solidigm hardware delivers that performance without compromise. (Learn more about data storage optimized for the AI era.)

Alluxio is doing more than just keeping up with the growing demand for AI infrastructure — it’s leading the way in making data management simpler, faster, and more efficient. As AI continues to evolve, technologies like Alluxio will be at the forefront, empowering organizations to harness the full potential of their data.

For anyone curious about diving deeper into Alluxio’s capabilities, the company’s website, alluxio.io, and social media channels, including YouTube, offer a wealth of resources.

Scaling Smarter: Peak AIO Tackles AI Bottlenecks at GTC

Peak AIO’s Roger Cummings joins Solidigm’s Scott Shadley at NVIDIA GTC to talk AI infrastructure shifts, single-node innovation, and making data placement as intelligent as the AI it powers.

Beyond Automation: Cloudflare’s Vision for Developer Empowerment

At NVIDIA GTC 2025, Cloudflare shared an exciting vision for the future of AI, automation, and developer tools. During a conversation with Scott Shadley, Director of Leadership Narrative and Evangelist at Solidigm, Aly Cabral, Cloudflare VP of Developer GTM, explained how Cloudflare is becoming a critical player in the rapidly evolving tech landscape. As industries shift and change, Cloudflare’s focus is clear: empowering developers to navigate these transformations with the right tools and ample support.

One of the main topics of discussion was agentic AI, and how to define the loosely used term that’s been all the rage for next-gen AI predictions. Simply put, agentic AI goes beyond traditional automation by enabling systems to make decisions and manage more complex, dynamic tasks autonomously. While automation improves efficiency, agentic AI adds intelligent oversight, making it easier to both monitor and manage automated systems. Aly emphasized that automation alone isn’t sufficient — it’s about creating systems that are not only efficient, but also transparent and easy to troubleshoot. Cloudflare’s Workflows product addresses this by giving developers visibility into complex systems, helping them quickly identify and resolve issues in multi-step processes. This capability is becoming even more essential as automation plays a larger role in development.
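Cloudflare's Workflows API is not shown here. The generic Python sketch below only illustrates the underlying idea of step-level visibility: record each step's status, duration and error so that a failure in a multi-step process points straight at the step that caused it. The `Workflow` and `StepRecord` names are hypothetical.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class StepRecord:
    name: str
    status: str = "pending"  # pending -> ok | failed | skipped
    seconds: float = 0.0
    error: str = ""


@dataclass
class Workflow:
    """Toy multi-step runner that records per-step status for troubleshooting."""
    steps: list[tuple[str, Callable[[Any], Any]]]
    records: list[StepRecord] = field(default_factory=list)

    def run(self, payload: Any) -> Any:
        self.records = [StepRecord(name) for name, _ in self.steps]
        for record, (_, fn) in zip(self.records, self.steps):
            start = time.perf_counter()
            try:
                payload = fn(payload)
                record.status = "ok"
            except Exception as exc:  # capture the failure instead of losing it
                record.status = "failed"
                record.error = repr(exc)
                break
            finally:
                record.seconds = time.perf_counter() - start
        for record in self.records:
            if record.status == "pending":  # steps never reached after a failure
                record.status = "skipped"
        return payload


if __name__ == "__main__":
    wf = Workflow([("parse", lambda s: s.strip()), ("to_int", int), ("double", lambda n: n * 2)])
    wf.run("abc")
    for r in wf.records:
        print(f"{r.name:<8} {r.status:<8} {r.error}")
```

Running the example with bad input shows `parse` as ok, `to_int` as failed with its `ValueError`, and `double` as skipped, so the troubleshooting question "which step broke?" is answered directly by the run record.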

In addition to automation, CodeGen tools are emerging as valuable resources for developers. These AI-powered tools simplify the coding process, allowing developers to generate code faster and with less effort. However, as Aly pointed out, the real challenge lies not in creating applications, but in managing and maintaining them over time. Cloudflare’s platform is built to support developers throughout the entire lifecycle of an application — from creation to long-term management — ensuring that systems remain scalable, secure, and efficient as they evolve.

Looking forward, Cloudflare is doubling down on AI and developer tools. As Aly mentioned, the company is preparing for Developer Week in April, where they’ll unveil new launches and innovations aimed at improving the developer experience. With new features and tools focused on simplifying the development process and harnessing the power of AI, Cloudflare is working to ensure developers have everything they need to create smarter, more scalable applications.

So, what’s the TechArena take? Cloudflare’s approach to partnerships sets it apart. Rather than locking customers into proprietary ecosystems, Cloudflare prides itself on being a connector, offering a globally distributed network and an open ecosystem that integrates well with a wide variety of third-party services, without an aggressive egress tax. This flexibility allows developers to use the best tools for their needs without being restricted to a specific platform. It’s this open approach that makes Cloudflare an ideal partner for companies like Solidigm, which offer unique solutions that complement Cloudflare’s services.

Watch the full video here. Learn more about Solidigm’s data storage solutions for the AI era here.

For those interested in staying connected with Cloudflare’s latest developments, the company maintains an active Discord community, YouTube channel, and X presence, providing ample opportunities for engagement and learning. Or visit their website.

Building Trust with NIST AI Risk Management Framework

NIST, the U.S. National Institute of Standards and Technology, has long been an invaluable resource to product marketers. From data protection to AI, it has provided definitive technical definitions and processes. But if its role evolves, should we examine its guidance more critically?

The importance of NIST for product marketers

One of the jobs of product marketers is to get the team to agree on consistent terminology. We must use language that resonates with our audiences when marketing our product.

For example, if you have a security product (including backup and recovery), then you’ll need an authoritative guide to explain the best way to set up a secure computing environment. NIST offers that guidance. It’s simply good data center hygiene practice.

The NIST Cybersecurity Framework (CSF) has a dedicated website full of non-prescriptive guidance for companies developing and implementing cybersecurity programs. If you are selling to that market, it makes sense to use the CSF as the arbiter for definitions and procedures.

I have personally used the NIST framework many times, aligning product features to the CSF guidelines. So I was extremely excited to learn that NIST would build the same types of documents for AI environments.

NIST Artificial Intelligence Risk Management Framework

The NIST Artificial Intelligence Risk Management Framework was created to provide “in-depth, voluntary guidance primarily intended for developers and users of AI systems” (techpolicy.press).

The framework provides guidance on setting up governance for AI systems. It explains how to assess risks at every level, emphasizes the importance of documentation, and defines seven characteristics of trustworthy AI.
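As a rough illustration of how a team might track documentation against the framework, the sketch below encodes the seven trustworthiness characteristics named in the NIST AI RMF 1.0 and reports which ones still lack evidence. The `missing_evidence` helper and the example evidence entries are hypothetical; the framework itself does not prescribe any code or data format.

```python
# The seven characteristics of trustworthy AI named in the NIST AI RMF 1.0.
TRUSTWORTHY_AI = (
    "valid and reliable",
    "safe",
    "secure and resilient",
    "accountable and transparent",
    "explainable and interpretable",
    "privacy-enhanced",
    "fair with harmful bias managed",
)


def missing_evidence(evidence: dict[str, str]) -> list[str]:
    """Return the characteristics that have no documentation recorded yet (hypothetical helper)."""
    unknown = set(evidence) - set(TRUSTWORTHY_AI)
    if unknown:
        raise ValueError(f"not AI RMF characteristics: {sorted(unknown)}")
    return [c for c in TRUSTWORTHY_AI if not evidence.get(c)]


# Hypothetical documentation status for one AI system.
evidence = {
    "valid and reliable": "eval-report-v3.pdf",
    "secure and resilient": "pentest-2024Q4.md",
    "privacy-enhanced": "dpia.md",
}
print(missing_evidence(evidence))
```

The gaps the helper returns are a to-do list for documentation, which is the part of the framework the product story ultimately rests on.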

The U.S. Artificial Intelligence Safety Institute (US AISI) was established in November 2023 as part of NIST. The Institute is “tasked with developing the testing, evaluations, and guidelines that will help accelerate trustworthy AI innovation in the United States and around the world.”

Interestingly, scientists partnering with U.S. AISI have received new requirements. According to a Wired report, these instructions remove references to “AI safety,” “responsible AI,” and “AI fairness” from the skills expected of members.

But as AI continues to shape our world, it is crucial to ensure that the stories it tells are accurate, fair, and reflective of our diverse societies. NIST's frameworks provide a foundation, but it is up to us to build on it responsibly and require AI safety and fairness from the frameworks we rely on to tell the story of our products and services.

Those who tell the stories rule society

Plato is credited with saying, “those who tell the stories rule society.” If he was right, we must ensure that the stories told by technology are accurate, fair, and inclusive.

At its root, AI simply processes data to tell a story. For example:

• Sequencing genomes

• Detecting hackers before they can encrypt your data

• Finding new cancer treatments

• Animating your great-grandmother’s pictures

Data scientists and linguists have already warned about AI safety and fairness. In the paper “On the Dangers of Stochastic Parrots: Can Language Models Be Too Big?” by Emily M. Bender et al., the authors raised concerns about how large language models can negatively affect the environment, diversity, and social views, and how they can encode bias.

AI tells the story of our world based on its training data, of course. Responsible AI is how we can be sure the entire world is represented as those stories are told.

Building a future with responsible AI

Product marketers and technical writers rely on frameworks like those from NIST to guide our work and ensure clarity for our audiences. With the rise of AI, the stakes are higher than ever.

By embracing responsible AI practices and using tools like the NIST frameworks, we can shape a future where technology tells the true story of our societies, one that promotes fairness, safety, and innovation.

Verge.io Reimagines IT Infrastructure for AI Demands

I recently had the opportunity to sit down with Jeniece Wnorowski of Solidigm and George Crump, Chief Marketing Officer at Verge.io, to discuss how Verge.io is taking a fresh approach to IT solutions.

The Verge.io solution is game-changing for enterprises looking to optimize their IT infrastructure. Instead of relying on the traditional method of integrating separate components through a GUI (graphical user interface), Verge.io has gone a step further by combining all aspects of IT infrastructure (networking, storage, and virtualization) into a single, unified code base. The result is a seamless user experience and a dramatic improvement in performance, all while lowering hardware requirements.

By integrating these components, Verge.io improves efficiency and increases hardware flexibility. George shared that Verge.io supports hardware up to seven years old, making it highly adaptable for organizations with legacy systems. This innovation stems from Verge.io’s founding story: Greg Campbell, Verge.io’s CTO, was frustrated by the amount of time he spent managing infrastructure while developing a search engine to compete with Google and Amazon, so he decided to build his own solution. Today, that approach has produced a product that runs on fewer than 300,000 lines of code, contributing to fewer bugs and greater reliability compared to traditional solutions that often run on millions of lines of code.

The solution also addresses one of the most pressing concerns for IT professionals today—cost predictability. Verge.io’s simple licensing model charges per server rather than by core or capacity. This straightforward pricing model appeals to a wide range of IT professionals. By supporting multi-tenancy, Verge.io makes it easier to manage resources across various clients, delivering efficiency and flexibility in a shared environment.
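A quick worked comparison shows why per-server licensing is easier to predict. All prices below are hypothetical placeholders, not Verge.io’s actual pricing; the point is only that a per-core bill moves with core counts while a per-server bill does not.

```python
def per_core_cost(servers: int, cores_per_server: int, price_per_core: float) -> float:
    """Annual cost when every physical core is licensed."""
    return servers * cores_per_server * price_per_core


def per_server_cost(servers: int, price_per_server: float) -> float:
    """Annual cost when each server carries one flat license."""
    return servers * price_per_server


# Hypothetical numbers: 10 servers, refreshed from 32-core to 64-core CPUs.
SERVERS = 10
PRICE_PER_CORE = 100.0     # placeholder, USD per core per year
PRICE_PER_SERVER = 4000.0  # placeholder, USD per server per year

for cores in (32, 64):
    print(
        f"{cores} cores/server: per-core ${per_core_cost(SERVERS, cores, PRICE_PER_CORE):,.0f}, "
        f"per-server ${per_server_cost(SERVERS, PRICE_PER_SERVER):,.0f}"
    )
```

Under these made-up numbers, doubling the cores per server doubles the per-core bill (from $32,000 to $64,000), while the per-server bill stays at $40,000.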

As AI continues to shape the future of IT infrastructure, Verge.io’s platform is designed to be AI-ready. George highlighted the growing importance of AI in enterprise workloads, particularly as organizations explore the use of private AI models. Verge.io is addressing the challenge by ensuring its platform can easily integrate GPUs for AI workloads.

One of the key factors that sets Verge.io apart from others in the market is its approach to migration. As George pointed out, migration is essential when transitioning to a new infrastructure. He also shared that Verge.io’s approach ensures minimal downtime, enabling businesses to shift their data and settings with greater efficiency. This streamlined migration process is key to Verge.io’s ability to deliver a seamless experience for their customers, no matter the scale.

In addition to seamless migration, Verge.io focuses heavily on reliability through its integrated platform. George pointed out that the system continuously monitors the environment, ensuring operations run smoothly even during network disruptions. For example, he described how Verge.io's platform maintained data integrity during network failures when traditional infrastructure would have struggled to do the same.

For enterprises considering storage solutions, Verge.io is leveraging Solidigm’s storage technology to optimize performance and lifespan. George shared how the platform supports various classes of SSDs, including QLC and TLC drives, and integrates them seamlessly into the infrastructure to meet workload demands. This approach ensures that organizations can optimize performance while managing their storage needs efficiently.

So, what’s the TechArena take? Verge.io’s unified infrastructure solution is reshaping the way organizations manage their IT environments. With a focus on cost predictability, AI-readiness, and seamless migration, Verge.io presents a compelling option for businesses that are looking to simplify their infrastructure and improve efficiency.

Listen to our full discussion here.

VAST Partners with Deloitte to Scale AI for the Enterprise

The AI landscape is evolving rapidly, and Deloitte and VAST Data have joined forces to drive enterprise-class AI adoption. From business strategy to cutting-edge technology, this collaboration has the potential to bring transformative value to enterprises worldwide. Why is this one to watch? With Deloitte’s deep history of guiding clients’ enterprise IT strategy and VAST’s disruptive architecture, fueled by new enterprise inference-focused solutions, the duo has the right mix of expertise and innovation to make a splash.

In a recent conversation with TechArena, Rick Scurfield, Chief Revenue Officer of VAST Data, and Stephen Brown, AI and Data Managing Director of Deloitte, discussed this collaboration and its impact on the next wave of AI growth. With 2025 and 2026 projected as the "years of scaling enterprise AI," they see customers moving past the early phase of AI, centered on experimentation, proofs of concept, and business cases, and transitioning to scale.

This will require robust, secure, and efficient AI infrastructure, leading to the integration of data-driven business value and AI-powered outcomes. The goal is not just to deploy AI in isolated systems, but to ensure that AI can drive business value across multiple functions in the enterprise. As the two leaders explained, AI’s potential is truly realized when it operates across the business as a whole—transforming processes, improving decision-making, and, ultimately, creating new avenues for growth.

Central to this transformation is the integration of data security, risk management, and auditability. As Stephen pointed out, AI applications process enormous amounts of data from both internal and external sources. This data needs to be secured, monitored, and easily auditable at every stage. VAST excels at providing a solution that ensures data integrity, security, and full transparency to the operator. In an era where data breaches and compliance are top concerns for every organization, this ability to deliver a secure and auditable environment is invaluable.

While the security collaboration alone is enough to turn enterprise heads, Deloitte and VAST are working together to leverage VAST technology to its full potential. Powered by NVIDIA GPUs, VAST’s advanced data platform enables enterprises to ingest and process massive amounts of data efficiently. This paves the way for an upcoming generation of agentic computing capable of driving significant business value through improved process automation and smarter decision-making.

So what’s the TechArena take? As we look toward massive-scale AI inference adoption and the coming integration of agentic workflows, this partnership has great potential to add significant value. Deloitte is well positioned to engage with customers on the ground, ensuring that the right workloads are handled effectively. At the same time, VAST’s continuous innovation, of which we are noted fans at TechArena, delivers tailored, scalable solutions to meet the ever-changing demands of a fast-evolving AI landscape. And with the rate of AI change driving up uncertainty, engaging with these vendors provides some much-needed risk mitigation. We are excited to learn more in the months ahead.

Watch the video here. To connect with Deloitte and VAST Data, check out their websites: deloitte.com and vastdata.com.

CloudFest 2025: Honing the Human Edge

After a smooth plane ride and a quick drive along the German autobahn, I arrived at Europa-Park. For four days, the quiet town of Rust was home to the world’s leading cloud conference, bringing together the industry’s largest players, eager start-ups, and those passionate about how cloud is changing our future.

Bringing together more than 9,000 participants from 90+ countries, including cloud service providers, system integrators, corporate IT, resellers, platform integrators, open source professionals, OEMs, and distributors, CloudFest 2025 was the place where tech met humanity. Major topics revolved around:

- Human-centric AI – How can integrating empathetic human oversight into the latest AI-driven hardware enhance performance and service delivery?

- Cybersecurity and networks – How human vigilance and expertise safeguard digital infrastructure against continuously evolving threats

- Service is everything – Innovations in products and services that deliver delight to customers through human-centered design and empathetic human interactions, making the difference in a cutthroat market

Through advanced AI technologies, 1SP Agency, together with MSM.Digital, brought Albert Einstein to life at CloudFest for a fireside chat with Soeren von Varchmin, CloudFest’s Chief Evangelist. Einstein discussed his groundbreaking theories and his perspective on modern advancements, reflected on the troubles arising from conflict and inequality, and shared his hopes for a future where reason, compassion, and cooperation guide humanity. His invitation to everyone in the audience was to never let their curiosity wane.

Lenovo made a solid case for liquid cooling in data centers. A decade ago, the company brought liquid cooling to data centers to address overall performance and sustainability. John Donovan, Executive Director & GM of MSP, explained that today’s discussion centers on what data centers are capable of, design constraints, total cost of ownership (TCO), sustainability, competitive forces, and regulatory actions. Liquid cooling is an attractive answer to the high energy spend in data centers, which consume a significant 3% of global electricity. Of that 3%, 40% goes to cooling, and those numbers are expected to quadruple by 2030. The reasons are many: increased CPU usage, more memory, smart mix, the addition of GPUs, and higher wattage, which generates more heat and requires more power to remove it. Lenovo’s answer is its Neptune technologies (DWC + Open), designed to be environmentally friendly, with hassle-free maintenance, high-quality tubing and hoses, low pump pressure, and systems that are pre-tested and shipped air-pressurized. Using warm water, a single CDU (coolant distribution unit) that can manage 400 kW of heat rejection to water consumes only 3.7 kW of power. According to Lenovo, a CDU can provide 4x the heat rejection to liquid at 10x less power than air cooling.
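The cooling figures above can be checked with a few lines of arithmetic, taking Lenovo’s quoted numbers at face value. This is a back-of-the-envelope sketch, not a data center model.

```python
# Figures as quoted in the session (taken at face value, not independently verified).
DATA_CENTER_SHARE = 0.03   # data centers' share of global electricity
COOLING_FRACTION = 0.40    # portion of data center electricity spent on cooling
CDU_HEAT_KW = 400.0        # heat rejected to water by one CDU
CDU_POWER_KW = 3.7         # electrical power that CDU draws

# Cooling alone, as a share of global electricity: 3% x 40%.
cooling_share = DATA_CENTER_SHARE * COOLING_FRACTION

# Heat moved per kW of power spent running the CDU.
heat_per_kw = CDU_HEAT_KW / CDU_POWER_KW

print(f"cooling share of global electricity: {cooling_share:.1%}")  # 1.2%
print(f"heat rejected per kW of CDU power: {heat_per_kw:.0f} kW")   # 108 kW
```

In other words, cooling alone would account for roughly 1.2% of global electricity, and the quoted CDU moves on the order of 100 kW of heat for every kW it consumes.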

Esther Spanjer, Micron’s director of channel business development, discussed how AI is stretching the limits of data centers from a performance, capacity, and energy efficiency perspective. On memory and storage, the quest for bigger memory and greater capacity continues. Within the current EDSFF (Enterprise and Datacenter Standard Form Factor) family, E1 is optimized for 1RU servers, supports data and boot use cases, and targets mainstream data center use, while E3 is optimized for 2RU servers, maximizes the power envelope, and supports a broad range of storage, memory, and specialized use cases. Micron introduced the Micron 6550 ION NVMe SSD, which it describes as the world’s fastest, most energy-efficient 60TB data center SSD. Micron says it delivers best-in-class 60TB performance, uses up to 20% less power, and can store up to 67% more per rack. It comes in 30TB and 60TB capacities with a PCIe Gen5 interface, in E3.S, E1.L, and U.2 form factors. Data gets pulled faster, which means expensive GPUs don’t sit idle. And as a side effect, more drives can be squeezed into a server: 40 E3.S SSDs in a 2U server.

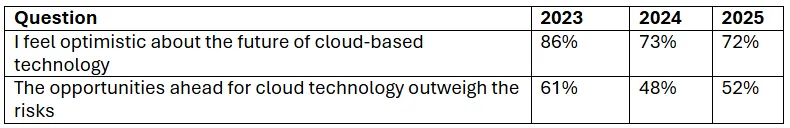

CloudFest turned to its members in 2023 and 2024 to learn about the current state and future of the industry, and it did the same this year. Brooke Edge, from Open Eye, presented a report on the state of the global cloud market and the trends for 2025. With a sample of over 600 global responses, in-depth expert interviews, and data collection running until about a week before CloudFest, the report’s results were statistically significant. The 2025 outlook is not as bright as it was in 2023, and additional stressors are contributing to the continued dip. AI is not seen as a villain, but it is not seen as the hero either, at least not yet. Here are some interesting trends in responses over the past three years:

The conclusion is that we haven’t become more pessimistic, but the causes of the industry’s concerns have intensified. Through the in-depth interviews, Open Eye also found that people feel somewhat powerless against the winds of regulation and geopolitics.
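For a sense of what a sample of roughly 600 responses can resolve, here is the standard margin-of-error calculation for a survey proportion. It assumes simple random sampling, 95% confidence (z = 1.96), and the worst case p = 0.5; the report’s actual methodology isn’t described here, so treat this as an illustration, not a statement about Open Eye’s analysis.

```python
import math


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Half-width of a normal-approximation confidence interval for a proportion."""
    if n <= 0:
        raise ValueError("sample size must be positive")
    return z * math.sqrt(p * (1 - p) / n)


print(f"n=600: ±{margin_of_error(600):.1%}")  # n=600: ±4.0%
```

Because the report had more than 600 responses, about ±4 percentage points is an upper bound under these assumptions; year-over-year shifts smaller than that would be hard to distinguish from sampling noise.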

I so enjoyed my time and learnings at CloudFest 2025, and look forward to what the event will hold in store for 2026.

Nebius and VAST Data Combine Breakthrough Tech for AI Innovation

Enterprises are seeking innovative solutions to make organizational learning curves flatter, and trusted partnerships with industry innovators will help speed adoption. One such partnership that caught my eye at GTC features two players integrating cutting-edge technologies into a powerful solution for the market. Nebius, a global AI cloud provider, and VAST Data, a leader in high-performance data solutions, are taking a truly transformative approach to AI infrastructure that is poised to give enterprises worldwide the tools they need. Daniel Bounds, CMO of Nebius, and Chris Morgan, VP of AI Solutions at VAST Data, recently shared insights with TechArena into how their collaboration is revolutionizing AI cloud infrastructure. Let’s unpack what we learned.

Nebius offers a full-stack approach to AI workloads, providing an integrated platform across distributed infrastructure. What truly sets Nebius apart is how it has constructed its software layer to ensure a robust and easy-to-use experience for customers. As Daniel emphasized, this “secret sauce” is what makes the difference. It’s not just about having oodles of GPUs, but making sure customers are able to fully leverage this valuable infrastructure through an intuitive, user-friendly experience.

This is where VAST Data comes in. Known for its high-performance data solutions, VAST’s technology is helping Nebius push the envelope even further. By integrating VAST’s technology into Nebius’ stack, the companies are enabling customers to access not only powerful GPUs, but also an optimized data environment that can handle the most demanding AI pipelines. VAST’s platform ensures that the data management of AI is handled efficiently, giving users the ability to scale their operations without compromising performance.

As AI becomes more mainstream in the enterprise, the shift from model training to model inference is a major milestone and reality to manage. Chris highlighted that this transition is where the real value of AI lies for many businesses. Enterprises are increasingly looking to incorporate AI into their day-to-day operations, not just for training models but for integrating them into known workflows to drive positive business outcomes.

When we look deeper at Nebius, we find a wide range of flexible services. Whether it’s their AI Studio, which enables on-demand inference, or a full suite of self-service and managed services, Nebius provides customers with a tailored experience based on their specific needs. As the demand for AI continues to grow, so does the need for infrastructure that can handle large-scale, complex workloads, and this is where VAST’s data platform really shines. Nebius is tapping VAST technology to address this challenge with a solution that’s not only powerful, but also scalable, adaptable, and user-friendly.

What’s the TechArena take? We’re excited to see Nebius differentiate itself with an AI-centric cloud service offering, and we’re delighted to see how it’s leveraging VAST for the enterprise. Most of all, we went into GTC seeking signs of enterprise adoption, and while we weren’t overwhelmed by scores of customer adoption stories at scale, VAST proved to be a forward-leaning player ready to deliver value by scaling inference across enterprise verticals and functions. We can’t wait to see what comes next.

For those interested in exploring Nebius’ AI cloud solutions, their platform offers multiple entry points, including AI Studio and other partner ecosystems: nebius.com. To learn more about how VAST’s high-performance data solutions can accelerate AI workloads, visit vastdata.com.

AI Benchmarks Shift as MLPerf Highlights LLM Dominance

Last week, MLCommons dropped a benchmarking bombshell with the release of MLPerf Inference 5.0 — and the implications for the AI infrastructure world are massive. As one of the most trusted benchmarking efforts in machine learning, MLPerf continues to evolve at the pace of the industry it serves. And with this latest round of nearly 20,000 new results, a surge in large language model (LLM) submissions, and several new hardware entrants, the signal is clear: inference is having a moment.

Here’s the TechArena take on what matters most.

LLMs Take the Crown from ResNet-50

In what feels like a milestone moment, the ResNet-50 era is officially over, at least in terms of benchmark popularity. MLPerf Inference 5.0 marks the first time an LLM has overtaken ResNet-50 as the most frequently submitted workload. Specifically, Llama 2 70B now leads the pack, with 2.5x more submissions than it had a year ago. The benchmarking community, often conservative in adopting new workloads, is fully embracing the age of LLMs.

Why does this matter? Because benchmarks drive optimization, and optimization drives real-world performance. The more representative the benchmarks, the more aligned vendor innovation becomes with enterprise needs.

Introducing the Biggest LLM Benchmark Yet

MLPerf 5.0 introduced Llama 3.1 405B, the largest model ever benchmarked by the organization. And yes, it’s as heavy as it sounds: long context windows, massive parameter counts, and distributed inference across accelerators. The real challenge here isn’t just throughput; it’s achieving tight latency constraints while maintaining accuracy.

A few stats that stood out:

- Median input token length: ~9,500

- Time to first token (99th percentile): 6 seconds

- Time per output token: ~175 ms (faster than human reading speed)

Translation: These benchmarks aren’t theoretical — they’re reflecting production use cases like RAG (retrieval-augmented generation), agentic AI, and high-performance LLM APIs.
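To see what those limits mean for a single request, the sketch below turns them into a latency budget. It treats the 6-second time to first token and ~175 ms per output token as worst-case bounds, and assumes roughly 0.75 English words per token, a common rule of thumb rather than an MLPerf figure; the 500-token response length is also hypothetical.

```python
TTFT_S = 6.0            # time to first token (99th percentile), from the list above
TPOT_S = 0.175          # time per output token, from the list above
WORDS_PER_TOKEN = 0.75  # assumption: rough average for English text


def time_to_last_token(output_tokens: int, ttft_s: float = TTFT_S, tpot_s: float = TPOT_S) -> float:
    """Seconds until the final token: the first token, then one token per TPOT interval."""
    if output_tokens < 1:
        raise ValueError("need at least one output token")
    return ttft_s + (output_tokens - 1) * tpot_s


tokens_per_s = 1 / TPOT_S
words_per_min = tokens_per_s * WORDS_PER_TOKEN * 60

print(f"500-token answer: {time_to_last_token(500):.1f} s")  # 500-token answer: 93.3 s
print(f"streaming rate: {tokens_per_s:.1f} tokens/s, ~{words_per_min:.0f} words/min")
```

Most of the wait for a long answer comes from the per-token term, which is why the TPOT limit matters as much as time to first token for interactive use.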

It’s Not Just About LLMs

MLPerf 5.0 also brought new benchmarks for Graph Neural Networks (GNNs) and automotive workloads:

- The GNN benchmark features a relational graph attention network (RGAT) trained on a massive heterogeneous dataset with over 5 billion edges.

- The new automotive benchmark, based on point painting and the Waymo Open Dataset, blends LiDAR and image processing — key for 3D object detection in real-time, safety-critical applications.

Both benchmarks reflect a broader point: inference is everywhere, from cloud AI services to edge deployments in cars and industrial systems.
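The phrase “point painting” refers to the published PointPainting idea: project each LiDAR point into the camera image and append the image segmentation network’s class scores at that pixel to the point, so the 3D detector sees both geometry and semantics. Below is a minimal NumPy sketch of that fusion step under simplifying assumptions (a single camera, a known 3x4 projection matrix `P` mapping LiDAR coordinates to pixels, nearest-pixel lookup); it is not the MLPerf reference implementation.

```python
import numpy as np


def paint_points(points_xyz: np.ndarray, seg_scores: np.ndarray, P: np.ndarray) -> np.ndarray:
    """Append per-pixel segmentation scores to LiDAR points.

    points_xyz: (N, 3) LiDAR points.
    seg_scores: (H, W, C) class scores from an image segmentation network.
    P:          (3, 4) projection from homogeneous LiDAR coordinates to pixels (assumed known).
    Returns an (M, 3 + C) array for the M points that land inside the image.
    """
    n = points_xyz.shape[0]
    homo = np.hstack([points_xyz, np.ones((n, 1))])  # (N, 4) homogeneous coordinates
    proj = homo @ P.T                                 # (N, 3) rows of (u*z, v*z, z)

    # Keep only points in front of the camera before dividing by depth.
    front = proj[:, 2] > 0
    pts, proj = points_xyz[front], proj[front]
    u = np.floor(proj[:, 0] / proj[:, 2]).astype(int)
    v = np.floor(proj[:, 1] / proj[:, 2]).astype(int)

    # Drop points that project outside the image bounds.
    h, w, _ = seg_scores.shape
    inside = (u >= 0) & (u < w) & (v >= 0) & (v < h)

    # Nearest-pixel lookup of class scores, concatenated onto xyz.
    scores = seg_scores[v[inside], u[inside]]  # (M, C)
    return np.hstack([pts[inside], scores])


# Toy example: identity camera, so pixel = (x / z, y / z).
P = np.hstack([np.eye(3), np.zeros((3, 1))])
seg = np.random.default_rng(0).random((2, 2, 3))  # 2x2 image, 3 classes
points = np.array([[0.5, 0.5, 1.0], [1.5, 0.5, 1.0], [5.0, 5.0, 1.0], [0.0, 0.0, -1.0]])
painted = paint_points(points, seg, P)
print(painted.shape)  # (2, 6)
```

Of the four toy points, one projects outside the image and one sits behind the camera, so only two come back painted, each carrying its xyz plus three class scores.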

The Hardware Evolution: FP4, Virtualization, and Liquid Cooling

From AMD’s Instinct MI325X and NVIDIA’s GB200 to Broadcom’s push for virtualized GPUs and Solidigm’s liquid-cooled SSDs, MLPerf 5.0 submissions captured an accelerating hardware shift.

We’re seeing:

- Adoption of FP4 (4-bit floating point) for LLMs, pushing performance up to 3x while meeting tight accuracy requirements

- Virtualized inference platforms from Broadcom and others that aim to mirror what VMware did for CPUs in the early 2000s

- Liquid-cooled servers entering the mainstream, as GPUs and SSDs hit thermal thresholds that demand new data center designs

This round wasn’t just about faster silicon — it was about smarter system-level design.
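For readers new to FP4, the sketch below shows the basic idea behind block-scaled 4-bit floating point, assuming the E2M1 layout (1 sign bit, 2 exponent bits, 1 mantissa bit), whose non-negative values are 0, 0.5, 1, 1.5, 2, 3, 4, and 6. A block of weights shares one scale so its largest magnitude maps to 6, and each value snaps to the nearest representable level. Production FP4 formats define their own block sizes, scale encodings, and rounding rules, so treat this as an illustration of why FP4 needs careful accuracy checks, not as any vendor’s implementation.

```python
import numpy as np

# Non-negative magnitudes representable in FP4 E2M1.
E2M1_LEVELS = np.array([0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0])


def quantize_fp4_block(block):
    """Quantize a 1-D block to E2M1 levels with one shared scale.

    Returns (codes, scale), where codes are signed E2M1 values and
    codes * scale approximates the input. Ties go to the smaller level
    (argmin picks the first match), a simplification of real rounding modes.
    """
    x = np.asarray(block, dtype=np.float64)
    amax = np.abs(x).max()
    scale = amax / E2M1_LEVELS[-1] if amax > 0 else 1.0
    mags = np.abs(x) / scale
    nearest = np.abs(mags[:, None] - E2M1_LEVELS[None, :]).argmin(axis=1)
    codes = np.sign(x) * E2M1_LEVELS[nearest]
    return codes, scale


weights = np.array([0.1, -0.7, 1.2, 3.0])
codes, scale = quantize_fp4_block(weights)
print(codes.tolist())            # [0.0, -1.5, 2.0, 6.0]
print((codes * scale).tolist())  # [0.0, -0.75, 1.0, 3.0]
```

In this toy block, 0.1 collapses to zero and 1.2 comes back as 1.0, which is why FP4 results have to demonstrate they still meet the benchmark’s accuracy requirements.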

The Industry Is All In

Submissions came from 23 organizations: AMD, ASUSTeK, Broadcom, CTuning, Cisco, CoreWeave, Dell, FlexAI, Fujitsu, GATEOverflow, Giga Computing, Google, HPE, Intel, Krai, Lambda, Lenovo, MangoBoost, NVIDIA, Oracle, Quanta Cloud Technology, Supermicro, and Sustainable Metal Cloud. The “open” division also gained traction, giving software-focused companies a chance to shine by showcasing algorithmic and architectural innovations outside strict benchmark constraints.

As for data center decision-makers, MLPerf continues to offer a valuable lens for understanding how platforms evolve over time. Submitters now see MLPerf as more than a race — it’s a way to validate software stacks, evaluate scaling strategies, and compare performance under realistic production constraints.

With plans underway to replace older models like GPT-J, expand low-latency LLM scenarios, and broaden the edge inference suite, MLCommons is already looking ahead to version 5.1. And if the growth we saw this round continues, the next wave of results will reflect even more LLM momentum, cross-industry relevance, and workload diversity.

At TechArena, we’ll continue tracking this fast-moving space and bringing clarity to the deluge of data. Because when benchmarks are this influential, they don’t just reflect the market — they help shape it.

Want to explore the full MLPerf 5.0 dataset? Dig into the Tableau dashboards now via MLCommons.org.

AI Ops & Autonomous Networks: The Future of Telecom

In this Data Insights episode, Andrew De La Torre discusses how Oracle is leveraging AIOps to enable automation and optimize operations, transforming the future of telecom.

Edge AI Silicon Heats Up as MemryX Closes $44M Series B

MemryX, an Edge AI startup that has shared its vision on TechArena, announced today that it has secured $44 million in its latest Series B round. This investment underscores the market’s continued appetite for investing in AI processing capabilities, including those aimed directly at the edge.

Founded in 2019, MemryX specializes in developing hardware and software solutions for edge acceleration. Their technology integrates memory and processing units to achieve optimal efficiency and flexibility, utilizing a single standard connection between the host processor and MemryX chip to limit data bottlenecks. This low-latency solution has the potential to expand edge applications where data flow is mission-critical, addressing the increasing demand for efficient AI processing at the edge, where data is generated.

The recent funding round saw participation from new and existing investors, including notable firms such as HarbourVest Partners and M Ventures. This infusion of capital is expected to accelerate MemryX's efforts in scaling their technology and expanding their market presence.

As AI continues to permeate various industries, the need for efficient, localized processing becomes paramount. It’s a fantastic time to be in the silicon industry, and MemryX's innovative solutions position them to play a significant role in the future of AI acceleration. They are meeting evolving demands, giving customers more tools to deliver efficient processing. Bravo!

Dryad Adds Drones to Speed Up Wildfire Detection

At TechArena, we’re always watching for companies that apply cutting-edge technology to solve complex, high-stakes problems — and Dryad Networks continues to deliver. This week, the company unveiled a major step forward in early wildfire detection with the successful demonstration of its new wildfire monitoring drone prototype at the ProSion Fire Brigade in Eberswalde, Germany.

Dryad is already known for its solar-powered, LoRaWAN-based Silvanet sensor network, one of the world’s largest deployments of its kind, which detects wildfires in their earliest smoldering stages. This latest advancement takes its wildfire intelligence platform into the air.

Sensors Meet Drones

The Silvaguard drone prototype is designed to autonomously investigate alerts triggered by Dryad’s sensor network. Once a sensor detects a potential ignition, the drone can quickly fly to the location, equipped with thermal and optical cameras to visually confirm the fire. This not only helps reduce false alarms but also gives emergency responders real-time situational awareness of an emerging threat.

Dryad’s approach has always been about interoperability and resilience. The company’s sensor mesh network, leveraging LoRaWAN for low-power, long-range communication, enables ultra-early wildfire detection — often before there’s visible smoke. Now, by integrating aerial surveillance into the platform, Dryad has created a layered response system that can reduce emergency response times and improve firefighting efficiency.
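The layered detect-then-verify flow described above can be sketched as a small triage function. Everything here is hypothetical, including the `ignition_score` field, the thresholds, and the `fly_and_observe` callback; Dryad has not published this logic, and the sketch only shows how a drone check can sit between a ground-sensor alert and a call to responders.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class SensorAlert:
    sensor_id: str
    lat: float
    lon: float
    ignition_score: float  # hypothetical 0..1 likelihood reported by a sensor


@dataclass
class DroneObservation:
    max_temp_c: float  # hottest reading from the thermal camera
    smoke_seen: bool   # result of the optical check


def triage(
    alert: SensorAlert,
    fly_and_observe: Callable[[float, float], DroneObservation],
    alert_threshold: float = 0.6,  # hypothetical
    hot_spot_c: float = 60.0,      # hypothetical
) -> str:
    """Decide what to do with one sensor alert, using a drone flight to confirm it."""
    if alert.ignition_score < alert_threshold:
        return "log only"
    obs = fly_and_observe(alert.lat, alert.lon)
    if obs.max_temp_c >= hot_spot_c or obs.smoke_seen:
        return "confirmed: notify responders"
    return "not confirmed: keep monitoring"


# Stub drone for illustration: reports a hot spot wherever it is sent.
def hot_drone(lat: float, lon: float) -> DroneObservation:
    return DroneObservation(max_temp_c=85.0, smoke_seen=False)


print(triage(SensorAlert("s-17", 0.0, 0.0, 0.9), hot_drone))  # confirmed: notify responders
```

The drone is only dispatched for alerts above the threshold, and responders are only called when the aerial check agrees, which is the false-alarm reduction the layered design is after.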

Smart Forests, Smarter Infrastructure

This is another example of distributed intelligence making a real-world impact. It’s a compelling use case for edge computing, IoT, and drone autonomy, delivering tangible benefits in sustainability, resource protection, and public safety. As climate change continues to amplify the frequency and intensity of wildfires globally, innovations like Dryad’s are essential infrastructure.

Dryad says the drone prototype is just the beginning. The company is now working to expand the integration between its sensor network, drones, and AI-based alerting platform to create a fully autonomous wildfire detection and verification system. If successful, this could become a blueprint for wildfire-prone regions around the globe.

So, what’s the TechArena Take? As wildfire seasons grow longer and more dangerous, this type of innovation doesn’t just matter — it could be the difference between containment and catastrophe.

See our earlier coverage of Dryad here.

AI Dev Tools, Agentic Trends & Cloudflare’s Evolving Stack

At GTC 2025, Cloudflare discusses empowering developers, managing agentic AI workflows, and simplifying application management as AI infrastructure rapidly evolves.

Unpacking The AI Data Factory – A Storage Perspective

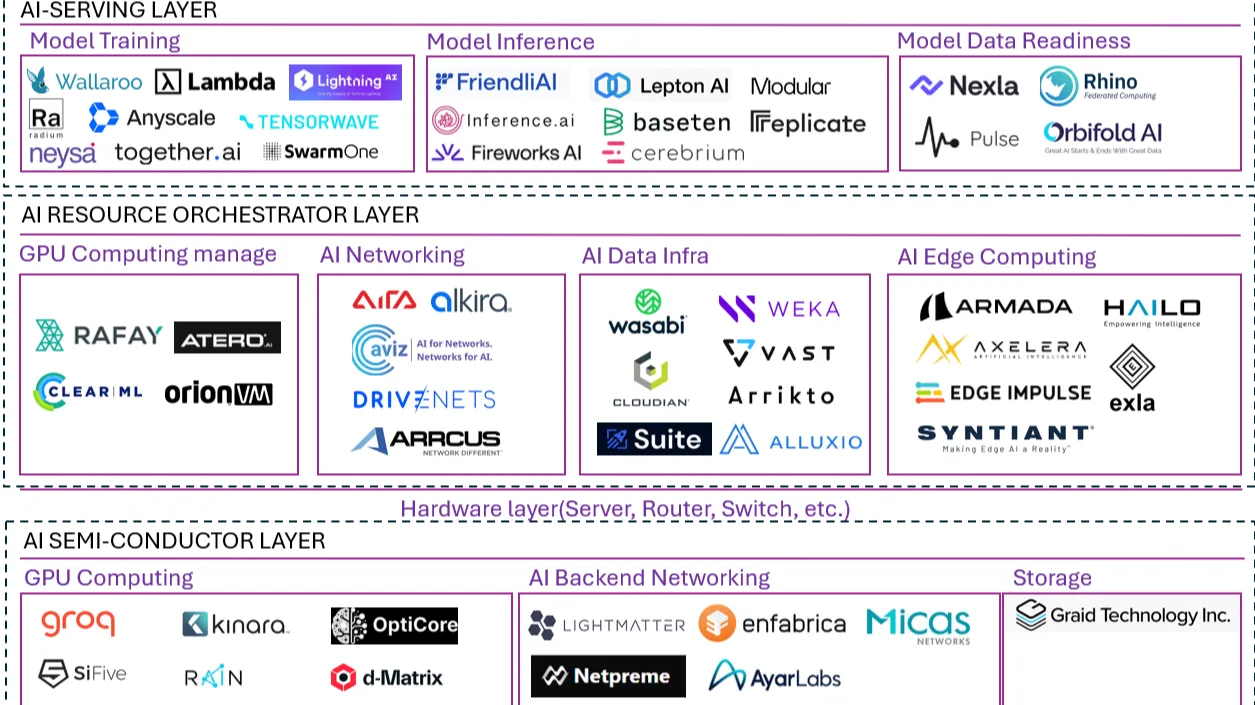

Last week’s GTC event featured so many updates in the world of computing, and we’ll be unpacking them layer by layer over the next few weeks on TechArena. One story that caught my eye was NVIDIA’s view of the AI Factory, specifically its callout of the key vendors innovating for AI-centric infrastructure delivery. Here, you can see a who’s who of innovators across the AI-serving, AI-resource orchestrator, and AI-semiconductor layers. What is fascinating about this grouping is how many disruptors are present and how it reflects the change in architectural foundations for an AI-centric world.

In today’s piece, I want to focus on storage, as data movement across a distributed landscape is critical to the entire AI pipeline, and it is becoming even more important given the sustained data access that agentic computing requires. Last week at GTC, we featured a major collaboration announcement from VAST Data, showcasing a deep engagement with NVIDIA to deliver enterprise inference capabilities using VAST’s proven data platform. NVIDIA and VAST have a long history dating back to work in the HPC arena, and it shows in this announcement, as VAST is providing a core technology path that NVIDIA needs: simplified, broad adoption of generative AI workloads. While this element of their broader collaboration is just rolling out, expect to hear more about enterprise utilization of these solutions in the months ahead.

We also took a deeper look at Weka’s announcement of a new NAND-based memory grid architecture designed to deliver “across-the-network” performance with fast response for data at scale. Weka’s solution is built on accessing distributed data across the cloud and at scale, so this advancement carries the credibility of integrating into a solution that already assumes a distributed architecture. It was cool to see Weka recognize that network data rates and SSD latencies have shifted so fundamentally that long-held truths about local vs. networked data have become legacy.

If we dig a bit deeper into NVIDIA’s map, we see that Graid has been called out for storage hardware innovation. Graid sits notably alone in the storage box, the only solo vendor across the entire landscape, which is why I wanted to dig in further. NVIDIA discussed GPU-accelerated storage extensively at the conference, and I soon learned why Graid was getting this opportunity in the spotlight. Graid has delivered exactly on NVIDIA’s vision with its SupremeRAID solution, which provides software-defined storage control on a GPU for maximum SSD performance. This allows RAID to be used within AI clusters without the I/O bottlenecks found in traditional RAID architectures. Again, we’re seeing traditional architectural models turned on their heads to deliver new capability, and once again a disruptor is taking center stage with an innovative solution for the market.
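Part of why parity RAID maps well onto a GPU is that the core math is simple and data-parallel. The sketch below illustrates RAID 5-style XOR parity, assuming single-parity stripes of equal-length blocks. It is not Graid’s SupremeRAID code and runs on the CPU; `xor_blocks` and `rebuild_missing` are hypothetical helper names chosen for illustration.

```python
from functools import reduce


def xor_blocks(blocks: list[bytes]) -> bytes:
    """XOR equal-length blocks byte by byte (RAID 5-style parity)."""
    if not blocks:
        raise ValueError("need at least one block")
    size = len(blocks[0])
    if any(len(b) != size for b in blocks):
        raise ValueError("all blocks in a stripe must be the same length")
    # Each output byte depends only on the bytes at the same offset in every
    # block, which is what makes this work easy to spread across many threads.
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def rebuild_missing(surviving: list[bytes], parity: bytes) -> bytes:
    """Recover one lost data block from the surviving blocks plus parity."""
    return xor_blocks(surviving + [parity])


stripe = [b"\x01\x02\x03\x04", b"\x10\x20\x30\x40", b"\xaa\xbb\xcc\xdd"]
parity = xor_blocks(stripe)
# Pretend the drive holding stripe[1] has failed and rebuild it.
recovered = rebuild_missing([stripe[0], stripe[2]], parity)
print(recovered == stripe[1])  # True
```

Real controllers also handle dual parity (RAID 6), partial-stripe writes, and rebuild scheduling, none of which is modeled here.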

But let’s go under the covers one step further. We’ve written a lot about liquid cooling on TechArena, and of course we’re seeing a massive migration to liquid cooling on accelerated hardware. When we think of this, we typically think about GPUs. But what about the other components inside a server? One GTC announcement at the heart of storage shows how broadly accelerated computing is putting new demands on innovation across the box, and it came from Solidigm. The purple monster of SSD capacity delivered a breakthrough liquid-cooled enterprise SSD designed specifically for AI deployments. The innovation eliminates the fan used to cool an in-server SSD, replacing it with a cold-plate alternative that cools both sides of the SSD while still allowing the device to be hot-swapped. The first design based on this new part will be delivered in a ruler form factor, and I expect we’ll start seeing it integrated into many of the disruptors on the list, showcasing both the importance of all-SSD configurations in AI clusters and the rate of innovation in every element of AI infrastructure.

Like what you’re seeing? Check out more GTC unpacking posts in the days ahead.

Deloitte and VAST on the Next Stage of Enterprise AI

Deloitte and VAST Data share how secure data pipelines and system-level integration are supporting the shift to scalable, agentic AI across enterprise environments.

With Augmented Memory Grid, Weka Challenges Old Guard Thinking

A wonderful thing about the GTC conference is that there are pockets of news to unpack across the data center computing landscape, and one such story is WEKA’s announcement of a new Augmented Memory Grid, delivered through the integration of its WEKA Data Platform software with NVIDIA accelerated computing. Stories like this get our attention at TechArena because they demonstrate shifts in the computing landscape that are often overlooked.

To understand just what WEKA has delivered, we have to look at a broader trend in computer interface advancement: the industry standards that connect components within and across systems. Over the past five years, Ethernet data rates have climbed from 25 Gb/s to 40, 100, 200, 400 Gb/s and beyond. In a similar timeframe, PCIe x16 bandwidth has moved from 64 GB/s to a forecasted 256 GB/s with PCIe 7.0 (which will also influence future generations of NVMe transfer rates), and upcoming MR-DIMMs will bring increased memory bandwidth and transfer rates. But none of these interfaces has advanced as quickly as Ethernet.
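A quick calculation makes the comparison concrete. Comparing growth multiples rather than absolute figures sidesteps the Gb/s vs. GB/s unit mismatch. The endpoints are the ones quoted above; treating 25 Gb/s as the Ethernet starting point is an assumption about which span the comparison covers.

```python
# Endpoints quoted in the paragraph above. Ethernet values are per-port line
# rates in Gb/s; PCIe values are x16 bandwidth in GB/s. Because we only compare
# ratios, the different units do not matter.
ethernet_gbps = {"start": 25, "latest": 400}
pcie_x16_gbytes = {"start": 64, "PCIe 7.0 (forecast)": 256}


def growth(old: float, new: float) -> float:
    """Return how many times larger the new rate is than the old one."""
    return new / old


eth_x = growth(ethernet_gbps["start"], ethernet_gbps["latest"])
pcie_x = growth(pcie_x16_gbytes["start"], pcie_x16_gbytes["PCIe 7.0 (forecast)"])
print(f"Ethernet: {eth_x:.0f}x, PCIe x16: {pcie_x:.0f}x")  # Ethernet: 16x, PCIe x16: 4x
```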

What does this represent? Data movement inside the platform is advancing, but network data rates are advancing faster, so the long-held assumption that keeping data local beats transferring it across the network may be antiquated, at least at this moment in our architectural paradigm.
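The argument can be illustrated with a back-of-envelope model in which each read costs a fixed latency plus size divided by bandwidth. Every number below is a hypothetical placeholder, not a measurement from WEKA or any vendor, and `ReadPath` is an illustrative name. The point is only the shape of the result: small reads favor a local drive, while large reads striped across several networked drives can finish sooner once the link outruns a single SSD.

```python
from dataclasses import dataclass


@dataclass
class ReadPath:
    """A read path modeled as fixed latency plus size / bandwidth."""
    name: str
    latency_s: float      # per-request latency, seconds
    bandwidth_bps: float  # sustained throughput, bytes per second

    def read_time(self, nbytes: int) -> float:
        return self.latency_s + nbytes / self.bandwidth_bps


# HYPOTHETICAL figures, chosen only to illustrate the trade-off.
DRIVE_BW = 14e9          # one NVMe drive, bytes/s (assumed)
LINK_BW = 400e9 / 8      # one 400 Gb/s Ethernet port = 50 GB/s
local = ReadPath("local NVMe", latency_s=80e-6, bandwidth_bps=DRIVE_BW)
# Remote read striped over 8 drives: throughput is capped by the slower of
# the drives and the link, plus an assumed 10 microseconds of network latency.
remote = ReadPath("networked NVMe", latency_s=90e-6,
                  bandwidth_bps=min(8 * DRIVE_BW, LINK_BW))

for size in (4 * 1024, 1_000_000_000):
    winner = min((local, remote), key=lambda p: p.read_time(size))
    print(f"{size:>13,} bytes: local {local.read_time(size) * 1e3:.3f} ms, "
          f"remote {remote.read_time(size) * 1e3:.3f} ms -> {winner.name}")
```

Changing the assumed drive count, link speed, or latencies moves the crossover point, which is exactly why faster networks shift the local-vs.-remote calculus.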

WEKA, an expert in managing distributed data, has seized on this opportunity with Augmented Memory Grid. The technology taps standard NVMe drives to deliver fast data capacity at surprisingly low latency, forming a far memory tier for accelerated inference clusters. Because it is based on NVMe, this augmented memory grid also offers persistence. Don’t let that pairing of “memory” and “persistence” distract you, though: this is not 3D XPoint or Optane, just standard NAND-based storage operating at fantastic speeds.
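To make the idea of a far memory tier concrete, here is a generic two-tier cache sketch, assuming a small “near” tier (think GPU memory) backed by a larger “far” tier (think pooled NVMe). It is not WEKA’s design: the class name `TieredCache`, the LRU eviction policy, and the `compute` callback standing in for re-running prefill are all illustrative assumptions. The key behavior is that entries evicted from the near tier are spilled to the far tier rather than discarded, so a later request can reload them instead of recomputing them.

```python
from collections import OrderedDict
from typing import Callable, Hashable


class TieredCache:
    """Hypothetical two-tier cache: LRU near tier spilling into a far tier."""

    def __init__(self, near_capacity: int, compute: Callable[[Hashable], object]):
        self.near_capacity = near_capacity
        self.near: OrderedDict = OrderedDict()  # LRU order: oldest entry first
        self.far: dict = {}                     # assumed large enough to hold every spill
        self.compute = compute                  # stand-in for recomputation (e.g. prefill)

    def get(self, key: Hashable) -> tuple[object, str]:
        """Return (value, source), where source is 'near', 'far', or 'computed'."""
        if key in self.near:
            self.near.move_to_end(key)  # mark as most recently used
            return self.near[key], "near"
        if key in self.far:
            value, source = self.far.pop(key), "far"
        else:
            value, source = self.compute(key), "computed"
        self._admit(key, value)
        return value, source

    def _admit(self, key: Hashable, value: object) -> None:
        self.near[key] = value
        if len(self.near) > self.near_capacity:
            old_key, old_value = self.near.popitem(last=False)
            self.far[old_key] = old_value  # spill instead of dropping


cache = TieredCache(near_capacity=2, compute=lambda prefix: f"kv({prefix})")
print(cache.get("a")[1])  # computed
print(cache.get("b")[1])  # computed
print(cache.get("c")[1])  # computed; "a" spills to the far tier
print(cache.get("a")[1])  # far
```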

WEKA claims a 3x capacity improvement over existing designs for large-model support, which matters given the longer context windows needed for functions like agentic computing and its inherently expanded autonomous decision-making. Performance claims offered in support of the technology include a 41x improvement in time to first token when processing 105,000 tokens and a 24% reduction in the cost of token throughput. Those claims are significant at the scale of today’s infrastructure deployments and are another example of great engineering delivering pragmatic value to customers.
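Why do long contexts strain capacity? In a transformer, the key-value (KV) cache grows linearly with context length. The sketch below uses the standard size formula; the model shape (80 layers, 8 KV heads of dimension 128, FP16 cache) is a hypothetical example rather than any model WEKA tested, and the estimate ignores framework overhead and covers a single sequence.

```python
def kv_cache_bytes(n_tokens: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_elem: int = 2) -> int:
    """Transformer KV-cache size: one key and one value vector
    per layer, per KV head, per token."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem * n_tokens


# HYPOTHETICAL model shape; substitute the config of the model you serve.
size = kv_cache_bytes(n_tokens=105_000, n_layers=80, n_kv_heads=8, head_dim=128)
print(f"{size / 1e9:.1f} GB for one 105,000-token context")  # 34.4 GB for one 105,000-token context
```

Multiply that by concurrent users and it becomes clear why a fast far tier that avoids recomputing evicted context is attractive.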

Building a Full-Stack AI Cloud with Nebius and VAST

This video explores how Nebius and VAST Data are partnering to power enterprise AI with full-stack cloud infrastructure—spanning compute, storage, and data services for training and inference at scale.
