Solidigm, Dell Discuss AI Data Challenges at GTC

During GTC, Solidigm’s Scott Shadley and Dell’s Rob Hunsaker, director of engineering technologists, discussed how Dell is tackling the challenges of AI data infrastructure with cutting-edge solutions.

Ampere Receives A $6.5 Billion Exit

Earlier this week, Ampere announced that it had been acquired by Softbank, the Tokyo-based conglomerate with deep investments across semiconductors, AI and robotics, telco and more. This ends the startup’s independent quest to drive Arm-based silicon innovation deep into the data center and edge and opens a new chapter for the 1,400-member team as part of a much larger and more powerful entity. With the acquisition, Oracle and Carlyle, Ampere’s lead investors, have sold their stakes in the company.

At its launch, Ampere delivered a shot across the bow of x86 dominance in the data center landscape, introducing high-core-count processors for data center workloads based on the Arm core. Their focus was on balancing performance and efficiency at scale, with a modern architecture designed specifically for the cloud. This has played out in the market with mixed results, with highs of Ampere gaining traction within OCI (note Oracle investment) as a wedge against x86 alternatives. More recently, Ampere had seen revenue slump from over $46 million in 2023 to just over $14 million in 2024 as data center operators’ attention shifted to accelerated computing to fuel AI, and a value proposition of large quantities of efficient cores became less urgent.

$6.5 Billion – That's a Lot of Cores

When looking at this deal, we need to ask why Softbank, a very savvy investor group, spent $6.5 billion for a company generating $14.6 million in revenue. The answer likely lies in a few broader strategic trends in the market that trace from the world's governments to this week’s GTC conference, and to the limited supply of savvy microprocessor designers relative to industry demand.

At GTC this week, Jensen Huang made clear that the unrelenting demand for computing is growing at an exponential rate. Dismissing the perceived threat posed by the new levels of efficiency of LLMs like DeepSeek, Jensen clarified that these reasoning models require a stunning 100X more compute than previously forecast to deliver on their market opportunity. This compute demand will, of course, in part be satisfied by NVIDIA GPUs like the upcoming Blackwell Ultra and Vera Rubin models. But customers are getting increasingly nervous about continued “one basket” investment with team green. We’ve commented on this recently in our coverage of Cerebras, the high-powered AI ASIC startup that is delivering some breakthrough performance, and we expect to see a groundswell of growth across accelerator alternatives as large players start chipping away at that 100X capacity demand.

Which customers may have this on their radars? Well, a first stop may be the recent announcements of governments stepping aggressively into the AI game, most notably Stargate (the U.S. government initiative announced earlier this year). We’d also look at something Softbank announced themselves, Cristal Intelligence, a bold move for AI adoption in the Japanese market. Both deals featured OpenAI collaborations. Both also had another highly featured vendor: Arm. Arm and Softbank have a long history together, with Softbank maintaining 90% control of the company’s equity. Arm was also notable in the Stargate announcement, and many took their presence as a reflection of their core integration into Grace Blackwell platforms, bringing an efficient head node core in balance with Blackwell GPUs. The company was more prominently featured within the Cristal Intelligence announcement, being called out as a strategic innovator for the new “SB Open AI Japan” joint venture that is seeking to unleash agentic computing to automate over 100 million workflows for Softbank group companies.

And agentic computing takes us to our third topic – a dramatic shortage of talented semiconductor designers within the industry to serve this 100X ramp. In fact, this was a key theme at Synopsys’ Executive Forum this week, where the firm rolled out their strategy for agentic semiconductor engineering assistance to help the industry fill this gap. We’ll be delivering more on this story in the coming days, but needless to say, with demand to shrink component designs from historic windows of 7 years down to one year from concept to deployment, the semiconductor arena requires an infusion of engineering cycles as the entire industry innovates the semiconductor design workflow.

With Ampere, Softbank gains just that with a 1,100-member CPU design team to match their architecture design team within Arm, filling what is likely a strategic talent gap on the path to market. This marries perfectly with the large customer collaborations rolling out at a rapid pace and potentially blends with the much-rumored direct-from-Arm product portfolio expected by the industry later this year.

So what’s the TechArena take?

We’ve been covering Ampere since the foundation of our platform and are excited to see the next chapter for the company and its leadership. I, for one, am intrigued by how the two firms will work under one Softbank umbrella. And, most of all, I’m led into conjecture space where I see the mounting pressure for more competition in the accelerator arena. Reading through the ambitions of the Cristal Intelligence initiative, I see Arm being positioned for much more than efficient core delivery for this landscape. Arm’s CEO, Rene Haas, has a history in GPU product oversight in the seven-year tenure at NVIDIA preceding his move to take the helm at Arm. He’s tapped key talent from NVIDIA to help him lead that charge. Now we’ve got Renee James, a very savvy semiconductor executive with decades of experience in understanding both microprocessor delivery and full stack optimization at Intel dating back to her time as Andy Grove’s protege, growing what seems to be tighter alignment with team Haas.

Does this deliver an opportunity for tech innovation that we have yet to imagine? We can’t wait to find out what comes next.

AI, Edge, and Power: Key Takeaways from MWC Panel Discussion

At Mobile World Congress 2025 in Barcelona, the discussion around edge computing, data centers, and AI took center stage, and I had the honor of moderating an engaging panel featuring leaders from Ansys, Ampere, and Rebellions.

Ansys CTO Jayraj Nair, Ampere Chief Product Officer Jeff Wittich, and Rebellions CEO Sunghyun Park shared valuable insights on the evolving landscape of AI, hardware innovation, and the challenges of modern computing.

According to Sunghyun, 2025 is shaping up to be a pivotal year for the widespread adoption of generative AI, especially in the enterprise sector. As AI continues to evolve, the focus is shifting from AI R&D to the practical implementation of AI in business operations, with a strong emphasis on inference.

Meanwhile, Ampere has been focused on solving the power challenges associated with AI’s increasing presence in cloud and data centers, as well as at the edge and endpoint devices. As AI models expand, so too does the need for more efficient hardware that can deliver high performance while managing power and space constraints. Jeff pointed out that these challenges aren’t limited to data centers, but are also now pervasive in telco environments, where AI models are also deployed.

With advances in simulation engineering, companies like Ansys are helping accelerate AI adoption. Their work enables faster, more efficient design of critical factors, such as signal integrity, power integrity, and thermal performance across multi-scale models. As chiplet architectures and multilayer designs become more common, simulation tools will help engineers address challenges, such as voltage drops and thermal stress, which can negatively impact performance. This multi-physics simulation engineering ensures that silicon can handle the demands of AI-driven systems.

As the conversation continued, the panelists highlighted a major turning point for AI adoption in 2025: its seamless integration into all enterprise applications. Rather than users actively seeking out AI tools, AI will become an invisible force running in the background, embedded in everyday software. As AI-powered systems work behind the scenes to enhance productivity, they will increasingly rely on autonomous, machine-to-machine interactions, reducing human involvement. This shift will fuel tremendous demand for AI hardware and software across industries.

Jeff emphasized the need for collaboration and open-source technologies. He pointed out that without strong partnerships, progress in AI and silicon would be limited. The discussion highlighted how AI ecosystems require integrated efforts to keep pace with technological advancements.

Jayraj stressed the importance of academic partnerships in shaping the next generation of engineers. As AI hardware and software become more complex, specialized curriculum is essential for preparing future tech leaders.

The conversation then shifted to the global stage, particularly the geopolitical implications of AI investments. The EU’s $200 billion InvestAI initiative raised important questions about the risks and opportunities of such investments. While Sunghyun acknowledged uncertainties in global dynamics, he emphasized the importance of collaborating to secure second-source technologies and ensure resilience in the supply chain.

Looking ahead, the panel agreed that power efficiency and sustainability would remain significant challenges. As AI models grow, the demand for energy will rise, but innovations in low-power CPUs and efficient hardware will help address these issues. Despite the hurdles, the panelists were optimistic about the future, envisioning a world where AI is seamlessly integrated into every device and workload, transforming industries worldwide.

So, what’s the TechArena take? The future of AI is unfolding, and the path ahead is filled with opportunity.

For a deeper dive into the topics we discussed, watch the video here.

VAST & NVIDIA on the Insight Engine-DGX Collaboration

At GTC 2025, VAST’s John Mao and NVIDIA’s Tony Paikeday discuss their recent announcement and how AI infrastructure is evolving to meet enterprise demand, from fine-tuning to large-scale inferencing.

The Next Frontier of AI: Key Insights from AMD’s Salil Raje

While at Mobile World Congress (MWC) in Barcelona, I had the delightful opportunity to chat with Salil Raje, senior vice president and general manager of the Adaptive and Embedded Group at AMD.

During our fireside chat, Salil shared some exciting insights about the future of AI at the edge—one of the most transformative trends in technology today.

Here are five key takeaways from Salil’s conversation at MWC.

1. AI at the Edge: A Paradigm Shift

While AI’s rise in the cloud captured attention in 2024, Salil anticipates that things will shape up differently in 2025, with the real transformation lying at the edge.

“AI will be everywhere—from satellites to devices,” he said.

As AI moves closer to users and devices, it enables real-time data processing, reducing latency and improving decision-making. This shift is crucial for industries like healthcare and automotive, where speed and efficiency are vital.

2. The Power of Federated Learning

Federated learning is a game-changing technology that powers edge AI. It allows devices to process data locally and send only necessary updates to the cloud, where the model weights are coalesced and then sent back to the edge. This minimizes data transfer and improves decision-making speed.
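The round trip described above (train locally, send only updates, combine them centrally, send the result back) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not AMD's implementation: the one-parameter model `y ≈ w * x`, the helper names `local_update`, `federated_average`, and `train`, the learning rate, and the sample data are all hypothetical. The combining step weights each device's result by its sample count, in the spirit of federated averaging.

```python
from typing import List, Tuple

Samples = List[Tuple[float, float]]  # (x, y) pairs that never leave the device


def local_update(w: float, samples: Samples, lr: float = 0.1) -> float:
    """Run one gradient-descent step for y ≈ w * x using only on-device data."""
    grad = sum(2 * (w * x - y) * x for x, y in samples) / len(samples)
    return w - lr * grad


def federated_average(updates: List[Tuple[float, int]]) -> float:
    """Combine (weight, sample_count) pairs into one sample-weighted average."""
    total = sum(count for _, count in updates)
    return sum(weight * count for weight, count in updates) / total


def train(devices: List[Samples], rounds: int = 50) -> float:
    """Repeat: broadcast the global weight, update locally, average centrally."""
    w = 0.0  # initial global model
    for _ in range(rounds):
        # Each device returns only its updated weight and how much data it used.
        updates = [(local_update(w, data), len(data)) for data in devices]
        w = federated_average(updates)
    return w


# Hypothetical data: two edge devices observe the same relationship y = 3x.
devices = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(1.0, 3.0), (3.0, 9.0), (2.0, 6.0)],
]
global_weight = train(devices)
```

Only a single number per device crosses the network in each round here; the raw `(x, y)` samples stay local, which is the property the paragraph above attributes to federated learning.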

3. Revolutionizing the Automotive Industry

In the automotive sector, AI at the edge is transforming not just autonomous driving, but also safety and driver experience. Salil mentioned AMD’s partnership with Subaru, which centers on reducing fatalities to zero by 2030. Through AI, Subaru’s safety systems are becoming more intelligent, processing real-time data for faster decision-making. Additionally, AI companions are enhancing the driving experience, personalizing interactions and improving safety.

4. AI in Healthcare: Beyond Diagnosis

AI’s impact on healthcare is already irrefutable, but Salil highlighted how AI and robotics are taking things a step further. AI-driven exoskeletons, for example, are helping individuals who have lost a limb regain mobility and functionality, offering a new level of independence and improving their quality of life. AMD’s partnership with Hiroshima University utilizes AI to improve rates of early detection of cancer, while their partnership with Clarius aims to enhance diagnostics through advanced portable ultrasound imaging techniques. These innovations show how AI at the edge is improving not just diagnostics, but patient care in real time.

5. The Silicon Behind the Revolution

To support AI at the edge, powerful, specialized hardware is essential. Unlike cloud AI, which relies on massive CPUs and GPUs, edge devices require more compact and efficient solutions. Salil highlighted AMD’s innovative hardware, such as the Versal AI Gen 2, which integrates CPUs, GPUs, and FPGAs into a single platform designed for edge workloads. This hardware helps industries efficiently process complex data while meeting size, power, and cost requirements.

What’s Next for AI at the Edge?

The potential for AI at the edge is vast, but there are still hurdles to overcome. For example, Salil pointed out the need for faster adoption within telecom, a sector that has been slower to deploy AI.

So, what’s the TechArena take? In the coming years, more industries will embrace AI at the edge, enabling smarter systems and better, faster decision-making. As Salil put it, we’re on the brink of an “AI moment” that will reshape the way we interact with technology.

Synopsys Accelerates Chip Design with NVIDIA Grace Blackwell, AI

At GTC today, Synopsys delivered a unique insight into the reach of Grace Blackwell-powered innovation with the release of a suite of electronic design automation (EDA) tools including PrimeSim circuit simulation, Proteus computational lithography simulation, and Synopsys AI CoPilot. PrimeSim taps NVIDIA CUDA-X libraries to help accelerate circuit design by up to 30x vs historic solutions. The Proteus advancement is not far behind, achieving up to a 20x performance improvement within the lithography arena. That collective performance advancement is simply stunning, especially when considering the incredible pressure on the semiconductor industry to innovate and deliver bespoke solutions that are optimized for point workload requirements across the AI continuum and across cloud-to-edge environments.

This announcement is reflective of the increased complexity of semiconductor design and delivery in the AI era, something we covered extensively on the TechArena platform at Chiplet Summit earlier this year, as monolithic designs shift to more complex packaging technologies enabling modular chiplets, often delivered across different process technologies.

We'll be spending time with Synopsys later today and tomorrow to learn more about this and other advancements from the tech leader as they continue advancing foundational technology to fuel the next generation of semiconductor innovation.

How PEAK:AIO is Powering the Future of AI and GPU Compute

Solidigm’s Jeniece Wnorowski and I recently had the pleasure of chatting with Mark Klarzynski, founder of PEAK:AIO and a key player in the world of AI-driven storage solutions. With AI’s ever-increasing demands for faster data processing, the need for innovative storage solutions is paramount. Mark shared valuable insights into how PEAK:AIO is leading the way in high-performance storage for AI and GPU compute, and its role in transforming industries from conservation to healthcare.

AI’s Growing Data Needs: A Storage Challenge

As AI technology becomes more advanced, it generates and relies on increasingly vast amounts of data, which presents a significant challenge for traditional storage solutions – underscoring the need for faster, more efficient storage options. Mark explained that many industries, from wildlife conservation to healthcare, are increasingly depending on AI to process massive datasets in real time. However, many legacy storage systems simply can't keep up with the processing demands of modern AI workloads or fit within the tight power and space restrictions.

This is where PEAK:AIO is stepping in to revolutionize and refine AI storage. Unlike traditional solutions, PEAK:AIO is specifically designed to optimize AI performance by reducing space, power, and complexity, which ensures rapid data processing without bottlenecks.

PEAK:AIO’s Role in AI: Real-World Impact

Mark offered some intriguing examples of how PEAK:AIO is already making a significant impact. In the healthcare sector, for example, AI is now providing healthcare professionals with medical image analysis – such as MRIs and CT scans – at previously unimagined speeds. The increased rates at which massive image files are processed enable doctors and medical technicians to more rapidly develop insightful diagnoses.

Mark also outlined possible scenarios where AI, powered by PEAK:AIO’s fast and reliable storage, has the potential to save lives with its rapid processing capabilities. Rather than waiting six weeks for a doctor to evaluate imaging results, AI can deliver results before patients even leave the clinic. The ability to identify and diagnose health issues in real time, which might otherwise have gone undetected due to delays in data processing, will have significant positive impact on health outcomes.

AI in Conservation: Protecting Wildlife with PEAK:AIO

Beyond healthcare, Mark also highlighted how PEAK:AIO is contributing to wildlife conservation efforts. One project involves the Zoological Society of London (ZSL) and their use of AI-powered camera traps to monitor endangered species in remote locations. These camera traps generate massive amounts of image data that need to be processed and analyzed quickly to track animal populations and combat poaching.

Mark explained that PEAK:AIO’s high-performance storage technology allows conservationists to analyze this data in real time, enabling them to make faster decisions that critically impact efforts to protect populations of endangered species. By offering scalable, reliable storage that can handle large volumes of data, PEAK:AIO plays a crucial role in ensuring that AI can provide accurate and timely insights that directly impact conservation efforts.

Scalability: Preparing for the Future of AI

One of the key factors that Mark emphasized throughout the conversation was the scalability of PEAK:AIO’s storage solutions. As AI continues its inexorable growth, the amount of data generated will only increase. Unlike traditional storage systems that are often ill-equipped to sustain such growth, PEAK:AIO’s technology is designed to scale seamlessly. Whether it’s in healthcare, conservation, or any other industry, PEAK:AIO ensures that data can be processed, stored, and accessed without the bottlenecks that slow down traditional systems.

In fact, Mark shared that PEAK:AIO is already looking ahead to the next big advancements in AI storage. As AI technologies become increasingly sophisticated, the demand for storage that can handle larger, more complex datasets will continue to rise. PEAK:AIO is uniquely positioned to meet these future demands, providing high-performance solutions that keep up with AI’s rapid evolution.

The Power of Efficient Storage for AI’s Growth

So, what’s the TechArena take? PEAK:AIO’s innovative storage solutions are helping industries unlock the full potential of AI by providing fast, scalable, and efficient data storage. Whether in healthcare, conservation, or beyond, the work being done by PEAK:AIO is making AI faster, more reliable, and capable of driving meaningful change in the world. In the heady world of hype on AI’s future potential, our conversation was a refreshing reminder that AI capability is being deployed for pragmatic benefit today. Check out the interview to learn more.

The Truth About AI Inferencing: Understanding Generative AI and Its Infrastructure Demands

Generative AI has rapidly become the driving force behind over 80% of enterprise AI deployments, signaling a shift from traditional predictive models to systems capable of creating entirely new content. In this webinar, Shimon Ben David, CTO of Weka, and Ace Stryker, AI & Data Center Lead at Solidigm, explore the evolution of AI inferencing, the critical infrastructure challenges that come with scaling generative models, and real-world case studies showcasing how enterprises manage inferencing at exabyte levels. Learn how advanced storage solutions, optimized resource utilization, and seamless GPU integration can reduce model load times dramatically and ensure the efficiency needed to support the next wave of AI innovation.

GTC 2025: AI Factories Take Center Stage

We are heading to GTC this week, and I can't help but think of Aaron Burr singing about the room where it happened. This is THE AI conference of the year, where a certain leather-clad CEO will provide his outlook on the next stage of AI infrastructure delivery. And while there will be heady talk of the next mega-factory buildout pushing LLM capability further, I am seeking information on a few topics to set the stage on the strength of broad AI adoption.

- The DeepSeek impact: We have featured DeepSeek extensively on TechArena, and I'm intrigued by how NVIDIA will embrace this and other Chinese developed LLMs, all delivering new capability and efficiency. Some have claimed that these models threaten NVIDIA demand. I, for one, believe more efficient technology begets broader market adoption and am hoping to hear a lot about a broader array of model optimization at the show.

- Enterprise uptick: We have been discussing AI inference at scale lately – from the pragmatic approaches offered by Intel to the fine-tuned leadership performance from Cerebras to the energy-efficient AI acceleration at the edge from Untether AI. What I'm excited to see at GTC is actual enterprise adoption examples across industries, with meaty, scalable adoption of LLM-infused applications. 2025 is forecasted to be the year of hockey-stick growth for enterprise application ignition, and I'm keen to see what use cases and industries are leading the charge. While NVIDIA will likely showcase these stories across the event, we will be digging deep into the vendor showcase to seek out broader examples of customers taking the gen AI plunge.

- Agentic computing: At MWC earlier this month, the talk suggested everyone got a memo upon entering the Fira stating that they must mention AI agents at least three times a day. Agentic AI is the new hot topic as researchers push the application of LLMs further, and automated sequencing of work takes hold. I am at GTC to dig into the various approaches to agentic computing and how vendors are building guardrails around agent actions for risk-averse enterprises who may not be totally ready to hand over full control to an army of agents.

We will be reporting on these topics and more in the coming days from San Jose! Watch this space for coverage, and follow the TechArena feed to ensure you don't miss any of the story as it unfolds.

ASIL Decomposition and Functional Safety

In my previous blog, titled A Deeper Dive on Functional Safety (FuSa), I took a closer look at the two key elements of functional safety: systematic fault coverage and random fault coverage. In short, systematic fault coverage ensures that the device in the system is designed, verified, and tested at a level of rigor and robustness that is consistent with the target Automotive Safety Integrity Level (ASIL), to ensure there are no faults that are systematic in nature. (For those not familiar with the terminology being used at this point, it is advised to review the earlier blogs on this topic.)

For those of you old enough to remember the Intel “Pentium Processor Divide Error,” this is a good example of an error that was systematic in nature - i.e. the same set of numbers when divided by one another consistently produced an erroneous result across every Pentium Processor. A key foundation to achieving a given ASIL is that there are no systematic faults that have been designed into the device. If that is not the case, one can imagine a situation where an instruction is issued to a processor to have a car turn right, but instead, it turns left and will do so erroneously every time that instruction is issued. If systematic errors are not wrung out during the design, verification, and testing of the device, it is almost certain that the system will fail or, at a minimum, provide an undesired response.

Random hardware failures, on the other hand, occur unpredictably over the lifetime of a product, although they are probabilistic in nature. These failures are the basis for the term ‘probabilistic metric for random hardware failures’ (PMHF), and occur for various reasons, such as neutron flux, power supply droop, transients, etc., which are independent of design and quality rigor. The other metrics used to characterize random hardware faults are the single-point fault metric (SPFM) and the latent fault metric (LFM). As ASILs increase, the acceptable levels of “escaped faults” become more stringent for the system.

To remind the reader, functional safety is not the absence of faults - it is the ability to detect or flag a fault when it occurs, so this information can be passed on to the system, and the appropriate corrective action can be taken. This action can range from advising the driver to take the car into the shop for maintenance in a few months, to crippling the car and driving it off to the side of the road. These decisions happen at the system level, but the fault needs to be detected and flagged at the chip level and communicated in order for the system to be able to make those decisions.

While semiconductors employed in critical automotive safety applications support ASIL D systematic fault coverage, the majority of the complex semiconductors employed in a vehicle support only ASIL B random fault coverage, which seems somewhat counterintuitive. That said, the entire system, which comprises all the multiple devices in the safety path, typically needs to achieve ASIL D at the system level. So, the question is: how is ASIL D random fault coverage at the system level achieved when each device itself supports only ASIL B random fault coverage? The answer is through a technique called decomposition. Before going into some of the details of decomposition, here is another analogy that can be very helpful.

If you have ever glanced into the cockpit of a major airliner, you will have seen that it is riddled with an incredible number of gauges, dials, knobs, and switches. One begins to wonder exactly how a pilot would be able to figure out which gauges to read and which knobs and switches to turn. Upon closer inspection (should you be allowed inside the cockpit of a commercial airplane), you would find that there are actually three gauges that are measuring the exact same thing, as well as three switches and knobs that control the same aspect of the plane. This is done deliberately, and is referred to as triple modular redundancy (TMR).

The motivation for this approach is that, under normal operating conditions, all three gauges will read the same. However, in the case of a random fault, the probability is that the fault will occur in only one of the gauges, not all three. So, if two of the three gauges are reading the same value and one gauge isn’t, it probably means that the gauge showing the different result is wrong. By employing TMR, the probability of a random fault going undetected can be made even smaller than the levels defined for ASIL D random fault coverage.
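The two-out-of-three logic behind TMR can be sketched as a small voter. This is an illustrative sketch only: the function name `tmr_vote` and the gauge values are hypothetical, and comparing readings for exact equality is a simplification (a real voter would typically allow a tolerance band).

```python
from collections import Counter
from typing import Optional, Sequence, Tuple


def tmr_vote(readings: Sequence[float]) -> Tuple[Optional[float], Optional[int]]:
    """Return (trusted value, index of the disagreeing channel).

    The index is None when all three agree. When no two channels agree,
    both results are None because the voter cannot pick a winner.
    """
    if len(readings) != 3:
        raise ValueError("TMR voting needs exactly three readings")
    value, count = Counter(readings).most_common(1)[0]
    if count == 3:
        return value, None  # normal operation: all channels agree
    if count == 2:
        # One channel disagrees with the other two; flag it as suspect.
        suspect = next(i for i, r in enumerate(readings) if r != value)
        return value, suspect
    return None, None  # three different readings: no majority


all_agree = tmr_vote([100.0, 100.0, 100.0])
one_faulty = tmr_vote([100.0, 250.0, 100.0])
no_majority = tmr_vote([1.0, 2.0, 3.0])
```

In the `one_faulty` case the voter both keeps a usable value and identifies which gauge to distrust, which is what the third channel buys over a simple two-way comparison.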

Solving the problem through TMR, however, significantly increases the cost of each protected system. This may not be a problem for a multi-million-dollar aircraft, but it quickly becomes impractical in an eighty-thousand-dollar passenger car. Now, taking a step back for a moment and returning to the original point about systematic fault coverage, which requires real rigor and is a foundation for FuSa, imagine a platform with three Pentium Processors, each with the divide error, connected in TMR. When they check each other’s results, even though the answers to the division problem are incorrect, they all arrive at the exact same conclusion, which is obviously a problem. This is why I made the point that robust (i.e., ASIL D) systematic fault coverage is a foundational requirement for FuSa.
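A toy example makes the point concrete. The divider below is hypothetical (it is not a model of the actual Pentium bug): it simply returns a wrong quotient for one specific input pair, on every copy, every time. Three copies of it then outvote nobody, and the majority check passes.

```python
from typing import List, Optional


def buggy_divide(a: float, b: float) -> float:
    """Hypothetical divider with a design bug shared by every copy of the chip."""
    if (a, b) == (10.0, 4.0):
        return 2.4  # systematic error: the true quotient is 2.5
    return a / b


def vote(results: List[float]) -> Optional[float]:
    """Return the value at least two of the three channels agree on, else None."""
    first, second, third = results
    if first == second or first == third:
        return first
    if second == third:
        return second
    return None


# Three identical processors compute the same division and cross-check.
results = [buggy_divide(10.0, 4.0) for _ in range(3)]
agreed = vote(results)
unanimous = len(set(results)) == 1
```

Here `unanimous` is true and `agreed` is the wrong answer: redundancy catches faults that differ between channels, not faults that were designed into all of them.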

So, for reasons previously explained, most semiconductors don’t simply employ TMR on-chip to achieve ASIL D random fault coverage, because the costs would be exorbitant and prohibitive. To achieve ASIL B random fault coverage alone requires subsystems with “lock-step” processors on board, where, rather than three processors, two processors check each other’s results and use that information for safety-critical regions of the device. Typically, these are the processors that either drive actuators (motors, brakes, etc.) or pass messages on the CAN bus - a bus that ultimately may affect the actions of an actuator. I’ll stop here, because this topic too can get fairly deep fairly quickly.
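The lock-step idea can be sketched as two cores running the same computation with a comparator on their outputs. This is a simplified software illustration; real lock-step cores are compared in hardware, often cycle by cycle. The names `run_lockstep`, `healthy_core`, and `upset_core`, and the injected bit flip, are assumptions made for the example.

```python
from typing import Callable, NamedTuple


class LockstepResult(NamedTuple):
    value: int            # output of the primary core
    fault_detected: bool  # True when the two cores disagree


def run_lockstep(
    core_a: Callable[[int], int],
    core_b: Callable[[int], int],
    x: int,
) -> LockstepResult:
    """Run the same input on both cores and flag any mismatch."""
    result_a = core_a(x)
    result_b = core_b(x)
    return LockstepResult(result_a, result_a != result_b)


def healthy_core(x: int) -> int:
    return x * 2


def upset_core(x: int) -> int:
    # Simulate a random hardware fault: one bit of the result is flipped.
    return (x * 2) ^ 0b100


ok = run_lockstep(healthy_core, healthy_core, 21)
bad = run_lockstep(healthy_core, upset_core, 21)
```

Unlike the three-way vote, a two-way comparison can only say that something is wrong, not which core is right, so the flag has to be passed up for the system to decide what to do.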

Through a technique called decomposition, multiple devices in the system provide alternative paths to ensure checks and balances are maintained at the system level, similar to the TMR aircraft example. Decomposition is not a topic that lends itself to an in-depth blog, as it becomes complex even faster than the way ASIL B random fault coverage is achieved at a device level. However, the curious reader can certainly use ChatGPT to dig in deeper if interested. Suffice it to say, decomposition, in effect, mirrors TMR in that the results from one device aren’t simply taken at face value.

Perhaps the key takeaway from this three-part series on FuSa is that designing devices and systems for automotive applications requires significant thought and foresight. One of the key forcing functions to ensure that best practices are employed and ISO 26262-compliant guidelines are followed with rigor is that, in the case of a life-threatening accident, if it becomes clear that these guidelines and rigor were not followed, the automotive OEM and Tier 1 (as appropriate) will assume the liabilities.

When it was proven that a well-known OEM’s “stuck accelerator” problem was caused by poor software coding practices, a settlement of $1.2 billion was reached. And oh, yeah, we didn’t touch on the topic of FuSa as it applies to software. Maybe another blog?

Generative AI vs. Machine Learning: What's the Difference?

Some people say that we are in the age of AI. In fact, we seem to be smack in the middle of a bubble – AI is all anyone in tech can talk about! But what do people mean when they talk about AI? How can you be sure you’re building something that will solve real problems? One way is by understanding terms. Let’s start with the differences between Machine Learning (ML) and Generative AI (Gen AI).

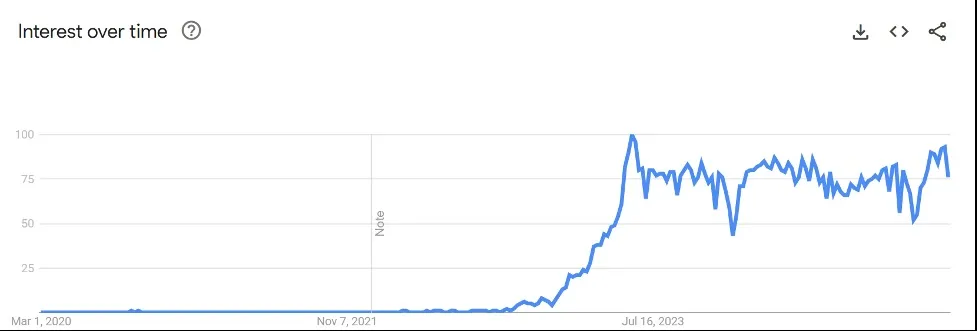

Search Terms Indicate a Hype Bubble

In many cases, marketing for AI is really marketing for Gen AI. Take a look at Google Trends for the term “generative AI” for the past five years:

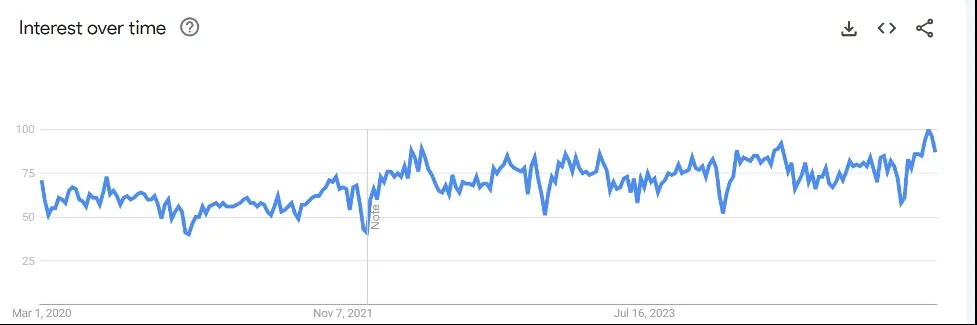

But when you look at Google Trends for the term “machine learning,” you notice that it’s been a popular search for the same period:

It’s apparent from Google search trends that interest is more consistent with ML than Gen AI. Why is that?

How Is Generative AI Different from Machine Learning?

Generative AI creates new content from existing data. Different models generate text, images, music, and more, based on the way they have been trained with advanced algorithms. It takes vast amounts of data to power the algorithms.

Machine learning is a subset of AI. According to IBM, ML is “a branch of AI focused on enabling computers and machines to imitate the way that humans learn, to perform tasks autonomously, and to improve their performance and accuracy through experience and exposure to more data.” ML is what fuels descriptive, predictive, and prescriptive AI applications.

Machine Learning Categories Explained

There are three ways to perform ML. With supervised learning, the models are trained with labeled data sets. My colleague, Tony Foster, walked through training a model to recognize cats in our VMworld session last year.

With unsupervised learning, the models use unlabeled data sets. This is what helps you find data trends you may not know to look for.

Reinforcement learning trains models “through trial and error to take the best action by establishing a reward system”. This could be as simple as letting the model know when it made the right decision.
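The three categories can be sketched side by side in a few lines of plain Python. This is an illustrative toy, not how production models are built: the function names, the one-dimensional sample data, and the reward probabilities are all assumptions made for the example.

```python
import random


# Supervised: labeled (value, label) pairs train a nearest-centroid classifier.
def train_centroids(labeled):
    sums, counts = {}, {}
    for x, label in labeled:
        sums[label] = sums.get(label, 0.0) + x
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}


def predict(centroids, x):
    # Pick the label whose average training value is closest to x.
    return min(centroids, key=lambda label: abs(centroids[label] - x))


# Unsupervised: no labels; split values into two groups (1-D k-means, k=2).
def two_means(values, iterations=10):
    lo, hi = min(values), max(values)  # start the two centers at the extremes
    near_lo, near_hi = list(values), []
    for _ in range(iterations):
        near_lo = [v for v in values if abs(v - lo) <= abs(v - hi)]
        near_hi = [v for v in values if abs(v - lo) > abs(v - hi)]
        if not near_hi:  # all values identical: only one group exists
            break
        lo = sum(near_lo) / len(near_lo)
        hi = sum(near_hi) / len(near_hi)
    return sorted(near_lo), sorted(near_hi)


# Reinforcement: learn by trial and error which action pays off (epsilon-greedy).
def run_bandit(reward_probs, steps=5000, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    estimates = [0.0] * len(reward_probs)
    pulls = [0] * len(reward_probs)
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(len(reward_probs))  # explore
        else:
            action = max(range(len(estimates)), key=estimates.__getitem__)  # exploit
        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        pulls[action] += 1
        # Running average of the rewards seen for this action.
        estimates[action] += (reward - estimates[action]) / pulls[action]
    return estimates


if __name__ == "__main__":
    # Hypothetical labeled data: animal weights in kilograms.
    centroids = train_centroids([(4.0, "cat"), (5.0, "cat"), (30.0, "dog"), (34.0, "dog")])
    print(predict(centroids, 6.0))
    print(two_means([1.0, 1.2, 0.8, 5.0, 5.3, 4.9]))
    print(run_bandit([0.1, 0.9]))
```

The supervised function needs labels to learn from, the unsupervised one discovers the two groups on its own, and the bandit only ever sees a reward signal after each action.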

Most AI in the Enterprise is Powered by Machine Learning

Here’s a list of applications that depend on machine learning that you probably already use (via TechTarget):

- Chatbots use ML and natural language processing (NLP) to mimic conversation.

- Digital assistants, such as Siri and Alexa use ML to understand and respond to voice commands.

- Recommendation engines process your past purchases + current inventory + what other customers are buying to recommend what you should also buy.

- Dynamic pricing helps companies adjust the prices they charge based on market conditions. Controversially, it also seems like they have been marrying recommendation engines and dynamic pricing to charge different prices for individual consumers.

- Speech recognition can record calls, monitor customer calls with human agents, and provide language translation.
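As a rough illustration of the recommendation-engine bullet above, the sketch below combines the three inputs it names: your past purchases, current inventory, and what other customers are buying. It is a simple co-purchase count, not any vendor's actual algorithm; `recommend` and the sample orders are hypothetical.

```python
from collections import Counter


def recommend(user_purchases, other_orders, in_stock, top_n=3):
    """Rank in-stock items that other customers bought alongside the user's items."""
    owned = set(user_purchases)
    scores = Counter()
    for order in other_orders:
        basket = set(order)
        overlap = len(basket & owned)
        if overlap == 0:
            continue  # this order shares nothing with the user's history
        for item in basket - owned:
            if item in in_stock:
                scores[item] += overlap
    # Sort by score (descending), then by name, so ties are deterministic.
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return [item for item, _ in ranked[:top_n]]


orders = [
    ["tent", "stove", "lantern"],
    ["tent", "sleeping bag"],
    ["stove", "fuel"],
    ["novel", "bookmark"],
]
print(recommend(["tent", "stove"], orders, in_stock={"lantern", "fuel", "sleeping bag"}))
# prints ['lantern', 'fuel', 'sleeping bag']
```

Real engines learn these associations from far larger datasets, but the inputs are the same ones listed in the bullet.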

Generative AI Doesn’t Work Without Machine Learning

Gen AI relies on ML techniques to work. It often uses NLP and computer vision in its creation process. Other ML disciplines used by Gen AI include (via Blue Prism):

- Multimodal AI to interpret text, images, and videos.

- Large Language Models (LLMs) are designed to generate and understand human language text.

- Generative adversarial networks (GANs) are unsupervised learning techniques that pit two neural networks against each other during training.

- Transformers use math to identify the context and relationships between data.

- Diffusion models generate new data by learning to reverse a process that gradually adds noise to training data.
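The "math" behind the Transformers bullet is, at its core, an operation called attention: each item scores its relationship to every other item and blends their information by those scores. Below is a minimal pure-Python sketch of scaled dot-product attention. It leaves out the learned projections, multiple heads, and everything else a real model includes, and the sample vectors are made up for illustration.

```python
import math


def softmax(scores):
    # Subtract the max before exponentiating for numerical stability.
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    scale = math.sqrt(len(keys[0]))
    outputs = []
    for q in queries:
        # Relationship score between this query and every key.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # Blend the value vectors according to those weights.
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs


# Two identical keys get equal weight, so the output is the average of the values.
print(attention([[1.0, 0.0]], [[1.0, 0.0], [1.0, 0.0]], [[0.0, 0.0], [2.0, 4.0]]))
```

When a query lines up strongly with one key, nearly all the weight goes to that key's value, which is how context from the most relevant item dominates the result.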

Know AI Basics to Navigate the Hype

Generative AI is built to create new content. Machine Learning analyzes lots and lots of data to make sense of it, and there are many models to accomplish this. Knowing the difference between the two will help you zero in on what type of AI can help you solve business problems.

Here’s the question to ask yourself before a vendor calls you to pitch an “AI” solution: what is your business use case for AI? Will it require Gen AI? Or will an ML algorithm be enough?

The Evolution of Ransomware, Pt. 2

Welcome to the second half of this series on the growing threat of triple extortion ransomware. In the first part of this series, we discussed Aaron, a business owner who became the victim of a ransomware attack that not only rendered his data inaccessible, but also threatened to expose sensitive personal information that would destroy his reputation. This attack highlights the evolution of ransomware, which is no longer just about encrypting a victim’s data – it’s about exploiting that stolen data in increasingly personal and nefarious ways to maximize damage.

As ransomware has evolved, attackers have adopted more sophisticated methods, such as double and triple extortion, to target both the confidentiality and reputation of businesses and individuals. These advanced techniques render traditional defenses, such as secure backups, insufficient against this growing and dangerous threat.

The Goalposts Have Moved: Triple Extortion Ransomware

Old-fashioned ransomware attacks focused on encrypting a victim's data, rendering it inaccessible. The attackers would then demand a ransom payment in exchange for the decryption key, effectively holding the availability of the data hostage. This type of attack disrupted operations by targeting the availability of the data to its rightful owner.

Modern ransomware has evolved beyond simply encrypting data in two ways. Now, attackers not only encrypt the data, but also steal it and threaten to release it publicly if the victim does not pay the ransom. Typically, they try to sell it as well. This "double extortion" tactic adds another layer of pressure for the victim to pay the ransom, targeting not only the availability of the data, but also its confidentiality. Even if a victim has protected backups and can restore their data without paying, they still face the risk that attackers will expose sensitive information, potentially leading to reputational damage, financial losses, identity theft, and legal repercussions.

Triple extortion ransomware, the latest evolution in ransomware, amplifies the already devastating effects of double extortion (data encryption and theft) with a third, often highly personalized, attack. It represents a significant and growing trend, especially among more sophisticated threat actors. Think of it happening to you: First, your computer is locked down by encryption – you cannot access any of your files. That is the initial blow. Second, the attackers reveal they have stolen sensitive personal data, such as tax returns, banking files, medical records, and private messages, and threaten to expose it publicly or sell it unless you pay. This is the second extortion, preying on your confidentiality. Then comes the third attack, designed to maximize coercive pressure so that they get paid immediately: they might hijack your social media and email accounts, locking you out or posting fabricated, reputation-destroying content to your contacts. Mixing stolen family photos with materials involving the sexual exploitation of minors, for example, effectively coerces payment, even though the accusation is falsified. The attackers also threaten to release this material if you go to the police. The sense of helplessness can compel victims to pay even more quickly. This triple threat – loss of access, threat of exposure, and targeted disruption of your online life, reinforced by deterrence from going to the police – makes triple extortion a particularly effective and profitable form of ransomware.

No Longer Safe with Backups

Traditional ransomware solely targeted the availability of data. The threat was primarily operational disruption. Modern ransomware, with double and triple extortion, targets confidentiality as well. The threat now includes reputational damage, financial losses from data breaches, and potential legal repercussions. This is a fundamental shift, because even with secure data backups, victims are no longer safe from ransomware.

The progression from single to double to triple extortion reveals a calculated escalation in attackers' methods, driven by a desire to maximize profit. Many people view the pursuit of financial gain as a healthy business practice, but ransomware attackers go beyond mere profit-seeking by using increasingly cruel tactics to achieve this goal. The example of mixing stolen family photos with false accusations of child predation, while deeply disturbing, illustrates the lengths to which they will go to coerce quick payment. The emotional and psychological impact of triple extortion, therefore, extends past financial losses, underscoring the consequences of their profit-driven motives.

The evolution of ransomware techniques is directly linked to increased profitability for attackers. Double and triple extortion tactics provide more leverage, increasing the likelihood of payment, as attackers not only extort victims, but also have the option to sell the stolen data. This increased profitability often directly funds the development of more effective and profitable RaaS tools, creating a cycle that perpetuates itself.

A Widespread Threat

While ransomware is widely acknowledged as a serious threat, some believe that strong cybersecurity measures—such as up-to-date backups, robust endpoint protection, and employee training—are sufficient to mitigate the risk. However, even the best backups become ineffective when attackers focus on extorting personal information, as ransomware has evolved to more aggressive tactics.

Some argue that triple extortion is still in its pilot stages and believe that most organizations and individuals are unlikely to be targeted by such sophisticated attacks. However, the tools and techniques used for triple extortion are becoming increasingly accessible. The democratization of cybercrime tools, due to RaaS providers, lowers the barrier to entry for attackers. For example, using AI-generated images, it has become relatively easy to place a victim into a compromising position.

Additionally, anyone with enough financial resources—such as those able to pay $25K—becomes a potential target. But it’s not just individuals with that amount of money on hand that are at risk. Those with valuable data that can be sold, or those holding data that can be used to extort a third party for a payment, also become prime targets. This makes individuals and businesses involved in supply chain networks, or affected by data broker breaches, especially vulnerable to these growing threats.

A Call to Stay Vigilant

This article aimed to shed light on the evolving ransomware landscape, encouraging both individuals and organizations to adopt proactive and comprehensive security measures. As we've discussed, ransomware has moved beyond simple data encryption; it has transformed into a multifaceted extortion tool, utilizing "double" and "triple" extortion tactics. This shift, driven by the potential for direct financial gain, the rise of personalized attacks (such as social media hijacking), and the limitations of traditional defenses, like backups, highlights the urgent need for increased vigilance.

The dangerous feedback loop, fueled by the growing profitability for attackers, demands immediate attention. This issue directly impacts personal and professional security, threatening reputations, finances, and even emotional well-being. Therefore, readers will benefit by learning more about how attacks work, engaging in meaningful conversations about these evolving threats, and taking steps to protect themselves and their organizations from the real threat of modern ransomware.

Shaping the Future of AI: Insights from Ami Badani, CMO of Arm

Mobile World Congress took place in Barcelona last week, and I’m still buzzing with the discussions from the event on the advancement of artificial intelligence (AI). A surprising company to enter the leadership fray in this space is Arm, whose technology is driving AI-powered devices and applications that are transforming industries and enhancing user experiences worldwide. I caught up with Ami Badani, CMO of Arm, in a TechArena fireside chat from the show. She shared valuable insights into how the company is positioning itself at the forefront of the AI revolution. Ami’s background is broad, with time spent in finance as well as creating market traction at NVIDIA, so she knows a lot about both AI’s advancement and what it takes to manifest broad market opportunity.

Arm’s Crucial Role in AI

Arm has long been known for its power-efficient computing. As accelerated computing takes center stage in AI, some might question how Arm will fare. Arm, however, has strategically positioned itself to be in the middle of AI’s advancement, noting that AI is swiftly transforming from hyperscaler-driven training to inference across a vast sea of computing platforms at the edge. With Arm’s role expanding rapidly, Ami highlighted how the company is involved in the training, fine-tuning and inference phases of AI. “You can’t do anything without a power-efficient compute platform,” Ami explained, underscoring Arm’s importance in enabling AI across a wide range of applications.

Where is AI expanding? Well, everywhere. Ami sees the technology advancing across industrial systems, autonomous vehicles, and even smart homes. And with a focus on delivering maximum performance per watt, Arm is ideally positioned to support AI in use cases and environments that seek efficient compute at scale.

Strategic Partnerships Driving Growth

Ami highlighted Arm’s success, particularly through its strategic partnerships with major tech players: companies like NVIDIA, AWS, Microsoft, and Google, all of whom rely on Arm’s power-efficient compute to deliver AI solutions. For example, Arm’s partnership with NVIDIA on the Grace Blackwell system (Arm is the Grace in Grace Blackwell) has proven pivotal for AI training and inference. Arm’s leadership extends into hyperscalers’ home-grown chips, with industry behemoths including Amazon, Microsoft, Google and more tapping the architecture for their respective silicon offerings.

But the data center is just one aspect of the story here. Arm’s historic strength lies at the edge, especially in mobile platforms. Arm’s collaborations with leaders Samsung and Apple power devices like the Samsung Galaxy and iPhone, where AI is rapidly integrating into the user experience. In the automotive space, companies such as Mercedes-Benz, Rivian, and Honda rely on Arm’s technology for AI-driven experiences in autonomous vehicles, from driver assistance to in-car infotainment.

AI at the Edge: The Next Frontier

Looking ahead, Ami highlighted expanding opportunities for AI at the edge. Devices in cars, factories, and homes will increasingly run AI workloads locally, without relying on distant cloud servers. This shift to edge-based AI will showcase the ability of Arm’s power-efficient architecture to enable autonomous systems to make decisions quickly and efficiently.

In addition to hardware, software optimization plays a key role in ensuring AI works seamlessly on Arm’s platform. Ami pointed out that, as AI use cases expand, software will need to evolve to better scale and deploy across Arm’s ecosystem. This focus on optimization is critical to making Arm’s technology easier to integrate into AI applications.

A Commitment to the Developer Community

Arm’s commitment to AI extends beyond hardware development. Ami emphasized the company’s commitment to empowering developers, who are vital to driving AI progress. Arm offers a wealth of resources for developers, from tools to educational content. “We have a lot going on in the developer space,” Ami said, encouraging developers to explore Arm’s offerings and engage with the company’s online presence.

Whether through social media platforms, such as LinkedIn and Instagram, or the Arm Newsroom, the company ensures that both industry professionals and developers stay connected with the latest advancements in AI and compute technology.

So what’s the TechArena take? Recent announcements, such as Arm’s inclusion as a key partner in the Stargate project with OpenAI, Oracle, and SoftBank, confirm the company’s position as a leader in AI growth and development. “Arm is at the center of everything happening in AI,” Ami said, emphasizing the company’s integral role in enabling AI at scale. I’m excited by the opportunity that the architecture represents to extend AI to the far edge and curious as to how the market responds to the rumored Arm delivered processors later this year. Expect more coverage on the company in coming weeks from TechArena.

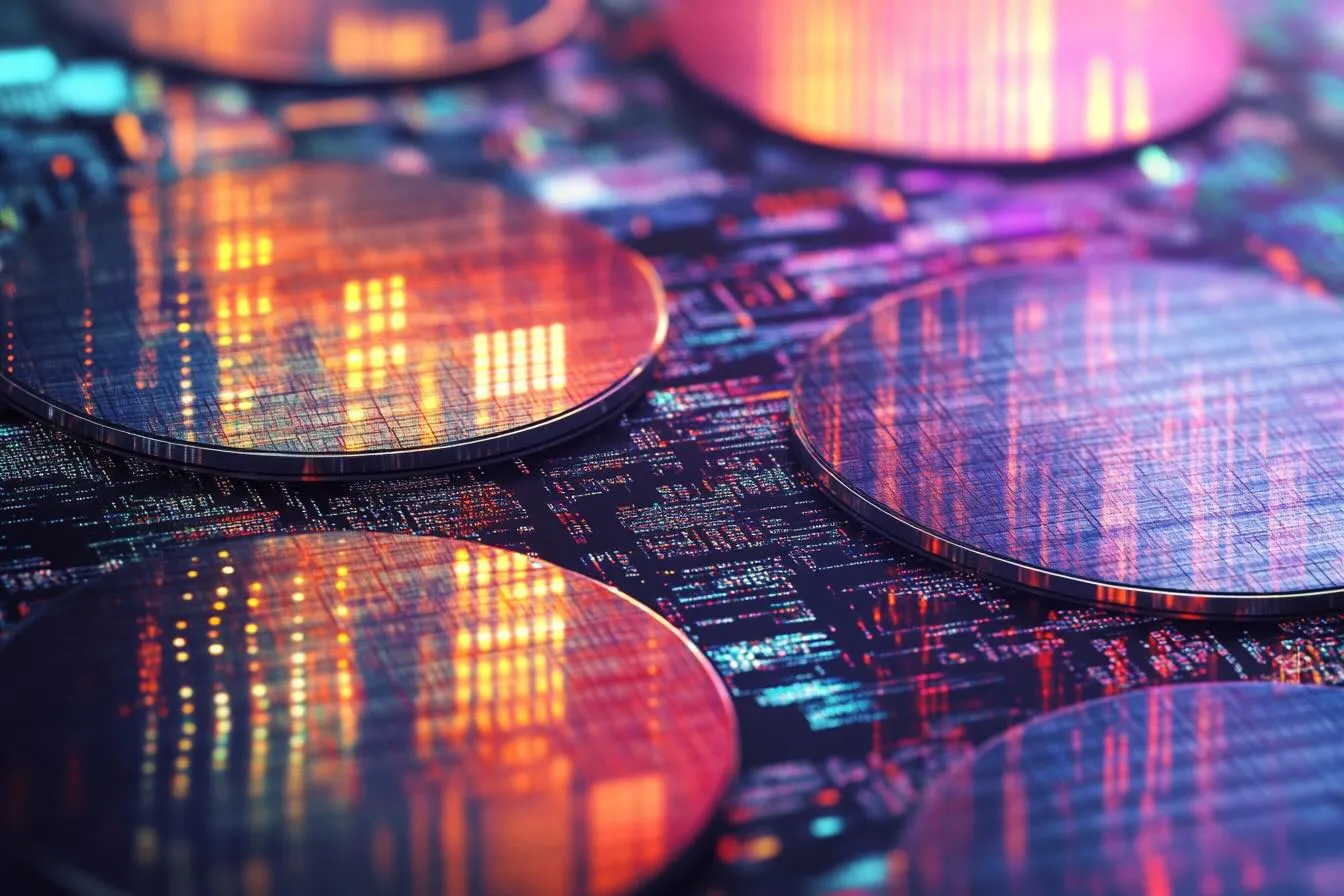

Optimizing AI Inference: Fireside Chat with Cerebras’ Andrew Feldman

Cerebras is on a tear. Just a week ago, I had the privilege of hosting Cerebras CEO Andrew Feldman for a Fireside Chat. He laid out his vision for the company, the background of delivering a dinner-plate-sized ASIC to drive AI inference at scale, and how he drives disruptive innovation where others have failed. It was a fascinating interview that showcased why Cerebras is turning customer heads.

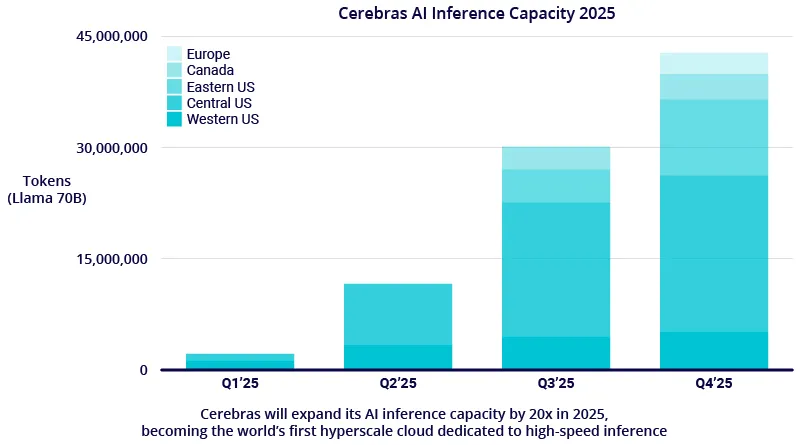

And at AI speed, Cerebras has unleashed new momentum in the market. This week, the company announced a massive expansion of its inference capacity, adding six new AI inference data centers across North America and Europe. Powered by thousands of Cerebras CS-3 systems, this network will deliver over 40 million Llama 70B tokens per second, marking a significant leap forward in AI compute.

What’s more, the company announced a sweeping collaboration with Hugging Face, everyone’s favorite open-source AI model hub, bringing new capability to over 5 million AI developers.

In an AI landscape where advancements emerge weekly, the scale of deployment and the boldness of the collaboration have the potential to be a game-changer. You’d almost start to wonder if it was the week before GTC 😊.

How did we get here? Let’s wind the clock back, all the way to last week’s fireside chat. Andrew shared that he and his co-founders crafted a vision to revolutionize AI compute as far back as 2015, after observing that existing compute architectures weren’t well-suited to handle the unique performance requirements AI demanded. They saw an opportunity to create a new type of processor that was specialized to handle the increasing demands of AI inference. This ambition led to the creation of the largest chip ever designed – one that would not only challenge conventional architectural approaches, but also surpass them in performance.

One of the most interesting aspects of our conversation was the way Andrew and his team approached the challenge of building such a massive chip. At the heart of Cerebras’ strategy is the belief that merely improving on existing technology wasn’t enough: "If you're going to attack an incumbent, doing something a little better and a little cheaper is unlikely to get the job done," Andrew explained. Instead, Cerebras went big—building a chip 56 times larger than the largest GPU ever made.

But the scale of impact of Cerebras’ chip isn’t just about size. The design is optimized to deliver high performance with efficient power consumption, making it ideal for navigating the complex computations required by AI inference, especially when you consider power requirements of competing GPUs. The result is a chip that delivers performance that far exceeds traditional GPUs, with some models outpacing 50, 60, or even hundreds of GPUs, depending on the workload.

Today, Cerebras-based platforms are delivering results across a wide range of AI models. Andrew spoke about how their engineers are able to quickly adapt their hardware to new models, something that’s a challenge in today’s warp speed model advancement, to enable customers to utilize models of choice including support for models optimized for different languages. Cerebras technology, for example, was instrumental in optimizing Jais, a leading Arabic-language LLM developed by G42.

This model advancement, of course, assists with performance of end user deployments. One example Andrew shared that particularly resonated with me was their collaboration with GlaxoSmithKline to increase the pace of pharmaceutical innovation using new AI models. I have spoken on this platform before about the potential for AI tools to unlock new frontiers in medicine, and by putting cutting-edge compute in the hands of researchers, Cerebras is playing a role in solving important medical issues and improving lives.

Looking toward the future, Andrew predicts that AI inference advancement will continue to skyrocket, especially in industries such as healthcare, energy, and communications. He explained that the next wave of AI adoption would be driven by an interesting interplay between edge and cloud, stating that the role of edge computing would enable broad inference adoption and place more demand for data center compute. Drawing a comparison to the launch of the original iPhone, Andrew posited, “everybody was worried that it would mean less data center spend. And lo and behold, we make the device in your hand more powerful, it does more on the edge, and what do we want? We want more – and that means you go back to the data center more and more frequently.”

So what’s the TechArena take? The news this week puts a lot of wind in the sails of Cerebras’ market advancement. I expect that Andrew and the Cerebras team will leverage this news to fill 2025 with continued performance optimization advancement across large language models. I’m expecting to see more customers hop aboard the Cerebras wagon as enterprises seek inference performance at scale and are willing to invest the deep engineering collaboration time to tap Cerebras hardware to its fullest capability. This will be bolstered by the massive uptick in developer engagement through the new Hugging Face collaboration. While Cerebras may not be every enterprise’s cup of tea (for example, small models may not require the gut punch of compute power that Cerebras delivers), many will be attracted by Cerebras’ performance and performance efficiency... and having a second source of supply for AI inference at scale. I love that there is another horse in the race and can’t wait to see how this plays out. More on the AI front next week as TechArena ventures to San Jose with front row seats at GTC.

AI in 2025: From Hype to Practical Business Impact

Join Intel’s Lynn Comp for an up-close TechArena Fireside Chat as she unpacks the reality of enterprise AI adoption, industry transformation, and the practical steps IT leaders must take to stay ahead.

The Evolution of Ransomware, Pt. 1

No one wants ransomware attacking their company. But what if you were the target? Ransomware targeting individuals has evolved since WannaCry’s ransomware outbreak that swept across the globe in May 2017. Individual ransomware is no longer just about locked files—it's personal.

The rise of this type of ransomware, where stolen data is weaponized, is creating a new era of ransomware for businesses and individuals. With the evolution of double and triple extortion tactics, ransomware has become a personal threat, rendering traditional defenses, like immutable backups, completely inadequate.

That is how Aaron found himself on a Friday morning this autumn. Aaron was a successful businessperson who faced a $25,000 extortion demand after hackers stole sensitive personal data and threatened to release it publicly.

Aaron founded a company that leased a fleet of planes to governments and corporations, a business that represented the sum of his whole career. When he reached for his phone first thing Friday morning while still in bed, instead of the familiar green on the Bloomberg screen, a stark red skull filled the display. "WE HAVE COPIES OF ALL YOUR DATA," it screamed in block letters, along with, "pay $25,000 in USD with Monero or risk a much higher demand if we find anything particularly sensitive to share with your contacts." A screenshot of some of his files was included as well. And they would find something particularly sensitive if they looked – photos, chats, and emails with the person he had an affair with that summer.

Aaron told me his wife would divorce him, for sure, after the last time, and also take the kids. He was just as concerned about the socially conservative clients he had in the Middle East. He had cultivated these relationships with great care, even hiring a Muslim salesman to insulate himself, knowing of the cultural sensitivities. While they had overlooked his Jewish background, they would not overlook his gay adultery. Exposure would mean the immediate loss of their predictable contracts, which would trigger a cascade of loan defaults on the fleet, lawsuits, and financial ruin, and compound the personal hit of the custody battle.

Despite having a Managed Security Service Provider (MSSP) for digital security, Aaron’s personal devices were a mess. His phone was his lifeline, but also a major risk. He knew about potential risks, such as clicking on unfamiliar links, but he had fallen into poor security habits—clicking on links in emails from “old friends” he hadn’t heard from in years that might want to invest, and using variations of the same password that had been a part of multiple data breaches across sites.

Aaron knew calling his MSSP was out of the question for such a private matter. This was the situation when I first spoke with Aaron. I wanted to break it to him, gently, that the situation was much worse than he realized. It was not just his stolen data – his banking, tax returns, medical records, intimate photos, and private messages. That was bad. But in addition to selling his data, they'd also likely gained access to his social media and email, and would likely post fabricated, deeply damaging content to his business and personal network. Imagine your family photos, twisted into a grotesque narrative with the help of generative AI, falsely accusing you of child predation, or spewing racist vitriol, all meticulously crafted to obliterate your reputation. His friends would probably call the police on him, the victim. Some of the accusations would make it to the divorce court, if not a criminal court. The attackers' goal was straightforward: to leave him with no other choice than to pay them immediately.

This is the terrifying threat of modern ransomware – a threat that may not dominate the headlines in 2025, but nonetheless continues to evolve and pose serious risks. Threat actors have refined ransomware into one of the most effective ways to monetize compromised systems, targeting both individuals and organizations.

Ransomware provides attackers with direct payment from victims. Although it is often difficult and risky, ransomware attackers can also sell the stolen data, a tactic known as 'double extortion ransomware.' This means victims not only lose access to their data, but also risk attackers publicly sharing or selling it, breaching their confidentiality.

Cybersecurity organizations, such as CrowdStrike, Sophos, Mandiant, Verizon, IBM, CISA, and the FBI publish reports that provide insights into the ongoing ransomware threat. Cybercriminals are making the tools and techniques used for the most sophisticated triple extortion ransomware more available. For example, Ransomware-as-a-Service (RaaS) platforms have proliferated, enabling cybercriminals to deploy advanced ransomware attacks. These platforms often provide web interfaces, documentation, tutorials and support, making sophisticated ransomware and money laundering techniques more accessible. Even when a major ransomware group like LockBit is disrupted, the problem of ransomware persists because RaaS tools are widely available.

Ransomware's ability to extract direct payments, unlike many other forms of hacking, makes it profitable and, therefore, a persistent and evolving threat to individuals and organizations.

Watch for Part 2 of this article, where I'll dig deeper into ransomware and provide additional insights and methods to protect yourself.

How Palo Alto Networks is Fighting AI Threats with AI

In this episode of In the Arena, Palo Alto Networks’ Dharminder Debisarun explores the challenges of securing smart industries, preventing attacks, and staying ahead in an evolving threat landscape.

Dryad Networks’ Solar-Powered Gas Sensors Sniff Out Fire

One of the nicest things about MWC is the ability to track the advancement of technology, and this is why I was delighted to chat with Carsten Brinkschulte today. Carsten is the founder and CEO of Dryad Networks, an innovator that we first met on the TechArena podcast last year. The firm has a vision of creating a network of the forests, deploying solar-powered gas sensors to sniff out fire, even before the point of ignition. With visions of LA fires still raw, and the global threat of wildfires growing more acute daily, Dryad's mission is both urgent and essential.

Carsten had returned to Europe just in time for MWC from Thailand, where the Dryad team has partnered with the Thai government to deploy over 50,000 sensors in a national forest outside of Chiang Mai. This is a large-scale deployment compared to the POCs he'd shared last year, and the network was put through its paces to ensure quality performance. This started with sensor and base station deployments, all done at least three meters off the ground, and carefully hung to prevent injury to the trees. Once the network was in place, the Thai government put it to the test, with both a large and small scheduled burn. The sensors sniffed out the beginnings of fire within 6 minutes, fulfilling the mission of early detection as planned. Their rugged design ensured their continued operation despite exposure to high temperatures.

But that's not all. Days later, another alert was signaled, which the team thought was a false positive. After a hike of a couple of hours through the jungle, the team found a smoldering tree, which had been overlooked when managing the controlled burn. Here was proof that even gas from a smoldering tree triggered the sensor as planned, and with this deployment, this corner of forest floor is now a bit safer from fire.

With deployments scaling, I expect to hear more about Dryad in the months ahead, including advancement on Carsten's broader vision for the company. I have to admit that this combination of sensor technology and mesh network, all powered by solar cells, is one of the TechArena team's favorite tech use cases. After all, we hail from the Pacific Northwest, and forest protection speaks deeply to us.

Diving Deep in the Tech at MWC

Most people are thrilled by the glamour of MWC. Holographic images, building-sized mobile gaming, and corporate taglines blazing future promises.

What AI was meant to be!

AI Inside for a New Era!

Let the Transformation Begin!

Taste the Rainbow!

Ok, just kidding on that last one. While the marketing on display in Barcelona ranged from magic to misery, I was digging deeper for the pure tech stars of the show. What were the standout use cases and tech innovations that would both carve the industry conversation for the upcoming months and frame targets for next-gen deployments? Here's what I found:

Agentic AI is Arriving for the Network

A recent blog post covered enterprise AI adoption and how much of enterprise deployments today are focused on traditional use cases, such as image recognition and natural language processing. Yesterday, in my fireside chat with AMD's Salil Raje, we discussed when the edge would have its “ChatGPT” moment with true adoption of Gen AI. Today, I had a chance to walk through Metrum's agentic AI platform for network management, and while not a traditional chatbot (in fact much more exciting), this demonstration showed a next level of AI application at the heart of IT control. What the Metrum team has pulled off showcases the power of telemetric oversight of a broad array of infrastructure, with automated delivery of recommended actions to solve real-time problems. These recommendations, soon enough, can be delivered as fully automated network control with the right trust and reliability, and are a quantum leap from the endless sea of network help desk chatbots that littered MWC demos in 2024.

Cloud to Edge Public Safety

The folks at Oracle know a thing or two about data analytics, so it's not shocking that they've applied their expertise to this critical use case. Using Oracle's Enterprise Communication Platform, first responders can tap technology spanning on-body, in-vehicle, and overhead drone devices and back to the cloud, with Oracle's Public Safety Suite delivering real-time analysis of crisis situations. Teams have the benefit of multiple video feeds streamed simultaneously, alongside broader environment data streams, to improve decisions and actions that can save lives.

Telephonic Fraud Control

We have all gotten those calls: scammers phishing for our data, challenging us to divulge private information, or simply being an annoyance. It's often made me wonder why the telco industry doesn't do more to identify fraudulent connections and alert customers to them. New tech by Neural Technologies provides sweeping identification of devices and connections that carry a high risk of fraud. With legislation gaining momentum across the globe to hold carriers partially accountable for fraudulent engagement, this type of analytics should be seen as a cornerstone of network security platforms in the near future.

Humanoid Robots: Edge AI Personified?

There’s always one use case at MWC that seems to capture the collective conversation, and this year’s topic was humanoid robotics. These life-like machines, integrated with all of the intelligence of the latest LLM, have been anointed THE innovation that will propel Gen AI at the edge and solve pressing challenges, such as healthcare and teacher shortages in underserved communities. We may all be cohabiting with Rosie Jetson sooner than we think.

Hats off to the true innovators from companies large to small here in Barcelona. It's engineering breakthroughs like these that make MWC a highlight of every year's tech landscape.

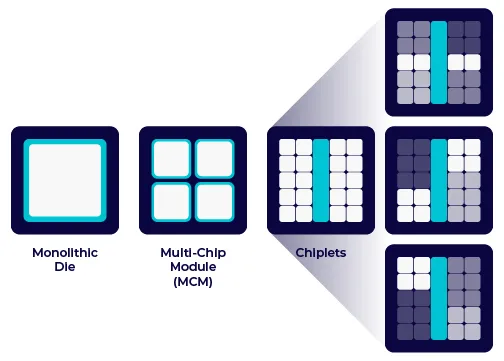

Why Small Chiplets are a BIG Deal

We all know the IT industry is constantly evolving, but now one of its core technologies is being redefined in the process. I am referring to the processor: the brain of the computer, which is being fundamentally redesigned to change how we build and scale computing systems going forward.

This is happening amidst growing demands for computing power, driven by AI, data analytics, and edge computing. This demand is creating more complexity for design and manufacturing as density, power and integration of functions continue to increase. Traditional silicon scaling of monolithic designs has hit its limits, forcing engineers and companies to explore new ways to achieve performance improvements. Enter: Chiplets!

In a nutshell, chiplets are small, modular silicon dies that function as individual components within a larger processor package. Unlike traditional monolithic CPUs and GPUs, which integrate all processing units and interconnects onto a single silicon die, chiplets are manufactured separately and then assembled into a single package using high-speed interconnects. This approach enhances scalability, improves yields, and enables heterogeneous integration of different technologies within a single processor.

What makes this approach so special is that chiplets allow for the integration of different components (CPU, GPU, memory, networking, etc.) within a single package. Additionally, chiplets can be fabricated using different manufacturing nodes and processes, so each function can use the node that best balances performance, cost, and energy efficiency. This modular design reduces manufacturing complexity, improves yield because a defect scraps only one small die rather than a whole large one, accelerates time to market, and enhances scalability, addressing key challenges associated with monolithic chip designs.
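The yield argument can be made concrete with a back-of-the-envelope calculation. The sketch below uses the simple Poisson yield model, Y = exp(-A × D0), where A is die area and D0 is defect density. The die sizes (one 600 mm² monolithic die versus four 150 mm² chiplets) and the defect density (0.1 defects per cm²) are illustrative assumptions, not figures from any vendor, and the model ignores packaging cost, interconnect area overhead, and assembly yield.

```python
import math


def poisson_yield(area_mm2: float, defects_per_cm2: float) -> float:
    """Fraction of dies expected to be defect-free under a Poisson model."""
    area_cm2 = area_mm2 / 100.0  # 100 mm^2 per cm^2
    return math.exp(-area_cm2 * defects_per_cm2)


def silicon_per_good_package(die_area_mm2: float, dies_per_package: int,
                             defects_per_cm2: float) -> float:
    """Average silicon (mm^2) fabricated per working package.

    Assumes each die is tested before assembly, so a defect scraps
    only the die it lands on, not the whole package.
    """
    die_yield = poisson_yield(die_area_mm2, defects_per_cm2)
    return dies_per_package * die_area_mm2 / die_yield


D0 = 0.1  # assumed defect density, defects per cm^2 (illustrative only)

monolithic = silicon_per_good_package(600, 1, D0)  # one large die
chiplets = silicon_per_good_package(150, 4, D0)    # four small dies

print(f"monolithic die yield: {poisson_yield(600, D0):.1%}")
print(f"chiplet die yield:    {poisson_yield(150, D0):.1%}")
print(f"silicon per good package: {monolithic:.0f} vs {chiplets:.0f} mm^2")
```

Under these assumptions, roughly 55% of the large dies come out defect-free versus about 86% of the small ones, so the chiplet design wastes noticeably less silicon for the same total area. Real cost comparisons also depend on packaging and test costs, which this sketch leaves out.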

Chiplets are brimming with potential and are the unsung heroes of innovation, bringing several distinct benefits to the industry.

For decades, Moore’s Law, the prediction that the number of transistors on a chip doubles approximately every two years, was the guiding principle driving progress in computing. However, as transistor scaling slows due to rising physical and manufacturing complexity, chiplets offer an innovative workaround by allowing manufacturers to integrate smaller, specialized silicon dies into a cohesive system. Chiplets extend the benefits of Moore’s Law without requiring a single, large, monolithic chip. They do so through a modular approach that enables the use of different manufacturing nodes in a single product, optimizing both cost and performance while reducing production risks.

Allowing different types of processors—such as CPUs, GPUs, and AI accelerators—to coexist within the same package offers a great many benefits. It optimizes performance for diverse workloads, whether in cloud computing, AI processing, or edge devices. By reducing the physical distance between these components, chiplets also cut down on latency and power consumption, enhancing overall system efficiency.

In addition to powering diverse workloads, chiplets also allow manufacturers to create highly customized solutions for specific applications, such as AI, gaming, and high-performance computing. Instead of designing a one-size-fits-all processor, companies can mix and match chiplets to tailor performance for niche markets.

To achieve these benefits, advancements in packaging technologies were required. Innovations such as die stacking—when multiple chips are layered vertically—save space, improve power efficiency, and accelerate data transfer between stacked components. For instance, stacking memory chips directly on top of processors enhances data access speeds while reducing the device’s footprint. Such innovations are reshaping semiconductor manufacturing and delivering more compact, high-performance computing solutions.

In the greater scheme of things, chiplets also make computing systems more energy-efficient and sustainable. For example, the Open Compute Project's Data Center Modular Hardware System specification (DC-MHS for short) extends the modular philosophy behind chiplets to the system level. It allows different server modules to be integrated and upgraded individually, rather than replacing entire servers. This flexibility would theoretically allow you to reconfigure a web server into a storage or database server by simply swapping out modules, where you would normally be confined by the fixed configuration of the motherboard. This modularity extends hardware lifespans, minimizes electronic waste, and optimizes power efficiency.

Evidently, chiplets could herald a turning point for the IT industry, addressing critical challenges in performance, cost, and scalability. As this technology continues to mature, it will redefine everything from data centers to personal devices, making IT infrastructure more powerful, efficient, and sustainable than ever before. Whether in AI, cloud computing, or next-gen gaming, chiplets are shaping the future—one modular building block at a time!

The Time is Now for AI Infused Silicon Innovation

It’s not every day that one gets to witness the world’s leading silicon innovators capturing the zeitgeist of industry development, but today’s MWC session, Chips for the Future: Fueling Business Transformation with Computing Power, delivered just that opportunity. I’d spent the days before MWC chatting with Cerebras CEO Andrew Feldman and Arm CMO Ami Badani about their companies’ leadership in breakout innovation to fuel AI adoption.

What I learned from those sessions set the stage for my moderation today, highlighted by a fireside chat with AMD’s SVP and GM of Adaptive and Embedded Computing, Salil Raje. You wouldn’t know it from his understated demeanor, but Salil oversees a massive business at AMD, driving the strategy and portfolio delivery for edge computing in its various forms. Salil spoke passionately about 2025 as a time for broad edge AI adoption. He highlighted customer traction in spaces including automotive, industrial, healthcare, and communications networks.

Imagine exoskeleton technology that grants people with disabilities the power to walk again. Imagine healing sick fish for sustainable fish farming. Imagine delivering foundational technology to target zero auto fatalities by 2030. These are some of the customer applications that AMD’s heterogeneous computing platforms help deliver, and as Salil spoke about how AMD was working across the value chain to enable AI infusion into industry after industry, I was left with an impression of bold technology leadership and open collaboration.

Salil also threw down the gauntlet to the audience about 2025, stating that the standards-based innovation that has been the hallmark of the communications industry is out of step with the speed of AI advancement. While some target the 6G transition as the moment for AI integration into communications networks, he challenged his peers to move more swiftly, stating that now was the time to advance with 5G.

While AMD showed its newfound weight in the edge, Ampere, Ansys and Rebellions also took the opportunity to battle out broader trends driving enterprise AI adoption. Sunghyun Park, CEO of Rebellions, made an insightful observation, stating that AI training is akin to R&D investment, but it’s in AI inference that monetization of AI will occur. And it’s in this space that his company, the leading AI accelerator firm in APAC, is targeting its technology. Not to be outdone, Jeff Wittich, CPO at Ampere, observed that all of this AI inference adoption will be hindered without tackling some of the fundamental efficiency challenges in much of AI infrastructure today. He pointed to Ampere’s portfolio as part of that fundamental solution, based on the superior energy efficiency of its Arm cores and a design that places efficiency and performance on equal footing. Jayraj Nair, Field CTO of High Tech at Ansys, added that it’s industry collaboration, founded in Ansys simulation that starts at the chip, that will ultimately fuel technology advancement from data center to edge.

Intel was also on hand, with Sachin Katti, SVP and GM of the Network and Edge Group, detailing the advancement of Xeon 6 SoC designs that just hit the market. And it’s within this presentation that we got a broader view of the challenge and complexity of all silicon innovation in this era. We’ve moved to a chiplet-driven, performance- and efficiency-intensive environment for silicon requirements, and none of the companies onstage, nor those that joined me before the show, has ever faced engineering challenges like those we’re witnessing today in keeping up with the torrent-like pace of AI requirements. The demand is vast for semiconductors to fuel every nook of AI’s advancement, and while each player would love to capture the lion’s share of deployments (and arguably AMD has the portfolio to do just that), we all benefit from the competition and collaboration demonstrated on the MWC stage today. As a certified chiphead, I’m happy to see engineering delivery this inspired.

Arm: Scaling AI Compute from Edge to Cloud

At Mobile World Congress 2025, Allyson Klein and Arm CMO Ami Badani discuss how power-efficient compute is fueling the next wave of AI innovation, enabling new use cases across industries.

Cerebras Systems CEO Andrew Feldman on Scaling AI Innovation

In this TechArena Fireside Chat, Cerebras CEO Andrew Feldman explores wafer-scale AI, the challenges of building the industry’s largest chip, and how Cerebras is accelerating AI innovation across industries.

Anticipating MWC Trends in AI, 5G, and Network Evolution

AI, 5G, and network automation are reshaping telecom. In this episode, Keate Despain discusses anticipated trends at MWC 2025, from edge computing to the road to 6G, and what’s next for network innovation.

AI Agents - Buzzword or Accelerator?

If you’re like me, you’ve been flooded with headlines about AI agents, agentic AI, AI systems with agency, and more. And like me, you may be wondering what aspects of AI agents represent real technology advancements that are appropriate for enterprise deployment, and which are just gimmicks and marketing hype.

The good news is that AI agents do represent a significant step forward in the ability of generative AI (GenAI) to solve practical problems. Think of an AI agent as a small software program that was written to carry out a single task, but imagine that, at the heart of this program, there is an intelligent orchestrator that can adapt to variations in how the task is done.

This is very different from a traditional software application in two important ways. First, the agent is able to communicate easily with humans, in natural language or through more formal data structures, depending on the situation. So, an agent could take a spoken instruction from a human, ask a clarifying question, do some processing, and then respond with either a spoken response, or a very structured response, such as an API call, a document, or a form. A few years ago, that alone would have been amazing, but in the current era of GenAI, “talking” to a computer is old hat.

This is where the second critical difference between agents and traditional applications comes in: The agent has the ability to plan and adapt to changing conditions. In the past, even the simplest task required detailed advance planning to create a flow chart of (hopefully) all the variations in how the inputs and responses might change on the path to completing the task. Now, the GenAI at the heart of the agent can break a complex problem into small parts, tackle those small parts, and adapt the plan as things change.

Let’s illustrate this with an example. To make myself a cup of coffee this morning, I had to turn on my espresso machine, grind the beans, get an appropriate cup, and pull the shot. But what if I wanted different beans, or if I had to get the cup from the dishwasher rather than the cupboard? What if the espresso machine needed more water? Programming all of the potential contingencies into a flow chart ahead of time, even for a simple task, can be difficult or impossible. If the GenAI at the heart of my AI agent can automatically adapt to these variations, and even ask for clarification (“Did you want the Arabica beans from Colombia or the Robusta beans from Vietnam?”), then the path to a successful assistant is much clearer.
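The coffee example can be sketched as a small observe-decide-act loop. This is a minimal illustration, not how any particular agent framework works: the `Kitchen` state, the `next_action` function, and the `events` hook are all hypothetical names invented for this sketch, and `next_action` is a hand-written stand-in for the GenAI planner that a real agent would call. The point is only that the next step is chosen from the current state at every turn, rather than read off a fixed script.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class Kitchen:
    """Hypothetical world state the agent can observe."""
    water_ok: bool = True
    cup_location: str = "cupboard"
    machine_on: bool = False
    beans_ground: bool = False
    have_cup: bool = False
    shot_pulled: bool = False


def next_action(s: Kitchen) -> Optional[str]:
    """Stand-in for the GenAI planner: pick the next step from the current state."""
    if s.shot_pulled:
        return None  # goal reached
    if not s.water_ok:
        return "refill water"
    if not s.machine_on:
        return "turn on machine"
    if not s.beans_ground:
        return "grind beans"
    if not s.have_cup:
        return f"get cup from {s.cup_location}"
    return "pull shot"


def execute(action: str, s: Kitchen) -> None:
    """Apply the effect of an action to the world state."""
    if action == "refill water":
        s.water_ok = True
    elif action == "turn on machine":
        s.machine_on = True
    elif action == "grind beans":
        s.beans_ground = True
    elif action.startswith("get cup"):
        s.have_cup = True
    elif action == "pull shot":
        s.shot_pulled = True


def run_agent(s: Kitchen,
              events: Optional[Dict[int, Callable[[Kitchen], None]]] = None,
              max_steps: int = 20) -> List[str]:
    """Observe, decide, act; `events` simulates the world changing mid-task."""
    events = events or {}
    history: List[str] = []
    for step in range(max_steps):
        if step in events:
            events[step](s)  # something unexpected happens before this step
        action = next_action(s)
        if action is None:
            break
        execute(action, s)
        history.append(action)
    return history


# The cup is in the dishwasher, and the water runs out just before step 2.
surprise = {2: lambda s: setattr(s, "water_ok", False)}
print(run_agent(Kitchen(cup_location="dishwasher"), surprise))
```

No branch for "water runs out after grinding" was written anywhere; the loop simply notices the changed state and inserts a refill before carrying on. In a real agent, the decision inside `next_action` would come from a language model, which is also what would let it ask a clarifying question instead of picking a step.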

You may ask, “Can’t a regular GenAI interface do all this for me already? Why build an agent?” At Orchestrated Intelligence, we have developed user experience agents that can answer complex questions about supply chain cost-performance tradeoffs. During this process, we have identified additional benefits of agents that I will outline in my next article.