Unpacking The AI Data Factory – A Storage Perspective

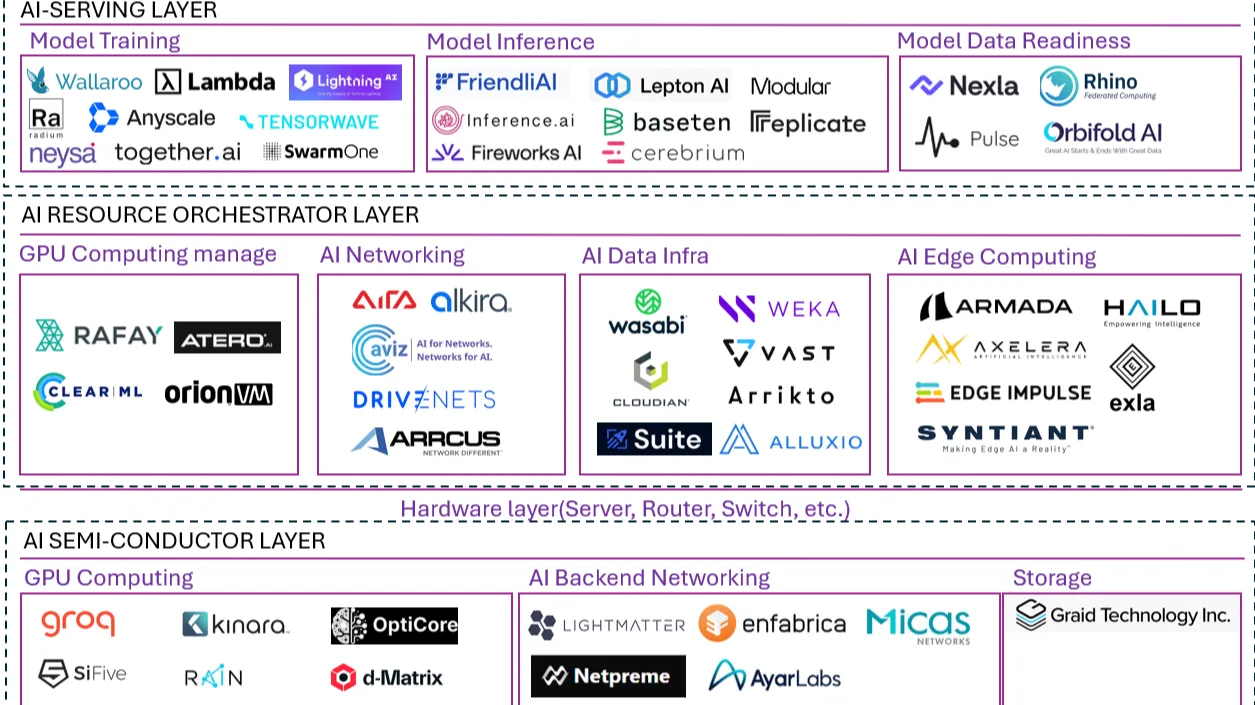

Last week’s GTC event was packed with updates from across the world of computing, and we’ll be unpacking them layer by layer over the next few weeks on TechArena. One story that caught my eye was NVIDIA’s view of the AI Factory, which specifically calls out the key vendors innovating in AI-centric infrastructure delivery. Here, you can see a who’s who of innovators across the AI-serving layer, the AI-resource orchestrator layer, and the AI-semiconductor layer. What is fascinating about this grouping of companies is how many disruptors are present, and how that reflects the changing architectural foundations of an AI-centric world.

In today’s piece, I want to focus on storage, as data movement across a distributed landscape is critical to the entire AI pipeline, and it is becoming even more important given the sustained access to data that agentic computing requires. Last week at GTC, we featured a major collaboration announcement from VAST Data, showcasing a deep engagement with NVIDIA to deliver enterprise inference capabilities using VAST’s proven data platform. NVIDIA and VAST have a long history dating back to work within the HPC arena, and it shows in this announcement: VAST is providing a core technology path that NVIDIA needs for simplified, broad adoption of generative AI workloads. While this element of their broader collaboration is just rolling out, expect to hear more about enterprise utilization of these solutions in the months ahead.

We also took a deeper look at Weka’s announcement of a new NAND-based memory grid architecture, designed to drive “across-the-network” performance so that data can be served quickly at scale. Weka’s solution is built around accessing distributed data across the cloud and at scale, so this advancement carries the credibility of slotting into a platform that already assumes a distributed architecture. It was cool to see Weka recognizing that network data rates and SSD latencies have shifted so fundamentally that long-held truths about local vs. networked data have become legacy thinking.

If we dig a bit deeper into NVIDIA’s map, we see that Graid has been called out for storage hardware innovation. They sit alone in the storage box, the only solo vendor across the landscape, which is why I wanted to dig in further. NVIDIA discussed GPU-accelerated storage extensively at the conference, and I soon learned why Graid was getting this turn in the spotlight. Graid has delivered exactly on NVIDIA’s vision with its SupremeRAID solution, which provides software-defined storage control on a GPU for maximum SSD performance. This allows RAID to be used within AI clusters without the I/O bottlenecks found in traditional RAID architectures. Again, we’re seeing traditional architectural models turned on their heads to deliver new capability, and once again a disruptor is taking center stage with an innovative solution for the market.

But let’s go under the covers one step further. We’ve written a lot about liquid cooling on TechArena, and of course we’re seeing a massive migration to liquid cooling on accelerated hardware. When we think of this, we typically think about GPUs. But what about the other components inside a server? One GTC announcement at the heart of storage shows more broadly how accelerated computing is putting new demands on innovation across the box, and it came from Solidigm. The purple monster of SSD capacity delivered a breakthrough liquid-cooled enterprise SSD built specifically for AI deployments. The innovation eliminates the use of a fan for cooling an in-server SSD, moving to a cold plate alternative that cools both sides of the SSD and still allows the device to be hot swapped. The first design based on this new part will be delivered in a ruler form factor, and I expect we’ll start seeing it integrated into systems from many of the disruptors on the list, showcasing both the importance of all-SSD configurations in AI clusters and the rate of innovation in every element of AI configurations.

Like what you’re seeing? Check out more GTC unpacking posts in the days ahead.