AI’s Power Crunch Is Making Brownfield the New Greenfield

365 Data Centers and Aphorio Carter, the critical infrastructure and data center division of Carter Funds, announced a strategic partnership on May 6 to develop approximately 200 megawatts of AI-ready data center capacity across six U.S. sites. The first letters of intent target Aurora, Colorado, and Simpsonville, Kentucky, with additional locations planned in Trumbull, Connecticut; Louisville, Kentucky; Harrisonburg, Virginia; and Columbus, Ohio.

The details matter here. These are not greenfield projects. 365 and Aphorio Carter plan to identify, convert, and develop existing properties into high-density facilities supporting 50 to over 200 kilowatts per cabinet, using liquid-to-chip cooling designed for AI and high-performance computing workloads. 365 will serve as the long-term operator across the portfolio, with projects expected to come online within nine to 24 months.
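To show what that density range means in practice, the short sketch below estimates how many cabinets a 200 MW power budget could feed at different per-cabinet loads. It assumes, purely for illustration, that the full 200 MW is IT load. The announcement does not say how the figure splits between IT and facility overhead such as cooling, so real cabinet counts would be lower.

```python
# Rough cabinet-count estimate for the announced capacity.
# ASSUMPTION: the ~200 MW figure is treated entirely as IT (cabinet) load;
# the announcement does not break out cooling or other facility overhead.
TOTAL_CAPACITY_KW = 200 * 1000  # 200 MW expressed in kW


def cabinets_supported(total_kw: float, kw_per_cabinet: float) -> int:
    """Whole cabinets that fit within total_kw at a given per-cabinet draw."""
    return int(total_kw // kw_per_cabinet)


for density_kw in (50, 100, 200):
    count = cabinets_supported(TOTAL_CAPACITY_KW, density_kw)
    print(f"{density_kw:>3} kW/cabinet -> {count:,} cabinets")
```

Under that assumption the range runs from 4,000 cabinets at 50 kW down to 1,000 at 200 kW: the higher the density, the fewer cabinets (and the less floor space) the same power budget requires.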

“Through this partnership, we’re in an ideal position to create a new class of high-density infrastructure designed specifically for AI-era workloads,” said Derek Gillespie, CEO and CRO of 365 Data Centers. “Working with Aphorio Carter will allow us to create new value in existing assets while bringing new capacity online to support today’s demand.”

Nine to 24 months. In a market where greenfield data center projects now take three to five years from announcement to first megawatt, that timeline is the real story. And the conditions creating demand for this kind of speed are only intensifying.

A $725 Billion Spending Spree Collides with a 15 GW Grid

The capital flowing into AI infrastructure has reached a scale that’s difficult to contextualize. Google, Amazon, Microsoft, and Meta collectively plan to spend $725 billion on capex in 2026, a 77 percent increase over last year’s record $410 billion.
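A quick check of the growth figure, using only the two numbers quoted in this paragraph:

```python
# Sanity check on the hyperscaler capex growth figure (USD billions).
CAPEX_PRIOR_YEAR = 410.0  # prior-year record, as quoted
CAPEX_2026_PLAN = 725.0   # combined 2026 plan for Google, Amazon, Microsoft, Meta


def percent_increase(old: float, new: float) -> float:
    """Return the percentage change from old to new."""
    return (new - old) / old * 100.0


growth = percent_increase(CAPEX_PRIOR_YEAR, CAPEX_2026_PLAN)
print(f"Increase: {growth:.1f}%")  # about 76.8%, which rounds to the 77% cited
```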

Fortune reported in late April that the buildout shows “no clear end in sight.” The Stargate Project, a joint venture led by OpenAI, SoftBank, and Oracle, with Microsoft among its technology partners, plans to invest $500 billion in AI-first data centers by 2029.

The money is not the constraint. Power is.

The entire U.S. data center sector draws less than 15 gigawatts of electricity today. The announced project pipeline, if every facility were built, would pile 140 gigawatts of new load onto that grid. That’s roughly nine times the current draw. And the grid is nowhere near ready to absorb it.

Of 12 GW of data center capacity announced for 2026 delivery, only about 5 GW is under active construction. Roughly half of all planned 2026 builds have been delayed or canceled outright. The reasons are physical, not financial. Lead times for high-voltage transformers have ballooned from 12 to 18 months before 2020 to 36 to 48 months today, with some orders stretching to five years. Grid interconnection wait times exceed five years in many U.S. regions. Beyond what’s currently under construction, an additional 37 GW of planned infrastructure lacks firm completion dates, pushing the cumulative pipeline gap past 50 GW of announced-but-unbuilt capacity.
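The ratios behind these grid figures can be reproduced from the numbers quoted above. Because today’s draw is given as an upper bound (“less than 15 gigawatts”), the computed pipeline multiple is a floor rather than an exact value.

```python
# Ratios behind the grid-constraint figures cited in this section.
# All inputs are the article's own numbers; nothing here is new data.
current_draw_gw = 15.0         # U.S. data center draw today ("less than 15 GW")
announced_pipeline_gw = 140.0  # new load if every announced project were built

announced_2026_gw = 12.0       # capacity announced for 2026 delivery
under_construction_gw = 5.0    # portion under active construction

# Transformer lead times in months: (low, high) before 2020 and today.
lead_time_pre_2020 = (12, 18)
lead_time_today = (36, 48)

pipeline_multiple = announced_pipeline_gw / current_draw_gw
build_share = under_construction_gw / announced_2026_gw

# Smallest and largest slowdowns implied by the two ranges.
lead_time_min_ratio = lead_time_today[0] / lead_time_pre_2020[1]  # 36 / 18
lead_time_max_ratio = lead_time_today[1] / lead_time_pre_2020[0]  # 48 / 12

print(f"Pipeline vs. current draw: {pipeline_multiple:.1f}x")
print(f"2026 capacity under construction: {build_share:.0%}")
print(f"Transformer lead times: {lead_time_min_ratio:.0f}x to {lead_time_max_ratio:.0f}x longer")
```

The pipeline works out to at least 9.3 times today’s draw, only about 42 percent of announced 2026 capacity is being built, and transformer lead times have grown by a factor of two to four.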

America’s investor-owned utilities have responded with $1.4 trillion in capital spending plans through 2030, up 27 percent from the prior year’s projection. But grid upgrades move on timelines measured in years, not quarters. The gap between committed capital and deliverable megawatts continues to widen.

This mismatch has created an opening for operators willing to think differently about how AI-ready capacity gets built.

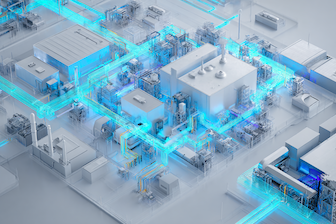

The Case for Building Inside Existing Walls

The brownfield conversion model attacks the binding constraint directly. Existing properties with committed utility power can bypass the two longest delays in data center development: interconnection queues and greenfield construction timelines.

The approach is gaining traction. Goldman Sachs’s models assume brownfield sites will account for 15 percent of required data center space in 2026, growing to 30 percent by 2031. More than 70 percent of global data center capacity already resides in existing buildings, according to Schneider Electric. And brownfield retrofits can cut facility capital expenditure by up to 30 percent compared to greenfield builds, per Data Center Dynamics.

The most dramatic proof of concept arrived in 2024, when xAI assembled Colossus, its 100,000-GPU supercomputer, inside a shuttered Electrolux factory in Memphis in just 122 days. The project went from empty industrial space to an operational AI training cluster faster than most greenfield developers can secure permits.

Three forces have converged to make this model viable at scale.

The first is the power bottleneck itself. When interconnection queues run five years deep, any operator who can deliver capacity on existing grid connections holds a structural advantage. Speed-to-megawatts has become a premium asset in its own right.

The second is liquid cooling. Two years ago, retrofitting an existing building for AI-grade power density was impractical, because air cooling hits its thermal ceiling at around 30 to 40 kW per rack. Direct-to-chip liquid cooling has upended that calculus. Goldman Sachs projects the share of liquid-cooled AI servers will climb from 15 percent in 2024 to 76 percent in 2026.

Schneider Electric published a technical framework in February 2026 focused on brownfield liquid cooling retrofits, highlighting localized, staged upgrades as a strong fit for colocation and service provider environments. Design loads of 100 to 200 kW per rack or more are now standard in new AI builds, and the AI data center liquid cooling market is projected to expand from $6.6 billion in 2025 to $61.8 billion by 2034.
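The density jump and the market forecast above translate into two simple ratios. The compound annual growth rate (CAGR) below is derived from the two quoted market sizes over the nine years from 2025 to 2034; it is computed here, not quoted from the forecast’s source.

```python
# Two figures from the liquid-cooling discussion, worked through.
# Inputs are the article's quoted numbers; the CAGR formula is the standard one.
AIR_COOLING_CEILING_KW = (30, 40)   # approximate air-cooled rack limit
LIQUID_DESIGN_LOAD_KW = (100, 200)  # design loads cited for new AI builds

MARKET_2025_USD_B = 6.6
MARKET_2034_USD_B = 61.8
YEARS = 2034 - 2025


def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` years."""
    return (end / start) ** (1 / years) - 1


# Most conservative and most aggressive density multiples vs. air cooling.
density_low = LIQUID_DESIGN_LOAD_KW[0] / AIR_COOLING_CEILING_KW[1]   # 100 / 40
density_high = LIQUID_DESIGN_LOAD_KW[1] / AIR_COOLING_CEILING_KW[0]  # 200 / 30
growth = cagr(MARKET_2025_USD_B, MARKET_2034_USD_B, YEARS)

print(f"Rack density vs. air ceiling: {density_low:.1f}x to {density_high:.1f}x")
print(f"Implied market CAGR 2025-2034: {growth:.1%}")
```

Run as written, this puts liquid-cooled design loads at roughly 2.5 to 6.7 times the air-cooling ceiling and implies market growth of about 28 percent a year.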

The third is the shift from training to inference. Centralized mega-campuses make sense for massive training clusters. But inference workloads, the production side of AI where models serve real-time predictions and outputs, benefit from geographic distribution. Smaller facilities deployed closer to end users reduce latency and spread the load across multiple grid connections. The conversion model maps well to this emerging architecture.
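The latency argument ultimately comes down to distance. The sketch below estimates the minimum round-trip delay that fiber distance alone adds, assuming light travels through optical fiber at roughly 200,000 km/s (about two-thirds of its speed in vacuum). The distances are arbitrary examples, not the partnership’s sites.

```python
# Propagation delay over fiber as a function of distance.
# ASSUMPTION: light in optical fiber travels at roughly 200,000 km/s.
# Routing detours, switching, and server processing time are ignored,
# so real-world latency is always higher than these figures.
FIBER_SPEED_KM_PER_S = 200_000


def round_trip_ms(distance_km: float) -> float:
    """Minimum round-trip propagation delay in ms over a straight fiber path."""
    return 2 * distance_km / FIBER_SPEED_KM_PER_S * 1000


for km in (50, 500, 2000):
    print(f"{km:>5} km -> {round_trip_ms(km):.1f} ms round trip (propagation only)")
```

Under this assumption, every 100 km of fiber adds about 1 ms of round-trip delay before any computation happens, which is why placing inference capacity nearer to users helps.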

Not every building qualifies, of course. Viable candidates need structural load capacity for high-density racks, proximity to utility substations with available or committed capacity, adequate water supply for cooling loops, and fiber connectivity. The differentiator is finding properties where utility power is already allocated or can be secured without entering the back of a multi-year interconnection queue. That’s where real estate expertise becomes just as valuable as engineering capability.
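The screening criteria above can be expressed as a simple pass/fail check. The sketch below is hypothetical: the class, field names, and every threshold are illustrative assumptions meant only to show the shape of the decision. In particular, it passes power if capacity is either already committed or obtainable within a short wait.

```python
from dataclasses import dataclass

# Hypothetical screening sketch for the four criteria named in the text.
# Every field name and threshold is an illustrative ASSUMPTION, not a
# figure used by 365 Data Centers, Aphorio Carter, or any other operator.


@dataclass
class CandidateSite:
    name: str
    floor_load_kg_per_m2: float       # structural floor loading rating
    committed_power_mw: float         # utility power already allocated
    interconnection_wait_months: int  # expected wait for additional power
    water_supply_ok: bool             # adequate supply for cooling loops
    fiber_routes: int                 # diverse fiber paths available


MIN_FLOOR_LOAD = 1200.0  # hypothetical kg/m^2 for dense liquid-cooled racks
MIN_POWER_MW = 10.0      # hypothetical minimum useful block of power
MAX_WAIT_MONTHS = 24     # hypothetical cap on an acceptable queue
MIN_FIBER_ROUTES = 2     # hypothetical path-diversity requirement


def screen(site: CandidateSite) -> list[str]:
    """Return the reasons a site fails; an empty list means it passes."""
    failures = []
    if site.floor_load_kg_per_m2 < MIN_FLOOR_LOAD:
        failures.append("structural load capacity")
    # Power passes if already committed OR securable without a long queue.
    if (site.committed_power_mw < MIN_POWER_MW
            and site.interconnection_wait_months > MAX_WAIT_MONTHS):
        failures.append("power: not allocated and queue too long")
    if not site.water_supply_ok:
        failures.append("water supply for cooling")
    if site.fiber_routes < MIN_FIBER_ROUTES:
        failures.append("fiber connectivity")
    return failures


factory = CandidateSite("former factory", 1500, 25, 60, True, 2)
office = CandidateSite("office park", 500, 3, 48, True, 1)
print(screen(factory))  # []
print(screen(office))
```

The former factory passes despite a 60-month queue because its power is already committed; the office park fails on structure, power, and fiber. That mirrors the point that allocated power, not the building itself, is usually the deciding factor.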

How the 365/Aphorio Carter Partnership Works

The 365 Data Centers and Aphorio Carter partnership pairs two distinct competencies. Aphorio Carter brings real estate investment, development, and asset management experience, backed by a leadership team that has collectively invested in and managed over $6 billion in data center real estate. 365 brings operational depth: colocation, connectivity, managed cloud services, and a pipeline of enterprise customers who need high-density capacity.

The division of labor is clean. Aphorio Carter sources and develops the properties. 365 operates them long-term. Together, they can move on multiple sites simultaneously rather than sequencing projects one at a time.

“We’ve aligned the delivery of utility power with critical infrastructure allowing us to provide scalable, high-density infrastructure where it’s needed most,” said John Regan, President and COO at Aphorio Carter. “This is a great partnership where we’ve got the real estate and the ability to supply the data center infrastructure in line with available utility capacity while 365 has a highly reliable O&M track record along with a healthy pipeline of customers. Together, we’re creating a scalable supply of power-rich environments that can be delivered faster and perform at a higher level than traditional developments.”

The geographic strategy reveals a deliberate calculation. Aurora, Simpsonville, Trumbull, Louisville, Harrisonburg, Columbus. These are not Northern Virginia or Dallas or Phoenix, the traditional hyperscale corridors where grid capacity is most constrained and competition for power is fiercest. Most are secondary markets where utility power remains more accessible and interconnection timelines tend to be shorter, because operators aren’t competing with multi-gigawatt campus developments for grid access.

The nine-to-24-month delivery window stands in sharp contrast to the three-to-five-year timelines that are now common for greenfield projects, particularly in regions where transformer shortages and grid congestion have slowed permitting and construction.

The Window Is Open, and the Clock Is Running

Brownfield conversions will not replace hyperscale campuses. Meta’s multi-gigawatt Hyperion project in Louisiana, the Stargate consortium’s 7 GW expansion across Ohio and Pennsylvania, Microsoft’s $7 billion Fairwater campus in Wisconsin: these projects exist at a scale and serve a purpose that conversions cannot replicate. The industry’s largest training clusters will continue to demand purpose-built facilities with dedicated power plants and custom electrical infrastructure.

But training clusters are only part of the picture. For mid-market operators serving enterprise AI deployments, for the growing wave of inference workloads that demand geographic distribution, and for any organization that needs AI-ready compute capacity in the next 12 to 18 months rather than the next three to five years, the conversion model fills a gap that greenfield development physically cannot address on the timelines the market demands.

The brownfield model carries a sustainability advantage, too. Reusing existing structures means a lower embodied carbon footprint than new construction, a factor that enterprise procurement teams are weighing more heavily in vendor selection as corporate climate commitments run headlong into the energy intensity of AI.

The competitive field for brownfield AI infrastructure is still forming. Few operators have assembled a repeatable, scalable conversion playbook. The 365 and Aphorio Carter partnership represents one early template, but the opportunity set extends well beyond six sites across five states. The window exists because the power wall will not come down quickly: transformer supply chains, interconnection backlogs, and permitting processes will take years to normalize even with the $1.4 trillion in utility investment now committed.

The AI infrastructure race will not be decided solely by who writes the biggest check. In a market where roughly half of planned 2026 builds have been delayed or canceled, the ability to deliver megawatts fast, inside existing walls, on existing grid connections, in markets the hyperscalers haven’t yet saturated, may prove just as consequential as any $10 billion campus announcement.