Giga Computing Scales OCP Innovation with Giga PODs

From GPU and storage servers to turnkey rack-scale solutions, Giga Computing showcases its expanding OCP portfolio and the evolution of Giga PODs for high-density, high-efficiency data centers.

Zero Point Technologies on NeoCloud and the Future of AI Infra

At OCP Dublin, ZeroPoint’s Nilesh Shah explains how NeoCloud data centers are reshaping AI infrastructure needs—and why memory and storage innovation is mission-critical for LLM performance.

Supermicro and Solidigm: Revolutionizing Storage for AI Workloads

NVIDIA’s GTC is always a hotspot for innovation. This year, two industry leaders, Supermicro and Solidigm, brought cutting-edge solutions designed for the next wave of AI workloads at cloud service providers (CSPs) and hyperscalers. From high-density storage to powerful cooling solutions, their collaboration is shaping the future of data centers. Wendell Wenjen, director of storage market development at Supermicro, and Shirish Bhargava, of Solidigm’s global field sales, sat down with TechArena to discuss how their partnership is addressing the growing demands of AI and what lies ahead for next-generation data center infrastructure.

Supermicro, known for pushing the envelope in server technology, showcased its latest systems for powering AI applications. Central to the presentation was the NVIDIA HGX B300 NVL16, featuring the powerful NVIDIA Blackwell Ultra GPUs and representing Supermicro’s most advanced GPU solution to date. These high-performance servers were designed with AI in mind, supporting the growing demand for deep learning and large-scale data processing. Supermicro also introduced a range of storage solutions optimized for AI workloads.

Another exciting announcement was the launch of a new 1U Petascale storage system, equipped with the NVIDIA Grace CPU. This dual-die, high-performance processor delivers impressive power efficiency, ideal for the ever-growing demands of AI. For those seeking even more storage efficiency, Supermicro unveiled a JBOF system powered by NVIDIA’s BlueField-3 DPU, which supports demanding storage workloads with lower power usage.

However, it wasn’t just Supermicro’s hardware that drew attention at GTC. Solidigm, a leader in enterprise solid-state drive (SSD) solutions, brought its own innovative technology to the table. The Solidigm D5 P5336 SSD, a 122 TB powerhouse, was also on display, offering extreme storage density that is essential for AI training and large-scale data management in all form factors. This SSD is part of Solidigm’s broader portfolio designed for AI, providing the kind of performance and capacity needed to handle massive datasets with low latency.

But what makes this collaboration truly stand out is the shared commitment to pushing the boundaries of storage capacity and efficiency. Supermicro and Solidigm’s joint focus on reducing data center footprint while maximizing storage capacity is a game-changer. By using Solidigm’s 122 TB quad-level cell (QLC) SSDs, Supermicro is able to pack three petabytes of storage into a 2U server, a dramatic leap from the previous one-petabyte systems. This compact, high-capacity solution not only optimizes data center space, but also reduces power consumption, making it a win-win for enterprises and service providers alike.
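
As a rough sanity check on the density figures above, the arithmetic can be sketched in a few lines. This is only an illustration: the decimal unit conversion and the use of the nominal 122 TB capacity are assumptions, the function names are hypothetical, and the article does not state how many drive bays the 2U system actually has.

```python
import math

# Assumptions (not from the article): decimal units (1 PB = 1000 TB) and
# drives counted at their nominal 122 TB capacity.
TB_PER_PB = 1000
DRIVE_TB = 122


def drives_needed(target_pb, drive_tb=DRIVE_TB):
    """Smallest whole number of drives whose nominal capacity reaches target_pb."""
    return math.ceil(target_pb * TB_PER_PB / drive_tb)


def density_pb_per_u(total_pb, rack_units):
    """Storage density in petabytes per rack unit."""
    return total_pb / rack_units


if __name__ == "__main__":
    print("Drives for 3 PB:", drives_needed(3))
    print("Drives for 1 PB:", drives_needed(1))
    print("PB per U at 3 PB in 2U:", density_pb_per_u(3, 2))
```

Under these assumptions, a few dozen drives are enough to reach the quoted capacity, which is why drive density rather than chassis size becomes the limiting factor.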

In an industry where cooling and power efficiency are critical, Supermicro’s approach to liquid cooling for high-performance GPUs stood out. Some of today’s most powerful GPUs can consume over 1,000 watts, making liquid cooling a necessity for keeping systems running at optimal temperatures. Supermicro’s comprehensive liquid cooling solution — ranging from cold plates for CPUs and GPUs to large outdoor cooling towers — ensures that even the most demanding AI systems stay cool, efficient and reliable.

So, what’s the TechArena take? This partnership between Supermicro and Solidigm is a testament to the importance of collaboration in driving innovation. Both companies have long been at the forefront of their respective fields, and their combined efforts are delivering practical, high-performance solutions to address the challenges of modern AI workloads.

For those looking to learn more, Supermicro offers an abundance of resources on their website (supermicro.com) and social media channels (X, LinkedIn and YouTube).

Solidigm & Supermicro Bring AI Storage to New Heights

From 122TB QLC SSDs to rack-scale liquid cooling, Solidigm and Supermicro are redefining high-density, power-efficient AI infrastructure—scaling storage to 3PB in just 2U of rack space.

Circle B Delivers End-to-End OCP Innovation in Europe

From full rack-scale builds to ITAD, Circle B is powering AI-ready, sustainable infrastructure across Europe—leveraging OCP designs to do more with less in a power-constrained market.

Bel Power Scales Up for AI-Hungry Data Center Racks

At OCP Dublin, Bel Power’s Cliff Gore shares how the company is advancing high-efficiency, high-density power shelves—preparing to meet AI’s demand for megawatt-class rack-scale infrastructure.

Will OCP Infiltrate NeoCloud?

The Open Compute Project Foundation (OCP) has had an undeniable impact on cloud deployments, and with $191B in forecasted infrastructure sales by 2029, there is no slowing this segment of the market. But many ask whether OCP can transcend its current focus on hyperscalers to engage with neo-cloud providers. Who is neo-cloud? If you’re not familiar with the term, these are the large-scale players building infrastructure custom-designed for AI workloads. Think CoreWeave, Lambda…or regional players like ScaleUp. And instead of building infrastructure to fail, as has been the case with the built-in redundancy of the cloud, they are building infrastructure to scale, delivering the horsepower required for every aspect of AI.

OCP has been very CPU-focused in its specs and marketplace, and with NVIDIA bringing some initial designs into the OCP ecosystem, the doors have opened for neo-cloud influence. At the Dublin event this week, Solidigm, Fractile, FarmGPU, and ScaleUp discussed what it will take to make this segment of the market as robust as traditional hyperscale.

In the discussion, Fractile CEO Walter Goodwin pointed out that these players tend to push configurations even more aggressively, given the inherent challenges of accelerated computing. This can challenge traditional standards-based hardware’s speed of innovation. How can OCP deliver a standard without limiting designs to a perceived one-size-fits-all philosophy?

Discussion moderator Nilesh Shah from Zero Point Technologies pointed to an underlying change in infrastructure, moving towards a more storage-centric approach, including an example of OpenAI’s new storage-centric blueprint for accelerated computing. Finding a similar focus and pulling the storage industry closer into the center of OCP, he argued, would bring new approaches to configuration alternatives. Beyond storage centricity, Nilesh pointed to broader diversity of silicon design foundations for innovation, providing operators with different options for AI acceleration. Walter agreed, stating that his company’s planned product introduction in 2027, aimed at competing with NVIDIA GPUs, is designed for the rack and facility level – exactly where neo-cloud vendors are seeking accelerator alternatives.

JM Hands, CEO of FarmGPU, suggested that another opportunity for neo-cloud traction involves adoption of a mix-and-match approach to configurations, delivering a higher level of customization and a deconstruction of infrastructure to dial in exactly what providers need. Many of the challenges neo-cloud is grappling with, he argued, have been solved by the hyperscalers within OCP. Creating more flexible ways to tap this technology through the OCP marketplace would help spur interest and engagement from these service providers.

What’s the TechArena take? Neo-cloud is still arguably in its infancy. Yes, that sounds ridiculous given the valuation of some of these companies, but with AI moving so fast, it’s easy to forget that some of these companies were not delivering services just a few years ago. Today, many neo-cloud providers are functioning as warehouses for NVIDIA configurations for hyperscaler consumption. Will they remain a function of outsourced compute for hyperscaler balance sheet management, or will their businesses take them further afield as more enterprises start adopting generative and agentic AI at scale? The discussion certainly underscored a challenge in data centers today: NVIDIA designs are taking some of the air out of the room for flexible innovation, and neo-cloud’s current reliance on NVIDIA GPUs aligns with a narrower approach to deployments, at least in the near term. We applaud the call for more memory- and storage-centric thinking, given the growing complexity of feeding AI and avoiding what we affectionately call agentic dementia, something we’ll cover in depth in an upcoming post. We see an acute opportunity for OCP, or really an imperative, to build a vibrant ecosystem that fully captures the AI curve. We also see the industry – from chiplet designers to integrated rack manufacturers and power and cooling providers – benefiting from a widened view of the market opportunity created by OCP-centric innovation. We’ll be watching attentively come October to see signs of significant traction in this space.

Optimizing AI Compute Value with OVHcloud’s Custom Infrastructure

At CloudFest 2025, OVHcloud shared insights on AI’s expanding role in cloud infrastructure, the benefits of custom-built servers, and how their global network is optimizing performance and efficiency.

Liquid Cooling Takes Center Stage at OCP Dublin

In this TechArena interview, Avayla CEO Kelley Mullick explains why AI workloads and edge deployments are driving a liquid cooling boom—and how cold plate, immersion, and nanoparticle cooling all fit in.

Giving Hardware a Second Life with Sims Lifecycle Services

At OCP Dublin, Sims Lifecycle’s Sean Magann shares how memory reuse, automation, and CXL are transforming the circular economy for data centers—turning decommissioned tech into next-gen infrastructure.

Silicon at the Edge of Innovation

Attending an Open Compute Project Foundation (OCP) conference provides a unique look at the bleeding edge of data center innovation, and this week’s event in Dublin has provided insight across the data center landscape. The foundation of advancement starts with silicon, and I was keen to see the latest technologies that are driving system capabilities forward. Here’s our quick take on the most interesting updates from the show.

Was the Elephant in the Room?

While NVIDIA was present across the show floor in vendor booths, the company has been limited in its engagement within OCP shows. The most notable impact of NVIDIA’s recent contributions was seen in OCP CEO George Tchaparian’s keynote, during which he announced an IDC forecast of $191B in global OCP infrastructure sales by 2029, up significantly from the $73.5B forecast for 2028 that was shared last year. This reflects, at least in part, the massive uplift in OCP infrastructure sales that comes from including NVIDIA specs and technical documents within the organization’s offerings. This opportunity was echoed by the prominence of NVIDIA systems within integrated rack configurations on the show floor, and by the centrality of NVIDIA within the first day’s sessions. In my mind, this expansion to accelerated computing is foundational for the next generation of OCP’s existence, as it takes the organization into the heart of AI compute and makes its programs relevant to the next wave of AI-centric data center operators like CoreWeave, Lambda, and more (more on this in an upcoming post about the neo-cloud). The OCP Foundation doubled down on the importance of AI-centric configurations, rolling out a new AI marketplace this week to serve as a central information hub for adopting AI configurations.

Who’s Up Next?

Of course, this is a bleeding edge tech conference, so TechArena is always looking for who’s going to emerge to compete with NVIDIA in the AI computing game.

Enter Fractile, the AI accelerator company that claims that they can deliver 100X faster inferencing at 1/10th the cost of existing systems.

While their first-generation product silicon has yet to tape out, Fractile has targeted their designs to solve a fundamental problem of current AI processing on GPUs – time and cost wasted in movement of data between memory and processor, and the resultant intractable trade-offs for inference between lowering costs and increasing speed.

Instead, Fractile CEO Walter Goodwin explained, the company is pursuing in-memory computing that fuses key calculations into memory structures, eliminating the friction and bottlenecking of constant movement of data between processor and memory. This means faster inferencing, and it also means reduction of energy consumption at scale, both capabilities that have garnered attention from the likes of industry heavyweights Stan Boland and Pat Gelsinger.

Goodwin has assembled a seasoned team – hardware, for instance, is led by ex-NVIDIA VP Pete Hughes. Time will tell if Fractile is the player to give NVIDIA a real challenge in the market. At this point it’s clear that at least in approach, they are taking a road that gives them an opportunity to compete at hyperscale.

What about Data?

With compute performance moving at hyper-Moore’s Law, pressure is increasing on other areas of the platform to keep pace. As we wait for in-memory compute to become reality, we need to take a look at discrete memory innovation. While HBM has provided fast delivery of data for AI systems, its power and capacity limitations make the technology an imperfect fit for some target applications. ZeroPoint Technology’s Nilesh Shah shared his views in an OCP session, stating that LLMs today are memory bound, with HBM GPUs operating at <60% utilization and inference memory traffic averaging a 6:1 read-to-write ratio. What’s more, current lossy memory compression technologies degrade application accuracy. New innovation is needed, and Nilesh pointed the way with a one-two punch of breakthroughs. The first is MRAM, a new memory technology that delivers HBM-like bandwidth with 30-50% less power utilization. According to MRAM provider Numem, the technology is ready for mainstream adoption. But the full story of memory innovation potential emerges when MRAM is coupled with the second: a new approach to cacheline compression that avoids the accuracy loss of traditional lossy methods, operates at nanosecond latencies, and delivers a 1.5X improvement in memory performance. ZeroPoint has introduced AI MX 1.0 to help the industry take advantage of this new approach with on-chip decompression engines…and, in the near future, compression brought on-chip as well.
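
To make the lossy-versus-lossless distinction concrete, here is a minimal sketch. It is not ZeroPoint's algorithm: `zlib` stands in for a lossless compressor (a software library like this would be far too slow for hardware cacheline compression), 16-bit float storage stands in for a lossy scheme, and the 64-byte line size and function names are assumptions made for illustration.

```python
import struct
import zlib

CACHELINE_BYTES = 64  # common cache line size; an assumption, not from the article


def lossless_roundtrip(line):
    """Compress and decompress one cache line with zlib.

    Returns (restored_bytes, compressed_size). zlib is only a stand-in here.
    """
    packed = zlib.compress(line)
    return zlib.decompress(packed), len(packed)


def lossy_roundtrip(values):
    """Store floats as 16-bit halves and read them back, losing precision."""
    fmt = "<%de" % len(values)
    return list(struct.unpack(fmt, struct.pack(fmt, *values)))


if __name__ == "__main__":
    # 16 float32 values fill exactly one 64-byte line.
    line = struct.pack("<16f", *([0.1] * 16))
    restored, size = lossless_roundtrip(line)
    print("lossless data intact:", restored == line)
    print("compressed smaller than a line:", size < CACHELINE_BYTES)
    print("lossy value intact:", lossy_roundtrip([0.1])[0] == 0.1)
```

The lossless path returns the exact bytes that were written, so model weights and activations are unchanged; the lossy path returns a nearby value, which is the kind of drift that can degrade application accuracy.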

Are We Talking Efficiency?

I expected compute and memory advancement at OCP, but Empower Semiconductor surprised me with new technology that improves the efficiency of power delivery on the board. They’re the world’s leader in integrated voltage regulation, reducing the need for on-board capacitors by deploying FinFast-based regulators on the backside of the PCB and greatly reducing the distance that power travels across the board to reach host processors. Hyperscalers are increasingly tapping their solutions to drive down power utilization, and the company claims a reduction of up to 20% vs. traditional capacitor technology. What’s coming next? Integration of their Crescendo solutions directly into the chip, made possible by the physical size reduction of their designs vs. competitive alternatives.

What’s the TechArena take?

The biggest story of silicon innovation at OCP was as much about who was not in the room – minimal presence from NVIDIA, AMD and Intel, to name a few – as about who was present in Dublin. While OCP configurations deliver system- and rack-level standards-based advancement, we think that silicon-driven configurations were lacking, at least within this event. And as the organization grapples with broader impact beyond hyperscalers, bringing new silicon players like Fractile to the table will help unleash the wave of disruptive innovation that the industry sorely needs.

OVHcloud Brings Carbon Transparency to the Cloud

At OCP Dublin, OVHcloud’s Gregory Lebourg shares how the company is giving customers real-time visibility into the carbon impact of their cloud workloads — before they even hit deploy.

Intel’s Lynn Comp on Agentic AI: How Enterprises Can Prepare

Agentic AI is reshaping enterprise data, infrastructure, and governance. Intel’s Lynn Comp joins TechArena to explore how organizations can get ahead of the coming wave of change.

Synopsys, Intel Foundry Team Up to Spur Angstrom-Scale Innovation

APRIL 29, 2025: At Intel Foundry Direct Connect 2025 today in San Jose, Synopsys announced a major expansion of its collaboration with Intel Foundry, fueling a new era of high-performance chip design with certified, production-ready EDA flows, expanded IP portfolios, and next-gen packaging innovation.

When it comes to the future of silicon, Synopsys is pushing full throttle into the angstrom era. Building on a multi-year partnership with Intel Foundry, Synopsys unveiled several key initiatives to fast-track advanced node designs, from silicon to system, across Intel’s most cutting-edge process technologies — including Intel 18A and the new Intel 18A-P with its groundbreaking RibbonFET and PowerVia innovations.

Here are the high points:

1) Certified digital and analog EDA flows for Intel 18A are now ready for Intel customers, supporting faster, more efficient design starts.

2) Production-ready EDA flows for Intel 18A-P — the evolution of 18A featuring gate-all-around transistors and backside power delivery — are now available, built through early collaboration between Synopsys and Intel, informed by unique early DTCO work on Intel 18A.

3) Advanced multi-die design enablement is also on deck. Synopsys has developed an optimized reference flow for Intel’s new EMIB-T packaging technology, powered by its 3DIC Compiler platform.

4) Expanded Synopsys IP support for Intel’s angstrom nodes, with solutions designed to maximize performance, power efficiency, and silicon lifecycle management.

Synopsys also announced they’ve joined the Intel Foundry Accelerator Design Services Alliance, and are a founding member of the new Intel Foundry Chiplet Alliance, further deepening their leadership role in the Intel Foundry ecosystem.

What Makes This News Stand Out

The industry has been anticipating Intel 18A as a critical moment — not just for Intel’s re-entry into foundry leadership, but for the broader enablement of truly differentiated HPC silicon. PowerVia and RibbonFET technologies are redefining what’s possible in PPA (Performance, Power, Area) optimization, but designing on these advanced nodes requires a complete rethinking of traditional EDA flows and IP development.

Synopsys has done more than adapt. They leaned into early co-optimization efforts with Intel, notably through unique Design Technology Co-Optimization (DTCO) on Intel 18A, fine-tuning every stage from exploration to signoff, and enabling early adopters to move faster with fewer design iterations. This close collaboration continues, with Synopsys now engaged in DTCO work for Intel 14A-E.

Multi-die design is also a critical theme. With chiplets rapidly becoming a dominant architecture for AI accelerators, network processors, and HPC systems, advanced packaging solutions like EMIB-T — and the design tools that support them — will be make-or-break for innovation. Synopsys' unified 3DIC Compiler platform allows for exploration, planning, and multiphysics signoff in a single environment, which helps teams manage the complexity of heterogeneous integration at scale.

And on the IP side, the breadth of Synopsys' offering for Intel 18A is impressive. It spans high-speed interfaces like 224G Ethernet, PCIe 7.0, UCIe, and USB4, along with critical foundation IP such as embedded memories, logic libraries, IOs, and PVT sensors, all optimized for PowerVia and the unique characteristics of RibbonFET designs.

The TechArena Take

At TechArena, we see Synopsys' expanded collaboration with Intel Foundry as a key accelerant for the next wave of semiconductor innovation — especially in AI and high-performance computing, where the race for leadership is not just about process nodes, but about full-system optimization.

By delivering certified flows for 18A, production-ready flows for 18A-P, robust IP portfolios, and deeply integrated multi-die design capabilities, Synopsys is helping to de-risk early adoption of Intel’s most advanced technologies. This could open the door for a broader range of customers to confidently tape out designs on Intel 18A and 18A-P — and could ultimately help accelerate ecosystem momentum for Intel Foundry’s ambitious growth plans.

In an era where silicon leadership increasingly means system leadership, Synopsys isn’t just following the roadmap. They’re helping draw it.

Inside OCP Innovation: A Fireside Chat with Matty Bakkeren

Ahead of OCP Dublin, Matty Bakkeren joins Allyson Klein to break down rack innovations, liquid cooling, sovereignty, and the trends shaping data center infrastructure across Europe.

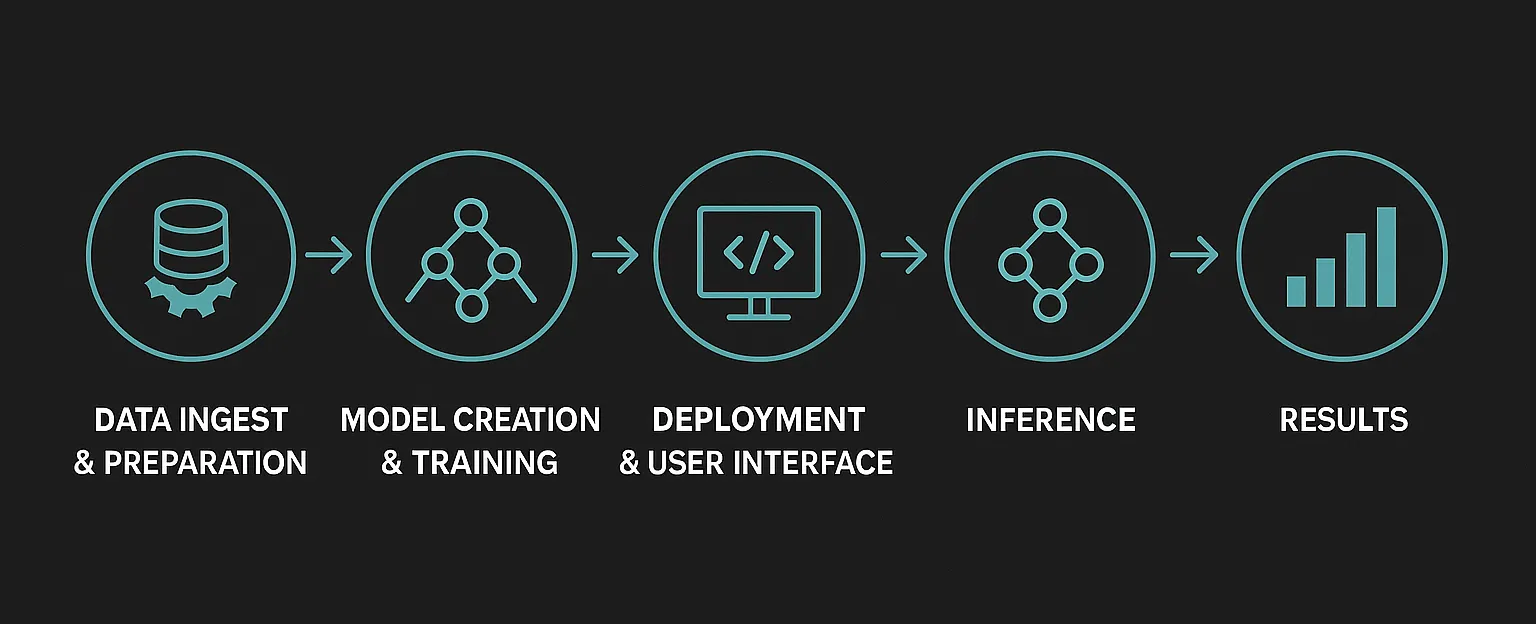

Creating a Foundation for End-to-End AI Security Solutions

Your organization has caught the generative AI fever and is rolling out chatbots powered by cutting-edge models that promise to reveal valuable new insights and deep data linkages, all accessible via plain-language prompts. You’re probably considering Retrieval-Augmented Generation (RAG) to add private, context-specific data to the model to address the risks of hallucinations and out-of-date or missing context. Your development team is under pressure to be first to market, and the business team may even be experimenting with things like “vibe coding” to get there even quicker. You’ll test and learn and then refine as you go. You’re keeping your private data sources on-premises, so you should still be covered for confidentiality, privacy, and regulatory requirements, right?

But here’s the deal: regulations generally trail innovation, and fully compliant, out-of-the-box solutions likewise tend to trail the regulatory mandates as they begin to be established. The “run fast” mentality could put you on a collision course with safety in critical domains such as healthcare, finance, autonomous vehicles, and agentic systems, where “breaking things” can mean disastrous consequences. So, the question becomes: how do we keep innovating at today’s breakneck pace without breaking the trust, security, and safety foundations our systems depend on?

Not Just Another Workload

I’ve been around IT and Enterprise systems for a long time. I started during the “PC Revolution”, putting real data processing into the hands of ordinary consumers rather than just specialists with access to mainframes. Soon we were marching into the “Internet Revolution”, with all its interconnected glory and chaos. The “Cloud Revolution” followed, enabling IT departments to become more agile and efficient by breaking free from the constraints of physical location and infrastructure ownership. And now the “AI Revolution” is careening forward, promising to disrupt even the basic ideas of where data can come from and how it can be used. Each of these eras required new ways of thinking about how we approach the core tenets of compute.

My primary area of focus is how to protect these systems, the data they process, and users and organizations that live and die by them. That hasn’t changed, and most of the cybersecurity principles we’ve lived by continue to apply. We still need to address the fundamental triad of Confidentiality, Integrity, and Availability. We still need to monitor networks and assets, manage access controls, secure our supply chains, and so on. It’s tempting to think of AI as “just another workload” and assume we’ll simply protect it like any other compute, but AI brings unique challenges we never faced before, at unprecedented scale.

Scale and Unpredictability

I’ve noted two significant new factors. The first is the sheer volume of data involved. In the past, a program had specific and well-defined data sources and outputs. We knew what was going in, and how it would be processed; we were the ones deciding after all. But now we scoop up massive troves of data from previously unutilized sources. Different types of data, such as audio/visual, machine logs, multi-lingual, specifications and diagrams, out-of-date documents, and unsupervised social posts are all being pulled into training sets. Garbage in, garbage out. This makes me wonder how I ensure only valid, accurate, appropriate, and relevant data drives my AI when it literally could be anything.

The second major factor is the non-deterministic nature of AI. Sure, I set up data sources, I codify a model training structure, I sample and test the outputs. But I don’t know the linkages AI will find amongst all that data. I can’t evaluate each logic tree that led to a prediction. I won’t be able to fully anticipate the biases, blind spots, and misunderstandings that will be buried in a vector database and ultimately drive a decision. I’ve set up the arena, but the movements won’t always be what I expect, and my outcomes are determined by unknown probabilities rather than IF-THEN directives.

Our approach to security must adapt to AI. Some controls, such as static defenses, signature-based detection, and perimeter-based security, will no longer work. Others that were previously niche will become commonplace. We live in a world with billions of personal compute endpoints, fabulously interconnected across the internet, with access to infinite data hosted in the world’s clouds, but now we have an evolving and unpredictable logic operating across it all. These are exciting times full of promise, but we’d better not shoot ourselves in the foot as we race to realize it.

Start at the Foundation

We are starting to see some very good frameworks for addressing the unique aspects of securing AI. The OWASP Top-10 for LLMs and Gen AI Apps from the Open Worldwide Application Security Project is one of these. It catalogues some of the primary vulnerability types, complete with attack scenarios and potential mitigations for each. MITRE’s ATT&CK framework and ATLAS are others, and Intel was a contributing developer for both. When assessing their security postures, most cybersecurity professionals consider network topologies, access controls, data architectures, DevOps practices, and more. Numerous software stack solutions are available to help address these, and it’s generally taken for granted that they will “just work” on whatever platform they reside on. But many still don’t consider the role the underlying platform plays in enabling secure solutions. Selecting the right foundation is actually your first security decision, and it can impact all the decisions that come after.

Defense-in-Depth: A Comprehensive Security Approach

Combating today's sophisticated threats requires a defense-in-depth strategy, where hardware and software are tightly optimized to enhance overall security. Intel has been a recognized leader in securing critical assets and data and offers a holistic approach to defend AI artifacts and workloads throughout their lifecycle.

Best-in-Class Platform Assurance

Product security is at the foundation of everything we do at Intel, and that’s proven by Intel’s number one rank in product security assurance compared with other top silicon vendors. Intel’s latest Product Security Report highlights how our proactive approach to identifying and mitigating vulnerabilities resulted in 96% of addressed vulnerabilities being discovered by internal programs, rather than leaving it to external researchers or attackers to find issues after the fact. AMD reported four times more firmware vulnerabilities in their hardware root-of-trust than Intel, and almost twice the number of vulnerabilities in their confidential computing technologies than Intel. This is especially significant since 43% of AMD’s platform firmware vulnerabilities were discovered externally.

Cryptographic Accelerators

Encryption and hashing sit at the heart of security solutions, and we just expect those to work. But we are approaching the era of quantum computing, where stronger algorithms and larger key sizes are required to resist the brute-force capabilities of future quantum computers. And these computationally-intense algorithms, in turn, put more strain on today’s processors. Intel is not only integrating the latest quantum-safe algorithms into our platform, but we’ve embedded encryption accelerators in our processors to support bulk crypto offload to significantly speed these computations and reduce the performance impact.

Hardware-Based Defenses

In protecting the application stack, security is only as good as the layers below it. Even the best application security techniques and architectures can be circumvented by vulnerabilities in the OS, attached components, supply chain, etc. This is why security should be rooted in the lowest layer possible, in the platform silicon. This begins with establishing a root of trust at the start of boot, with each level first validating the next before giving it permission to instantiate. Instructions in the processor can also identify and prevent return-oriented programming (ROP) and jump-oriented programming (JOP) attacks, which could be used to manipulate AI processing flows. And the processor also can provide low-level telemetry that can be utilized with AI to identify the processing signatures of ransomware and cryptojacking that would be otherwise undetectable by high-level monitoring.
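
The boot-time chain described above, where each level validates the next before letting it start, can be sketched as follows. This is a simplified illustration rather than any vendor's implementation: real platforms typically verify cryptographic signatures against keys anchored in hardware, whereas this sketch only compares SHA-256 digests, and the stage names and `verified_boot` function are hypothetical.

```python
import hashlib


def digest(image):
    """SHA-256 hex digest of a boot stage image."""
    return hashlib.sha256(image).hexdigest()


def verified_boot(stages, trusted_digests):
    """Walk boot stages in order, launching each only if its hash is trusted.

    stages: list of (name, image_bytes) in boot order
    trusted_digests: dict mapping stage name to its expected SHA-256 hex digest
    Returns the names of the stages that were allowed to start.
    """
    launched = []
    for name, image in stages:
        if digest(image) != trusted_digests.get(name):
            break  # stop the chain: nothing after an unverified stage runs
        launched.append(name)
    return launched


if __name__ == "__main__":
    stages = [("firmware", b"fw-v1"), ("bootloader", b"bl-v1"), ("os", b"os-v1")]
    trusted = {name: digest(image) for name, image in stages}
    print(verified_boot(stages, trusted))

    tampered = [stages[0], ("bootloader", b"modified"), stages[2]]
    print(verified_boot(tampered, trusted))
```

The design point is that trust flows strictly upward: a modified bootloader halts the chain before the operating system, and anything running on it, is ever given control.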

Confidential and Trusted AI

It’s common practice to encrypt data in-transit to protect against interception while it’s sent across the network. And more and more data is also being encrypted at-rest in case of malicious or accidental exfiltration from storage. But data exists in another state as well: in-use. In traditional computing, the data is in an unencrypted state while it is being processed in memory. This makes data in-use vulnerable to attack via malicious admins and malware that can exploit vulnerabilities at the OS layer to gain access. There are literally thousands of logged vulnerabilities that can result in an escalation of privileges, essentially granting root access for the attacker to access the contents of memory, including the data that would otherwise be encrypted at-rest and in-transit. But perhaps even more frightening are the zero-day vulnerabilities that haven’t yet been discovered and patched.

Confidential Computing technologies are rooted in hardware and provide trusted execution and encryption of memory, plus isolation and verification of the integrity of workloads, closing off low-level privilege escalations as a mechanism of attack. This is especially important where workloads run on infrastructure that is remote (such as edge) or not operated by the organization (such as in the cloud) but also applies for on-prem to reduce exposure to insiders and zero-days. Confidential AI solutions reduce the risk of attacks such as prompt injection, data and model poisoning, model theft, and sensitive data disclosure.

Conclusion

We’re stepping into a new epoch of compute. The old rules aren’t out, but new rules have been added, with more to come. We need to evolve our approach to security as well, with foundational capabilities rooted in the immutable core. Intel platforms have leading security built in by default, and deliver a security foundation to build upon.

Taurus Group Champions Open Infrastructure for Today’s IT Needs

In today’s rapidly evolving IT landscape, flexibility and adaptability are key to meeting the demands of businesses seeking innovative, scalable solutions. This shift in customer needs came into sharp focus at CloudFest 2025, where we sat down with Steve Gutierrez, director of sales at Solidigm, and Arun Garg, founder and CEO of Taurus Group. With a focus on open platforms and best-of-breed components, Taurus is providing businesses with the freedom to build the infrastructures they need, without being tied to a single-vendor, one-size-fits-all solution.

Taurus Group’s approach is centered around offering a diverse array of options to its partners, tapping into open compute platforms and leveraging the expertise of its sister company, Circle B, a specialized provider for the Open Compute Project Foundation (OCP). Alongside this, Taurus works closely with Cluster Vision to deliver high-performance computing solutions and open-source cluster management tools. These collaborations give Taurus the tools to provide cutting-edge, flexible infrastructure options while remaining agile enough to adapt to the unique demands of their clients.

Arun highlighted how important it is for businesses today to have the freedom to choose the best components for their needs, rather than relying on a single vendor’s ecosystem. The days of restrictive, monolithic IT systems with one-size-fits-all solutions are long gone — today’s customers are looking for more flexibility and the ability to customize their infrastructure.

Solidigm’s products, such as the recently launched 122TB storage solution, perfectly complement Taurus Group’s portfolio, offering innovative, reliable options for clients in need of scalable storage. According to Arun, customers appreciate the collaboration with Solidigm because it ensures that they can deliver the best possible solutions with the added benefit of excellent support from Solidigm’s technical and support teams.

What’s the TechArena take? The collaboration between Taurus Group and Solidigm is a great example of how true partnerships can help businesses deliver more powerful solutions to their customers. It’s clear that as companies like Taurus continue to push for openness and flexibility in the IT space, they’re creating a future where businesses are no longer constrained by traditional, single-vendor systems, but instead have access to a wide range of tools and solutions that are tailored to their needs.

For anyone looking to explore more about Taurus Group and how they can leverage the latest storage solutions, reach out directly through their website, tauruseu.com. With a clear focus on building strong, flexible partnerships, Taurus Group is poised to continue playing a key role in the future of IT infrastructure.

Synopsys + TSMC Angstrom-Scale AI Design

At the 2025 TSMC Technology Symposium today, Synopsys and TSMC revealed new milestones in their long-standing collaboration — signaling a major step forward for angstrom-era silicon design. With certified digital and analog design flows now available for TSMC’s A16™ and N2P nodes, the two companies are opening new pathways for semiconductor innovation, particularly in high-performance compute (HPC), AI, and multi-die architectures.

The announcement is packed with substance: certified EDA tools, new IP, deeper 3DIC integration, and early development for the next wave of process tech, A14. It all reflects a broader trend — the convergence of AI design complexity, extreme scaling, and ecosystem-driven innovation.

Angstrom-Scale Design, Accelerated

Certified Synopsys.ai-driven flows for both digital and analog design on TSMC’s A16 and N2P processes enable optimized performance, power efficiency, and rapid design migration. Enhancements such as backside routing and frequency-optimized logic placement in Fusion Compiler are designed to squeeze every ounce of efficiency out of GAA transistors — accelerating the transition from FinFET to gate-all-around.

While customers gain productivity today, the future is already in view. Synopsys is working closely with TSMC to develop flows for the forthcoming A14 process, giving design teams a head start on next-generation innovation.

Simplifying Complexity with 3DIC

As multi-die integration becomes the new performance engine for advanced workloads, the companies are also tightening their alignment on 3D packaging. Synopsys’ 3DIC Compiler — now certified for TSMC’s CoWoS® with up to 5.5x reticle interposers — enables ultra-dense chip stacking. That means customers can build larger, faster AI and HPC chips without waiting for smaller process nodes.

With support for 3Dblox and seamless integration of Ansys simulation technologies for thermal, power, and signal integrity, 3DIC Compiler offers an all-in-one toolkit for design exploration and signoff — critical for getting high-stakes multi-die systems to market quickly and reliably.

IP to Match the Mission

You can’t innovate at the edge of physics without the right IP — and Synopsys delivers it. The company announced first-pass silicon success for its Foundation and Interface IP portfolio on TSMC’s N2 node, giving customers the confidence to tape out with aggressive performance and power targets.

The IP stack spans industry-leading protocols including PCIe 7.0, HBM4, UCIe, USB4, LPDDR6, DDR5, and the newly added UALink and Ultra Ethernet technologies — reflecting the push for faster interfaces in data-hungry workloads. Synopsys’ 224G PHY IP, a cornerstone of this performance tier, is already demonstrating ecosystem-wide interoperability, including support for optical and copper links.

With this broad portfolio, customers building AI, HPC, edge, and automotive systems can reduce integration risk while maximizing bandwidth and energy efficiency.

The TechArena Take

This is more than a standard EDA/IP announcement. It’s a signal flare for where the semiconductor industry is headed — and how AI is shaping every element of innovation. From A16 silicon to multi-reticle packaging, to next-gen PHY IP, the collaboration between Synopsys and TSMC is pushing complexity behind the scenes so design teams can focus on what matters: delivering differentiated silicon.

It’s also an important marker for how platforms like Synopsys.ai are helping the industry move faster. As GAA nodes introduce new challenges in pin access, backside power delivery, and physical design, AI-native tools aren’t just “nice to have” — they’re essential.

And as 3D packaging becomes the standard rather than the exception, a unified environment like 3DIC Compiler becomes critical to maintaining speed and sanity in high-stakes design cycles.

At TechArena, we’ll be watching how this evolves — especially as the A14 node development matures and more chipmakers lean into multi-die architectures for inference acceleration, memory disaggregation, and compute scaling.

For now, one thing’s clear: Synopsys and TSMC are setting a new bar for collaboration in the angstrom era.

For More Information:

News release: Synopsys and TSMC Usher In Angstrom-Scale Designs with Certified EDA Flows on Advanced TSMC A16 and N2P Processes

Additional news blog post: First-Pass Silicon Success on TSMC’s N2 Process Will Enable New Generation of AI-Enabled Edge Devices

Scaleway’s AI Vision: Scalable, Sustainable Cloud Infrastructure

In a landscape often dominated by hyperscalers and familiar names, Scaleway is quietly rewriting the rules. With a clear vision and a sharp focus on what modern cloud scalers actually need, it’s stepping into a role that feels both timely and transformative. In a recent conversation between Conor Doherty, field applications manager at Solidigm, and Yann-Guirec Manac’h, head of hardware R&D at Scaleway, we get a closer look at how this European cloud provider is not only keeping pace with hyperscale trends, but is also helping to shape them through focused innovation in AI infrastructure and sustainability.

Scaleway’s approach feels refreshingly grounded. It focuses on delivering a complete foundation — compute, network, storage — and binding it all together with a unified control plane that supports both traditional and AI workloads. But what really sets them apart is the dual-track strategy for AI: accessible GPU instances for smaller-scale use, and massive, tightly interconnected GPU clusters for the heavy-duty training jobs. It’s the kind of infrastructure that recognizes how AI work isn’t a one-size-fits-all operation — some tasks need 2 GPUs, while others demand thousands. Scaleway is designing for both.

Gaining a deeper understanding of Scaleway’s AI strategy starts with examining the data pipeline. As Yann-Guirec put it, AI training isn’t just compute-heavy — it’s about data complexity, scale and flow. Harvesting and curating vast datasets, handling throughput during training, managing checkpoints and doing inference all require different storage strategies. Cold storage for archival compliance, warm layers for preparation and hot storage for training — each has different hardware implications. It’s not just about speed, it’s about adaptability, and Scaleway’s infrastructure acknowledges that every phase in the AI pipeline has unique demands.

With the conversation around sustainability finally taking center stage in tech, Scaleway’s stance is more than a footnote — it’s core to their identity. Backed by the Iliad Group, the company has built and operates data centers that run on 100% renewable energy. DC5, the data center that houses a lot of their AI pods, forgoes traditional air conditioning in favor of adiabatic and free cooling methods. The result is dramatically lower power usage effectiveness without sacrificing performance. But Yann-Guirec takes it a step further, pointing out a rarely discussed metric: water usage. Scaleway is looking at water usage effectiveness as well, with a view toward responsible innovation that doesn’t overlook environmental cost.
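For readers less familiar with the two metrics named above, here is a minimal sketch of how they are commonly defined: power usage effectiveness (PUE) is total facility energy divided by IT equipment energy, and water usage effectiveness (WUE) is site water use in liters divided by IT equipment energy in kWh. The input figures below are made-up placeholders for illustration only. They are not Scaleway or DC5 measurements.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy.

    A value of 1.0 would mean every kWh goes to IT equipment; lower is better.
    """
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def wue(site_water_liters: float, it_equipment_kwh: float) -> float:
    """Water usage effectiveness: liters of water used per kWh of IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return site_water_liters / it_equipment_kwh


# Hypothetical annual figures, chosen only to show the arithmetic.
it_energy_kwh = 1_000_000.0
facility_energy_kwh = 1_150_000.0
water_liters = 200_000.0

example_pue = pue(facility_energy_kwh, it_energy_kwh)
example_wue = wue(water_liters, it_energy_kwh)

print(f"PUE: {example_pue:.2f}")
print(f"WUE: {example_wue:.2f} L/kWh")
```

Tracking both numbers side by side matters because some cooling approaches can trade electricity for water, so a facility could improve one metric while worsening the other.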

What’s perhaps most fascinating is how Scaleway sees the future of AI training workloads. Today’s language models may be grounded in web-scale text, but tomorrow’s models — multimodal, agentic and domain-specific — will need exponentially more data across formats, such as images, audio and video. That means even more demand on both GPU throughput and the bandwidth feeding those GPUs. Scaleway is building toward this future now, with GPU pod systems capable of pushing hundreds of gigabits per second and storage systems built to scale with that need.

While big names in AI infrastructure often dominate the narrative, conversations like this remind us that serious innovation is happening beyond the usual suspects.

So, what’s the TechArena take? Scaleway isn’t trying to be everything to everyone – but for teams building sophisticated AI pipelines, in Europe and beyond, it’s quickly becoming a name to watch.

To dive deeper, visit scaleway.com.

Google's Antitrust Reckoning: U.S. and India Take Action

Reading the antitrust news about Google this past week gave me a keen sense of déjà vu as I thought back to my years at Intel and what it was like to witness its historic regulatory battles from the perspective of an employee (more on that in a moment).

During a week packed with regulatory friction, Google faces serious domestic and international challenges with legal actions that promise to shake the foundations of its business model. In the U.S., the Department of Justice (DOJ) is now in the remedies phase of its antitrust case against Google. The court already ruled that Google violated antitrust laws by using multi-billion-dollar contracts to entrench its search engine dominance. Those exclusive deals — like the reported $20 billion agreement with Apple — are now in the crosshairs of regulators. The DOJ’s proposed remedies could go as far as banning those contracts altogether or even forcing a Chrome browser divestiture.

In a separate case, a U.S. District Court found Google’s advertising business to be an illegal monopoly — one that actively suppressed competition and harmed publishers through a tightly controlled ad stack. The DOJ is pushing for a breakup here too, potentially including the sale of Google Ad Manager.

Outside the U.S., Google has just settled a longstanding case with India’s Competition Commission regarding its Android TV practices. The settlement, which includes a $2.38 million payment, will loosen Google’s grip on device manufacturers, allowing more freedom to choose non-Google apps and services. While this is a rounding error on Google’s balance sheet, it reflects growing global interest in holding the company accountable for anti-competitive practices.

Déjà Vu: Intel’s Antitrust Era

For those of us who have been in tech long enough to remember, Google’s situation feels eerily familiar. Let’s face it. The tech industry is rife with antitrust challenges, and recent history has filled the headlines with companies including Apple, Amazon, Meta, Microsoft, NVIDIA, and Qualcomm facing legal challenges regarding anti-competitive practices.

During my tenure at Intel, I had a front-row seat to what it means to operate under a regulatory microscope. Intel was repeatedly investigated — and fined — for allegedly using its x86 CPU market position to suppress competition, particularly from AMD. The landmark 2009 European Commission decision fined Intel €1.06 billion (then $1.45 billion), claiming that the company gave rebates to PC makers and retailers in exchange for exclusivity, effectively locking out AMD. Though Intel spent over a decade appealing the ruling, the reputational and strategic impact was immediate and lasting. The FTC also launched its own suit, which Intel settled in 2010 by agreeing to a slew of business practice changes.

Those years were...educational. Internally, every marketing strategy and partner engagement had to be re-evaluated under the lens of compliance. Creativity and flexibility in the market were absolutely curtailed, and while the business remained strong, the regulatory pressure opened the door for new competitors and architectural shifts — including the rise of Arm and resurgence of AMD. And that’s what these restrictions are supposed to do – open the door to competition that benefits the broader market and customers.

Now, Google is facing a similar inflection point. What’s at stake isn’t just its business practices — it’s the foundational structure of its revenue model, particularly in advertising and browser dominance. If remedies go as far as DOJ proposals suggest, Google could be forced to rewrite the rulebook on how it competes, once again opening the door to new competitors in the market.

The TechArena Take

At TechArena, we’re watching this moment closely, because it’s not just about Google. It’s about how innovation and market power intersect, and how regulatory pressure can unlock or stifle competition. If history is any indication, the companies that come out on top in these moments aren’t the ones who just fight the legal battle; they’re the ones that drive disruptive change into markets dominated by a single player at times of regulatory pressure. We celebrate open competition and standards-based tech stack innovation, and we’re excited to see how this regulatory pressure cooker will foment new opportunity for the market and customer engagement.

GIGABYTE and Solidigm Unveil Advanced AI and HPC Solutions

At the NVIDIA GTC conference, where the latest advancements in AI and high-performance computing (HPC) are on display, Scott Shadley, director of leadership narrative and evangelist of Solidigm, sat down with Carrie Wang, sales marketing at Giga Computing, and TechArena, to discuss the rapidly evolving landscape of enterprise technology and AI infrastructure. Our conversation shed light on the critical role data centers and efficient computing play in addressing the growing demand for AI-driven workloads.

Giga Computing is making significant strides in the AI and HPC arenas. Carrie spoke about the company’s journey, noting how GIGABYTE’s server business began with a small team back in 2000, ultimately evolving into a leader in the AI infrastructure sector. Today, Giga Computing, a wholly owned subsidiary of GIGABYTE, is shaping the future of high-performance AI, cloud and HPC solutions for businesses worldwide.

As AI adoption surges across industries, the need for power-efficient and scalable infrastructure has never been more critical. Carrie highlighted that AI inferencing, the process of applying trained models to real-world data, is becoming more power efficient, but the growing demands of AI workloads mean that data-intensive processes are increasingly requiring better storage, networking and compute solutions.

One of the standout solutions Giga Computing is showcasing this year is the GIGABYTE G893 GPU server platform. With support for NVIDIA HGX™ B200 and NVIDIA HGX™ B300 NVL16, this platform is specifically designed to handle the most demanding AI and HPC workloads. Paired with NVIDIA BlueField®-3 SuperNICs, the G893 delivers outstanding performance while minimizing energy consumption – a key concern as data centers grapple with rising power demands. Additionally, Giga Computing’s innovative cooling solutions, including the GIGABYTE G4L3 platform, ensure that these powerful systems run efficiently even under heavy loads. At the conference, Giga Computing presented a comprehensive solution for data centers, featuring a direct liquid cooling (DLC) rack system that combines multiple racks for a fully integrated solution.

The conversation also touched on how Giga Computing is addressing the challenges faced by the industry. With AI workloads continuing to surge, the demand for power-hungry data centers is driving up rental rates, with increases of up to 6.5% compared to the first half of 2023. In this environment, businesses must carefully select the right components to maximize performance while keeping power consumption in check. It’s a delicate balancing act, and Giga Computing’s solutions are designed to help customers optimize their infrastructure to address these challenges.

Another topic discussed was the rise of agentic AI — systems capable of making decisions autonomously based on real-time data. Carrie emphasized that agentic AI relies heavily on inferencing, and Solidigm’s NVMe solid state drives (SSDs) play a critical role in supporting these models. With Solidigm’s cutting-edge storage solutions, customers can efficiently handle large-scale datasets in their data centers, minimizing delays and ensuring low costs and power consumption.

So, what’s the TechArena take? As AI, HPC, and cloud workloads continue to evolve, it’s clear that collaboration between companies like Solidigm and Giga Computing is key to driving the next wave of innovation. By working together to deliver integrated solutions that prioritize performance, power efficiency, and scalability, these companies are setting the stage for the future of AI infrastructure.

Watch the full video here.

For those looking to learn more about Giga Computing and their groundbreaking solutions, you can visit their website, gigabyte.com/Enterprise, or follow them on LinkedIn and Twitter to keep up to date on their latest offerings and innovations.

AI, Storage & the NYSE: Behind the World's Fastest Markets

Solidigm's Roger Corell chats with ICE's Anand Pradhan to explore how AI, storage, and system design fuel 700B+ daily trades — and what AI inference means for the future of storage at scale.

Torc’s Autonomous Trucking Platform: Powering the Future of Freight

The future of trucking is autonomous – and Flex is helping enable it by building the brains behind the big rigs.

At NVIDIA’s GTC 2025, Torc Robotics unveiled its latest advancement in autonomous trucking: a scalable physical AI compute system developed in collaboration with Flex and NVIDIA. The platform is designed to enable self-driving trucks to perceive and navigate their environment in real-time, utilizing sensors like lidar, radar, and cameras.

Flex plays a pivotal role in this collaboration by providing the Jupiter compute platform and advanced manufacturing capabilities. The Jupiter platform integrates NVIDIA’s DRIVE AGX with the DRIVE Orin system-on-a-chip and DriveOS operating system, delivering the high compute performance and low latency required for Torc’s autonomous trucking software. Each Jupiter unit has 2 SoCs, for a total of 8 SoCs in the four-unit version Torc is deploying.

This partnership ensures that Torc’s autonomous trucks meet stringent requirements for size, performance, cost, and reliability, aligning with fleet customers' total cost of ownership targets. The platform's adaptability allows it to accommodate varying operational conditions, including new routes, hardware configurations, and road environments.

Torc plans to commercially launch this autonomous trucking platform in 2027, marking a significant step toward scalable production and deployment of self-driving long-haul trucks.

Flex Wins Automotive PACE Award

In a testament to its pioneering efforts in autonomous vehicle technology, Flex earned two 2025 Automotive News PACE Awards, honored for its industry-leading Jupiter Compute Platform and Backup DC/DC Converter design platforms. The PACE Awards recognize automotive suppliers for superior innovation, technological advancement, and business performance. The innovation awards demonstrate Flex’s leadership in compute and power electronics product design for next-gen mobility.

The Broader Impact of Autonomous Trucking

The introduction of autonomous trucks promises to bring transformative changes to the freight industry:

Cost Reduction: Autonomous trucks are projected to reduce shipping costs by 25-30%, primarily by eliminating driver wages, which constitute a significant portion of operational expenses.

Increased Efficiency: These vehicles can operate nearly 24/7 without the need for rest breaks, potentially cutting shipping times by 25-35% and enhancing supply chain efficiency.

Enhanced Safety: By removing human error factors such as fatigue and distraction, autonomous trucks are expected to significantly reduce accident rates, leading to lower insurance costs and improved road safety.

Environmental Benefits: Continuous operation and optimized driving patterns contribute to better fuel efficiency, reducing emissions and supporting sustainability goals.

The impact of autonomous trucking on employment, particularly for long-haul drivers, is a topic of significant discussion and analysis. A study commissioned by the U.S. Department of Transportation suggests that with slow to medium adoption of autonomous trucking technology, the industry could avoid mass layoffs. High turnover rates in long-haul trucking, often exceeding 90% annually, indicate that reductions in employment could be managed through natural attrition rather than forced layoffs.

In a model where adoption of autonomous trucking moves faster, the USDOT study predicts a potential loss of up to 11,000 long-haul trucking jobs over five years, representing about 1.7% of the workforce. It's important to note that while automation may reduce certain driving jobs, it could also create new roles in vehicle maintenance, remote operations, and logistics management. The transition's net effect on employment will depend on various factors, including policy decisions, retraining programs, and the pace of technological adoption.
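To put the faster-adoption figures in perspective, the short sketch below works through the arithmetic implied by the two numbers quoted above (11,000 jobs, about 1.7% of the workforce, over five years). The implied workforce size and the per-year averages are derived values, not figures from the study, and the even spread across the five years is an assumption made purely for illustration.

```python
# Figures quoted in the article for the faster-adoption scenario.
JOBS_LOST = 11_000        # potential long-haul jobs lost
SHARE_OF_WORKFORCE = 0.017  # about 1.7% of the workforce
YEARS = 5                 # period over which the loss occurs

# Derived value: the workforce size those two figures imply.
implied_workforce = JOBS_LOST / SHARE_OF_WORKFORCE

# Assumption: losses are spread evenly across the five years.
jobs_per_year = JOBS_LOST / YEARS
share_per_year = SHARE_OF_WORKFORCE / YEARS

print(f"Implied long-haul workforce: about {implied_workforce:,.0f}")
print(f"Average jobs lost per year:  {jobs_per_year:,.0f}")
print(f"Average share per year:      {share_per_year:.2%}")
```

Under these assumptions the average annual reduction is a fraction of a percent of the workforce, which is the sense in which the study's attrition argument can be read.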

So, what’s the TechArena take? Torc's collaboration with Flex and NVIDIA represents a significant advancement in autonomous trucking technology. By integrating high-performance computing with scalable manufacturing, this partnership is poised to deliver safer, more efficient, and cost-effective freight solutions. As the industry moves toward a future of autonomous logistics, such innovations will be critical in addressing the growing demands of global supply chains.

An Army of Agentic EEs Unleashed by Synopsys

Last month, I had the pleasure of attending Synopsys’ first Executive Forum in Santa Clara. For those who don’t follow Synopsys, the firm has a multi-decade history of providing foundational technology to the semiconductor industry, and they have not missed a beat under CEO Sassine Ghazi’s leadership. And while I knew that the leadership team would be sharing the latest innovations that would help fuel the next generation of processors, what Synopsys put together was stunning in terms of its long-term implications to semiconductor design.

Taking a step back, a processor has historically maintained a five-to-seven-year development cycle from vision to production, and over time market pressures have shrunk this development cycle significantly. According to Jensen Huang, who was running his own conference across town as the Synopsys event unfolded, we have now entered a realm of hyper-Moore’s law advancements with silicon design cycles within a one-year window. Unheard of, aggressive, and seemingly impossible with yesterday’s tools.

The first advancement to address this challenge has been the introduction of chiplet architectures, in which different IP blocks, sometimes manufactured with different process technologies, are connected together to form complex and scalable systems. This allows for reuse of chiplets across a portfolio of products or even across a portfolio of vendors for design elements that are not critical to differentiated solution delivery. Synopsys itself operates in this space, delivering IP and multi-die integration solutions to the semiconductor ecosystem. We’ve seen the industry move in this direction with all major players favoring chiplet architectures, and while we’re still waiting for a vibrant chiplet ecosystem to emerge for open, interoperable designs, we are seeing an uptick in adoption of chiplet designs across the industry delivered to bespoke customers.

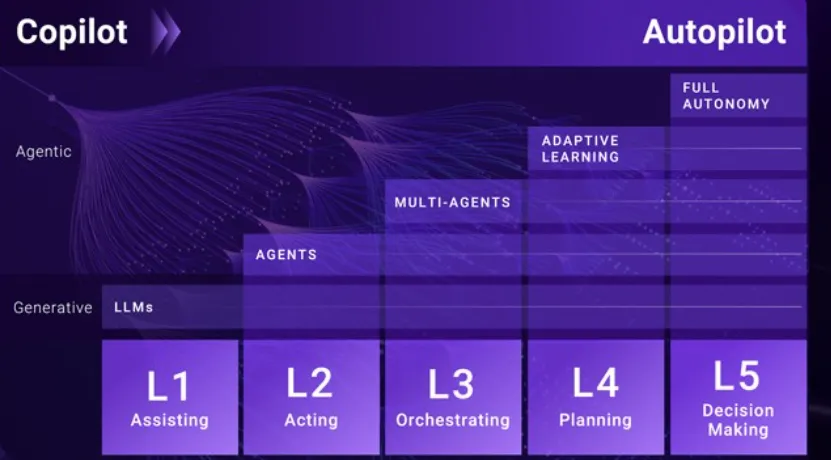

But what Sassine and his team envisioned next rips the doors off of semiconductor design to the point of considering that this is a next era of advancement: agentic AI-controlled design. Imagine deploying millions of AI agents that a semiconductor designer can utilize to craft semiconductors. This starts with something Synopsys has already delivered – an AI Copilot that assists a designer in the process of his or her design, trained specifically on an organization’s technology and sitting alongside other Synopsys tools and IP. This is a great advancement and a good use of LLM training for a pressured workforce. But…it’s only an amuse-bouche of what is to come.

We are on the precipice of what Sassine called level 2 implementation of a journey taking us from copilot to auto-pilot design. Synopsys will be delivering agentic capability in their portfolio later this year to offload design of specific functions to agents, with human-controlled integration of this design into larger workflows. Synopsys sees a reality where a portion of design is delivered through chiplet and IP blocks, a portion is delivered by agents, and actual engineers focus on the most challenging elements of execution, speeding design significantly.

This will be followed by level 3 delivery, where multi-agent models will include specialists orchestrated together for more complex work, followed by the integration of adaptive learning improving designs as they progress, and finally level 5 full autonomy delivery with agents empowered with decision making. Yes…in level 5, agents in theory can design and validate microprocessors autonomously.

This is stunning. It’s stunning for the acceleration of compute, given that we can also advance process technology and continue delivery of performance improvements, but it’s stunning for another reason. Microprocessors are the most complex inventions on the planet, and Synopsys’ vision includes processors of a trillion transistors, reaching new levels of design complexity. If AI agents can deliver problem solving this complex, we need to ask what they will be incapable of doing.

While we do not have an acute time horizon for this advancement, one thing is clear. Just like the accelerated microprocessor design windows, the speed at which generative AI and agentic computing have been advancing makes this reality much closer than we’d historically predict. And while this story feels a lot like the backstory of Skynet, in the near term there is no escaping that AI agent assist will help Jensen and his team at NVIDIA, and the entire silicon ecosystem, accelerate design to meet the insatiable customer demand represented by AI. AI…creating AI.

MicroCloud + AMD EPYC™ 4004 = High-Density Win for CSPs

Supermicro’s new MicroCloud platform, powered by AMD EPYC™ 4004 CPUs, delivers higher core density, network flexibility, and TCO advantages for cloud service providers at scale.
