From Operators to Innovators: Inside Midas Immersion Cooling

The data center industry stands at an inflection point. As AI-enabled workloads drive compute densities beyond 100 kilowatts per rack, traditional air cooling approaches are reaching their limits. My recent conversation with Solidigm’s Jeniece Wnorowski and Scott Sickmiller, CEO of Midas, revealed how immersion cooling technology has evolved into a practical solution for today’s most demanding workloads.

What makes Midas’s perspective particularly valuable is their origin story. Unlike companies that developed immersion cooling as a product, Midas became a provider because they were first a user facing real cooling challenges in their Austin data center.

From Necessity to Innovation

Midas began as a data center operation in 2011, quickly becoming the go-to provider for hard-to-cool IT infrastructure. The growth trajectory forced them to look beyond traditional air cooling solutions. Between 2011 and 2012, the team iterated through multiple immersion cooling designs, ultimately developing and patenting their own solution. In 2016, they made the decision to exit the data center business and focus exclusively on providing immersion cooling infrastructure to the industry. “And the rest, as they say, is history of 4,000 tanks,” Scott said.

This user-first development approach shapes everything about Midas’s technology today. As Scott explained, having to maintain the systems themselves drove design decisions toward user-friendliness and operational efficiency that competitors who never operated the technology might overlook.

The Physics of Immersion

At its core, immersion cooling leverages a simple advantage: liquids dissipate heat approximately 1,200 times more effectively than air. By submerging IT equipment, data centers immediately gain this thermal efficiency advantage. However, as Scott emphasized, doing immersion well requires more than just dunking servers in liquid.

Early on, the team learned that success depended on computational fluid dynamics (CFD). CFD modeling is critical to ensuring that the dielectric liquid reaches all heat sources, engages with them, and moves away from them with a uniform flow, no matter the rack’s form factor. While adapting to diverse hardware designs is a challenge, Scott noted, “At the end of the day, it’s only physics. So the physics can support the workload. We just have to fit the form factor into the physics box.”

The Thermal Recovery Advantage

Beyond raw cooling efficiency, immersion cooling enables thermal energy recovery in ways air-cooled systems cannot match. The dielectric fluid not only captures heat more effectively than air, it also retains that heat longer, enabling efficient transfer to other systems.

Scott shared an example from a recent meeting with a German district heating facility. In district heating, water or another fluid is centrally heated and then pumped out into a distribution network, eventually reaching buildings where it regulates temperature through boilers. When a data center can provide water at 50° Celsius (122° Fahrenheit), this represents a significant opportunity to reuse energy already consumed for computing. The economics are compelling. “We’ve already paid for the energy once,” Scott said. “So at that point, why not use it again? And that’s where thermal recovery is really useful.”

Practical Deployment Considerations

Immersion cooling shows strong return on investment above 40 kilowatts per rack, and the technology becomes necessary at 100 kilowatts and beyond. As advancement of graphics processing units (GPUs) drives power densities higher, direct liquid cooling alone cannot solve the challenge. Peripheral components still generate heat requiring air cooling, straining facility infrastructure as power becomes the ultimate constraint.

The barrier that Midas faced for 15 years—data center operators’ resistance to liquid near equipment—has been addressed as organizations adopt rear-door heat exchangers and direct-to-chip cooling. “Many of the data centers, especially the ones that are focusing on machine learning and AI, are building water loops in the facility,” Scott said. “So that prerequisite is done. Then we need to start looking at the IT.”

The IT requirements are “quite a bit different.” One of the biggest changes? Fans are no longer needed, and the immediate benefit is significant. A one-kilowatt server that dedicates 150 to 200 watts to fans can complete the same compute at roughly 800 to 850 watts when immersed.
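The fan arithmetic above can be checked with a short sketch. It assumes that removing the fans subtracts their draw directly from the server's total. The function name and the flat subtraction are illustrative assumptions, not a Midas model.

```python
def immersed_power_watts(server_watts: float, fan_watts: float) -> float:
    """Power draw once fans are removed, assuming fan power is simply subtracted."""
    if not 0 <= fan_watts <= server_watts:
        raise ValueError("fan_watts must be between 0 and server_watts")
    return server_watts - fan_watts


# Figures from the text: a 1 kW server spending 150 to 200 W on fans.
SERVER_WATTS = 1000
low_fan_case = immersed_power_watts(SERVER_WATTS, 150)
high_fan_case = immersed_power_watts(SERVER_WATTS, 200)

# Share of the original draw that fans accounted for in each case.
savings_pct = [
    (SERVER_WATTS - watts) / SERVER_WATTS * 100
    for watts in (low_fan_case, high_fan_case)
]

print(f"Immersed draw: {high_fan_case:.0f} to {low_fan_case:.0f} W")
print(f"Savings: {savings_pct[0]:.0f}% to {savings_pct[1]:.0f}%")
```

Under these assumptions the immersed server lands between 800 and 850 watts, a 15 to 20 percent reduction for the same compute.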

The Midas Difference

What distinguishes Midas in an increasingly competitive market comes back to their operational heritage. Scott highlighted their truly concurrent maintainability and fault-tolerant design, which includes redundant cooling distribution units (CDUs) as standard. The system makes it easy to hot-swap failed CDUs: with just an hour of training, the company’s global sales manager learned to hot-swap a CDU in seven minutes. The operational simplicity extends to deployment as well. Scott described installing a system at a university in the United Kingdom in 40 minutes. “That’s an advantage of a Midas,” Scott said. “We had to maintain it ourselves, so we built it that way.”

TechArena Take

Midas’s journey from data center operator to immersion cooling provider demonstrates how real operational experience drives practical innovation. Their emphasis on user-friendly design addresses the large and small daily challenges that data center operators face. As compute densities continue climbing and power constraints tighten, immersion cooling is transitioning from alternative technology to essential infrastructure. Companies like Midas, with proven deployment experience and field-tested designs, are well-positioned to lead this transformation.

Learn more about Midas immersion cooling solutions at www.midasimmersion.com.

AI, the Silent Partner Mitigating Clinician Burnout

Burnout isn’t just a trendy term; it’s a real crisis. Doctors and nurses are feeling the weight of unprecedented stress, fatigue, and emotional exhaustion. With overwhelming administrative tasks, endless paperwork, and the constant pressure to provide top-notch care in a shorter time frame, the environment has become unsustainable. The outcome? Burnout rates soaring above 50% in certain specialties, which is leading to workforce shortages and putting patient care at risk.

Enter Artificial Intelligence (AI), a tool that can act as a supportive partner working quietly in the background to help restore balance.

The Burnout Epidemic

Healthcare professionals often find themselves spending almost half of their day on administrative tasks instead of focusing on patient care. While Electronic Health Records (EHRs) are crucial, they can also be a major source of frustration due to their complexity and the time they require. The issue of burnout doesn’t just impact the providers; it sends shockwaves throughout the entire system, affecting patient satisfaction, safety, and even the financial health of the organization.

AI as a Workflow Optimizer

One of the most impactful ways AI is changing the game right now is by taking over those tedious tasks that can really drain provider time. Smart systems are stepping in to handle things like scheduling appointments, verifying insurance, and even managing prior authorizations—jobs that used to consume hours of clinicians’ time. Then there are the more sophisticated tools, like ambient clinical intelligence, which can listen in during patient visits and automatically generate structured notes. This means healthcare providers can finally break free from the never-ending cycle of typing.

Imagine this: a provider finishes a consultation and the documentation is already taken care of—accurate, compliant, and ready for a quick glance. It might sound like something out of a sci-fi flick, but it’s happening right now.

Clinical Decision Support: Reducing Cognitive Load

Burnout isn’t just about the endless paperwork; it’s also tied to decision fatigue. Clinicians are constantly juggling a mountain of data, from lab results to imaging studies. That’s where AI-powered clinical decision support tools come in. They sift through all this information in real time, bringing forward actionable insights and highlighting potential risks. Instead of feeling overwhelmed by data, healthcare providers receive clear, evidence-based recommendations.

This doesn’t take the place of clinical judgment; it enhances it. By lightening the cognitive load, AI gives clinicians the freedom to concentrate on what truly matters: connecting with patients and showing empathy.

Real-World Examples

- Ambient Documentation: Tools like Nuance’s Dragon Ambient eXperience (DAX) are already transforming workflows by capturing conversations and generating notes automatically.

- Predictive Scheduling: AI models forecast patient flow and staffing needs, helping hospitals allocate resources efficiently and prevent overload.

- Smart Triage: AI-driven triage systems prioritize cases based on urgency, ensuring critical patients receive timely care.

The Human-AI Partnership

AI can’t take the place of empathy, intuition, or that special human touch. What it can do is create an environment where those qualities can flourish. By handling administrative tasks and simplifying decision-making, AI allows clinicians to reclaim their most valuable asset: time.

Future Outlook: AI as a Resilience Tool

As healthcare evolves, AI will become a cornerstone of provider well-being strategies. Beyond automation, expect predictive burnout analytics, systems that monitor workload patterns and flag early signs of stress, enabling proactive interventions.

By reducing administrative friction and cognitive overload, AI empowers clinicians to reconnect with their purpose: caring for patients. The future of healthcare isn’t man versus machine; it’s man and machine, working together to restore balance and resilience.

Valvoline Global Operations Engineers Data Center Liquid Cooling

For more than 150 years, Valvoline has been synonymous with high-performance motor oil and racing heritage. Now, the company is applying its expertise to a very different kind of performance challenge: keeping AI data centers cool as they transition from the megawatt era into the gigawatt era.

In a recent conversation with Michael Morrison, director of new ventures at Valvoline Global Operations, and Solidigm’s Jeniece Wnorowski, I discussed how data center cooling represents a natural evolution for a company built on managing heat and performance. As Michael explained, Valvoline has actually maintained a data center presence for years, providing oils for backup power generation systems. The move into cooling solutions represents a deeper engagement with an industry facing unprecedented thermal challenges.

Two Liquid Cooling Solutions to Meet the Density Challenge

While rising temperatures grab headlines, Michael emphasized that density is the real challenge facing modern data centers. AI-enhanced workloads require packing more chips into the same physical space, creating concentrated heat loads that traditional air cooling cannot effectively manage. Liquid cooling enables increased density of chips per server and of servers per data center, fundamentally changing the economics of AI infrastructure deployment.

Two approaches to liquid cooling have arisen in response to this challenge: direct-to-chip cooling and immersion cooling. Direct-to-chip cooling runs coolant through lines and cold plates to cool individual processors, and it has already moved beyond early adoption into rapid growth. Major manufacturers now support this approach, and deployments are underway.

Immersion cooling, however, remains in earlier stages. In immersion cooling applications, entire servers are submerged in tanks filled with dielectric fluid. The approach allows heat to be captured from all components simultaneously. It also represents a large potential change for hyperscalers, which explains why it is still largely in the proof-of-concept phase.

“They’re not used to having large open tanks sitting in their data centers,” Michael said. “So, they’re not only testing performance metrics, but understanding, ‘what is my maintenance on a server like?’ All of those things have to have operational procedure set: all the nuances of running it in a normal setting and an emergency environment.”

Precision Testing and Partnership: Engineering Compatibility at Every Level

The key to immersion cooling is dielectric oils. Dielectric materials, like the oils Valvoline produces, do not conduct electric current. And finding a fluid with ideal properties to enable high performance is where Valvoline Global shines.

“We’re used to testing properties in our fluids that would determine, does it conduct electricity? Does it transfer heat?” he said.

While Valvoline Global’s fluid testing capabilities form the foundation, Michael emphasized that deploying these solutions successfully requires a more comprehensive approach. When servers are immersed in dielectric oil, compatibility becomes critical across thousands of individual components. Valvoline Global works closely with data center operators to ensure their fluids are compatible with specific hardware configurations, tank materials, and operational requirements. This collaborative approach, which the company has refined over more than 150 years of customer relationships, distinguishes their market strategy from simple product provision.

Sustainability Through Operational Efficiency

Beyond performance, liquid cooling addresses sustainability concerns that are becoming critical for data center operators. By reducing or eliminating large HVAC systems required for air cooling, facilities can significantly decrease power consumption and operational expenses. Water usage can also be reduced depending on system configuration. Michael noted that liquid cooling can create a scenario where improved cost structure and reduced environmental impact work together rather than act as competing priorities.

The TechArena Take

Valvoline Global’s entry into data center cooling represents more than a company diversifying its product portfolio. It reflects how foundational technologies from established industries are being reimagined to solve the infrastructure challenges of AI deployment. As data centers grapple with the thermal and density challenges of AI-enabled workloads, Valvoline Global’s combination of fluid science expertise, collaborative approach, and long history of managing high-performance applications positions them as a meaningful player in this infrastructure evolution. For organizations planning liquid cooling deployments, the lesson is clear: success depends not just on the technology itself but on the partnerships and compatibility testing that ensure reliable, long-term operation.

Learn more about Valvoline Global’s data center cooling solutions at their website, valvoline.com, where they provide detailed technical resources on liquid cooling technologies. Connect with Michael Morrison on LinkedIn to continue the conversation about thermal management innovation.

Equinix on Architecting the AI-Ready Data Center

Inside Equinix and Solidigm’s playbook for turning data centers into adaptive, AI-ready platforms that balance sovereignty, performance, efficiency, and sustainability across hybrid multicloud.

6 Plays to Close the AI-Era Connectivity Gap

The foundation of the digital economy is buckling under the weight of its own success. Artificial intelligence (AI) inference, real-time autonomous systems, and the explosion of edge computing are driving network demand far beyond what today’s infrastructure was designed to support.

This pressure is creating a pervasive state of digital asymmetry. The problem is no longer a simple binary of “connected” versus “unconnected.” Instead, it shows up as a spectrum of gaps in coverage, consistency, and resilience that threaten the promise of real-time, AI-driven services.

This playbook lays out the key principles and deployment patterns needed to close that gap with a converged, “all of the above” architecture that uses fiber, wireless, satellite, and free space optics (FSO) together instead of pitting them against each other.

Play 1: Redefine the Problem

Digital asymmetry describes the widening mismatch between where demand for high-quality connectivity is exploding and where networks can realistically deliver it. It manifests in three distinct, overlapping gaps.

- The Unserved Gap: Roughly 2.6 billion people still lack reliable connectivity, often living in areas where fiber is fiscally impossible to deploy. In these regions, the economics of trenching long distances for a small number of subscribers simply don’t pencil out.

- The Under-Served Gap: Millions more experience wildly inconsistent broadband. Service quality can vary street by street, constrained by aging copper, congested last-mile technologies, or a legacy plant that was never designed for high-throughput, low-latency AI-era workloads.

- The Reliability Gap: Even in hyper-connected cities, capacity shortfalls and service outages are forcing enterprises and autonomous systems to rethink operations. Simple fiber cuts, severe weather, or temporary environmental interference can break the “real-time” promise that AI applications depend on.

The first shift in mindset is to stop thinking in terms of “connected versus unconnected” and start thinking about where and how these three gaps show up in your footprint.

Play 2: Admit Fiber Is Essential, but Not Sufficient

Fiber optic cable is the undisputed gold standard for modern broadband: high capacity (100 Gbps+), ultra-low latency (< 5 ms), and decades-long reliability. When it can be deployed economically, it is often the first and best choice.

But physics, time, and money place hard limits on what fiber can solve on its own.

Deployment timeline: Fiber projects are fundamentally linear and slow. Typical builds can take 12–18 months from planning to activation, and the bottlenecks are rarely technical. Permits, street closures for trenching, utility coordination, environmental reviews, and complex right-of-way negotiations can stall a single mile of deployment for half a year or more. Fiber scales linearly in a world where demand is growing exponentially.

Unfavorable economics: The cost of construction alone makes fiber infeasible in many regions. Urban builds often cost $30,000–$50,000 per mile. Rural deployments, where trenching crosses longer distances and serves fewer customers, can exceed $100,000 per mile. Extending connectivity into sparsely populated regions demands heavy capital investment, and the business case rarely works without substantial government subsidies.

Geography: Fiber requires a continuous physical path. Mountains, rivers, highways, rail crossings, and protected lands are not just obstacles; they are hard chokepoints that add months and millions to construction budgets. In many parts of sub-Saharan Africa, Southeast Asia, and rural America, avoiding these barriers is simply not practical.

Global funding doesn’t erase these constraints. The World Bank estimates that closing the global connectivity gap with fiber alone would cost more than $1 trillion and take decades. Policymakers have started to acknowledge this reality. The U.S. government’s $42 billion Broadband Equity, Access, and Deployment (BEAD) program, historically fiber-focused, is now open to high-performance wireless and satellite alternatives.

Fiber is therefore essential, but not sufficient. Even with aggressive funding, it cannot close every capacity and reliability gap on its own.

Play 3: Use Each Medium Where It Wins

If fiber is the backbone, the next step is to treat every other transport medium as a specialist, not a generalist. The goal is to use each technology where its physical and economic profile is strongest.

Fiber Strengths

- Unmatched capacity per strand (100+ Gbps)

- Ultra-low latency

- Immunity to weather

- Multi-decade lifespan

Fiber Weaknesses

- Slow, permit-bound deployment

- High cost per mile

- Vulnerable to geographic and right-of-way constraints

Fiber is best used for dense urban cores, data center interconnects, and backbone routes where capacity and long-term value justify the investment.

Radio Frequency Wireless Strengths

- Fixed wireless access (FWA) is booming, delivering rapid last-mile access to millions of homes and businesses.

- RF systems are mobile-friendly, deploy in weeks to a few months, and benefit from a mature ecosystem that includes high-capacity backhaul (for example, E-band or microwave up to 10 Gbps).

RF Wireless Weaknesses

- RF spectrum is finite, costly, and congested in dense markets.

- Capacity per sector is limited, and densification through small cells (often $50K+ per site) requires robust backhaul.

RF wireless is best used for suburban and rural access, mobile coverage, and as a flexible complement to fiber for last-mile connectivity.

Free-Space Optics (FSO) Strengths

- FSO delivers fiber-class capacity (10–100 Gbps) and low latency (< 5 ms) without trenching or licensed spectrum.

- It can be deployed in days, at a fraction of the cost of equivalent fiber, and modern systems use forward error correction (FEC) to smooth minor interruptions from birds, dust, or other transient obstructions.

FSO Weaknesses

- Requires line-of-sight; buildings, trees, and terrain can block signals.

- Performance degrades in dense fog or heavy rain, which is why FSO is often paired with a backup RF link for hybrid availability.

FSO is best used for urban backhaul where trenching is impossible or prohibitively expensive, short-span “fiber gap” bridges, and enterprise sites with clear line-of-sight and a secondary path for redundancy.

Here’s a real-world example: In Lagos, Nigeria, operator MainOne used FSO to connect 20 enterprise buildings in three months—a project that would have taken roughly 18 months and cost about five times more using fiber alone. The FSO links deliver 10 Gbps with 99.9% uptime, and the approach is now being extended to residential areas.

Satellite Low Earth Orbit (LEO) Strengths

- LEO constellations can deliver immediate coverage almost anywhere on Earth, without terrestrial build-out.

- They are ideal for remote regions, maritime and aviation connectivity, and rapid deployment in disaster zones.

Satellite (LEO) Weaknesses

- Higher latency than terrestrial fiber (typically 20–40 ms), limited per-user capacity (often 50–200 Mbps), and higher cost per Mbps (for example, $100–$200 per month for residential plans).

- As subscriber density increases, congestion risk grows.

Satellite LEO is best used for remote and rural regions with no viable terrestrial options, backup connectivity for critical infrastructure, and mobile platforms such as ships, planes, and vehicles.

The point is not to crown a new winner. It is to match each medium to the situations where it delivers the best combined outcome on speed, cost, and reliability.
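The matching exercise described in this play can be sketched as a small lookup. The capacity and latency figures below are the ones quoted above (fiber's 100 Gbps is a floor, RF's 10 Gbps is the backhaul figure, and LEO's 0.2 Gbps is the per-user figure). The `Medium` class, the `candidates` function, and the two boolean flags are hypothetical simplifications for illustration, not a planning tool.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Medium:
    name: str
    gbps: float                        # capacity figure quoted in the text
    worst_latency_ms: Optional[float]  # None where the text gives no figure
    needs_trenching: bool              # simplification, assumed for illustration
    needs_line_of_sight: bool          # simplification, assumed for illustration


MEDIA = [
    Medium("fiber", 100.0, 5.0, True, False),
    Medium("rf_wireless", 10.0, None, False, False),
    Medium("fso", 100.0, 5.0, False, True),
    Medium("leo_satellite", 0.2, 40.0, False, False),
]


def candidates(min_gbps: float, max_latency_ms: float,
               can_trench: bool, has_line_of_sight: bool) -> List[str]:
    """Return the media that pass every stated site constraint."""
    result = []
    for m in MEDIA:
        if m.gbps < min_gbps:
            continue
        # An unknown latency is not used to exclude a medium.
        if m.worst_latency_ms is not None and m.worst_latency_ms > max_latency_ms:
            continue
        if m.needs_trenching and not can_trench:
            continue
        if m.needs_line_of_sight and not has_line_of_sight:
            continue
        result.append(m.name)
    return result


# An urban backhaul site: 10 Gbps needed, no trenching possible, clear line of sight.
print(candidates(10, 10, can_trench=False, has_line_of_sight=True))
# A remote site: modest capacity, no trenching, no line of sight.
print(candidates(0.1, 50, can_trench=False, has_line_of_sight=False))
```

A real selection would also weigh cost, weather exposure, and spectrum availability, which this sketch leaves out.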

Play 4: Design Hybrid

The real gains come when you design networks as hybrid from the start, instead of treating non-fiber technologies as temporary workarounds. Optimal Hybrid Placement means planning fiber, RF, FSO, and satellite together, assigning each to the roles where they are physically and economically strongest.

Consider an illustrative scenario from rural Montana. A regional internet service provider (ISP) needed to connect 5,000 homes across 200 square miles of mountainous terrain. A fiber-only design was estimated at $80 million and a four-year timeline.

Instead, the ISP built a hybrid network:

- Satellite (for example, Starlink) provided immediate coverage for the most remote 20 percent of homes—about 1,000 subscribers.

- Fixed wireless (Citizens Broadband Radio Service) served suburban clusters of roughly 3,000 subscribers, delivering 100–300 Mbps service.

- FSO links provided multi-gigabit backhaul between wireless tower sites and existing fiber points of presence, avoiding more than 40 miles of difficult trenching.

- Fiber connected the overall network to the regional backbone at two strategic points.

The results were decisive: the network could launch in about nine months at a cost of $32 million. That is roughly 60 percent less than the fiber-only design and about five times faster to deploy. Average subscriber speeds were approximately 200 Mbps.
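The comparison in this scenario can be reproduced from the quoted figures. The sketch below takes the four-year fiber timeline as 48 months and divides cost evenly across the 5,000 homes. The helper names and the per-home breakdown are illustrative assumptions, not figures from the ISP.

```python
def percent_saved(baseline: float, alternative: float) -> float:
    """Cost reduction of the alternative relative to the baseline, in percent."""
    return (baseline - alternative) / baseline * 100


def speedup(baseline_months: float, alternative_months: float) -> float:
    """How many times faster the alternative deploys than the baseline."""
    return baseline_months / alternative_months


# Figures from the scenario above.
HOMES = 5_000
FIBER_COST_USD = 80_000_000
HYBRID_COST_USD = 32_000_000
FIBER_MONTHS = 4 * 12   # four-year fiber-only timeline
HYBRID_MONTHS = 9       # hybrid launch in about nine months

print(f"Cost saved: {percent_saved(FIBER_COST_USD, HYBRID_COST_USD):.0f}%")
print(f"Deployment speedup: {speedup(FIBER_MONTHS, HYBRID_MONTHS):.1f}x")
print(f"Cost per home, fiber-only: ${FIBER_COST_USD / HOMES:,.0f}")
print(f"Cost per home, hybrid: ${HYBRID_COST_USD / HOMES:,.0f}")
```

On these numbers the saving is 60 percent and the timeline ratio comes out a little above five.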

Hybrid in this context is not a compromise. It is the only approach that can simultaneously hit the necessary targets for speed, cost, and coverage across challenging geographies.

Play 5: Tame the Operational Offset

There is, however, a tradeoff. Hybrid architectures lower upfront capital costs but drive up operational complexity. That operational offset is the real barrier to wide-scale adoption.

Running four distinct platforms—fiber, RF, FSO, and satellite—means managing different vendors, different skill sets, and more complex provisioning and monitoring. Orchestrating seamless handoffs between dissimilar technologies, while maintaining session continuity and quality of experience, adds real operational risk.

Even where the technology and economics are well understood, three systemic factors are slowing hybrid adoption:

- Operator inertia: Many ISPs and telcos are built around fiber-first strategies. Procurement processes, vendor relationships, and engineering organizations have been optimized for fiber deployments for years. Shifting to hybrid requires retraining teams, rethinking network design, and in some cases revisiting business models.

- Standards and interoperability: Hybrid networks need to support continuous sessions that may move from fiber to FSO and then fail over to RF or satellite without breaking the application. Industry standards and orchestration frameworks for multi-technology handoff are still maturing, and operators are understandably cautious about stitching together complex, multi-vendor stacks.

- Financing and policy models: Government subsidies—such as BEAD in the U.S.—have historically been written in technology-specific terms. Grants may favor “deploy fiber to this area” rather than “deliver 100 Mbps service to these households by any reliable means.” Hybrid approaches do not always fit cleanly into these rules, which can discourage operators from pursuing them even when they make technical and economic sense.

At this point, the bottleneck is less about whether hybrid can work, and more about whether operators, vendors, and regulators can align operational models and policy frameworks to make it manageable at scale.

Play 6: Build for Dissimilar Redundancy and AI-Era Resilience

The final play is to design not just for coverage and capacity, but for AI-era resilience. As AI, autonomous vehicles, and distributed industrial internet of things (IoT) systems demand near-perfect uptime, traditional notions of redundancy are no longer enough.

A network built on redundant fiber may look robust on paper, but if both routes follow the same right-of-way, a single flood, wildfire, or backhoe cut can take them down together. Like-for-like redundancy cannot protect against shared failure modes.

True resilience requires dissimilar redundancy. That means pairing different transmission mediums so the failure mode of one is covered by the strength of another:

- An RF backhaul link that is susceptible to rain fade can be paired with a backup FSO path that is less affected by that specific condition, or vice versa.

- A terrestrial path can be backed by a high-reliability satellite failover that keeps critical services online even when ground infrastructure is disrupted.
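The value of pairing dissimilar paths can be sketched with the standard parallel-availability formula, which assumes the paths fail independently. That assumption is exactly what like-for-like redundancy in a shared right-of-way violates. The 99.9 percent figure is the uptime quoted for the Lagos FSO links; the 99 percent backup link is a hypothetical value chosen for illustration.

```python
from math import prod
from typing import Iterable

HOURS_PER_YEAR = 24 * 365


def parallel_availability(availabilities: Iterable[float]) -> float:
    """Availability when any one path keeps the service up.

    Assumes failures are independent, which only holds if the paths
    do not share a failure mode (same trench, same weather sensitivity).
    """
    return 1 - prod(1 - a for a in availabilities)


def annual_downtime_hours(availability: float) -> float:
    """Expected hours of downtime per year at a given availability."""
    return (1 - availability) * HOURS_PER_YEAR


primary = 0.999  # uptime quoted for the Lagos FSO links
backup = 0.99    # hypothetical dissimilar backup path

combined = parallel_availability([primary, backup])

print(f"Primary alone: {annual_downtime_hours(primary):.2f} h/year down")
print(f"With dissimilar backup: {annual_downtime_hours(combined) * 60:.1f} min/year down")
```

If both paths can be taken out by the same event, the independence assumption breaks and the combined figure collapses toward that of a single path.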

This multi-layered defense elevates connectivity from a utility to an enabling platform for the future. It is the difference between “usually on” and “designed to stay on” when the environment, demand profile, or threat landscape shifts suddenly.

The Leadership Challenge

The technology to close the connectivity gap already exists, and the economics can work. The harder part is the mindset shift. Vendors must see themselves as collaborators first, working together to grow the overall market, and competitors second. Regulators must evolve funding models from technology mandates to outcome-based targets. Operators must be willing to move beyond fiber-first orthodoxy and design converged networks from day one.

The question is no longer whether converged, hybrid networks will dominate. The question is which organizations will lead this transformation, building the resilient, five-nines infrastructure that the AI future will depend on.

OCP 2025: Arm’s Chiplet Play Aims To Democratize AI Compute

During the recent OCP Summit in San Jose, Jeniece Wnorowski and I sat down with Eddie Ramirez, vice president of marketing at Arm, to unpack how the AI infrastructure ecosystem is evolving—from storage that computes to chiplets that finally speak a common language—and why that matters for anyone trying to stand up AI capacity without a hyperscaler’s deep pockets.

Two years ago at OCP Global, Arm introduced Arm Total Design—an ecosystem dedicated to making custom silicon development more accessible and collaborative. Fast-forward to this year’s conference, and the program has tripled in participants, with partners showing real products both in Arm’s booth and in the OCP Marketplace. That traction sets the backdrop for Arm’s bigger news: an elevated role on OCP’s Board of Directors and the contribution of its Foundational Chiplet System Architecture (FCSA) specification to the community.

Why should operators, builders, and CTOs care? Because the cost and complexity of building AI-tuned silicon is still brutal. Depending on the packaging approach—think advanced 3D stacks—Eddie put the total bill near a billion dollars. That number alone has kept bespoke designs out of reach for all but a few. The chiplet vision changes the calculus: assemble best-of-breed dies from different vendors rather than funding a monolith. But the promise only holds if those chiplets interoperate cleanly across more than just a physical link.

That’s the gap FCSA endeavors to fill. It goes beyond lane counts and bump maps to define how chiplets discover each other, boot together, secure the system, and manage the data flows between dies. If it works as intended inside OCP, we are an inch closer to a real chiplet marketplace—mix-and-match components with predictable integration, not months of bespoke glue logic.

Ecosystem is the keyword here, and not just for compute. Eddie spoke to collaborations across the platform, including within storage, as a case in point. Storage is stepping into the AI critical path, not simply holding training corpora but participating in the performance equation. AI at scale turns every subsystem into a performance domain. If data can be prepped, staged, filtered, or lightly processed closer to where it lives, you free up precious GPU cycles and avoid starving accelerators. Expect to see more of that thinking show up across NICs, DPUs, and smart memory tiers.

There’s also a geographic angle that’s difficult to ignore. Several of the newest Arm Total Design partners hail from Korea, Taiwan, and other regions actively cultivating their own semiconductor ecosystems. That matters for resilience and supply, but also for innovation velocity. When the entry ticket to custom silicon comes down, you get more specialized parts serving narrower, high-value slices of AI workloads—think tokenizer offload, retrieval augmentation helpers, or secure inference enclaves woven into the package fabric.

Underneath the product updates is a posture shift: lead with others. The Arm Total Design ecosystem is designed for co-design, not solo heroics, acknowledging that no one player can keep up with AI’s pace alone. OCP, with its bias toward open specs and reference designs that ship, is a natural forcing function. Putting FCSA into that process doesn’t just rack up community points; it pressures the spec to survive real-world scrutiny—power budgets, thermals, board constraints, and the ugly details that tend to eat elegant diagrams for breakfast.

If you’re operating AI clusters today, you’re already feeling the ripple effects. Racks are transitioning from steady-state power draw to spiky, sub-second pulses. Data movement is the enemy. The “box-first” era is fading into a rack- and campus-first design ethic where each layer—power delivery, cooling, storage, fabric, memory, compute—must flex in concert. Chiplets slot into that future because they can accelerate specialization at the silicon layer while OCP standardization tames integration higher up the stack.

What should you watch next? Three signals. First, real FCSA-based silicon or reference platforms that demonstrate multi-vendor die assemblies with clean boot and security flows. Second, storage and memory vendors showing measurable end-to-end gains on AI pipelines when compute nudges closer to data. Third, OCP Marketplace listings that move from reference intent to deployable inventory you can actually procure for pilot workloads.

If the last two years were about proving that chiplets are technically feasible, the next two will test whether they’re operationally adoptable. Specs are necessary; supply chains and service models are decisive. The teams that align those pieces—across vendors, geographies, and disciplines—will dictate how fast AI capacity gets cheaper, denser, and more power-aware.

TechArena Take

The AI build-out is colliding with real-world constraints—power, thermals, and capital. Ecosystems that compress time-to-specialization without exploding integration cost will win. Arm’s OCP board seat plus the FCSA contribution is a smart bet that interoperability is the bottleneck to unlock. If FCSA becomes the lingua franca for chiplets, operators could see a practical path to tailored silicon without a billion-dollar entry fee. Pair that with smarter storage and memory paths, and you start to chip away at the two killers of AI efficiency: idle accelerators and stranded data. The homework now is ruthless validation: put these pieces under AI-class loads, measure tokens per joule, and prove that “lead with others” doesn’t just sound good on stage—it pencils out in the data center.
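To make the tokens-per-joule yardstick concrete, here is a minimal sketch of the arithmetic. The function name and the sample figures are illustrative assumptions, not measurements from Arm, OCP, or any operator.

```python
def tokens_per_joule(tokens_generated: int, avg_power_watts: float, duration_s: float) -> float:
    """Tokens produced per joule of energy (1 joule = 1 watt sustained for 1 second)."""
    if avg_power_watts <= 0 or duration_s <= 0:
        raise ValueError("power and duration must be positive")
    energy_joules = avg_power_watts * duration_s
    return tokens_generated / energy_joules


# Hypothetical run: 1.2 million tokens in 60 seconds at an average draw of 10 kW.
efficiency = tokens_per_joule(1_200_000, 10_000.0, 60.0)
print(f"{efficiency:.1f} tokens per joule")
```

The useful comparison is the same workload on two configurations, with power measured at the same boundary (chip, node, or rack) in both cases.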

Physical AI in Production: DataraAI’s Data-Loop Edge Playbook

The next wave of AI won’t live in a data center—it will weld seams, pick bins, and navigate factories alongside people. Physical AI brings intelligence into machines that perceive, decide, and act at the edge, closing the loop between perception and action.

Real-world factories introduce drift, glare, vibration, dust, and unpredictable human behavior—conditions that most models never see in simulation.

For IT and cloud architects, this is a stack problem with hard requirements: real-time inference under adverse conditions, data pipelines that span OT and IT, and operational discipline that turns pilot demos into consistent, reliable uptime. The gap between ‘works in simulation’ and ‘works every day on the line’ remains the main blocker to scale.

But it’s also a workforce problem. Robots reduce injury risk in hazardous tasks, but displacement effects are real and localized. The question isn’t “robots: yes or no?” but “how do we deploy them responsibly with both operational rigor and a workforce plan?”

Why the Market is Ready

Three curves are converging: cheaper edge compute and sensors, strong perception models, and maturing MLOps for robotics. The International Federation of Robotics reports 542,000 industrial robots installed in 2024—the fourth consecutive year above 500k—with global demand doubling over the past decade. Industrial AI spending is projected to grow from $44 billion in 2024 to $154 billion by 2030.

Standards are accelerating deployment. ROS 2/DDS, OPC Unified Architecture, and OCP’s rack-level guidance are pushing interoperability across sensors, controllers, and training infrastructure. The blockers are shifting from feasibility to integration discipline and change management: the question is no longer ‘Can we automate?’ but ‘Can we maintain reliability when conditions vary beyond 5–10% of training data?’

Industry-grade platforms now stitch together simulation, data pipelines, and robotics foundation models. NVIDIA’s Omniverse plus Isaac tools let teams generate synthetic data, train policies in digital twins, and validate behaviors before touching a live cell—shrinking iteration from months to days. The missing piece is capturing the tribal knowledge of veteran technicians and encoding it into recovery behaviors robots can execute.

The Architecture That’s Working

I spoke with Durgesh Srivastava, CTO of DataraAI, at the recent OCP Global Summit about what separates production systems from pilot theater. His outlook is pragmatic: target full automation for bounded task families, match human quality, and build graceful fallbacks when reality goes off-script.

DataraAI provides a data engine for physical AI—a data-as-a-service platform that transforms factory experience into machine intelligence. It captures how technicians act, how robots fail, and how edge conditions drift, creating the data foundation real-world robotics has always lacked.

The company emphasizes three pillars:

1. Egocentric Multi-Modal Data Capture – Robots learn from the same viewpoint they act in. DataraAI’s robot-mounted and wearable sensors record RGB-D vision, IMU, tactile, and audio data from real operations. This captures the nuanced cues—force patterns, drift, micro-failures—that static cameras miss.

2. AI-Driven Annotation – DataraAI’s engine automatically labels rare and high-impact events—fires, spills, breakdowns, human-robot handoffs—turning chaos into structured data. It consistently captures scenarios that traditional CV pipelines fail to label or detect.

3. Continuous Learning Loop – New anomalies are fed back into the data engine. Each cycle makes models more resilient and accurate in the field. Every exception becomes new training data, creating a self-improving loop tied directly to real operations.

Early industrial pilots using this loop showed a 53% accuracy lift and 67% better edge-case handling—clear evidence that real-world data closes the performance gap.

The winning pattern is consistent: push perception and control onto the robot, treat the cloud as training and update infrastructure, and run a disciplined data loop that captures real-world anomalies. In harsh conditions—glare, occlusion, spark bursts—edge models must keep the task running. Inference runs locally on the robot, keeping factory data on-site, reducing latency, and enabling real-time adaptation to drift and anomalies. The back end aggregates field data and pushes lightweight updates routinely. That loop turns demos into dependable production.

High-fidelity simulation generates diverse synthetic data and lets teams rehearse rare events safely. Foundation models provide generalizable priors; reinforcement learning in sim refines task skills before transfer to the real world. Edge inference runs locally; telemetry goes upstream for labeling and augmentation; new policies return during maintenance windows. Daily updates are feasible when you structure the pipeline—this reduces drift and grows edge-case coverage.
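The loop described above can be sketched in a few lines. This is a toy model under stated assumptions, not DataraAI's implementation: the class, the confidence threshold, and the version-bump "retraining" step are all hypothetical stand-ins.

```python
from dataclasses import dataclass, field


@dataclass
class EdgeNode:
    """Hypothetical robot-side runtime: infers locally, queues uncertain cases."""
    policy_version: int = 1
    confidence_floor: float = 0.8  # assumed threshold for flagging an anomaly
    telemetry: list = field(default_factory=list)

    def infer(self, frame_id: str, confidence: float) -> str:
        # Inference stays on the robot; only flagged frames are queued for upload.
        if confidence < self.confidence_floor:
            self.telemetry.append(frame_id)
            return "fallback"  # graceful fallback when the model is unsure
        return "act"

    def apply_update(self, new_version: int, in_maintenance_window: bool) -> bool:
        # New policies are applied only during a maintenance window.
        if in_maintenance_window and new_version > self.policy_version:
            self.policy_version = new_version
            return True
        return False


def backend_cycle(node: EdgeNode) -> int:
    """Hypothetical back end: drain telemetry and return the next policy version."""
    flagged = list(node.telemetry)
    node.telemetry.clear()
    # Stand-in for labeling, augmentation, and retraining on the flagged frames.
    return node.policy_version + 1 if flagged else node.policy_version


node = EdgeNode()
decisions = [node.infer("f1", 0.95), node.infer("f2", 0.42), node.infer("f3", 0.91)]
next_version = backend_cycle(node)
updated = node.apply_update(next_version, in_maintenance_window=True)
print(decisions, node.policy_version, updated)
```

The design choice worth noting is that the robot never blocks on the cloud: it acts or falls back immediately, and the upstream work happens asynchronously.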

The Workforce Reality

Case studies show robots cut musculoskeletal risk and reduce exposure to hazardous tasks like forging and welding. Collaborative-robot safety standards (ISO 10218, ISO/TS 15066) and OSHA guidance formalize safe human-robot interaction.

But displacement effects are real. MIT research found that each additional industrial robot per thousand workers reduced employment and wages in affected commuting zones—a meaningful impact not fully offset by productivity gains. The IMF estimates about 40 percent of jobs worldwide are exposed to AI impact; roughly half may see productivity augmentation, while the rest face reduced labor demand without intervention.

The macro takeaway: adoption will rise, safety can improve, and some roles will shift. For architects presenting to leadership or works councils, the deployment plan must address both the safety gains and the displacement risks.

TechArena Take

Physical AI is crossing from pilot theater to production credibility.

The durable advantage comes from operational muscle: how quickly you spin the loop from floor data to better policies and back to the line, while moving people from hazardous repetitive work to higher-value tasks with clear retraining paths. Start with one cell, one exception, and a fallback procedure. Prove you can turn tribal knowledge into machine-executable behaviors and safety risks into measurable improvements. Then scale the loop. That’s the compounding edge in physical AI.

Inside the New Rules of Responsible AI Governance

AI is no longer just powering apps; it is determining credit, authorizing vendors, and deciding who gets access to critical services.

It has moved from research centers to the heart of our financial institutions, healthcare systems, online retail sites, and even government agencies. But with such rapid proliferation, there is fierce scrutiny. The question being asked in boardrooms, policy circles, and living rooms is simple: How do we make AI fair, transparent, and accountable?

This is where Responsible AI governance becomes imperative. Responsible AI is ultimately about trust-building, creating systems that are safe, ethical, and respectful of human values. It’s about putting guardrails in the design, development, and deployment of AI to ensure a balance between risks and innovation.

Above all, Responsible AI is not something that can be managed within the confines of a single company. It extends to the whole ecosystem of users, regulators, and partners. Whether it’s banks complying with global anti-money laundering rules, or e-commerce platforms authenticating sellers without bias, governance involves cooperation and shared standards.

And though experts refer to it in various ways, “trustworthy AI,” “ethical AI,” or “principled AI,” the goal is the same: maximizing the value AI generates while minimizing the risks. That includes making sure systems continue to be reliable throughout their lifecycle, eliminating bias, securing data, and ensuring decision-making can be explained.

Defining Responsible AI Governance

The answer to the question of “how do we make AI fair, transparent, and accountable?” lies in Responsible AI governance, a set of principles, policies, and practices that guide how AI is developed, deployed, and governed.

While no single definition exists yet, governments, researchers, and businesses agree on at least one point: responsible AI is about building trust. Different frameworks emphasize different aspects. For example, the European Union's High-Level Expert Group on AI describes trustworthy AI as lawful, ethical, and robust. Singapore's guidelines focus on transparency, fairness, and human-centric design. And large technology companies have developed their own approaches, emphasizing explainability, accountability, and safeguards against bias.

Simply stated, “responsible” can mean very different things depending on who you talk to. But the shared purpose is clear: AI should work for people, not against them. It needs to augment human choice and protect individual rights and societal values.

Principles of Responsible AI

Across numerous frameworks, a shared set of principles has come to the fore. They are not philosophical constructs; they are practical standards that all organizations should keep in mind when applying AI:

- Robustness and Safety – Systems must be resilient against errors, adversarial attacks, and misuse. In practice, this means stress-testing AI models, monitoring for drift, and building contingency plans.

- Inclusivity and Fairness – AI should not amplify human bias. Banks, for instance, must ensure that credit models do not unfairly reject particular groups. Online shopping sites must prevent recommendation engines from amplifying discrimination.

- Privacy and Security – Data is the lifeblood of AI, but mismanaging it undermines trust. Robust data governance, transparent documentation, and adherence to regulations such as GDPR are now minimum expectations.

- Explainability and Transparency – If users can’t see how a model is making decisions, they won’t trust it. Explainable AI (XAI) tools are becoming essential for compliance and customer trust.

- Accountability and Governance – Humans must ultimately remain in charge. That means clear oversight structures, audit trails, and escalation paths for when AI gets it wrong.

By integrating these principles into operations and strategy, organizations achieve a balance between innovation and protection. Done correctly, Responsible AI is a source of competitive advantage rather than a compliance exercise.
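As one illustration of turning the fairness principle into a routine check, the sketch below compares approval rates across groups for a credit model. The data is made up, the function names are hypothetical, and the 0.8 cutoff is an assumption borrowed from the "four-fifths" rule of thumb used in U.S. employment-selection guidance; whether it is the right bar for a given credit model is a policy decision, not something the code settles.

```python
from collections import defaultdict


def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> approval rate per group."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    rates = approval_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so no group is favored
    return min(rates.values()) / highest


# Made-up credit decisions: group A approved 8 of 10, group B approved 5 of 10.
sample = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 5 + [("B", False)] * 5
ratio = disparate_impact_ratio(sample)
print(f"ratio={ratio:.3f}, flag_for_review={ratio < 0.8}")
```

A low ratio is a prompt for human review, not proof of discrimination; it says nothing about why the rates differ.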

The Policy Landscape: U.S. and Europe

Governments are not sitting on the sidelines watching AI progress; they’re making the rules of the game.

United States: The White House introduced the Blueprint for an AI Bill of Rights in 2022, outlining five non-binding principles that AI systems should follow: safety, non-discrimination, data privacy, transparency, and the right to a human alternative. The National Institute of Standards and Technology (NIST) subsequently published its AI Risk Management Framework (2023), which, while voluntary, has become a de facto playbook for businesses that want to demonstrate their AI is trustworthy.

Momentum is also building at the state level. Colorado passed the country’s first statewide AI law in 2024, requiring companies to assess and minimize algorithmic bias in high-stakes uses such as employee recruitment and credit.

Europe: The European Union went further with the AI Act, which entered into force in August 2024. It is the first legally binding law of its kind anywhere and adopts a risk-based approach.

The financial industry illustrates the stakes. AI already dominates fraud detection, credit scoring, risk management, and robo-advisory services. While these technologies bring efficiency and inclusivity, regulators want them to be explainable, fair, and secure as well. Under the AI Act, even general-purpose AI systems, including generative models, carry transparency obligations such as labeling AI-generated content and flagging deepfakes.

Enforcement is not an afterthought. The EU has set fines of up to €35 million or 7% of global annual turnover for the most serious violations. In the United States, regulators such as the FTC and CFPB are increasingly treating biased or deceptive AI systems as consumer protection violations, suggesting that more stringent enforcement is in the pipeline.

Why Policymakers Care

For governments, Responsible AI governance is about much more than compliance. It is a matter of competitiveness, citizen protection, and global trust. Policymakers face the dual challenge of driving innovation while requiring safeguards that protect people.

Consider the banking sector. Banks use AI to inform credit decisions, fraud detection, and anti–money laundering (AML) systems. If these systems are biased or opaque, they can unfairly reject customers, flood compliance teams with false alarms, or even create systemic financial risk. Regulators like FinCEN in the United States and the European Banking Authority in the EU therefore emphasize explainability and fairness in AI-based AML systems.

E-commerce sites are not immune to similar risks. AI powers seller sign-up, product suggestions, and content moderation. Without oversight, the same technologies can facilitate fraud, permit misrepresentation, or produce biased outcomes for sellers and buyers. The consequences are eroded trust and the risk of regulatory fines.

The Path Forward

Responsible AI governance is not a checklist; it is a shared responsibility. For organizations, it is about embedding AI principles into customer experience, compliance infrastructure, and corporate brand. For policymakers, it is about creating guardrails that are enforceable but support innovation. For technologists and researchers, it is about building tools for explainability, resilience, and fairness.

If done effectively, governance builds trust and creates enduring value. If neglected, threats such as discrimination, misinformation, and systemic flaws can overshadow the rewards.

Responsible AI is ultimately the cornerstone of technology’s long-term future. For policymakers, it safeguards rights. For companies, it protects reputation and maintains compliance. For society, it ensures that technology supports human values.

Efficiency: The New Moat for Data and AI Teams

For years, the tech world equated innovation with scale: more data, more models, more compute. But 2025 has revealed a different truth. Scale alone is no longer a differentiator. The most forward-thinking data and AI teams are still innovating, but they are doing it by designing for efficiency—building smarter, not just bigger.

Across industries, leaders are realizing that intelligent design—not brute force—drives lasting progress. As cloud budgets rise and sustainability becomes a board-level priority, the smartest teams are treating efficiency as strategy, not just cost-cutting. According to Gartner’s Top Trends in Data & Analytics for 2025 report, data initiatives are shifting “from the domain of the few to ubiquity”—and leaders now face pressure “not to do more with less, but to do a lot more with a lot more.”

McKinsey, in its Seizing the Agentic AI Advantage report, finds that companies succeeding with AI are the ones that optimize every layer of their technology stack for speed and cost.

Efficiency Is the Real Moat in AI and Data Engineering

The edge in AI no longer lies in model complexity; it lies in how well teams orchestrate their resources. A group that can run the same workload faster or cheaper instantly earns more room to innovate.

Yet cloud waste remains immense. Organizations lose an estimated 30% of their cloud budgets to idle or misallocated resources, according to Flexera’s State of the Cloud Report. Progressive teams are embedding FinOps dashboards directly into their pipelines, tracking cost, carbon, and performance in real time.
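A first-pass version of that tracking can be very simple. The sketch below estimates what share of monthly spend sits on idle resources; the inventory, the field names, and the 10% utilization threshold are all illustrative assumptions rather than a FinOps standard or any vendor's schema.

```python
def waste_share(resources, idle_threshold=0.10):
    """Fraction of monthly spend on resources whose utilization is below the threshold."""
    total = sum(r["monthly_cost"] for r in resources)
    if total == 0:
        return 0.0
    idle = sum(r["monthly_cost"] for r in resources if r["avg_utilization"] < idle_threshold)
    return idle / total


# Hypothetical inventory, as might be exported from a billing and monitoring join.
inventory = [
    {"name": "etl-cluster", "monthly_cost": 4000.0, "avg_utilization": 0.62},
    {"name": "dev-gpu-node", "monthly_cost": 2400.0, "avg_utilization": 0.04},
    {"name": "staging-db", "monthly_cost": 1600.0, "avg_utilization": 0.07},
]
share = waste_share(inventory)
print(f"{share:.0%} of spend is on idle resources")
```

Real dashboards add carbon and performance dimensions, but the core idea is the same: join cost to utilization and make the ratio visible.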

Efficiency has evolved from a side project to a design philosophy. It now helps determine which teams survive budget cuts and which scale with confidence.

Architecture Matters More Than Algorithms

Generative AI put algorithms at the center of attention, but it is architecture that sustains innovation. The strongest data platforms today are modular, event-driven, and self-healing.

Traditional ETL pipelines are being replaced by composable frameworks built on open formats such as Iceberg and Delta Lake. These modern table architectures enable schema evolution, time travel, and cost-efficient versioning. In its webinar The Future of the Modern Data Stack, Databricks notes that open standards and flexible architectures are dramatically simplifying enterprise data platforms.

True innovation happens when systems are simple to extend, easy to test, and quick to evolve. Big no longer means better. Adaptable does.

Sustainability Metrics Will Shape the Next Wave of Innovation

As AI workloads grow, energy transparency is becoming inseparable from performance. Cloud providers are now publishing sustainability data alongside billing metrics, allowing engineers to see the environmental impact of every query.

Microsoft’s Cloud for Sustainability platform and Google Cloud’s Carbon Footprint tool, for example, provide visibility into energy use per workload. This turns sustainability from a talking point into a measurable engineering discipline.

By 2026, success will depend not only on how fast teams generate insights but also on how efficiently they convert energy into intelligence. The most forward-thinking innovators will measure their progress in joules as carefully as they do in dollars.

Constraints Drive Creativity

It is a common belief that efficiency stifles experimentation. In practice, it often does the opposite. When teams have to work within limits, they tend to think deeper, design cleaner, and test smarter.

Harvard Business Review’s Why Constraints Are Good for Innovation article shows that when teams embrace constraints, they tend to focus on what truly matters—often generating more original and effective ideas.

In data engineering, those constraints spark leaner algorithms, reusable components, and automation breakthroughs. Efficiency, when embraced thoughtfully, becomes a powerful catalyst that channels innovation instead of constraining it.

Efficiency Is Becoming the Language of Leadership

CFOs, CTOs, and sustainability officers now share a common language built on efficiency. They talk about cost per insight, energy per transaction, and governance per gigabyte. Success is no longer measured only by how much was delivered, but by how responsibly it was achieved.

Leaders who once cared only about uptime now care about utilization curves and carbon intensity. This cultural shift shows that efficiency is no longer an operational concern; it has become a leadership mindset that connects finance, engineering, and sustainability goals.

These trends point to a clear reality: efficiency is no longer a constraint. In practice, efficiency is the price of admission for sustainable innovation at scale.

Conclusion

Efficiency is not the opposite of innovation; it is how leading teams make their innovation durable and scalable.

As the excitement around massive AI models begins to settle, the real winners will be the teams that engineer with discipline, measure with integrity, and optimize with purpose. The future belongs to those who understand that every dataset, every compute cycle, and every design choice carries a cost.

True innovation means creating maximum impact with minimal waste.

How is your organization redefining innovation through efficiency?

Resilience in the Age of AI: Inside Commvault’s ResOps Pitch

If you listen to enough AI keynotes, you start to hear similar refrains: AI is transformative, the pace is unprecedented, and security hasn’t kept up. What was different at Commvault’s SHIFT event was less the diagnosis and more the operating model they’ve put around it: ResOps and Unity.

Commvault’s leadership argued that cyber resilience needs a new name, a new architecture, and a promotion in the enterprise hierarchy. They call their answer “ResOps”—resilience operations—and they introduced Commvault Cloud Unity, a unified platform that embodies that ResOps model across security, identity, and recovery.

You don’t have to buy into the branding to see the signal: resilience is being pulled out of the back office and moved to the center of how AI-era infrastructure is designed and run.

From Cyber Resilience to AI Resilience

Two years ago, Commvault elevated data protection into a more strategic posture they call “cyber resilience,” emphasizing that data protection is more than a last-line-of-defense tape in a vault. At SHIFT, CEO Sanjay Mirchandani pushed that idea further: in an AI-first world, resilience isn’t just about systems and data anymore; it’s about how thousands of autonomous agents interact with those systems and data in real time.

The framing is straightforward:

- Generative and agentic AI are driving an explosion in data volume, variety, and sensitivity.

- AI systems are increasingly being used by attackers as well as defenders.

- Identities are proliferating, with non-human and machine identities outnumbering humans.

In that context, Mirchandani argued that “AI resilience” requires three things to move in lockstep: security, identity, and recovery. If any one of the three lags, AI becomes a new fragility multiplier instead of a growth engine.

Many large enterprises are already living this reality: fragmented data estates, software as a service (SaaS) and cloud-native sprawl, and a rising tide of identity-driven attacks. SHIFT’s contribution is to put a more opinionated operating model around those forces and to insist that resilience needs its own closed loop.

ResOps: Category Creation or Real Operational Shift?

ResOps, as Commvault describes it, is a continuous loop across three stages:

- Understand and govern data and identities (who or what is accessing what, and under which policies).

- Detect anomalies and threats in near-real time, across both identities and data.

- Recover cleanly and predictably at scale, with as little data loss as possible.

On paper, that sounds familiar. Security teams talk about “detect, respond, recover” all the time. What Commvault is doing is pulling data protection and identity recovery into that motion as first-class citizens, rather than something the security team hands off to infrastructure after the incident is contained.

ResOps is less about inventing a new discipline and more about admitting that the old silos are breaking down.

In many organizations today:

- SecOps, identity teams, and backup teams all operate with different tools, policies, and metrics.

- Automation exists, but it’s often local to a domain: SOAR (security orchestration, automation, and response) playbooks, runbooks in ITSM (IT service management) tools, scripting in backup platforms.

- AI is introduced at the edges—a chatbot here, anomaly detection there—without a single control plane that understands resilience end-to-end.

What Commvault is really arguing for is convergence: one fabric that connects identity posture, data governance, threat signals, and recovery orchestration. Whether you call that ResOps or just “finally connecting the dots” is semantics, but the direction of travel is clear across the industry.

Identity Becomes the New Perimeter

One of the more grounded sections of the SHIFT program focused on identity resilience. The thesis: if identity is the new perimeter, then identity recovery and forensics have to be just as mature as server and storage recovery.

A few key points stood out:

- Most breaches still start with stolen or misused credentials, according to industry data like CrowdStrike’s ~80% figure cited on stage.

- Machine identities and service accounts are rapidly becoming one of the dominant attack vectors, especially as automation proliferates.

- Traditional identity recovery is either too coarse (all-or-nothing forest restore) or too manual for crisis conditions.

Commvault’s answer is a set of capabilities around Active Directory and Entra ID that continuously audit changes, flag risky privilege drift, and allow rollbacks of specific changes or entire “attack chains.” In their demo, a compromised service account quietly spreads a malicious group policy; the platform detects the pattern, allows an operator to unwind the changes, and then feeds that insight back into a vulnerability view.
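To illustrate the idea of unwinding a specific attack chain rather than restoring everything, here is a toy sketch over an in-memory audit log. It is not Commvault's implementation and makes no Active Directory or Entra ID calls; the `Change` record, the state dictionary, and the account names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Change:
    seq: int      # order in which the change was applied
    actor: str    # identity that made the change
    target: str   # object that was modified, e.g. a group or policy
    before: str   # value prior to the change
    after: str    # value after the change


def rollback_actor(state: dict, audit_log: list, compromised_actor: str) -> list:
    """Undo only the compromised actor's changes, newest first; return undone seqs."""
    undone = []
    for change in sorted(audit_log, key=lambda c: c.seq, reverse=True):
        if change.actor != compromised_actor:
            continue
        # Revert only if the object still holds the value the attacker set,
        # so later legitimate edits are not overwritten.
        if state.get(change.target) == change.after:
            state[change.target] = change.before
            undone.append(change.seq)
    return undone


state = {
    "group:hr": "carol,dave",
    "group:admins": "alice,svc-backup",
    "gpo:login-script": "malicious.ps1",
}
log = [
    Change(1, "alice", "group:hr", "carol", "carol,dave"),
    Change(2, "svc-backup", "group:admins", "alice", "alice,svc-backup"),
    Change(3, "svc-backup", "gpo:login-script", "default.ps1", "malicious.ps1"),
]
undone = rollback_actor(state, log, "svc-backup")
print(undone, state)
```

The legitimate change by `alice` survives, which is the point: the rollback is scoped to one identity's actions instead of flattening the whole directory.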

It’s interesting that identity recovery and identity analytics are now being positioned as central pillars of resilience, not niche features. As AI agents increasingly act on behalf of users and services, the blast radius of a compromised identity gets bigger. The ability to unwind that blast radius precisely—without flattening an entire domain—will matter more than it has in the past.

Clean Recovery as a First-Class Outcome

Another recurring theme in the keynote was the “billion-dollar question”: when you recover, how do you know the data is both clean and current?

Traditionally, recovery teams have had to choose:

- Roll back further in time to ensure a clean copy, and accept more data loss.

- Stay as close to the event as possible and risk reintroducing malware.

Commvault’s proposed answer is an approach they call synthetic recovery, paired with threat scanning and cleanroom testing. Conceptually, it works like this:

- Scan backups with multiple signals (anomalies, encryption patterns, malware signatures, and external threat intel).

- Use that understanding to automatically assemble a composite recovery point that pulls in only the last known-good versions of corrupted files.

- Rebuild systems into an isolated “cleanroom” environment using golden images, then reattach the cleaned data for validation before going back to production.
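The second step above, assembling a composite recovery point, can be sketched as follows. This is a conceptual illustration only: the snapshot structure and the `is_clean` callback are assumptions standing in for real backup catalogs and threat scanning, not a description of how Commvault builds its recovery points.

```python
def composite_recovery_point(snapshots, is_clean):
    """
    snapshots: list of (timestamp, {path: content_id}), ordered oldest to newest.
    is_clean: callable(path, content_id) -> bool, standing in for threat scanning.
    Returns {path: (timestamp, content_id)} with the newest clean version of each file.
    """
    result = {}
    for timestamp, files in reversed(snapshots):  # walk newest first
        for path, content_id in files.items():
            if path not in result and is_clean(path, content_id):
                result[path] = (timestamp, content_id)
    return result


# Hypothetical backups: the newest copy of a.txt was encrypted by ransomware.
snapshots = [
    (1, {"a.txt": "a1", "b.txt": "b1"}),
    (2, {"a.txt": "a2", "b.txt": "b1"}),
    (3, {"a.txt": "a3-encrypted", "b.txt": "b2"}),
]
flagged = {"a3-encrypted"}
recovery = composite_recovery_point(snapshots, lambda path, cid: cid not in flagged)
print(recovery)
```

Only the corrupted file rolls back to an older version; everything else stays current, which is how the approach limits data loss. Files with no clean version are left out of the result so they can be handled explicitly.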

Embedded in this approach is an important shift: recovery is no longer just about hitting a recovery point objective/recovery time objective (RPO/RTO) number. The new bar is “provably clean” plus “minimally lossy,” with a testable chain of evidence you can show to a CISO, a regulator, or your own board.

That’s a much harder problem than it sounds, and vendors across this space are still evolving their answers. But the directional signal is right. As AI accelerates both attack automation and business reliance on data, the cost of a “dirty” recovery—one that quietly reintroduces the threat—gets higher every year.

Cloud, On-Prem, and the Unity Story

Unity, as positioned at SHIFT, is Commvault’s attempt to bind together three worlds under one control plane:

- SaaS workloads (M365, Google Workspace, Salesforce, DevOps platforms, and more).

- Cloud-native stacks across AWS, Azure, and Google Cloud.

- On-prem environments using their Hyperscale appliances and reference architectures.

Again, the specifics are vendor-branded, but the pattern is market-wide. Enterprises don’t live in one world anymore. A single business process might touch Kubernetes, SaaS customer relationship management (CRM), cloud databases, edge stores, and an on-prem analytics farm. Resilience that stops where a hyperscaler’s responsibility ends is no longer enough.

The architectural bet we’re seeing is:

- Separate control planes from data planes.

- Scale protection and recovery elastically in the cloud, while allowing enterprises to bring their own storage and appliances where they need to.

- Wrap everything in a common policy model and observability layer so you can reason about posture across environments, not per tool.

Unity is one version of that story. Other vendors are building their own versions.

The TechArena Take

If we zoom out from the SHIFT announcements and marketing language, a few broader trends come into focus:

- Resilience is becoming an operating model, not a product line: Boards and CEOs are now asking “how fast can we recover from the inevitable?” in the same breath as “what is our AI strategy?” That pushes resilience into day-to-day operations and out of the “insurance” category.

- Identity and data are converging in resilience conversations: The old model treated identity as identity access management’s (IAM’s) problem and backup as an infrastructure problem. AI collapses that separation. In an agentic world, identity mistakes and data mistakes are tightly coupled.

- “Clean” is the new RPO: Recovery objectives are no longer just about how much data you lose or how fast you come back. They are about how certain you are that you haven’t re-imported the adversary into production.

- AI is both the accelerant and the tool: The same AI that makes it easier to discover vulnerabilities and automate attacks is also being harnessed to correlate signals, propose recovery points, and orchestrate complex workflows. The arms race is well underway.

- SHIFT doesn’t change the fundamentals: Enterprises still need clear ownership across SecOps, identity, and infrastructure. They still need to rationalize tool sprawl and understand where each platform begins and ends. And they need to test recovery assumptions in realistic scenarios, not just on paper.

What SHIFT underlines is that resilience is now part of the AI conversation, not an afterthought. As enterprises experiment with AI factories, agentic systems, and data-native product development, the resilience stack underneath is being reimagined just as aggressively as the AI stack on top.

In the arena, that’s the story to watch: not which platform has the most features this quarter, but which operating models help enterprises withstand—and learn from—the inevitable failures that come with AI at scale.

Inside the New Product DNA: AI, Causality, Experimentation

For decades, intuition, gut feel, and post-launch hindsight have driven product development. Teams brainstormed, launched features, and hoped for the best. Success stories were celebrated, and failures were dismissed as bad timing.

Today, that’s no longer the case. The new DNA of the product is intrinsically data-native and experiment-driven, where AI meets causal inference meets automation.

In this new paradigm, learning is the product itself. Every release, click, and interaction feeds a continuous feedback loop that helps products evolve faster and more intelligently.

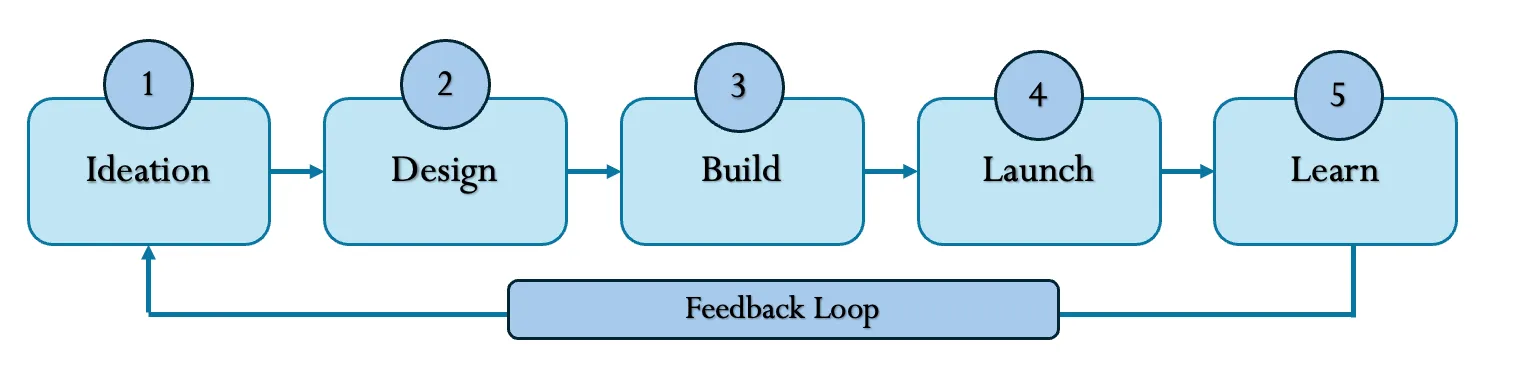

Evolution of Product Development

Each phase has shortened the learning cycle—from slow post-mortem reviews to real-time decision-making. AI now serves as the connective tissue, turning every user signal into actionable insight.

How AI is Rewriting Product Development

AI’s impact is not just in automation; it’s in amplifying human experimentation capacity. Here’s how it’s transforming each layer of the product lifecycle:

1. Ideation → Generative Exploration

LLMs and copilots are helping teams explore what to build faster than ever. Prompt a model with “How might we reduce checkout friction for Gen Z users?” and it can instantly generate hypotheses, user flows, and even A/B test copy variants.

2. Design → Data-Infused Creativity

Design tools powered by AI (like Figma’s AI assistant or Uizard) now simulate user reactions, predict engagement heatmaps, and propose design alternatives based on prior experiment data.

3. Build → Experiment-Ready Engineering

Modern engineering frameworks integrate feature flags, metric tracking, and causal validation directly into the codebase. This allows for safe experimentation at scale: every rollout is testable by design.

4. Launch → Causal Attribution

Instead of asking “Did this feature correlate with higher conversion?” teams now ask “Did it cause it?” Causal inference methods (propensity score matching, difference-in-differences, meta-learners) help isolate true impact from noise.

5. Learn → Automated Knowledge Loops

Agentic AI systems summarize experiment learnings, identify patterns across experiments, and suggest next actions, forming a self-improving experimentation ecosystem.
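To make the causal attribution step more concrete, here is a minimal difference-in-differences sketch. The conversion rates, group names, and the `diff_in_diff` helper are all hypothetical and chosen only for illustration. The estimate is meaningful only if the treated and control groups would have followed parallel trends without the feature.

```python
from statistics import mean


def diff_in_diff(treated_pre, treated_post, control_pre, control_post):
    """Difference-in-differences estimate of a treatment effect.

    Subtracts the control group's change (the background trend) from the
    treated group's change. Assumes parallel trends between the groups.
    """
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change


# Hypothetical weekly conversion rates (illustrative numbers only).
treated_pre = [0.10, 0.11, 0.09]    # users who later get the feature
treated_post = [0.14, 0.15, 0.13]   # same users after launch
control_pre = [0.10, 0.10, 0.10]    # users who never get the feature
control_post = [0.11, 0.12, 0.10]   # same users over the same period

effect = diff_in_diff(treated_pre, treated_post, control_pre, control_post)
print(f"Estimated causal lift: {effect:.3f}")
```

In this toy data a naive before/after comparison on the treated group would show a 0.04 lift, but the control group also rose by 0.01 over the same period, so only about 0.03 is attributed to the feature.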

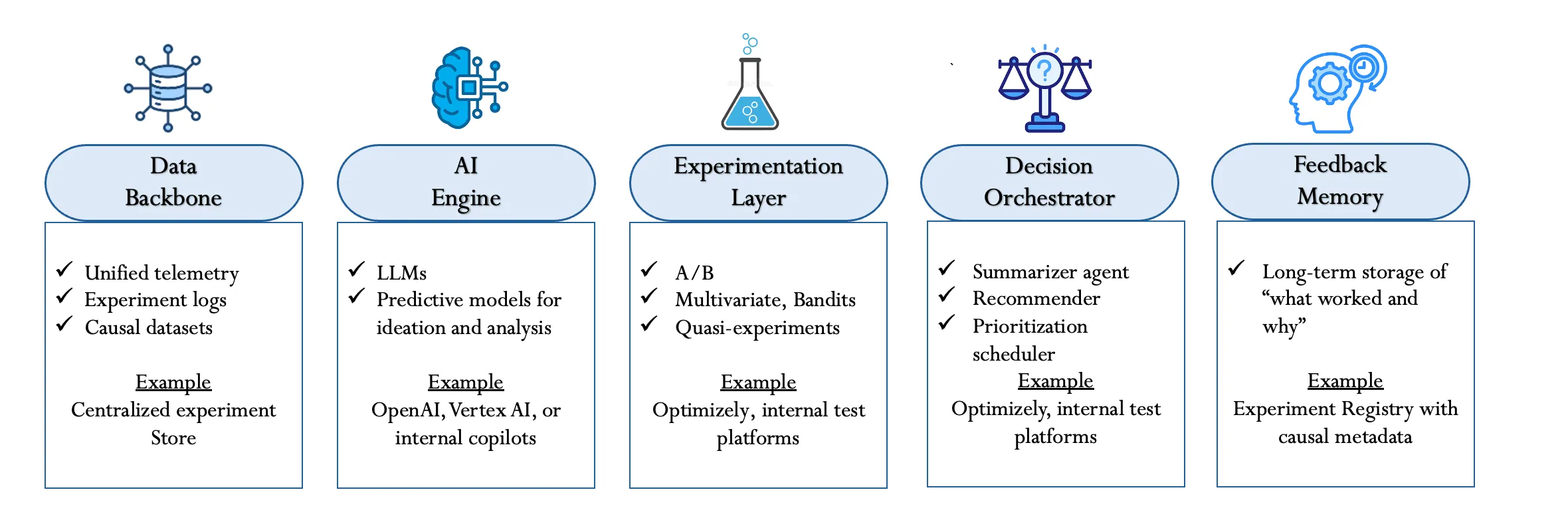

The New Product DNA: Core Components

Modern product organizations are evolving from static roadmaps to adaptive, learning systems. At the heart of this transformation lies a new architecture (highlighted below): a Product DNA where AI, data, and experimentation form the building blocks of continuous innovation.

Real-World Example: The AI Experimentation Loop in a Digital Health Platform

Consider a digital health platform for managing chronic conditions such as diabetes and hypertension. The goal of the product is to improve daily engagement by encouraging users to record their vitals, adhere to care plans, and take medication as directed. Rather than setting fixed reminders, the product team creates an AI-powered experimentation loop that continuously learns each user's behavior and fine-tunes its interventions in real time.

It begins with a Data Backbone: every interaction is captured (glucose logs, step counts, coach messages, and reminders) and unified into one secure telemetry system. This causal-ready foundation connects wearable sensor data, app behavior, and contextual signals like time of day and mood, allowing cause and effect to be measured with precision.

By identifying patterns in this data, the AI Engine applies LLMs and predictive models to formulate hypotheses. It may find that users who log meals within 10 minutes of eating show much higher adherence, triggering new tests of customized notifications or even empathetic message tones for users who display fatigue.

Each of these ideas moves into the Experimentation Layer, where different behavioral nudges are compared in controlled A/B or adaptive tests. For instance, one group receives fixed daily reminders, while another group gets adaptive prompts triggered by sensor data. Effectiveness is measured by metrics such as adherence, the number of app opens, and glucose stability, with bandit algorithms automatically shifting traffic toward the better-performing variants.
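As one way to picture how a bandit favors better variants, the sketch below uses Beta-Bernoulli Thompson sampling, a common bandit method; the article does not say which algorithm such a platform would use. The variant names, the "true" adherence rates, and the simulation itself are hypothetical and exist only to generate feedback for the example.

```python
import random


class ThompsonSampler:
    """Beta-Bernoulli Thompson sampling over a fixed set of variants."""

    def __init__(self, variants, seed=0):
        self.rng = random.Random(seed)
        # Beta(1, 1) prior: one pseudo-success and one pseudo-failure each.
        self.successes = {v: 1 for v in variants}
        self.failures = {v: 1 for v in variants}

    def choose(self):
        # Draw a plausible success rate per variant and pick the largest.
        draws = {
            v: self.rng.betavariate(self.successes[v], self.failures[v])
            for v in self.successes
        }
        return max(draws, key=draws.get)

    def update(self, variant, success):
        # Record the observed outcome for the variant that was shown.
        if success:
            self.successes[variant] += 1
        else:
            self.failures[variant] += 1


# Hypothetical adherence rates, used only to simulate user responses.
TRUE_RATES = {"fixed_reminder": 0.30, "adaptive_prompt": 0.45}


def simulate(rounds=5000, seed=42):
    sampler = ThompsonSampler(TRUE_RATES, seed=seed)
    world = random.Random(seed + 1)  # separate stream for simulated users
    pulls = {v: 0 for v in TRUE_RATES}
    for _ in range(rounds):
        variant = sampler.choose()
        pulls[variant] += 1
        sampler.update(variant, world.random() < TRUE_RATES[variant])
    return pulls


pulls = simulate()
print(pulls)
```

Because each choice is sampled from the current posterior, the weaker variant still gets occasional traffic early on, and its share shrinks as evidence accumulates. A real deployment would also need guardrail metrics and a policy for clinical safety, which this sketch ignores.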

The Decision Orchestrator then summarizes results (for example, "adaptive reminders improved adherence by 8% among evening users") and schedules the next round of tests. Finally, insights feed into Feedback Memory, a long-term intelligence system that stores metadata on what worked, for whom, and why.

Over time, the platform becomes a self-learning health ecosystem where every interaction reinforces its knowledge of user behavior. The result is more than greater engagement; it's a living, data-driven product that continuously tailors care and fuels innovation.

Next in the Series

Banani’s Next Article: “Rewiring Product Management with Generative AI: From Roadmaps to Deployment.” She’ll explore how generative AI is reshaping product strategy from idea generation to roadmap alignment and real-time user feedback loops.

Nebius at SC25: Building the Neocloud for Enterprise AI

From SC25 in St. Louis, Nebius shares how its neocloud, Token Factory PaaS, and supercomputer-class infrastructure are reshaping AI workloads, enterprise adoption, and efficiency at hyperscale.

Inside SC25: AI Factories From Racks to Qubits

AI didn’t just show up at Supercomputing 2025 (SC25) in St. Louis; it took over the agenda. From exabyte-scale storage and 800 Gb/s fabrics to liquid-cooled racks and emerging quantum accelerators, SC25 made it clear that the next era of HPC is really about building AI factories end to end.

Below is a structured look at the announcements the TechArena team is tracking, organized around the major layers of the stack.

1. Data and Memory Platforms for Agentic AI

The most urgent theme on the show floor: getting more useful work out of every GPU. That starts with memory and data.

WEKA: Breaking the GPU Memory Wall and Storage Economics

WEKA formally took its Augmented Memory Grid from concept to commercial availability on NeuralMesh, validated on Oracle Cloud Infrastructure (OCI) and other AI clouds. The goal is to extend GPU key-value cache capacity from gigabytes into the petabyte range by streaming KV cache between HBM and flash over RDMA using NVIDIA Magnum IO GPUDirect Storage.

The reported gains are significant: 1000x more KV cache capacity, up to 20x faster time-to-first-token at 128k tokens versus recomputing prefill, and multi-million IOPS performance at cluster scale. For long-context LLMs and agentic AI workflows, that means fewer evictions, less recompute, and better tenant density per GPU — directly attacking inference cost structures on OCI and other platforms.
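For a sense of why KV cache capacity becomes a bottleneck at long context, the standard sizing arithmetic can be sketched as follows. The model dimensions here (80 layers, 8 KV heads, head dimension 128, 16-bit values) are assumptions for a hypothetical large model with grouped-query attention, not figures from WEKA, and "128k" is taken to mean 128 × 1024 tokens.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bytes_per_value=2):
    """KV cache size for one sequence.

    Each token stores one key vector and one value vector (the factor of 2)
    per layer and per KV head.
    """
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


# Hypothetical model shape (assumption, not from the article).
MODEL = dict(layers=80, kv_heads=8, head_dim=128)

per_token = kv_cache_bytes(1, **MODEL)
per_context = kv_cache_bytes(128 * 1024, **MODEL)

print(per_token / 1024, "KiB per token")
print(per_context / 1024**3, "GiB per 128k-token sequence")
```

Under these assumptions a single 128k-token sequence holds about 40 GiB of KV cache, so a few concurrent long-context sessions can outgrow one GPU's HBM. That is the pressure that tiering KV cache out to flash is meant to relieve.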

On the hardware side, WEKA’s next-gen WEKApod appliances push the economics further. WEKApod Prime uses “AlloyFlash” mixed-flash configurations to deliver 65% better price performance while preserving full-speed writes, and WEKApod Nitro focuses on performance density with 800 Gb/s networking via NVIDIA ConnectX-8 SuperNICs. Together, they target AI factories that need high GPU utilization, high density, and lower power per terabyte.

VAST Data + Microsoft Azure: AI OS Meets Cloud Scale

VAST Data is extending its AI Operating System into Microsoft Azure. VAST AI OS will run on Azure’s Laos VM Series with Azure Boost, giving customers a unified “DataSpace” global namespace so they can move between on-prem and Azure without refactoring data pipelines.

InsightEngine and AgentEngine let customers run vector search, RAG pipelines, and agent workflows directly where the data lives, and the underlying disaggregated, shared-everything (DASE) design allows independent scaling of compute and storage. The combined effect is a cloud-native AI operating system tuned for agentic AI pipelines, built to keep Azure’s GPU and CPU fleets saturated.

MinIO ExaPOD: Exabyte as a Design Point, Not an Edge Case

MinIO’s ExaPOD reference architecture plants a big flag for exascale AI data. It’s a 1 EiB usable building block (about 36 PiB usable per rack) that scales linearly in performance and capacity. In the reference design, ExaPOD delivers on the order of 19.2 TB/s aggregate throughput at 1 EiB with 122.88 TB drives, around 900 W of power per PiB including cooling, and modeled all-in economics in the $4.55–$4.60/TiB-month range at exabyte scale.

Built on Supermicro servers, Intel Xeon 6781P, and Solidigm D5-P5336 NVMe, ExaPOD is clearly aimed at hyperscalers, neoclouds, and large enterprises that see exabytes as the new baseline for LLMOps, simulations, and observability.
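The per-PiB and per-TiB figures quoted above are easier to compare once scaled to the full 1 EiB design point. The following back-of-the-envelope sketch takes the quoted numbers at face value and uses binary units (1 EiB = 1024 PiB = 1,048,576 TiB); the variable names are illustrative, and the real reference design may round differently.

```python
TIB_PER_PIB = 1024
PIB_PER_EIB = 1024

capacity_pib = 1 * PIB_PER_EIB            # 1 EiB usable, expressed in PiB
capacity_tib = capacity_pib * TIB_PER_PIB

# Quoted: about 900 W per PiB, including cooling.
power_kw = 900 * capacity_pib / 1000

# Quoted: about 19.2 TB/s aggregate throughput at 1 EiB.
throughput_gbs_per_pib = 19.2 * 1000 / capacity_pib

# Quoted: modeled $4.55 to $4.60 per TiB-month.
monthly_cost_low = 4.55 * capacity_tib
monthly_cost_high = 4.60 * capacity_tib

print(f"Total power: {power_kw:.1f} kW")
print(f"Throughput per PiB: {throughput_gbs_per_pib:.2f} GB/s")
print(f"Monthly cost: ${monthly_cost_low:,.0f} to ${monthly_cost_high:,.0f}")
```

At face value, that works out to a bit under 1 MW for the whole exabyte, just under 19 GB/s of throughput per PiB, and a modeled run rate of roughly $4.8 million per month.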

2. Power, Cooling, and the Introduction of PCE

As AI deployments creep toward gigawatt footprints, power and cooling have shifted from “facility detail” to board-level design constraint.

Airsys PowerOne and Aegis: Cooling as a Compute Multiplier

Airsys introduced PowerOne, a modular, multi-medium cooling architecture that scales from 1 MW edge sites to 100+ MW hyperscale data centers. It’s tailored for AI and HPC density with a standard cooling stack (CritiCool-X chiller, FluidCool-X CDU, MaxAir fan wall, Optima2C CRAH) and a LiquidRack spray-cooling architecture that can operate in compressor-less modes with dry coolers where climate allows.

Beyond traditional PUE, Airsys is pushing Power Compute Effectiveness (PCE)—a metric that measures how much provisioned power turns into usable compute. The message is that cooling should unlock stranded power and convert it into AI capacity, not just shave a few basis points off energy overhead.
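The idea of cooling unlocking stranded power can be illustrated with the standard PUE definition (total facility power divided by IT power). This sketch is not Airsys's PCE formula, which the announcement summary does not spell out; the 10 MW site and the two PUE values are hypothetical.

```python
def it_capacity_mw(provisioned_mw, pue):
    """IT load a site can support when total facility power is capped.

    Follows from the definition PUE = total facility power / IT power.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return provisioned_mw / pue


# Hypothetical site: 10 MW of provisioned utility power.
baseline = it_capacity_mw(10.0, pue=1.5)   # less efficient cooling
improved = it_capacity_mw(10.0, pue=1.2)   # more efficient cooling
unlocked = improved - baseline

print(f"IT capacity at PUE 1.5: {baseline:.2f} MW")
print(f"IT capacity at PUE 1.2: {improved:.2f} MW")
print(f"Additional IT power unlocked: {unlocked:.2f} MW")
```

With the same utility feed, the lower-overhead design frees up roughly 1.67 MW for compute in this example, which is the kind of gain a power-to-compute metric is meant to surface.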

In parallel, Aegis, an affiliated liquid-cooling arm, is being positioned as an agile R&D hub building two-phase CDUs, cold plates, and control systems using rapid 3D manufacturing to keep pace with AI thermal demands.

Schneider Electric and Motivair: Integrated Power + Liquid Cooling

Schneider Electric is leaning into its acquisition of Motivair, blending global power and infrastructure capabilities with more than 15 years of exascale and accelerated-computing cooling experience. The combined portfolio spans chip-level cold plates, rear-door heat exchangers, CDUs, and facility-level power and control systems.

The through-line is that liquid cooling is now being evaluated as part of a full-stack design conversation with power and infrastructure, especially for hyperscalers, colocation providers, and high-density AI factories where 100 kW-plus racks are quickly becoming normal.

Iceotope KUL BOX: Liquid-Cooled AI at the Noisy, Messy Edge

Iceotope’s KUL BOX brings the AI factory cooling story out of the core data center and into edge environments that were never designed for dense clusters. It’s a compact, liquid-cooled AI inferencing cluster built as a turn-key system: a 24U rack with six Iceotope KUL AI chassis, up to 24 NVIDIA GPUs, top-of-rack switching, and a fully integrated liquid-cooling loop.

The key twist is the deployment model. KUL BOX captures almost all of the system’s heat using Iceotope’s precision immersion cooling and rejects it through a separate liquid-to-air outdoor cooler, meaning it can be installed in locations without existing facility water, dry chillers, or traditional white-space infrastructure.

Iceotope highlights several benefits for edge AI and HPC workloads: consistent GPU throughput and reliability from stable thermals, lower energy and cooling overheads, quiet, fanless operation, and a single-vendor solution that bundles rack assembly, fluids, pipework, logistics, on-site installation, and a three-year service plan. Target use cases include telcos and colocation providers, labs running sensitive compute-heavy tasks, and industrial edge deployments with unusual constraints or sustainability requirements.

3. Server and Computing Hardware

On the compute side, vendors largely converged on the same message: more FLOPS per rack, more memory per GPU, and more network bandwidth behind every accelerator.

Dell Technologies: AI Factory Building Blocks

Dell made its AMD Instinct-powered PowerEdge XE9785 and XE9785L servers generally available and introduced the new Intel-powered PowerEdge R770AP. All three are tuned for demanding AI and HPC workloads as part of the Dell AI Factory with NVIDIA.

On the network side, Dell’s new PowerSwitch Z9964F-ON and Z9964FL-ON switches deliver 102.4 Tb/s of switching capacity, targeting dense AI fabrics. Dell also announced integration of ObjectScale and PowerScale storage systems with NVIDIA’s NIXL library, tightening the connection between storage services and GPU-centered inference stacks.

Supermicro, ASUS, Compal, EnGenius: Dense GPU Nodes and Liquid Cooling

Several OEMs showcased how fast they can pack accelerators into standard racks:

Supermicro highlighted Data Center Building Block Solutions featuring NVIDIA GB300 NVL72 systems with 72 Blackwell Ultra GPUs and liquid cooling up to 200 kW per rack. It also launched a 10U air-cooled AMD Instinct MI355X server that claims up to 4x compute and 35x inference performance versus its predecessor.

ASUS unveiled its XA AM3A-E13 server with eight AMD Instinct MI355X GPUs and dual AMD EPYC 9005 CPUs, offering 288 GB of HBM and up to 8 TB/s of memory bandwidth per GPU in a modular 10U chassis. The platform complements ASUS’ broader AI infrastructure portfolio, including NVIDIA GB300-based systems.

Compal brought high-density, liquid-cooled SG720-2A/OG720-2A servers supporting up to eight AMD Instinct MI325X GPUs with forward compatibility for MI355X, plus the SG223-2A-I immersion-cooled system that supports up to eight PCIe GPUs in a 2U chassis.