Walmart and Cornelis Expose the Costs of Network Inefficiency

Billions of customer interactions during peak seasons expose network bottlenecks, which is why critical infrastructure decisions must be made before you write a single line of code.

Giga Computing on Rack-Scale AI: From Training to Inference

Recorded at #OCPSummit25, Allyson Klein and Jeniece Wnorowski sit down with Giga Computing’s Chen Lee to unpack GIGAPOD and GPM, DLC/immersion cooling, regional assembly, and the pivot to inference.

Cyber Conflict vs War on Drugs: Mitigating the Unwinnable

As a national security professional developing next-generation tools and tradecraft on the front lines of the cybersecurity war, I’ve been wondering: Is this conflict winnable?

I polled a couple dozen friends and colleagues (CISOs, federal law enforcement officers, hackers, interns, and others), and the consensus was sobering: the cybersecurity war is a stalemate. Tech cuts both ways. Attackers and defenders keep leveling up, and there’s no silver-bullet tool that ends the fight.

That led me to a question: Is this cyber conflict fundamentally analogous to the War on Drugs? Both look like persistent, systemic battles that can never be fully won, unlike, say, train robbery in the American West—a criminal trend that burned out by the early 1900s.

It seems to me that these two wars are not merely technical or economic problems; they are enduring conflicts.

That nature determines how we should engage. If the cyber war could be ended by a single technical breakthrough, the way train robbery faded once physical cash disappeared from trains, we should put all our effort into inventing and adopting that tool. If, on the other hand, it is an enduring arms race, we should shift our focus from preventing every breach to building a resilient digital immune system.

Pivoting the Mission From Preemption to Fault Tolerance at Speed

The strategy shifts from prevention to resilience, reflected in today’s emphasis on ZTA (Zero Trust Architecture), SOAR (Security Orchestration, Automation, and Response), and XDR (Extended Detection and Response). Success for a CISO is measured less by the absence of breaches and more by speed of detection and recovery: mean time to detect, time to contain, and time to recover.
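To make these speed metrics concrete, here is a minimal Python sketch of how a team might compute them from incident records. The `Incident` fields and the convention of measuring containment and recovery from the moment of detection are assumptions for illustration; organizations define these clocks differently, and no SOAR or XDR product API is implied.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class Incident:
    # Hypothetical record layout; field names are illustrative only.
    occurred: datetime   # when malicious activity began
    detected: datetime   # when the team detected it
    contained: datetime  # when the threat was isolated
    recovered: datetime  # when normal service was restored


def _mean_hours(deltas):
    """Average a list of timedeltas, expressed in hours."""
    return mean(d.total_seconds() for d in deltas) / 3600


def resilience_metrics(incidents):
    """Return mean time to detect, contain, and recover, in hours.

    Assumption: detection is measured from occurrence; containment and
    recovery are measured from detection.
    """
    return {
        "mttd_h": _mean_hours([i.detected - i.occurred for i in incidents]),
        "mttc_h": _mean_hours([i.contained - i.detected for i in incidents]),
        "mttr_h": _mean_hours([i.recovered - i.detected for i in incidents]),
    }


t0 = datetime(2025, 1, 1)
incidents = [
    Incident(t0, t0 + timedelta(hours=4), t0 + timedelta(hours=6), t0 + timedelta(hours=24)),
    Incident(t0, t0 + timedelta(hours=2), t0 + timedelta(hours=3), t0 + timedelta(hours=10)),
]
print(resilience_metrics(incidents))  # {'mttd_h': 3.0, 'mttc_h': 1.5, 'mttr_h': 14.0}
```

Tracking the trend of these three numbers over time, rather than a count of breaches, is what the resilience posture described above asks a CISO to optimize.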

Both the cybersecurity war and the War on Drugs are enduring struggles powered by strong economic incentives, global in scope, and defined by asymmetric contests against adaptive, networked adversaries.

President Richard Nixon declared the War on Drugs in 1971; the Drug Enforcement Administration followed in 1973. For decades, the focus was enforcement. In recent years, many states have legalized or decriminalized marijuana and the federal stance has shifted toward more public-health-oriented approaches. After immense effort and sacrifice, the practical outcome resembles a stalemate rather than a decisive victory.

By contrast, train robbery in the late-19th-century American West was a localized, tactical crime against fixed infrastructure. Consider the Wild Bunch’s 1900 attack on a Union Pacific train near Tipton, Wyoming: the target was a safe with gold and banknotes. As banks shifted to electronic transfers and reduced the movement of physical cash, the opportunity evaporated and the crime largely disappeared.

The War on Drugs and cybersecurity don’t behave that way. They are global and dynamic, with adversaries who adapt to every intervention. They demand continuous management and strategic adaptation, not promises of final eradication.

Structural Parallels

Both domains operate as markets with durable incentives. Enforcement and defense actions raise operational risk; in illicit markets, that can increase margins, attracting more sophisticated actors—the Hydra effect.

Operations are borderless. Transnational networks span jurisdictions; gray logistics, cryptocurrency rails, and dark-web marketplaces collapse distance and jurisdiction, enabling payments, laundering, procurement, and coordination. In narcotics markets, growing use of cryptocurrencies and dark-web services shows the convergence; in cybercrime, the same rails fund ransomware and broker access.

Adversaries are adaptive and decentralized. Networked cells and affiliate models enable rapid mutation in tactics, techniques, and procedures.

Human Costs

The general population bears the brunt of these illicit economies. This includes the terrible crisis of drug addiction, the devastating impact of violence on civil society, and the massive financial loss and erosion of trust caused by cybercrime and data breaches. Additionally, law enforcement officers and military personnel around the world endure intense danger as they confront sophisticated, well-funded criminal networks. Their dedication comes at a high cost.

The lesson from the War on Drugs is that we must abandon the language of winning a war that is fundamentally systemic and adopt a posture of strategic management and resilience.

In cybersecurity, every early detection, every rapid containment, and every clean recovery is a tactical win that raises the adversary’s cost of doing business. Cybersecurity leaders should emphasize the achievable and sustainable goals of availability and resilience. We may never win this war outright, but we can ensure our vital functions retain availability.

Avayla and OCP Redefine Cooling Standards for the AI Age

The current speed of the data center industry’s transformation is unlike any in its history. Where infrastructure upgrades once followed multiyear cycles, the pace now is annual, at the speed of consumer electronics. My recent conversation with Kelley Mullick, CEO and founder of Avayla, at the Open Compute Project (OCP) Global Summit in San Jose revealed how liquid cooling has moved from niche application to critical infrastructure component, and why standardization will determine whether the industry can meet the moment.

During our TechArena Data Insights episode with Solidigm’s Jeniece Wnorowski, Kelley shared insights from her extensive career in cooling technologies and her current role as chair of OCP’s industry liaison team. Her perspective illuminates both the technical challenges operators face and the collaborative frameworks emerging to address them.

Rapid Adoption Reshapes the Market

As data centers continue to evolve in the race to support AI-enabled workloads, Kelley noted that scalability has emerged as the primary challenge facing operators today. During our conversation, she cited an OCP keynote by Meta’s head of infrastructure, Dan Rabinovitsj. What once took multiple years now happens annually, leading him to compare current deployment cadences to consumer electronics rather than traditional data center timelines. “That was a big insight for me,” she said. “I live and breathe in this space, but it is a real insight to make that comparison.”

With this primary challenge of scalability comes a host of secondary challenges, including cooling this infrastructure and preparing for liquid cooling. Before 2022, more than 90% of data centers relied on traditional air cooling. In just two years, liquid cooling adoption has surged to approximately 30% of the market. This rapid acceleration has driven significant growth in coolant distribution units (CDUs), the critical infrastructure components that circulate coolant between the facility’s water system and the cold plates on the chips. Recognizing this need, OCP announced a new working group focused on CDU specifications and best practices at its 2025 Global Summit.

Driving Standards Development with Industry Partnerships

As the industry faces these challenges, collaboration and defining industry standards become more important than ever. At OCP, Kelley is chair of an industry liaison team that connects external standards organizations to OCP. This year, the team had two announcements to make. First, OCP and the American Society of Heating, Refrigerating and Air-Conditioning Engineers (ASHRAE) signed a memorandum of understanding, establishing formal collaboration to eliminate duplicative efforts and create clearer pathways for standards development. In addition, a new liaison role connects OCP directly into ASHRAE’s processes, ensuring thermal management standards align with open infrastructure development. Second, ASTM International launched a new subcommittee on insulating fluids for immersion cooling applications, with a member of the industry liaison team working with that organization as well.

In another example of the importance of collaboration, Kelley highlighted the Universal Quick Disconnect (UQD) specification 2.0, which addresses quick disconnects within the cold plate cooling loop from the chip to the CDU.

“We had many different technologies…There was also a lot of proprietary designs, and this was creating a lot of problems and challenges within the industry,” she said. “Specification 2.0 was one of the first within liquid cooling to come out and really make standards more readily available to address the challenge of interoperability and heterogeneity within the data center.”

Embracing Hybrid Approaches to Liquid Cooling

Looking ahead, Kelley expects hybrid cooling strategies rather than single-solution deployments. While direct-to-chip cooling currently dominates new deployments, Kelley anticipates growing adoption of immersion cooling as workloads demand complete thermal management for entire compute stacks. Cooling networking equipment, memory modules, and storage alongside compute will become increasingly important. Immersion cooling’s ability to capture 100% of generated heat makes it a valuable complement to direct-to-chip solutions, particularly as interest in heat recapture and reuse rises.

The TechArena Take

As AI-enhanced workloads continue driving unprecedented infrastructure demands, the industry’s ability to standardize rapidly will determine whether operators can deploy at scale while maintaining flexibility for future innovation. Kelley Mullick’s leadership in standards development through OCP demonstrates how open collaboration can accelerate adoption while maintaining interoperability. Organizations that engage with standards bodies today and build relationships across the ecosystem will be best positioned to capitalize on the liquid cooling transition reshaping data center design.

For more information about Avayla, visit avayla.net. To learn about OCP’s Cooling Environments working group and standards development, visit the OCP wiki.

Tejas Chopra on Building for Impact

Tejas Chopra builds for scale—and teaches others how. He designed metadata at Datrium, re-architected storage at Box, and now leads ML and storage platforms at Netflix, delivering reliability under pressure. He extends that mission beyond systems, co-founding EnsolAI and GoEB1 to help people put AI to work for growth.

As one of our newest TechArena voices of innovation, Tejas cuts through the hype cycle with a builder’s lens: agent reliability, how to separate research from product, and why quiet, iterative work actually moves the needle. Expect lessons that translate—from billion-dollar infra teams to two-person startups, grounded in real problems, not buzzwords.

Q1: Can you tell us a bit about your journey in tech?

A1: I’ve always been drawn to systems that scale. From building metadata storage engines at Datrium to re-architecting storage infrastructure at Box, and now leading machine learning and storage platforms at Netflix, my focus has been on creating reliability at scale. Over time, that curiosity extended beyond large-scale systems into how technology can drive opportunity—which led me to found EnsolAI and GoEB1, both built to help people leverage AI for meaningful professional growth.

Q2: Looking back at your career path, what’s been the most unexpected turn that shaped who you are today?

A2: Moving from deep systems engineering into entrepreneurship. Building startups taught me a new kind of scalability—not of data or compute, but of people, purpose, and conviction. It forced me to think like both an engineer and a customer—and that ultimately made me a better technologist.

Q3: How do you define “innovation” in today’s rapidly evolving tech landscape? Has your definition changed over the years?

A3: Innovation, for me, used to mean cutting-edge algorithms or new architectures. Today it means solving a real problem in a way that’s repeatable, cost-aware, and human-centric. The best innovations don’t always come from new technology—they come from seeing a familiar problem differently.

Q4: What’s one emerging technology or trend that’s flying under the radar but will have significant impact in the next 2–3 years?

A4: We’re overlooking agent reliability—ensuring AI agents act safely, predictably, and accountably. As multi-agent systems become mainstream, frameworks for trust, observability, and control will determine which companies sustain long-term adoption and which don’t.

Q5: When you’re evaluating new ideas or technologies, what’s your framework for separating genuine innovation from hype?

A5: I ask three questions: Does it remove a pain point or just sound exciting? Can it scale sustainably? And would someone pay for it today? If all three are yes, build it. Otherwise, it’s research—not a product.

Q6: What’s the biggest misconception you encounter about innovation in the tech industry?

A6: That innovation has to look glamorous. In reality, it’s often quiet, iterative, and unglamorous. The real breakthroughs come from people fixing what everyone else tolerates.

Q7: How do you see the relationship between AI advancement and human creativity evolving—competitors or collaborators?

A7: Collaborators. AI amplifies creative range but can’t replace intent or taste; the human role shifts from generating to guiding—shaping AI outputs with context, nuance, and moral clarity.

Q8: If you could solve one major challenge facing the tech industry today, what would it be and why?

A8: Bridging the gap between technical capability and access. So much potential is locked behind systems, jargon, and privilege. If we can make advanced tools—like AI—accessible and affordable, we democratize innovation itself. That belief drives both EnsolAI and GoEB1.

Q9: What’s a book, podcast, or idea that fundamentally changed how you think about technology or business?

A9: Business Sutra by Devdutt Pattanaik. It reframed how I think about leadership and innovation—not as rigid hierarchies of control, but as dynamic relationships among purpose, people, and context. It taught me that how we think determines what we build. That lens helps me design systems and teams that are adaptable, not brittle.

Q10: When you’re facing a particularly complex problem, what’s your go-to method for finding clarity?

A10: I break it down to constraints and first principles. What’s unchangeable? What’s optional? Once you know that, complexity usually reduces to a few core decisions. I write, diagram, and simulate trade-offs until the signal emerges.

Q11: Outside of technology, what hobby or interest gives you the most inspiration for your professional work?

A11: Travel. Seeing how people solve everyday problems with limited resources constantly resets my design lens. It’s humbling and practical—both qualities tech needs more of.

Q12: What excites you most about joining the TechArena community, and what do you hope our audience will take away from your insights?

A12: TechArena brings together people who care about depth over noise. I’m excited to share learnings from building at Netflix scale and from starting lean, self-funded ventures. I hope readers take away that innovation can happen anywhere—from billion-dollar infra teams to two-person startups—if you focus on solving real, painful problems well.

Q13: If you could have dinner with any innovator from history, who would it be and what would you ask them?

A13: Pāṇini or Āryabhaṭa. Pāṇini’s precision in defining Sanskrit grammar mirrors the elegance we seek in programming languages today, while Āryabhaṭa’s mathematical imagination still informs how we model the world. I’d ask how they balanced logic and intuition: how they derived universal truths from patterns in language and nature. That balance is what all great technology ultimately strives for.

Inside Cornelis Networks’ Focused Fight to Redefine AI Performance

“More isn’t always more.”

In the competitive landscape of AI infrastructure, conventional wisdom suggests that more resources create better outcomes. But in my recent Fireside Chat with Lisa Spelman, CEO of Cornelis Networks, she argued exactly the opposite, saying strategic constraints and focused execution enable smaller companies to outmaneuver established giants. With Spelman marking her first year as CEO and Cornelis Networks celebrating its fifth year as a company, our conversation provided an opportunity to reflect on how these principles have shaped the company's approach to competing in AI infrastructure.

The Power of Strategic Constraints

Spelman, who joined Cornelis Networks after years at Intel Corporation, emphasized that constraints sharpen focus in ways abundant resources cannot. At larger organizations, teams may pursue projects that, while technically sound, don’t directly address the most critical customer problems. In contrast, Cornelis maintains discipline around resource allocation, ensuring every engineer and dollar drives toward solving specific customer challenges in network efficiency and performance.

“Constraints open up creativity and they dial in focus,” she said. “The focus that you can have in a small company allows you to not have resources that wander. It’s not that they’re doing bad work or not focused on good things, but they’re not staying hung in on what is the most important thing for your company to solve your customer’s problem.”

Beyond Marketing: The Adoption Challenge

While the company remains “maniacal” in its focus on addressing major challenges in the performance and efficiency of AI and high-performance computing (HPC) applications, Spelman noted that in her own role as CEO of a smaller company, that work can take many forms. Her days blend vision setting and operational leadership with technical evangelism, which is especially key for a small company with a performant technology competing against entrenched solutions.

Spelman noted that many data center professionals claim immunity to marketing influence. Yet awareness, familiarity, and comfort—building trust—remain essential stages in technology adoption. “We welcome the opportunity to compete on our technical merits,” she said. “But you don’t go from 0 to 60 without making sure you cross off some of those steps of familiarity and comfort with your solution.”

Navigating Speed Without Losing Direction

The pace of AI market evolution exacerbates a classic strategic tension for CEOs. Moving too fast risks over-investing in solutions for markets that don’t yet exist, but moving too slow results in irrelevance. In considering this trade-off, Spelman said she errs toward speed, noting that companies can create markets through vision and execution. “Sometimes that’s actually what you need to do,” she said. “It’s not easy, but nothing is easy. It’s not meant to be.”

Cornelis addresses this challenge through intensive customer engagement, using its ecosystem relationships to validate and refine product roadmaps continuously. And the company’s smaller size provides decision-making advantages over larger competitors. Strategic discussions that might require months at enterprise organizations conclude in 30 minutes at Cornelis. This agility allows rapid incorporation of customer feedback without navigating competing priorities and shared resource constraints typical of large companies.

Building an AI-Native Organization

The cultural transformation accompanying this strategic approach extends beyond external positioning. Over the past year, Cornelis evolved from its high-performance computing roots into what Spelman describes as an AI-native organization. This shift encompasses customer engagement models, workload prioritization, and fundamental integration of AI tools throughout operations. The founding team’s early adoption of AI accelerants created infrastructure that enables the company to match market pace.

Spelman reflected on what makes this environment compelling for team members. In a smaller organization, every person’s contribution directly impacts outcomes. “There’s just something about being at a place where every single day, every single person here knows that their work matters,” she said. “I believe that we have a chance to improve the way AI is delivered, used, and consumed. We have a chance to ease the human condition.”

Each team member serves as the expert in their domain, creating mutual accountability between leadership and individual contributors. This structure connects daily work to larger missions around improving AI efficiency and enabling discovery.

The TechArena Take

Cornelis Networks demonstrates how strategic constraints combined with technical depth can create competitive advantages against larger, established competitors. The company’s focused approach, rapid decision-making, and AI-native culture illustrate that market success in infrastructure depends less on absolute resource levels than on alignment, agility, and deep customer understanding. As AI infrastructure demands continue evolving, organizations that maintain sharp focus while adapting quickly to customer needs will be best positioned to compete regardless of their size.

For more information about Cornelis Networks’ approach to AI networking infrastructure, visit cornelisnetworks.com or follow Cornelis Networks on LinkedIn. The company will be exhibiting at SC25 in November and maintains an active presence at industry events focused on AI infrastructure.


RackRenew & Circularity: Remanufacture, Recertify, Redeploy

From #OCPSummit25, this Data Insights episode unpacks how RackRenew remanufactures OCP-compliant racks, servers, networking, power, and storage—turning hyperscaler discards into ready-to-deploy capacity.

Open-Source AI and the Road to Broad Deployment

Open-source AI has quickly evolved from lab experiments to today’s role as an infrastructure backbone of modern enterprise deployments. Taking advantage of this powerful resource can accelerate IT agility, but as with many open-source alternatives, implementing with eyes wide open is critical to deployment success.

I recall working with a customer who prototyped an LLM-based retrieval system using an open model. The prototype delivered great results in testing, but once pushed to production, it fell apart. The result? The customer experienced inconsistent latency, scaling failures, memory pressure, GPU underutilization, and patchy support. While open AI stacks bring the advantages of transparency, adaptability, and community velocity, things can go off the rails without the right platform foundation.

The Path to Broad Deployment

We’ve designed Xeon CPUs with open-source platform support in mind. In fact, a core strength of Xeon CPUs is their compatibility with broad open-source toolchains including TensorFlow, PyTorch, and ONNX, based on over a decade of investment in platform optimization. We have extended that with support for quantized inference, CPU acceleration libraries, and solution portability, helping to reduce friction when deploying open models across hybrid environments.

Of course, that is just a start to what’s needed to ensure an agile platform foundation. Open-source tools often lag in orchestration, monitoring and service management support. Intel and ecosystem partners have invested in tuning orchestration layers and performance libraries like OpenVINO and oneAPI to bridge that gap.

Many leading cloud providers are integrating open-source LLMs natively into their services, accelerating adoption; examples that have gained traction include infima, Mistral, and Llama. In the research community, frameworks like those from Hugging Face mature weekly, lowering barriers to enterprise adoption. And underlying CPU optimizations, including support for BF16 and INT8, drive open-model performance higher, making these models suitable for many enterprise AI inference targets.
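INT8 support pays off through quantization: mapping floating-point weights to 8-bit integers plus a scale factor. The following is a minimal, framework-free Python sketch of symmetric per-tensor quantization, the textbook form of the technique. It is not how OpenVINO, oneAPI, or any specific runtime implements it, and the function names are hypothetical.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: floats -> ints in [-127, 127] plus one scale."""
    max_abs = max(abs(v) for v in values)
    # One scale for the whole tensor; guard against an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize_int8(q, scale):
    """Approximate reconstruction of the original floats."""
    return [x * scale for x in q]


weights = [0.5, -1.27, 0.01, 1.0]
q, scale = quantize_int8(weights)
approx = dequantize_int8(q, scale)

# Rounding error per value is at most half a quantization step (scale / 2).
assert all(abs(a - w) <= scale / 2 + 1e-12 for a, w in zip(approx, weights))
print(q, scale)
```

The trade-off is a small, bounded loss of precision in exchange for weights that are a quarter the size of FP32 and can use integer arithmetic units.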

An Open-Source Enterprise Future

To get started with open-source AI, become familiar with the framework alternatives and tools available to help you implement it within your environment. Plan the right infrastructure for your entire AI pipeline, and consider Xeon 6 processors as your CPU foundation, whether for the head node of an accelerated platform or for a CPU-driven workload that does not require accelerated processing.

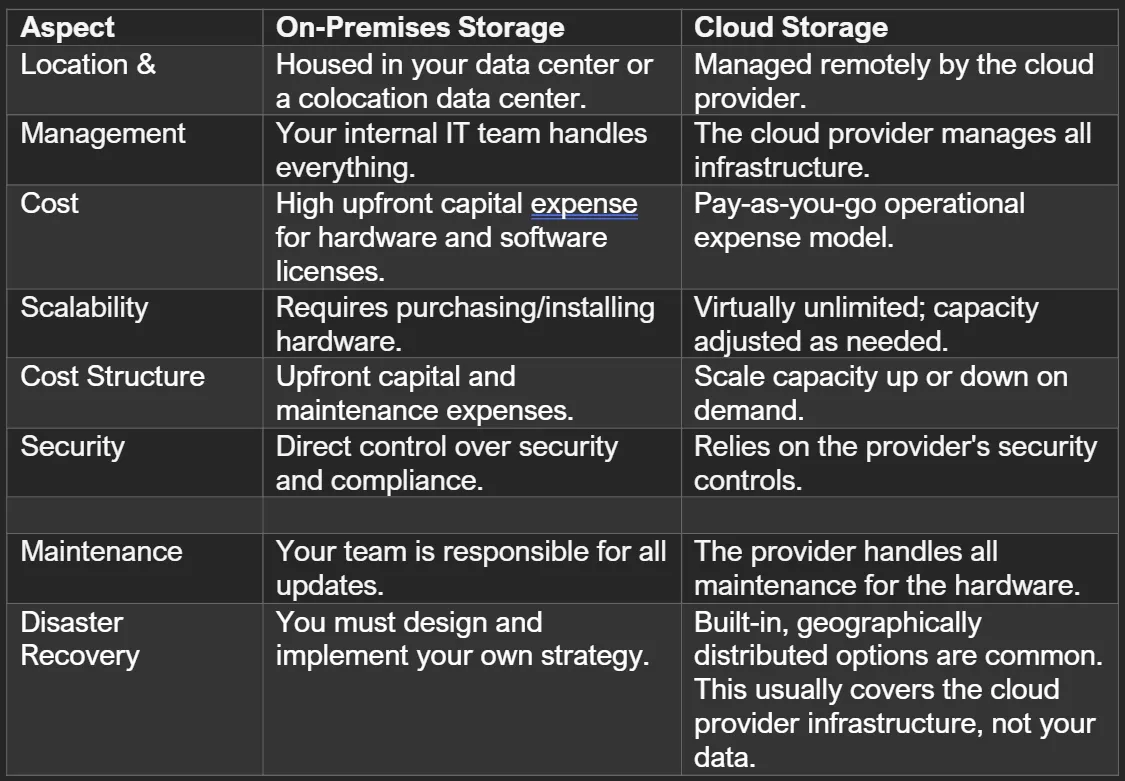

A Guide to On-Premises and First-Party Cloud-Native Storage

A modern storage solution is more than just a place to keep files; it’s a strategic combination of hardware and software designed to manage, protect, and access your most critical asset.

These days, this strategic choice often comes down to two main paths: traditional on-premises storage arrays and first-party, cloud-native services. In this post, we'll break down what each option means, compare their differences, and help you decide which is the right fit for your business.

What is a storage solution?

A storage solution refers to the combination of hardware and software components that are designed to store, manage, protect, and retrieve digital data. At its core, a storage solution includes the physical devices, such as hard disk drives (HDDs) or solid-state drives (SSDs), that hold your data.

More complex disk arrays aggregate multiple drives to provide higher capacity, improved reliability, and better performance. These disk arrays often support features such as redundancy (RAID), hot-swappable drives, and scalable architectures.
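Parity-based RAID levels such as RAID 5 provide redundancy by storing the XOR of the data blocks, so any single lost block can be rebuilt from the rest. Here is a minimal Python sketch of that arithmetic, assuming equal-sized blocks and a single dedicated parity block. Real RAID 5 stripes data and rotates parity across drives, which this sketch omits, and the helper names are hypothetical.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR equal-length byte blocks together, position by position."""
    if len({len(b) for b in blocks}) != 1:
        raise ValueError("all blocks must be the same length")
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def compute_parity(data_blocks):
    """Parity block written to an extra drive."""
    return xor_blocks(data_blocks)


def rebuild_missing(surviving_blocks, parity):
    """Recover one lost data block: XOR the parity with every surviving block."""
    return xor_blocks(surviving_blocks + [parity])


drives = [b"DATA-A01", b"DATA-B02", b"DATA-C03"]
parity = compute_parity(drives)

# Simulate losing drive 1, then rebuild it from the other drives plus parity.
rebuilt = rebuild_missing([drives[0], drives[2]], parity)
print(rebuilt)  # b'DATA-B02'
```

Because XOR is its own inverse, the same operation both creates parity and reconstructs data, which is why a single parity drive tolerates exactly one drive failure.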

Beyond the hardware, storage solutions also encompass the software that controls and optimizes the storage environment.

- Provisioning and monitoring: Allocating storage space and monitoring performance.

- Data optimization: Using techniques like deduplication and compression to save space.

- Automated tiering: Moving frequently used data to faster storage for quick access.

- Security and recovery: Encrypting data to prevent unauthorized access and creating backups for disaster recovery.
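Deduplication and compression can be illustrated with a toy block store in Python. This is a sketch under stated assumptions: fixed-size chunking, SHA-256 fingerprints, and zlib compression are illustrative choices, and the `DedupStore` class is hypothetical rather than any vendor's design.

```python
import hashlib
import zlib


class DedupStore:
    """Toy block store: identical chunks are stored once, each compressed."""

    def __init__(self, chunk_size=4096):
        self.chunk_size = chunk_size
        self.chunks = {}  # fingerprint -> compressed chunk bytes
        self.files = {}   # file name -> ordered list of fingerprints

    def write(self, name, data):
        refs = []
        for i in range(0, len(data), self.chunk_size):
            chunk = data[i:i + self.chunk_size]
            fp = hashlib.sha256(chunk).hexdigest()
            if fp not in self.chunks:  # deduplication: store only unseen chunks
                self.chunks[fp] = zlib.compress(chunk)  # compression
            refs.append(fp)
        self.files[name] = refs

    def read(self, name):
        return b"".join(zlib.decompress(self.chunks[fp]) for fp in self.files[name])


# Tiny chunks only to make deduplication visible; at this size zlib's
# header overhead means compression would not actually save space.
store = DedupStore(chunk_size=4)
store.write("a.txt", b"ABCDABCDABCD")
store.write("b.txt", b"ABCDWXYZ")
print(len(store.chunks))  # 2 unique chunks back four logical chunk references... see note
```

The two files reference five logical chunks in total, but only two unique chunks (`ABCD` and `WXYZ`) are stored; every other reference points at an existing fingerprint.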

Understanding On-Premises Storage Arrays

The concept of data storage goes all the way back to 1837 and Charles Babbage’s Analytical Engine. Here’s a timeline:

- 1950s: Mainframe storage

- Late 1980s: NAS (Network Attached Storage)

- 1990s: SANs (Storage Area Networks)

- Mid 1990s: Object storage

Storage management software handles provisioning, monitoring, and optimization tasks such as data deduplication and compression. Modern storage arrays add automated tiering, encryption, and integration with cloud platforms for hybrid deployments.
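Automated tiering policies vary by vendor, but the core idea of promoting frequently accessed data to faster media can be sketched in a few lines of Python. The access-count ranking and the hypothetical `plan_tiering` helper are assumptions for illustration; production arrays may weigh recency and other factors as well.

```python
from collections import Counter


def plan_tiering(access_log, fast_capacity):
    """Place the most frequently accessed objects on the fast tier.

    access_log: iterable of object IDs, one entry per access.
    fast_capacity: number of objects that fit on the fast (e.g., SSD) tier.
    Objects absent from the log are assumed to stay on the capacity tier.
    Returns (fast_tier, capacity_tier) as sets of object IDs.
    """
    ranked = [obj for obj, _ in Counter(access_log).most_common()]
    return set(ranked[:fast_capacity]), set(ranked[fast_capacity:])


log = ["db.idx", "db.idx", "report.pdf", "db.idx", "video.mp4", "report.pdf"]
fast, slow = plan_tiering(log, fast_capacity=1)
print(sorted(fast), sorted(slow))  # ['db.idx'] ['report.pdf', 'video.mp4']
```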

Modern storage arrays come in various forms: NAS for file sharing, SANs for block-level storage, and object storage for unstructured data. While these systems provide full control over hardware and software, they also require your IT team to handle maintenance, updates, and scaling.

What is first-party, cloud-native storage?

First-party, cloud-native storage is a service developed, managed, and offered directly by a major cloud provider, such as Amazon Web Services (AWS), Microsoft Azure, or Google Cloud Platform (GCP). Instead of buying and managing hardware, the cloud provider handles everything.

Some traditional storage vendors have partnered with hyperscalers to offer their technology as first-party services. Examples include:

- NetApp: Azure NetApp Files, Amazon FSx for NetApp ONTAP, Google Cloud NetApp Volumes

- Pure Storage: Pure Storage Cloud Azure Native

This gives you the best of both worlds: trusted storage technology delivered with the convenience of a native cloud service.

On-Premises vs. Cloud Storage: A Head-to-Head Comparison

How do these approaches compare? The choice depends on your needs for cost, control, and scalability.

What About Other Ways to Connect Arrays to the Cloud?

Not all storage vendors offer first-party, cloud-native services. Companies like Dell, HPE, Hitachi, and Nutanix provide cloud connectivity through different models. These are often called "cloud-integrated" or "third-party" solutions.

Typically, these vendors allow you to run their storage software on public cloud infrastructure. This means you can extend your on-premises environment to the cloud for things like backup or disaster recovery. While this approach offers flexibility, it usually means the storage service is managed by you or the vendor, not by the cloud provider itself. This can add complexity to management and may not offer the same deep integration as a true first-party service.

Is First-Party Cloud-Native Storage Better?

Deciding between a first-party, cloud-native service and extending an existing array to the cloud depends on your priorities.

First-party, cloud-native storage is often the better choice if you prioritize:

- Simplicity and Scalability: These services are designed for effortless scaling and deep integration, reducing the burden on your IT team.

- Reduced Operational Overhead: With automated updates and management handled by the provider, you can focus on innovation.

- Built-in Resilience: Native services often include robust, geographically distributed backup and recovery options.

Extending an on-premises array might be a better fit if you need to:

- Leverage Existing Investments: Continue using the hardware and management tools your team already knows.

- Meet Specialized Requirements: Support legacy applications or unique workloads that are not a good fit for the cloud.

- Maintain Granular Control: Keep direct oversight of your storage environment for specific compliance or security reasons.

Building Your Future-Proof Storage Strategy

The way you store and manage data is fundamental to your business success. While on-premises arrays offer control, first-party, cloud-native services from providers like AWS, Azure, and Google Cloud deliver scalability and integration.

Both approaches offer distinct advantages, and understanding these differences is key to building a resilient and future-proof storage strategy. For ongoing insights and practical tips on cloud storage and IT infrastructure, be sure to follow my posts on LinkedIn.

Data Insights at OCP: DataraAI on Edge Robotics

DataraAI CTO and co-founder Durgesh Srivastava unpacks a data-loop approach that powers reliable edge inference, captures anomalies, and encodes technician know-how so robots weld, inspect, and recover like seasoned operators.

Cooling for the Gigawatt Era: JetCool’s Roadmap for AI Data Centers

As AI data centers push into the gigawatt era, cooling is moving to center stage—not just to keep systems within spec, but to enable the next generation of compute.

At the recent AI Infra Summit in Santa Clara, Jeniece Wnorowski and I sat down with Scott Twomey, Senior Director of Global Business Development at Flex, for a Data Insights conversation on how the company is scaling advanced cooling technologies for the AI era.

Flex is best known as a global manufacturing powerhouse, operating more than 100 locations across 30 countries. With 17 U.S. facilities spanning seven million square feet and an additional nine million in Mexico, Flex also has one of the largest advanced manufacturing footprints in North America. In 2024, it acquired JETCOOL Technologies Inc., a leader in advanced thermal management solutions. Together, the teams are integrating JetCool’s patented microconvective cooling technology into Flex’s global manufacturing and data center infrastructure. Combined with Flex’s comprehensive compute and power solutions, including vertical power delivery, they deliver a vertically integrated approach to power, cooling, and rack infrastructure—streamlining deployment for hyperscale AI systems.

As part of Flex, JetCool specializes in single-phase, direct-to-chip cooling technology that uses an array of microjets to target processor hot spots directly, delivering significantly lower thermal resistance than conventional microchannel cooling. This technology is now integrated into Flex’s server reference platforms, improving heat transfer, minimizing temperature gradients, and stabilizing CPU temperatures across complex silicon architectures and IT stacks. The outcome is faster time-to-market, higher-performing chipsets, and better thermal management for the industry’s most demanding workloads.

Twomey shared how Flex is helping scale JetCool’s production globally, leveraging its advanced manufacturing footprint and expertise to bring liquid cooling solutions to market faster. Together, they’re expanding their cooling portfolio and building a unified thermal roadmap—from SmartPlate cold plates and embedded semiconductor cooling to vertically integrated liquid-cooled racks—to meet both current and future AI cooling demands.

An End-to-End Liquid Cooling Portfolio

As JetCool builds out its liquid cooling ecosystem, the company is evolving into a comprehensive, end-to-end liquid cooling provider that streamlines deployments through a single partner. Backed by Flex’s global manufacturing infrastructure, JetCool is expanding its portfolio to support deployments from the die level up to rack, row, and facility systems. This broader offering flattens supply chains, reduces vendor integration friction, and enables easier customization of modular liquid cooling solutions. The current product line includes cold plates, coolant distribution units, manifolds, and quick disconnects, with more in development for 2026. With Flex’s support, JetCool is positioned to deliver scalable, integrated cooling solutions that meet the evolving demands of AI infrastructure.

Designing for the Gigawatt Era

Rising power density and thermal loads are now a structural trend, not a spike. The response must be continuous engineering—greater thermal capacity, faster design cycles, tightly integrated solutions, and architectures that scale generation over generation. Cooling can’t be an afterthought; it must advance in lockstep with processor TDP, with built-in headroom for future Superchips.

“One of the many reasons that we looked at JetCool from an acquisition standpoint is that they did have a portfolio of solutions to address the here and the now as well as the future,” Twomey said.

SmartPlate, SmartLid, and SmartSilicon

JetCool’s strategy spans three tiers to match rising processor thermal design power (TDP).

SmartPlate is a fully sealed liquid cold plate designed for specific processor families, cooling over 4,000 watts per socket.

SmartLid extends headroom by removing both thermal interface layers to directly route fluid to the processor lid, cooling over 5,000 watts per socket, preparing for the next wave of ultra-dense accelerators.

SmartSilicon embeds JetCool’s microjet array directly into the silicon substrate—an approach that requires tight collaboration with end customers, chipmakers, and foundry partners.

Together, these solutions give customers a clear path from current high-TDP processors to tomorrow’s even denser AI hardware.

TechArena Take

The gigawatt era is redefining what’s possible in AI data center cooling. As GPU power envelopes climb toward 5,000 watts and beyond, incremental improvements won’t cut it. Cooling is becoming a strategic enabler, not a support function.

JetCool and Flex’s roadmap—from SmartPlates to SmartSilicon—reflects the kind of multi-layered innovation and manufacturing scale the industry will need. By combining Flex’s global manufacturing muscle with integrated solution design, JetCool is positioning itself as a key player in scaling high-density, AI-optimized data centers.

*Microconvective cooling, SmartPlate and SmartLid are JetCool trademarks.

From Laptops to Data Center Racks: Ventiva’s Thermal Play at OCP

At the recent OCP Global Summit in San Jose, I sat down with Carl Schlachte, CEO of Ventiva, to talk about something that sounds counterintuitive at first blush: what five years of grinding on laptop thermal design can teach hyperscale and enterprise data centers. The short answer, in Schlachte’s telling, is “a lot,” and soon.

Ventiva has been heads-down in one of the harshest thermal environments outside a rack: thin, sealed consumer and commercial laptops where millimeters matter, acoustics are unforgiving, and reliability thresholds are brutal. Schlachte says that discipline—solving for tight envelopes, variable duty cycles, and field reliability—translated cleanly to servers and accelerators once the right people took notice. That notice didn’t come through a cold pitch; it came laterally. Some of the same firms that collaborate with Ventiva on next-gen laptops also have server and data-center teams.

That “reference sold” path—laptop counterparts vouching Ventiva into server and facility groups—matters for two reasons. First, it shortens the confidence cycle when a new thermal approach shows up in a risk-averse environment. Second, it implies the solution isn’t a bespoke one-off for a single chassis; it’s a design pattern hardened by millions of laptop hours that can be application-engineered into many form factors.

Schlachte also hinted at timing. Ventiva is preparing announcements around CES—framed as “groundbreaking” systems that, in his words, “change the nature of what a laptop is.” While details are under wraps, the more interesting part for data-center buyers is what he claims won’t be necessary to port the tech into servers: net-new R&D. The building blocks are already validated for lifetime and scale in a tougher mechanical envelope. What remains is application engineering—integrating into the physical realities of 1U/2U servers, dense accelerators, and varied sleds, and aligning with rack-level airflow and power designs.

Why would laptop learnings carry weight in a 600 kW row? Constraints rhyme. In both spaces, thermal budgets are tight and rising, hotspots shift under dynamic workloads, and acoustics or vibration can’t become a side effect. Reliability is non-negotiable. In laptops, the penalty for errors shows up as throttling or returns; in AI racks, it’s stranded GPUs, erratic performance, and higher TCO. Techniques that squeeze higher heat flux out of compact geometries—whether through novel heat spreading, phase-change management, or smarter flow control—map well to constrained server envelopes and to edge locations where facility retrofits aren’t feasible.

The OCP Summit context matters here. Over the past 18 months, the industry has been pivoting from server-first to rack- and multi-rack-first thinking. As power densities spike and liquid cooling proliferates, the battleground has moved to materials, manifolds, safety regimes, and serviceability in brownfield realities. Ventiva’s message: there’s still real gain to be had inside the box—at the component and sled levels, especially by reusing tactics proven in tight-tolerance laptop designs. That doesn’t replace facility-level innovation; it complements it by reducing the thermal tax inside each box.

Schlachte describes the reception at OCP as “amazingly good,” and that tracks with what we heard on the show floor: operators want both macro and micro levers. On Monday, a team models coolant loops; on Tuesday, it fights a stubborn NIC hotspot and the fan curves needed to keep a CPU in bounds while a GPU surges. Even a few percent more stable performance per server, or holding the same acoustic or power profile at higher load, adds up fast at scale.

There’s also a deployment story embedded here. If the core technology ships in laptops first, the supply chain, QA, and lifetime data will ramp quickly. For data-center adopters, that can de-risk qualification, shorten pilot cycles, and improve spares forecasting. The open question is the integration path: which OEMs and ODMs pick this up, and how fast do they tune sled designs to exploit it? Schlachte frames Ventiva’s next step as heavy application engineering—helping partners adapt form factors and operational playbooks without forcing a full mechanical redesign.

For operators, the practical questions are straightforward. What is the delta on junction temperatures at given loads? How does the solution behave under bursty AI inference vs. sustained training? What’s the impact on acoustics, airflow directionality, and contamination risk? And crucially, how does it coexist with emerging liquid strategies—direct-to-chip, cold plates, or hybrid air/liquid racks? Schlachte suggests it’s not either/or; it’s making the box smarter so that rack-level choices deliver more consistent returns.

TechArena Take

We like the vector here: translate hard-won laptop thermal tricks into compact, serviceable gains at the server and edge. The go-to-market signal—being ushered into data-center teams by adjacent laptop engineers—cuts through typical skepticism and hints at broad applicability. That said, the data-center bar is high. To win trust, Ventiva should publish clear, apples-to-apples results: sustained workload deltas, hotspot mitigation under mixed CPU/GPU loads, acoustic and power impacts, and field maintainability. Even better, show coexistence with standards-based server designs and liquid-cooling topologies in the wild.

Net: the demand is here. Land a few lighthouse deployments with OEM/ODM partners, document coexistence with standards-based components, and ship pragmatic integration guides. Do that, and Ventiva’s differentiation becomes a de-risked choice for operators who need every watt and every degree back in the AI era.

Cornelis Networks & AMD on Scaling AI Without the Chaos

From CPU orchestration to scaling efficiency in networks, leaders reveal how to assess your use case, leverage existing infrastructure, and productize AI instead of just experimenting.

GTC DC: NVIDIA’s Big Bets on AI Factories, DOE, and 6G

NVIDIA brought its GTC event to Washington, D.C. for a reason.

Spanning three days at the Walter E. Washington Convention Center, the event targeted policymakers, integrators, and program leaders deciding where national-scale AI capacity will live and how it will be governed.

The keynote message, delivered today by Jensen Huang, landed clearly: treat AI as an industrial system, not a server purchase. In practice, that means Department of Energy (DOE) supercomputers, quantum-classical coupling, AI-infused radio access networks, autonomy at fleet scale, and a drumbeat on U.S. manufacturing.

The headline announcement centered on the DOE. Argonne National Laboratory will stand up two new AI systems—Solstice at roughly 100,000 Blackwell GPUs and Equinox at about 10,000—both targeted for the first half of 2026 and tied together with NVIDIA’s networking stack. Oracle is the prime hyperscale partner on the larger system. The subtext is supply and cadence: NVIDIA guided to an eye-popping bookings run rate, reinforcing that Blackwell-class capacity will be allocated, not casually procured. For public-sector programs and regulated industries, planning windows now start with guaranteed delivery of GPUs, interconnect, racks, and liquid cooling in the same contracting cycle.

A 6G-Ready, AI-infused Radio Stack

The radio access network (RAN) is the linchpin of AI at the edge, and NVIDIA has been pressing this front for roughly three years. The Nokia partnership doubles down on an AI-RAN path that moves inference and optimization into the radio stack itself for latency, efficiency, and fleet-level control.

Beyond speeds and feeds, this is about industrial policy: rebuilding leadership in critical infrastructure through composability across RAN silicon, GPU acceleration, and software. For carriers and federal networks, the takeaway is that AI will live at the edge as much as in the region, and procurement will increasingly reward end-to-end blueprints over stitched one-offs.

The Nokia play makes the edge an explicit deployment target, carrying the same AI toolchain out to radios and cell sites. If you want performant AI at the edge, you have to start with the RAN.

Hybrid Quantum-Classical Moves from Slideware to Workflow

Quantum computing moved from slideware to an integration story. NVQLink is NVIDIA’s architecture to couple GPUs with early-stage quantum processors so error correction, classical pre/post-processing, and AI-driven orchestration can sit close to QPUs. Dozens of partners—from lab programs to vendors like IonQ and Rigetti—give the idea immediate surface area. The pragmatic read for near-term users is straightforward: hybrid quantum-classical workflows can accelerate today, long before fault-tolerant machines arrive, provided the links are tight and the toolchains are familiar.

Robotaxis at Scale Require Tight Retrain Loops

Autonomy returned to the roadmap with scale. NVIDIA and Uber set a target to field an autonomous fleet on the order of 100,000 vehicles starting in 2027, framed as an AI data-factory problem as much as a sensor stack. On the vehicle side, NVIDIA’s DRIVE platform continues to broaden its bench with Stellantis, Lucid, and Mercedes-Benz in the fold. The message is consistency: ingest, simulate, retrain, and redeploy in tight loops—exactly the “factory” model NVIDIA wants buyers to internalize.

Onshore Manufacturing: From 2020 Imperative to 2030 Priority

Since 2020, onshore manufacturing has been table stakes—not a new pivot. What’s changing now is its weight in RFP scoring across this decade: locality, sovereignty, and supply assurance sit alongside performance-per-watt. Jensen Huang’s emphasis on U.S. milestones for Blackwell and new assembly footprints (Arizona, Houston) signals that “where” and “how” you build will remain a first-order decision throughout the decade.

Google’s Monetization Turn on AI

Rather than just supplying connective tissue, Google is clearly moving to monetize its AI stack. Blackwell-based instances on Google Cloud pair with an on-prem path via Google Distributed Cloud running Gemini on Blackwell systems. The pitch is commercial, not merely architectural: one toolchain, multiple SKUs, and consumption paths that let buyers pay for capability where it runs best.

This isn’t either-or. It’s yes-and: burst to cloud, anchor sensitive work on-prem, and, increasingly, extend the same models and MLOps to the edge.

Agentic EDA and GPU-Powered Simulation Compress Schedules

Synopsys added a concrete proof point that “AI + accelerated compute” collapses engineering schedules. NVIDIA is piloting Synopsys AgentEngineer for AI-enabled formal verification integrated with the NeMo Agent Toolkit and Nemotron open models, an early signal that agentic workflows are entering signoff. On the simulation side, Synopsys highlighted dramatic gains: it claims much faster computational fluid dynamics runs in Ansys Fluent using GPU acceleration and AI initialization, and speedups of up to 15× for QuantumATK atomistic simulations on CUDA-X and Blackwell. A defense electronics customer cited jobs dropping from weeks to hours. Those numbers, even if workload-dependent, are exactly what program managers want to hear when timelines and budgets are under pressure.

Deployment is now a three-part system: cloud for elasticity, on-prem for control, and edge for immediacy. The Nokia RAN work is the connective tissue that makes the edge leg viable at scale.

TechArena Take

Call it what it is—an operating plan for national-scale AI. NVIDIA framed AI as an industrial system across labs, networks, vehicles, and factories, and positioned itself to supply the muscle, the middleware, and the maps.

DOE wins plus Nokia and Uber partnerships reinforce one theme: assemble end-to-end AI factories and simplify the buy. Synopsys’ gains suggest the next bottleneck moves to orchestration, data pipelines, and power as verification agents and GPU-accelerated physics compress schedules.

This was an assertion of scale at the very moment scale is contested. The partnerships and roadmaps are real, but so are the political and community headwinds around AI factories. If GTC DC shifts anything, it’s the center of gravity of the debate: from “can we build it?” to “where, how, and on whose terms?”

Cornelis and OCP Examine Why AI Infrastructure Must Evolve

From the OCP Global Summit, hear why 50% GPU utilization is a “civilization-level” problem, and why open standards are key to unlocking underutilized compute capacity.

WEKA: Make Tokens Flow with Memory-Like Storage

As enterprises move artificial intelligence (AI)-based solutions further into production, inference speed is becoming a key factor in whether deployments succeed or fail. Real business value, and real infrastructure challenges, lie in how quickly models can generate responses for end users.

In a recent TechArena Data Insights episode, I spoke with Val Bercovici, chief AI officer at WEKA, and Scott Shadley, director of thought leadership at Solidigm, to explore how inference workloads are exposing infrastructure bottlenecks that threaten AI economics. Their conversation revealed why a metric called time to first token has become essential for measuring inference performance, and how storage architecture designed for this phase of AI can transform both productivity and profitability.

The New Currency of AI Performance

In a relatively short time, one metric has emerged to measure AI responsiveness: time to first token. As Val and Scott explained, it measures how long a model takes to start responding to a given prompt, from the moment the prompt is submitted until the first token of output comes back. It has emerged as a key metric because it directly translates to business value. “Time to first token literally translates to revenue, OPEX, and gross margin for the inference providers,” Val said.

As a concrete example, Val cited real-time voice translation, where instantaneous responses are critical to natural conversation. “Who wants to wait an awkward, pregnant pause of 30, 40 seconds for a translation?” Val asked. “We want that to be real-time and instantaneous, and time to first token is a key metric for that kind of use case.”

The metric matters because it reflects deeper infrastructure realities for AI inference workloads. Behind the response to every prompt lies a complex process that can be considered in two phases. In the pre-fill phase, the prompt is converted into tokens, which are then processed to build the key-value (KV) cache, essentially the working memory of the large language model. Then, in the decode phase, the model generates the output users actually see, one token at a time. Graphics processing units (GPUs) are currently being asked to do both at once, which is, in Val’s words, “a very expensive kind of context switching,” and the decode phase makes extreme demands on memory as well.
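To make the metric concrete, here is a minimal Python sketch that measures time to first token (TTFT) against a simulated model. The `fake_model_stream` generator is hypothetical and is not WEKA’s or any vendor’s API. As a deliberate simplification, it models pre-fill as a delay proportional to prompt length (using a naive whitespace split as the "tokenizer") that must finish before the first token appears, and decode as a fixed delay for each subsequent token. An injectable fake clock keeps the example deterministic instead of actually sleeping.

```python
import time
from typing import Callable, Iterable, Iterator, Optional, Tuple


def fake_model_stream(
    prompt: str,
    prefill_s_per_prompt_token: float,
    decode_s_per_token: float,
    output_tokens: int,
    sleep: Callable[[float], None] = time.sleep,
) -> Iterator[str]:
    """Hypothetical stand-in for a streaming inference endpoint (not a real API).

    Pre-fill: the whole prompt is processed (building the KV cache) before any
    output exists, modeled as one delay proportional to prompt length.
    Decode: each later token costs one more step, modeled as a fixed delay.
    """
    prompt_tokens = len(prompt.split())  # assumption: naive whitespace tokenizer
    sleep(prefill_s_per_prompt_token * prompt_tokens)  # pre-fill ends with token 0
    for i in range(output_tokens):
        if i > 0:
            sleep(decode_s_per_token)  # one decode step per subsequent token
        yield f"token{i}"


def measure_latency(
    stream: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> Tuple[float, float, int]:
    """Return (time_to_first_token, total_time, token_count) for one response."""
    start = clock()  # generator body has not run yet, so pre-fill is timed below
    ttft: Optional[float] = None
    count = 0
    for _ in stream:
        if ttft is None:
            ttft = clock() - start  # the first token has just arrived
        count += 1
    total = clock() - start
    if ttft is None:
        raise ValueError("stream produced no tokens")
    return ttft, total, count


def time_per_output_token(ttft: float, total: float, tokens: int) -> float:
    """Average decode latency per token after the first one."""
    return (total - ttft) / (tokens - 1) if tokens > 1 else 0.0


class FakeClock:
    """Deterministic clock so the example advances time without sleeping."""

    def __init__(self) -> None:
        self.t = 0.0

    def now(self) -> float:
        return self.t

    def sleep(self, seconds: float) -> None:
        self.t += seconds


if __name__ == "__main__":
    clock = FakeClock()
    # Made-up parameters: 0.1 s pre-fill per prompt token, 0.02 s per decode step.
    stream = fake_model_stream("translate this sentence please", 0.1, 0.02, 10, sleep=clock.sleep)
    ttft, total, n = measure_latency(stream, clock=clock.now)
    tpot = time_per_output_token(ttft, total, n)
    print(f"TTFT={ttft:.2f}s total={total:.2f}s per-token={tpot:.3f}s")
```

With these made-up parameters, the four-word prompt yields a TTFT of 0.40 s and about 0.02 s per subsequent token. Because pre-fill is modeled as proportional to prompt length, doubling the prompt doubles TTFT in this sketch while leaving per-token decode time unchanged, which is why the two phases are worth measuring separately.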

Rethinking Storage Architecture for AI Workloads

The conversation revealed how AI workloads differ fundamentally from traditional computing. Modern GPUs contain over 17,000 cores, compared with roughly a hundred in a CPU, creating entirely different performance requirements. This architectural shift demands a fresh approach to storage design, one that treats solid-state drives (SSDs) not merely as storage devices, but as memory extensions.

WEKA’s NeuralMesh Axon technology demonstrates this evolution. The solution embeds storage intelligence close to the GPU and creates software-defined memory from NVMe devices, allowing inference servers to see NVMe storage as memory, delivering memory-level performance from SSD hardware. This approach addresses one of inference computing’s most significant challenges: providing sufficient memory bandwidth to feed GPU cores without incurring prohibitive costs.

The Assembly Line Problem

One of the discussion’s most striking revelations centered on what Val termed the “assembly line” problem. While data centers optimized for AI are often described as “AI factories,” AI inference today operates more like a job shop than an assembly line. Data movement remains inefficient, causing expensive re-pre-filling operations and consuming kilowatts each time.

This inefficiency manifests in real-world constraints that AI users encounter daily. The rate limits imposed by AI service providers reflect the genuine economics of token generation. Coding agents and research tools that consume 100 to 10,000 times more tokens than simple chat sessions can’t be served profitably at current infrastructure costs, forcing providers to limit access even to customers willing to pay premium prices.

The Path Forward for Enterprises

Scott and Val offered practical guidance for IT leaders navigating the transition from proof-of-concept projects to production AI deployments. Scott stressed the importance of aligning hardware and software planning, noting that AI infrastructure demands closer collaboration between traditionally siloed teams. Val encouraged leaders to approach AI infrastructure with fresh perspectives, setting aside assumptions from previous technology generations.

The TechArena Take

As AI moves from experimental projects to production workloads generating measurable business value, infrastructure choices increasingly determine competitive advantage. Organizations that optimize storage architecture for token economics position themselves to scale AI profitably, while those applying traditional storage approaches risk creating bottlenecks that limit innovation. The enterprises that act decisively today in implementing high-performance storage architectures designed specifically for AI workloads will find themselves better positioned to capitalize on AI’s transformative potential.

For more information on WEKA’s AI infrastructure solutions, visit WEKA.io. Learn about Solidigm’s AI-optimized storage innovations at solidigm.com/ai.

The Network Revolution: Cornelis Tackles AI’s Efficiency Crisis

As artificial intelligence (AI) systems grow increasingly complex and demanding, a critical bottleneck has emerged that threatens to limit the transformative potential of enterprise AI: network efficiency. While organizations pour billions into graphics processing units (GPUs) for compute power, a surprising percentage of that computational capacity sits idle, waiting for data to move through inefficient network architectures.

Lisa Spelman, CEO of Cornelis Networks, and I recently discussed her perspective on this challenge. During our conversation, she revealed how Cornelis is addressing what she calls “the efficiency problem plaguing AI and HPC mega systems” through network design that promises to unlock significantly more value from existing infrastructure investments.

The Hidden Cost of Network Inefficiency

The scale of the efficiency problem becomes clear when examining GPU utilization patterns. Research reveals that GPUs spend 15% to 30% of their time in non-math mode, purely handling communications rather than performing the calculations that drive AI breakthroughs. This represents billions of dollars in computational capacity that organizations have purchased but are not fully using.
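To see why a 15% to 30% communication share turns into large sums, the arithmetic can be sketched in a few lines of Python. The fleet size, runtime, and $2.00 GPU-hour rate below are hypothetical inputs chosen only for illustration; they are not figures from Cornelis Networks or from the research cited above. The sketch simply treats non-math time as capacity that was paid for but not spent on computation.

```python
from typing import Tuple


def communication_overhead(
    num_gpus: int,
    hours: float,
    cost_per_gpu_hour: float,
    comm_fraction: float,
) -> Tuple[float, float]:
    """Return (idle_gpu_equivalents, dollars_spent_on_non_math_time).

    comm_fraction is the share of GPU time spent handling communication
    instead of math (for example, 0.15 to 0.30).
    """
    if not 0.0 <= comm_fraction <= 1.0:
        raise ValueError("comm_fraction must be between 0 and 1")
    # Capacity lost, expressed as a number of whole GPUs doing no math.
    idle_gpu_equivalents = num_gpus * comm_fraction
    # Money spent on GPU-hours that went to communication rather than computation.
    dollars = num_gpus * hours * cost_per_gpu_hour * comm_fraction
    return idle_gpu_equivalents, dollars


if __name__ == "__main__":
    # Hypothetical fleet: 10,000 GPUs for one year (8,760 h) at an assumed $2.00/GPU-hour.
    for fraction in (0.15, 0.30):
        idle, dollars = communication_overhead(10_000, 8_760, 2.00, fraction)
        print(f"{fraction:.0%} non-math time: ~{idle:,.0f} GPUs of capacity, ${dollars:,.0f} per year")
```

With those assumed inputs, 15% non-math time works out to about 1,500 GPUs’ worth of capacity and roughly $26.3 million a year, and 30% doubles both. It is a back-of-the-envelope estimate, not a measurement.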

“We are throwing more compute at the problem, putting more scale around the problem, putting more concrete, more power, all these things around the problem and saying we just have to brute force through these models,” Lisa explained. “We’ve got to move to elegance.”

That elegance comes through innovation that addresses bottlenecks at the system level. Multiple bottlenecks exist, but for the network, Cornelis has developed an end-to-end backend network architecture with unique features that improve GPU utilization and compute efficiency while maximizing the value of existing power budgets and infrastructure investments.

The Enterprise On-Premises Opportunity

While hyperscale cloud providers continue to drive frontier model development, Lisa identifies a significant opportunity in enterprise on-premises AI infrastructure. She notes that cloud providers currently capture 40 to 50% of the AI infrastructure market, which leaves substantial opportunity for enterprises, neoclouds, and sovereign cloud implementations that prioritize economics, privacy, security, and specific use case optimization.

This distributed approach to AI infrastructure creates new requirements for network efficiency, since enterprise implementations must maximize utilization within relatively constrained environments. The network becomes even more critical in these scenarios. Inefficiencies that might be tolerated by hyperscalers become prohibitive bottlenecks in enterprise deployments.

Real-World Impact Through System-Level Innovation

The practical benefits of network optimization extend far beyond theoretical performance improvements. Measurably better results can be achieved through Cornelis Networks’ solutions, including the recently launched CN5000, a 400-gigabit end-to-end network platform.

These improvements manifest in multiple dimensions: better GPU utilization translates to faster model training and inference, and reduced power consumption per workload enables more intensive processing within existing power budgets. Improved overall system efficiency allows organizations to tackle larger problems with the same hardware investments, delivering system-level benefits that improve total cost of ownership and accelerate time to value for enterprise AI initiatives.

Looking Ahead: Sustainable AI Infrastructure

Recent studies suggest that over 90% of enterprise AI efforts struggle to achieve meaningful return on investment. However, Lisa believes the industry stands at an inflection point where that dynamic is about to reverse completely. And as organizations move from experimental AI projects to production deployments that must deliver measurable business value, efficiency optimization becomes crucial for long-term success.

Lisa’s confidence in this transformation stems from her experience across multiple technology waves, including her time managing IT infrastructure during the early cloud computing era. The pattern suggests that enterprises that embrace efficiency-focused AI infrastructure today will establish competitive advantages that become increasingly difficult for competitors to match.

The TechArena Take

Cornelis Networks’ approach addresses a critical gap in current AI infrastructure discussions. While much attention focuses on computational power and model sophistication, network efficiency represents an often-overlooked opportunity to unlock significant additional value from existing investments.

Lisa’s emphasis on moving from “brute force to elegance” reflects a maturing industry that recognizes sustainable AI deployment requires optimization across the entire infrastructure stack. Organizations that prioritize network efficiency alongside compute power will be better positioned to achieve the ROI that has proven elusive for many enterprise AI initiatives.

The convergence of AI-native enterprise cultures with efficiency-optimized infrastructure creates conditions for the kind of transformative business impact that will differentiate winners in the next phase of AI adoption.

For more insights on Cornelis Networks’ approach to AI infrastructure optimization, visit cornelis.com or connect with Cornelis Networks on LinkedIn.

Scality and Solidigm Reshape Storage Protection in the AI Era

For 15 years, Scality has operated at a scale most enterprises never contemplate. Its customers routinely manage petabytes, tens of petabytes, and now exabytes of unstructured data. My recent conversation with Paul Speciale, chief marketing officer of Scality, alongside Jeniece Wnorowski from Solidigm, revealed how this software-defined storage pioneer is navigating a shift in how organizations think about data protection in an era of ransomware threats and AI workloads.

Shifts in the Data Protection Landscape

The ransomware threat has fundamentally changed the data protection conversation. As Paul noted, you can’t go a day without seeing news of a cyberattack, and this constant barrage has added a new priority for chief information officers: in addition to backup speed, recovery speed is now top of mind. Organizations need their backup systems to serve as insurance policies that can quickly resurrect operations after an incident. This demand requires special architectural considerations and performance characteristics from the backup system.

AI has also introduced additional complexity to the data protection equation. First, the integrity of data feeding AI systems becomes paramount. “Imagine training your AI on data that’s been tampered with or has some kind of integrity constraints,” Paul said, raising the prospect of potentially catastrophic outcomes. Second, AI itself becomes a tool for both attack and defense. While Scality explores embedding AI capabilities to detect suspicious access patterns and questionable payloads, ransomware actors are simultaneously weaponizing AI for sophisticated phishing attacks and impersonation schemes.

The Flash Storage Tipping Point

The flash storage revolution is reshaping Scality’s deployment patterns in ways that would have seemed unlikely just years ago. Historically, Scality’s massive capacity deployments relied primarily on high-density hard disk drives (HDDs), with flash comprising only 1-2% of total capacity to accelerate metadata operations and data lookups. The converging costs between flash and HDD storage, combined with performance demands from analytics and AI workloads, are driving increased adoption of all-flash configurations.

Paul cited a conversation with a major analyst firm that reinforced this trend: AI models in cloud environments are already training on object storage backed by flash. The same pattern is emerging for on-premises deployments. Even backup targets can benefit from all-flash storage. “Why? Because again, it’s back to this restore equation,” he explained. “If I have flash, I can do a faster job of restoring.”

Software-Defined Advantage in a Competitive Market

Paul emphasized Scality’s software-only approach as a core differentiator in an increasingly competitive storage market. By remaining hardware agnostic, Scality can leverage best-of-breed components like Solidigm’s high-capacity SSDs while optimizing for specific media characteristics. This flexibility extends to energy efficiency, performance tuning, and media-specific optimizations.

The conversation revealed Scality’s vision of becoming a data platform, extending its reach beyond storage. “And what is a platform?” he asked. “A platform is something that has a series of services. It might be a search service, a data cleansing service, a vectorial database for AI.” Paul sees software vendors as holding an advantage in delivering these integrated service offerings because they are not tied to specific servers or form factors.

Object Storage and the Path Forward

Object storage’s mainstream emergence represents another important industry change. After 15 years of evangelizing object storage’s benefits, Paul sees the market finally recognizing its fundamental advantages for managing unstructured data at scale. Object storage’s inherent scalability, combined with its understanding of metadata and data characteristics, positions it ideally for immutable data protection and AI workloads. The hyperscalers have already validated this approach through Amazon S3 and Azure Blob adoption for AI applications. “The opportunity is huge as an object storage player in that arena,” he said.

The TechArena Take

Scality’s 15-year journey demonstrates the importance of being able to adapt alongside shifting enterprise priorities. Storage solutions must balance performance, resilience, and flexibility to meet the growing demand for data restoration capabilities and to address the changes rising from AI’s dual role as both a workload and security tool. As object storage enters the mainstream and flash economics continue improving, organizations managing massive unstructured data volumes will increasingly depend on software-defined approaches that optimize across media types and deployment models. Scality’s platform vision, rooted in pure software architecture and object storage expertise, positions the company to address these converging demands.

For more information on Scality’s data protection solutions, visit scality.com.

From OCP 2025: Arm’s Chiplet Push and the AI Ecosystem Play

Allyson Klein and co-host Jeniece Wnorowski sit down with Arm’s Eddie Ramirez to unpack Arm Total Design’s growth, the FCSA chiplet spec contribution to OCP, a new board seat, and how storage fits into AI’s surge.

CelLink at OCP: 3 Key Takeaways for AI Factories

During CelLink’s first-ever exhibit at the Open Compute Project Foundation’s 2025 global summit, what struck me wasn’t a single system or new tech announcement. It was the massive scale and complexity of the systems on display in San Jose. Design considerations that were once limited to the inside of a chassis now span racks, pods, and even data halls. Power and cooling, data transmission, and commissioning strategies are being developed simultaneously into an integrated plan for massive data centers. For an electric vehicle supplier entering the data center market, the signal is clear: the data center universe needs system-level thinkers more than ever. Below are three takeaways I brought back from San Jose.

1) The unit of design has shifted to the rack (and multi-rack)

For many years, the data center industry treated the server box as the center of gravity and the rack as a container. At OCP 2025, the gravitational center sat firmly at rack scale and beyond. Vendors weren’t just announcing parts; they were showing buyable racks and reference pods with standardized mechanics, predictable service windows, and modularity that assumes multi-vendor integration.

When the entire data center is the product, interoperability and collaboration are fundamental requirements, not one-off, after-the-fact engineering projects. Power distribution, liquid manifolds, fabric, and service clearances need to be engineered together, tested together, and delivered as a single, repeatable unit. That reduces onsite “glue work,” accelerates time-to-online, and makes capacity build-outs behave more like a supply chain problem than an R&D project.

2) Efficiency must span the whole power path, from the grid down to the GPU

Energy costs and utility constraints are hard walls that every operator contends with. The conversation used to stop at power supplies or busbars; now it spans the entire power path. From upstream skids and medium-voltage gear, through prefabricated power pods, into rack-level distribution and finally to the last few centimeters inside the server, every interface is being scrutinized for energy loss, complexity, and long-term reliability.

Two themes stood out. First, high-voltage power delivery and fewer mechanical connections are becoming a design tenet, not just wishful thinking. Second, manufacturability is part of the efficiency story. If you can pre-integrate cleanly at the factory, you don’t just save electrons; you save weeks of field labor, re-terminations, and retests that sap schedules and budgets. Efficiency is both electrical and operational.
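Why higher voltage matters can be shown with the standard resistive-loss relation. For a fixed power draw P delivered through a path with total resistance R, current falls as voltage rises, and conduction loss falls with the square of the current. The wattage and resistance values below are illustrative assumptions chosen for easy arithmetic, not figures from OCP or CelLink.

```latex
I = \frac{P}{V}, \qquad P_{\text{loss}} = I^{2} R = \frac{P^{2} R}{V^{2}}

% Illustrative assumption: P = 1200\,\text{W}, \; R = 1\,\text{m}\Omega
V = 12\,\text{V}: \quad I = 100\,\text{A}, \quad P_{\text{loss}} = (100)^{2}(0.001) = 10\,\text{W}
V = 48\,\text{V}: \quad I = 25\,\text{A}, \quad P_{\text{loss}} = (25)^{2}(0.001) = 0.625\,\text{W}
```

Quadrupling the voltage cuts the current by 4× and the conduction loss by 16×. Removing mechanical connections helps for the same reason: each contact adds to R.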

3) Liquid cooling is table stakes—now make it efficient and flexible

Every serious roadmap now assumes liquid cooling. The debate is no longer air vs. liquid; it’s which liquid strategy unlocks density and speed without limiting the facility to a shorter lifespan. Cold plate, immersion, hybrid approaches—they all have a place. But the winners will minimize facility disruption, maximize reliability, fit into standardized rack mechanics, and keep serviceability reasonable.

I heard operators ask a simple question: Will this let me scale up and scale out without ripping up what I just installed? Solutions that pair rack-native mechanics with straightforward commissioning, safe quick-disconnects, and clear maintenance windows are getting the nod.

What This Means for How We Build

For AI factories, we should co-design power, cooling, and interconnect with the same discipline that goes into a production line: standard racks, standard interfaces, and predictable service envelopes. The upside is more than density; it’s repeatability. That’s how we compress power-on schedules and make capacity a planning exercise we can trust.

Just as important, the industry must reduce manual, low-value assembly steps across the chain. Every extra wire to cut, crimp, route, label, and verify is a schedule risk and a potential field failure. Automation at the component level matters, but the real leverage appears when you can eliminate multiple steps in server assembly and data center build-out.

CelLink PowerPlane: Efficient Power—Coupled with Integrated Cooling

At CelLink, we are focused exclusively on power and cooling. Our PowerPlane product replaces bundles of discrete wires with a space-efficient, flexible laminated power circuit that can deliver high currents in a flat, ultrathin form factor. Instead of being connected one cable at a time, motherboards are docked directly to the PowerPlane with a simple, repeatable installation process.

This matters to a server manufacturer for several reasons:

Density without chaos. By consolidating conductors into a flat, designed path, we free up precious volume for more compute and signal transmission – the true value-add functions of a server. The PowerPlane makes it easier to place accelerators and signal connections where they need to be and maintain clear service windows.

Cooling-ready by design. Power and cooling shouldn’t fight each other for space. The design approach we take to deliver power in a very thin (<1mm) form factor can be tailored to align with liquid-cooling manifolds—keeping designs clean, minimizing interference, and opening the door to integrated power-and-cooling layers that enable a revolution in rack and system design.

Unlocking vertical power delivery. By delivering power and cooling to the backside of the motherboard, the PowerPlane provides efficient heat removal from the voltage regulators used for vertical power delivery, helping keep those regulators in their most efficient temperature range.

Stepping back, this isn’t just a different cable. It’s a revolutionary design concept that eliminates manual wiring and replaces it with a high-precision, repeatable, space-saving component.

PowerPlane is a trademark of CelLink.

Banani Mohapatra on AI with Purpose

Banani Mohapatra leads experimentation and causal measurement for Walmart Plus, where testing isn’t a checkbox—it’s the operating system. She treats causal inference as a product capability, using disciplined experiments to turn ambiguous ideas into decisions the business can trust.

In this conversation, Banani, one of TechArena’s newest voices in innovation, shares how moving from India to the U.S. rewired her problem-solving lens, why innovation now lives as much in system design and governance as in algorithms, and how AI and human creativity work best as collaborators.

She breaks down a first-principles framework for separating signal from hype—customer impact, quantifiable value, scalability, and responsible deployment—and explains why disciplined iteration beats flashy launches.

Q1: Can you tell us a bit about your journey in tech?

A1: My journey in technology spans over 13 years across analytics, AI, and data science leadership. I began at MarketRx, a pharma analytics startup later acquired by Cognizant, where I learned to turn raw data into actionable business stories long before modern BI tools existed. From there, I joined Citibank’s risk modeling team, designing and validating predictive models to manage financial risk and optimize decision-making at scale. In 2015, I relocated to New York with Citi and began collaborating closely with data engineering and product teams to embed analytics into large-scale business systems. Subsequent consulting roles at Visa, Cisco, and Realtor.com broadened my exposure to diverse domains, from financial services to e-commerce, revealing how context, customer behavior, and scale shape the practice of data science.

At Walmart, I lead experimentation and causal measurement for Walmart Plus, where I have helped transform an ad-hoc analytics function into a structured experimentation platform. When I started, we ran one experiment per quarter; today, we execute over 150 annually, driving measurable impact across pricing, marketing, and engagement. What excites me most is how experimentation has evolved from a testing mechanism into a strategic driver of innovation. Building systems that empower teams to learn faster, make data-informed decisions, and embed causal thinking into product development has been the most fulfilling part of my journey. For me, the true impact of technology lies in helping organizations use data to continuously learn and adapt.

Q2: Looking back at your career path, what's been the most unexpected turn that ended up shaping who you are today?

A2: Without a doubt, my transition from India to the U.S. was the defining moment. It wasn’t just a geographic move; it was a cultural and professional transformation. It pushed me to unlearn and relearn, adapt to new problem-solving frameworks, and navigate ambiguity in diverse teams. That experience taught me resilience and the importance of contextual intelligence, understanding not just what to solve, but how to make it relevant for the environment you’re in.

Q3: How do you define “innovation” in today’s rapidly evolving tech landscape? Has your definition changed over the years?

A3: Absolutely. My definition has evolved alongside the field of data science itself, from deterministic and predictive models to adaptive and generative intelligence. Earlier, innovation meant improving accuracy or efficiency; now it’s about scalability, interpretability, and accessibility. With the evolution from machine learning to deep learning and now to generative and agentic AI, innovation isn’t confined to algorithms anymore; it’s about system design, governance, and responsible deployment. True innovation now means enabling impact at scale, responsibly, inclusively, and sustainably.

Q4: When you’re evaluating new ideas or technologies, what's your framework for separating genuine innovation from hype?

A4: When evaluating new ideas or technologies, I always start from first principles: what business problem are we solving, and who benefits from it? Innovation only has meaning when it’s anchored in purpose and outcomes.

The first lens I apply is customer impact. Every idea, no matter how exciting, must solve a real, measurable problem for the end user. Technology should simplify, empower, or enhance an experience - not just exist because it’s novel. Next, I focus on quantifiable outcomes. If we can’t tie an idea to clear success metrics - whether in efficiency, engagement, or revenue - it’s usually a sign that the value proposition isn’t strong enough. Data-backed validation helps separate genuine innovation from experimentation for its own sake. Then come sustainability and scalability. True innovation must move beyond the proof-of-concept stage. It should be capable of evolving across teams, products, and time - without losing its integrity or business alignment. Finally, every decision is weighed against governance and privacy considerations. In an era where trust defines technology adoption, building responsibly isn’t optional - it’s essential.

For me, innovation without discipline is just noise. The real differentiator lies in how consistently an idea delivers measurable, ethical, and sustainable impact at scale.

Q5: What's the biggest misconception you encounter about innovation in the tech industry?

A5: One of the biggest misconceptions about innovation in tech is that it’s all about speed and novelty: building something new, fast, and flashy. But true innovation isn’t defined by how quickly you can launch a product; it’s defined by how meaningfully you can create sustained value through technology. What many overlook is that innovation isn’t just invention; it’s integration. It’s about reimagining how technology, people, and processes come together to deliver something enduring. Some of the most meaningful advancements in tech today, from generative AI to adaptive experimentation systems, didn’t emerge from a single breakthrough but from years of disciplined iteration and scaling inside large ecosystems.

Q6: How do you see the relationship between AI advancement and human creativity evolving? Are they competitors or collaborators?

A6: I’ve always seen AI and human creativity as collaborators rather than competitors. AI has an incredible ability to process information at a scale and speed we could never match; it can generate possibilities, surface hidden connections, and even inspire new directions we might not have considered. But creativity, at its core, is deeply human. It’s shaped by emotion, context, curiosity, and even imperfection - things machines don’t quite grasp.

What excites me most is how the two can amplify each other. When AI takes on the repetitive or data-heavy parts of the creative process, it gives humans the space to think, imagine, and explore. I’ve seen this in my own work: using AI to model outcomes or test ideas frees up energy for the “why” and “what if” questions. The future of creativity isn’t replacing humans with algorithms; it’s co-creation, with humans setting the vision and AI expanding what’s possible.

Q7: When you're facing a particularly complex problem, what's your go-to method for finding clarity?