Delivering a Foundation for AI, 5G and Edge with NVIDIA

TechArena host Allyson Klein chats with NVIDIA’s Rajesh Gadiyar about his company’s strategy to accelerate 5G and edge adoption including cloud native vRAN.

Achieving Sustainable Building Construction with Urban Machine

TechArena host Allyson Klein chats with Urban Machine CTO Andrew Gillies about how his company is achieving a breakthrough in sustainable construction through the AI and Robotics powered Machine.

A VRAN Deep Dive with Intel

TechArena host Allyson Klein chats with Intel vice president of wireless access networks Cristina Rodriguez about the white-hot VRAN market.

Mixed Signals on Eagerly Anticipated Private 5G Hockey Stick Growth

Private 5G networks are foundational technology to edge computing proliferation, and we’ve heard about superior capabilities of private 5G deployments from the industry for years. In 2015 the US government opened Citizens Broadband Radio Service (CBRS), a 150 MHz spectrum band that enabled organizations to establish private 5G networks with a caveat that they must be deployed with the proper security. This was a grand departure in the U.S. for spectrum allocations and brought to the table the opportunity to use the coming 5G network as a wireless solution where WiFi did not fit. 5G was seen as a superior technology due to the number of devices supported by each access point (AP) and for its superior signaling in high noise environments like factory floors where interference could make WiFi unreliable. 5G also offers soft handoff between APs to ensure lossless connectivity valued in industrial applications.

The past half decade has showcased incredible industry innovation in private 5G solutions and proof of concept testing with leading providers. However, the technology, like many communications network solutions, has been slow in gaining mass traction with enterprises. This past week we have seen some news that suggests that large growth is ahead supporting industry forecasts for up to 47% CAGR for private 5G networks through 2030. Nokia announced that they’ve seen a 50% increase in private 5G customer adoption QoQ and have more than 500 private 5G customers in their client base. Not to be left out, Ericsson also shared that sales are up 33%.
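To put a forecast like that in perspective, the compounding can be worked through directly. The sketch below assumes a seven-year horizon (2023 through 2030) and treats today's market size as 1; both are assumptions for illustration, not figures taken from the forecasts.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """Return how many times larger a market is after compounding at `cagr` for `years`."""
    return (1.0 + cagr) ** years


# Assumed horizon: 2023 through 2030, i.e. seven compounding periods.
multiple = growth_multiple(0.47, 7)
print(f"47% CAGR over 7 years is roughly a {multiple:.1f}x increase")
```

Under those assumptions a 47% CAGR works out to a market a bit under 15 times its starting size, which is why the hockey stick image keeps coming up.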

These industry stalwarts showing growing momentum give hope that the highly anticipated hockey stick growth of private 5G is upon us. While these deployments are led from the US, Germany, UK, China and Japan, we are also seeing additional announcements of spectrum allocations for private 5G deployments across the world, most recently in Spain and Norway, opening the door for customer adoption in these countries.

The value that was envisioned by industry architects years ago is also coming to fruition. An example is one of the first private 5G deployments in India, where Apollo Hospitals is tapping its private 5G network to conduct AI-guided colonoscopy procedures utilizing technology from Tech Mahindra and Airtel, a leading use case described by industry leaders during the development of this technology.

Still, macro-economic headwinds may stand in the way of a fait accompli of private 5G deployments. Verizon, for one, signaled it had miscalculated private 5G demand uptake last week as it provided its 2023 economic outlook, and industry softness in this space would fall in line with broader IT spending conservatism. As we look forward to next month’s Mobile World Congress event in Barcelona, I’ll be looking for more industry examples of successful broad scale deployment of private 5G networks, more news about additional spectrum allocations, and insight about new infrastructure innovation that delivers the performance, scale and security required across enhanced mobile broadband, massive internet of things and mission critical service private 5G use cases.

WebAssembly: Paving a Path to Distributed Computing with Fermyon

TechArena host Allyson Klein chats with Fermyon CEO Matt Butcher about WebAssembly and how it addresses many of the challenges that containers and virtual machines have brought to the cloud.

UCIe Unleashing Chiplet Innovation with NVIDIA

TechArena host Allyson Klein chats with NVIDIA Data Center Product Architect and Universal Chiplet Interconnect Express organization board member Durgesh Srivastava about the new UCIe specification and how it will reshape the foundations of compute architectures.

Real-Time Supply Chain Control with Oii

TechArena host Allyson Klein chats with Oii CEO Bob Rogers about his team’s AI-based solution for real-time modeling and management of supply chains and the opportunity for AI to drive actionable solutions for business and society.

Oii: AI Driven Supply Chain that Shifts with the Market

Supply chain management shifted from an operational topic to a crisis worthy of national security concern over the past two years. We all felt supply chain issues viscerally, from empty grocery shelves to skyrocketing prices. According to the White House, 36% of small businesses felt material impact from supply shortages during the pandemic, with impact concentrated most within trades, manufacturing and construction sectors. The average American also likely has feelings now about the supply of semiconductors, something that likely never was a thought pre-2020. I, for one, wondered how this could all go so wrong. Didn’t we, after all, have computing models and complex analysis for supply chains? It turns out that traditional supply chain management for a lot of industries had much room for improvement, with Excel spreadsheets written once every year or two being a go-to model for management.

Enter Oii, a new supply chain solution that utilizes AI to map supply variables and custom tune a company’s supply chain to optimize for business objectives. Oii CEO Bob Rogers is an expert in enterprise data management formerly serving as the Chief Data Scientist at Intel and running a hedge fund that utilized advanced data analytics to predict the stock market. You could say that Bob lives and breathes inventing ways to analyze data to organizational and societal benefit. His team trained their Oii model to establish control of supply from cost, sustainability, and customer response perspectives and give supply chain managers the ability to update the model in real time in order to manage through changes in the market. This is important. Response time to changes in market environment can represent enormous advantage to a company’s ability to thrive in variable conditions.

I loved listening to Bob tell the story of Oii and hope you listen to the episode. He’s a guy who can take something incredibly complex and distill it down to a simple and actionable solution, and he’s great at bringing us mere mortals along for the ride. I’m excited to see Oii’s progress in the market as an incredibly valuable application of AI for business. As for Bob, he discussed his view on ChatGPT as a bold step towards AGI and actually published his book this week, co-written with ChatGPT and Theresa Hart, entitled “ChatGPT, an AI Expert, and a Lawyer Walk Into a Bar...: The Evolution of Creativity and Communication”. Those following TechArena will know that this is on the top of my reading list. Expect more to come on this topic. As always, thanks for engaging. - Allyson

Measuring Hunga Tonga’s Climate Altering Impact with NASA

TechArena host Allyson Klein chats with NASA research scientist Ryan Stauffer about how NASA's SHADOZ program measured increased stratospheric water vapor caused by the Hunga Tonga volcanic eruption and its impact on climate.

Walking on the Edge

Between every two technologists there are three or more definitions of edge computing. There’s nowhere on the tech landscape today that creates more divergence of perspective than the term edge, and in my opinion, lack of crisp taxonomy limits our collective progress. I am, after all, a words person, and therefore am biased on the importance of coalescence on terms. We’ve seen this challenge before. Cloud comes to mind as a term that required ages to get crisp, and today some would argue that we’re still not unified on that definition. We are turning our focus at the TechArena to the edge with a span of compute, industrial, network and mobile edge and will be talking to industry icons and disruptors about how they’re defining their edge implementations and where market adoption is today for edge services. But before we get to all of that, I wanted to ground on my current definition of the edge in terms of what the industry is delivering today that is disrupting the technology landscape.

Let’s start by laying out some TechArena guardrails. The edge is not everything that is not in a data center. It is not all devices that are not directly human controlled (i.e., PCs, phones and smart watches). The edge is not a single place and it’s not a single thing…but the various edges do have some common characteristics that unify these edgy things. And at this point of the diatribe, I’m going to promote the edge to the Edge…because there was use of the term edge in many corners of our industry before we started talking about the Edge. Those things may be part of the Edge…but they may not, and there is not a grandfathering clause that if you were once referred to as a part of the “insert term” edge you must be Edge.

With those guardrails, I’d like to explore why the focus on the Edge exists, in other words what problem the Edge is trying to solve for us. In listening to people discuss what they’re doing with Edge, I think the three forces that created opportunity for Edge are as follows:

- Boatloads of data are growing on a distributed computing landscape.

- There are latency and data movement limitations in a traditional data center computing model where large compute capacity resides in a limited number of locations and network limitations bottleneck data movement.

- The operational efficiency and scale of cloud architectural models derived in data centers offer a way to unify computing across a growing diversity of compute environments.

If we combine these forces together, we can derive a definition of Edge:

A computing environment residing apart from a traditional data center location and following the core definitions of a cloud operational model to deliver efficient and fast digital services and/or data analysis.
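One way to make that definition testable is to write it as a simple predicate. This is a minimal sketch, not a formal taxonomy: the `ComputeEnvironment` type, its field names, and the three example environments are all hypothetical and exist only to illustrate the three clauses of the definition above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComputeEnvironment:
    """Hypothetical description of a place where computing happens."""
    name: str
    in_traditional_data_center: bool
    follows_cloud_operational_model: bool  # services provisioned and managed cloud-style
    delivers_services_or_analysis: bool    # digital services and/or data analysis


def is_edge(env: ComputeEnvironment) -> bool:
    """Apply the working definition: outside the data center, cloud-operated, delivering services."""
    return (
        not env.in_traditional_data_center
        and env.follows_cloud_operational_model
        and env.delivers_services_or_analysis
    )


# Illustrative environments; the classifications are assumptions, not survey data.
examples = [
    ComputeEnvironment("factory-floor analytics server", False, True, True),
    ComputeEnvironment("record-only camera", False, False, False),
    ComputeEnvironment("core data center cluster", True, True, True),
]

for env in examples:
    print(f"{env.name}: {'Edge' if is_edge(env) else 'not Edge'}")
```

The point of the predicate is that location alone never qualifies something as Edge; the operational model has to be there too.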

Let’s test this definition against use cases from across the technology landscape.

Industrial Control

A manufacturing floor has deployed sensors and cameras to collect real-time production data to measure factory output and ensure factory safety compliance. This data collection is analyzed by Edge servers to ensure real-time control of the factory while sending summarized analysis to the organization’s data center. In this usage the servers as well as potential on-camera analytics are examples of Edge computing. Smart sensors that provision work across a sensor network may also be part of the Edge implementation within our taxonomy, and it’s the operational model of service provisioning, not the hardware’s existence, that would determine inclusion. Broader “collect only” sensors or “record only” cameras are part of the larger IoT network providing data collection for the Edge. Industrial Edge implementations will often tap 5G private networks for a mobile Edge computing solution within the factory environment – watch this space for more information to come about 5G private networks and mobile Edge.
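The pattern described here, analyzing raw data locally and forwarding only a summary, can be sketched in a few lines. The function name, the summary fields, the safety limit and the sample readings below are all assumptions made for illustration; a real deployment would define its own schema and thresholds.

```python
from statistics import mean


def summarize_at_edge(readings: list[float], safety_limit: float) -> dict:
    """Reduce raw sensor readings to a compact summary destined for the data center."""
    if not readings:
        return {"count": 0, "mean": None, "max": None, "over_limit": 0}
    return {
        "count": len(readings),
        "mean": mean(readings),
        "max": max(readings),
        # Number of readings that breached the (hypothetical) safety limit.
        "over_limit": sum(1 for r in readings if r > safety_limit),
    }


# Hypothetical temperature readings from a single machine sensor.
raw = [71.0, 72.5, 70.5, 90.0, 71.0]
summary = summarize_at_edge(raw, safety_limit=85.0)
print(summary)
```

Only the small summary dictionary would travel upstream, while the Edge server keeps the raw stream local for real-time control.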

An example of industrial Edge implementation is Worcester Bosch’s implementation of industrial Edge control of their UK boiler plant leveraging robotics, a sensor network of machine and collision sensors, and Edge server analytics controlled by a private 5G mobile network. This implementation has driven up factory output by 2% while increasing safety within the factory environment. Ericsson provides more information about this implementation on their site.

CDN (Content Delivery Networks)

Content delivery networks saw massive growth during the pandemic while we were all bound to our homes and are one of the fastest growing applications of Edge computing. In this case, an OTT provider deploys Edge servers close to customer locations to serve content to minimize latency and media consumption of core networks. These CDNs also provide Edge network security and microservices for collection of billing data. The CDN is functioning as part of the Edge per our taxonomy as it’s delivering cloud services outside of a traditional data center location and may be providing some analytics on customer traffic patterns to re-deploy most desired content to maximize customer viewing experience.

An example of a CDN in action is Netflix’s Open Connect Edge network, distributed to serve the more than 200 million customers streaming their content daily. This Edge implementation is matched with an AWS cloud backend where Netflix runs its data storage, customer analytics, recommendation engines, transcoding and more services which are not limited by latency requirements. The unified Edge to cloud solution ensures customers do not experience disruptions of service and Netflix is able to continue analyzing customer consumption data to deliver meaningful content recommendations and move content across their network to serve optimal content to customers.

VRAN (Virtualized Radio Access Networks)

In some ways, VRAN is the holy grail of Edge implementations, simplifying and speeding radio access network services (those things that keep you and me reliably connected to mobile networks) while unifying core, Edge and RAN in a common platform. To understand VRAN we need to introduce some additional terms: the centralized unit (CU), an access control point which provides RAN oversight and non-real time data processing; the distributed unit (DU), which controls lower-level protocols including MAC layer control through real time processing; and the remote radio unit (RRU), which provides physical layer transmission and reception. By virtualizing these three functions, or containerizing them in a cloud-native implementation, telecom providers are able to run their radio access networks on standard server hardware and reduce or eliminate costly proprietary solutions.
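For readers who think in data structures, the three functions can be laid out as a small table in code. This is only a sketch of the description above: the `RanFunction` type and its field names are hypothetical, and the RRU's timing is left unset because the text does not characterize it.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Timing(Enum):
    NON_REAL_TIME = "non-real-time"
    REAL_TIME = "real-time"


@dataclass(frozen=True)
class RanFunction:
    """Hypothetical record for one functional unit of a virtualized RAN."""
    abbreviation: str
    name: str
    role: str
    timing: Optional[Timing] = None  # None where the text does not say


VRAN_SPLIT = [
    RanFunction("CU", "centralized unit",
                "RAN oversight and non-real-time data processing",
                Timing.NON_REAL_TIME),
    RanFunction("DU", "distributed unit",
                "lower-level protocols, including MAC layer control",
                Timing.REAL_TIME),
    RanFunction("RRU", "remote radio unit",
                "physical layer transmission and reception"),
]


def functions_with_timing(timing: Timing) -> list[str]:
    """Return the abbreviations of the units described with the given timing."""
    return [f.abbreviation for f in VRAN_SPLIT if f.timing is timing]


for f in VRAN_SPLIT:
    print(f"{f.abbreviation} ({f.name}): {f.role}")
```

Calling `functions_with_timing(Timing.REAL_TIME)` picks out the DU, the unit with the tightest processing deadlines.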

While there are various configurations of VRAN solutions, some featuring open interfaces for the RRU, others maintaining proprietary hardware for this portion of the RAN, the cost savings of these virtualized solutions are driving mass disruption as providers move to 5G networks and seek cloud native service to deliver the full promise of the 5G standard. To do this they require the cloud operational control of services from core to Edge including the RAN. The expected investment in this space is staggering with over $550 billion in collective VRAN investment in the next few years alone. Watch this space for more information on the state of VRAN and to hear about progress in VRAN solution deployments in the weeks ahead.

These three examples are just scratching the surface on Edge implementations, and the TechArena is exploring other use cases to bring into the discussion. We’re also looking to hone this definition with the industry in the months ahead as we begin discussions with innovation experts from across the multi-faceted Edge landscape. As always, thanks for engaging - Allyson

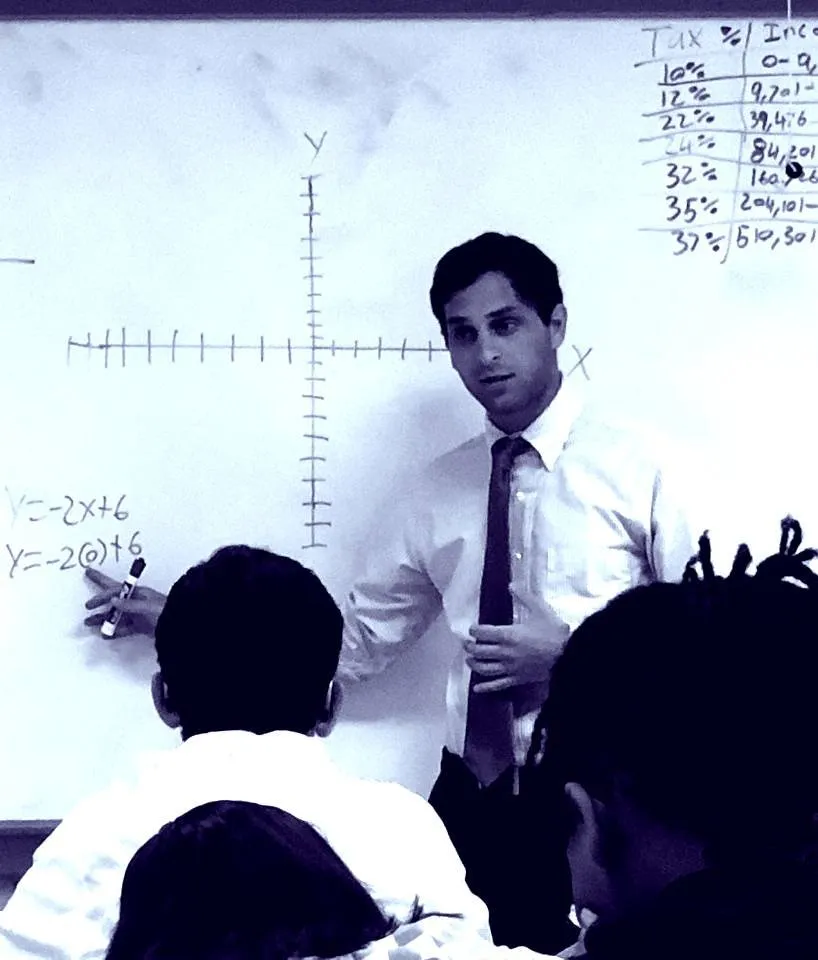

Intel Showcases IT Strengths with Sapphire Rapids Delivery

Today, Intel delivered the 4th generation of Intel Xeon Scalable processors to the world complete with a 52 SKU lineup from bronze to new Max Series solutions. With their arrival comes a new battle for data center deployments as Intel seeks to compete more effectively with AMD’s 4th generation EPYC processors. So what is the landscape for data center compute heading into 2023, and how should we view these new Xeon Scalables vs. AMD alternatives? Most importantly, what will enterprise customers choose? Let’s break it down:

Intel takes acceleration to the Max

With today’s launch, Intel doubled down on a message of workload acceleration as it’s both the breadth and type of workload accelerators that give Intel its competitive edge. The new generation of Xeons features new AI acceleration with the introduction of Advanced Matrix Extensions, expanding on the vector processing acceleration of its previous generations and competitive CPU offerings. But Intel has done more here. They’ve re-structured their positioning of embedded accelerators, introducing a Data Streaming Accelerator, an In-Memory Analytics Accelerator, and an expansion of Advanced Vector Extensions for VRAN implementations. Imbued with this slew of accelerators, Intel is making it very clear that they intend to drive optimized performance across workload classes with unique optimizations from AI and analytics to network functions and security. While the launch notably leaned into gen over gen performance comparisons, avoiding competitive bakeoffs, we should expect to see how these accelerators compete with the brute performance of AMD’s EPYC processors in the days ahead. I’d also expect to see competitors trying to dismantle the embedded accelerator approach as costly overhead compared to more nimble designs, as we heard earlier from Ampere on the TechArena.

A little help from their friends

Intel expanded on workload acceleration by leaning into the depth of industry and stack expertise at their command including a decades-long history of optimizing workloads to run best on their processors. It’s no surprise that the launch featured cloud stalwarts including AWS, Google Cloud and Microsoft Azure, but there was also a reminder that Intel has invested heavily in network with inclusion of Ericsson and Telefonica and a surprising highlight on NVIDIA while they launched their Max GPUs, potentially as a circling the wagons response to AMD’s recent MI300 announcement.

The TechArena Take

It would be easy to conclude that, though Sapphire Rapids delivers breakthrough capability compared to the previous generation, it still does not deliver the max performance of EPYC. I, for one, am guilty of focusing on top bin performance headlines. However, we must remember that most customers purchase mid-range CPUs and select processors for myriad reasons including full stack tuning and platform trust. When I think about this battle of the CPU titans my mind drifts to the automotive industry…where similar comparisons are made between car engines and zero to sixty times. There is a small sub-set of enthusiast drivers who purchase based on speed off the line, but most buyers are looking at brand trust, cockpit experience, and other factors to fuel their purchase decision. In 2023, Intel is showcasing its strength of intimate knowledge of what customers care about, and it would be wise to not underestimate the value of this knowledge in the marketplace. I can’t wait to pop some popcorn and see how this plays out. As always, thanks for engaging - Allyson

Futurecasting Technology, Society and You

TechArena host Allyson Klein talks with futurist Brian David Johnson about future and threatcasting, and how taking agency to envision our future places us in the driver’s seat to shape it.

The super-sized future is here with AMD’s Instinct MI300

Lisa Su waited until the tail end of her speech last night to pull a literal behemoth out of her bag of tricks with the introduction of the Instinct MI300, a new high performance processor that blends advanced CPU and GPU technology towards maximum benefit for AI and HPC workloads. It’s not surprising to see AMD deliver another performance best. They’ve been on quite a roll for years most recently with the addition of their performance leading 4th generation EPYC processors late last year. What caught my eye about the introduction of the MI300 was the elegance of its design and what it foretells for the entire semiconductor arena moving forward. Let’s break it down.

Chiplet architecture wins the day

The MI300 is, per AMD’s claim, the world’s first chiplet based processor combining CPU and GPU cores. This is important for high performance computing as a combination of CPU and GPU can best support the demands of HPC and AI workloads. AMD has pulled this feat off through 3D stacking of nine 5nm chiplets sitting atop four 6nm chiplets and surrounded by a breathtaking 128GB of HBM3 memory. What we are seeing is modern silicon innovation tapping different silicon architectures tightly coupled with high performance memory and I/O reducing both compute latency and platform overhead, and it’s a harbinger of where the entire silicon ecosystem is aiming for future products.

The performance claimed by AMD is eye-opening: 8X the performance and 5X the perf/efficiency of the MI250, the nifty processor that fuels the AMD based Exascale class supercomputer at Oak Ridge National Laboratory. As Dr. Su held the chip aloft you could see that, as a microprocessor architect herself, she well understood what she’s delivered to the market and the importance to the trajectory of silicon innovation, and with that initial chip reveal the team at AMD should be proud.

Super-sized silo designs

But some questions come to mind. When will we see others jump into this APU/XPU pool? Intel shared more details of its upcoming Falcon Shores product at SC’22 which will feature a combination of Intel Xeon CPU and Intel Data Center GPU Max Series and is hinted to hit the market in 2024, behind anticipated MI300 launch in 2H'23. NVIDIA is also hard at work innovating what must be one of the best named products in the industry with its Grace Hopper superchip, again combining ARM CPU and GPU into a single package met with a proprietary 900 GB per second coherent interface.

As you look at this landscape it doesn’t take too long to realize that while these super-sized value meal chips will disrupt the market, some customers will want more control of exactly what chiplets they use. Enter UCIe, the new industry standard that will provide a common interconnect for chiplet designs which could open the door for a mixed architecture implementation. This is the reason I called UCIe one of the biggest disruptions on the compute landscape in an earlier blog. My mind also goes to the importance of foundry services in an era of multi-vendor chiplet designs as well as the emerging RISC-V architecture open-sourcing logic in a way we’ve never seen. Watch this space for more updates on all of these topics in 2023. For now, we’ll all need to wait as the MI300 and other supersized processors are readied for market. As always, thanks for engaging - Allyson

OCI: Making Bespoke Cloud Services Simple

When you think of Oracle you don’t often think of scrappy disruptor. However, if you’ve been reading Oracle’s recent headlines you’ll uncover a company in transformation, shedding its skin as a traditionally minded enterprise software supplier and adopting a cloud first services mindset. Along the way, OCI has gained major momentum with enterprise customers as the company leans in on a core differentiator – intimate understanding of enterprise customers.

I’ve been intrigued to see this story of mature player as disruptor play out, and I was excited with the chance to talk to Bev Crair, Senior VP of Oracle Cloud Infrastructure Compute, about how her team has architected its cloud to deliver differentiated value to its customers. Bev has a history of leadership across the industry most recently at Lenovo and Intel and has a reputation for driving high performance teams to deliver breakthroughs under her watch. What she described in our talk was a maniacal focus on delivering what, and only what, the customer requires. This seems simple but is awfully difficult to do within the world of automated re-provisioning of infrastructure to stand up customer services. More on the complexity of automation can be found in my talk with Abby Kearns. However, Oracle is doing just that and making it simple for customers to choose their services as part of a multi-cloud strategy.

Does this matter? Earlier I wrote about Couture Silicon for the Cloud, and Ampere’s delivery of processors devoid of the instructional overhead of x86 alternatives. More plainly, Ampere is designing chips solely for the cloud, so it doesn’t need to think about capabilities that would be used in other designs like laptops or edge applications. This creates efficiency of design that is then passed on to the customer in terms of value. The chips fit like a glove for cloud workloads…or like a custom-tailored shirt if you extend the metaphor. Oracle’s promise to customers is similar. They’re delivering exactly the processor, memory and I/O configuration desired vs. finding closest proximity recipes found with the rest of the major cloud providers. And while many off the rack alternatives will do a great job delivering the performance required for the workload, there’s likely cost overhead that could otherwise be avoided.

Check out the interview to learn more about OCI innovation including their approach to multi-cloud and vendor partnerships delivering a rich selection of service offerings. And watch this space for more information about cloud service innovation. As always, thanks for engaging - Allyson

Bespoke Cloud Services with Bev Crair

TechArena host Allyson Klein interviews Senior Vice President of Oracle Cloud Infrastructure Compute Bev Crair about how OCI has delivered disruptive cloud services and simplified multi-cloud opportunity.

The Battle for Multi-Cloud Security

TechArena host Allyson Klein chats with Check Point Software's TJ Gonen about the state of cloud security and how security solutions must start with a developer lens.

The Rising Demands on Cloud Infrastructure

TechArena host Allyson Klein chats with Cloudflare infrastructure VP and Open Compute Board Chair Rebecca Weekly about the rising demands on cloud infrastructure across performance, design modularity, and sustainability.

Going Down the Rabbit Hole with ChatGPT

Dear friends, It was time to confront the existential question on my mind for the last week. Have I been replaced by AI with the advent of ChatGPT? Will content forevermore be produced by machines, or does the turn of a phrase and unique and funny perspective still matter?

Let’s Get Ready to Rumble

To test my question, I decided to put ChatGPT to the task of writing a blog post on a topic I’ve been working on: the rise of complexity of cloud computing infrastructure and oversight. To add to the challenge, I wanted to include my two most recent guests on the TechArena to the writing assignment. I typed the following query…and waited approximately 45 seconds for the blog to be output. “Write a blog about cloud computing complexity and mention Rebecca Weekly and Abby Kearns”.

Read it…and Weep?

Here is ChatGPT’s view on cloud complexity with shout outs to Rebecca and Abby.

Cloud computing is a rapidly evolving field that has transformed the way we think about and use technology. While the benefits of cloud computing are numerous, it has also introduced a new level of complexity to the world of technology.

One of the key figures in the world of cloud computing is Rebecca Weekly, who is widely regarded as one of the foremost experts on the subject. She has written extensively about the complexity of cloud computing and the challenges it presents.

Weekly points out that the sheer scale of cloud computing systems can make them difficult to manage. These systems often consist of thousands of servers and other hardware components, all of which must be carefully configured and maintained in order to function properly. This can be a daunting task, even for experienced IT professionals.

Another source of complexity in cloud computing is the sheer number of different technologies and platforms that are involved. Cloud computing systems often use a wide range of different technologies, from virtualization software to data storage systems and more. This can make it difficult for IT professionals to keep up with the latest developments and ensure that their systems are running smoothly.

Abby Kearns, who is the executive director of the Cloud Foundry Foundation, has also spoken about the complexity of cloud computing. In a recent interview, Kearns noted that the complexity of cloud computing systems can be overwhelming, and that it is important for organizations to have a clear understanding of their specific needs in order to make the most of these systems.

Despite the challenges, however, the complexity of cloud computing systems is also a source of opportunity. As IT professionals continue to develop new tools and technologies to manage these systems, they are also creating new opportunities for businesses and organizations to take advantage of the power of the cloud.

In conclusion, while cloud computing has introduced a new level of complexity to the world of technology, it has also opened up new possibilities and opportunities. With the right tools and expertise, organizations can harness the power of the cloud to drive innovation and growth.

My Take

Well, I’d love to hear your perspective, but I don’t see this as a replacement for humans just quite yet. Is it a stunning first draft tool for people with writer’s block? Could be…a bailout for students at mid-terms? Definitely. But at least with this first test I see a lack of, well, point of view, which makes sense given the author. But of course, I didn’t have the courage to add “from my voice” to the prompt. More on that next time! As always, thanks for engaging - Allyson

The State of Cloud Automation with Abby Kearns

TechArena host Allyson Klein chats with cloud innovator Abby Kearns about the state of cloud automation and how further advancement is required to keep pace with growing cloud complexity.

Oh What a Fine Mess We’ve Made: The State of Cloud Automation

More than a decade ago I worked on a team at Intel that introduced a vision for the future of cloud based on concepts of federation and automation. The goal was to build the cloud as a self-aware infrastructure where services were automatically provisioned and the cloud itself would monitor and re-balance resources based on workload usage. A colleague later coined this as a data center that thinks for itself.

As technology has progressed, we have made fantastic strides in delivering the core capabilities for this vision. Cloud native computing with the workload portability required for re-provisioning. Underlying infrastructure that has flexible performance capabilities to manage different workload types, and the promise of infrastructure composability on the horizon. And stack automation giving us the ability to manage orchestration by policy. AI promises even more advancements towards that data center that can actually think through decisions historically managed by humans.

So why haven’t we achieved this nirvana? You could argue that the cloud itself has begotten the core issue. Its very nature has made it incredibly easy to spin up workloads, and different types of workloads (serverless, microservices, etc.), and this complexity has given rise to an equally complex set of tools to oversee more complex allocation of resourcing. I got a chance to talk to Abby Kearns, a leading innovator in cloud computing, about the challenge. Abby is a powerhouse when it comes to deeply understanding cloud stacks. As the former CTO of Puppet, she led the app stack automation company’s delivery of technology that helped inspire broad industry innovation in the space. Her viewpoints are also shaped by her time leading the Cloud Foundry, a powerful cloud consortium.

In our discussion, Abby pointed to this complexity as a key challenge that gates IT oversight today and offered some hope on cloud stack innovation. Check out the interview and, as always, thanks for engaging - Allyson

TechArena’s Three Innovations You Need to Know about Heading into 2023

The last few weeks have been rich with new technology announcements, some with a lot of fanfare and others that happened in the corners of the tech arena that will grow in importance next year. Three grabbed my attention as topics that should squarely fit on your radar as tech innovations that will disproportionately reshape technology as we know it. Let’s take a look.

The new Google? OpenAI introduces ChatGPT (version 3.5)

We have all interacted with chatbots, and to interact with chatbots is to sometimes be infuriated by them. The technology simply has not advanced enough to mimic human thought, ask follow-up questions, or deliver detailed answers, and because of that we often find ourselves caught in the loop of a very predictable decision tree that doesn’t deliver. I love Alexa, but she still can’t tell me what the most important news of the day is, summarize abstract thoughts, or suggest ideas other than to place items in my Amazon shopping cart. I’m seeking a deeper relationship that at this point is very much one way.

Enter ChatGPT from the OpenAI team. This new AI model feels like it may be finally delivering on what we have been seeking all along…and more: human-like communication that can deliver detailed answers, ask follow-up questions, and admit mistakes. This will be a step forward for consumer-facing applications, but there’s more to the story. ChatGPT has shown promise to code software, write prose in the fashion of a specific author, and orchestrate cloud instances. The breadth of application is inspiring as the community starts integrating this technology into development streams, and the acute developer interest suggests that this will happen quickly. That doesn’t mean that there won’t be bumps in the road. Stack Overflow almost immediately banned ChatGPT-generated answers because incorrect responses were overwhelming the site. Others have called out that ChatGPT lacks morals, doesn’t necessarily like humans, and is as capable of creating phishing scams and malware as it is of writing software with good intentions. More alarmist views hold that it will redefine our markets and eliminate countless jobs, which certainly could be the case with this and other AI technology long term but likely won’t happen in week one of implementation.

The net takeaway? This is a powerful tool, and integrating it into business could provide incredible advancement. Both the technology and the broader space, where further innovation is expected, are not to be ignored.

Meta’s new Protein-Folding AI will Re-Shape Life

OK, maybe that sub-head was a bit hyperbolic, but Meta’s introduction of the ESM Metagenomic Atlas is an important follow-on from DeepMind’s 2020 announcement of AlphaFold2 in massively accelerating protein shape predictions at the molecular level. First discussed on the TechArena by VAST Data’s Jeff Denworth, AlphaFold2 has had an incredible impact on the scientific community. Institutions like the Max Planck Institute have already come out stating that these tools have solved protein structure challenges that traditional science was failing to unlock, and Amazon made a splash earlier this year with the introduction of AWS Batch providing the computing power at scale to fuel research.

What’s the impact? Researchers can apply this to understand the human genome at a level that has been out of reach, accelerating treatments for disease, new vaccines and other medical breakthroughs. The Metagenomic Atlas has already mapped over 600 million proteins in its database, driving National Geographic to name it one of the top 22 innovations in 2022. Science magazine had already named AlphaFold2 and other AI-driven research in this space as 2021’s breakthrough of the year.

The dystopian thinkers will connect the dots that all of the large cloud players are racing to control the foundations of life and the power that comes with such knowledge. They may also reflect that Meta is working double-time to re-shape its image towards the metaverse and this advancement places them very far away from Facebook likes. For me, these concerns are far outweighed by the fact that both Google and Meta have made these tools open source for the scientific community to integrate into labs immediately, and we all stand to benefit from the accelerated innovation from their collective discoveries. Watch this space.

A Sledgehammer to Moore’s Law Limitations

You’ve read up to this point and thought, “Allyson, I’ve already heard of chatbots and protein folding.” True! These have garnered a boatload of attention in their respective circles. But my third technology to watch heading into 2023 is one that quietly launched at Supercomputing last month, the Universal Chiplet Interconnect Express, or UCIe specification. I first wrote about it in the summary of SC22’s advancements and have since gotten even more excited about its promise after talking to its architects. So what is UCIe, and why am I holding it in such high esteem?

To answer this question, we need to first look at the problem that UCIe and other technologies like the Compute Express Link (CXL) are trying to solve. Moore’s Law is running out of gas, and shrinking process technology, the foundation for computing performance advancement since the birth of the microprocessor, will only get us so far in advancing semiconductor density. While CXL has provided a fantastic industry standard to connect chips on a computing platform and has eliminated historical proprietary interconnect schemes that have gated true heterogeneous computing, UCIe goes a leap further, enabling this same industry standard foundation within the chip package. What does this mean? Chiplet architectures, first envisioned by DARPA’s CHIPS program back in 2017, can finally be advanced without the limitation of proprietary technology. This means that both industry and consumers of computing can dial in the right balance of CPU, GPU, DPU and other chiplets in a microprocessing package to deliver the best performance capabilities for targeted workloads. The who’s who of semis have engaged in the UCIe specifications both from a standpoint of silicon suppliers (enter AMD, ARM, Intel, NVIDIA, Samsung, TSMC and more) and large service providers with their own silicon aspirations (hello Alibaba, Google, Meta, and Microsoft). Notably absent is Amazon, and we’ll need to watch to see if they really want to fight a standard consensus that seems destined to take off in products across the industry.

So why am I elevating UCIe to this lofty position? Microprocessor advancement is still foundational to breakthroughs of everything that runs on silicon. Without access to more performance our advances will be gated to today’s processing capabilities, and the computing industry sits alone in terms of the rate of innovation enjoyed for decades based on silicon advancement. Further breakthroughs simply require it, and for me that is a big call for applause for the team who delivered the UCIe 1.0 specification. Expect more details on the TechArena soon. As always, thanks for engaging – Allyson.

May the Odds be Ever in Your Favor

I love studying the societal disruption that is AI, and we’ve uncovered some phenomenal stories on the TechArena including NASA’s study of global air pollution and Lyssn’s work in improving mental healthcare for all. I’m a strong believer that if we’re to solve the largest challenges facing humanity, from climate change to medical breakthroughs, artificial intelligence will be at the solution’s core. Of course, AI has disrupted everything from the inspirational to the banal (yes, Amazon, I AM the perfect person for the Dragon Pip plush toy that you’ve recommended) and that is what makes watching its arc so much fun.

One of the better stories in this realm of fun ways AI is improving our existence is WalterPicks. Anyone that knows me knows that I love Fantasy Football and often cajole others into playing through the football season. Draft day is an occasion in the Klein household with experts consulted, statistics vetted, and coffee consumed to ensure that I’m at the top of my game. But what if there was a better prognosticator for draft selections and weekly lineups? I came across the founders of WalterPicks through a small mention in an online story and was wowed. They have developed a model that uses machine learning to predict fantasy football outcomes, and they’re outperforming the big guys Yahoo and ESPN by 17% over the last two years alone.

What’s better, they developed this model out of a passion for the game in a machine learning course at Ithaca College. Did I mention that I love stories like this? Once they put their tool to the test during the season they knew they’d struck fantasy gold. In an industry that is growing past $45 billion by 2027 (a fact that is startling to all who do not play fantasy sports games), WalterPicks could be an essential tool for all gamers hoping to win their leagues. I hope you enjoy the episode and thank you for engaging. - Allyson

Fantasy Football Supremacy with WalterPicks’ Sam Factor

TechArena host Allyson Klein chats with WalterPicks co-founder Sam Factor about how AI helps deliver 17% superior recommendations to fantasy football lineups vs. the major recommendation sites.

Improving Mental Health with AI

As we kick off the holiday season this week with Thanksgiving in the US and Black Friday everywhere, it’s important to recognize that this time of year can be more stressful for many. In fact, according to the American Psychological Association, 38% of people surveyed stated that stress increased during the holidays due to time and financial pressures, gift giving and family gatherings. With our mental health providers already stretched by a populace stressed by pandemic and economic concerns, I was curious about what our industry was doing to help. This is when I discovered the team at Lyssn (pronounced listen).

Lyssn was formed in 2017 by a group of psychologists and engineers keen to apply artificial intelligence to improve the quality of therapy and assist practitioners. The underlying technology was born out of a study on AI training of therapists funded by the NIH, but researchers realized they were onto something that could have meaningful application for both public mental health resources and private sector clinics.

One thing that struck me about this company in particular was that the leadership were former practitioners themselves, making them better equipped both to identify the parameters for algorithm training and to understand the mindset needed for practitioner adoption. In fact, Lyssn co-founder Zac Imel continues as a professor of counseling psychology at the University of Utah in addition to his responsibilities at the company. Our discussion covered the interesting journey of Lyssn since its foundation, how AI is a resource for therapeutic practice, not a replacement for human-to-human engagement, and how state and local governments and clinics across the nation have signed up for Lyssn solutions.

Listen to the episode to hear how Zac and other researchers trained their models specifically for the therapeutic environment, and how AI has evolved in this short time to provide more robust assistance to practitioners, tapping natural language processing to analyze conversations in real time and make recommendations for improving the quality and outcome of the therapy experience. I hope you find this application of AI as inspirational as I did as you consider the real-world impact driven through adoption. Thanks for engaging and Happy Thanksgiving - Allyson
