From Chaos to Control: The Future of Automotive E/E Architecture

With Halloween just around the corner, it seemed appropriate to bring a little intrigue and mystery into this week’s blog. In the heart of Silicon Valley is the Winchester Mystery House which, throughout the years, has grown to become a relatively popular tourist attraction.

Owned by Sarah Winchester, the heiress of the Winchester Repeating Arms fortune, (think Winchester rifles), this mansion was constructed over the course of 36 years from 1886 to 1922. The legend goes somewhere along the lines that she regularly heard haunting spirits tell her that tragic things would happen if she ever stopped construction on the house. This was the motivation that caused her to transform her eight-room farmhouse into a 24,000 square foot mansion with 160 rooms, 47 stairways and fireplaces, with 13 baths, 6 kitchens and a host of other amazing factoids regarding the ridiculous size and scale of this mansion.

Beyond its sheer scale, what is most unique about this mansion is that these rooms were added on with very little rhyme or reason or forethought. Sarah Winchester’s obsession with adding rooms led to a floor plan where the location of the rooms and the function that they served were irrelevant. The resulting mansion, that over 12 million people have visited since it was opened to the public in 1923, is a hodgepodge of illogical rooms, hallways, and dead-ends.

Perhaps a harsh analogy, but in a similar fashion, it feels like throughout the years, the automotive industry has continued to add more and more electronics to the vehicle employing an E/E architecture with similar levels of forethought as Sarah Winchester’s floor plan. The continued addition of electronics has led to high-end vehicles with over 130 microcontrollers that are physically located wherever it seemed to make best sense at the time – resulting in what’s commonly referred to as distributed architecture. The resulting wiring harness required to interconnect all the distributed microcontrollers and other devices, has grown from 55 wires with a total length of 150 ft in 1948 to the present day where the wiring harness for a vehicle based upon a distributed E/E architecture weighs roughly 120 lbs and contains 1,500 to 2,000 wires with a total length of 5,280 feet (one mile).

To a certain degree, it’s not too hard to see how this distributed architecture may have come into existence. More than two decades ago, the automotive OEMs viewed electronics as context, not core to their businesses. This led to the eventual spin-out of the OEMs’ EE teams and the formation of Tier 1s, an external company that had indirect responsibility for the OEM’s electronics and associated E/E architecture.

Today, automotive OEMs are becoming acutely aware of the importance and impact of both the electronics and the underlying E/E architecture. This is driving the mature OEMs to shift back to their original model where the electronics teams were vertically integrated. Fun fact, I have been told that this trend is not uncommon across different industries and that the Harvard Business Review refers to this shift from dis-integration back to vertical integration as “the double helix.”

With this greater, more immediate focus on the electronics and underlying shift to greater control of the vehicle through electronics, there is a similar increase in focus by the OEMs that is placed upon the underlying E/E architecture. More specifically, there is a keen focus on how to reduce the size, weight, complexity, cost, and associated poor reliability of the wiring harness. The impact of the wiring harness, which has typically been considered innocuous, has now become very significant.

EV startups without the baggage of legacy architectures have mostly embraced the centralized E/E architecture, which holds the promise of addressing all the above-mentioned challenges associated with the distributed architecture. However, for most mature automotive OEMs with legacy architectures, the move to centralized can prove too much of a stretch. So the approach is to move from a distributed architecture to a domain architecture and then eventually to the centralized architecture.

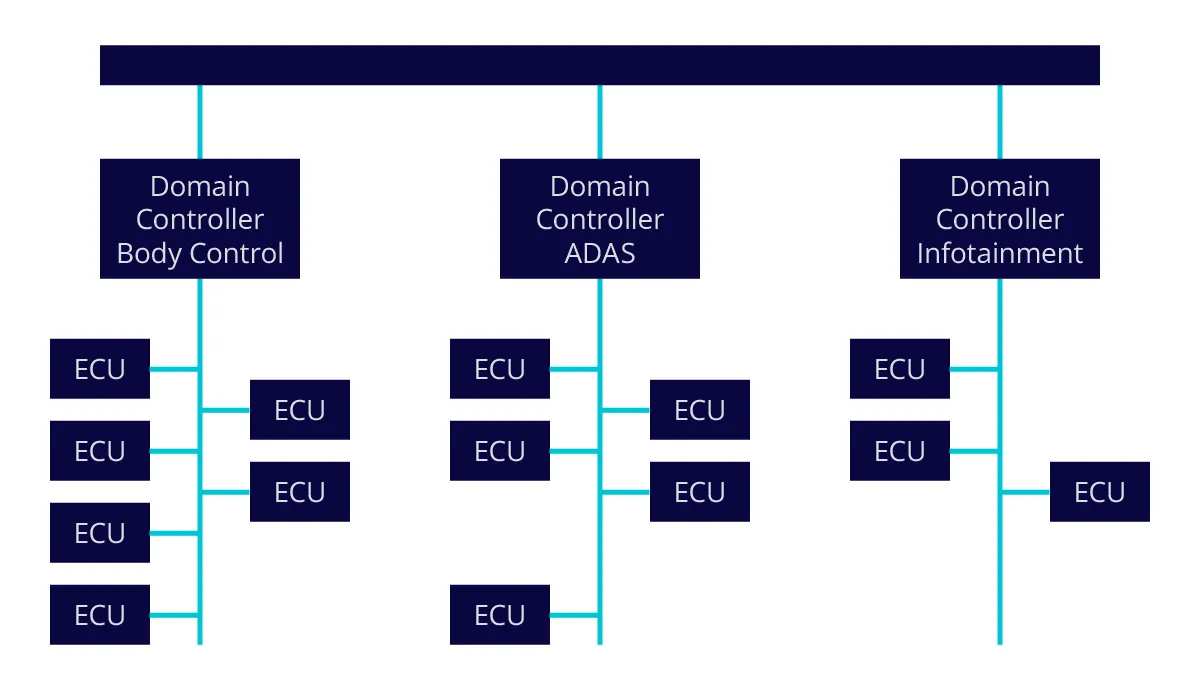

Domain controllers allow for rich communications between electronics control units (ECUs) within a common domain, while isolating unrelated communications between other, unrelated domains.

As shown in Figure 1., one of the key concepts behind the domain architecture is that not all electronics control units (ECUs) need to communicate with one another at all times. This concept is similar to the way that office computer networks are typically constructed. Breaking the network traffic into local area networks with common communication needs leads to greater efficiency and reduced costs. As an example, personnel within an engineering department need to regularly communicate with others in the engineering department, but less frequently with personnel in the shipping department. Breaking these groups into two unique local area networks, i.e. engineering and shipping, allows for rich communication within each group and isolation between the two groups via a bridge. This allows only relevant conversation between either group to occur, and leads to a reduction in wiring while greatly improving communications throughput and reliability.

While the domain architecture offers significant improvements over the distributed architecture, the physical location of the different ECUs directly impacts the physical length of wire required to connect to the appropriate domain controller. The centralized architecture, on the other hand, addresses wiring length and provides many additional, significant benefits.

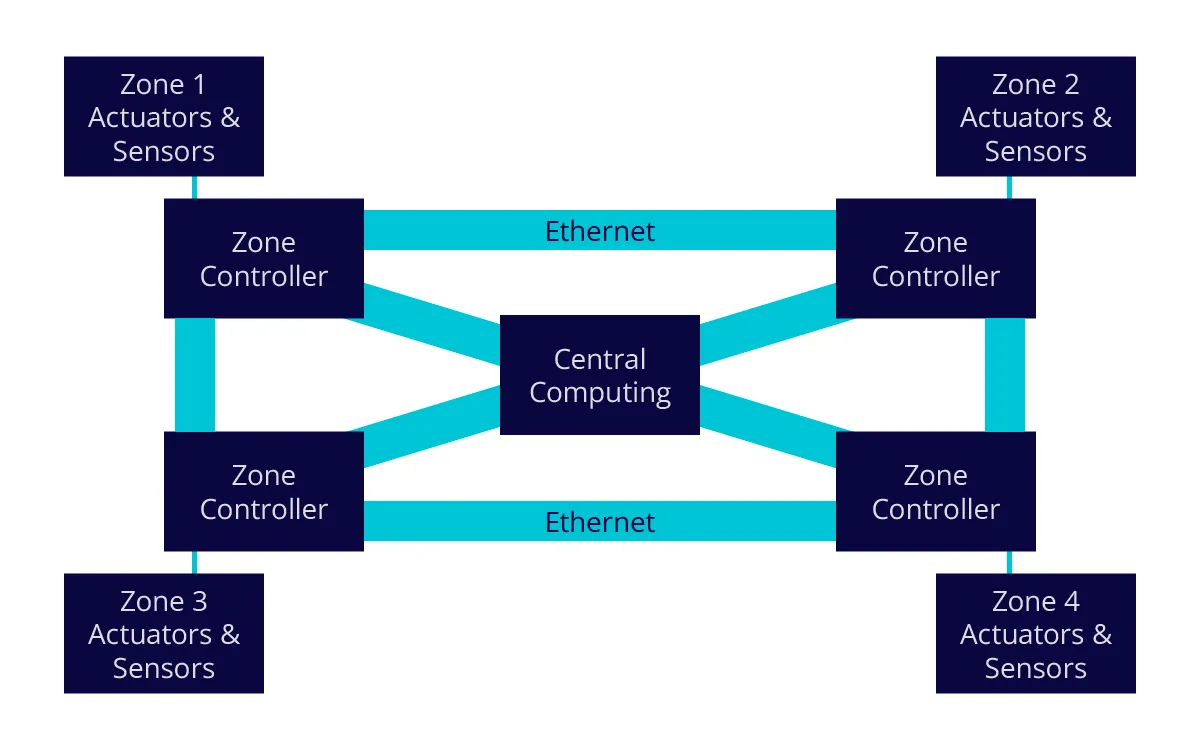

The centralized architecture, as shown in Figure 2, is the eventual, target E/E architecture for most incumbent OEMs. While there are variations on a theme of this actual architecture, typically what they all hold in common is the majority of the computing is aggregated into one common, centralized location. The zone controllers are physically located in different quadrants of the vehicle and connect the different actuators and sensors that are found in the same physical location of a given zone.

For purposes of definition, an example of an actuator would be the physical controller that engages the brakes in the case of anti-lock or automatic emergency braking, whereas a sensor might be a camera or radar that is again, located in the given quadrant, or zone of the vehicle.

As noted in Figure 2., the different zone controllers are interconnected via Ethernet in a mesh configuration – implying that every zone controller has more than one way of connecting to the central computing block. Ethernet connectivity is chosen because it is a very mature, proven, robust serial communication technology that relies on lightweight cabling for connection. Variants of Ethernet, including TSN (time sensitive network) are gaining momentum in automotive as they offer greater levels of determinism in communication timing performance, typically a requirement in real-time embedded applications.

Employing a mesh-based connectivity architecture allows for one or several segments of the network to fail without impact. The network simply seeks an alternative connection path, which is enabled through this scheme. This connectivity topology is typically found in today’s data center in support of what’s referred to as a “fail-over” architecture. As previously mentioned, in many different ways, the vehicle of the future is increasingly becoming a data center on wheels.

The centralization and consolidation of compute resources also leads to a significant reduction in the total number of discrete ECUs while also more readily accommodating computational redundancy, again leading to greater levels of reliability.

Within a given zone, the sensors and actuators can be added based on trim level of the vehicle in a manner similar to populating a PC with a new add-in card. The sensor or actuator identifies its presence and personality to the centralized computer via a unique IP address and the vehicle’s computer’s operation is updated accordingly. This allows for greater levels of simplicity in the manufacturing of different classes of vehicles, which typically contain different numbers and types of sensors and actuators. Different types and numbers of sensors are added on the manufacturing floor based on vehicle type or level. This type of “plug-and-play” also supports the concept of upgrading a vehicle to a higher trim level years after the vehicle has left the showroom floor.

With software scaling into the 100 to 300 millions of lines of code, the importance of practical support of over-the-air OTA software updates cannot be overstated. The distributed architecture with over 130 MCUs that are distributed throughout all corners of the vehicle, does not lend itself to simple OTA updates. A similar point can be made in regards to the manner in which the centralized architecture leads to a more rational and practical way to address cybersecurity.

Without a centralized architecture, the hot industry buzzword, Software Defined Vehicles (SDV) would be almost impossible to realize.

In the same way that the evolving E/E architectures are driving transformation, the SDV is also poised to transform the auto industry in even more significant ways.

This is, however, another topic that I will discuss in a future blog.

Roy Chua of AvidThink on AI, Networking, and Sustainability at OCP

Allyson Klein and Roy Chua, founder & principal of AvidThink, explore AI-driven networking, sustainability challenges, energy efficiency, and more in this insightful episode, recorded at OCP Summit 2024.

Exploring AI Innovations: Chen Lee of Gigabyte at OCP Summit

Join host Allyson Klein and co-host Jeniece Wnorowski in this episode of Data Insights as they chat with Gigabyte's Chen Lee about AI innovations and the future of server technology at OCP Summit.

Ocient: Revolutionizing Data Warehousing for AI Workloads

Live from OCP Summit 2024, this Data Insights podcast explores how Ocient’s innovative platform is optimizing compute-intensive data workloads, delivering efficiency, cost savings, and sustainability.

JetCool's Innovative Liquid Cooling Tech Powers High-Performance AI

JetCool Founder & CEO Bernie Malouin discusses the company’s breakthrough liquid cooling tech, which targets processor hotspots with jets of coolant to boost efficiency across intensive workloads.

Google Cloud’s Amber Huffman on AI, OCP & Future Innovations

Live from OCP Summit, Google Cloud’s Amber Huffman shares insights on AI's future, open standards, and innovation, discussing her journey, data center advancements, and the role of collaboration at OCP.

GEICO’s Rebecca Weekly on IT Transformation and OCP Innovation

In this episode, Rebecca Weekly shares how GEICO is rethinking cloud strategy and embracing OCP for improved efficiency, security, and cost savings in its infrastructure journey.

From Desktops to Data Centers: Zane Ball on Silicon Innovation

In this episode – recorded live at the OCP Summit – host Allyson Klein catches up with Intel Corporate VP Zane Ball to discuss silicon innovation, AI evolution, and the future of enterprise adoption.

Data Insights: Arm’s Eddie Ramirez on Data Center Innovations

Join Allyson Klein and Jeniece Wnorowski as they chat with Eddie Ramirez from Arm about how chiplet innovations and compute efficiency are driving AI and transforming data center architecture.

CoolIT: Revolutionizing Data Centers with Liquid Cooling Tech

Learn how CoolIT Systems is driving efficiency and performance in AI and data centers with cutting-edge liquid cooling solutions in our latest Data Insights podcast.

How Flex Manages Power, Heat and Scale in the AI Era: Part 2

Join us as Rob Campbell from Flex discusses the challenges and innovations in data centers, focusing on power, heat, and scale, while shaping the future of AI and sustainable solutions. This podcast is the second episode in a two-part series. Listen to part 1 here.

How Flex Manages Power, Heat and Scale in the AI Era: Part 1

Rob Campbell of Flex discusses how Flex is driving data center transformation with cutting-edge solutions like liquid cooling, AI-ready infrastructure, and vertical integration for global hyperscalers. This podcast is the first episode in a two-part series. Listen to part 2 here.

A Trio of Trackable Topics at OCP Summit

The OCP Summit kicked off last night with its customary opening reception. This event is the highlight of the year in how hyperscale infrastructure is advancing, and this week's Summit is rumored to be the largest in history.

The elephant walking the halls of the San Jose Convention Center is how infrastructure will adapt to the continued pressure of AI performance demands, and whether open hardware design pivots to a world where performance density and acceleration are the keys to the kingdom. The TechArena is delighted to once again be a media sponsor of the event, and we'll be delivering key takeaways from the show all week. Here are the top things we're targeting for the biggest news at the event:

Performance: How will OCP pivot its focus to address the world of accelerated computing? This is on top of many people's minds at the show that has been dominated by CPU-centric designs for over a decade. This year's Summit is different, with NVIDIA taking a much more visible role in the proceedings and NVIDIA having delivered reference designs to OCP for construction of rack-level AI compute. We'll be tracking how much NVIDIA technology is being embedded into OCP workstreams within our conversations with both members of the foundation and industry representatives.

Power and Cooling: With emphasis on expansion of data centers and delivery of more watts per square foot, the power and cooling industry will be out en masse at this week's Summit. Last year, Submer made waves, literally, with an immersion cooling tank system that got everyone's attention. This same tank was featured in yesterday's podcast with CircleB. However, the discussion on direct-to-chip and immersion cooling approaches continues to bubble, and we expect to hear a lot from the industry on cooling advancements, collaborations like the one announced by Flex on our podcast today, and discussions of dielectric alternatives sending chem geeks into molecular bliss.

Standards: Last year's Summit featured a major announcement about Ultra Ethernet, and AMD made waves yesterday with the introduction of the first Ultra Ethernet adapter to hit the market. We've seen standards advancements in scale up networking, chiplet designs and expect to hear more about memory advancements at the conference. We'll be covering all announcements from the show through interviews with standards leaders assembled in San Jose.

Are these the topics you're seeking from OCP Summit? Follow along by following us on LinkedIn, subscribing to our feed or connecting with us to provide feedback on what you want to hear about most.

TechArena Data Center Compute Efficiency Report 2024

Check out TechArena’s Data Center Compute Efficiency Report 2024 to discover how AI-driven innovation is reshaping data centers, reducing power consumption, and driving efficient compute solutions.

Scaling Innovation: How MIPS Balances Performance with Flexibility in a Data-Driven World

MIPS CTO Durgesh Srivastava shares insights on AI, data centers, automotive edge, and how MIPS is leveraging RISC-V to drive efficient, flexible computing solutions.

OVHcloud Carves Out Unique European Space in Global Cloud Market

Jeniece Wronowski and I recently got the chance to sit down with Gregory Lebourg of OVHcloud, a major European cloud provider that’s been making significant strides in the global cloud market. With a focus on sustainability, data sovereignty, and competitive pricing, OVHcloud is challenging carving out a space that’s distinctly European. Our conversation delved into OVHcloud’s unique approach, their mission, and the trends they see shaping the future of cloud computing.

One of the first things that Gregory emphasized was OVH’s identity as a European service provider. While the cloud market is dominated by American and Chinese giants, OVHcloud stands out as a provider deeply rooted on the continent, adhering to European standards and business practices. This isn't just about where they’re based, but about how they operate. Data sovereignty is at the core of their operations, and OVHcloud ensures that customer data remains protected under European regulations, providing a significant advantage for businesses looking to avoid the complexities of non-EU data jurisdiction.

Gregory leads OVH’s sustainability practices, and in terms of environmental impact, OVHcloud is setting a very high bar. They’ve adopted a circular economy approach, focusing on minimizing waste and optimizing resource efficiency across everything they do. In our chat, Gregory shared that OVH data centers are equipped with custom water-cooling systems that reduce energy consumption by up to 50% compared to traditional air-cooling methods. This innovative approach has earned them an impressive PUE (Power Usage Effectiveness) rating of around 1.1 across most of their facilities, which is significantly better than industry averages. But that’s not all. They also use refurbished servers, which helps them keep costs low while reducing their carbon footprint. OVHcloud’s data centers operate on a massive scale, with more than 400,000 servers across 33 data centers globally. Despite this scale, they’ve managed to maintain competitive pricing without compromising on performance, a feat they attribute to their sustainability practices and vertically integrated supply chain.

We also had a chance to discuss pricing with Gregory, and it became clear that OVHcloud’s commitment to affordability is about more than just competing with other cloud giants — it’s part of their mission to democratize cloud access. By controlling their supply chain and building their servers in-house, they’re able to offer services at 20%-50% lower costs than the major competitors. This cost advantage has been crucial in helping small and medium-sized businesses access high-performance cloud services that might otherwise be out of reach.

One area where OVHcloud is particularly focused is in supporting multi-cloud strategies. Businesses are increasingly looking for flexibility in their cloud environments, and OVHcloud has responded by offering a range of services that can integrate seamlessly with other providers. This approach provides customers with more choices and enables them to build cloud architectures that suit their unique needs.

In today’s digital landscape, data security and privacy are critical concerns. OVHcloud takes a strong stance on data sovereignty, a major selling point for European customers wary of foreign jurisdiction over their data. They’ve also aligned their services with GDPR (General Data Protection Regulation) requirements, which gives customers the peace of mind that their data is protected according to some of the strictest standards in the world.

During our chat, Gregory underscored their commitment to transparency and compliance. They’re actively involved in initiatives like GAIA-X, which aims to create a federated and secure data infrastructure for Europe. This aligns with OVHcloud’s long-term vision of building a robust digital ecosystem in Europe that champions trust and user control over data.

When it comes to future technologies, Gregory shared that OVH is keeping its eyes on the horizon. They’re particularly interested in quantum computing and AI, areas they believe will transform the cloud landscape in the coming decade. Their partnership with the French government on the Plan Quantum initiative exemplifies their proactive approach to these technologies. As Gregory sees it, quantum computing holds the potential to revolutionize data processing and encryption, making it a game-changer for sectors like finance, healthcare, and defense. Meanwhile, OVH is investing in AI-driven tools that will enhance cloud services, offering more intelligent insights and automation options for customers.

So what’s the TechArena take? After our conversation, I walked away with a sense that OVHcloud is setting a very high standard for innovative cloud services, designed for their market and aimed at delivery with sustainability in mind. Services are high-performance, affordable, and sustainable, reflecting European customer priorities. Their commitment to data sovereignty is particularly timely, as businesses are becoming increasingly pressured to manage these aspects to keep aligned with government regulations. OVHcloud’s approach is refreshing in a market dominated by a few powerful players. For businesses in Europe and beyond, OVHcloud is proving to be a compelling alternative to the usual suspects, and I’m excited to see how they continue to evolve in the years to come.

Listen to the full conversation with OVHcloud here.

CircleB: Optimizing Data Centers for AI Workloads

Tune in as Jason Maselino of Circle B discusses the role of Open Compute Project in revolutionizing data centers with energy-efficient solutions, modular designs, and AI-ready infrastructure.

Exploring Aerospace Innovation with Airbus Acubed's Arne Stoschek

I recently had the pleasure of hosting Arne Stoschek, VP of AI and Autonomy at Acubed by Airbus, In the Arena. If you’re not familiar with Acubed, it’s the innovation hub of Airbus based in Silicon Valley, where they’re tackling the big questions of tomorrow’s aerospace industry. Arne and his team are at the forefront of pioneering advancements in autonomous flight and digital design tools. Our conversation gave a fascinating glimpse into the future of aerospace.

The Vision Behind Acubed

Arne shared Acubed’s mission to push the boundaries of what’s possible in aviation. While traditional aerospace projects can take decades to develop, Acubed operates at Silicon Valley speed, testing and iterating quickly to fast-track technology to maturity. It’s this ability to act nimbly that distinguishes Airbus from other aerospace companies working as a “startup within a big company” where Arne’s team has the creative freedom to explore without being bound by traditional corporate constraints.

When you consider the level of scrutiny that goes into design and manufacture of aircraft, this approach is fantastic from my perspective given the unfettered ability to dream big prior to facing integration of technology through regulatory hurdles. Arne shared that he focuses on harnessing the creativity and innovation found in tech while leveraging Airbus’s deep knowledge and resources within the aviation domain to produce results that are both innovative and practical for the arena in which they are targeted.

Autonomous Flight Advancement

One of the most exciting areas of Acubed’s work is in advancement of autonomous flight, something that at rudimentary level has existed for some time in aircrafts. Arne explained that Acubed is building advanced autonomous systems that can operate safely and efficiently alongside human pilots with an ultimate goal to reduce pilot workload and increase safety, particularly in urban air mobility (UAM) scenarios.

When it comes to autonomous vehicles, many of us might think first of self-driving cars. However, Arne believes the impact of advancement in autonomous flight could be even more profound. He envisions a future where fleets of autonomous aircraft could relieve urban congestion by transporting people and goods in ways we’ve only seen in sci-fi movies. Arne pointed out that Acubed’s technology is already proving itself in smaller pilot projects, which is a huge step toward making advanced autonomous flight a reality.

Digital Design Tools: The Key to Faster Innovation

Arne shared how digital design tools are transforming the aerospace industry. Traditionally, aircraft design is a painstakingly slow process, but Acubed is leveraging digital twins and other cutting-edge technologies to speed things up. These tools allow his team to create virtual models of their projects, test them in simulated environments, and identify potential issues long before a physical prototype is built.

Arne believes these digital tools will be crucial for the future of aerospace, as they enable rapid iteration and refinement. By incorporating real-world data into these simulations, Acubed can make better, faster decisions that ultimately result in safer and more efficient aircraft.

So what’s the TechArena take? Listening to Arne, it was hard not to get swept up in the excitement of Acubed’s work. They’re tackling some of the most complex and ambitious projects in aerospace, with a clear focus on sustainability, safety, and efficiency. What stood out to me most was Airbus’ approach to innovation — bold, forward-thinking, and unafraid to challenge the status quo. As someone who’s watched the tech industry evolve over the years, I’m always thrilled to see groups like Acubed bringing that same spirit of innovation to other sectors. Arne and his team are not just imagining the future; they’re building it, and I can’t wait to see where they’ll take us next.

To learn more check out our interview with Arne.

5 Fast Facts with Flex’s Rob Campbell

I recently caught up with Rob Campbell at Flex to learn more about how power and cooling technology choice helps yield improved efficiency to solution delivery in the market. As an advanced, end-to-end global manufacturer, Flex offers

the data center industry a unique combination of manufacturing, products, and

lifecycle services focused on data center IT and power infrastructure.

Rob is Flex’s President of Communications, Enterprise & Cloud. He oversees the team that supports leading cloud, data center operators and communication solutions providers to fuel the expansion of next-generation AI data centers, enterprises and communication networks. In the data center space, this includes hyperscalers, tier-two operators, colocation companies, and the solution providers that support them.

ALLYSON: Rob, thank you for being here today. Flex engages with some of the world’s largest cloud providers to deliver foundational technology to fuel the digital services we all rely on. Why is this moment so unique in hyperscale buildout?

ROB: The proliferation of artificial intelligence (AI) has broken the typical model for data center design and operations, and the industry faces a number of challenges around power, heat, and scale.

There’s increased IT complexity in the data center core and major power challenges at every level of the data center, and even out into the grid. Whether a cloud service provider is upgrading an existing data center or building a new one, there is a need to think about compute capabilities along with the entire power envelope of the data center. These dynamics are driving the evolution towards integrated systems and solutions.

Since every data center has different requirements depending on the mix of applications, size, and a variety of other factors, there is no one-size fits all solution. This means that the largest companies require customization in the various products and services that are part of a vertically integrated solution. At the same time, data center operators want more plug-and-play like deployments for speed and simplicity.

Finally, demand has been consistently high, unlike the normal variability we see in other industries. And companies have compressed timelines to deliver everything from new processors to greenfield data centers.

There are a lot of dynamics at play, and Flex is well positioned to support the industry to turn challenges into opportunities.

ALLYSON: When you consider the size of investment for greenfield data centers, the challenges in grid availability for greenfield projects, and the complexity of brownfield upgrades, how does a power and cooling partner like Flex work with customers to ensure the scale and speed of solutions to get data centers powered up swiftly and reliably?

ROB: A lot of companies are trying to support accelerated timelines for AI technology and data center expansion. They may have great technology, but they often lack the ability to deliver at the scale needed to relieve the pressure on these data center operators.

What makes Flex stand apart is the fact that we offer a portfolio of data center IT and power solutions combined with advanced manufacturing services across the product lifecycle for servers, storage, vertically integrated racks, and power products coupled with cooling at the board, rack and facility levels. We deliver scale and speed so companies can execute their ambitious AI and data center strategies and accelerated timelines.

We deliver a number of embedded and critical power products, but let’s use the example of a power pod from Anord Mardix, a Flex company. These power pods enable the rapid deployment of power capacity, which could be in a greenfield data center or to upgrade power capacity in brownfield data centers.

Our team designs, builds, tests, delivers, and commissions these modular, vertically integrated pods with everything needed to connect to the grid. We’re able to deliver 84% reduction in on-site man hours during installation phase and 75% reduction in onsite assembly and testing. This saves a lot of time and money. For brownfield data centers, you can drop a pod onto the site and increase power capacity almost overnight. For greenfield projects facing a shortage of construction workers, this solution eliminates that headache, among others.

ALLYSON: The metrics of power from platform to rack to data center are changing dramatically with the growth of AI server cluster deployments. How does this change the fundamentals of what you deliver to the market?

ROB: The rapid adoption of AI servers, primarily by hyperscale data centers, requires a different approach to how you think about power at the server, the rack, and the entire data center.

We have customers deploying 30kW racks today, and that will need to double to over 60kW in the near term and exceed 100kW in the next several years. This means we have to think about the rack as an integrated system where we consider factors such as server power density, rack power, and rack cooling. This means that data center operators are looking for partners that have the capabilities to design and manufacture a complete AI server cluster and deploy it at scale worldwide.

Our fundamental value is the combination of power products with advanced manufacturing and services across the product lifecycle, delivered on a global scale.

We understand the long-term trends and challenges and continue to drive R&D and innovation to help customers stay ahead of the challenges. We engage data center operators and vendors very early in their product development lifecycle, often years ahead of an official launch. This includes custom power modules designed for GPUs, CPUs, FPGAs and SoCs, as well as data center racks, power shelves, power pods and a host of other data center infrastructure solutions. We’re then able to deliver these innovations at a scale and speed that is unique and valuable to our customers.

Technically speaking, AI creates multiple ripples starting with the chip and cascading across the data center and ultimately the grid. Power consumption, rack density, heat generation, energy loss and grid disturbances are a few of the challenges.

Flex is focused on improving power efficiency and distribution, architecture optimization, and cooling. This includes ongoing architectural advances to place point-of-load DC/DC converters as close to the power source as possible for greater power accuracy, efficiency and latency. For most applications, the traditional lateral placement of a converter alongside the processor on the printed circuit board (PCB) is effective, and forced air cooling works well with this design.

AI is different. These AI processors and DC/DC converters need to be as close as possible to avoid static and dynamic voltage drops across power rail connections. A vertical power delivery (VPD) module, as the name suggests, places the DC/DC vertically underneath the processor on the bottom side of the PCB for optimum power transfer and minimum power delivery network losses. This design also aligns well with direct-to-chip liquid cooling with a cold plate alongside the DC/DC converter and the processor.

We’re designing power shelves to stay ahead of expected 125kW and 1MW rack requirements. We’re also looking at the use of different voltages and more concentrated AI clusters to better manage power demands.

Additionally, high-speed switching with GPUs can create disturbances and transients that affect power quality. This makes it necessary to manage these impacts carefully to avoid further inefficiencies. We recently announced Flex’s Capacitive Energy Storage System designed with hyperscale partners to balance peak power and protect the grid from line disturbances during AI training and inference periods. The solution includes state-of-the art capacitor technology from Musashi Energy Solutions.

These are just a few examples of the innovation we’re driving in collaboration with customers and the ecosystem.

ALLYSON: Cooling technologies are critical within this context as well. How do you see the change to liquid cooling, and how does Flex plan to play a role in this arena?

ROB: Liquid cooling has multiple drivers that are supporting the adoption and growth of liquid cooling technologies in the data center. For example, rising rack power densities have already exceeded the ability for traditional air cooling at the server and rack level, and are driving innovation in cooling technologies. Also, large data centers have seen marginal improvements in efficiency with the current cooling technology and are looking to innovate and re-architect data center designs to meet their sustainability goals.

Flex has over a decade of experience in liquid cooling, and we continue to invest in liquid cooling technologies to provide our customers with innovative power and cooling solutions that support their large-scale deployments of AI server clusters. Liquid cooling is a critical part of the Flex strategy to be the partner of choice for our data center customers, and complements our existing portfolio of critical power, embedded power, mechanicals, and IT infrastructure products and services.

ALLYSON: Where can our readers find out more about Flex solutions in this space and engage the Flex team to learn more?

ROB: Visit our website at www.flex.com and if you’re attending the OCP Global Summit, stop by booth A11.

You Can’t Have It All: Managing Expectations in Complex Projects

“You know, when I fumbled and lost the West High game, it took a whole roll of LifeSavers to make me feel better.”

“You got a whole roll?”

“Here, there’ll be other games, kiddo.”

-Commercial from 1976

A lesson in planning: Ineffectiveness is habitual.

There. It’s in print. Especially as veterans of the technology community, we feel that we can escape the ravages of ennui, entropy, etc., because we took the hard road, and it steeled us for non-failure. Our plans are exceptional, and setting those clear goals and expectations will magically coalesce the team to whip whatever schedule or project into a shining example of efficiency and excellence. (Leading to exasperation and excuses.

Several current high-tech examples come to mind, but the personal is always the best. So we offer the following lesson, still ongoing.

Yer’ Humble Author (YHA) was reminded of the rules of recently, specifically during a recent discussion with local county government officials about a complex taxpayer-funded project in our purview* that was about to tip over the top of budgetary expectations. The conversations were sanguine on at least one end, mostly because some failure is usually an option in certain circles. But it prompted several long-ago planning and organizational lessons to come to mind.

In a couple of those conversations, an old technology saying was presented. When faced with the triumvirate of schedule, features, and budget, you-the-management may pick any two. That doesn’t mean you’ll get both, but you clearly won’t get the three. Pressure comes in all shapes and sizes. And while it’s supposedly good for carbon and silica, it’s not so much for plans. Even those of us who are pretty good at screaming into the buffeting winds are eventually sandblasted to submission. Low expectations aside, it can be hard to accept that you can’t keep sliding the levers and hope nobody notices the migrating goalposts.

One opinion (the correct one) would point back to clear goals and expectations: it will cost this much and arrive on this date.

Said project still looks very good for schedule (two months left!). And the entire team is currently very focused on understanding the trade-offs that are pushing the budget and limiting any new unnecessary expectations that might occur. We’re all sure that it’ll be a non-contentious product introduction that the community will mostly appreciate. This leads to another habit good planners embrace.

YHA’s favorite direct manager (yes, they’re all ranked, and most of them know where they land on the spreadsheet) was very fond of building his organization around a simple methodology: We Suck Less. Like hikers encountering ursine denizens, sometimes it’s okay to just be a little faster. While this particular project looks to be taking 2.5% more than hoped, any someone could cast within the territorial boundaries of said government budget and find four or five similar projects that freewheeled one of the three and are currently blowing the other two. The comparisons will still create eventual kudos that will further create favorable memories when we’re done.

It’s still about expectations. Even while reminding those in charge that We Suck Less, it’s useful to gently remind the charges that We Still Suck. As said above, permission to spend above budget isn’t unlimited. So those of you in planning, please remember, pick your two and be firm in your fluidity. Your products and your customers will thank you for it.

*(When one “retires” a bit too early, one ends up volunteering skills taught in the brutal territory of high tech to help the less experientially-fortunate, which will be a common feature in this space. It’s okay to be bad at retirement sometimes.)

Don’t Skip Leg Day

Weightlifting, like many sports, has a distinct culture that I started learning about when one of my sons started working towards maxing out his scores for a military PT test. He was oriented entirely around one objective and set of exercises until the test was updated. That military branch realized they needed to test for proficiency in movements more typical in the field. At this point, skipping leg day was not an option since chicken legs aren’t efficient or effective at transporting a payload amounting to 180 lbs of sheer upper body muscle.

Computing services have also tripped over the same over-engineering of a single element, because there’s no time, no cash or no clarity on what commercially viable uses a technology or system will have. It is inevitable that if you get enough processing power to solve a problem, your next challenge will be keeping that processor from stalling because – like a no leg day curlbro with chicken legs – the transport layer is too tiny to move the payload except at a snail’s pace. Recently a friend at one of the hyperscaler cloud providers told me “we have demonstrated we can multiply numbers effectively with AI GPUs. Now it’s a mere matter of keeping the beast fed.”

Having had the opportunity to work on 3DXP memory technology that would sustain a data lake the depth of Lake Tahoe and was inhibited by its connection to the rest of the world being the width of a drinking straw – I do wonder who tames whom. Will the unique movement, uses, types and amounts of data that feed the AI beast transform the network? Or, given how much the network has clapped back at compelling but unsustainable business models (true cloud gaming, for example), perhaps I should wonder what the network will do to the AI beast.

AI requires enormous amounts of data to support model advancements. Omdia recently stated that by 2030 75% of all network application traffic will involve AI content generation, curation or processing. When I was running a “Visual Cloud” business in 2020, Cisco’s annual networking report made a near identical claim that video traffic represented 75%+ of all internet traffic. Despite the dominance of video, there were a number of network characteristics that video-based workloads have to work around to improve delivery of their payloads. The hardware at the end points processing these video payloads had a nearly inconsequential role in the service – the network in between enabled or eradicated video business economics.

When it comes to AI bandwidth “more is more” – yet anyone who tells you bandwidth is cheap and plentiful is, in the words of the Dread Pirate Roberts – selling something. AI imposes the requirement for near real-time responses. Network responsiveness correlates to distances – in the datacenters, between data centers and on the internet. AI requires lossless delivery networks. In the 1980’s “Sneakernet” was coined because interoperability and lossless communications were safer if transporting data on physical discs between compute networks. In the 2020’s, “command streaming” seemed like the best way to make cloud gaming economically feasible, yet GPUs couldn’t reliably count on a perfect, lossless transmission and often displayed garbage due to the occasional packet drop.

If this isn’t a tall enough order, the network must be zero-trust, since the data used in AI is often sensitive, while sustaining extensive east-west traffic exchanges. All that, plus adhere to a broad industry standard with a diversity of suppliers, while reaping the benefits of high volume manufacturing economics.

I would love your perspectives here:

-Will AI force the industry to get serious about network “leg day,” accepting that networks are a foundation for AI infrastructure as much as legs are a foundation for a fully optimized human body?

-Or, similar to the infamous “penguin walk” after leg day, are the past 30 years of network architecture buildout such a disincentive to change that AI infrastructure will have to adapt to the network?

Why My Wife and I Decided to Write a Book Using ChatGPT

Toward the end of 2022, ripples of ChatGPT-generated buzz began to propagate through my network of technical colleagues. The whisper was, “This is new. This is something.” I dutifully set myself up with a ChatGPT account, and like almost everyone who tries modern AI for the first time, my head began to fill with questions and concerns. I was also impressed.

It is true that there are future existential threats that humanity will need to navigate, and which I will touch on below, but my existential fears were more immediate: I had recently published my third book on AI and I began to wonder, “Was that my last book? Has AI overtaken humanity in the realm of creative writing, and nudged me off the top of the food chain?”

I shared my concerns with my wife, Theresa Hart, who is an entrepreneur and attorney, but rather than fret, we hatched a plan. We began work on a new AI book, to be written entirely by ChatGPT and illustrated by an AI service called Night Café. Thus, “ChatGPT, An AI Expert, and a Lawyer Walk Into a Bar…The History of Creativity and Communication” was born. The rules were simple: We could enter any prompt we liked, but we could not directly edit the resulting text.

Testing ChatGPT’s early capabilities

We explored the limits of that version of ChatGPT, we asked dumb questions, we asked evil questions, we asked ChatGPT to write jokes and to entertain us. What we found was a tool that went far beyond any previous technology that had ever existed in the areas of language creation, and in many cases we observed behavior that mimicked creativity. We also found that ChatGPT had limitations that prevented it from always getting facts right, and we noted that it could not form an opinion or argue a point for the purposes of making a case or telling a story.

In short, ChatGPT was a powerful tool for augmenting humans, but not yet one that could replace them. I began to think of Generative AI (“GenAI”) as more of a colleague than as a competitor. Phew!

Fast forward almost two years…We have seen explosive growth in the development and propagation of new GenAI models: multiple versions of GPT and ChatGPT, Claude, Gemini, and Llama, just to name a few. Of particular note are the multimodal GenAI models that can generate images, video, and audio from text, and vice versa, because these technologies have been used by criminals to scam companies and individuals out of millions of dollars and they have been used to manipulate the public with false imagery.

These developments are generating excitement, but also reigniting our original existential fears. Will my job be displaced by AI? Will I be scammed by an AI? Will AI take over the world? What legislation should be put in place to protect human society from AI?

This last question is particularly timely: Governor Gavin Newsom of California just vetoed some broad legislation aimed at regulating AI and making companies developing AI accountable for some misuse of their tools, and requiring specific features to be incorporated into AI systems by developers.

I can see on social media that the majority of my high-tech colleagues are celebrating this veto as a victory in the name of speed to market, innovation, and fewer regulatory hurdles to develop products. I personally would assert that it is fortunate that this legislation did not pass because it arose out of fearful, somewhat uninformed thought processes and was not likely to result in beneficial, meaningful improvements in the way that we develop and deploy AI.

Thoughtful AI legislation requires discussion across disciplines

I also know for a fact that leaders in a variety of disciplines are strongly in favor of thoughtful legislation that will help ensure protections for consumers and workers without putting unrealistic expectations on the folks who are developing new technology. I was very fortunate to participate in a private, round table discussion with a dozen well-informed and connected leaders from Silicon Valley, Texas and Washington, DC, covering areas of expertise as broad as quantum computing, robotics, healthcare, governance and policy, venture capital, and international tech journalism. Frankly, it was such a who’s-who, that I had to pinch myself that I was included in the cast.

What I experienced was a balanced discussion coming from a diverse group of perspectives, all converging on a few key ideas: “bad” regulation will hurt innovation and potentially negatively impact national security; “good” regulation is needed to ensure transparency about the dangers and limitations of the technology that is being developed so rapidly right now; concerns about job loss in some sectors are real, so incentives for upskilling employees as automation of their jobs increases will be necessary, but using regulation to slow down deployment of new automation technology is not a good idea.

Bad regulation in this context tended to be specific requirements on what an AI system could do, how it was developed, requirements to expose the details of data used to train AI, and importantly, direct accountability for misuse of tools by third-party evildoers.

Good regulation tended to include labeling, so that users know they are interacting with AI or experiencing AI-generated content, increased transparency around the potential risks and shortcomings of AI output, and expansion of existing rules to ensure that using AI to scam or manipulate people is punished vigorously.

I was impressed by the overall sentiment from this group of technical and tech-adjacent leaders, that their role is not to resist regulation at all cost, but to provide informed input to lawmakers that will ensure that we end up with sensible regulation that is good for society as a whole.

AI tool developers: Inform the public, lawmakers about capabilities and risks

Getting back to the original subject of this article, it is crucial that the people who are developing these tools, and those who are using these tools on a daily basis to create value, continue to inform the public and lawmakers about what the real capabilities of the latest GenAI systems are, where there are known risks and design flaws, and what we might realistically need to prepare for in the next one to two years. Beyond this timeframe, the future is not knowable, so we also need to maintain some flexibility in our approach to AI regulation. I hope that the discussion I participated in, which was intended to generate real inputs to lawmakers, is being mirrored by many others in tech who can really speak to the strengths, weaknesses and evolving abilities of modern GenAI.

One of the most distinguished members of the group pointed out that it is hard to fix law once it is in place. He cited the HIPAA law that was designed as an initial attempt to protect healthcare patient data privacy in anticipation of an increasingly connected world. The law was written in 1996, before the Internet, before mobile phones, before social media, and is now painfully outdated, and yet, despite these shortcomings it has not really changed since 1996. So we need to be flexible in our approach to AI legislation.

As I reflect on all of this, I wonder if perhaps it’s time for Theresa Hart and me to get together with ChatGPT to write another book. This one will be about AI safety, societal impact, and the quest to create beneficial AI legislation. Maybe it will be called, “ChatGPT, an AI Expert, and a Lawyer Walk into Congress…”

A Primer on Functional Safety in Auto

Deep thoughts - if two self-driving cars get into an accident… who would be at fault? The automotive industry’s case for functional safety.

Hopefully this is a somewhat tongue-in-cheek way to grab your attention. To be clear, self-driving cars aren’t the primary driver of automotive functional safety (FuSa). Automotive functional safety has been an area of focus since 2011, when the Automotive Safety Integrity Level (ASIL) based upon the ISO 26262 specification was first introduced.

However, the awareness of cars with increased levels of autonomy is indeed driving an even greater focus on FuSa. Understandably so, as increasing levels of autonomy also lead to significant increases in the amount of semiconductors employed in the vehicle. In turn, these semiconductors have greater levels of physical control of the vehicle. The increase in semiconductors in the vehicle also drives explosive levels of growth in overall system complexity.

As an illustration of the complexity associated with today’s car, a high-end vehicle contains over 300 million lines of software. This is expected to grow to 1 billion by the end of this decade. With these levels of complexity, there is a good reason why there’s such a keen focus on FuSa.

Functional safety, as it’s defined, is the discipline of implementing safety measures to prevent or reduce the risk of harm caused by a vehicle’s electrical or electronic systems failing or behaving unexpectedly.

There are a lot of key components to unpack from what appears to be a fairly innocuous statement.

- Implementing safety measures to prevent or reduce risk: Safety measures typically translate into additional hardware overhead that needs to be added when a semiconductor or system is being designed. This overhead needs to accomplish several key things:

- Know what “normal operation” looks like & detect when a system is not acting “normally”

- Once abnormal behavior has been detected, either

- Provide a corrective action that restores the failed system to “normal operation”

or - Provide a flag or warning from the failed system that will allow the vehicle to be able to take the appropriate action in response to the flagged departure from “normal operation” – depending on the severity of the failure, the right response from the vehicle could from “take no action” (one pixel on a display is not working) to cripple the vehicle (something affecting vehicle safety has failed) pulling it over to the side of the road.

- Provide a corrective action that restores the failed system to “normal operation”

- Risk of harm caused by a vehicle’s electrical or electronic systems: ASIL, which is measured by the letters A,B,C, & D where each progressive letter of the alphabet leads to increasing levels of scrutiny. The elements that are typically considered in establishing the required safety level (A vs. D for example) for a given component (i.e. Antilock Braking System, Air Bag, Lane Keeping, Headlight, etc.) includes the following:

- Hazard analysis

- What could happen to the vehicle in the case of a failure?

- Risk analysis

- How likely is a failure going to happen?

- How severe will the impact be if a failure happens?

- Hazard analysis

The ASIL is then determined by:

- The failure rate - how likely the failure may occur?

- What is the level of tolerance in the system to undetected failures

While an argument might be to ensure that undetected failures never occur, higher levels of ASIL typically come with significantly higher costs due to the additional hardware overhead required to detect failures. So if the inherent failure rate of a component is very low and the impact of an undetected failure is quite limited, then specifying an ASIL D for that component will likely result in a loss-leader, especially so in the cost-conscious automotive industry.

As an extreme example, ASIL D solutions can be based upon triple mode redundancy (TMR). TMR employs three identical copies of the same hardware with additional hardware that can detect if the output of the three copies match. When they don’t match, typically one of the three outputs doesn’t match the other two. The checking hardware will flag that there was an error but rely on the two matching outputs for the correct value. As you can begin to see, the costs of achieving ASIL D certification can get expensive.

- Systems failing or behaving unexpectedly: Another important point that can be easily overlooked in this statement. While this was just alluded to, which is the ASILs correlate to different detected failure rates, ASIL does not specify the absence of a failure. It is anticipated that failures will indeed naturally occur in a system for a number of reasons.

While functional safety is not primarily focused on preventing a random failure, it is, however, the responsibility of the system, to “always” be able to recognize when the system is failing or behaving unexpectedly.

The term FIT (Failure in Time) is the term used in FuSa to specify how many failures - i.e. missing an event when a component isn’t working properly over a period of time that is specified in the ISO 26262 spec and reflected in the ASIL rating. ASIL D, with a rating of 10 FITs implies that only 10 failures are acceptable in 1 Billion Hours of operation. That is equal to 10 failures in roughly 114,000 years or 1 failure in just over 11,400 years. To the best of my knowledge, there are no cars on the road that have reached that age - yet. In other words, this specification is very stringent.

It’s also key to note that I have been trying to use the word “component” carefully, because while ASIL certification is specified at the semiconductor device level, it is also measured and specified at the system level – which takes into account all the devices associated with a given system / component in the vehicle. This implies that while an ASIL D FIT rate of 10 is required at the system level, this budget of 10 will be distributed across the different semiconductor devices that make up the system. This implies that the FIT rate at the device level must be some percentage of that 10 – further increasing the complexity of designing a device targeting auto while still remaining profitable.

These insights into these stringent specifications should hopefully instill a sense of increased confidence that there is very significant scrutiny in the electronics systems in the automobile - with levels of scrutiny that are directly correlated to the impact of failure of a given system.

There are many different topics that could be discussed when looking at FuSa and it’s easy to go down a rabbit hole. To put the scale and importance of FuSa into perspective, an automotive OEM typically will have their own safety department with teams of engineers focused purely on FuSa. The same level of staffing also exists for the semiconductor manufacturer who is selling components into automotive applications. Both the OEM and semiconductor companies will require a “Safety Office” staffed with a “Safety Manager” and multiple safety engineers. This is also true for the Tier 1s.

Lastly, two more topics to cover – systematic fault coverage and random fault coverage.

Systematic fault coverage evaluates the design, test, verification, documentation, and other such processes to ensure that there are faults or errors that have been systematically introduced due to bad hygiene in the areas of design and test of the device. Systematic fault coverage also extends into the manner that software is developed both in the form of firmware as well as overall system software. Stringent processes and methodologies are called out in the ISO 26262 specification with correlated levels of scrutiny as dictated by the given ASIL.

The importance of addressing systematic fault coverage requirements cannot be overstated. Several years ago, the highly visible case of a vehicle that suffered from a faulty “stuck accelerator” was found to have not employed best practices in the development of the underlying software that was used to control the operation of the accelerator. Realizing that poor software development practices were at fault resulting in “spaghetti code,” the OEM quickly settled the case resulting in several combined financial settlements in excess of $2.5 B over 10 years ago.

Random fault coverage focuses on the random hardware failures which can occur unpredictably during the lifetime of a component. These failures can occur even if there have been no flaws in the development and manufacturing of the component. These failures typically are caused by cosmic neutron strikes or alpha particles from the package material. Here again, there are different ASILs that correspond to the rate at which these random failures go undetected. ASIL D specifies 10 FIT for the probabilistic metric for random hardware failures (PMHF).

ASIL D is a very difficult specification to achieve at the component level and so typically systems are designed using devices that have a lesser ASIL random fault coverage rating i.e. ASIL B. Through ASIL decomposition, which is a structured way of adding redundancy to the system, the requisite ASIL can be achieved. Random faults ultimately will be detected via the redundancy.

FuSa is a very complex topic of which I've barely scratched the surface. These rigid processes ensure that the vehicle is designed to stringent specifications to minimize the effect of the failure of a component. As mentioned, with an ever-increasing amount of complex systems taking over the control of the vehicle, the need for rigid safety processes can’t be overstated. Hopefully as you have been able to see in this blog, there is a lot of scrutiny and rigor in designing a system to achieve a given ASIL to hopefully avoid two self-driving cars from getting into an accident.

.webp)

Powering AI with ZincFive’s Sustainable Battery Solutions

Tod Higinbotham, COO of ZincFive, discusses the role of nickel-zinc batteries in supporting AI workloads, improving data center efficiency, and advancing sustainability in power infrastructure.

5 Fast Facts with Justin Murrill at AMD

I recently caught up with Justin Murrill at AMD to learn more about how processor innovation helps yield improved efficiency to data center workload delivery. AMD is delivering leading compute silicon with a broad offering of foundational CPU and acceleration technologies aimed at data center environments.

Justin is the AMD Director of Corporate Responsibility and is responsible for coordinating the company's sustainability strategy, goals and reporting.

ALLYSON: Justin, thank you for being here today. As you know, AI has fundamentally changed the requirements for computing in the data center. With estimates that >40% of all servers will be dedicated to AI by 2026, how is AMD seeing computing evolving across CPU, GPU, and FPGA?

JUSTIN: As AI continues to evolve rapidly, having a broad range of compute engines will be critical to scaling performance and efficiency. We see each of these different compute engines playing a unique role, particularly as AI workloads shift and as use models evolve. We also look to enable more on-device processing across the edge and end devices — further helping to drive efficiencies. Having our full range of processing engines allows customers to balance the competing demands for disparate performance levels, throughput, capacity, energy consumption, programmability and available space. Offering compute engines that address these needs while increasingly accommodating AI requirements is a critical differentiator for AMD.

ALLYSON: This increased compute demand is placing greater emphasis on compute efficiency. How does AMD integrate efficient design into roadmap planning, and what has this influenced in your architecture?

JUSTIN: The computing performance delivered per watt of energy consumed is a vital aspect of our business strategy. Our products’ cutting-edge chip architecture, design, and power management features have resulted in significant energy efficiency gains. AMD has a track record of setting long-term energy efficiency goals and incorporating them into engineering roadmaps for successful execution.

We have a bold goal to achieve a 30x improvement in energy efficiency for AMD processors and accelerators powering HPC and AI training by 2025—our “30x25 goal”.1 If all AI and HPC server nodes globally were to make similar gains to the AMD 30x25 goal, we estimate billions of kilowatt-hours of electricity could be saved in 2025, relative to baseline trends.2 Achieving the goal would also mean we would exceed the industry trendline for energy efficiency gains and reduce energy use per computation by up to 97% as compared to 2020. As of late 2023, we achieved a 13.5x improvement in energy efficiency from the 2020 baseline, using a configuration of four AMD Instinct MI300A APUs (4th Gen AMD EPYC™ CPU with AMD CDNA™ 3 Compute Units).3 Similarly, this kind of philosophy and process helped influence design choices across our “Zen” architectures for the AMD EPYC server CPU family—to develop a core infrastructure that balances performance, efficiency and density.

To embed energy efficiency goals into the design process, we collaborate closely with customers to understand their unique needs for performance, power targets, features and more. All this input is included in the design process, and teams have different stages in the process to check on the alignment with the end functionality that they are targeting.

One example of how AMD is addressing customer performance needs and configuration flexibility, while also advancing sustainability, is the modular architecture we pioneered with chiplets. Instead of one large monolithic chip, AMD engineers reconfigured the component IP building blocks using a flexible, scalable connectivity we designed known as Infinity Fabric. This laid the foundation for our Infinity Architecture, which enables us to configure multiple individual chiplets to scale compute cores in countless designs. Not only does this further optimize energy efficiency, but it also reduces environmental impacts in the wafer manufacturing process.

By breaking our designs up into smaller chiplets, we can get more chips per wafer, lowering the probability that a defect will land on any one chip. As a result, the number and yield percentage of “good” chips per wafer goes up, and the wasted cost, raw materials, energy, emissions, and water goes down. For example, producing 4th Gen AMD EPYC™ CPUs with up to 12 separate compute chiplets instead of one monolithic die saved approximately 132,000 metric tons of CO2e in 2023 through avoidance of wafers manufactured, 2.8 times more than the annual operational CO2e footprint of AMD in 2023.4

ALLYSON: With markets like the EU placing greater emphasis on embedded carbon and other sustainability metrics, how is AMD working with manufacturing partners to deliver transparency within your product offerings?

JUSTIN: We work with our Manufacturing Suppliers to advance environmental sustainability across a variety of metrics, including emissions related to AMD purchased goods and services (Scope 3 emissions). Aligned with guidance from the GHG Protocol, we directly survey manufacturing suppliers representing ~95% of our related supply chain spend and use the data to estimate and publicly report our related Scope 3 emissions.

We also set goals for our manufacturing suppliers and report our progress annually. Our 2025 public goals are for 100% of manufacturing suppliers to have their own public GHG reduction goal(s) and 80% to source renewable energy. Our latest report shows 84% of our Manufacturing Suppliers had public GHG goals and 71% source renewable energy.

Carbon emissions in our supply chain are primarily generated at silicon wafer manufacturing facilities. Most AMD wafers come from TSMC, which implemented more than 800 energy savings measures in 2023, saving approximately 92,960 MWh of energy and 46,000 metric tons of CO2e attributed to AMD wafer production. In September 2023, TSMC announced an accelerated renewable energy roadmap, increasing its 2030 target from 40% to 60% of the total energy supply, and pulling in its 100% renewable energy target from 2050 to 2040.

In the near term, the amount of renewable energy available in Taiwan and other regions in Asia is very limited. To help address this challenge, AMD is a founding member of the Semiconductor Climate Consortium and a sponsor of its Energy Collaborative working to accelerate renewable energy access in the Asia-Pacific region.

ALLYSON: How do you see AI actually influencing the delivery of efficient compute as a strategic tool for AMD innovation in the future?

JUSTIN: Energy efficiency has always been a key pillar of our design and ecosystem enablement approach, and we take a holistic view at the silicon, software and system level to get the most performance and functionality out of every watt consumed. The benefits are so much more impactful when hardware, software and systems can evolve in more relative lockstep, which requires deep technical collaborations.

We also embrace the scaling of efficiency impacts that AI can enable as it matures and proliferates – from helping assign compute resources more optimally to monitoring and diagnosing underperforming hardware. Some of the leading application vendors we collaborate with in electronic design, simulation and verification are developing AI-enabled tools to optimize system and cluster performance, automate and augment common routines, reduce waste and improve productivity.

ALLYSON: Where can our readers find out more about AMD solutions in this space and engage the AMD team to learn more?

JUSTIN:

- Explore the AMD 2023-24 Corporate Responsibility Report

- Learn more about how AMD is advancing data center sustainability here

- Get the details on AMD EPYC server CPU energy efficiency here

- Use the AMD TCO Greenhouse Gas Calculators – Bare Metal and Virtualization versions

1 Includes AMD high-performance CPU and GPU accelerators used for AI-training and high-performance computing in a 4-Accelerator, CPU-hosted configuration. Goal calculations are based on performance scores as measured by standard performance metrics (HPC: Linpack DGEMM kernel FLOPS with 4k matrix size; AI-training: lower precision training-focused floating-point math GEMM kernels such as FP16 or BF16 FLOPS operating on 4k matrices) divided by the rated power consumption of a representative accelerated compute node, including the CPU host + memory and 4 GPU accelerators.

2 “Data Center Sustainability,” AMD, https://www.amd.com/en/corporate/corporate-responsibility/data-center-sustainability.html (accessed May 15, 2024).

3 EPYC-030a: Calculation includes 1) base case kWhr use projections in 2025 conducted with Koomey Analytics based on available research and data that includes segment specific projected 2025 deployment volumes and data center power utilization effectiveness (PUE) including GPU HPC and machine learning (ML) installations and 2) AMD CPU and GPU node power consumptions incorporating segment-specific utilization (active vs. idle) percentages and multiplied by PUE to determine actual total energy use for calculation of the performance per Watt. 13.5x is calculated using the following formula: (base case HPC node kWhr use projection in 2025 * AMD 2023 perf/Watt improvement using DGEMM and TEC +Base case ML node kWhr use projection in 2025 *AMD 2023 perf/Watt improvement using ML math and TEC) /(2020 perf/Watt * Base case projected kWhr usage in 2025). For more information, www.amd.com/en/corporate-responsibility/data-center-sustainability

4 AMD estimation based on defect density (defects per unit area on the wafer), chip area and n-factor (manufacturing complexity factor) to estimate the number of wafers avoided in one year. Yield = (1 + A*D0)^(-n) where A is the chip area, D0 is the defect density and n is the complexity factor. The area is known from our design, D0 is known based our manufacturing yield data, and n is a number provided by a foundry partner for a given technology. The calculations are not meant to be precise, since chip design can have a large influence on yield, but it estimates the area impact on yield. The carbon emission estimates of 132,064 mtCO2e were calculated using the estimated number of 5 nm wafers saved in one year, based on the TechInsights’ Semiconductor Manufacturing Carbon Model. Comparison to AMD corporate footprint is based on AMD reported scope 1 and 2 market-based GHG emissions in 2023: 46,606 mtCO2e. Water savings estimates of 1,110 million liters were calculated using the estimated number of 5 nm wafers saved in one year times the amount of water use per 300mm wafer mask layer times the average number of mask layers. Comparison to AMD corporate water use is based on AMD 2023 reported value of 225 million liters.

.jpg)