Sustainability in Cloud Services with OVHcloud's Grégory Lebourg

During this episode of Data Insights sponsored by Solidigm, Grégory Lebourg – Global Environmental Director at OVHcloud – discusses how companies can meet their environmental goals effectively.

5 Fast Facts with Solidigm’s Dave Sierra

As we prepare to roll out the TechArena 2024 Data Center Efficiency Report, I was honored to sit down with Solidigm’s Dave Sierra to learn more about how storage media innovation will help improve the efficiency of solutions delivered to the market.

Solidigm has been featured extensively on TechArena and is a leading supplier of storage media aimed at data center-to-edge environments. Dave is part of Solidigm’s Data Center Solutions Marketing team and is responsible for proving and conveying the value of their storage products.

ALLYSON: Why is compute efficiency becoming so critical in 2024? Is this just about AI, or are other factors like the slowing of Moore’s Law coming into play?

DAVE: As a former Intel-er, I greatly appreciate the nearly 60-year run of Gordon Moore’s Law, but technology, physics, and economic limitations are bringing that era to a close. What isn’t slowing is the pace of compute innovation and performance improvement, largely driven by the economics of the AI opportunity. While GPUs and other compute elements are indeed becoming more energy efficient, it comes at the price of huge overall increases in raw power needs. Securing and allocating more and more power for compute resources is becoming the critical factor in efficient AI data center design.

ALLYSON: How is storage part of the energy efficiency solution, and why is the move to SSDs a critical part of any IT strategy?

DAVE: You can’t talk about energy efficiency without talking about AI, and vice versa. The mind-boggling energy needs of today’s GPU infrastructure and the corresponding difficulty in sourcing power rightly grab headlines. But storage is an underappreciated component of a modern data center’s power consumption, with several estimates pegging it at 30-35% of overall DC IT power. Solutions designed with performant, power-efficient, high-capacity SSDs enable you to store more data in less space versus legacy storage options. Designing for fewer drives in a solution is more energy efficient, requires fewer servers, and reduces overall cooling infrastructure needs.

ALLYSON: Solidigm is a leader in delivering storage media to the market with decades of experience working from data center to edge. How have you differentiated your solutions from an efficiency perspective?

DAVE: As a company, Solidigm’s sole focus is delivering reliable and innovative storage solutions from the data center core to the edge. We paved the way for the industry’s ongoing transition to more space- and energy-efficient EDSFF (Enterprise and Data Center Standard Form Factor) SSDs with our first-to-market ‘Ruler’ design in 2019. Today, our ultra-high-density Quad Level Cell (QLC) SSDs allow infrastructure efficiency leaders to consolidate less efficient, less reliable storage designs onto a modern, high-capacity, power- and space-efficient infrastructure. We have a roadmap of innovations that will continue to deliver improved core-to-edge efficiency value in the coming years.

ALLYSON: What do you see as the lifecycle of storage media, and is it changing?

DAVE: When SSDs first entered the market, there was significant industry concern around drive wear out – a limited number of times you could reliably move data in and out of a NAND cell. With improved technology and algorithms, those concerns are mostly a thing of the past, but today’s high-density SSDs are further improving the narrative around SSD endurance. Consider the 61.44TB Solidigm™ D5-P5336 SSD, with a lifetime petabytes written (PBW) rating of 65.2 petabytes. A typical Content Delivery Network (CDN) workload might take 14 years to write that much data to a single drive. ‘Your mileage may vary’, but you can model your own workload characteristics against Solidigm’s products using our endurance estimator at https://estimator.solidigm.com/ssdendurance/index.htm.
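As a rough illustration of the endurance arithmetic in that answer, the sketch below converts a PBW rating into an implied daily write rate and back into years of service. The 65.2 PB rating, 61.44TB capacity, and 14-year figure come from the answer above; the function names and the decimal-unit assumption (1 PB = 1,000 TB) are illustrative only, and this is not how Solidigm's estimator works internally.

```python
TB_PER_PB = 1000  # assumption: decimal units, as drive vendors typically quote
DAYS_PER_YEAR = 365


def implied_daily_writes_tb(pbw_rating_pb, years):
    """Average TB written per day needed to use up the PBW rating in `years`."""
    if years <= 0:
        raise ValueError("years must be positive")
    return pbw_rating_pb * TB_PER_PB / (years * DAYS_PER_YEAR)


def years_to_reach_pbw(pbw_rating_pb, writes_tb_per_day):
    """Years of sustained writing before the PBW rating is reached."""
    if writes_tb_per_day <= 0:
        raise ValueError("writes_tb_per_day must be positive")
    return pbw_rating_pb * TB_PER_PB / writes_tb_per_day / DAYS_PER_YEAR


def drive_writes_per_day(pbw_rating_pb, capacity_tb, years):
    """Full-drive writes per day (DWPD) implied by the rating over `years`."""
    return implied_daily_writes_tb(pbw_rating_pb, years) / capacity_tb


if __name__ == "__main__":
    daily = implied_daily_writes_tb(65.2, 14)
    dwpd = drive_writes_per_day(65.2, 61.44, 14)
    print(f"Implied write rate: {daily:.2f} TB/day ({dwpd:.2f} DWPD)")
```

Under these assumptions, a 14-year wear-out horizon corresponds to writing roughly 12.8 TB to the drive every day, which is about a fifth of its capacity.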

ALLYSON: Where can readers find out more about Solidigm products and connect with the Solidigm team?

DAVE: A great place to learn more about our power and space efficient storage is at https://www.solidigm.com/solutions/artificial-intelligence.html. Other ways to connect:

- https://www.linkedin.com/company/solidigmtechnology/

- https://www.facebook.com/Solidigm/

- https://www.youtube.com/c/Solidigm/

- https://x.com/solidigm

AI and Autonomous Advancement at Airbus

Join Arne Stoschek, VP of AI and Autonomy at Airbus Acubed, as he discusses the role of AI in aviation, the future of autonomous flight, and innovations shaping the industry at Airbus.

5 Compute Efficiency Takes with WEKA President Jonathan Martin

As TechArena prepares to roll out the 2024 Compute Sustainability Report, I was privileged to sit down with WEKA President Jonathan Martin to discuss how the right data foundation is critical to make GPUs more efficient and improve the sustainability and performance of AI applications and workloads.

WEKA's leading AI-native data platform software solution, the WEKA Data Platform, was purpose-built to deliver the performance and scale required for enterprise AI training and inference workloads across distributed edge, core, and cloud environments.

Jonathan, WEKA’s President, is responsible for the company’s global go-to-market (GTM) functions and operations, which include sales, marketing, strategic partnerships, and customer success.

ALLYSON: Jonathan, thank you for being here today. It used to be that data storage was an arena not exactly known for innovation. With organizations utilizing multiple clouds and looking to do more interesting things with data, that has fundamentally changed. How do you view data platforms today?

JONATHAN: While we are still in the early days of the AI revolution, we’re already seeing how transformative this technology can be across nearly every industry. Enterprises are now adopting AI in droves – and despite being relatively new in the market, generative AI is eclipsing all other forms of AI. A recent global study conducted by S&P Global Market Intelligence in partnership with WEKA found that an astounding 88% of organizations say they are actively exploring generative AI, and 24% say they already see generative AI as an integrated capability deployed across their organization. Just 11% of respondents are not investing in generative AI at all.

This rapid shift to embrace generative AI is forcing organizations to reevaluate their technology stacks, as they struggle to reach enterprise scale. The same study found that in the average organization, 51% of AI projects are in production but not being delivered at scale. 35% of organizations cited storage and data management as the top technical inhibitor to scaling AI, outpacing compute (26%), security (23%) and networking (15%).

At WEKA, we believe that every company will need to become an AI-native company to not only survive, but thrive, in the AI era. Becoming AI-native will require that they adopt a disaggregated data pipeline-oriented architectural approach that can span edge, core and cloud environments. A typical AI pipeline has mixed IO workload requirements from training to inference and is extremely data-intensive. Legacy data infrastructure and storage solutions fall short because they weren’t designed to meet the high throughput and scalability requirements of GPUs and AI workloads.

A unified data platform software approach also gives organizations the ultimate flexibility and data portability they need at the intersection of cloud and AI. Instead of vertical storage stacks and data siloes, a data platform creates streaming horizontal data pipelines that enable organizations to get the most value from their data, no matter where it is. A unified data platform is the data foundation of the future.

ALLYSON: WEKA has made a name for itself with sustainability. Why is this important for the way you’ve designed your solutions?

JONATHAN: WEKA’s software was designed for maximum efficiency, which is inherently more sustainable. Legacy data infrastructure contains a lot of inefficiencies, which have a steep environmental cost. Further compounding the problem, AI workloads are incredibly power-hungry and enterprise data volumes are growing, so the environmental impact of AI is a big global issue, and a growing area of concern for businesses.

In fact, in the S&P Global study, 64% of respondents worldwide say their organization is “concerned” or “very concerned” about the sustainability of AI infrastructure, with 30% saying that reducing energy consumption is a driver for AI adoption in their organization.

There are a few ways organizations can start addressing AI’s energy consumption and efficiency issues. The first is GPU acceleration. On average, the GPUs needed to support AI workloads sit idle about 70% of the time, wasting energy and emitting excessive carbon while they wait for data to process. The WEKA Data Platform enables GPUs to run 20x faster and drives massive AI workload efficiencies, reducing their energy requirements and carbon output.

Second, traditional data management and storage solutions copy data multiple times to move it through the data pipeline, which is wasteful in multiple ways—it costs time, energy, carbon output, and money. The WEKA Data Platform leverages a zero-copy architecture, helping to reduce an organization’s data infrastructure footprint by 4x-7x through data copy reduction and cloud elasticity.

For WEKA customers, this means they’re not only getting orders of magnitude more performance out of their GPUs and AI model training and inference workloads, but they’re also saving 260 tons of CO2e per petabyte stored annually. When it comes to their data stack, we don’t believe organizations should have to choose between speed, scale, simplicity, or sustainability – we deliver all four benefits in a single, unified solution.

ALLYSON: What do you think is critical for the enterprise data pipeline today, and how is WEKA meeting these challenges with your solutions portfolio?

JONATHAN: The first critical element of a data pipeline is having ultimate flexibility. Most organizations are challenged by growing data volumes and data sprawl, with data in multiple locations. The second thing enterprises must have, and this is also tied to flexibility, is a solution that can grow with them into the future. The third factor is simplicity, because data challenges are becoming more complex in the AI era.

When ChatGPT emerged in late 2022, no one could have predicted just how fast AI adoption would proliferate. As we discussed before, a whopping 88% of organizations say they are now investing in Generative AI. That’s an astounding rate of adoption in just over a year.

Although WEKA couldn’t predict the dawn of the AI revolution, our founders could see a decade ago that modern high-performance computing and machine learning workloads were on the rise and likely to become the norm, requiring a wholly different approach from traditional data storage and management.

Our founders deliberately designed the WEKA Data Platform as a software solution to provide organizations with the flexibility to deploy anywhere and get the same performance no matter where their data lives, whether on-premises, at the edge, in the cloud, or in hybrid or multicloud environments. It also offers seamless data portability between locations.

While it’s difficult to predict what an organization’s technology requirements will be in five or 10 years, the WEKA Data Platform is designed to scale linearly from petabytes to exabytes to support future growth and keep pace with their innovation goals. Today, WEKA has several customers running at exascale. Tomorrow? The sky's the limit.

Additionally, WEKA saw that many customers were struggling to get AI into production and needed a simplified, turnkey option that enabled them to onramp AI projects quickly. We introduced WEKApod™ at GTC this year to provide enterprises with an easy-button for AI. WEKApod is certified for NVIDIA SuperPOD deployments and combines WEKA Data Platform software with best-in-class hardware, minus the hardware lock-in. Its exceptional performance density improves GPU efficiencies, optimizes rack space utilization, and reduces idle energy consumption and carbon output to help organizations meet their sustainability goals.

ALLYSON: From a sustainability perspective, how do you see AI influencing the data center of the future?

JONATHAN: As we’ve discussed, AI’s energy and performance requirements are already shaping how organizations are evaluating their data center investments. Whether it’s implementing new data architectures, embracing hybrid cloud strategies, shifting infrastructure vendors, or moving AI workloads to specialty GPU clouds, organizations are already reinventing how, when and where they store and manage their data. Power is shaping up to be the new currency of AI. The data center of the future will need to balance AI’s need for highly accelerated compute with its massive energy needs. There are various ways companies can address this, ranging from using public and GPU clouds that run on renewable energy, to deploying cooling technology, to adopting more efficient data infrastructure solutions that support sustainable AI practices.

ALLYSON: Where can our readers find out more about WEKA solutions in this space and engage the WEKA team to learn more?

JONATHAN: Visit our website at www.weka.io and follow our latest updates on LinkedIn and X.

Source: 451 Research, part of S&P Global Market Intelligence, Discovery Report “Global Trends in AI,” August 2024

Ayar Labs Aims to Light up AI Applications with Optical I/O-based Fabrics

Imagine the amount of data required to train ChatGPT and the size of the compute cluster required to train it. Now imagine the speed and scale of connectivity required to move all of that data across compute cluster nodes. As we look at AI era computing, the connectivity of compute is an ever-scaling challenge, which is why I was so excited to catch up with Ayar Labs CEO Mark Wade at the AI Hardware Summit last week. Ayar is a leading provider of integrated optical connectivity solutions, and Mark shared how their optical I/O is revolutionizing AI performance. Mark delved into how optical is uniquely positioned to solve data bottleneck challenges within the data center. With the explosive growth in AI workloads, traditional copper-based I/O is struggling to keep up, leading to latency issues and inefficiencies. Ayar Labs’ integrated optical technology addresses this by significantly improving data transfer speeds, reducing power consumption, and unlocking higher levels of performance.

One of the most exciting parts of our discussion was how Ayar Labs solutions can handle terabytes of data per second with incredible efficiency. Mark emphasized that this technology enables 8-10x more bandwidth than traditional copper interconnects, all while consuming 10x less power. This is a game changer for large-scale AI systems where speed and energy efficiency are paramount. Mark explained that within compute clusters, there’s a requirement for scale-up and scale-out fabric connections, also known as the back-end and front-end networks. Ayar’s technology is aimed primarily at the scale-up fabric, where ultra-high bandwidth is required. Mark highlighted that Ayar Labs has already secured partnerships with several leading chipmakers who are embedding Ayar Labs technology into their future chiplet-based designs, and they're seeing increasing interest from companies looking to upgrade their scale-up fabrics without overhauling their entire infrastructure.

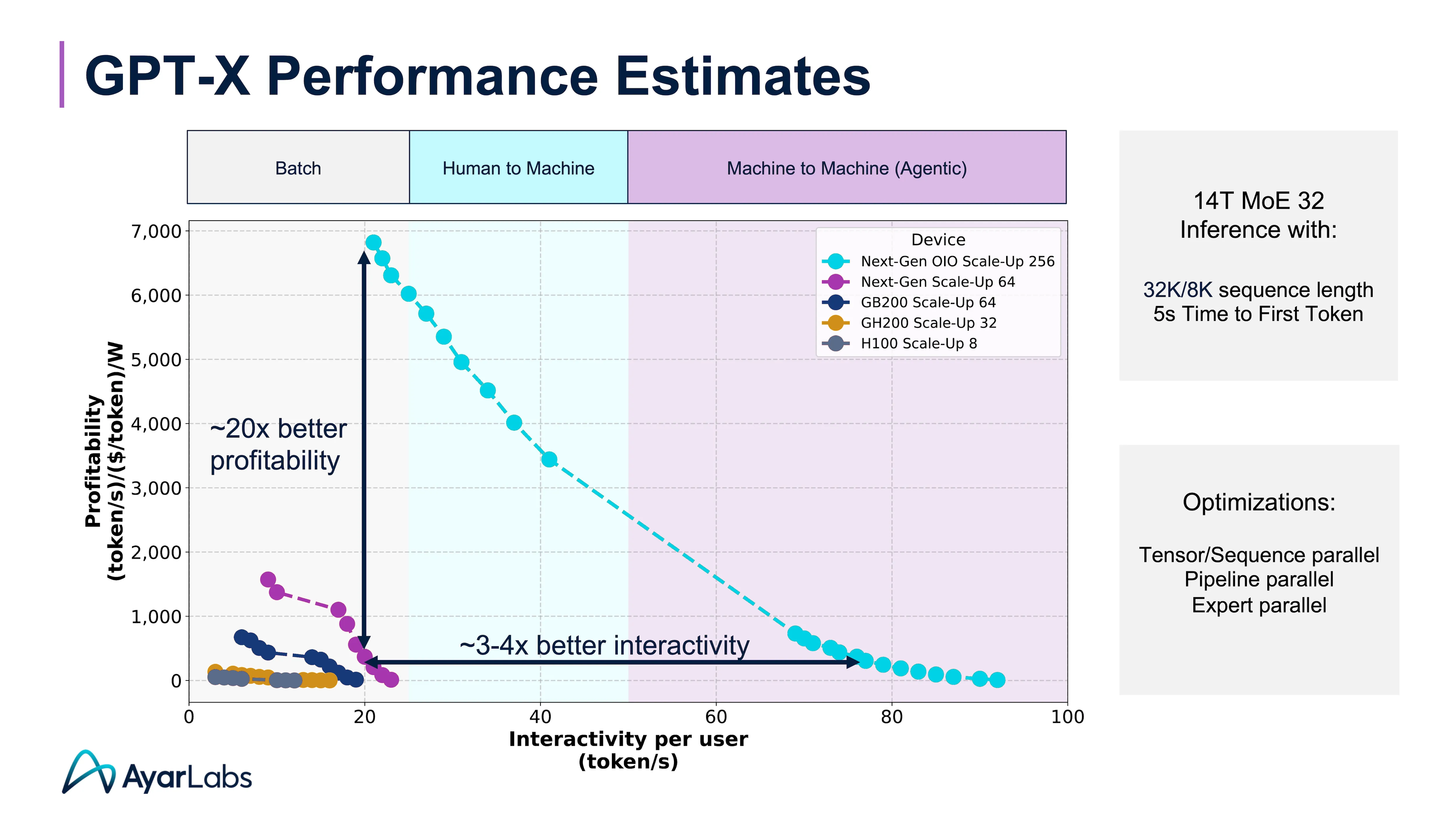

What really caught my attention was Mark’s explanation of Ayar’s new metrics for measuring effectiveness of GenAI delivery across profitability, interactivity and throughput. Here Ayar is taking a leadership position to drive common metrics on implementations with a focus on being a big part of the solution in driving successful results.

Mark detailed his excitement for delivering dramatic improvements in profitability and interactivity for AI inference with optical I/O. “At the end of the day, why we're so excited about bringing forth optical I/O, is that you see dramatic improvements in profitability and in interactivity. You can open up larger domains of interactivity to support machine-to-machine communications and agentic workflows. But you do that in a way that creates enough room for application builders and customers to actually build profitable business models on top of this.”

So what’s the TechArena take? As AI continues to push the limits of computing, technologies like optical I/O will become essential. Ethernet’s next speed bump will likely push copper out of the running as a viable solution. It’s not just about making AI faster; it’s about enabling more complex, data-intensive models across data-intensive workloads. Ayar Labs has a differentiated solution in integrated optical, and integration into a chiplet delivers differentiated performance and efficiency. I expect the market to move in this direction, positioning Ayar squarely in the sweet spot for market uptake, and I can’t wait to see more from the company as enterprise AI adoption hits its stride in the next two years.

5 Key Considerations for Ethical AI Deployment

Artificial Intelligence (AI) is rapidly transforming the digital landscape, offering unprecedented opportunities for growth and innovation. However, the widespread use of AI also raises important ethical questions that technology leaders must grapple with. If you're steering an organization through the AI revolution, here are five critical ethical considerations to keep in mind.

1. It's crucial that we develop AI in an ethical manner.

Artificial Intelligence (AI) isn't a one-size-fits-all term. Even when we simplify things, AI can be categorized into three buckets: Narrow AI, Artificial General Intelligence (AGI), and Artificial Super Intelligence (ASI). Currently AGI and ASI are still theoretical, in large part because the computational power to build them is not available yet.

Narrow AI includes Generative AI, analytical AI, as well as limited memory AI, which trains the algorithms that enable self-driving cars. Narrow refers to the narrow focus of these algorithms. They can’t do anything beyond the single thing on which they’ve been trained.

It is imperative to build Narrow AI systems ethically because AGI and ASI will be built on their shoulders.

2. Data transparency is essential to ensure ethical AI.

Developing AI models requires massive amounts of training data. Where does it all come from? This is one of the biggest ethical dilemmas in the field of AI today.

OpenAI uses data that is publicly available on the internet, data licensed from third parties, and data provided by users or “human trainers” to train ChatGPT. However, sourcing training data from the internet has led to models containing stereotypes related to gender, race, ethnicity, and disability (On the Dangers of Stochastic Parrots: Can Language Models Be Too Big? - via ACM Digital Library).

If biases are present in the training data, that can lead to prejudiced outcomes. This can impact everything from hiring practices to criminal justice systems. Question where your data comes from and scrutinize its fairness. Ensuring data transparency is not just a best practice but a necessity to ensure ethical AI.

3. Effectively overseeing AI systems is essential to avoid reinforcing social inequalities.

AI systems have the potential to perpetuate social inequalities if not carefully managed. In the book “Automating Inequality: How High-Tech Tools Profile, Police, and Punish the Poor,” Virginia Eubanks writes about the ways data mining, algorithms, and predictive risk models can have disastrous results when not managed carefully.

One example Eubanks provided was of the Family and Social Services Administration in Indiana. This agency provides programs like food stamps and welfare for some of the most vulnerable populations.

The state turned to automation to streamline services and prevent welfare fraud. They got rid of caseworkers and relied on an automated database to deliver services. The result? Algorithmic bias prevented qualified individuals from collecting services when they needed them the most.

4. We’ve seen multiple examples of algorithmic bias.

Algorithmic bias is when an AI system uses “unrepresentative or incomplete training data or [relies] on flawed information that reflects historical inequalities.” (Source: Princeton University Journal of Public and International Affairs).

It comes back to the data again. If the data used to train the models includes data that historically enforced inequality, then that will be embedded into the AI tool that is trained by that data. We’ve seen examples of this in hiring, word associations, and criminal sentencing.

This isn’t a result of overt bias, but instead of issues with the data. Historical human bias is baked into our data, especially if it was scraped from the public internet. Insufficient training data can also lead to coded bias. This happened to Dr. Joy Buolamwini while she was at MIT, leading her to research facial recognition. She found that one reason faces like hers couldn’t be found by common tools was a lack of diversity in the tools’ datasets.

5. We can’t underestimate the environmental impact of AI model training.

Environmental, Social, and Governance (ESG) scores are becoming increasingly important as companies strive for ethical accountability. While AI can optimize resource use, training the models takes an enormous toll on the environment. In 2019, researchers at the University of Massachusetts Amherst found that the energy required to train one LLM is the same as 125 round-trip flights between New York and Beijing.

It's not just the training that impacts the environment. All the data needed by AI models has to be stored someplace, meaning data center capacity is increasing. According to this article, Google reports that 15% of its total energy usage over the past three years has been dedicated to machine learning workloads.

The environmental impact of training models is influenced by their locations and the power grids they utilize. When data centers rely on grids powered by fossil fuels, the impact is more significant. Additionally, data centers situated in Asia or the Southwestern United States often require substantial water for server cooling, further contributing to environmental impact.

These are some of the things to consider if you're responsible for reporting to your ESG administrator. The paper Quantifying the Carbon Emissions of Machine Learning gives more actionable items, including a link to a calculator.

Conclusion

Navigating the ethics of AI is a challenge that technology leaders must face head-on. By considering these five key areas you can guide your organization through the complexities of ethical AI deployment. Staying informed about regulatory landscapes and engaging in policy discussions can help ensure that innovation is both responsible and compliant.

PhoenixNAP's Bare Metal Cloud Meets AI's Data Center Demands

I love hearing from providers on how they’re grappling with delivery of cloud services to support customer adoption of AI. Jeniece Wronowski and I got that chance in a recent episode of our Data Insights podcast when we hosted Ian McClarty, President of PhoenixNAP, for a deep dive into the evolving role of AI in data centers and how bare metal cloud is meeting the demand for infrastructure that’s up to the AI performance challenge.

Our conversation started with Ian sharing his view on the enormous impact AI is having on data centers and the unique demands it places on both operators and their customers. AI workloads require immense compute power as well as low-latency communication, putting significant strain on traditional cloud infrastructure. Ian pointed out how the explosion of data from IoT devices, streaming, and cloud applications continues to fuel the AI boom, and that AI can’t be treated as just another workload in the data center. It demands a completely fresh approach to data center infrastructure, something Ian and his team at PhoenixNAP are laser-focused on providing.

Ian then turned to bare metal cloud offerings, something PhoenixNAP is famous for delivering, and how they are particularly suited to meet AI’s growing infrastructure needs. Unlike typical cloud solutions that share resources, bare metal cloud provides dedicated servers that give companies access to raw, non-virtualized hardware. This is key, Ian explained, for resource-hungry AI workloads. Companies working on AI algorithms need the ability to quickly scale, spin up resources on demand, and process huge amounts of data, capabilities that bare metal cloud supports seamlessly.

Ian highlighted three key advantages to this approach: performance, scalability, and control. In traditional virtualized environments, AI workloads can face latency issues or performance bottlenecks due to resource sharing, which dedicated hardware avoids. In addition, bare metal cloud allows for rapid scaling, whether a company needs to deploy a few servers for small-scale training or dozens for large AI models. The infrastructure can be customized and scaled up or down based on demand, providing flexibility that is crucial because AI workloads can vary significantly in the compute power they need. Control is equally important, and Ian stressed how organizations want more control over their infrastructure when it comes to AI. With bare metal cloud, companies have the freedom to configure the hardware environment to suit their specific needs, which is especially important for workloads involving sensitive or proprietary data. This level of customization and control just isn’t possible in shared cloud environments.

As we love to do on the Data Insights series, we turned the conversation to sustainability. With recent reports forecasting that data centers could consume up to 20% of the world’s energy supply due to the rise of AI, operators are grappling with driving efficiency across every vector of computing. Ian acknowledged the industry’s responsibility to address the environmental impact and noted that PhoenixNAP is taking proactive steps to design data centers with energy efficiency in mind, from improving cooling technologies to optimizing server utilization. PhoenixNAP is also exploring renewable energy utilization to power their facilities. While the journey to a fully sustainable data center is ongoing, the strides they’re making are encouraging. Ian believes that future innovations in both hardware and software will make sustainability not just an add-on but a core feature of high-performance computing environments.

The conversation made it clear that PhoenixNAP is primed for infrastructure transformation to support AI’s growth. The company’s focus on performance, flexibility, and sustainability positions it uniquely to meet the challenges and opportunities that AI presents. I left the conversation energized about the possibilities bare metal cloud offers for AI innovation and the impact it will have across industries.

Tune in to the full episode for more insights from Ian and how PhoenixNAP is reshaping the future of data centers.

VMware Drives Pull Optimized Solutions for the Edge

Day one of Edge Field Day 3 kicked off with a presentation from VMware. This sage of enterprise cloud and expert in workload automation entered the presentation room with a challenge for us to forget about all of that and focus on the edge.

Chris Taylor, Product Marketing Manager, and Alan Renouf, Technology Product Manager for the Software Defined Edge group, bring fantastic technical chops to the table. Alan has an extensive background in workload automation from the data center, and Chris brings a unique perspective in manufacturing as a mechanical engineer, as well as seasoning in the security arena.

So what is the state of edge from a VMware perspective? VMware has created an edge compute stack leveraging an intelligent overlay of VMware VeloCloud SD-WAN and SASE security software and the VMware Telco Cloud Platform driving 5G, fixed and LEOS network support. This software stack provides a horizontal foundation for deployment across edge environments and vertical use cases. Top markets extend across manufacturing floors, retail, energy generation and distribution, logistics operations and more. What drives customers to edge deployments? Too much data sitting at the edge, unreliable or inefficient networking from edge to cloud, and challenges in moving data due to regulatory requirements like data sovereignty are the key pain points VMware’s customers face.

And with this vast market and clear challenge driving deployment, you’d expect that edge implementations would be scaling like a hockey stick. But Chris and Alan pointed to key challenges for deployment, sharing a slightly stunning stat from the World Economic Forum that 70% of companies investing in Industry 4.0 projects get stuck in the pilot phase.

The challenges that many of these companies face include oversight of the scale and differentiation of myriad edge devices, use cases, and support required. Underpinning all of this is the fact that edge compute cannot be managed with the same approach as cloud. After all, data centers are centralized…they’re rich with IT support, and they are, well, clean and orderly.

So what is VMware’s view on management of this wild west? Alan and Chris stated the approach needs to start with a change in workload management, away from the push architectures of cloud and toward pull mode. They signaled that the world of mobile app updates provides a parallel to how they’ve architected their edge stack: the managed device checks for updates and pulls them when there is network capacity and compute cycles to load them.

So how is VMware differentiated? Their edge optimization accounts for real-time apps and simplified operations and is based on a flexible platform that grows with edge implementation and breadth of apps deployed. They’ve also built in support for both VMs and K8s, with zero-touch orchestration delivering provisioning via preconfigured edge hardware authorization and profiles established by an edge admin residing in a central location. This enables plug-and-play deployment, in which an edge device pulls its desired apps once plugged in, without on-the-ground IT support. Edge admins maintain all workloads across edge locations with monitoring and downloads of components to match the desired end state, all delivered by VMware’s Edge Cloud Orchestrator (VECO).
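The pull model described here can be sketched in a few lines. This is a minimal illustration of desired-state reconciliation in general, not VMware's implementation or the VECO API: the `EdgeDevice` class, its method names, the app names, and the boolean `has_capacity` flag are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class EdgeDevice:
    """Hypothetical edge device that pulls apps to match a central profile."""
    installed: dict = field(default_factory=dict)  # app name -> version

    def plan(self, desired):
        """List the actions needed to move from installed to desired state."""
        actions = []
        for app, version in sorted(desired.items()):
            if self.installed.get(app) != version:
                actions.append(("pull", app, version))
        for app in sorted(self.installed):
            if app not in desired:
                actions.append(("remove", app, self.installed[app]))
        return actions

    def reconcile(self, desired, has_capacity):
        """One check-in cycle: apply the plan only when capacity allows."""
        if not has_capacity:
            return []  # defer; the device simply tries again at next check-in
        actions = self.plan(desired)
        for kind, app, version in actions:
            if kind == "pull":
                self.installed[app] = version
            else:
                del self.installed[app]
        return actions


if __name__ == "__main__":
    device = EdgeDevice({"vision": "1.0", "legacy-agent": "0.9"})
    profile = {"vision": "1.1", "plc-gateway": "2.0"}  # set by a central admin
    print(device.reconcile(profile, has_capacity=False))  # busy link: no-op
    print(device.reconcile(profile, has_capacity=True))   # converges
    print(device.installed)
```

The design point is that the central side only publishes the desired end state; each device decides when to act on it, so a slow or offline site just converges later.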

So who is tapping VMware for edge management? This is one area where I wanted to hear more, as VMware knows the enterprise. While a breadth of customers was not provided, the one customer example referenced was a big one – Audi. Audi is looking to transform their factory floors for Industry 4.0 – details here.

What’s the TechArena take? With solutions like ESX and VMware’s SD-WAN configurations, we expect those IT admins who have trusted VMware in the cloud may also trust VMware at the edge, questions about the current state of the company as part of the Broadcom empire notwithstanding. The solutions discussed provide a practical approach to automated edge oversight, and I’d hoped the speakers would take more credit for what is VMware’s ace in the hole: understanding of customers at a very deep level. I would expect that customer discussions on this edge solution would reflect bringing the entire heritage of VMware to the table, and I expect to see other name brands join Audi as notable customer deployments for the edge stack solution.

Zededa Drives Edge Application Management at Scale

Michael Maxey, Manny Calero and Jason Grimm from Zededa shared their vision for the edge today at Edge Field Day 3. Zededa was founded in 2016 with a mission to deliver a cloudlike experience for the edge and most notably began delivering Edge Application Services last year.

Their investors have guided their initial use cases, and with Chevron and Porsche on board, they’ve targeted oil and gas implementation and automotive as well as agriculture, renewable energy, manufacturing and more.

Unpacking the solution, Zededa delivers an open-source operating system based on Linux, a cloud control for edge app management, and a global marketplace for partner applications. They also support a broad ecosystem of infrastructure from the industry’s usual suspects. Expounding further, their cloud gives central management of all apps at the edge with built-in security, policy-based management, and pull updates for edge to address the unique environmental challenges of managing this fleet of devices.

The LF Edge operating system supports workloads across virtual machines, containers, K8s clusters, VNFs, CNFs, and custom runtimes, giving incredible flexibility to organizations. It is uniquely designed for the edge, providing unique security control given the challenges of maintaining data protection with remote devices. It’s an API-only system - meaning there is no log-in at the edge, which seems like the right choice given relative technical skillsets in edge environments.

The configuration has flexibility for Linux distributions as well as embedded hypervisors and supports partitions to allow for staging of workloads prior to activation. This also means that configurations can be rolled back to ensure reliability and continued uptime.
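Staging a workload on a separate partition and rolling back on failure is a familiar two-slot pattern. Here is a minimal sketch of that pattern, assuming a single staged slot and a caller-supplied health check; the `DualSlotDevice` class and the image names are hypothetical and do not describe Zededa's actual implementation.

```python
from typing import Callable, Optional


class DualSlotDevice:
    """Toy model of a device with an active slot and a staging slot."""

    def __init__(self, active_image: str) -> None:
        self.active: str = active_image
        self.staged: Optional[str] = None
        self.previous: Optional[str] = None

    def stage(self, image: str) -> None:
        """Write a new image to the inactive slot without touching the active one."""
        self.staged = image

    def activate(self, health_check: Callable[[str], bool]) -> bool:
        """Switch to the staged image; roll back if the health check fails."""
        if self.staged is None:
            raise RuntimeError("nothing staged")
        self.previous, self.active, self.staged = self.active, self.staged, None
        if not health_check(self.active):
            self.rollback()
            return False
        return True

    def rollback(self) -> None:
        """Return to the image that was active before the last switch."""
        if self.previous is None:
            raise RuntimeError("no previous image to roll back to")
        self.active, self.previous = self.previous, None


device = DualSlotDevice("app-v1")
device.stage("app-v2-broken")
ok = device.activate(health_check=lambda image: "broken" not in image)
print(ok, device.active)  # False app-v1
```

Because the old image is never overwritten until the new one proves healthy, a failed update costs a reboot rather than a site visit.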

Zededa has invested in unique edge control in the automotive arena, and Manny walked us through details on a massive deployment they’ve driven with the second largest automotive manufacturer on the planet.

The challenge within automotive? We’ve covered the electrification of automobiles on the TechArena through Robert Bielby’s series, and there are many targets for “data center on wheels” deployment across system control, infotainment, autonomous control and more. Zededa has focused on system control aspects of the automobile to drive secure transfer of data for service activation at the dealership. They’ve delivered a platform that offers the scale required to support millions of vehicles across 70,000 dealerships with an open-source foundation enabling the customer to avoid lock-in to one specific application.

Zededa delivers this configuration with support for all major platforms across x86, ARM and RISC-V. With this configuration, you can expect dealers to improve customer support while increasing both high-margin service revenue and the likelihood of repeat sales in the process.

Zededa also covered a rail freight use case, with Jason walking us through the details. Their solution has achieved 12 Fortune 500 deployments with tens of thousands of nodes in production, with zero trust oversight and a host of connectivity options for delivering the solution. The rail solution was deployed in a bungalow, connected via cellular and satellite, and linked to edge cameras capturing images of train movement. This resilient solution protects against connectivity failures and provides central control via the Zededa cloud controller.

So what’s the TechArena take? What marked Zededa’s session was the scale of deployments they’ve been able to achieve, the real world use cases that they described, and the broad scale of options customers have at every layer of the stack. I’m impressed with their team’s deep knowledge of customer challenges and ability to transform this knowledge into deployed solutions, solving real world business challenges. If companies are evaluating edge orchestration and application management alternatives, Zededa should be on the short list.

OnLogic – Driving Rugged Infrastructure at the Edge

OnLogic capped Edge Field Day 3, and they brought a fantastic story to the event. Lisa Groeneveld and her team described how OnLogic is not the typical VC-funded startup. They are a 20+ year old company headquartered in Burlington, Vermont and led by a husband and wife team that are co-founders of the firm.

They drive infrastructure anywhere – delivering industrial computers, rugged computers, panel PCs, and edge servers to a wide variety of edge deployment use cases.

They specialize in expert technical consultation and built-to-order solutions. They listen to their customers and work to deliver solutions that meet what customers require most. The team shared details of the actual infrastructure. Starting with the far edge, they break down infrastructure requirements as devices and edge servers, and they look at landing infrastructure in these environments to meet temp, dust, space, and vibration realities that exist in these environments.

To tell the story of their infrastructure, the team utilized a series of deployment examples including agriculture. OnLogic’s customer sought foundational compute for robotic food harvesting. Enter the Karbon 800 series computer offering a fanless design, extended temperature range, and military grade shock and vibration tolerance. This computer runs an embedded Intel i9 processor, offers GPU expansion ports for AI acceleration, and comes in three configuration options. The Karbon fueled the robotic solution the customer required to improve crop yields and save costs.

The team shared an example in the mining sector with a partner called Flasheye. Flasheye was seeking to tap visualization to reduce belt downtime in mining operations in horrifically rugged environments. Their customer operated a mine in Northern Sweden whose belt experienced spillage and required human intervention, increasing safety risk. The Flasheye solution is powered by an OnLogic Karbon 400 series platform. This computer can maintain uptime in temperatures down to -40 degrees with an efficient Atom processor and flexible I/O that supported the visualization integration desired by the customer.

The conversation next turned to a steel mill use case where intense heat, grime, and other particulates are a constant challenge. The customer, Steel Dynamics, sought to deliver monitoring of the fabrication environment. Enter the OnLogic Tacton TC401, an all-in-one panel PC, meaning a PC built into the panels of industrial equipment. This PC operates in temperatures from -20C to 70C and features an Intel 12th Gen CPU with a ModBay I/O expander supporting Wi-Fi and cellular connectivity.

The team rounded out use cases with a residential application, more specifically in high-rise elevator control. Nantum AI brought OnLogic a challenge for an interesting use case – how to deliver AI control to elevators to predict a power outage and avoid stranding people on elevators. Enter the Helix Industrial Edge Computer featuring a 10th-12th Gen Intel embedded Core processor, solid state design, and operating temps from 0C to 50C. The Helix offers slightly less ruggedness than the other solutions mentioned, making it perfect for a building deployment.

Finally, the team walked us through their partners. This started with Intel, which was no surprise given the continuous refrain of Intel processors across their fleet. While processors begin the collaboration, OnLogic is also tapping the OpenVINO toolkit to optimize software for their targeted solutions. The depth of this partnership, given my own personal history, comes as no surprise. Intel has invested deeply in embedded, IoT, and edge optimization including ruggedization of long-life CPUs. OnLogic’s depth of collaboration extends to AWS, where OnLogic is providing infrastructure for AWS Greengrass. The company also extended their collaboration discussion to software providers Red Hat, Avassa, and GuiseAI.

What’s the TechArena take? If I ever head back to industry, OnLogic represents the type of culture I’d want to be a part of. This company is led with integrity, and my guess is that extends to customer and partner relationships. But beyond that, they deliver the meat and potatoes of what is required at the edge – computing tailor designed for rugged environments with the core capabilities needed to ensure long life, continued uptime, and feature options to fuel desired workloads. They’ve built a reputation for both broad product configurability as well as trusted reliability. We expect their business to continue its growth path as edge use cases advance.

Why a Self-Driving Car Might Run a “STOB” Sign: To ViT or Not To ViT

The all-too-familiar octagonal red STOP sign with white trim around the border (at least in the United States) instructs the driver to come to a complete stop, look in both directions, and yield to traffic as required before proceeding. If the stop sign had the misfortune of being vandalized, perhaps a casualty of graffiti, the human driver would most likely be able to look beyond the graffiti to recognize that it is a STOP sign, and take the appropriate action.

It could be said that this behavior is analogous to “inductive priors,” a term used in the science of neural networks, where logical assumptions are made based upon the input data – even though some of the input data may be corrupted or occluded in some manner. In this case, the driver has seen enough stop signs and has enough cues in this sign to recognize it as a stop sign, despite the fact that it has been defaced.

Convolutional Neural Networks (CNNs) typically employ an equivalent type of inductive priors. This class of neural networks assumes that nearby pixels from a camera image are related (typically a reasonable assumption). This class of neural net also assumes that the importance of the different parts of the image is weighted similarly (no one part of the image is any more important than another part). The result is that for CNNs, a relatively limited amount of training is required before the network starts to demonstrate significant levels of accuracy. However, even when fully trained, the inherent accuracy of the CNN is limited when compared to other classes of neural nets, which means it might “jump to conclusions too quickly” to make a judgment and make mistakes.

Slightly off topic for a second, the intelligence of different breeds of dogs is typically measured by how many times a dog of a certain breed has to hear a command before it can recognize that command, or is trained. I am going to draw the analogy that different classes of neural networks are like different breeds of dogs, both in how long they take to train and in how accurately and repeatably they recognize a command from different voices.

While CNNs have been the mainstay deep neural network (DNN) employed to address perception in ADAS applications, as of late, Vision Transformers (ViT) have been gaining significant interest within the ADAS design communities, primarily due to their improved accuracy in object classification over CNNs. In the case of the ViT, however, the increased accuracy comes with the need for a very extensive set of training data. The ViT also demands increased compute performance over that of the CNN during deployment.

ViTs have very low inductive biases. While this is one of the factors leading to improved accuracy (this form of neural networks is less prone to “jump to conclusions”) a significantly larger training set is needed before the ViT can achieve a level of accuracy equivalent to that of the CNN. Also, because the ViT does not assume the importance of adjacent pixels, the full frame with all pixels present must be in place before ViT calculations are performed. This, in turn, is one of several factors that drive up requisite compute performance during deployment/inference.

Increasingly, image sensors with higher resolutions are being employed in the automobile as they can provide a greater amount of information about the surrounding environment of the vehicle as compared to their lower resolution (Mpixel) counterparts. Sensors that provide more information enable objects to be more readily detected from greater distances. This, in turn, enables the vehicle to operate autonomously at higher speeds. As an example, a lower resolution camera may provide only 5 pixels, which reflects an object that has been detected off in the distance. This, however, is an insufficient amount of information to accurately recognize the image and plan the appropriate action. The higher resolution camera, on the other hand, may provide 100 pixels of that same object, enabling the ADAS perception engine to readily recognize that object off in the distance as a pedestrian, cyclist, or stationary object providing more time to the autonomous vehicle to take the right action.
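A rough back-of-the-envelope calculation shows why resolution matters at distance. The sketch below assumes a simple model in which pixels are spread evenly across the horizontal field of view; the 60-degree field of view, the 0.5 m object width, the 100 m range, and the two sensor widths are illustrative assumptions of mine, not figures from any specific camera.

```python
import math


def pixels_across(object_width_m: float, distance_m: float,
                  sensor_width_px: int, hfov_deg: float) -> float:
    """Approximate horizontal pixels covering an object.

    Assumes uniform angular resolution across the field of view,
    which ignores lens distortion.
    """
    angular_width_deg = math.degrees(
        2 * math.atan(object_width_m / (2 * distance_m)))
    return sensor_width_px * angular_width_deg / hfov_deg


# A 0.5 m wide pedestrian at 100 m through a 60-degree lens.
low = pixels_across(0.5, 100.0, sensor_width_px=1280, hfov_deg=60.0)
high = pixels_across(0.5, 100.0, sensor_width_px=3840, hfov_deg=60.0)
print(round(low, 1), round(high, 1))  # about 6 vs. about 18 pixels wide
```

Tripling the horizontal resolution triples the pixels across the object. If vertical resolution scales the same way, the pixel area on the target grows roughly ninefold, which is the kind of jump that turns a smudge into a recognizable shape.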

While the CNN doesn’t require the full frame of the image to accurately detect the image, the ViT does. This further exacerbates the computational requirements of the ViT due to the greater amount of data within the frame that must be processed from the higher-resolution camera image in the same time period.
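To put numbers on that, here is a small worked example. It assumes a standard ViT that cuts the frame into 16x16-pixel patches and applies global self-attention, where every patch attends to every other patch, so the pairwise work grows with the square of the patch count. The patch size and the two frame sizes are illustrative assumptions; production ADAS networks often use windowed or hierarchical attention precisely to soften this growth.

```python
def vit_token_count(width_px: int, height_px: int, patch_px: int = 16) -> int:
    """Number of patches (tokens) a frame is cut into."""
    return (width_px // patch_px) * (height_px // patch_px)


def attention_pairs(tokens: int) -> int:
    """Token-to-token interactions in one global self-attention layer."""
    return tokens * tokens


low_tokens = vit_token_count(1280, 720)     # roughly a 1 Mpixel frame
high_tokens = vit_token_count(3840, 2160)   # roughly an 8 Mpixel frame

print(low_tokens, high_tokens)               # 3600 32400
print(high_tokens / low_tokens)              # 9.0
print(attention_pairs(high_tokens) / attention_pairs(low_tokens))  # 81.0
```

Under these assumptions, a frame with 9x the pixels produces 9x the tokens but 81x the attention interactions per layer, all of which must fit in the same frame period.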

Just as some breeds of dogs have different propensities towards being trained, neural network architectures can be viewed as being on a spectrum of inductive biases from strong to weak. CNNs are on one end of the spectrum while ViTs are on the other end.

As typically seems to be the case, there is no free lunch. The increased power consumption of the ViT driven by the inherent higher computation and the required increased training datasets and training times represent meaningful recurring and non-recurring costs. Hybrid architectures are emerging that combine CNNs and ViTs into one architecture, leveraging the inherent strengths of each neural network. These architectures strike a middle ground between CNNs’ and ViTs' inductive biases, finding a balance between the learning flexibility and accuracy of the ViT while reducing the amount of training required.

While the focus has been on comparing CNN vs. ViT, similar debates and tradeoffs are ongoing in other areas wherever AI is being employed. This is why it is paramount that upgradable AI architectures are deployed in the field: the optimal neural network can then be deployed as it emerges, avoiding the wrong response to a STOB sign.

TSecond Delivers Bryck AI for AI Inferencing at the Edge

The co-founders of TSecond, CEO Sahil Chawla and CTO Manavalan Krishnan, were on hand at Edge Field Day 3 to showcase Bryck and Bryck AI, black box edge devices chock full of storage and, in the case of the AI flavor, AI accelerators.

These solutions exist because large stores of data are being generated across the edge and, in a rising number of cases, because running AI inference at the edge eliminates the need to move those large data stores to the cloud.

We have covered rugged storage platforms for the edge on TechArena, and some of the vertical targets sounded very familiar – media, content delivery, defense to name a few. However, what made my ears perk up was a strong focus on aerospace and deep investment from Boeing in this growing organization.

Let’s go under the covers. Manavalan took us on a detailed tour of the hardware, explaining that the standard Bryck is a storage platform with up to 1 PB of capacity, 40 GB/sec of throughput across 256 parallel PCIe Gen 4 data lanes, and integrated security capabilities.
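Those two headline numbers imply a useful third one: how long it takes to move a full device's worth of data at the quoted rate. The quick calculation below assumes decimal units (1 PB = 10^15 bytes, 1 GB = 10^9 bytes) and sustained peak throughput, which real workloads rarely achieve; the function name is my own.

```python
def full_transfer_seconds(capacity_bytes: int, throughput_bytes_per_s: int) -> float:
    """Time to read or write the full capacity at a sustained rate."""
    return capacity_bytes / throughput_bytes_per_s


PB = 10**15  # decimal petabyte (assumption)
GB = 10**9   # decimal gigabyte (assumption)

seconds = full_transfer_seconds(1 * PB, 40 * GB)
hours = seconds / 3600
print(seconds)          # 25000.0
print(round(hours, 2))  # 6.94
```

So even a completely full Bryck could, at best, be drained in roughly seven hours, which helps explain the appeal of physically shipping the box rather than pushing a petabyte over a WAN link.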

A Bryck requires 240-800W to run, bringing it in as energy efficient vs. storage alternatives. The platform promises to be faster and lighter than competitive offerings as well, while promising rugged resiliency for edge environments, hot pluggability, fault tolerance, and, thanks to its low weight, cost-effective shipping to edge locations. TSecond claims it costs less than $100 to ship anywhere in the US, which matters when you consider the scale of edge device delivery in this growing market and that competitive solutions weigh in at up to 500 lbs vs. 20 lbs for Bryck. Bryck can also scale through multi-Bryck configurations, and a Bryck Mini is available in various capacities.

Bryck integrates the expected storage software including support for AWS, GCP and Azure for easy integration. They also offer Data Dart, a collaboration with Equinix to accelerate data transport to the cloud utilizing physical Bryck upload with movement of data from Equinix to the cloud provider of choice.

Avassa: The Swedes Have Invaded the Edge

I was delighted to see Avassa back at Edge Field Day showcasing the latest technology that the company is delivering for edge application and orchestration oversight.

Avassa is a software-only, cloud-based Control Tower platform for the edge that is container-first in orientation. Container first is a mantra at the company: Avassa called out that on the “cloud out” side, VMs are trending toward eventually being sunset out of existence, while on the “IoT in” side, containers are rising as the de facto standard.

Carl Moberg, CTO of Avassa and a TechArena innovation voice, said that full elimination of VMs will be difficult due to pesky Windows-based applications that continue to require support and are unfriendly in native containerized environments.

So what is Avassa doing to support this continuum? They noted that an open, industry-standard framework for integrating VMs and containers is required, one that supports containerization of VMs for legacy apps.

Avassa delivers encapsulation through a stack containing a container image encapsulating a VM image and start script sitting on top of a container runtime using KVM with integrated health probes. While their container orchestrator cannot natively manage VM updates, they have developed means for this management and demonstrated this live in the session.

Their management extends to leading edge deployments of AI models or WebAssembly (Wasm) containerized alongside Linux-based modern applications. This natural containerization of AI models and WebAssembly makes sense given that these have been primarily developed by born-on-the-cloud organizations, in many cases by the very same engineers who built cloud native computing models.

The Avassa Control Tower demonstration highlighted ease of control of edge applications as well as depth of information collected by the Avassa oversight including the aforementioned health probes. They demonstrated migration of a VM-based service to a containerized alternative without disruption of service.

So what about Avassa’s decision to control with Docker runtime with KVM vs. K8s? This was a point of contention in last Edge Field Day’s presentation from the company, and Carl provided a helpful insight on market traction, stating that many companies from mid-market to hyperscalers have embraced this configuration for far or leaf edge environments where Avassa is targeted. He admitted that when edge starts looking like a data center in control and complexity, K8s may make sense, but that this is not Avassa’s target. This makes sense given the leaner stack offered by the Avassa solution for the constraints of edge infrastructure.

Carl and Fredrik moved on to management of applications in offline edge scenarios. Once edge devices are up and running with Avassa’s Control Tower, how are applications managed, and what happens when facing a scenario of upstream outages? The Avassa team provided a demo with a literal cut cord, which made the Edge Field Day delegates quite twitchy. After all, many are former or current IT administrators. Carl pointed out that edge requires a different approach from cloud environments, which rely on failover in a situation like this.

Avassa manages this by relying on a downstream admin with unique control of nodes at the site. This control creates an updated stack at the edge, disconnected from the central Control Tower, and customers choose how they manage the complexity of central workloads vs. local deployments once edge resources come back online.

So what is the TechArena take? Avassa has delivered a compelling central control across device orchestration and application management. I think they’ve made some tough choices on how to manage edge environments with the efficiency and control required for these environments, and I think they’ve chosen wisely for delivery of services within the far edge. For customers looking to deploy containerized environments, they’re a terrific option and should be evaluated by enterprises across industries. To learn more about Avassa, visit their site and check out TechArena’s content featuring the company.

Recap Report: 2024 AI Hardware and Edge AI Summit

Check out our in-depth look at key takeaways from AI Hardware and Edge AI Summit, highlighting how advancements in AI infrastructure, acceleration, and connectivity are transforming the future of computing.

Alphawave Semi Advances with New Solutions, Chiplet Innovation

I love talking to Letizia Giuliano. Letizia drives product marketing and management at Alphawave, and her passion for silicon design and standards innovation is infectious. In our latest chat on my In the Arena podcast, I spoke with her about the game-changing innovations required for AI advancement across connectivity and broader silicon foundations.

Letizia shared a fascinating view on how Alphawave is pushing the boundaries of high-speed interconnects to support the ever-growing demands of AI infrastructure, and how new competition is coming to the connectivity arena in this growing market.

Letizia and I have been discussing chiplets since last year, and last week’s chat highlighted how the designs are revolutionizing semiconductor implementation by improving both performance and efficiency. Unlike traditional monolithic chips, chiplets allow designers to break up complex components into smaller, interconnected pieces, optimizing for power, performance, and cost.

I challenged Letizia on progress towards an open chiplet industry, and she explained that while Alphawave is focusing on open standards to ensure interoperability between different chiplets and systems, current designs focus on bespoke customer solutions. There is still challenge in the market for standards-setting as well as tension that some players benefit more from an open industry than others.

One area that is advancing rapidly with standards delivery is scale-up fabric technology. With AI workloads growing at an unprecedented rate, moving massive amounts of data quickly and efficiently between processing units is a key challenge. Letizia was particularly excited about the industry’s progress in this space, highlighting that the new Ultra Accelerator Link (UALink) Consortium is advancing standards that will enable interoperability across multiple companies’ accelerator technology. Today, this area of technology is largely controlled by NVIDIA GPUs and NVLink, which serves as a barrier to entry for other accelerator alternatives.

We also touched on the importance of scalability. As AI models like GPT-4 and LLaMA continue to grow, the ability to scale up infrastructure to support these models is becoming increasingly critical. Alphawave is aiming to provide scalable solutions that not only meet today’s needs but are also future-proof, ensuring that data centers can easily expand to handle tomorrow’s AI workloads.

Letizia shared that the future of AI isn’t just about more powerful models, but about smarter infrastructure. And with Alphawave’s commitment to driving forward innovation in both connectivity and standards embracing design, the company is on track to be an important player in the future shaping of AI silicon.

For those who want to dive deeper into the details of Alphawave Semi’s solutions in this space, I highly recommend tuning into the full interview and visiting their website.

Omni-Path Taking on InfiniBand for AI Fabric Leadership

For those of you who follow the TechArena – or the technology landscape – you’re likely familiar with the name Lisa Spelman. Former head of all things Xeon at Intel, Lisa has driven tens of billions in business creation in her career as well as gaining real-world experience in the IT realm at the company.

Her knowledge of both the data center arena and of the large hyperscalers is unquestioned, and we at TechArena were excited by her recent move to take on leadership as CEO of Cornelis Networks as the company seeks to drive their technology into the AI landscape. Cornelis is the provider of Omni-Path fabric technology, a competitive fabric to InfiniBand that heretofore has had great success in HPC clusters across the globe.

I finally got to catch up with Lisa at the AI Hardware and Edge AI Summit to hear her vision for the company and how she is going to harness Cornelis technology to compete squarely with NVIDIA’s InfiniBand technology as a fabric foundation for AI training clusters. Lisa did not disappoint. We dove into the future of AI scale-out and how Cornelis is leading the charge with their Omni-Path solutions, with an approach to maximize performance, scalability, and interoperability for data centers, ensuring that AI models can scale effortlessly across different environments.

Lisa highlighted how Omni-Path provides the foundation for building out next-gen AI infrastructure, making it easier for businesses to scale their AI workloads without running into bottlenecks of other technologies like Ethernet. She shared that Cornelis has already seen substantial traction with customers who are looking to optimize their data center operations for AI. One of the key takeaways for me from our conversation was how AI scale-out is moving beyond just a cloud discussion. Companies are increasingly interested in hybrid models that balance the power of cloud computing with the control and security of on-prem solutions.

What’s the TechArena take? If you’re keeping an eye on AI infrastructure, you’ll want to closely watch what Cornelis Networks is doing. Cornelis is helping companies deliver to the hybrid vision, and their goal of future-proofing AI infrastructure and providing an alternative technology to an all-NVIDIA solution is something that businesses can't afford to ignore. Cornelis’ focus on true interoperability with Ethernet and Ultra Ethernet extends Omni-Path into, from my view, a very viable and competitive solution in the market addressing both technology and business needs for new AI cluster deployments.

Their innovative approach to fabric delivery, and frankly their mere existence as a competitor to NVIDIA, will be a game changer for companies looking to optimize their near-term AI operations while maintaining the flexibility to grow in a constantly evolving tech landscape. Cornelis is indeed in a perfect position to disrupt a market, and with Lisa’s extensive knowledge of the data center landscape, key players, and business savvy, I expect a bright future for Omni-Path.

With Lemony, Uptime Industries Stacks Up AI at the Deskside

In my recent chat with Sascha Buehrle from Uptime Industries, we dove deep into the exciting world of AI at the edge, powered by their innovative platform Lemony. What stood out to me is how Lemony is designed to bring scalable and secure generative AI right to your desk. Instead of relying on massive, cloud-based infrastructure, Lemony offers an on-premise solution that is not only easy to deploy, but also incredibly accessible for small and mid-sized businesses.

One of the key highlights from Sascha was Lemony’s model support. It comes preconfigured to support several generative AI models with an emphasis on Meta’s Llama, the predominant open source tool du jour, which means you can hit the ground running with everything from natural language processing to image generation tasks and take advantage of a variety of tools to fit the job. It's a seamless integration for businesses that want to start using AI but don't have the resources to maintain large AI clusters or hire a specialized data science team.

Storage is another critical aspect when considering AI training at the deskside, and Lemony comes with options for up to 10TB of storage, making it perfect for businesses that need to manage substantial amounts of data securely. Sascha mentioned that Lemony’s edge AI solution can handle complex datasets while maintaining data sovereignty — a huge selling point for companies that prioritize privacy and data control. And since it's all on-premise, there’s no need to worry about the potential risks of sending sensitive information to the cloud.

One thing I’d called out in my pre-AIHW Summit show blog was a question on customer traction, and Sascha delivered insight into the early success the team has had with Lemony. It’s impressive to see the traction the company is gaining with customers. In just under a year, Uptime Industries has secured over 100 early adopters across a variety of industries — from finance to healthcare — who are using Lemony to streamline their operations with AI. This is a massive vote of confidence in the platform’s ability to scale and deliver real business value. Businesses are particularly drawn to the security features and the fact that Lemony is an all-in-one box solution, which means you can deploy it right in your office and start seeing the benefits immediately.

What’s the TechArena take? I don’t think Lemony represents the alpha and omega of the future of generative AI, but it does bring a fantastic product to the market that could tap a sweet spot of early adoption. Lemony is about bringing AI to where it’s most needed today: closer to your data, closer to your operations, and in a way that fits many companies’ specific usage model. I can’t wait to see where Uptime Industries goes from here, but with the momentum they’re building, I have no doubt they’ll play a key role in making AI practical and accessible for the rest of us. Watch this space as Lemony continues to evolve, and don’t miss out on the opportunities AI at the edge can bring to your business.

AI, Data Centers, and Bare Metal Cloud: Insights from PhoenixNAP

During our latest Data Insights podcast, sponsored by Solidigm, Ian McClarty of PhoenixNAP shares how AI is shaping data centers, discusses the rise of Bare Metal Cloud solutions, and more.

Exploring Cerebras' AI Innovations: Inference, Llama, and Efficiency

Sean Lie of Cerebras Systems shares insights on cutting-edge AI hardware, including their game-changing wafer-scale chips, Llama model performance, and innovations in inference and efficiency.

AI Connectivity & Chiplet Innovation at Alphawave Semi Unveiled

Letizia Giuliano of Alphawave Semi discusses advancements in AI connectivity, chiplet designs, and the path toward open standards at the AI Hardware Summit with host Allyson Klein.

Optimizing AI Scale-Out: Cornelis Networks’ Vision with Lisa Spelman

Lisa Spelman, CEO of Cornelis Networks, discusses the future of AI scale-out, Omni-Path architecture, and how their innovative solutions drive performance, scalability, and interoperability in data centers.

Graid Delivers Innovative Storage for AI and Data Analytics

Kelley Osburn gets storage. As an industry veteran and leader at Graid Technology, Kelley recently shared his insights on how the storage arena is rapidly transforming to fuel AI workloads and how his company’s SupremeRAID™ solution – a revolutionary approach to tackling modern data storage challenges – is hitting a sweet spot in the market.

So why is traditional RAID no longer sufficient? Kelley explained how these configurations struggle with high-performance computing demands, especially in data-intensive environments. He emphasized the need for innovation in data storage as the exponential growth in data continues to challenge existing systems, explaining that RAID's original purpose was to provide redundancy and protection against disk failures. While this redundancy is still valued, it lacks the performance desired by many customers.

As data stores grow and fast data delivery becomes more urgent, rethinking the approach extends the viability of RAID while meeting customer demand. Graid's SupremeRAID™ solution, for example, optimizes storage performance by offloading RAID tasks to a dedicated hardware device, enhancing speed and efficiency without compromising data integrity. This makes it an ideal solution for customers managing massive amounts of data for applications like AI, machine learning, and big data analytics.

Kelley detailed the core value of Graid’s solution, describing how SupremeRAID™ addresses critical bottlenecks in traditional storage systems by offering unprecedented performance gains while reducing the computational load on CPU and system resources. The innovation lies in its architecture, which integrates both hardware and software in a way that eliminates RAID-specific processing burdens from the host server, thus allowing server resources to focus on other tasks. The result is a solution that dramatically improves throughput and reduces latency, creating a more balanced and efficient data environment.

Beyond AI and ML, SupremeRAID™ is also a valuable tool in media and entertainment, where high-resolution content creation, editing, and rendering demand significant data processing power. Its ability to handle these workloads without compromising performance makes it a game-changer for companies managing large data sets.

Industry Implications and Future Outlook

So what are the broader implications of Graid's innovations for the storage industry? Kelley explained that as companies continue to generate vast quantities of data, the demand for more efficient and performant storage solutions will only grow. Graid’s SupremeRAID™ is positioned to address these challenges head-on, providing enterprises with the tools they need to manage, protect, and access their data faster and more reliably than ever before. This will rely on underlying storage media delivering the performance and density required for these tasks, and Kelley pointed to Graid’s strategic collaboration with Solidigm as an example of how high-performance QLC memory delivers unique value to customers.

Looking to the future, Graid plans to continue evolving its technology to meet the ever-increasing demands of the data economy. As data volumes grow, so too will the need for innovative storage solutions that can handle not just the size, but the speed and complexity of modern workloads. SupremeRAID™ represents a critical step in that direction, offering a glimpse into the future of RAID technology and its role in addressing the data challenges of tomorrow.

Want to learn more? Check out the full episode.

AI on Your Desk with Uptime Industries’ Lemony

Join Sascha Buehrle of Uptime Industries as he reveals how Lemony AI offers scalable, secure, on-premises solutions, speeding adoption of genAI.

PNNL Advances AI Capability

In the latest episode of The Tech Arena, I had a fantastic conversation with Neeraj Kumar, the Chief Data Scientist at Pacific Northwest National Laboratory (PNNL), about how AI is shaping the future, especially around energy efficiency and large-scale data processing.

Neeraj is a dynamic thinker who’s deeply invested in how AI can revolutionize everything from scientific discovery to sustainable technology. Together, we explored some of the key innovations and challenges in the AI space, with a special focus on energy consumption and the balance between advancing technology and environmental responsibility.

Neeraj kicked off by discussing one of the most pressing topics in AI today — how to manage the skyrocketing demand for computing power without exacerbating the climate crisis. AI is, after all, a double-edged sword. While it brings incredible breakthroughs in fields like medicine, climate modeling, and autonomous systems, it also consumes a huge amount of energy. Neeraj highlighted this tension between innovation and sustainability and stressed that AI's future hinges on solving this dilemma.

He shared insights into his work at PNNL, where AI is driving cutting-edge research. One area he focused on was the collaboration between PNNL and Micron to improve memory systems for AI and high-performance computing (HPC). As AI systems evolve, they require ever-greater amounts of data to be processed in real time. That’s where memory plays a critical role. Neeraj pointed out that memory bottlenecks can cripple even the most advanced AI models, and optimizing these systems is crucial for enabling more energy-efficient AI. He believes that advancements in memory technology will be a game-changer in achieving the balance between computational power and energy efficiency.

I loved how Neeraj dove into the technical aspects of how PNNL and Micron are innovating in this space. They're working to enhance memory architectures to meet the needs of next-generation AI systems. The aim is to reduce the energy footprint of AI while improving its capacity to handle massive datasets. This kind of optimization is vital for industries like healthcare, where AI can sift through oceans of data to discover new treatment methods or predict patient outcomes, but only if the computational infrastructure can keep up.

We also discussed how intertwined the worlds of AI and compute efficiency have become. It’s no longer enough to simply innovate; those innovations must also be energy-conscious. Neeraj made a great point about how the energy demands of AI systems are growing exponentially, and we need to rethink how we design hardware and software. He highlighted how PNNL’s research is pushing the boundaries on what's possible, from creating AI models that are more efficient in their data use to developing entirely new computing architectures that reduce energy consumption without compromising performance.

Neeraj shared his vision that AI itself can be a powerful tool for combating climate change on a broader scale. For instance, AI can help optimize energy grids, improve the efficiency of wind and solar farms, and even model climate patterns more accurately. But to truly leverage AI in the fight against climate change, we must first ensure that the technology is sustainable. Neeraj was candid about the fact that we’re not there yet, but with ongoing research and innovation, we’re heading in the right direction.

Climate change is not the only area where AI is contributing to advancement in the scientific community. Neeraj shared some compelling examples of how AI is transforming research at PNNL. One standout example was how AI is being used to accelerate scientific discovery by identifying patterns in data that humans might miss. This is particularly important in fields like materials science and chemistry, where AI can analyze vast datasets from experiments and simulations to uncover new materials with potential applications in everything from energy storage to quantum computing.

The role of AI in scientific discovery is something Neeraj is particularly passionate about, and it was evident in the way he spoke about its potential. He emphasized that AI doesn’t replace scientists; rather, it amplifies their ability to make new discoveries. In many ways, AI acts as a partner to researchers, sifting through enormous amounts of data and generating insights that can lead to breakthroughs much faster than traditional methods. This is where AI’s promise really lies — in its ability to accelerate progress in some of the most complex and pressing challenges of our time.

Towards the end of our conversation, we touched on the future of AI and what it might look like in the next decade. Neeraj is optimistic about where the field is headed but also realistic about the challenges that lie ahead. The next big leap, according to him, will come from integrating AI with other emerging technologies like quantum computing. This, he believes, will open up new frontiers in both AI capabilities and energy efficiency.

We cover enterprise applications of AI extensively on TechArena, and the podcast with Neeraj was a welcome reminder of how AI is contributing positively to society at large, advancing science in ways we couldn’t otherwise accomplish. Significant challenges remain in tapping the full potential of AI for these use cases, especially when it comes to energy consumption. But with leaders like PNNL and other US labs driving innovation, I’m confident that we’re on the path to a more sustainable, AI-driven future.
