
A Deeper Dive on Functional Safety (FuSa)

Last October, I wrote a blog on Functional Safety that provided a high-level overview of this critical, complex topic. It received very strong interest and has proven to be one of my most popular posts to date. Given that interest in FuSa (or ISO 26262), it seemed appropriate to take a deeper dive, with a specific focus on random fault coverage, which the previous blog covered only briefly.

To recap, the key points regarding FuSa are as follows:

- The key objective of any system that affects the safety of the vehicle is to never experience a failure, and this is addressed through design rigor and high-quality devices and processes. However, FuSa anticipates that even with the best designs and quality, failures are still unavoidable. FuSa therefore focuses on detecting failures when they occur and flagging them, so the vehicle can respond accordingly. The point is not that failures never occur; what matters is that they are detected when they do.

- FuSa also anticipates that the additional hardware added to detect failures (called safety mechanisms) is itself prone to failure.

- The Automotive Safety Integrity Level (ASIL) specifies the acceptable frequency at which failures escape detection by the safety mechanisms. ASILs are designated by the letters A through D in increasing order of stringency, with ASIL D the most stringent. The more critical the impact a failure has on control of the vehicle, the higher the required ASIL.

- There are two primary components to FuSa:

  - Systematic fault coverage – Ensures that the processes used to design, document, test, and verify the device have a certain rigor; the required level of that rigor is specified by an associated ASIL, A through D.

  - Random fault coverage – Focuses on random hardware failures that can occur unpredictably during the lifetime of a component.

- Typically, devices that have meaningful impact on the safety of the system are required to be certified to ASIL D/B - which means that the device supports ASIL D systematic fault coverage and ASIL B random fault coverage. Achieving ASIL D random fault coverage at the “system level” (multiple devices working together in one system) typically relies on a concept called decomposition. This is a topic that will be covered in a future blog.

Now that the reader is well versed in FuSa and ready to be a Safety Manager (a real title: the individual responsible for overseeing the company's safety efforts, a role required for compliance with the specification), we are going to take a more in-depth look at random fault coverage.

Random hardware failures occur unpredictably over the lifetime of a product; individually they cannot be foreseen, but collectively they are probabilistic in nature. This is the basis for the term probabilistic metric for random hardware failures (PMHF). These failures occur for various reasons that are independent of design and quality rigor. Typically, random failures occur at different rates during three distinct periods of the product's lifetime.

- Burn-in – Typically referred to as infant mortality: shortly after manufacturing, devices can exhibit large numbers of failures until they have been fully burned in.

- Useful life – This is the period after burn-in, during which the device is actively employed in the end application. Random failure rates are typically low during this period, and it is the period for which random failure rates are generally calculated and assessed.

- Wearout – This is the period when the device has served its useful life and is now operating beyond the period that the device has been designed to operate – typically referred to as the “mission profile” of the device. Similar to the burn-in period, during the wearout period, the device typically exhibits a significantly higher random failure rate.
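The three periods above are often drawn as a "bathtub curve." One common way to model that shape, assumed here rather than taken from this post, is to add a Weibull hazard with shape k < 1 (falling rate) for infant mortality, a constant rate for useful life, and a Weibull hazard with k > 1 (rising rate) for wearout. All parameter values below are hypothetical and chosen only to make the shape visible.

```python
# Illustrative bathtub curve built from three hazard-rate terms.
# All parameters are hypothetical, chosen only to make the shape visible.

def weibull_hazard(t_hours: float, shape: float, scale_hours: float) -> float:
    """Weibull hazard h(t) = (k/lambda) * (t/lambda)**(k-1), in failures per hour (t > 0)."""
    return (shape / scale_hours) * (t_hours / scale_hours) ** (shape - 1)

def bathtub_hazard(t_hours: float) -> float:
    infant = weibull_hazard(t_hours, shape=0.5, scale_hours=1e10)  # k < 1: falling rate
    useful = 1e-7                                                   # constant rate (100 FIT)
    wearout = weibull_hazard(t_hours, shape=8.0, scale_hours=3e4)   # k > 1: rising rate
    return infant + useful + wearout

for t in (1, 100, 5_000, 15_000, 25_000):
    # Convert failures per hour to FIT (failures per 1e9 hours) for readability
    print(f"t = {t:>6} h  ->  {bathtub_hazard(t) * 1e9:12,.1f} FIT")
```

Printing the hazard at a few points shows a high rate at the start, a low floor in mid-life, and a steep rise late in life. `t_hours` must be positive, since the infant-mortality term diverges at t = 0.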

As part of the safety analysis of a device, the potential failure modes, including those caused by neutron strikes, are thoroughly evaluated. Random failures are measured in failures in time (FIT). One FIT equals one failure in 1 billion device operating hours, or roughly one failure in 114,000 years of continuous operation for a single device. To say these specifications are stringent is perhaps an understatement, but such extremely low failure rates are important considering that the electronics ultimately have control over the vehicle.
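The FIT arithmetic is easy to check. The sketch below assumes a constant failure rate over the useful life and an 8,760-hour year; the fleet size and operating hours in the second example are hypothetical, used only to show why such small rates still matter across millions of vehicles.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours; leap years ignored

def fit_to_rate_per_hour(fit: float) -> float:
    """1 FIT = 1 failure per 1e9 device-hours."""
    return fit / 1e9

def years_between_failures(fit: float) -> float:
    """Mean operating time per failure for a single device, in years."""
    return (1e9 / fit) / HOURS_PER_YEAR

def expected_failures(fit: float, devices: int, hours_per_device: float) -> float:
    """Expected number of failures across a fleet, assuming a constant failure rate."""
    return fit * devices * hours_per_device / 1e9

print(f"1 FIT -> one failure every {years_between_failures(1):,.0f} years per device")
# Hypothetical fleet: a 10 FIT part in 1,000,000 vehicles, each operated 500 hours a year
print(f"Expected failures per year: {expected_failures(10, 1_000_000, 500):.0f}")
```

The first line prints roughly 114,155 years, matching the figure above; the hypothetical fleet works out to about 5 expected failures per year.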

In addition to the PMHF, the device is analyzed for how well its design withstands single-point faults; this is captured by the single-point fault metric (SPFM). This metric evaluates how effectively the safety mechanisms both detect and handle single-point (isolated) faults. In other words, it shows whether there is a case in which a single fault of a specific type can overwhelm the safety mechanism.
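As a rough illustration, ISO 26262-5 defines SPFM as one minus the ratio of single-point and residual fault rates to the total failure rate of the safety-related hardware. The sketch below computes that ratio; the `ElementRates` type and the FIT values are hypothetical, standing in for the per-element numbers a real failure-mode analysis would produce.

```python
from dataclasses import dataclass

@dataclass
class ElementRates:
    """Failure rates (FIT) of one safety-related hardware element (hypothetical)."""
    total: float         # lambda: all failure modes of the element
    single_point: float  # lambda_SPF: faults not covered by any safety mechanism
    residual: float      # lambda_RF: covered faults that the safety mechanism still misses

def spfm(elements: list[ElementRates]) -> float:
    """SPFM = 1 - sum(lambda_SPF + lambda_RF) / sum(lambda) over the elements."""
    total = sum(e.total for e in elements)
    uncovered = sum(e.single_point + e.residual for e in elements)
    return 1.0 - uncovered / total

# Two hypothetical elements whose safety mechanisms catch 99% and 98% of their faults
design = [
    ElementRates(total=50.0, single_point=0.0, residual=0.5),
    ElementRates(total=50.0, single_point=0.0, residual=1.0),
]
print(f"SPFM = {spfm(design):.1%}")
```

With these made-up rates, the SPFM comes out at about 98.5%.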

Lastly, the final key metric evaluated in the context of achieving a given ASIL is the latent fault metric (LFM). This metric measures how effectively a system’s safety mechanisms detect faults that could otherwise remain undetected for extended periods of time. The required values for the various metrics by ASIL are shown in the table below.

Consistent with the points made earlier, higher ASILs drive more stringent requirements.
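The latent fault metric follows the same pattern, but is computed over the faults that remain after single-point and residual faults are removed. The sketch below pairs it with the commonly cited ISO 26262-5 target values for SPFM, LFM, and PMHF at ASIL B through D (ASIL A has no requirement for these metrics). Treat the dictionary as a convenience copy to verify against the standard, and the design numbers as hypothetical.

```python
# Commonly cited ISO 26262-5 targets; ASIL A has no requirement for these metrics.
TARGETS = {
    "B": {"spfm": 0.90, "lfm": 0.60, "pmhf_fit": 100.0},
    "C": {"spfm": 0.97, "lfm": 0.80, "pmhf_fit": 100.0},
    "D": {"spfm": 0.99, "lfm": 0.90, "pmhf_fit": 10.0},
}

def lfm(total_fit: float, spf_fit: float, residual_fit: float, latent_fit: float) -> float:
    """LFM = 1 - sum(lambda_MPF,latent) / sum(lambda - lambda_SPF - lambda_RF)."""
    return 1.0 - latent_fit / (total_fit - spf_fit - residual_fit)

def meets_targets(asil: str, spfm_value: float, lfm_value: float, pmhf_fit: float) -> bool:
    """SPFM and LFM must reach their targets; PMHF must stay strictly below its target."""
    t = TARGETS[asil]
    return (spfm_value >= t["spfm"]
            and lfm_value >= t["lfm"]
            and pmhf_fit < t["pmhf_fit"])

# Hypothetical design: 100 FIT total, 1.5 FIT residual, 5 FIT of latent multiple-point faults
lfm_value = lfm(100.0, 0.0, 1.5, 5.0)
for asil in TARGETS:
    print(asil, meets_targets(asil, spfm_value=0.985, lfm_value=lfm_value, pmhf_fit=8.0))
```

For this hypothetical design, ASIL B and C pass while ASIL D fails, because an SPFM of 98.5% falls short of the 99% target.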

And yet again, we have only scratched the surface of this topic, but it is probably easiest to get your arms around it by taking small, bite-sized pieces. There are many other topics to cover in this complex field, which is of extreme importance as growing numbers of increasingly complex semiconductor devices take greater control over the vehicle.

In upcoming blogs, we will look at the concept of decomposition, or how to achieve ASIL D random fault coverage at the system level, while employing devices that are only certified to support ASIL B random fault coverage.


Scaling AI and Biotech for Global Health Impact

In this episode of In the Arena, hear how cross-border collaboration, sustainability, and tech are shaping the future of patient care and innovation.

DeepSeek: Revolutionizing AI with Intel's Advanced Compute Solutions

Please raise your hand if you knew what DeepSeek was in December 2024. Thanks.

The last two years of innovation in the AI field have been astonishing, with the only constant being unpredictability. That trend is not expected to change, and projects like DeepSeek confirm how rapidly the environment is changing. Besides the technical value its detailed technical report brought to the industry, the initiative carries a significant hidden message: “Complete” with massive resource scale is not enough anymore; it’s time to pursue the “Good.”

If yesterday’s focus was on unlocking new capabilities, today’s is on optimizing them. Science history is full of examples: computers that were once the size of a house now fit in a pocket and do far more than their predecessors. While AI is still in its early days and we should expect much more, there is a pressing need from the market and the developer communities to do more with less. This need is further motivated by financial considerations and environmental-impact policies.

This is the hidden ambition driving demand for an evolution of all the components of AI pipelines, such as software and hardware, and is not limited to the algorithmic approach itself.

The encouraging aspect is that Intel anticipated some of these needs at a very early stage, even before DeepSeek became a buzzword, so developers and enterprises now have the opportunity to “get more with less” out of their infrastructure or preferred devices.

Before seeing how, let’s expand on the key changes proposed by DeepSeek.

DeepSeek News: Separating the Wheat From the Chaff

DeepSeek represents a paradigm shift in AI model development. But what exactly did they do, and why did it generate so much hype?

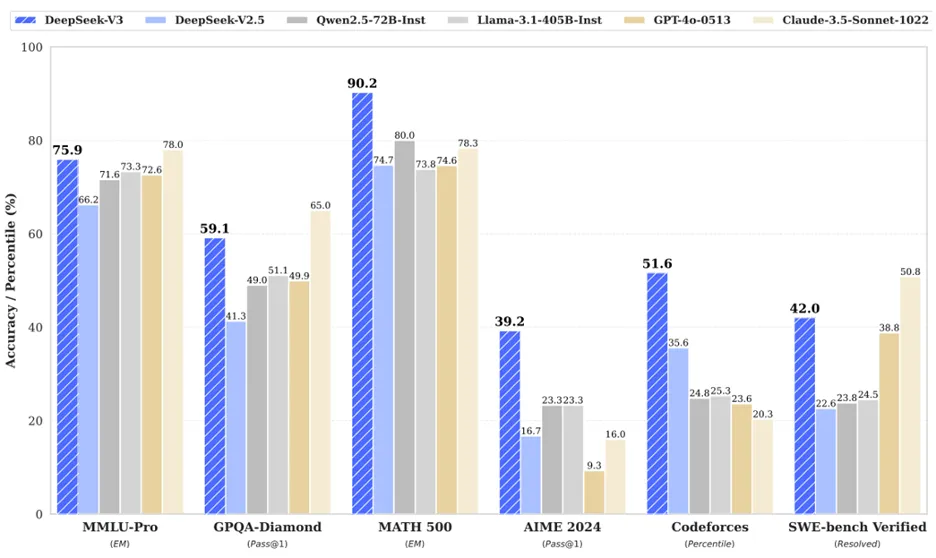

We can split the contributions into business and technical ones. On the business side, they released a free-to-use model (open weights under an MIT license, which allows anyone to build and sell products with it) with competitive performance (see Fig.1 below), obtained using a fraction of the resources claimed by others.

On the technical side, they combined several state-of-the-art methods to squeeze out maximum performance. These include architectural optimizations such as Mixture-of-Experts (MoE), Multi-head Latent Attention (MLA), and Multi-Token Prediction (MTP); revised training procedures such as DualPipe and quantization to FP8; and post-training optimizations such as Group Relative Policy Optimization (GRPO). Explaining these techniques is outside the scope of this article, but all the details can be found in the DeepSeek technical report.

Fig.1: DeepSeek performance comparison with other models. Source: https://arxiv.org/pdf/2412.19437

Key Takeaways for the Market?

To maximize performance at execution time, DeepSeek went beyond commonly used libraries such as CUDA and rewrote some of the communication and memory allocation modules from scratch [1]. In other words, DeepSeek went off the beaten path to achieve its goal with the resources it had. They picked a custom approach over brute force: smarter instead of bigger, quality over quantity. Maybe they were less scared of customizing code than of investing more money in hardware?

The point is that the market needs alternative routes, not only for training but also for hosting inference. This is also confirmed by the variants released by DeepSeek [2], which allow developers to adopt either the 671B version or much smaller distilled alternatives such as the 1.5B model [3].

Another bold step was to have the model work at a lower numerical precision, such as FP8. Normally in AI pipelines, training is performed using more bits (e.g., BF16 or FP32) to better capture the nuances of the data and the correlations within it. More bits make the representation more granular, and hence training more effective. Reducing the number of bits can certainly speed up computation, but potentially at the expense of final model accuracy. Quantization is more commonly adopted at inference time, for easier deployment and faster execution (particularly helpful on edge devices). In this case, however, the DeepSeek team used FP8 during training itself. This is a milestone that can encourage others to follow the same approach.
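To make the precision trade-off concrete, here is a simplified sketch that rounds a value to the number of explicit mantissa bits in each format: 23 for FP32, 7 for BF16, and 3 for the FP8 E4M3 variant. It is an illustrative model, not how DeepSeek or any library implements FP8; it ignores exponent range, subnormals, and overflow, which real low-precision formats also have to manage.

```python
import math

def round_mantissa(x: float, mantissa_bits: int) -> float:
    """Round x to the nearest value with `mantissa_bits` explicit mantissa bits.

    Simplified model: only mantissa precision is simulated. Exponent range,
    subnormals, overflow, and saturation of real FP8/BF16 formats are ignored.
    """
    if x == 0.0 or not math.isfinite(x):
        return x
    mant, exp = math.frexp(x)            # x = mant * 2**exp with 0.5 <= |mant| < 1
    scale = 2 ** (mantissa_bits + 1)     # +1 accounts for the implicit leading bit
    return math.ldexp(round(mant * scale) / scale, exp)  # round() is round-half-to-even

# Explicit mantissa bits per format
FORMATS = {"FP32": 23, "BF16": 7, "FP8 (E4M3)": 3}

values = [0.1, 0.333, 1.7, 3.14159, 42.42]
for name, bits in FORMATS.items():
    worst = max(abs(round_mantissa(v, bits) - v) / abs(v) for v in values)
    print(f"{name:<11} worst relative error: {worst:.2e}")
```

Each format's relative rounding error stays within 2^-(m+1) for m mantissa bits, so dropping from BF16 (7 bits) to FP8 E4M3 (3 bits) loosens that bound by a factor of 16.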

How Does This Match Intel’s Strategy?

Intel has been advocating quantization as a critical method since the 2019 launch of its 2nd Generation Intel® Xeon® Scalable processors, when it was available only for speeding up inference. Support extended to training with the 4th generation and continues in the latest Intel Xeon 6.

But as mentioned at the beginning, the only constant in the AI landscape has been unpredictability. This is why Intel has worked on various fronts to enable AI tools to be hosted everywhere, from edge to cloud, ensuring maximum flexibility to reduce risk and maximize ROI for enterprises. But let the actions speak for themselves!

Below are the best-known methods for hosting DeepSeek locally, so that no data is shared externally and privacy is protected: on client platforms such as AI PCs with Intel® Core™ Ultra desktop processors (Series 2), using the smaller distilled versions of the model, and on Intel Xeon servers paired with Intel® Gaudi® AI accelerators for the 671B version. The examples are not limited to DeepSeek, and there are many cases where Xeon alone might represent the best performance/value option for hosting models. Below is also a reference on how to test additional models using only Intel Xeon CPUs for an initial evaluation.

Run DeepSeek using CPU, NPU and Arc GPU

Run DeepSeek using Intel Gaudi 3 and Intel Xeon

Additionally, here is a technical research paper with experiments on the 671B DeepSeek-R1 conducted on Intel Gaudi 3.

Conclusion

Intel’s mission is to democratize access to leading technologies and to offer our customers a choice of deployment options for AI. DeepSeek's effort to create more efficient models that can run on advanced compute solutions paves the way for a future where AI is more accessible, sustainable, and versatile. As these technologies continue to evolve, the potential for AI to transform industries and improve lives is boundless, heralding a future where intelligent systems are seamlessly integrated into the fabric of our daily lives.

[2] https://github.com/deepseek-ai/DeepSeek-V3

[3] https://huggingface.co/deepseek-ai/DeepSeek-R1-Distill-Qwen-1.5B

Has GenAI Hit the Chasm? Unpacking Microsoft's News

Over the weekend, Satya Nadella announced that the economic opportunity from Gen AI is not manifesting as expected. In true AI-era style, Microsoft followed up at warp speed, announcing the cancellation of hundreds of megawatts of future compute capacity by terminating leases for planned AI data center expansion. Speculation on the street is that this is reflective of their OpenAI compute commitments and a rapidly evolving market. This update will likely send shockwaves across the infrastructure community this week as we all grapple with what this will mean for broader infrastructure demand, what it will represent in terms of enterprise adoption curves of generative AI, and how AI innovations will be fueled moving forward. The news reminded me of Geoffrey Moore, Clayton Christensen, and an insightful conversation I had last week. Let's unpack.

What is driving this change? Satya signaled that this is about the forecasted economic return for generative AI. But what changed in the forecasts from the dizzying expectations of... three months ago? To answer this question, I posit two possible explanations: either the enterprise demand that large model providers were seeking has not materialized, or the introduction of new, efficient models has re-shaped the GenAI cost curve.

We were all stunned by the introduction of DeepSeek and the efficiency the model delivered vs. OpenAI. While we discussed the tech approach, what has perhaps been discussed less is how DeepSeek is a classic Christensenian disruptive innovation from an economic standpoint, delivering a million tokens for somewhere between $0.14 and $0.55, depending on whether the input is new or previously used (cached). This compares to a cost of $15-60 with ChatGPT prior to DeepSeek's introduction, or $7.50 today... for similar performance. While this is a boon for anyone seeking an affordable model, it's also a sign that OpenAI's revenue forecasts just hit an iceberg, and that forecast revenue for new infrastructure may not materialize. The generative AI model market is complex and innovating quickly, and this is an oversimplified snapshot of but two models in a vast sea of alternatives. We will be unpacking some of these diverse models in the days ahead, and why they are needed, but for today, one is left to wonder whether this is an OpenAI problem or a broader generative AI problem. Microsoft signaled the latter, which takes us to unpacking enterprise demand.
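To put the quoted prices in proportion, the sketch below computes how many times more today's ChatGPT price is than DeepSeek's, plus the bill for a hypothetical workload of 2 billion tokens a month. It uses only the per-million-token figures quoted above, which mix cached and uncached input pricing, so the ratios are rough.

```python
# Per-million-token prices quoted above (USD)
DEEPSEEK_CACHED, DEEPSEEK_UNCACHED = 0.14, 0.55
CHATGPT_TODAY = 7.50

def price_ratio(incumbent: float, challenger: float) -> float:
    """How many times more the incumbent charges per million tokens."""
    return incumbent / challenger

def monthly_bill(price_per_million: float, tokens_per_month: int) -> float:
    """Cost in USD for a month of usage at a per-million-token price."""
    return price_per_million * tokens_per_month / 1_000_000

print(f"ChatGPT today costs {price_ratio(CHATGPT_TODAY, DEEPSEEK_UNCACHED):.0f}x to "
      f"{price_ratio(CHATGPT_TODAY, DEEPSEEK_CACHED):.0f}x more than DeepSeek")

# Hypothetical workload: 2 billion tokens per month
for name, price in [("DeepSeek (uncached)", DEEPSEEK_UNCACHED), ("ChatGPT today", CHATGPT_TODAY)]:
    print(f"{name}: ${monthly_bill(price, 2_000_000_000):,.0f} per month")
```

Against today's $7.50, the quoted DeepSeek range is roughly 14x to 54x cheaper.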

Generative AI may have reached its chasm moment. I am, of course, referring to Geoffrey Moore's foundational principle of disruptive innovation. Time and again, technology is billed in heady brilliance for the change it will bring; then we reach the chasm, where people lose faith in the vision, and we climb up the other side (most of the time) with practical adoption. This can explain where we have been with technologies like virtualization and cloud computing, where we currently are with 5G, which we will be covering next week at MWC, and the change we are seeing in real time with Gen AI.

The truth is Gen AI is a strikingly impactful tool, but at least in the near term, its impact is irregularly felt across job functions. Marketing teams and customer service departments are rapidly evolving today with the power of this technology; operating theaters and factory floors have already felt AI's impact for years from previous iterations of technology. Think image recognition, natural language processing, recommendation engines. These technologies feel like old hat to us today, but are, indeed, enjoying massive deployment as accepted tech.

This phenomenon played out in conversations I had last week at Cloud Field Day, as it became very apparent that the value of Gen AI integration into cloud monitoring tools was seen as anemic due to its focus on admin console chat improvement. Practitioner delegates wanted deeper integration into actual monitoring—leveraging traditional ML—while vendors seemed hard-pressed to justify valuations in the Gen AI hype cycle. The GenAI chasm was palpable in that room as an example of unequal value proposition across job functions.

So, where does this leave us? It’s time to unpack practical application targets, explore ethical considerations, trust and safety, and examine Gen AI's role in text, code generation, and agentic workloads. Microsoft did confirm that its $80 billion in infrastructure spending this year remains on track, meaning that, at least for now, data center spend has slowed in forecasts but not cratered. While we have tempered our enthusiasm about the broad-scale impact of GenAI, given that its core value limits how widely it integrates across job functions, we still see mass disruption in knowledge-based work from the application of GenAI tools as powerful accelerators. It's only February! Buckle up. 2025 is going to be a fascinating year in tech.

Flexibility at the Edge: Pragmatic Semiconductor’s Bold Approach

In this podcast, Pragmatic Semi CEO David Moore shares how flexible, sustainable ICs are unlocking new edge AI and IoT applications—powered by a low-carbon, high-volume fab model.

How Verge.io is Rethinking Compute, Storage & AI Readiness

Tune in to our latest episode of In the Arena to discover how Verge.io’s unified infrastructure platform simplifies IT management, boosts efficiency, & prepares data centers for the AI-driven future.

Exploring AIOps with Selector AI

Cloud Field Day 22 is digging deep into network observability, and Selector AI took the stage to share how their innovation is particularly instructive in demonstrating AI’s impact on IT infrastructure management. Deba Mohanty, Sachin Natu, and John Heintz were on hand to introduce Selector and walk us through how Selector’s platform is delivering new capability to network observability.

Deba started with an introduction to where Selector AI fits within the world of infrastructure management. Selector software sits above network data and captures insights through event correlation and root cause analysis, network language models, and digital twin technologies. TechArena previewed the concept of their network language model in our preview blog, as it reframes what network administrators can do to simplify oversight. Deba described the complexity cleanly: admins today move between dozens of network, infrastructure, and application dashboards to root-cause issues and glean insights about the state of the enterprise environment. He stated that Selector is obsessed with simplifying this state of affairs with its tool suite.

What’s the outcome? Deba shared that customers across the telco, retail, broadcast, and finance sectors are targeting Selector AI tools for data center, network backbone, and edge environments. They’ve built a Slack-native interface, optimized for mobile delivery of alerts and prompts for network management. John walked us through a demo of Selector in action. He showed a Selector AI “smart ticket,” explaining that the ticket gives an admin more insight, with integrated action buttons for simple execution of actions. Admins can choose to take action or look for more information through an AI chat app. From the chat app, admins can view color-coded topology maps for a simplified view of network status. In the example, John showed two telco services, Verizon and AT&T, with degraded service (orange) within the Verizon service. The interface provides easy click-down for even more context about a particular service or network function. The dashboard is created dynamically from data relevant to the ticket, with further drill-down showcasing broader data that may be useful to the admin. Selector clarified that this real-time work relies on deep data analytics rather than Gen AI, as there is no benefit to having an LLM digest raw network data. On top of this, Selector is layering a RAG implementation that uses an LLM of the customer’s choice to drive recommendations.

What’s the TechArena take? Integration of Gen AI into observability to help drive better and more efficient actions makes excellent sense. However, the large providers have announced that they’re working on this capability across the full network management realm. We’ve covered this on TechArena in the past, for example, with Juniper’s Mist AI solution, and Marvis/Marvis Minis for wired and wireless network management. In the observability space, NetScout, DataDog, Dynatrace, and others have announced AI integration of some sort. So where does this leave a relatively new entrant like Selector AI? In the world of RAG model implementation, we expect that Selector may become an acquisition target for larger observability players seeking differentiated IP for maximum market impact. Regardless of whether Selector achieves market traction organically or by attracting M&A interest, this is a trend for network administrators to have on their radar, and Selector is a company that could deliver differentiated capability.

Fortinet: Active Security for a Rapidly Evolving Threat Landscape

With the cost of a single corporate security breach reaching $4.88 million, a 10% rise in just one year, and the total cost of cybercrime expected to scale to $10.5 trillion globally by 2030, it’s no surprise that security remains IT executives’ number one priority. AI is opening the door to more sophisticated attacks by a broader range of bad actors, and anyone – from a guy living in his parents' basement to a nation state – can utilize this nefarious technology to annoy, disrupt, and steal from organizations and individuals. Any discussion on cloud is not complete without looking into the latest in cloud security solutions, and luckily for CFD22 delegates, Fortinet was on hand to give us a comprehensive update.

Fortinet is a leader in security solution delivery, with a broad suite of products in the marketplace for cloud-to-edge protection. The team at CFD22 walked us through the Fortinet Security portfolio with an emphasis on active threat detection, a key capability that automatically detects early signs of attacks, combines multiple low-severity signals into one priority alert, and reduces SecOps overhead with simplified alert communications and enhanced recommendations for action. The advancement of the comprehensive platform echoed a common theme of the event: integration of advanced analytics and AI to improve cloud management of all types.
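To make the idea of combining low-severity signals into one priority alert concrete, here is a hypothetical correlation sketch. It is not Fortinet's implementation: the `Signal` fields, the severity scale, the 5-minute window, and the threshold are all assumptions. It groups signals by entity, slides a time window over each entity's signals, and raises one consolidated alert when the summed severity in a window reaches the threshold.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class Signal:
    entity: str        # host, container, or identity the signal refers to
    timestamp: float   # seconds
    severity: int      # hypothetical scale: 1 (low) to 5 (critical)
    description: str

@dataclass
class Alert:
    entity: str
    score: int
    evidence: list

def correlate(signals, window_s=300.0, threshold=6):
    """Emit at most one alert per entity when the summed severity of its
    signals within any window of window_s seconds reaches threshold."""
    by_entity = defaultdict(list)
    for s in signals:
        by_entity[s.entity].append(s)

    alerts = []
    for entity, items in by_entity.items():
        items.sort(key=lambda s: s.timestamp)
        start = 0
        for end in range(len(items)):
            # Advance the window start until the window spans at most window_s seconds
            while items[end].timestamp - items[start].timestamp > window_s:
                start += 1
            window = items[start:end + 1]
            score = sum(s.severity for s in window)
            if score >= threshold:
                alerts.append(Alert(entity, score, [s.description for s in window]))
                break  # one consolidated alert per entity
    return alerts

signals = [
    Signal("k8s-node-3", 0, 2, "unexpected outbound connection"),
    Signal("web-01", 30, 2, "failed login"),
    Signal("k8s-node-3", 60, 2, "new privileged process"),
    Signal("k8s-node-3", 120, 2, "CPU spike"),
]
for alert in correlate(signals):
    print(alert.entity, alert.score, alert.evidence)
```

Here the three low-severity signals on `k8s-node-3` add up to one alert, while the single failed login on `web-01` stays below the threshold.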

How does Fortinet’s solution work? They’ve developed something called Polygraph technology that ingests data from the environment with or without agents, analyzes data based on your cloud topology, and drives action. This works across network, platform, and application security, providing a broad scope of service delivery under one hood.

They’ve delivered this with a heritage of technology innovation, with over 1,000 patents in their portfolio. They’ve built the FortiGuard AI-Powered Security Services platform that is analyzing trillions of events across the globe to continuously update threat detection for their >800,000 lifetime customers. Who does Fortinet work with? Over 300 partners to ensure seamless operation across public cloud services and private cloud stacks. This impressive solution has led Fortinet to be listed in a stunning 10 Gartner Magic Quadrants across security use cases.

It's notable that, since I heard from Fortinet at their last CFD session, they have upped the value of AI integration into the platform. This was demonstrated by Julian Petersohn and his teammates as they walked us through the powerful capabilities of the platform. Julian is a previous guest on the TechArena podcast, and always delivers a lively demo, this time playing the role of a hacker injecting a Java exploit into a cloud server environment. He quickly obtained root control of a Kubernetes container and started to spread control within the environment. Because this system resides in AWS, Julian was able to gain system identity information, seeing that the sys admin had left access open on this particular system - unfortunately an all-too-common occurrence. Julian explained that attackers who now have this control could use the compute capacity for their own purposes for free, sell access, or utilize compute cycles for crypto mining… all at the expense of the company charged for the service. He chose a crypto workload and was off making money.

Enter Forti-CNAPP, or cloud native application protection platform. Julian ceded the floor to his Forti-teammates, who walked us through the paces of the solution to this crypto hacker. Forti-CNAPP constantly analyzes cloud activity, configurations, agentless and agent-based scan results, and the code itself, looking for anomalies in the data. The team walked us through a step-by-step process in which Forti-CNAPP identified the anomaly on the Kubernetes cluster and pulled up the exact code Julian ran, including the crypto miner. An alert was issued to engage both cloud security and network security. As we progressed into action, the team showed how the solution taps an artificial neural network to identify specific code blocks that were threats.

What’s the TechArena take? I’ve always been impressed with Fortinet, dating back to my days in industry when Fortinet was an early mover in confidential computing. The continuous advancement of Fortinet solutions demonstrates their commitment to investment in innovation. Their solutions provide a flexible foundation for customers with a single platform with broad capabilities for modern SecOps requirements, and should be on a shortlist of every organization’s security solution evaluations.

Catchpoint’s Powerful Approach to Internet Monitoring and Security

With monitoring as the hot topic at Cloud Field Day 22, we kicked off the first afternoon with a fantastic presentation from Catchpoint. Catchpoint has carved a leadership position in internet monitoring with 16 years of innovation represented in the solutions they bring to market today. Co-founder Medhi Daoudi and the team were on hand to walk through the latest updates of the solutions portfolio and frame the importance of high-performance internet monitoring for large scale organizations.

Who uses Catchpoint? A lot of enterprises is the short answer, but key industries are the who’s who of financial services, retail, and digital service providers. Based on this broad adoption, I was keen to learn more about what differentiated the Catchpoint solution from traditional alternatives.

Medhi started with the fundamental shift from infrastructure-centric to user- and services-centric monitoring in the internet arena. He continued with a focus on how shifting to a proactive approach to monitoring helps improve customer engagement, address brand risk, and reduce organizational risk on multiple levels. Why and how? Catchpoint takes a different approach than traditional solutions by simulating user behavior to much more quickly filter real events from noise, providing a speedier path to real-time insight. Medhi referred to that as focusing on solving for the unknown unknowns, and explained that by branch predicting user engagement trends, Catchpoint can literally hand businesses insights faster, delivering more time for mitigation of risks and working on root solutions to navigate around pending challenges for customer engagement.

How do they do it? It starts with understanding the complex nature of organizational sites, both on a corporate domain and across the internet. The Catchpoint IPM Platform builds upon a framework of global collectors, a slew of telemetry data, and analytics engines. Global collector agents measure everywhere from the cloud to backbone networks, wired and wireless edge, and edge points across the world. Medhi claimed that this is the largest agent network of over 2,800 agent types running on over 15,000 servers across the globe.

The telemetry layer pulls data from these agents across a core set of attributes, including synthetics, real user monitoring (RUM), endpoint data, real-time BGP, web page performance, and application tracing. What happens with this data? Imagine smart anomaly detection, multi-dimensional query, AI-assisted analysis, and more. All of this insight is visualized in various stack maps that customers can use to drive action. Imagine Internet Sonar measuring service outages across the globe… correlating internal network issues with broader environmental issues.

What’s the TechArena take? Catchpoint is an incredibly powerful tool for large organizations to deliver predictive insight into a myriad of issues, both internal and external, that may affect both an IT environment and customer engagement. The value proposition was clear, and the Catchpoint team backed up their articulation of capabilities with demonstrations. If I were responsible for a large brand’s IT or web ops, Catchpoint would be on my shortlist of required tools.

VAST Data Unveils Game-Changing Tools for AI and Real-Time Data

VAST Data, the company that is busy revolutionizing data platform management, added new tools to its toolbox today that should excite its growing customer base.

As a reminder, VAST Data has been delivering solutions for the AI market, providing data platform management that integrates resources from across environments and streamlines high performance data utilization for AI application advantage.

This delivery has earned VAST traction with leading cloud providers like CoreWeave and X AI, as well as enterprises like ServiceNow.

So what’s new? The first big delivery is VAST’s new block storage functionality, available next month. To understand why this is a big deal, we need to take a step back and think about data being stored in blocks, files, streams, tables and objects, with block data a very standard format of traditional data management in large enterprises. The integration of native block data support will open VAST as a streamlined solution for many organizations with large block data stores – something that may have been a limitation of VAST deployment for some customers.

What really caught our attention today was the delivery of a new solution called Event Broker, a groundbreaking architectural shift that brings event streaming, analytics, and AI together in a unified, high-performance data platform. Organizations have been frustrated with the immense tuning required by the traditional Kafka solutions used in this space, often with less than perfect results.

For the past 20 years, event streaming has been hampered by rigid architectures, operational complexity, and inefficiencies that limit fidelity, scale and analytical potential. With the Event Broker, VAST is changing that. Kafka API-compatible, the VAST Event Broker is a real-time event streaming engine eliminating the need for Kafka clusters and unlocking a new era of scalability, performance, and simplicity. Integration of the Event Broker into VAST’s existing Data Engine provides customers an easy onramp for integration, and delivery of streaming data as SQL tables offers customers a structured approach for real-time analytics.

They’ve added major performance enhancements with low-level tunables, addressing a major challenge with Kafka implementation, and they’ve lowered TCO with built-in data reduction. The integration into the VAST Data admin platform just makes it easier for organizations to spend less time messing with tuning and more time gaining insights from event related data streams.

Who is likely to be interested in the streaming solution? We expect the financial services industry to engage rapidly and put Event Broker through its paces. Retailers handling real-time sales data, any company relying on large deployments of IoT sensors, healthcare operators seeking better control of real-time patient data, and really anyone grappling with gaining insight from real-time data streams will be interested in this new capability.

We expect to hear soon from customers on adoption of both Event Broker and native block storage support, as VAST is very good at turning technical capability into customer success. Our antennae are up to learn more in the months ahead as these new capabilities deploy across VAST Data platform environments.

Top Trends I'm Tracking at Cloud Field Day 22

It's time for Cloud Field Day 22, the time-honored tradition of vendors pitching their vision, their differentiation, and their market traction to Field Day delegates and the online world. I'm honored to be a delegate of this foundational industry program run by the folks at Gestalt IT, and this edition offers a fantastic lineup of cloud innovators, including Catchpoint, Fortinet, HYCU, Infoblox, and Selector AI. I'll be publishing my takeaways all week, but to set the scene, I thought I'd share the top four trends that I'm tracking heading into the event.

1) AI is Transforming Cloud Requirements...and Reshaping Cloud Oversight

While this is not AI Field Day, I'm starting with this disruptive technological force from two angles. Much of 2024 was spent discussing the changing requirements of cloud to fuel both AI training and inference. This change reshapes organizations’ views on workload placement across public clouds and on-prem, driving discussions of repatriation. However, it also introduces new challenges for data center managers on just what infrastructure to utilize to deliver the capabilities required to handle these workloads.

What is often overlooked in this narrative is how AI integration into cloud management tools is reshaping what is possible for IT administrators, and this is why I'm so excited to hear from Selector AI, a leading provider of Network AIOps. Selector just closed a $33 million series B funding round, which will help them bring their industry-first network language model to market. What is a network language model? Imagine using an LLM interface to manage a network in plain language. Further imagine that this model provides recommendations for how to implement improvements to the network. The value proposition of this technology is clear to anyone who has managed a network or knows someone who's managed a network, and it provides a clear understanding of how LLMs will change IT operations. Watch this space for more.

But wait, there's more on the AI infusion front. Network observability provides real-time monitoring of traffic to provide oversight of operations, gain insight into pending challenges, and actively mitigate issues. Enter Catchpoint, a leader in observability, delivering tools for both network admins and security teams to monitor network traffic in real time with an innovative approach, the Internet Stack Map, that provides a view across the entire internet stack and application stack. Catchpoint is leveraging AI technology to map real-time network behaviors to broader test behaviors to accelerate root cause analysis. I can't wait to hear about real-world results of putting this new capability to the test in live environments.

2) Threats are Evolving in the AI Era

We have tools that put malware in the hands of anyone with an LLM prompt today, so yes, the world of IT security has shifted since the dawn of generative AI. With threats expanding and getting more sophisticated, a check-in with Fortinet is a highlight of the week. Fortinet is known for their end-to-end security, so much so that they name everything "Forti"-something. For example, see their recently announced FortiAppSec Cloud. This tool, launched late last year, offers a unified platform for web application security, and is just one example of the suite of powerful solutions from the company. One key attribute I'm keen to learn about is their cloud-to-cloud security, given the broad trend of workload repatriation and multi-cloud adoption that characterizes enterprise IT today.

3) We're Still Chasing a Single Pane of Glass

A single console to rule them all is something the industry has long promised, but gaps in management integration mean that reality is often much less single-paned than what we have collectively envisioned. The question we may want to ask is: will we reach singularity or a single pane of glass first? In fact, there's progress in the world of management integration, brought to us by Infoblox, a leading provider of Universal DDI solutions. Infoblox claims to break through the silos of CloudOps, NetOps, and SecOps with their new Universal DDI Management offering, delivering the ability to manage across multi-cloud environments with ease. In reading up about Infoblox offerings, I have to admit I'm really hoping for a demo this week of the new solution, so we can see the team put it through its paces.

4) Data Really Matters, and so Does Cloud Storage Management

Data has become newly cool in the last couple of years as organizations are finding new ways to, finally, extract economic return from the wealth of data residing inside corporations. The question is, how can IT operators more elegantly manage data and the delivery of data streams to new AI-infused models at scale? Enter HYCU, a leader in data management across multi-cloud and on-prem resources. HYCU was recently recognized as a top leader in CRN's Cloud 100 list for their cloud native platform that delivers features such as automated backup and recovery, data migration, and data estate discovery and utilization. Stay tuned for details on HYCU's solutions and how they'll help shape 2025 data deployments.

With two full days of sessions and discussions at CFD22, I am hoping to gain new insights into these trends this week, which I will share in my TechArena blog series. Watch this space for more as the proceedings commence.

Infoblox Heralds a New Era for DDI

In my CFD22 preview blog, I introduced Infoblox as a company pursuing a single pane of glass for their customers. CFD22 kicked off with Infoblox Chief Product Officer Mukesh Gupta walking us through what he called a reinvention of critical network services.

Some context on why the focus on the network: in a multi-cloud world, data and application movement is a critical element of IT oversight, not just from a network admin perspective, but also from the perspective of the SecOps team, and even cloud infrastructure management. Mukesh has a deep networking background, with stops including Palo Alto Networks, Illumio and Juniper Networks. At Infoblox, Mukesh is responsible for guiding the product strategy and delivery into the market.

Infoblox is all about DDI: DNS, DHCP and IPAM. Network people, after all, have a unique fondness for acronyms. To break that down for those who don’t live in this space: DNS is the Domain Name System, DHCP is the Dynamic Host Configuration Protocol, and IPAM is IP Address Management. Infoblox has been working in this space since it launched a DNS appliance in 2000, and it claims over 13,000 enterprise customers, including 75% of the Fortune 500.

Even for a company with a quarter century of development behind it, the current moment is demanding: multi-cloud management and the move away from VMware in on-prem environments have together placed strain on DDI oversight. Add security breaches to the mix, and improved DDI is becoming critical for many organizations. Every cloud provider has its own DNS solution, and this mix of solutions adds complexity for enterprises, something we’ve covered before as a barrier to moving workloads across clouds and a driver of cloud provider lock-in. Other challenges Mukesh introduced included a rise in human error, increased costs, IP conflicts, ransomware threats, and zombie assets across clouds.

So how does Infoblox’s solution help with these challenges? As we referenced in the preview blog, Infoblox just released their unified platform for networking and security in a hybrid enterprise. This unified platform encapsulates Network Universal DDI, Security DNS DR, and Comprehensive Asset Visibility, all wrapped in cohesive management. The solution extends across all clouds, on-prem data centers, and branch offices and edge devices down to user systems. The major update is focused on that universal DNS management, chasing the single pane of glass for IT administrators. It integrates IP address management across all these domains, eliminating IP address conflicts across clouds, and gives much sharper visibility into subnets across public clouds.
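To make the cross-cloud IP conflict problem concrete, here is a minimal sketch of the kind of overlap check a unified IPAM layer has to perform. It uses Python’s standard `ipaddress` module; the location labels and CIDR ranges are hypothetical, and this is an illustration rather than Infoblox’s implementation.

```python
import ipaddress
from itertools import combinations


def find_overlaps(allocations):
    """Return pairs of (location, subnet) whose address ranges overlap.

    `allocations` maps a hypothetical location label to a list of CIDR strings.
    """
    # Flatten into (location, network) tuples so subnets from every
    # environment can be compared against each other.
    entries = [
        (location, ipaddress.ip_network(cidr))
        for location, cidrs in allocations.items()
        for cidr in cidrs
    ]
    conflicts = []
    for (loc_a, net_a), (loc_b, net_b) in combinations(entries, 2):
        # Skip mixed IPv4/IPv6 pairs; they can never collide.
        if net_a.version == net_b.version and net_a.overlaps(net_b):
            conflicts.append(((loc_a, str(net_a)), (loc_b, str(net_b))))
    return conflicts


# Hypothetical allocations across two public clouds and an on-prem data center.
allocations = {
    "aws-vpc-prod": ["10.0.0.0/16"],
    "azure-vnet-app": ["10.0.128.0/20", "172.16.0.0/24"],
    "on-prem-dc": ["192.168.10.0/24"],
}

for a, b in find_overlaps(allocations):
    print(f"Conflict: {a[0]} {a[1]} overlaps {b[0]} {b[1]}")
```

Here the Azure subnet 10.0.128.0/20 sits inside the AWS range 10.0.0.0/16, which is exactly the kind of silent collision that surfaces when each cloud is managed in its own silo. The pairwise comparison is fine for a handful of subnets; a production system would need an indexed structure to scale.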

What has the customer response been? When asked, Mukesh clarified that many of the elements of the new solution have actually been available for the past five years, so major enterprises are viewing this solution as proven. The universal management has been very well received, with notable deployments achieved by a Fortune 5 company, as well as major airlines, since its introduction in September of last year.

Mukesh was not done. We moved on to a deep dive on DNS, where he introduced urgent challenges with Phishing/Smishing/Quishing, Command and Control, Data Exfiltration, and Prompt Injection. Let’s unpack!

We’ve all heard of phishing, but let’s introduce smishing (phishing by SMS text) and quishing (phishing by QR code). All represent threats to enterprise environments. Command and control attacks involve bad actors communicating with, and taking control of, a system within the environment for nefarious purposes. Data exfiltration is exactly what it sounds like: the unauthorized transfer of data out of the environment. Finally, prompt injection is very 2025: tricking large language models into producing harmful results within the environment. To fight all these threats, organizations need the help of DNS.

Mukesh shared some results, claiming that Infoblox blocks threats an average of 63 days earlier than the rest of the industry, with over 75% of threats detected before the first DNS query, and over 80% within a single day of the first DNS query.

What’s the TechArena take? I was impressed with the progress towards cohesive management, and more impressed with customer adoption. The delegates in the room who have administration experience in their backgrounds liked the full feature delivery across environments and support for all major cloud providers, with others, such as Cloudflare, coming soon. The time for this solution is now, given enterprise desire to migrate VMware instances on-prem and a growing reliance on multiple cloud providers, and this integration of capabilities will be welcomed by administrators seeking enterprise-class protection for this complex environment. This solution makes sense and delivers tangible value.

Exploring the Latest in IO Chiplets with Alphawave Semi

In this video from Chiplet Summit, learn about Alphawave Semi’s new AlphaCHIP1600-IO, an industry-first multi-protocol IO chiplet supporting PCIe Gen 6, CXL 3.1, and 800G Ethernet.

Why a Healthy, AI-Driven Technology Ecosystem is Essential

In today's rapidly evolving technology landscape, driven by new disruptor #1 – Artificial Intelligence (AI), a healthy technology ecosystem is crucial for fostering innovation, growth, and sustainability. For the foreseeable future, which will span years, AI will remain the leading disruptor. Like previous technology disruptors, it requires a thriving tech environment that encourages open collaboration among various stakeholders, including startups, established companies, and academic institutions to deliver adoption at optimum speed. A collaborative approach enables the sharing of knowledge, resources, and expertise, leading to the development of groundbreaking solutions that address complex global challenges.

How is a pre-AI company to survive? One approach is to dive in and engage within a strong technology ecosystem, fostering a value exchange between its participants. The value exchange can be broken down into three main pillars: product/service training, product/services innovation access, and co-marketing that drives mutual sales.

- Training ensures that all participants have the necessary skills and knowledge to mutually contribute positively, ultimately driving market growth. By investing in education and professional development, companies can cultivate a workforce proficient in the latest technologies and capable of driving future advancements. Downstream ecosystems will also thrive as the training moves from technology to solutions messaging in a clear and cohesive story line.

- Innovation access allows companies to leverage each other's technological breakthroughs and research. By sharing intellectual property and collaborating on R&D projects, organizations can accelerate the development of new products and services, ultimately benefiting the entire ecosystem. This open exchange of ideas fosters a culture of continuous improvement and adaptability, which is essential for staying competitive in a dynamic world.

- Co-marketing emphasizes the importance of joint efforts in promoting and distributing new technologies. By working together on marketing campaigns and product launches, companies can amplify their reach and impact, tapping into new markets and customer segments. This collaborative approach not only enhances brand visibility but also strengthens relationships between partners, creating a more cohesive and resilient technology ecosystem. Moreover, by pooling resources and aligning efforts, companies can bring products to market faster, reducing time to market and gaining a competitive advantage.

Getting back to disruptor #1, artificial intelligence (AI) plays a significant role in enhancing a healthy technology ecosystem. AI-driven tools and platforms enable companies to analyze vast amounts of data, identify trends, and make informed decisions more efficiently. In a collaborative ecosystem, the use of open AI technologies can significantly enhance cooperation and knowledge sharing. Open systems provide greater accessibility, flexibility, and interoperability, allowing companies to integrate AI solutions seamlessly and share advancements with their partners. In contrast, closed systems may limit collaboration and hinder the collective progress of the ecosystem.

An unhealthy technology ecosystem can be seen in environments where collaboration is stifled, and companies operate in isolation. This fragmented approach can result in duplicated efforts, wasted resources, and missed opportunities for growth. Certainly, in the emerging ecosystem of AI-driven platforms, we are already witnessing gravitational pull develop between disparate platforms. For example, closed markets led to the emergence of DeepSeek, an amazingly cheap alternative to the AI leaders of Silicon Valley. However, whether it was truly innovative or capable of fostering a healthy ecosystem remains to be seen…time will tell.

Collaboration with partners and stakeholders is essential for technology companies to thrive. By working together, companies can pool resources, share risks, and leverage strengths to develop innovative solutions at Internet speed. Ultimately, a cooperative and collaborative approach fosters a vibrant technology ecosystem. If you would like to discuss how you can participate or foster your own ecosystem motions, shoot me a note at keate@techarena.ai.

How AI-Optimized Storage is Eliminating Data Bottlenecks

Join us on Data Insights as Mark Klarzynski from PEAK:AIO explores how high-performance AI storage is driving innovation in conservation, health care, and edge computing for a sustainable future.

Unlocking the Future of Chiplet Innovation

Discover how OCP’s Open Chiplet Economy is setting hardware and software standards to drive chiplet innovation, enabling scalable, modular solutions for AI and HPC growth.

Europe Enters the AI Race with €200 Billion InvestAI Initiative

Europe laced up its running shoes and hurtled into the AI race today, announcing a €200 billion InvestAI initiative – including a €20 billion fund for AI gigafactories across the EU – during the Artificial Intelligence (AI) Action Summit in Paris.

The EU sprinted into the global AI race on the heels of a flurry of announcements, including China’s unveiling of DeepSeek, an open-source generative AI model reported to rival OpenAI's GPT models, and the U.S.'s $500 billion Stargate initiative aimed at maintaining American AI dominance. The convergence of these major initiatives sets the stage for a fierce contest among global powers to lead the future of AI and underscores the critical importance of this disruptive technology.

InvestAI – designed as a public-private partnership – aims to democratize access to AI resources, enabling not just tech giants but also startups and researchers to participate in AI development.

“AI will improve our healthcare, spur our research and innovation and boost our competitiveness,” said Commission President Ursula von der Leyen during the historic announcement. “We want AI to be a force for good and for growth. We are doing this through our own European approach – based on openness, cooperation and excellent talent. But our approach still needs to be supercharged.”

The recent launch of DeepSeek has stirred the global AI community, placing some players on their heels and sending others seeking ways to leverage the new model for market opportunity. DeepSeek features reportedly superior performance and innovative training techniques that have brought new efficiency into model development. While this has raised questions about the accuracy of reported costs and the transparency of its training process, its efficiency highlights China’s advancement in AI leadership. The Chinese government has invested heavily in AI, and companies like ByteDance, Alibaba, Tencent and more are committing billions to bolster AI capabilities both domestically and internationally.

Meanwhile, in the U.S., OpenAI’s Stargate project, a planned $500 billion investment funded by SoftBank, OpenAI, Oracle, and MGX, with Microsoft, NVIDIA, and other tech giants as technology partners, reflects America's determination to retain its AI leadership. Stargate’s focus on large language models (LLMs) and cutting-edge AI infrastructure demonstrates the U.S.'s aggressive approach to pushing LLM capability. With hyperscalers leading the charge, the U.S. is betting on its established tech ecosystem to create a geopolitical advantage on the world’s stage.

Europe’s InvestAI offers a refreshing approach. While the U.S. and China focus on strategic dominance, the EU is emphasizing openness, collaboration, sustainability, and trustworthy AI. The gigafactories funded through InvestAI, according to the announcement, will foster an environment where companies of all sizes – not just tech giants – can access large-scale computing power to develop advanced AI models. This cooperative approach reflects Europe’s broader regulatory framework, including the recently passed AI Act, which sets global standards for ethical AI development. (Also, check out TechArena's recent AI Ethics Great Debate here.)

AI Infrastructure for the Future

The EU’s investment is designed to fund four AI gigafactories specialized in training next-generation AI models. These facilities are critical for breakthroughs in fields like medicine, climate science, and biotechnology. With each gigafactory housing 100,000 AI chips, Europe’s AI infrastructure will rival similar facilities worldwide.

The European Investment Bank (EIB) will play a key role in financing these projects. The EU budget will help derisk private investments, encouraging public-private partnerships to drive AI advancements.

The Sustainable AI Approach

The InvestAI initiative aligns with the EU’s broader goals of reducing energy consumption and promoting environmental stewardship.

The gigafactories will be required to adhere to the Energy Efficiency Directive, which mandates regular disclosures of energy and water consumption, the use of renewable energy, and efficient cooling systems. This reflects Europe’s commitment to balancing technological innovation with environmental responsibility—a stark contrast to the unchecked growth of energy-hungry data centers in other regions.

Additionally, the AI Act mandates that AI developers document and report on the energy efficiency of their models. While some critics argue that these regulations may slow innovation, they ensure that AI development in Europe aligns with societal values and long-term sustainability goals.

So, what’s the TechArena take? With InvestAI, Europe is making a decisive move to shape the future of AI on its own terms. The initiative reflects a commitment to openness, collaboration, and ethical innovation, distinguishing Europe from approaches in the U.S. and China.

However, challenges remain. Balancing regulatory oversight with the need for rapid innovation will be critical. Europe must also ensure that its investments translate into tangible outcomes, fostering a thriving ecosystem of AI startups, researchers, and industry leaders.

As the AI race continues, Europe’s success will depend on its ability to leverage its unique strengths—a commitment to sustainability, a robust regulatory framework, and a collaborative approach to innovation.

DeepSeek’s AI Breakthrough: Cheaper, Faster, but at What Cost?

Chinese startup DeepSeek recently made quite the splash in tech news with their large language model (LLM) DeepSeek-R1. This open-source model is quickly catching up in capabilities to popular models, such as those from OpenAI. DeepSeek claims that DeepSeek-R1 was trained at a far lower cost than other models.

What does it all mean?

Defining Terms: What is a Model?

According to IBM, “an AI model is a program that has been trained on a set of data to recognize certain patterns and make certain decisions without human intervention.” It works by using algorithms against large amounts of data.

A large language model (LLM) is an AI model that has been trained on a huge amount of text from books, websites, and other sources to understand and generate human-like text. You’ve probably interacted with an LLM by asking it questions or giving it prompts. The LLM can respond with relevant information, write essays, have conversations, and more. It has been trained to pick up on the context and nuances of language to provide helpful and coherent answers.

IBM explains it like this: LLMs are “defined by [their] ability to autonomously make decisions or predictions, rather than simulate human intelligence."

Examples of popular LLMs include OpenAI’s GPT-3 and -4, Google’s BERT and T5, Facebook’s RoBERTa, and DeepSeek-R1.

What is a GPU?

GPUs, or graphics processing units, are hardware processing cards that were originally developed to enhance graphics for video games and virtual desktops. Someone realized that the intense, highly parallel math required to deliver stellar graphics was similar to the math data scientists used to create AI models. Running that math on GPUs sped up the systems training and hosting the models, which led to major performance improvements.

Not surprisingly, the prospect of training a model that required fewer GPUs shook up the industry! In fact, the industry was so shaken that NVIDIA lost nearly $600B in market value in a single day as investors reacted to DeepSeek’s announcement.

What's Behind the Excitement about DeepSeek?

One major reason for excitement over the new model is that DeepSeek claims to have built an open-source model that does what OpenAI’s models do, but at a fraction of the cost. To be precise, the company claimed it cost only $6 million and 2,048 GPUs to train. For comparison, the cost of GPT-4 was estimated to be between $50 and $100 million.

GPUs are one of the biggest expenses required to train models. Additionally, since everyone is racing to be part of the AI revolution, GPUs are hard to find, as vendors like NVIDIA are having a hard time keeping up with demand.

On October 7, 2022, export controls on the sale of advanced GPUs to China were put into effect. That meant DeepSeek needed to find a way to train a competitive model without access to the most powerful hardware accelerators. And they figured it out with some ingenious computer science.

Technical Achievements Unlocked

DeepSeek also caused a stir because of technical achievements that allowed the team to train their model with fewer GPUs. According to The Register, DeepSeek-R1 was fine-tuned from their V3 model that was released at the end of last year.

The team rethought how the model was trained.

- Chain of Thought (CoT) prompting: This asks the model to show the steps it goes through to answer a prompt. Showing this “reasoning” can make the model’s responses more reliable, more transparent, and easier to debug.

- Reinforcement learning: This is a machine learning technique in which the software is rewarded for actions that work toward your goal. Actions that don’t help earn little or no reward, so over time the model learns to favor the actions that do.

- Model Distillation: This is another machine learning technique. It transfers knowledge from a bigger system (the teacher model) to a smaller model (the student model). This gives the student model much of what the teacher learned without having to repeat the training investment made in the larger teacher model.
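For readers who want to see what “transferring knowledge” can mean in practice, here is a minimal sketch of the classic soft-target form of distillation: the student is penalized by how far its softened output probabilities are from the teacher’s, measured with KL divergence. This is a generic textbook formulation, not necessarily DeepSeek’s method (its distilled models may have been produced by fine-tuning smaller models on teacher-generated outputs instead), and the logits are made-up numbers.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert raw scores into probabilities; a higher temperature softens them."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p, q):
    """KL(p || q): how far the student's distribution q is from the teacher's p."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """Soft-target distillation loss between teacher and student outputs.

    The temperature**2 factor is a common convention that keeps the loss
    scale comparable across temperatures.
    """
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    return (temperature ** 2) * kl_divergence(p_teacher, p_student)


# Hypothetical scores for three candidate next tokens.
teacher = [4.0, 1.0, 0.5]
close_student = [3.8, 1.1, 0.4]  # has mostly absorbed the teacher's preferences
far_student = [0.5, 1.0, 4.0]    # prefers the token the teacher ranks last

print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

Training the student means adjusting its weights to drive this loss down, so it learns the teacher’s full ranking of answers rather than only the single correct one.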

Mind the Hype

When you’re evaluating AI models, you have to mind the hype. The most obvious place to start is the $6 million cost DeepSeek claims it took to train this model. Compared with the $100 million upper estimate for GPT-4, that is a 94% decrease!
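The headline percentage depends on which GPT-4 estimate you compare against. A quick back-of-the-envelope check, using only the figures quoted earlier in this post (DeepSeek’s claimed $6 million and the $50 to $100 million GPT-4 estimate), with costs in millions of dollars:

```python
def percent_decrease(old_cost, new_cost):
    """Percentage drop going from old_cost to new_cost."""
    return 100 * (old_cost - new_cost) / old_cost


claimed_deepseek = 6           # $M, DeepSeek's claimed training cost
gpt4_low, gpt4_high = 50, 100  # $M, the estimated GPT-4 range

print(percent_decrease(gpt4_high, claimed_deepseek))  # 94.0
print(percent_decrease(gpt4_low, claimed_deepseek))   # 88.0
```

Either way the claimed saving is dramatic, which is exactly why it deserves scrutiny.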

The independent research and analysis firm SemiAnalysis looked at the probable total cost to train this model. They don’t believe the $6M price tag, saying: “This is akin to pointing to a specific part of a bill of materials for a product and attributing it as the entire cost.”

They believe the $6M figure covers only the pre-training run, and that the company’s hardware spend has surpassed $500M over its history. On top of that, there is the cost of the teams who spent months developing and testing the new ideas and configurations for the model.

Dangers of this Model

There are a few things to be wary of if you plan to use this model. First of all, since the model was trained with distillation, it was trained at least partly on synthetic data generated by another model. Is that data correct? Probably as correct as any LLM can be.

Also remember that DeepSeek is a Chinese company. This means it is subject to China’s laws and regulations. Because of that, it won’t answer questions about the Tiananmen Square massacre or the Hong Kong pro-democracy protests, for instance.

While DeepSeek, Meta, and OpenAI all say they collect data from account information, activity on the platform, and the devices people use, DeepSeek “also collects keystroke patterns or rhythms, which can be as uniquely identifying as a fingerprint or facial recognition and used as a biometric.”

Things to Ponder…

The DeepSeek announcement was something to get excited about. A company has figured out how to train their model faster and more efficiently, making it more affordable. However, don’t get caught up in the hype. Dig into statements that seem improbable: they are probably focusing on just one part of the story. If you don’t understand the vocabulary, look up the words or ask an expert. Here is an overview of how LLMs work from a session I presented at VMworld.

Always remember that AI is just computer science, and not magic!

Inside the Living Edge Lab: Drones, AI, and Edge Innovation

Jim Blakley, of Carnegie Mellon University’s Living Edge Lab, shares insights on edge computing, AI-driven drones, private 5G, industry partnerships, and real-time innovation.

5 Top Takeaways from Day 2 of the Oregon AI Conference

Day two of the Oregon AI Conference brought together a wide range of attendees focused on the role of artificial intelligence (AI) in society. Discussions centered on the ethical implications of AI, how small-to-medium-sized businesses (SMBs) can integrate AI into their operations, and the challenges of automation. An interactive Q&A panel, featuring Mackenzie Bristow (Senior UX Designer at Home Depot), Nick Parish (Content Strategist at Work & Co), and Sebastian Chedal (CEO of Digital Agency Fountain City), set the tone by engaging the audience with real-world questions. This open format sparked a dynamic conversation about balancing AI’s efficiency with the need for human oversight and accountability.

A recurring question emerged throughout the day: How can AI systems remain both reliable and ethical as they become more deeply integrated across industries? Below are the top takeaways gleaned from the sessions and discussions, illustrating the delicate balance between innovation and responsibility.

- Beware of Bias

Financial services offer a prime example of how AI can generate both efficiencies and risks. Julia Carlson, CEO of Financial Freedom, showcased how tools like Quicken and QuickBooks automate financial planning. However, she emphasized that AI-driven platforms can exhibit biases, for instance overly favoring investments in AI-related companies. This highlighted the importance of human review and transparency in any AI-assisted system, especially when user data might be feeding a broader, often public, knowledge pool. Ultimately, while financial AI can streamline processes, organizations must maintain oversight to ensure recommendations truly serve client interests.

- Automate Tasks, Not Roles

Pedro Luraschi, Co-Founder of Hal9, explored how chatbots and AI agents can handle routine tasks, like qualifying leads, without removing the vital human element from the process. By leveraging accessible no-code tools, SMBs facing labor shortages can quickly implement AI solutions that reduce manual workloads, though Luraschi cautioned that automating entire roles risks sacrificing human judgment. Instead, AI should be introduced as an augmentation strategy, allowing teams to focus on higher-level analysis while leaving repetitive tasks to intelligent systems.

- AI and Creativity: Human Intuition Still Matters

A discussion on whether AI could create a sewing pattern from a photograph reinforced the fundamental differences between AI’s mathematical models and genuine human creativity. Nick Parish contrasted AI’s capacity to imitate human thought patterns through statistical models with the innate intuition that humans bring to creative processes. Similarly, Sebastian Chedal addressed the complexities of AI-generated music and its implications for intellectual property. While AI can recombine existing works, legal and creative gray areas persist, reaffirming that true innovation often relies on the distinctly human ability to synthesize new ideas. The day’s discussions consistently reminded attendees of how human oversight is essential not only for ethical governance but also for sustaining the spark of originality that AI cannot replicate on its own.

- Spatial-Temporal AI: Predictive Insights with the Right Data

Lindsay Richman, Founder of Innerverse AI, highlighted the power of spatial-temporal AI in predictive modeling. By combining geolocation data with time-based inputs, these models can identify trends that static data sources might miss, ranging from mapping customer behavior to analyzing disease spread. Real-world applications include enhanced navigation, personalized recommendations, and detailed business forecasting. Richman emphasized that the accuracy of these models hinges on high-quality, comprehensive data. For SMBs and personal users, the ability to harness rapid insights from multiple data streams can be transformative, but only if the foundational inputs are well-curated and reliable.

- High-Speed Robotics with Human Safety in Mind

In a lightning-round presentation, Robert Toppel, CEO and Co-Founder of Electron Robotics, demonstrated a six-axis robotic system designed to pick food at high speed to address global labor shortages. Toppel drew a memorable comparison between AI-driven robotics and a diligent sheepdog: self-sufficient in many respects, but still dependent on human guidance to steer it in the right direction. Despite its impressive capabilities, Toppel stressed that automated efficiency should never undermine human oversight and safety measures.

Balancing Innovation and Responsibility

By the end of day two, it was clear that while AI offers remarkable possibilities for efficiency and innovation, human accountability remains essential for ensuring that AI systems stay ethical, reliable, and beneficial. From financial services that need oversight to prevent biased investment strategies, to creative applications that rely on human intuition, the conference showcased both the promise and the complexity of AI. Attendees left with a renewed sense of optimism about AI’s potential in driving innovation for SMBs, but they were also reminded of the ethical frameworks and vigilant human supervision required to steer AI in a socially responsible direction. As AI continues to evolve, maintaining this balance of innovation and responsibility will remain a pivotal challenge—and opportunity—for organizations across all sectors.

Optimizing AI Workloads with Solidigm: High-Performance Storage from Core to Edge

Join Ace Stryker and Scott Shadley of Solidigm as they explore the vital role of storage in AI performance. From tackling storage bottlenecks to shifting from HDDs to SSDs, they discuss power efficiency in inference, the move to distributed compute, and TCO benefits. Learn how Solidigm’s SSD innovations support evolving AI workloads and the future of AI infrastructure.

3 Day-One Trends from the Oregon AI Conference

Organized and launched in an impressive 40 days, the inaugural Oregon AI Conference took place on February 1 and 2 in the coastal city of Newport—showcasing both the speed at which modern technology can bring people together and the depth of possibility AI holds for small and medium-sized businesses (SMBs). Hosted over a weekend, the event also featured an on-site mobile application, built in just four hours using Glide, underscoring how agile development and AI-driven innovation are fast becoming cornerstones of today’s tech ecosystem.

TechArena was among the enthusiastic participants drawn to Newport to witness firsthand how AI, once seen as a specialized tool reserved for large corporations, is now accessible and highly relevant to SMBs across sectors. From real-time demos of advanced language models to candid discussions of data privacy risks, attendees explored numerous facets of AI’s present and future. The conference highlighted practical examples—from automating repetitive tasks to democratizing analytics.

Several notable speakers, including professors, startup founders, and enterprise managers, offered compelling insights. Dr. Donna Z. Davis, a professor at the University of Oregon’s School of Journalism and Communication, and Andrew Hallberg, Co-founder of HirelyAI and Lead Program Manager for AI at Nike, underscored both the promise of AI and the caution required for its implementation. By the end of the first day, three themes had clearly emerged: a hopeful environment for SMBs, the rapid evolution of AI tools (with particular attention to DeepSeek), and AI’s capacity to foster collaboration and trust.

- A Hopeful Environment for SMB Leaders

“AI is not replacing creativity and strategy—it’s scaling them.”

– Andrew Hallberg

A recurring message throughout the conference was that individuals and small- to medium-sized businesses are poised to benefit enormously from AI. Several speakers, including Andrew Hallberg, who leads AI initiatives at Nike and is a co-founder of AI staffing firm HirelyAI, emphasized that the technology’s greatest strength lies in augmentation rather than replacement. Hallberg and others highlighted how AI tools can automate repetitive tasks, free up employees for more strategic work, and amplify human creativity in surprising ways.

Dr. Donna Z. Davis, professor at the University of Oregon’s School of Journalism and Communication, shared how she uses AI to summarize her lectures and encourages students to do the same, provided they remain vigilant about verifying accuracy. Drawing parallels with Wikipedia as a reference source, Dr. Davis reminded attendees that human oversight and critical thinking still matter in every AI-powered workflow. She drove the point home by asking ChatGPT to produce a biography of herself: the chatbot hallucinated that she had died in 2017, even though she was standing in front of the crowd speaking.

From streamlining report generation to structuring data more efficiently, many sessions stressed that real-world AI applications need not be limited to tech giants. Dr. Davis summarized it well: “there’s an AI tool for just about anything you’re trying to do.”

- The Rapid Evolution of AI Tools, with a Focus on DeepSeek

“There’s the good, the bad, and the terrifying” – Donna Z. Davis

If there was a single AI product on everyone’s mind, it was DeepSeek. Remarkably, although DeepSeek’s R1 chatbot had launched just a few weeks before the conference, countless references to it, some praising its capabilities and others highlighting potential hidden costs, filled the sessions.

In a discussion of the security implications of AI, Janet Lee Johnson of the AI Governance Group pointed out that DeepSeek’s terms of service include provisions for an advertising platform that could potentially leverage user data for marketing. Her point reinforced a broader theme among speakers: users should be wary of supplying private data to publicly available models.

Meanwhile, Andrew Hallberg described how HirelyAI is already using DeepSeek within its internal teams. Such rapid adoption of a product released so recently showed how fast the AI market is evolving, and how critical it is for businesses to stay informed.

The very fact that DeepSeek’s name surfaced in nearly every talk underscored the AI industry’s accelerating pace of innovation. Against that backdrop, helping SMB leaders build a long-term, actionable framework for thinking about AI was a hallmark of the event.

- AI as an Enabler of Collaboration and Trust

"With textual interfaces at the center of generative AI, writers become even more important creative collaborators." – Nick Parish, Director of Writing Content and Strategy, Work & Co.

Nick Parish shared a personal anecdote about building a machine learning model with TensorFlow, trained on his own writing, long before ChatGPT became mainstream. The model replicated his voice and generated endings for incomplete pieces, foreshadowing the AI-driven creative tools used today. He used this example to stress that writers and creators are more vital than ever. His closing message urged attendees to stay mindful of their AI usage, reminding them, "Your influence can determine the path any technology takes."

Throughout discussions and panels, speakers shared a common prediction: AI tools will facilitate new modes of connection between businesses and help build trust between teams and their partners.

As day one of the Oregon AI Conference came to a close, the 40-day journey of organizing the event itself stood as a testament to how quickly AI initiatives can take off—and how accessible these tools now are to smaller businesses.

In our day-two summary, we discuss how conference leaders guided participants through practical implementations, from data-privacy best practices to shaping internal AI policies. These workshops reaffirmed the day-one point that embracing AI is no longer just for Silicon Valley heavyweights—SMBs can leverage these rapidly evolving tools to stay competitive, spur innovation, and cultivate an environment where technology and human creativity flourish side by side.


The Great Debate: Navigating AI Ethics

Our own Allyson Klein moderates a powerhouse panel on AI ethics, with panelists representing Loyola University Chicago, Google, MLCommons, VAST Data and Momethesis.

Synopsys’ Multi-Die Technology Enhances Chiplet Capabilities

In this video from Chiplet Summit, Shekhar Kapoor discusses how Synopsys’ transition to a multi-die approach to chiplet development has allowed them to innovate beyond the limitations of traditional monolithic chips.

Security for the Quantum Age with Winbond

The In the Arena podcast is committed to looking ahead to future innovation, which is why I was so excited to have Winbond’s Jun Kawaguchi in the studio for an insightful conversation. Winbond has long been known as a Taiwan-based memory player, but its development of Post-Quantum Cryptography Flash has ushered in a new chapter of differentiated innovation for the firm. As we stand on the brink of the quantum computing era, the potential vulnerabilities quantum introduces to our current cryptographic systems cannot be overstated. Jun provided invaluable insights into how Winbond is pioneering strategies to safeguard our digital future.

The Quantum Threat Explained

Quantum computing promises to revolutionize industries and reframe compute capability with its unparalleled processing potential. However, this advancement comes with a caveat: the same power that enables quantum computers to solve complex problems also poses a significant threat to the cryptographic methods used to secure existing compute platforms. Public-key algorithms that currently protect our data, such as RSA and elliptic-curve cryptography, could become obsolete, leaving sensitive information exposed.

Jun emphasized that while quantum computers capable of breaking today's encryption aren't yet mainstream, the urgency to develop quantum-resistant solutions is paramount. The industry’s window to prepare is narrowing, and proactive measures are essential to stay ahead of potential threats. Winbond has recognized the gravity of this impending challenge and is at the forefront of developing quantum-resistant security solutions. Jun detailed their multi-faceted strategy, which includes:

- Research and Development: Investing heavily in R&D to explore and implement cryptographic algorithms that are resistant to quantum attacks. This involves collaborating with global experts and institutions to stay abreast of the latest advancements in quantum-safe cryptography.

- Hardware-Based Security: Leveraging Winbond’s expertise in semiconductor development, the company is integrating robust security features directly into its storage portfolio. This approach ensures that devices can handle quantum-resistant algorithms efficiently, using components that systems already require rather than esoteric add-on solutions.

- Industry Collaborations: Understanding that cybersecurity is a collective responsibility, Winbond actively participates in industry consortia and standards bodies. By contributing to the development of global standards for quantum-resistant cryptography, the firm aims to foster a unified defense against emerging threats.

The Importance of Early Adoption

Early adoption of quantum-ready security is essential to keeping data secure. Jun pointed out that data intercepted today could be stored and decrypted in the future once quantum computers become powerful enough, a concept known as "harvest now, decrypt later." This makes it imperative for organizations to adopt quantum-resistant measures urgently. The transition to quantum-resistant cryptography is not without challenges, however. Organizations that begin it today will need to navigate the following hurdles:

- Performance Overheads: Quantum-resistant security algorithms can consume more compute resources, potentially impacting system performance. Balancing security and efficiency is a delicate task that requires innovative engineering and a system-level architecture that builds in performance headroom for the additional security cycles.

- Compatibility Issues: Ensuring that new cryptographic methods are compatible with existing systems is crucial to facilitate a smooth transition. This involves extensive testing and validation to prevent disruptions.

- Awareness and Education: Raising awareness about quantum threats and educating both internal and external stakeholders is essential. Many organizations may not yet fully grasp the urgency of the situation, and spreading knowledge is a vital step toward widespread adoption of quantum-resistant technologies.

The TechArena Take

While practical quantum computing is still a few years away, Jun raised a very important concern: future quantum capability threatens the data being captured today. The time is now to secure data stores across environments in preparation for this coming technology advancement. We were intrigued to see Winbond take this bold step in advancing security capability using flash, and we look forward to learning more about the upcoming innovations promised in the interview. We think it’s the right time to engage with Winbond to learn more about its roadmap and build a strategy for organizational readiness for the coming quantum revolution. Listen to the full conversation here.
