Commvault Private Cloud Software Suite Provides Cloud Resiliency

Commvault engaged the Cloud Field Day 23 delegates today, sharing their vision for cloud data resilience with a full stack of security solutions. If you’re not familiar with the company, they have been providing security solutions since 1996, when they spun out of AT&T’s Bell Labs, and have an established reputation as a leader in the cloud security arena.

Michael Fasulo, Commvault’s senior director of product management, walked us through the stack. He started with a discussion of operational compliance, focusing on minimizing organizational attack surfaces, and of resilience for operational recovery from infrastructure failures, natural disasters, and human error.

Once operational compliance is in place, Commvault extends to address cyber resilience. This layer protects against infrastructure breaches and adds early warning systems, including cyber deception, to surface the threats constantly present within an organization’s risk landscape.

Finally, Commvault addresses recovery from successful security breaches, starting with measuring indicators of compromise and extending to cleanroom recovery in a cloud-based isolated recovery environment (IRE) that keeps compromised areas of the cloud isolated from the rest of the organization.

This suite sets Commvault apart from competitors given the end-to-end nature of their support, all fueled by Metallic AI, traditional machine learning that has been embedded in the platform for years. Michael also talked about how Commvault is resilient across operating environments, extending data protection from on-premises private cloud into public cloud instances, including deep support for software-as-a-service (SaaS) applications, and out to the edge.

The big question in my head is how GenAI is affecting this landscape. Michael explained that organizations’ greatest exposure to new GenAI-based exploits comes from not having a firm grasp of what data they have across the cloud landscape. He starts by working with customers to build a foundational view of what data is present; where it’s stored across public cloud, on-prem, and edge; and what level of threat different data represents to the organization. Commvault offers unique tools within their data compliance suite to establish this foundation, something equally useful for defending against quantum attacks.

The TechArena Take – Commvault Is a No-Brainer for Enterprise

So what’s the TechArena take? Part of the conversation today focused on the sheer number of SaaS applications present within IT organizations, with a majority of enterprises tapping 25+ solutions to run their businesses. Managing across software environments complicates IT oversight, and the security space is no different, which makes Commvault a very strong option given its end-to-end suite of core capabilities. Add the flexibility of single-pane-of-glass oversight across on-prem, public cloud, and edge operations, integration of cloud-native protection and recovery, and front-footed AI integration, and it’s clear that Commvault has a winning solution for the market. I’d like to hear more about where they are investing next, as the threat landscape is shifting fast with advances in quantum and AI threats.

VDURA Debuts V-ScaleFlow Tech, Cuts AI Storage Costs 60%

Today, VDURA announced Version 11.2 of its VDURA Data Platform. The latest version of their data storage and management platform for AI and high-performance computing (HPC) workloads introduces new capabilities that VDURA says boost performance while delivering 60% lower total cost of ownership (TCO) compared with flash-only competitors.

The release previews V-ScaleFlow, a new data flow management capability within the VDURA Data Platform that orchestrates the movement of data between hyperscale-class quad-level cell solid-state drives (QLC SSDs) and ultra-dense hard disk drives (HDDs). VDURA cites several factors that help achieve this reduction in cost with the introduction of V-ScaleFlow:

- V-Burst technology handles write-intensive AI checkpoints by absorbing data spikes and then writing sequentially to 128 terabyte (TB) QLC NVMe drives. This reduces flash requirements by more than 50% and lowers the cost per TB.

- V-ScaleFlow stores data on 30+ TB hyperscale HDDs to handle long-tail datasets and model artifacts. This use of hyperscale HDDs halves price and power consumption per petabyte versus all-flash alternatives.
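V-Burst’s core idea, absorbing bursty checkpoint writes in a fast tier and then writing them out sequentially, is the classic burst-buffer pattern. The following Python sketch is a minimal, hypothetical model of that pattern, not VDURA’s code; the `BurstBuffer` class and its byte thresholds are assumptions chosen purely for illustration.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class BurstBuffer:
    """Hypothetical burst-buffer model (illustrative only, not VDURA's V-Burst).

    Small, bursty writes land in a fast absorb tier; once enough data has
    accumulated, it is coalesced into one large sequential write to the
    capacity tier (standing in for high-capacity QLC flash).
    """
    capacity_bytes: int          # size of the fast absorb tier
    flush_threshold_bytes: int   # flush once this much data is pending
    pending: deque = field(default_factory=deque)
    pending_bytes: int = 0
    sequential_writes: list = field(default_factory=list)  # size of each flush

    def write(self, chunk: bytes) -> None:
        if len(chunk) > self.capacity_bytes:
            raise ValueError("chunk is larger than the absorb tier")
        # Flush first if this chunk would overflow the absorb tier.
        if self.pending_bytes + len(chunk) > self.capacity_bytes:
            self.flush()
        self.pending.append(chunk)
        self.pending_bytes += len(chunk)
        if self.pending_bytes >= self.flush_threshold_bytes:
            self.flush()

    def flush(self) -> None:
        if not self.pending:
            return
        # Coalesce many small writes into a single large sequential write.
        blob = b"".join(self.pending)
        self.sequential_writes.append(len(blob))
        self.pending.clear()
        self.pending_bytes = 0


if __name__ == "__main__":
    buf = BurstBuffer(capacity_bytes=1024, flush_threshold_bytes=512)
    for _ in range(10):
        buf.write(b"x" * 100)     # ten small checkpoint fragments
    buf.flush()                   # drain whatever is left at the end of the burst
    print(buf.sequential_writes)  # [600, 400]
```

Ten small writes reach the capacity tier as two large sequential ones. That matters because QLC flash handles large sequential writes far better than small random ones.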

VDURA says its ease of use also lowers operational overhead, which contributes to the 60% TCO reduction: only half of one full-time-equivalent (FTE) position is needed to manage systems from 1 to 100 PB.

Version 11.2 also includes a native Kubernetes container storage interface (CSI) plug-in that simplifies multi-tenant Kubernetes-based deployments with zero-script persistent-volume provisioning and management. In addition, it offers end-to-end encryption to protect data in transit and at rest.
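With a CSI driver installed, “zero-script” provisioning generally means a tenant only declares a PersistentVolumeClaim and the driver creates the volume on demand. The sketch builds such a claim as a Python dict using the standard Kubernetes `v1` PersistentVolumeClaim fields; the StorageClass name `vdura-example` is hypothetical, and whether the VDURA plug-in supports the `ReadWriteMany` access mode is an assumption.

```python
import json


def pvc_manifest(name: str, namespace: str, size_gib: int,
                 storage_class: str = "vdura-example") -> dict:
    """Build a PersistentVolumeClaim for dynamic provisioning by a CSI driver.

    `storage_class` is a hypothetical StorageClass name; the real one depends
    on how the CSI plug-in is installed in your cluster.
    """
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            # Assumption: the driver supports shared read-write access across pods.
            "accessModes": ["ReadWriteMany"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": f"{size_gib}Gi"}},
        },
    }


if __name__ == "__main__":
    # One claim per tenant namespace; kubectl apply -f accepts JSON as well as YAML.
    for tenant in ("team-a", "team-b"):
        print(json.dumps(pvc_manifest("training-data", tenant, 500), indent=2))
```

Multi-tenancy here comes from Kubernetes namespaces: each tenant gets its own claim, and the provisioning logic lives in the driver rather than in per-tenant scripts.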

“V11.2 delivers the speed, cloud-native simplicity, and security our customers expect—while V-ScaleFlow applies hyperscaler design principles, leveraging the same commodity SSDs and HDDs to enable efficient scaling and breakthrough economics,” says Ken Claffey, CEO of VDURA.

VDURA was previously known as Panasas before rebranding in 2024. The company has transitioned from a hardware-focused business to an AI and HPC data infrastructure software company operating on a subscription-based model.

The TechArena Take

VDURA’s announcement comes as enterprises face mounting pressure to manage escalating data volumes from AI applications and their associated costs. In our recent Data Center Infrastructure Requirements to Scale AI report, we covered how AI data centers require special design considerations due to their vastly greater compute and power requirements.

VDURA’s platform update directly addresses those challenges, and in doing so, benefits enterprises trying to balance AI adoption with controlling costs. This type of cost optimization will be crucial to keeping the expenses of AI infrastructure in check as businesses of all sizes, and budgets, look to adopt AI solutions.

Cornelis CN5000 Launches: Rewiring AI and HPC Networking at Scale

Cornelis Networks today announced the launch of its CN5000 family – a high-performance, scale-out networking solution engineered to meet the rising demands of AI and high-performance computing (HPC). Built on the company’s proprietary Omni-Path® architecture, CN5000 delivers a bold promise: up to 30% higher HPC application performance, double the message rate of current solutions, and six times faster collective communication for AI workloads.

That’s not just an incremental upgrade – it’s a direct shot across the bow at InfiniBand and Ethernet in the race to support massive, compute-intensive deployments.

Cornelis also claims 35% lower latency and significant speedups in real-world workloads like Ansys Fluent, seismic simulation, and large language model (LLM) training.

Omni-Path Advantage

At the heart of CN5000 is the updated Omni-Path architecture, known for its lossless, congestion-free data flow. It uses credit-based flow control and adaptive routing to keep traffic moving smoothly at scale.
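Credit-based flow control is what makes a link lossless: the sender transmits only when the receiver has advertised free buffer space. The following Python sketch models a single link in its simplest textbook form; it illustrates the general technique, not Cornelis’ implementation, and the `CreditLink` class is hypothetical.

```python
from collections import deque


class CreditLink:
    """One link with credit-based flow control (hypothetical, for illustration).

    The receiver grants one credit per free buffer slot. The sender spends a
    credit per packet and stalls at zero credits, so the receive buffer can
    never overflow and no packet has to be dropped.
    """

    def __init__(self, rx_buffer_slots: int) -> None:
        self.credits = rx_buffer_slots   # sender's count of free receiver slots
        self.rx_capacity = rx_buffer_slots
        self.rx_buffer: deque = deque()

    def try_send(self, packet) -> bool:
        if self.credits == 0:
            return False                 # back-pressure: caller holds the packet and retries
        self.credits -= 1
        self.rx_buffer.append(packet)
        assert len(self.rx_buffer) <= self.rx_capacity  # guaranteed by the credit count
        return True

    def receiver_drain(self, n: int) -> list:
        """Receiver consumes up to n packets and returns one credit per freed slot."""
        drained = [self.rx_buffer.popleft() for _ in range(min(n, len(self.rx_buffer)))]
        self.credits += len(drained)
        return drained


if __name__ == "__main__":
    link = CreditLink(rx_buffer_slots=4)
    print([link.try_send(i) for i in range(6)])  # [True, True, True, True, False, False]
    print(link.receiver_drain(2))                # [0, 1]
    print(link.try_send(4))                      # True: a returned credit lets packet 4 through
```

Compare this with a drop-based link, where a full buffer discards packets and the sender must detect the loss and retransmit; with credits, congestion shows up as a brief stall instead of lost data.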

With support for up to 500,000 endpoints, roadmap support to scale into the millions, and seamless integration with CPUs, GPUs, and accelerators from AMD, Intel, and NVIDIA, CN5000 positions itself as vendor-neutral and future-ready.

Product Family Components

CN5000 includes 400G SuperNICs, modular switches (including a 576-port Director-class option), an open-source OPX software suite, and cabling options optimized for dense, scalable deployments.

Whether it's shortening LLM training cycles or accelerating weather modeling, CN5000 is optimized for real-world throughput and scalability – not just theoretical peak speeds.

Strategic Roadmap

Cornelis isn’t stopping at 400G. The company outlined plans for future products:

CN6000 (800G) will blend Omni-Path with RoCE-enabled Ethernet.

CN7000 (1.6T) aims to redefine ultra-scale performance by integrating Ultra Ethernet Consortium standards with Cornelis’ core architecture.

That roadmap signals Cornelis’ ambition not only to compete but to help define the next era of AI and HPC networks.

The TechArena Take

So glad to see you re-enter the arena, Cornelis! The CN5000 is the clearest signal yet that the future of AI and HPC networking isn’t just about one vendor. With this delivery, Cornelis has put a spotlight on the dusty technologies being tapped today for AI and HPC clusters and provided an alternative that removes architectural friction and frees up GPUs that are sitting idle. That’s right: according to Cornelis estimates, AI GPUs sit idle for most of their cycles, which is astounding given their price tags.

If the performance claims hold up for this end-to-end network solution, a network upgrade will pay for itself through increased GPU compute delivery. We can’t wait to see how this plays out.

Google's AI Ultra Plan Promises Creative Superpowers – For a Price

Google is consolidating its most advanced AI tools into a single, top-tier subscription with the launch of Google AI Ultra, a new $249.99/month plan aimed at creatives, researchers, developers, and power users.

Announced on May 20, the subscription gives users access to Google’s most capable models, premium feature sets, and experimental tools across the Gemini ecosystem. Google is offering AI Ultra at half off the sticker price for the first month.

Positioned as a VIP pass to Google AI, the plan includes the highest usage tier across apps like Gemini, Flow (AI filmmaking), Whisk (idea exploration), and NotebookLM, plus new integrations within Gmail, Chrome, and Docs. Users also get early access to Deep Think, Gemini’s next-generation reasoning mode in 2.5 Pro, and Project Mariner, an agentic prototype capable of juggling up to 10 tasks simultaneously. Here’s what’s included for the price:

Gemini Pro Access: Use of the highest-tier Gemini features including enhanced reasoning and research support

Flow: Advanced cinematic video generation, early access to Veo 3, 1080p output, and refined camera control

Whisk Animate: Generate vivid 8-second video clips from text and image prompts

NotebookLM: Increased limits and capabilities later in 2025

Gemini in Gmail, Docs, Chrome: Deep integration of assistant features across popular Google services

Project Mariner: Agentic dashboard for managing multitasking workflows

YouTube Premium + 30TB Google Storage

Google AI Pro Also Gets a Boost

In addition to launching Ultra, Google is enhancing its existing AI Premium plan (now rebranded as Google AI Pro) with select features from the Flow and Gemini in Chrome suites – at no additional cost. The company is also offering free access to Google AI Pro for a school year to university students in the U.S., Japan, Brazil, Indonesia, and the U.K.

The TechArena Take

The announcement of Google AI Ultra marks a big bet by the search and cloud giant on subscription-based AI access – one that bundles creativity tools and assistants with priority access to its latest research-grade models. The power of the tools is unquestionable, and the Veo 3 video capabilities blew us away, advancing well beyond what had been the cutting edge set by OpenAI’s Sora.

Still, the $249.99/month price tag raises important questions about accessibility and adoption. At that rate, Google AI Ultra is clearly targeting elite individual users and enterprise budgets – leaving startups, smaller teams, and everyday creators wondering if AI excellence is quickly becoming a pay-to-play game out of their reach. While AI Ultra may appeal to creators and professionals who need bleeding-edge capabilities, it also prompts a timely industry question: Are we headed toward a future where the best AI is gated behind hyperscaler paywalls?

And there’s a bigger strategic undercurrent here. By bundling storage, YouTube Premium, agentic tooling, advanced video generation, and core productivity apps under one AI roof, Google is signaling a desire to own the entire AI experience. This move could pressure users to abandon separate subscriptions for tools like ChatGPT, Claude, Midjourney, or Perplexity in favor of an all-in-one ecosystem – one tightly controlled by a single hyperscaler.

We at TechArena regularly use multiple advanced AI tools, and our approach has been to continually try new things to find what works best. Our reviews of Google’s tools are mixed. While Gemini is among our favorite AI platforms, we’ve noticed a few areas where Google’s creative tools differ from others: the image tool initially provided only 1:1 aspect ratio images, and the AI’s handling of subtle nuance can occasionally be less intuitive. At the same time, the video capabilities, as mentioned before, are pushing that part of the landscape forward, and the integration with smarter search, something no one on the planet knows better than Google, gives us confidence in Gemini’s long-term value. Our sense is that users won’t want to be locked in to a single platform and are more likely to use whichever tool works best for the task at hand. Given Google’s discussion of an open agent platform, we wouldn’t be surprised to see the price tag revisited and reformed to reach this audience.

We’ll be watching with interest and will continue coverage of emerging AI tools. Stay tuned in the next week for our take on who’s who in the agentic AI zoo.

Start-Ups Stake Their Claim in Humanoid Robot Rush

The race to develop general-purpose humanoid robots is on: literally, in the case of a recent half-marathon in China. While humans have dreamed, schemed and engineered solutions to create mechanical beings in our own likeness for centuries, thanks to recent advancements with large language models (LLMs) and end-to-end AI systems, the finish line now appears closer than ever.

The trophy at stake is a share in what could be a $38 billion market by 2035, and up to $7 trillion by 2050. Currently, an estimated 200 to 300 companies are vying for their share of that prize, with competitors based in the U.S., Europe and Asia. The robots could further transform manufacturing and industries that to date have not seen significant effects from automation, such as hospitality and health care.

Earlier this month, we at TechArena took note of a report by Reuters on China’s efforts to win a majority of the market share. Between significant government investment and “domination” of the ecosystem that manufactures hardware components for humanoid robots, China has staked out an extremely strong position. The Chinese government sees the investment as a potential solution to the issue of population decline, helping the country face challenges like workforce shortages in industries from manufacturing to elder care.

To understand the potential effects of China’s massive investment on this growing global market, we sat down with Niv Sundaram, chief strategy officer for Machani Robotics, a U.S.-based AI and robotics start-up creating humanoid companion robots.

“China’s aggressive investment in humanoid robotics, supported by government funding and vast manufacturing capabilities, is undeniably reshaping the global landscape,” Niv says. However, she still sees plenty of runway for start-ups with a strong value proposition to thrive in the years to come.

“While China excels in hardware production and scale, and the U.S. leads in broad AI innovation, our focus on empathy companions allows us to target niche, high-impact markets such as senior care and health care, where emotional connection is paramount,” she says.

The company’s empathy-driven design philosophy has led to the development of Ria, a humanoid robot built to provide companionship and address common yet challenging situations like combating loneliness in seniors or supporting children with special needs.

“We integrate multimodal emotion recognition, such as analyzing facial expressions and speech sentiment, to enable personalized and compassionate interactions. These technologies allow Ria to recognize and respond to human emotions,” Niv explains.

In the long run, Niv sees a significant advantage to being a start-up with a clear vision in this extremely competitive market.

“Our advantages lie in our agility and specialization. By addressing specific emotional needs, we tap into underserved areas that larger players might not prioritize. Our ability to quickly adapt and form strategic partnerships with care facilities further strengthens our position,” she says.

“The humanoid robotics race isn't just about technological supremacy,” Niv adds. “It's about defining the future of human-robot relationships. As demand grows for companions that address isolation and emotional well-being, our innovations could inspire a new generation of robots that prioritize human connection, impacting both technology development and ethical standards worldwide.”

What’s the TechArena take? While large-scale players in China appear poised to dominate in scale and infrastructure – not to mention in news cycles – there’s still significant opportunity for start-ups in the race for humanoid robots. By focusing on innovation and specialized, high-value applications, “niche” players can still have a large impact on the future of robotics.

Understanding Agentic AI and Multi-Agent Systems

Imagine a world where technology doesn’t just follow instructions, but adapts, collaborates and solves problems on its own. From self-organizing delivery drones to software managing dynamic supply chains, agentic AI is setting the stage for smarter, more independent systems that are reshaping industries. But what exactly is agentic AI, and why does it matter? Let’s break it down.

Defining Our Terms

Before we explore the world of agentic AI, let’s clarify a few key terms:

Agentic: Originating from psychology, this term refers to the capacity to act independently and make purposeful decisions. When applied to AI, agentic systems exhibit autonomy and goal-driven behavior, meaning they can function without constant human oversight.

Agent systems: These are individual software entities or technologies that operate autonomously. They can gather data, make decisions and take action to fulfill specific objectives.

Multi-agent systems: A collection of agent systems working together. These systems are designed to share information, collaborate and adapt to solve complex problems collectively.

Where It All Began

The concept of “agentic” comes from psychology, specifically Albert Bandura’s social cognitive theory. It describes human qualities, such as intentionality and self-direction, or simply the ability to make choices and act independently. Over time, this idea crossed over into the field of artificial intelligence, where researchers began exploring how to design systems with similar autonomous and goal-driven behavior. Dickson Lukose lays out the heritage in this post.

This shift in thinking laid the groundwork for what’s known today as agent and multi-agent systems. These are computational systems designed to perform tasks on their own, often working together to solve big, complex problems.

Understanding Agent Systems and Multi-Agent Systems

An agent system, in simple terms, is a piece of software or technology that operates on its own with minimal human input. It can gather information, make decisions based on that data and act. Multi-agent systems organize multiple agents to collaborate and achieve a shared goal.

A good example is a fleet of autonomous robots that stock warehouse shelves. Each robot independently assesses its assigned tasks, communicates with the other robots and adapts to shifting demands, such as when new inventory arrives. They don’t wait for step-by-step instructions, and they work as a team to keep the shelves stocked.

Some key features of these systems include:

Autonomy: Agents operate without constant supervision.

Interactivity: They communicate and coordinate with each other.

Adaptability: They adjust to changes in real time.

Proactiveness: They don’t just react; they anticipate.
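One common way multi-agent systems put autonomy and interactivity into practice is a contract-net style auction: a task is announced, each agent bids based on its own state, and the best bid wins. The following sketch applies that idea to the warehouse robots; the `Robot` class, the one-dimensional aisle positions, and the backlog penalty of 10 are all hypothetical simplifications.

```python
from dataclasses import dataclass, field


@dataclass
class Robot:
    name: str
    position: int                               # location along a single aisle (simplified)
    queue: list = field(default_factory=list)   # shelves this robot has committed to stock

    def bid(self, shelf: int) -> int:
        # Autonomy: each robot prices a task from its own state,
        # here travel distance plus a penalty for work already queued.
        return abs(self.position - shelf) + 10 * len(self.queue)


def allocate(robots: list, shelves: list) -> dict:
    """Announce each restocking task, collect bids, and award it to the lowest bidder."""
    for shelf in shelves:
        winner = min(robots, key=lambda r: r.bid(shelf))  # interactivity: robots exchange bids
        winner.queue.append(shelf)
    return {r.name: list(r.queue) for r in robots}


if __name__ == "__main__":
    fleet = [Robot("r1", position=0), Robot("r2", position=50)]
    print(allocate(fleet, [5, 45, 48]))  # {'r1': [5], 'r2': [45, 48]}
    # Adaptability: new inventory arrives and is auctioned without re-planning from scratch.
    print(allocate(fleet, [2]))          # {'r1': [5, 2], 'r2': [45, 48]}
```

No central controller assigns work here; the allocation emerges from the robots’ bids, which is what lets the fleet absorb new tasks as they appear.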

How Agentic Technology Elevates Workflow Systems

One of the most exciting uses of agentic AI is in workflow and orchestration engines, which are tools businesses use to manage complex processes. Traditionally, these engines need constant monitoring and manual intervention to deal with unexpected disruptions. Integrating agentic technology into these workflows adds intelligent automation that can respond to such disruptions without waiting on a human.

Let’s imagine the company that owns the warehouse we talked about before is having a massive sale event. Orders are piling up, inventory levels are fluctuating and shipping deadlines need to be met. Here’s where agentic AI steps in. Intelligent, autonomous agents within the workflow engine can monitor warehouse stock, allocate resources and reroute deliveries in response to a storm causing delays. They adapt without needing manual adjustments, ensuring that operations continue smoothly even in challenging conditions.

This ability to adapt and self-manage doesn’t just save time; it reduces errors and increases overall efficiency. It’s like giving workflows a GPS that reroutes automatically when there’s traffic ahead.
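The storm scenario can be sketched as a simple monitoring agent that re-checks each shipment’s estimated arrival against its deadline, switches routes only when needed, and escalates to a person when no route works. Everything here, including the route names, transit times, and the `reroute` function, is hypothetical and deliberately minimal.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Route:
    name: str
    hours: float               # normal transit time
    delay_hours: float = 0.0   # updated as conditions (e.g., a storm) change

    @property
    def eta(self) -> float:
        return self.hours + self.delay_hours


def fastest_on_time(routes: List[Route], deadline_hours: float) -> Optional[Route]:
    """Return the fastest route that still meets the deadline, or None if none does."""
    on_time = [r for r in routes if r.eta <= deadline_hours]
    return min(on_time, key=lambda r: r.eta) if on_time else None


def reroute(shipments: Dict[str, str], routes: Dict[str, Route],
            deadline_hours: float) -> Dict[str, str]:
    """Re-plan every shipment after conditions change; 'ESCALATE' flags a human hand-off."""
    plan = {}
    for shipment_id, current in shipments.items():
        if routes[current].eta <= deadline_hours:
            plan[shipment_id] = current  # still on time: leave it alone
        else:
            alt = fastest_on_time(list(routes.values()), deadline_hours)
            plan[shipment_id] = alt.name if alt else "ESCALATE"
    return plan


if __name__ == "__main__":
    routes = {"coastal": Route("coastal", 10), "inland": Route("inland", 12)}
    routes["coastal"].delay_hours = 6  # a storm slows the coastal route
    print(reroute({"order-1": "coastal", "order-2": "inland"}, routes, deadline_hours=14))
    # {'order-1': 'inland', 'order-2': 'inland'}
```

The agent leaves on-time shipments untouched and hands off only the cases it cannot solve, which keeps humans in the loop for true exceptions rather than routine adjustments.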

The Road Ahead

Agentic and multi-agent systems aren’t just a glimpse into the future of AI; they are already transforming industries. Companies use these systems today to coordinate fleets of autonomous vehicles, improve healthcare delivery and streamline global supply chains.

Agentic AI is paving the way for a smarter, more adaptable future where technology works seamlessly alongside humans to solve the world’s toughest problems.

That future is closer than you think, but there is a lot of work to be done to get our current systems and data ready for agentic AI.

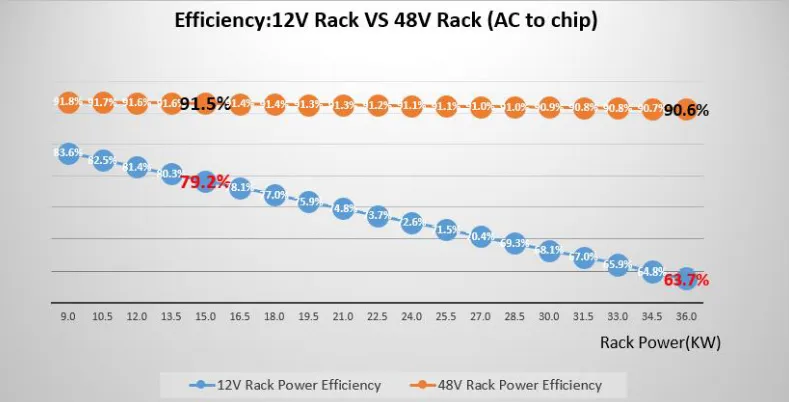

Scaling Data Center Infrastructure for AI

Industry experts from Avayla, Perpetual Intelligence and the Liquid Cooling Coalition discuss liquid cooling, thermal design, and policy blind spots as rack power for AI workloads surges past 600kW.

VAST Data Launches AI Operating System

Today, VAST Data introduced its new VAST AI Operating System, designed to power the next era of AI.

As enterprises across the globe race toward agentic computing and organizations prepare to deploy intelligent agents at scale, VAST’s unified infrastructure approach promises to radically simplify and accelerate this transition.

The launch marks a defining moment for VAST and the broader computing landscape. With AI reshaping the fabric of business and society, VAST presents an operating system built from the ground up for reasoning machines. According to VAST, this vertically integrated software stack delivers a full runtime and orchestration platform, a federated data and compute cloud, and a low-code framework to bring AI agents to life.

From Data Platform to Operating System

The journey to today’s unveiling began in 2023, when VAST introduced its Data Platform to support the largest AI builders in the world. Customers deployed tens of exabytes of infrastructure to power training and inference pipelines, storing massive unstructured datasets in the VAST DataStore and rich metadata in the VAST DataBase. But as reasoning models matured and agentic workflows gained traction, VAST recognized the need to elevate its platform beyond traditional definitions.

Data platforms, VAST argues, were designed with batch computing in mind – ill-equipped for the real-time, data-intensive demands of modern AI. Legacy systems impose limitations on latency, scalability, and architecture, creating friction that slows progress. VAST’s Disaggregated Shared Everything (DASE) architecture, by contrast, eliminates these bottlenecks by enabling massive parallelism, global scale, and seamless access to all data in a single tier.

Introducing the VAST AgentEngine

At the heart of today’s announcement is the debut of the VAST AgentEngine – a low-code, AI agent deployment and orchestration runtime that completes the company’s vision for an AI Operating System. According to VAST, AgentEngine allows organizations to:

Define and deploy intelligent agents

Pair agents with reasoning models

Equip agents with tools for data, functions, and APIs

Observe and govern agentic workflows with rich telemetry

The system provides what's needed to run massively scaled, fault-tolerant AI pipelines, including scheduling, message queues, tool servers, and support for agents with multiple personas and security credentials. Most importantly, AgentEngine reportedly brings agents to life directly within the VAST DataEngine, allowing reasoning to occur where the data lives – without latency, silos, or complex integration layers.
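To make the four AgentEngine capabilities listed above more concrete, here is a generic, hypothetical Python sketch of the same ideas: an agent paired with a model, a registry of tools, per-persona credentials, and telemetry recorded on every tool call. It does not use or describe VAST’s actual API; the `Agent` class and the toy model function are stand-ins.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, FrozenSet, List


@dataclass
class Agent:
    name: str
    model: Callable[[str], str]                              # stand-in for a reasoning model
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)
    credentials: FrozenSet[str] = frozenset()                # tools this persona may call
    telemetry: List[dict] = field(default_factory=list)      # one record per tool call

    def call_tool(self, tool: str, arg: str) -> str:
        allowed = tool in self.credentials
        start = time.perf_counter()
        result = self.tools[tool](arg) if allowed else None
        # Observability: log every attempt, including denied ones.
        self.telemetry.append({
            "agent": self.name,
            "tool": tool,
            "allowed": allowed,
            "seconds": time.perf_counter() - start,
        })
        if not allowed:
            raise PermissionError(f"{self.name} is not permitted to call {tool!r}")
        return result

    def run(self, task: str) -> str:
        # The model decides which tool to use, answering in the form "tool:argument".
        tool, _, arg = self.model(task).partition(":")
        return self.call_tool(tool, arg)


if __name__ == "__main__":
    def toy_model(task: str) -> str:
        return "search:" + task  # always chooses the search tool

    agent = Agent(
        name="research-assistant",
        model=toy_model,
        tools={"search": lambda q: f"3 documents about {q}", "delete": lambda q: "deleted"},
        credentials=frozenset({"search"}),  # this persona cannot delete anything
    )
    print(agent.run("tiered storage"))      # 3 documents about tiered storage
    try:
        agent.call_tool("delete", "dataset-42")
    except PermissionError as err:
        print(err)
    print([(t["tool"], t["allowed"]) for t in agent.telemetry])
    # [('search', True), ('delete', False)]
```

Keeping credentials and telemetry on the agent itself, rather than in each tool, means every call passes through one checkpoint that can be governed and audited.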

A Full-Stack OS for AI

The VAST AI Operating System unifies six key components:

VAST DataSpace: A global namespace for federated compute and storage

VAST DataStore: High-performance storage for unstructured data

VAST DataBase: A real-time, scalable metadata and vector database

VAST DataEngine: A distributed compute engine for orchestrating workloads

VAST InsightEngine: A data refinery that uses AI to generate context and embeddings

VAST AgentEngine: The new AI runtime for intelligent agent orchestration

Together, these components form a cohesive platform designed for real-time data capture, contextualization, and action, making it possible to transition from passive data management to intelligent decision-making systems.

Utility Agents and Developer Enablement

To accelerate adoption, VAST plans to begin releasing a series of open-source utility agents – simple but powerful reference implementations designed to showcase the possibilities of agentic computing. These will include:

A compliance agent to enforce policy and governance

A reasoning chatbot grounded in organizational data

An editor agent for rich media creation

A life sciences researcher for bioinformatics workflows

In addition, VAST Forward, a series of global developer workshops, will provide hands-on training in building on the platform.

The TechArena Take

With this launch, VAST further cements itself as foundational to the next computing era. The AI Operating System is built to democratize access to frontier AI capabilities and make agentic infrastructure a reality for enterprises of every size.

In the words of VAST CEO Renen Hallak, this isn’t just a product launch – it’s the beginning of a new paradigm. And with a clean-sheet architecture, a unified software stack, and support for over a million GPUs already deployed globally, VAST is poised to lead in the era of the Thinking Machine.

Trump’s Gamble on Global Tech Leadership

President Donald Trump’s recent Middle East tour has marked a decisive pivot in U.S. foreign policy, emphasizing strategic economic partnerships over traditional diplomatic norms. A centerpiece of this approach is a landmark agreement with the United Arab Emirates (UAE) to supply 500,000 of NVIDIA’s most advanced AI chips annually, beginning in 2025. This move signals a significant shift from the previous administration’s restrictive export controls, aiming to bolster U.S. influence in the global AI race.

The proposed deal, nearing finalization, would allow the UAE to import over a million advanced NVIDIA AI chips through 2027. Approximately 20% of these chips would be allocated to G42, a state-backed AI firm in Abu Dhabi, with the remainder distributed among U.S. companies like Microsoft and Oracle establishing data centers in the region. This agreement underscores the UAE’s ambition to become a global AI hub and reflects a broader U.S. strategy to strengthen ties with allies rather than imposing blanket export controls.

However, these efforts raise urgent questions about regional power infrastructure. To activate this scale of AI compute, the UAE has announced plans for up to 5GW of AI data center capacity – equivalent to powering millions of homes. Saudi Arabia’s net-zero AI campus in Oxagon will require an additional 1.5GW of sustained energy. According to the International Energy Agency, global electricity consumption by data centers could nearly double by 2030, surpassing 900 terawatt-hours annually – driven largely by AI demands. Without serious investments in renewables and grid modernization, these ambitions could strain energy systems across the Gulf.
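A quick back-of-the-envelope calculation puts these power figures in context. The sketch assumes an average U.S. household uses roughly 10,500 kWh of electricity per year (an approximate, assumed figure) and that data center capacity runs continuously at full load, which makes the home equivalents an upper bound.

```python
HOURS_PER_YEAR = 8_760
ASSUMED_KWH_PER_HOME_PER_YEAR = 10_500  # assumption: rough U.S. household average


def homes_equivalent(capacity_gw: float,
                     kwh_per_home_per_year: float = ASSUMED_KWH_PER_HOME_PER_YEAR) -> float:
    """Number of average homes whose combined annual use equals a load running 24/7."""
    annual_kwh = capacity_gw * 1e6 * HOURS_PER_YEAR  # GW -> kW, times hours per year
    return annual_kwh / kwh_per_home_per_year


def average_power_gw(twh_per_year: float) -> float:
    """Convert annual energy use (TWh) into an average continuous load (GW)."""
    return twh_per_year * 1e3 / HOURS_PER_YEAR       # TWh -> GWh, divided by hours


if __name__ == "__main__":
    print(f"UAE 5 GW      ~ {homes_equivalent(5.0) / 1e6:.1f} million homes")   # 4.2
    print(f"Oxagon 1.5 GW ~ {homes_equivalent(1.5) / 1e6:.2f} million homes")   # 1.25
    print(f"900 TWh/yr    ~ {average_power_gw(900):.0f} GW of continuous load")  # 103
```

Under these assumptions, the UAE’s planned 5 GW alone is on the order of 4 million homes, and 900 TWh a year corresponds to roughly 100 GW of round-the-clock load worldwide.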

Saudi Arabia’s $600 Billion AI Investment

Simultaneously, Trump secured over $600 billion in commitments from Saudi Arabia, including substantial investments in AI infrastructure. NVIDIA agreed to supply 18,000 of its latest Blackwell GPUs to Humain, a newly established AI firm backed by Saudi Arabia’s sovereign wealth fund. Additionally, AMD announced a $10 billion collaboration with Humain to develop 500 megawatts of AI computing capacity over five years. These initiatives aim to position Saudi Arabia as a leading center for AI development outside the U.S.

This push not only strengthens U.S. alliances but also signals a deeper strategic goal: to redraw the map of global tech power by creating a regional counterweight to China's rapidly expanding AI footprint. According to the Washington Post, the Biden administration had previously warned about unchecked Chinese influence in the Middle East. Now, Trump’s policy appears to take that a step further – arming Gulf nations with the tools to lead their own AI revolutions under an American umbrella.

A New Era of U.S. Export Policy

The Trump administration’s approach marks a departure from previous policies that imposed stringent export controls to limit China’s access to advanced AI technology. By rescinding these restrictions, the U.S. aims to foster innovation and strengthen alliances with trusted partners. However, this shift has raised concerns among U.S. lawmakers about the potential for AI chip smuggling and the need for safeguards to ensure that sensitive technology does not fall into adversarial hands.

Trump’s Middle East strategy reflects a broader vision to counter China’s technological ascent by empowering allies with access to advanced U.S. technology. By facilitating the development of AI infrastructure in the UAE and Saudi Arabia, the U.S. aims to create a counterbalance to China’s growing influence in the region. This approach not only enhances economic ties but also positions the U.S. as a pivotal player in the global AI landscape.

But these moves also raise a deeper question: who will shape the narratives generated by this AI infrastructure? As countries like the UAE and Saudi Arabia invest in national AI platforms, there are rising concerns about the role of AI in information control. In nations where press freedom is limited, there is a risk that state-controlled AI systems could propagate selective or misleading narratives – a form of what some analysts call “AI nationalism.” The ability to generate, curate, and disseminate digital content at scale means these platforms won't just influence global markets – they could influence global truth.

While the long-term implications of these agreements remain to be seen, they undoubtedly mark a significant shift in how the U.S. engages with its allies and addresses global technological challenges.

What’s the TechArena Take?

While President Trump’s approach in the Middle East certainly could strengthen the U.S. position in the AI race, there are inherent risks involved in relaxing export controls, particularly concerning the transfer of sensitive technologies to regional allies. It's also relevant to consider the trend in regional regulatory oversight concerning illicit financial activities. While the UAE has officially condemned terrorism, historical reports have indicated instances where financial networks within the region were implicated in supporting militant groups, including, according to some analyses, in connection with the financing of the September 11 attacks. Therefore, the introduction of such powerful AI technologies necessitates careful consideration of how effectively policies and enforcement mechanisms are evolving to prevent misuse and ensure these advanced tools align with global security norms.

The broader implications of this deal will play out in the coming years, as countries like the UAE and Saudi Arabia evolve into major players in AI, potentially reshaping the geopolitical landscape. For tech companies, this offers a unique opportunity to expand into new markets, but it also calls for vigilance to ensure that technology is not misused or diverted into unintended hands. The question remains: will this strategy ultimately benefit U.S. interests, or will it open the door to unintended consequences that undermine the integrity of American technological leadership – or even the American way of life as we know it today?

Synopsys Accelerates AI Innovation With Microsoft, NVIDIA

In a series of strategic announcements at Microsoft Build in Seattle and Computex 2025 in Taipei, Synopsys solidified its position as a critical enabler in the rapidly evolving AI and semiconductor landscape.

At Microsoft Build, Synopsys was highlighted as a launch partner for Microsoft Discovery, Microsoft's new platform aimed at transforming research and development through agentic AI. This collaboration focuses on integrating Synopsys’ AI-driven design solutions with Microsoft's platform to enhance semiconductor engineering processes. Raja Tabet, SVP of Synopsys’ engineering excellence group, emphasized the significance of this partnership, stating that combining Synopsys’ AI capabilities with Microsoft Discovery can “re-engineer chip design workflows, supercharge engineering productivity and accelerate the pace of technology innovation.”

The partnership aims to leverage AI to manage the increasing complexity of chip design, enabling engineering teams to innovate more efficiently. By incorporating agentic AI, the collaboration seeks to create more autonomous and intelligent design processes, ultimately reducing time-to-market for new semiconductor products.

Advancing AI Compute Infrastructure

At Computex 2025, Synopsys announced its participation in NVIDIA's NVLink Fusion ecosystem, a move that underscores its role in advancing AI infrastructure. NVLink Fusion is NVIDIA's initiative to create a semi-custom AI infrastructure by allowing integration of third-party CPUs and AI accelerators with NVIDIA's GPUs. Synopsys’ involvement includes providing silicon design services and solutions that facilitate this integration, enabling the scale-up and scale-out of critical AI infrastructure.

“Data centers are transforming into AI factories,” said Sassine Ghazi, Synopsys president and CEO, highlighting that Synopsys’ solutions are mission-critical enablers in this transformation. By supporting NVLink Fusion, Synopsys contributes to building an open and scalable ecosystem for next-generation AI and high-performance computing.

Enhancing EDA Solutions with NVIDIA's Blackwell Platform

Furthering its collaboration with NVIDIA, Synopsys is integrating its electronic design automation (EDA) solutions with NVIDIA's Blackwell platform. This integration aims to accelerate chip design and manufacturing processes by leveraging NVIDIA's CUDA-X libraries and Blackwell architecture. Synopsys' EDA tools, including PrimeSim, Proteus, S-Litho, Sentaurus Device, and QuantumATK, have demonstrated significant performance improvements when run on the NVIDIA B200 GPU, achieving up to 30x speedups in circuit simulations and substantial gains in other computational tasks.

Sanjay Bali, SVP of strategy and product management at Synopsys, noted that this collaboration “unlocks unprecedented performance gains” and “redefines how EDA is enabling semiconductor manufacturing innovation.” By accelerating simulation and design processes, Synopsys and NVIDIA are enabling faster development cycles and more efficient manufacturing workflows.

Strategic Implications and Industry Impact

Synopsys’ recent collaborations with Microsoft and NVIDIA reflect a strategic alignment with the industry's shift towards AI-driven design and manufacturing. By integrating AI into chip design processes and supporting scalable AI infrastructure, Synopsys is addressing the growing demand for more efficient and intelligent semiconductor solutions.

These partnerships enhance Synopsys’ product offerings and position the company as a central player in the AI and semiconductor ecosystem.

The TechArena Take

The AI boom is no longer about theoretical future states – it’s about practical infrastructure now. What Synopsys has done with Microsoft and NVIDIA demonstrates an important concept: the AI era will be won not only by those building powerful models, but by the companies that empower those models to be designed, validated, and deployed at speed.

Synopsys’ integration with Microsoft Discovery shows how AI will not only be the outcome of innovation but also the engine driving it. By embedding with NVIDIA’s NVLink Fusion and Blackwell platform, Synopsys is asserting its relevance across both the design and deployment layers of the AI stack.

For the industry, the takeaway is clear: if your tools aren’t optimized for AI, you’re already behind. Hats off to Synopsys for helping lead a redefinition of the rules of modern compute infrastructure. This isn’t just EDA innovation; it’s architectural evolution.

Special Report: Data Center Infrastructure Requirements to Scale AI

This special report explores the infrastructure innovations required to support AI-scale data centers, highlighting the escalating demands of generative AI on power, cooling, and rack architecture.

Peak:AIO Optimizes AI Infrastructure to Scale Enterprise Adoption

The tech world is evolving rapidly, and few advancements capture attention quite like the transformative shift in AI infrastructure. At the recent GTC conference, one such innovation that stood out was Peak:AIO’s approach to scaling AI technology. We caught up with Scott Shadley, director of leadership narrative and evangelist at Solidigm, and Roger Cummings, CEO and founder of Peak:AIO, to discuss Peak:AIO’s vision for more intelligent data placement and workload management.

So, what makes this shift significant? Last year, the focus was on simply throwing more hardware at the problem, with rows of GPUs and racks of servers as the go-to solution. However, as we learned from Roger, this approach is evolving. The conversation is no longer about just adding more hardware — it’s about optimizing and refining what’s already in place. This year, the GTC conference revealed a deeper, more solution-oriented approach, where innovation is driven by making the underlying technology not only simpler, but also more efficient for enterprises to adopt and scale.

One of Peak:AIO’s strategies is to focus on maximizing the efficiency of each individual node. By optimizing performance, space and energy efficiency, Peak:AIO is ensuring that each node in an AI infrastructure can deliver six times the performance while maintaining a smaller physical footprint. This efficiency is essential as AI continues to grow more complex and demanding. As Roger aptly pointed out, enterprises can’t afford to let performance bottlenecks slow down innovation, especially as the lifecycle of AI moves from data collection to training and, ultimately, to inference.

This approach doesn’t just apply to large-scale data centers. It’s also vital at the edge, where AI workloads are increasingly being processed closer to the data they need. The role of intelligent storage solutions like those Peak:AIO offers is pivotal in ensuring that data can move efficiently within these distributed environments. By creating dense, high-performance nodes in a 2U frame, Peak:AIO allows businesses to bring AI intelligence closer to the data. This is a game-changer for customers who need the ability to process more data without compromising on speed or efficiency.

One of the most exciting aspects of Peak:AIO’s forward-looking strategy is its focus on AI lifecycle optimization. AI workloads require intelligent data placement and provisioning to ensure that they are always delivered where and when they are needed most. By offering GPU-as-a-service capabilities and prioritizing performance optimization, Peak:AIO is putting businesses in a position to get more out of their existing infrastructure. The result is more cost-effective, efficient and intelligent AI solutions that are scalable as businesses grow and evolve.

So, what’s the TechArena take? As we look to the future, it’s clear that Peak:AIO is setting the stage for a new era in AI infrastructure. The company’s continued focus on solving performance bottlenecks, optimizing data placement and scaling AI infrastructure is poised to have a lasting impact on how enterprises implement and scale AI technology. For businesses seeking to push the boundaries of AI innovation, Peak:AIO’s solutions offer the intelligent infrastructure required to stay ahead in an increasingly competitive landscape.

For more information about Peak:AIO’s cutting-edge solutions, visit their website at www.peakaio.com or connect with Roger Cummings on LinkedIn. See the related video here.

TechArena Report: 2025 Storage Demands Call for a Change

As AI drives explosive data growth, next-gen SSDs deliver the speed, density, and efficiency to outpace HDDs—reshaping storage strategy for tomorrow’s data-centric data centers.

Databricks to Acquire Neon and Boost AI Agent Capabilities

Databricks, a leading analytics and AI company, announced on Wednesday its agreement to acquire cloud-based database startup Neon in a deal valued at approximately $1 billion.

This acquisition aims to enhance Databricks’ analytics platform with Neon’s serverless, open-source PostgreSQL (or “Postgres”) database offering, which provides developers, and now AI agents, with a fast, open and economical option to manage data. We at TechArena noted the purchase with interest, as it is the latest move by Databricks to expand its AI portfolio, following the acquisition of generative AI startup MosaicML in 2023.

Neon, founded in 2021, revolutionized the database industry with an innovative architecture that decouples storage from compute. This technology allows for rapid provisioning, branching, and forking of databases.
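To make the storage/compute split more concrete, here is a toy Python model of copy-on-write branching. It is a sketch under our own assumptions, not Neon's code or API: the `Timeline` class, the log sequence numbers (LSNs), and the page layout are simplified, hypothetical illustrations. A branch records only the point in its parent's history where it forks, so creating one copies no data, and later writes on either side stay isolated.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class Timeline:
    """Toy copy-on-write branch; illustrative only, not Neon's implementation."""

    name: str
    parent: Optional["Timeline"] = None
    branch_lsn: int = 0  # parent's log sequence number at the fork point
    lsn: int = field(init=False)
    # page_id -> [(lsn, data), ...] for writes made on THIS timeline only
    versions: Dict[int, List[Tuple[int, bytes]]] = field(default_factory=dict, init=False)

    def __post_init__(self) -> None:
        # A child's own log continues from the LSN where it forked.
        self.lsn = self.branch_lsn

    def write(self, page_id: int, data: bytes) -> None:
        # Writes append a new local version and never touch the parent.
        self.lsn += 1
        self.versions.setdefault(page_id, []).append((self.lsn, data))

    def read(self, page_id: int, as_of: Optional[int] = None) -> Optional[bytes]:
        as_of = self.lsn if as_of is None else as_of
        # The newest local version visible at `as_of` wins.
        for lsn, data in reversed(self.versions.get(page_id, [])):
            if lsn <= as_of:
                return data
        if self.parent is not None:
            # Otherwise read the parent as it was when this branch forked.
            return self.parent.read(page_id, as_of=self.branch_lsn)
        return None

    def branch(self, name: str) -> "Timeline":
        # Forking copies no pages; it only records where history splits.
        return Timeline(name=name, parent=self, branch_lsn=self.lsn)


main = Timeline("main")
main.write(1, b"v1")
main.write(2, b"shared")
dev = main.branch("dev")      # instant, regardless of database size
dev.write(1, b"dev-change")   # visible only on dev
main.write(1, b"v2")          # visible only on main
main.write(3, b"late")        # written after the fork, so dev never sees it

print(main.read(1), dev.read(1), dev.read(2), dev.read(3))
# b'v2' b'dev-change' b'shared' None
```

The design choice worth noticing is that `dev` stores only the one page it changed; everything else is resolved through its parent at the fork point, which is why branching stays cheap even for large databases.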

These abilities, originally intended to benefit developers, make the solution ideal for AI agents, which require speed and flexibility. In their announcement of the deal with Databricks, Neon revealed that recently more than 80% of databases on their platform were provisioned by AI agents rather than humans.

Ali Ghodsi, co-founder and CEO of Databricks, emphasized the significance of this acquisition: “The era of AI-native, agent-driven applications is reshaping what a database must do. By bringing Neon into Databricks, we're giving developers a serverless Postgres that can keep up with agentic speed, pay-as-you-go economics, and the openness of the Postgres community.”

This move aligns with Databricks’ ongoing strategy to position itself as a top service for building, testing and deploying AI models and agents. It previously acquired MosaicML, an open-source startup specializing in generative AI, with the vision that the acquisition would enable “any company to build, own, and secure best-in-class AI models while maintaining control of their data.” The MosaicML Foundation Series, known for its MPT-7B and MPT-30B models, has enabled organizations to build and train state-of-the-art models using their own data in a cost-effective manner.

What’s the TechArena take? We’ve recently covered how agentic AI is disrupting how enterprises manage their workflows, data, and infrastructure (such as in this Fireside Chat with Intel’s Lynn Comp). Databricks’ acquisition demonstrates how crucial it is for companies to embrace architectures and data management strategies that will prepare them for the increasing demands caused by the accelerated adoption of AI workflows.

We’ll be watching with interest to see how Neon is integrated into Databricks’ offerings, with more information expected to come as part of the Data + AI Summit in June.

Insights on AI and Data Management from Intercontinental Exchange

In today’s rapidly advancing tech landscape, optimizing infrastructure to handle massive data sets has become more crucial than ever. One noteworthy story emerging from NVIDIA GTC is how Intercontinental Exchange (ICE) is tackling the growing complexity of data management, AI implementation and storage optimization across its vast network of financial exchanges, data services and mortgage technologies. We sat down with Anand Pradhan, the head of the AI Center of Excellence at ICE, and Roger Corell, senior director of leadership marketing at Solidigm, to discuss how ICE is using technology to stay ahead of the curve.

ICE, known for operating the New York Stock Exchange, processes over 700 billion transactions daily. With such massive volumes of data, building and maintaining an optimized, highly redundant infrastructure is essential. It’s not just about the network and servers — the flow of data through these systems makes storage a critical focus in ICE’s technology strategy.

Anand explained that ICE handles around 10 to 12 terabytes of data every single day with nanosecond granularity. This data, crucial for tracking financial trades, must be stored and accessed at lightning speeds. With millions of trades, real-time analysis and preventing fraud are key, which means both data retrieval and storage processes must be supercharged for efficiency.
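For a sense of scale, the daily figures quoted in this article can be converted into average per-second rates. This is back-of-the-envelope arithmetic that assumes decimal terabytes and data arriving evenly; the 6.5-hour window is a hypothetical stand-in for a concentrated trading session, since real traffic is bursty and spans many markets.

```python
SECONDS_PER_HOUR = 3600
TB = 10**12  # decimal terabyte (assumption)
MB = 10**6


def avg_per_second(per_day: float, window_hours: float = 24.0) -> float:
    """Average rate if `per_day` units arrive evenly across `window_hours`."""
    return per_day / (window_hours * SECONDS_PER_HOUR)


low_mb_s = avg_per_second(10 * TB) / MB
high_mb_s = avg_per_second(12 * TB) / MB
tx_per_s = avg_per_second(700e9)
# Hypothetical: the same 12 TB squeezed into a 6.5-hour session.
burst_mb_s = avg_per_second(12 * TB, window_hours=6.5) / MB

print(f"10-12 TB/day ~ {low_mb_s:.0f}-{high_mb_s:.0f} MB/s sustained")
print(f"700B transactions/day ~ {tx_per_s / 1e6:.1f} million/s")
print(f"12 TB in 6.5 h ~ {burst_mb_s:.0f} MB/s")
```

Averages of roughly 116 to 139 MB/s understate the challenge: if the same volume lands in a shorter window, the required write rate climbs several-fold, which is why the I/O bottlenecks described next matter.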

One of the biggest challenges is the sheer volume of data and the input-output bottlenecks that arise when reading and writing to storage systems. To solve this, Anand’s team works closely with the InfraSolutions architecture team to fine-tune the storage infrastructure, ensuring that it scales easily, remains flexible and is resilient to failure. This involves rigorous testing and investment in systems that allow for fast, uninterrupted data access, while minimizing latency and maximizing performance.

But Anand’s insights extend beyond just infrastructure; he also highlighted how AI is shaping the company’s approach to data aggregation. At ICE, AI models are primarily used for processing unstructured data, such as images of real estate properties. The AI extracts valuable insights from these photos, identifying key artifacts, such as doors, kitchens or even the color of a room. With real estate photos pouring in from across the U.S., this AI-driven data processing is a massive undertaking. AI models are deployed at scale to make sense of the raw data, which is then converted into structured, usable information for the company’s real estate services.

As ICE’s AI adoption grows, so too does its need for an optimized storage solution. The storage systems of the future, Anand noted, need to accommodate millions of files — whether flat files, images or video data — and ensure they can be accessed quickly. As more and more workloads move to the AI space, fast access to large datasets and the ability to scale storage seamlessly are becoming essential. This is where storage systems that can horizontally scale, offer fast write speeds and support massive volumes of data will stand out.

Looking ahead, ICE’s evolving use of AI and machine learning is transforming its infrastructure and redefining what modern storage systems must deliver. What’s the TechArena take? With growing demands for speed, scale and real-time access, ICE’s journey offers a clear example of how AI is driving a fundamental shift across the industry. As adoption accelerates, organizations at the forefront of tech will need to rethink their approach to storage — those that do will be best positioned to gain a lasting competitive edge.

To learn more about ICE, visit www.ice.com, or find Anand and ICE on LinkedIn.

What’s Next for the CVEs Shaping Cybersecurity Compliance in 2025?

The landscape of Common Vulnerabilities and Exposures (CVEs), the unique identifiers assigned to publicly known information-security vulnerabilities in released software, is evolving in 2025. This shift is driven by the transition of CVE management from MITRE Corporation to the CVE Foundation, and also by increasing recognition of the limits of CVE-centric metrics. Scrutinizing how CVE reporting is governed helps explain why the industry is moving toward more context-aware vulnerability assessment.

For 26 years, MITRE Corporation has managed the CVE program, a US government-funded program that identifies and catalogs publicly known vulnerabilities. This centralized effort raised awareness and promoted dialogue on security risks. However, the recent formation of the CVE Foundation, spurred by uncertainty over US government funding for MITRE’s work on the program, signals a potential paradigm shift in how CVEs are governed. This transition comes as cybersecurity experts acknowledge, at least privately, that simply counting CVEs often misrepresents an organization’s true security posture. The industry is grappling with the need for more meaningful metrics that genuinely reflect risk reduction, moving beyond performative measures to achieve tangible security improvements.

3 Key Trends Shaping the Future of CVEs & Their Impact on Cybersecurity Compliance in 2025:

1: The Transition to the CVE Foundation: Implications for Governance and Transparency

The most significant development is the ongoing transition of the CVE Program's management from MITRE to the newly established CVE Foundation. This shift aims to ensure the long-term sustainability and independence of the program through a more diversified funding model. In April 2025, US government funding for MITRE's operation of the CVE program was set to expire with no transition plan; after public outcry, the government extended the contract for 11 months. The episode opens the potential for a more globally representative and resilient CVE program, less reliant on a single government funding source. The CVE Foundation (https://www.thecvefoundation.org/) was formed on April 16, 2025, and is actively working toward assuming full operational control of and responsibility for the CVE program. However, concerns have been raised within the cybersecurity community about the transparency of its formation and whether it is sufficiently independent from biased corporate self-reporting, which may affect the objectivity and reliability of CVEs in the future.

2: The Declining Relevance of Simple CVE Counts as a Security Metric

There's a growing understanding that merely counting the number of CVEs identified or remediated is a flawed and easily gamed metric for assessing an organization's security risk. Although CVE counts give the industry a quantifiable metric for budgeting, staffing, and tracking progress (KPIs), the severity and exploitability of individual CVEs vary, making simple tallies a poor measure of security improvement. Organizations setting goals to "reduce severe CVEs by 5%" might achieve them by addressing less critical issues while high-impact vulnerabilities remain unpatched. This is pushing organizations away from misleading counts and toward context-aware risk assessments.

3: The Rise of Context-Rich Vulnerability Assessment and Prioritization

The future of cybersecurity compliance is trending toward vulnerability assessment approaches that incorporate context beyond the CVE itself, including the specific environment, existing security controls, and the potential impact of exploitation. New market segments, such as Adversarial Exposure Validation, are emerging to provide this richer context; companies to watch include Horizon3.ai, BreachLock, Picus Security, Cymulate, and others. These solutions enable more effective prioritization of remediation efforts based on actual risk and show which vulnerabilities pose the most significant threat to a specific organization. Cyber threat actors actively exploit known CVEs, often those for which patches exist but have not been applied. This highlights the need to move beyond counting CVEs to actively validating exposure and prioritizing remediation based on exploitability in a specific environment.
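A minimal sketch of context-aware prioritization follows, assuming each finding carries a few hypothetical context flags: whether it appears in a known-exploited list (CISA's Known Exploited Vulnerabilities catalog is one real example of such a list), whether an exposure-validation test reached it in this environment, and whether a compensating control blocks it. The identifiers, data, and ordering rule are our own illustration, not any vendor's method.

```python
from typing import List, NamedTuple, Tuple


class Finding(NamedTuple):
    vuln_id: str           # hypothetical IDs, not real CVEs
    cvss: float            # base severity score
    known_exploited: bool  # e.g. present in a known-exploited catalog
    validated: bool        # an exposure-validation test reached it here
    mitigated: bool        # a compensating control blocks the attack path


def priority_key(f: Finding) -> Tuple[bool, bool, bool, float]:
    # Tuples compare left to right and False sorts before True, so the order
    # is: unmitigated first, then validated in this environment, then
    # known-exploited, then higher CVSS.
    return (f.mitigated, not f.validated, not f.known_exploited, -f.cvss)


findings: List[Finding] = [
    Finding("VULN-1", 9.8, known_exploited=False, validated=False, mitigated=True),
    Finding("VULN-2", 6.5, known_exploited=True, validated=True, mitigated=False),
    Finding("VULN-3", 8.8, known_exploited=True, validated=False, mitigated=False),
    Finding("VULN-4", 7.4, known_exploited=False, validated=True, mitigated=False),
]

by_context = [f.vuln_id for f in sorted(findings, key=priority_key)]
by_cvss = [f.vuln_id for f in sorted(findings, key=lambda f: -f.cvss)]
print("context-aware:", by_context)
print("CVSS only:    ", by_cvss)
```

In this contrived example the context-aware queue is the exact reverse of a CVSS-only queue: the highest-scoring finding drops to last because a control already blocks it, while a medium-severity finding that is both exploited and validated moves to the front.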

How to Prepare

Organizations should prepare for these shifts by:

- Learning about risk-based vulnerability management: Prioritize remediation efforts based on the severity, exploitability, and potential impact of vulnerabilities within their specific environment, rather than solely on the number of CVEs.

- Staying informed about the CVE Foundation's developments: Monitor the CVE Foundation's announcements and governance structures to understand how the CVE program will evolve and how this might impact compliance.

- Exploring and adopting richer vulnerability assessment methodologies: Investigate and implement tools and services that provide contextual information beyond basic CVE data, such as Adversarial Exposure Validation, to gain a more accurate understanding of their security posture.

- Re-evaluating security metrics: Move away from simplistic CVE counting as a primary security metric and instead focus on measures that genuinely reflect improvements in security and reductions in actual risk.
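To make the case against count-based goals concrete, the calculation below compares two remediation plans that each cut the severe-CVE count by the same amount. The backlog, identifiers, and risk weights are hypothetical, chosen only to show how much the risk removed can differ when the count does not.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    vuln_id: str      # hypothetical IDs, not real CVEs
    cvss: float       # base severity score, 0.0-10.0
    exploited: bool   # known to be exploited in the wild
    exposed: bool     # reachable from the internet in this environment


def risk(f: Finding) -> float:
    # Hypothetical weights for illustration; not a standard formula.
    return f.cvss * (3.0 if f.exploited else 1.0) * (2.0 if f.exposed else 1.0)


BACKLOG = [
    Finding("VULN-A", 9.8, exploited=True, exposed=True),
    Finding("VULN-B", 7.5, exploited=False, exposed=False),
    Finding("VULN-C", 7.2, exploited=False, exposed=False),
    Finding("VULN-D", 8.1, exploited=False, exposed=True),
    Finding("VULN-E", 7.0, exploited=False, exposed=False),
]


def reductions(fixed: set) -> tuple:
    """Return (fractional drop in severe-CVE count, fractional drop in total risk)."""
    remaining = [f for f in BACKLOG if f.vuln_id not in fixed]
    severe_before = sum(f.cvss >= 7.0 for f in BACKLOG)
    severe_after = sum(f.cvss >= 7.0 for f in remaining)
    total_risk = sum(risk(f) for f in BACKLOG)
    remaining_risk = sum(risk(f) for f in remaining)
    return 1 - severe_after / severe_before, 1 - remaining_risk / total_risk


for label, fixed in [("easiest", {"VULN-E"}), ("exploited", {"VULN-A"})]:
    count_drop, risk_drop = reductions(fixed)
    print(f"fix {label}: severe count -{count_drop:.0%}, risk -{risk_drop:.0%}")
```

Both plans report the same 20% drop in severe CVEs, but under these assumed weights fixing the easiest item removes about 7% of the risk while fixing the exploited, exposed one removes about 61%.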

Conclusion

The landscape of CVEs and their role in cybersecurity compliance is changing in 2025. The transition to the CVE Foundation and the growing recognition of the limitations of CVE-centric metrics signal a move toward a more nuanced and risk-aware approach to vulnerability management. Organizations must adopt better assessment methods to manage risks and stay compliant with their governance frameworks.


- Integration with Automated Security Tools: Discuss how the integration of CVE data with automated security tools, such as Security Information and Event Management (SIEM) systems and vulnerability scanners, can enhance real-time detection and response to threats.

- Machine Learning and Predictive Analytics: Explore how AI is being applied to CVE data to anticipate potential exploits and prioritize vulnerabilities based on historical trends and threat intelligence.

- Global Collaboration and Data Sharing: Highlight the role of increased global collaboration and data sharing among cybersecurity entities, including how crowd-sourced vulnerability information and international partnerships could shape the CVE process and improve overall cybersecurity resilience.

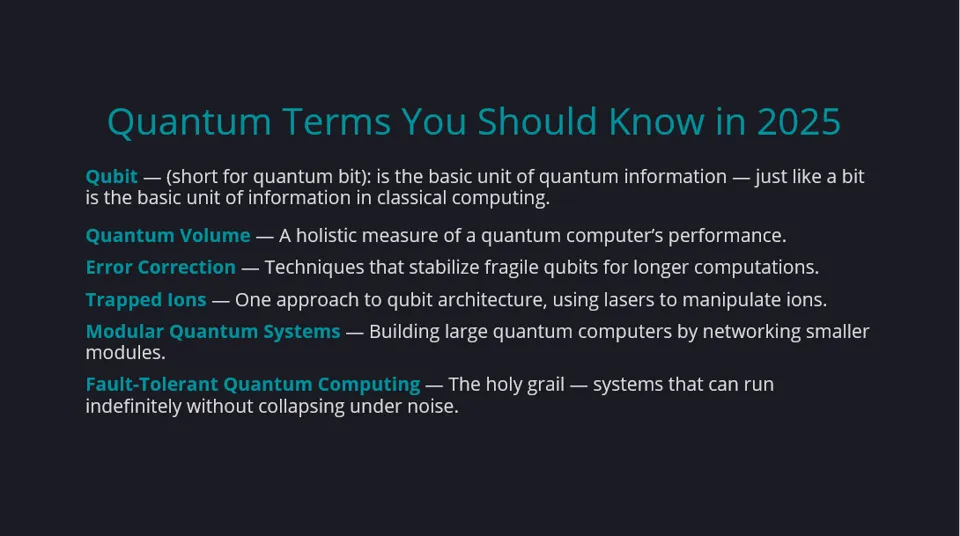

Quantum Momentum: Nearing a Tipping Point or Just Growing Hype?

While many of us are focused on AI’s dizzying pace of innovation, an epic tech advancement has been coming closer to reality – quantum computing.

In the past six months, the quantum computing ecosystem has witnessed significant advancements, notably through the collaborative efforts of Microsoft and Quantinuum. Microsoft unveiled Majorana 1, which it describes as the world's first quantum processor powered by topological qubits, built using a novel material termed a "topoconductor" that combines topological and superconducting properties. This innovation aims to create more stable and inherently error-resistant qubits. Microsoft and Quantinuum have also advanced quantum error correction by applying Microsoft's qubit-virtualization system to Quantinuum's H2 trapped-ion quantum computer, producing logical qubits with error rates up to 800 times lower than those of the underlying physical qubits and, in a later demonstration, 12 highly reliable logical qubits. Additionally, Quantinuum's Reimei trapped-ion quantum computer became fully operational at RIKEN's facility in Japan, marking a significant step in integrating quantum computing with high-performance computing (HPC) systems like RIKEN's Fugaku supercomputer.

Just before the new year, Google introduced Willow, a 105-qubit superconducting processor. The announcement highlighted a Random Circuit Sampling (RCS) benchmark computation that Willow completed in under five minutes, a task estimated to take today's fastest supercomputers 10 septillion (10²⁵) years. Google also reported significant progress in reducing error rates as qubit count scales: Willow's logical error rate fell as more qubits were devoted to error correction.

The rapid pace of these and other quantum innovations – such as IBM’s Condor chip, NVIDIA’s launch in March of CUDA-Q, and Intel’s 12-qubit research chip – raises the question: Are we nearing the tipping point for quantum computing?

Technical Breakthroughs Accelerate, Funding Climbs

For years, experts have pointed to quantum error correction as the final boss to defeat. Microsoft and Quantinuum’s achievements show meaningful progress here, not just lab demos — bringing fault-tolerant quantum computers one step closer.

After a cautious funding year in 2023, venture capital and corporate investment into quantum startups are climbing again. Big players clearly see opportunity on the horizon.

New government initiatives from the U.S., EU, and China are pouring billions into quantum R&D. The global race is shifting from “if” to “how fast.”

Error Correction Needs Scaling, Real-World Impact Limited

On the other hand, useful fault-tolerant machines are still expected to need hundreds to thousands of physical qubits to stabilize each logical qubit, and millions of physical qubits in total, meaning commercial utility is still a work in progress.
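To see where per-logical-qubit overheads come from, the sketch below applies a widely used rule of thumb for the surface code, p_L ≈ A·(p/p_th)^((d+1)/2), where p is the physical error rate, d the code distance, and p_th the threshold. The constants (A = 0.1, p_th = 1%), the rotated-surface-code count of 2d² − 1 physical qubits per logical qubit, and the 10⁻¹² target logical error rate are assumptions for illustration; the count also ignores extra overhead such as magic-state distillation, which pushes real totals higher.

```python
def logical_error_rate(p: float, d: int, p_th: float = 1e-2, a: float = 0.1) -> float:
    """Rule-of-thumb surface-code estimate: p_L ~ a * (p / p_th) ** ((d + 1) / 2)."""
    return a * (p / p_th) ** ((d + 1) // 2)


def physical_qubits(d: int) -> int:
    # Rotated surface code: d*d data qubits plus d*d - 1 measurement qubits.
    return 2 * d * d - 1


def min_distance(p: float, target: float, p_th: float = 1e-2) -> int:
    """Smallest odd code distance whose estimated logical error rate meets `target`."""
    if p >= p_th:
        raise ValueError("error correction only helps below the threshold p_th")
    d = 3
    while logical_error_rate(p, d, p_th) > target:
        d += 2  # surface-code distances are odd
    return d


TARGET = 1e-12  # assumed logical error rate for long algorithms
for p in (2e-3, 5e-4):
    d = min_distance(p, TARGET)
    print(f"p = {p:g}: distance {d}, ~{physical_qubits(d)} physical qubits per logical qubit")
```

Under these assumptions, a physical error rate of 0.2% needs distance 31 (about 1,900 physical qubits per logical qubit), while 0.05% needs distance 17 (about 580). Better physical qubits shrink the overhead sharply, which is why error rates and error correction dominate the roadmap discussion.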

And while we’re seeing a ton of innovation – no preferred or dominant quantum architecture has emerged. From superconducting qubits to trapped ions, to silicon spins and photonics, each approach comes with pros, cons, and scaling challenges.

While breakthroughs are exciting, most quantum advantage use cases (such as simulating complex molecules or optimizing massive logistics networks) remain just out of reach for mainstream businesses.

Quantum pioneers like John Preskill remind us that the NISQ (Noisy Intermediate-Scale Quantum) era may last well into the 2030s before fault-tolerant, at-scale systems become the norm.

The TechArena Take

The TechArena take is that we’ve reached the “Quantum Decade,” a period in which early enterprise experiments scale up, hardware and software ecosystems mature, winners in platform architecture and developer tooling start to emerge, and real-world proofs of concept – especially in pharma, logistics, and finance – begin to surface. The companies (and countries) that build quantum muscle now will be best positioned when the true inflection point hits — likely later this decade.

The journey to quantum computing is a marathon, not a sprint. The energy around quantum computing today feels significant. If you're not paying attention to quantum yet, 2025 is the year to start.

Dell’s Project Lightning: A Game-Changer for AI-Optimized Storage

At GTC, all things AI took center stage, and one thing that grabbed attendees’ attention was Dell’s latest innovation for AI computing, Project Lightning. We sat down with Scott Shadley, director of leadership narrative and evangelist at Solidigm, and Rob Hunsaker, director of engineering technologies at Dell, for a conversation that offered valuable insights into how Dell’s storage solutions are evolving to meet the growing demands of the AI market.

For those following AI developments, it’s clear that the performance demands of AI workloads are shifting. Traditional file systems are no longer sufficient to handle the immense data volumes and speed requirements of modern AI applications. Enter Project Lightning, Dell’s next-generation parallel file system, designed from the ground up to be the fastest solution in its market segment.

What sets Project Lightning apart is its ability to address extreme performance needs in AI environments. As Rob explained, the project was announced last year at Dell Tech World and is specifically optimized for AI use cases. This new file system offers unmatched speed and efficiency, which is essential as AI workloads continue to grow in complexity and scale. By leveraging Dell’s own intellectual property, Project Lightning represents a significant leap forward in storage technology, making it a game-changer for industries relying on AI.

This new addition to the PowerScale family of storage solutions isn’t just about speed. It’s about ensuring that storage solutions can scale with the growing demands of AI. Dell’s approach is rooted in the idea that data is the most critical asset in any enterprise, and having the right tools to manage and store that data is key to enabling AI’s potential. As Rob highlighted, Dell is working to ensure that all of its storage products are prepared for the future, with a strong focus on making data easily accessible and manageable.

One of the highlights of the conversation was Dell’s broader vision for data storage. Rather than simply providing individual storage products, Dell’s focus is on offering complete solutions that address the full spectrum of customer needs. The Dell Data Lakehouse, for example, is a powerful tool designed to unify storage, PowerEdge and software features into a comprehensive solution. This platform is designed to support AI applications by providing a reliable and scalable data management system that can handle the vast amounts of unstructured data AI processes require.

Throughout the discussion, it became clear that Dell’s role in the AI ecosystem goes beyond providing storage solutions; it’s about creating a seamless environment where data can be used effectively to drive innovation. As Rob pointed out, the enterprise sector is just beginning to fully embrace AI, and Dell is committed to helping enterprises navigate that transition. By ensuring that storage products can meet the needs of AI applications, Dell is positioning itself as an essential player in the future of enterprise AI.

In an industry where storage often takes a backseat to more glamorous technologies like GPUs and inference engines, it was refreshing to hear a conversation that highlighted the importance of reliable, high-performance storage. After all, as Rob noted, if the storage fails, the data is lost, and with it, the entire AI workload.

The partnership between Dell and Solidigm, which is known for its high-capacity quad-level cell (QLC) drives, further demonstrates the importance of resilient, high-performance storage. The TechArena take? By working together, Dell and Solidigm are able to provide a robust storage infrastructure capable of supporting the intense demands of AI environments, ensuring that customers’ data is safe and accessible.

To learn more about Dell’s cutting-edge storage solutions and their ongoing advancements in AI, visit the Dell InfoHub (infohub.delltechnologies.com) or check out their Unstructured Data Quick Tips blog for the latest updates.

Putting the AI Pieces into the Sustainability Puzzle

AI is revolutionizing industries and transforming our daily lives, but as we harness its power, it is crucial to consider its impact on the environment. Last week, at the GreenAI Summit, there was plenty of discussion around how AI impacts the environment and policy. A common theme was the significant energy demand that AI will put on IT, compute facilities, local and state environmental impacts, and, of course, the utility and water grids. With the speed at which the IT industry is moving, AI systems are already getting fragmented, and we have quickly moved to a decision-making era where “one size doesn’t fit all” when it comes to the type of AI system to build and where to run it.

Let us start with the distinctions between agentic AI, generative AI (Gen AI) and instinctive AI.

Initially, there was only Gen AI. We all have used Gen AI to create new content, such as text, images and music. Gen AI systems use machine learning algorithms to generate outputs based on patterns learned from existing data. Examples include OpenAI’s GPT-3, which can generate human-like text, and DALL-E, which creates images from textual descriptions. These are the “culprits” that give AI a bad name. Since they consume so much energy, they have raised many environmental and sustainability issues, and rightly so! IDC forecasts that AI data center energy consumption will grow at a compound annual growth rate (CAGR) of 44.7%, reaching 146.2 terawatt-hours (TWh) by 2027. Put another way, that’s more than Sweden’s annual energy requirements (121 TWh).
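The IDC figures can be sanity-checked with the compound-growth formula, end = start × (1 + CAGR)^years. The article does not give IDC's base year, so the five-year window below (a 2022 base) is an assumption; the sketch backs out the implied starting consumption and compares the 2027 forecast with the 121 TWh cited for Sweden.

```python
def implied_start(end_value: float, cagr: float, years: int) -> float:
    """Invert the compound-growth formula end = start * (1 + cagr) ** years."""
    return end_value / (1 + cagr) ** years


END_TWH = 146.2      # IDC's 2027 forecast, as cited in the article
CAGR = 0.447         # 44.7% compound annual growth
YEARS = 5            # assumption: 2022 base year (not stated in the article)
SWEDEN_TWH = 121.0   # figure cited in the article

base_twh = implied_start(END_TWH, CAGR, YEARS)
print(f"implied {2027 - YEARS} consumption: ~{base_twh:.0f} TWh")
print(f"2027 forecast exceeds Sweden's figure by {END_TWH / SWEDEN_TWH - 1:.0%}")
```

Under that assumed window, 44.7% annual growth means roughly a 6.3x increase over five years, from about 23 TWh to 146.2 TWh, ending about 21% above the Swedish figure.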

Quickly on the heels of Gen AI came agentic AI. Agentic AI refers to AI systems that can autonomously perform tasks and make decisions based on predefined goals. They are designed to act independently, often mimicking human behavior. Suddenly, it seemed that every software and data management company was building AI agents, flooding data centers with significant unplanned compute and storage requirements. Some examples of agentic AI include autonomous vehicles, robotic assistants and intelligent personal assistants, like Alexa+. However, agentic AI did not address the energy consumption issue, especially in applications involving robotics and autonomous vehicles. Another bad rap!

And finally, the AI system that I find very cool: instinctive AI. Instinctive AI refers to AI systems that mimic instinctive human behaviors and responses. These systems are designed to react to stimuli in real time, similar to how humans instinctively respond to their environment. Examples include AI-driven chatbots that provide instant customer support and real-time fraud detection systems. Instinctive AI brings two challenges to the energy consumption and emissions footprint debate: continuous real-time processing, which can be energy-intensive, and difficulty scaling effectively without compromising performance. I think that the business benefits, such as providing instant solutions and support, enhancing user satisfaction and improving operational efficiency, will take priority over environmental concerns.

These AI systems create applications and workloads that require careful placement in any IT infrastructure. With environmental sustainability as the leading goal or criterion, businesses should look at a range of data center options. Cloud service providers offer the lowest levels of carbon intensity, while co-location facilities offer low carbon intensity for sovereign AI. On-premises or enterprise data centers solve the “data gravity” challenge by keeping compute resources close to the data, whereas enterprise edge computing platforms offer the best inferencing environments, but at the cost of the highest carbon intensity.

Sustainable AI represents a crucial intersection between technological advancement and environmental stewardship. By understanding the differences between agentic AI, generative AI and instinctive AI, we can better assess their impact on the environment and leverage their benefits to promote sustainability. While challenges such as energy consumption and resource usage need to be addressed, the potential of AI to drive efficiency, innovation and data-driven decisions offers a promising path towards a greener future.

OVHcloud Innovates Cloud Infrastructure with AI, Sustainable Tech

At this year’s CloudFest, we caught up with Chris Ward, senior sales account manager at Solidigm, and Guillaume Gojard, product director at OVHcloud, to dive deeper into OVHcloud’s unique infrastructure strategy, its evolving role in AI and its long-standing commitment to sustainable innovation. Amid the cloud announcements and industry buzz, OVHcloud stood out for how it’s quietly reshaping the cloud landscape — one custom-built server and water-cooled data center at a time.

At a time when cloud providers are often defined by how they manage their hyperscale partnerships, OVHcloud stands apart by owning its full value chain. The company builds its own servers at facilities in both Europe and North America, operates more than 40 data centers worldwide and manages its own global fiber network. This level of integration isn’t just about control — it enables OVHcloud to deliver a price-performance ratio that resonates, particularly in today’s AI-hungry world.

And AI is everywhere. “It’s in every mouth,” Guillaume said, capturing the sentiment that defined the expo floor this year. For OVHcloud, this isn’t about scrambling to catch up. It’s about expanding what they started years ago. The company has been offering GPU-based compute since 2017, and it recently began rolling out ready-to-use large language models (LLMs) and AI endpoints — giving developers a practical starting point to integrate generative models into their stack. OVHcloud is pairing its AI push with an upcoming data platform designed to streamline how customers manage and leverage data inside complex workflows.

But the conversation didn’t stop at AI. Another topic discussed was OVHcloud’s long-standing use of water cooling, which is now getting mainstream attention. “And for example, water cooling with one glass of water, we can cool down one server for 10 hours of use,” Guillaume explained, noting that the company’s approach uses seven times less water than the industry average. That’s not a gimmick — it’s industrial innovation rooted in sustainability. It’s also a reminder that OVHcloud isn’t jumping on trends — they’re often ahead of them.

A large part of that innovation comes through partnerships. In this case, OVHcloud’s collaboration with Solidigm has allowed the company to push high-performance storage capabilities in its high-grade servers. “Blazing fast data access on our NVMe storage capacity, which is really great, because this is what the market demands,” Guillaume explained. For demanding use cases like real-time analytics, that speed translates directly into customer value. More importantly, the partnership gives OVHcloud the flexibility to respond to shifting demands, and Guillaume said the collaboration has been smooth and responsive from day one.

Looking ahead, OVHcloud has its eye on quantum computing. The company supports quantum startups and even has its own quantum computer. It is also quietly building a quantum-friendly cloud platform, positioning itself to support ecosystems that, like AI, will demand entirely new infrastructure paradigms.

The TechArena take? In an industry full of noise, OVHcloud’s approach is refreshingly holistic. From LLM toolkits to next-gen cooling to the quantum horizon, it’s not just about where things are now — it’s about where they’re headed.

M2M and Solidigm Lead Industry Trends in AI and Cloud Computing

At CloudFest 2025, Khilna Chandaria, operations manager at Solidigm, joined Charlie Hacker, sales and marketing director at M2M Direct, and TechArena to chat about the latest trends in cloud computing and the innovations that are reshaping the industry. The conversation highlighted the growing importance of AI, the rising demand for high-capacity storage and the key attributes businesses are seeking in their cloud solutions. As cloud computing continues to evolve, M2M’s consultative distribution approach is helping organizations adapt to these changes, particularly through its collaboration with Solidigm.

The shift toward AI-driven cloud computing was one of the key topics of discussion.

“Cloud computing is shifting to an AI-based model, both for private and public clouds,” Charlie shared. This shift is reshaping how cloud environments are used, enabling businesses to handle larger amounts of data with greater efficiency and scalability.

As cloud adoption accelerates, businesses are looking for solutions that offer flexibility, scalability and competitive pricing. “Flexibility and price, price or flexibility, either or,” Charlie explained, emphasizing the trade-offs many organizations are grappling with when choosing their cloud partners. But these aren’t the only important factors. Scalability is also essential, as businesses must be able to expand their storage and processing capabilities as their needs grow.

Security is another major consideration in today’s cloud landscape. With more data being transferred across private and public clouds, keeping that data secure is a top priority. As businesses increasingly rely on cloud solutions to store and process sensitive information, they must prioritize robust security measures that can protect against cyber threats, ensuring both compliance and the integrity of their data.

One of the most pressing needs in cloud computing today is high-capacity data storage. As AI and other data-intensive technologies continue to advance, the demand for larger, faster storage solutions is growing at a rapid pace. “Size, size, size, size,” Charlie remarked, stressing how critical storage capacity has become in the face of AI’s massive data requirements. Solidigm’s quad-level cell (QLC) products, offering up to 122 terabytes of capacity, are meeting this demand head-on. With such large-scale storage solutions, businesses can manage and process enormous datasets efficiently, without sacrificing speed or performance.

M2M’s role in this evolving landscape is to provide not just products, but consultative services that help businesses navigate the complexities of cloud migrations and data center upgrades. “We’re like a sales arm for Solidigm, and Solidigm is a sales arm for us,” Charlie explained.

What’s the TechArena take? Collaborations like this one between M2M and Solidigm ensure that cloud infrastructure providers can deliver the right products at the right time, supplying on-demand solutions that are essential for businesses undergoing significant infrastructure changes.

For those interested in staying updated on the latest advancements in cloud computing and AI, Charlie encouraged viewers to follow M2M on LinkedIn and visit their website (m2m-enterprise.com) for the latest product offerings and updates.

Insights with Intel on Navigating Agentic AI Transformation

At TechArena, we’re continuously exploring the ways AI is shaping industries, and my recent Fireside Chat with Lynn Comp, VP and head of Intel’s AI Center of Excellence, brought to light some thought-provoking insights. One major takeaway: agentic AI is set to disrupt how enterprises manage their workflows, data and infrastructure.

During our chat, Lynn provided an in-depth look at how enterprises are navigating their AI journeys, moving beyond traditional machine learning (ML) to embrace more advanced approaches, such as generative AI and agentic computing. For Lynn, this shift begins with data — specifically, the ongoing challenges around data governance and readiness that continue to hinder enterprise AI adoption.

Lynn highlighted a key point that underpins all AI systems: it all starts with data. Whether it’s structured databases or the increasingly popular vector databases for generative AI, data management remains a significant challenge. For enterprises implementing agentic AI, the complexity ramps up even further, as multiple agents can sample data from different sources, leading to potential misalignment. The need for observability in these systems is critical to avoid costly errors and ensure that the right data is being used by the right agents at the right time.

As enterprises explore agentic AI, Lynn also addressed the computational demands that come with it. The need for more powerful infrastructure that can handle the increased traffic between agents and the massive data flows of generative AI models is becoming evident. This is not just about scaling; it’s about optimizing performance while managing cost variability, another factor many enterprises are grappling with as they explore cloud-native solutions and increasingly complex workflows.

Lynn also delved into the commercialization challenges that lie ahead for agentic AI, particularly when it comes to interoperability and creating a thriving ecosystem. Drawing parallels to cloud-based services, she highlighted how successful agentic computing will require an open framework, akin to a software-as-a-service model, where agents can communicate seamlessly across different platforms. Google’s recent work on its Agent2Agent (A2A) protocol points toward the necessity of such interoperability to avoid lock-in. By enabling agents to exchange data across ecosystems, enterprises can unlock the full potential of AI while maintaining flexibility.

Building out a marketplace for agents — where enterprises can purchase and implement AI tools based on specific needs — is another hurdle to overcome. Lynn emphasized that while the technology is promising, organizations will need to ensure that usage models are clear and predictable, especially in terms of cost. Enterprises are currently challenged by a lack of standardization in how agents are priced, with complexities such as pay-per-use and time-of-use models still in the works. Without clear pricing structures and observability tools, organizations will struggle to scale AI effectively while avoiding unforeseen financial burdens.

Looking ahead to the next 12 to 18 months, Lynn stressed the importance of laying a strong data foundation. For businesses, this means focusing on data pipelines and architectures that are flexible enough to support a variety of AI applications. Whether implementing machine learning or natural language processing, organizations that can build adaptable systems will be in a better position to leverage agentic AI for everything from logistics optimization to advanced predictive analytics.

What’s the TechArena take? In the fast-moving world of agentic computing, the next few years will be critical. As Lynn pointed out, the real breakthroughs will come when enterprises, hyperscalers and startups work together to build scalable, interoperable and user-friendly AI ecosystems that will transform industries and business processes alike. At TechArena, we can’t wait to see how these developments unfold — and to continue bringing you the latest insights on the intersection of AI and enterprise innovation.