Announcing TechArena’s 2025–2026 Voices of Innovation Fellows

At TechArena, we believe innovation doesn’t happen in isolation; it happens when bold ideas find the right platform and the right audience. That belief was foundational when we launched the platform in 2022, and it guided the creation of our Voices of Innovation program. Today, we’re excited to introduce the 2025–2026 Voices of Innovation Fellowship cohort, a group of tech leaders shaping the future of AI-era computing.

The Voices of Innovation Fellowship is a TechArena-sponsored program that amplifies practitioner perspectives across the most critical areas of computing. In a time when the industry is driving innovation at an unprecedented pace, too many brilliant practitioners still struggle to have their insights heard. Our Fellowship exists to close that gap.

Meet the 2025–2026 Voices of Innovation Fellows

This year’s fellows represent a diverse range of expertise and leadership across technology and infrastructure:

Srija Reddy Allam, Fortinet

Srija Reddy Allam is a cloud security architect at Fortinet, where she partners with global customers to design, architect, and deploy Fortinet’s full suite of security solutions in public cloud environments. In this role, she engages directly with enterprises across industries, guiding them on securing critical workloads, applications, and data as they scale and modernize in the cloud. Her work spans the Fortinet portfolio, ensuring customers adopt solutions that align with both their business goals and regulatory requirements.

Matty Bakkeren, Momenthesis

Matty Bakkeren is a senior technology executive with a rare blend of deep technical expertise and strategic sales & marketing leadership, honed through years of managing high-stakes B2B partnerships at Intel with global leaders like Red Hat and Accenture across EMEA. As founder of Momenthesis, he helps accelerate datacenter transformation by scaling growth, driving innovation, and aligning technical solutions with business needs. His collaborative approach and ability to simplify complexity make him a valued advisor in technology and business.

Robert Bielby, Automotive Expert

Robert Bielby is a semiconductor and automotive tech leader with four decades of experience across hardware design, system architecture, product marketing, and P&L. He’s helped define markets in memory, ASICs, FPGAs, and AI, bridging lab innovation to scaled business. A hands-on educator, he champions practical, power-aware design and demystifies auto tech.

Tejas Chopra, Netflix

Tejas Chopra is an advocate of sustainable computing—AI and Cloud—and an active speaker on the topics of AI, Cloud Computing, Distributed Storage Systems and Engineering Culture. At Netflix, his role centers on advancing the company’s machine learning platform infrastructure. As a means to give back to the community, Tejas co-founded GoEB1 with a mission to build thought leadership for immigrants. It has now evolved into EnsolAI, a self-serve subscription platform for finding relevant opportunities to build brand and impact.

Lynn Comp, Intel

Lynn Comp is a global strategy, marketing, and product management executive with deep experience in the technology industry. She has a passion for creating and delivering solutions that address customer needs and unlock new business value and leverages this passion in driving broad customer adoption of Intel architecture solutions for the data center. She is also a board member at NeuReality, a startup that develops AI solutions, bringing her expertise in cloud, enterprise IT, software, 5G, product marketing, ecosystem development, and strategy.

Sean Grimaldi, Cybersecurity Expert

Sean Grimaldi is a seasoned digital engineering leader and innovator with a proven track record of driving technological advancements and strategic growth. Holding active clearances, Sean brings extensive experience in cloud computing, cybersecurity, and emerging technologies. He has held leadership roles at Anduril Industries, VectorZero.ai (as Co-Founder and CTO), the CIA, and Microsoft, consistently delivering impactful results.

Mark Grodzinsky, Network and Edge Expert

Mark Grodzinsky is a product and market leader who has spent his career at the intersection of semiconductors, wireless, SaaS, and AI. From early startup roles to Fortune 500 leadership, he has built ecosystems that turned Wi-Fi, IoT, and cloud innovations into global platforms. Most recently, he led an AI-first shift in network observability. He is passionate about bridging deep technology with real-world adoption to drive the next wave of innovation.

Tannu Jiwnani, Threat Detection Expert

Tannu Jiwnani is a principal security program manager at Microsoft and a global leader in cybersecurity. She drives innovation in identity security, leads high-impact incident response, mentors women worldwide, and serves on advisory boards. Through her leadership and advocacy, she is shaping a safer, more inclusive future in technology.

Banani Mohapatra, Walmart

Banani Mohapatra is a seasoned AI/ML leader with 13+ years of experience at the intersection of data science, product management, and enterprise innovation. As a senior data science leader at Walmart, she builds scalable AI/ML systems powering e-commerce personalization for millions of customers, delivering measurable business impact through AI-driven product lifecycle solutions. She has published applied AI research with IEEE in areas such as Generative AI, Agentic AI, causal inference, and experimentation platforms, and is a frequent speaker at global AI summits on responsible and enterprise-scale AI adoption.

Anusha Nerella, Financial Services Expert

Anusha Nerella is a senior principal software engineer and a seasoned leader in AI/ML, DevOps, big data, and automation. She has advanced trading platforms and enterprise systems at Barclays, Citibank, and USPTO, while driving AI-powered innovation. A mentor and researcher, she champions knowledge sharing and inspires the next generation of tech leaders.

Gina Rosenthal, Cloud Computing Expert

Gina Rosenthal is the founder of Digital Sunshine Solutions and a senior product marketing leader with deep expertise in cloud, virtualization, and data security. She has driven product launches, brand growth, and multi-million–dollar impact at VMware and beyond. A Pragmatic Institute Ambassador and Tech Field Day Delegate, she shares insights on AI, cloud, and edge across global platforms.

Niv Sundaram, Robotics, AI and Data Center Expert

Niv Sundaram is the chief strategy officer at Machani Robotics. Prior to this role, Niv spent 15 years at Intel in engineering and leadership positions spanning datacenter, AI, cloud infrastructure, content-protection & video conferencing solutions, contributing to $15B+ in annual server and client revenue. Now focused on the intersection of advanced AI orchestration and human-centered experiences, she applies her deep technical expertise to develop AI systems that understand and respond to human needs with genuine intelligence and emotional awareness. Niv is based in Oregon and holds a Ph.D. in Electrical Engineering from the University of Wisconsin-Madison, two issued patents, and several peer-reviewed publications. A strong advocate for women in tech, she also creates comics about women in technology to inspire the next generation @saywhatcomics.

Ryan Tabrah, Data Center and AI Expert

Ryan Tabrah is founder and CEO of SkillsForge AI, building adaptive learning technology that helps people and companies upskill faster and stay competitive in the AI era. He is former VP and general manager for Xeon and Compute Products at Intel, where he led a multi-billion-dollar portfolio of high-performance, energy-efficient solutions. With more than two decades of experience across AI, cloud, and data infrastructure, Ryan combines technical depth with business strategy while championing sustainability and diversity in tech.

Vivek Venkatesan, Data Engineering & AI Expert

Vivek Venkatesan is a lead data engineer at Vanguard and a senior member of IEEE with 15+ years of experience in data engineering, cloud architecture, and applied AI. He has delivered solutions ranging from a COVID-19 workforce safety platform to cost-saving enterprise data architectures, and contributes as a mentor, speaker, and author in the global data and AI community.

Bhavnish Walia, Amazon

Bhavnish Walia is a senior risk manager, AI/ML at Amazon in New York, where he leads global initiatives in risk management, responsible AI governance, compliance automation, and fraud detection. With over 13 years of experience spanning e-commerce, fintech, and banking, he has built frameworks for LLM risk governance, deployed Generative and Agentic AI for enterprise innovation, and automated anti-money laundering workflows that have delivered millions in annual savings.

Why It Matters

The Fellowship embodies TechArena’s brand promise: to deliver original takes on the pulse of innovation from across the tech landscape, along with insights from those who are defining tech’s future. The fellows’ publications ensure that these insights reach the audiences who can act on them: industry decision-makers, innovators, and the broader tech community.

Throughout 2025–2026, readers can expect thought-provoking articles, Q&As, and features from this group of innovators.

We’re thrilled to welcome this year’s cohort and can’t wait to share their stories. We’ll begin next week with introductory posts from each Voice.

AI Benchmarking Hits New Heights with MLPerf Inference 5.1 Release

AI inference benchmarking leveled up today with the release of MLPerf Inference 5.1 results from MLCommons. This latest release not only set another round of records in participation and benchmark performance; it also expanded the number of benchmarks to meet the evolving demands of AI applications, as reasoning models, speech recognition, and ultra-low latency inference become increasingly important for enterprise AI deployments.

Here’s the TechArena breakdown of what matters most.

Performance Breakthroughs Continue

First things first: those looking for performance improvements across existing benchmarks will find plenty to analyze in the latest round of data. The Llama 2 70B benchmark, the most popular workload for the second consecutive round, shows median performance improvements of 2.3x since the 4.0 release only about 18 months ago, with the best results showing 5x gains.

What’s driving these dramatic improvements? Larger system scales are becoming more common, as is the adoption of FP4 (4-bit floating point) numerical precision. Even accounting for larger systems, however, results comparing the Llama 2 70B benchmark over time still show improvement at the per-accelerator level.

Three New Benchmarks Reflect AI’s Expanding Reach

Beyond ongoing benchmarks, MLPerf Inference 5.1 introduces three benchmarks that broaden its coverage of AI performance beyond existing large language model (LLM) workloads, adding reasoning, speech recognition, and efficient text processing.

DeepSeek-R1 marks the first reasoning model in MLPerf Inference history, a 671-billion parameter mixture-of-experts system that breaks down complex problems into step-by-step solutions. This model generates output sequences averaging 4,000 tokens (including “thinking” tokens) while tackling advanced mathematics, complex code generation, and multilingual reasoning challenges. The benchmark combines samples from five demanding datasets and requires systems to deliver the first token within 2 seconds while maintaining 80-millisecond-per-token speeds, constraints that reflect real-world deployment requirements for agentic AI systems.
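To make the latency figures above concrete, here is a minimal sketch of the arithmetic they imply. It treats the 2-second first-token limit and the 80-millisecond per-token limit as hard caps on a single request, which is a simplifying assumption; the benchmark's own rules define how those limits are actually measured. The function names are hypothetical.

```python
# Latency limits quoted in the text for the DeepSeek-R1 benchmark.
TTFT_LIMIT_S = 2.0    # time to first token, in seconds
TPOT_LIMIT_S = 0.080  # time per output token after the first, in seconds


def worst_case_response_seconds(output_tokens: int) -> float:
    """Longest response time that still meets both limits.

    Assumption: the first token arrives exactly at the first-token limit and
    every later token takes exactly the per-token limit.
    """
    if output_tokens < 1:
        raise ValueError("output_tokens must be at least 1")
    return TTFT_LIMIT_S + (output_tokens - 1) * TPOT_LIMIT_S


def min_decode_tokens_per_second() -> float:
    """Slowest steady per-request decode rate that meets the per-token limit."""
    return 1.0 / TPOT_LIMIT_S


if __name__ == "__main__":
    avg_tokens = 4000  # average output length quoted in the text
    total = worst_case_response_seconds(avg_tokens)
    print(f"Worst-case time for {avg_tokens} tokens: {total:.1f} s")
    print(f"Minimum decode rate: {min_decode_tokens_per_second():.1f} tokens/s")
```

Under these assumptions, a response of average length could take a little over five minutes and still pass, which illustrates why long reasoning outputs make sustained per-token speed as important as first-token latency.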

Whisper Large V3 brings automatic speech recognition into the MLPerf ecosystem with a transformer-based encoder-decoder model featuring high accuracy and multilingual capabilities across a wide range of tasks. The inclusion reflects growing enterprise demand for high-quality transcription services across customer service automation, meeting transcription, and voice-driven interfaces. With 14 vendors submitting results across 37 systems, the benchmark captures a wide range of hardware and software support.

Llama 3.1 8B replaces the aging GPT-J benchmark with a contemporary smaller large language model (LLM) designed for tasks such as text summarization. With a 128,000-token context length compared to GPT-J’s 2,048 tokens, this benchmark reflects modern LLM applications that must process and summarize lengthy documents, supporting both data center and edge deployments with different latency constraints for various use cases.

Interactive Scenarios: The Low-Latency Imperative

In response to community requests, MLPerf Inference 5.1 expands “interactive” scenarios—benchmarks with tighter latency constraints that reflect the demands of agentic AI and real-time applications. These scenarios now cover multiple LLM benchmarks. The interactive constraints push systems to deliver over 1,600 words per minute, enabling immediate feedback for chatbots, question-answering systems, and other applications where user experience depends on responsiveness.
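As a rough illustration of what the words-per-minute figure above means in token terms, the sketch below converts it into a per-request token rate. The words-per-token ratio of 0.75 is an assumption (a common rule of thumb for English text), not a value from MLCommons, and the function names are hypothetical.

```python
WORDS_PER_MINUTE = 1600          # interactive target quoted in the text
ASSUMED_WORDS_PER_TOKEN = 0.75   # assumption: rule of thumb, not from the source


def required_tokens_per_second(words_per_minute: float,
                               words_per_token: float) -> float:
    """Token rate needed to sustain a given reading speed in words per minute."""
    if words_per_token <= 0:
        raise ValueError("words_per_token must be positive")
    return words_per_minute / 60.0 / words_per_token


def max_milliseconds_per_token(words_per_minute: float,
                               words_per_token: float) -> float:
    """Largest per-token latency that still sustains the target rate."""
    return 1000.0 / required_tokens_per_second(words_per_minute, words_per_token)


if __name__ == "__main__":
    rate = required_tokens_per_second(WORDS_PER_MINUTE, ASSUMED_WORDS_PER_TOKEN)
    budget = max_milliseconds_per_token(WORDS_PER_MINUTE, ASSUMED_WORDS_PER_TOKEN)
    print(f"Required rate: {rate:.1f} tokens/s")
    print(f"Per-token budget: {budget:.1f} ms")
```

A different tokenizer or language would change the ratio, so the resulting per-token budget should be read as an order-of-magnitude guide only.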

Hardware Innovation on Full Display

Finally, the hardware landscape represented in MLPerf Inference 5.1 showcases an industry in rapid transition. Five newly available accelerators made their benchmark debuts: AMD Instinct MI355X, Intel Arc Pro B60, NVIDIA GB300, NVIDIA RTX 4000 Ada, and NVIDIA RTX Pro 6000 Blackwell Server Edition.

The TechArena Take

MLPerf Inference 5.1 arrives at a moment when AI procurement decisions carry unprecedented strategic weight. The benchmark results provide critical data points for enterprises evaluating everything from edge inference appliances to hyperscale data center deployments.

As MLCommons reaches the 90,000 total results milestone across all MLPerf benchmarks, the organization continues to demonstrate that transparent, reproducible benchmarking can keep pace with an industry moving at breakneck speed. MLPerf Inference 5.1 represents not just a snapshot of current AI capabilities, but a preview of the performance standards that will define the next generation of AI infrastructure.

Schneider Electric Powers Ahead in Sustainability

Ahead of Yotta 2025, we connected with Anna Timme, VP of Sustainability at Schneider Electric, to explore how the company continues to lead the charge in climate action and circular design.

Named TIME’s Most Sustainable Company two years in a row, Schneider is showing that sustainability is more than a responsibility—it’s a growth strategy. Timme offers a firsthand look at the milestones, challenges, and innovations that keep Schneider ahead of the curve.

Q1: Schneider Electric has been named TIME’s Most Sustainable Company two years running. What do you think sets you apart from other companies in achieving this level of industry leadership, and how do you continue to stay ahead in sustainability?

A1: For the past two decades, sustainability has been at the core of everything we do at Schneider Electric, embedded in our purpose, culture, and business. This represents more than just a responsibility.

At Schneider, sustainability is a key driver of business success. We’ve been monitoring our environmental and societal impact since 2005, which gives us two decades of experience. We have firsthand knowledge of how these efforts lead to innovation, new revenue streams, improved operational efficiency, risk management, and stakeholder trust. They also elevate employee satisfaction. All of these are critical for long-term success.

For example, we have adopted and maintain a circular economy approach, designing products to “use longer, use better, use again.” This reduces the need for raw materials, minimizing waste and yielding cost savings.

Our Environmental Data Program gives customers unprecedented clarity into the impact of our products, helping them meet regulations and adopt circular practices themselves.

We recognize that our positive impact goes hand in glove with strong financial performance. Having been named the world’s most sustainable company by both TIME magazine and Corporate Knights, we believe that what makes Schneider stand out, today and tomorrow, is that we are an Impact company: we do well to do good, and we make sure that our entire ecosystem is brought along on this journey toward a more sustainable and inclusive future.

In 2021, we launched the Schneider Sustainability Impact (SSI) 2021–2025 roadmap, focused on climate, resources, equal opportunities, trust, generations, and local communities. Our SSI drives impact and action across our operations, partners, and customers! Our clients and customers have played a crucial role in our success in this space; we couldn’t do it without them.

Ultimately, sustainability is good business. As CEO Olivier Blum says, “What is good for the planet is good for the wallet.” Through electrification and digitalization, we’re building a better future for all—where environmental, societal, and financial benefits go hand in hand.

Q2: Your Q2 2025 sustainability report shows some impressive milestones—like surpassing one million people trained in energy management and reaching 734 million tonnes of CO₂ emissions saved for customers. What does hitting these ambitious targets mean for your long-term sustainability strategy?

A2: It’s indeed a proud moment for us, and a reminder of what’s possible when purpose meets action. Looking forward, these results reinforce our commitment to keep raising the bar. Our long-term strategy is to continue scaling solutions that electrify, digitize, and decarbonize energy consumption while expanding access to sustainable energy worldwide. Every milestone we reach motivates us to go further, faster—because the challenges ahead require ambition matched with measurable action.

Achieving these milestones is a robust validation of our long-term sustainability strategy. Training over one million people in energy management and helping our customers avoid 734 million tonnes of CO₂ emissions are impressive figures. They reflect the real-world impact of our commitment to climate action and inclusive progress.

They also demonstrate that our approach—combining innovation, education, and collaboration—is working. By equipping individuals with the skills to manage energy more efficiently, we’re building a global movement toward smarter, more sustainable practices. And by deploying technologies that reduce emissions at scale, we’re helping industries transition to low-carbon operations.

Looking ahead, these achievements reinforce three strategic priorities:

1. Scaling impact through digital and electric solutions—especially in critical sectors like data centers, where efficiency and resilience are paramount.

2. Driving responsible innovation—developing technologies that are not only high-performing but also energy-efficient and circular by design.

3. Mobilizing ecosystems—engaging partners, customers, and employees to accelerate collective progress toward net-zero goals.

These milestones are not an endpoint—they’re a springboard. They inspire us to go further, faster, and to continue embedding sustainability at the heart of everything we do.

Q3: Your Zero Carbon Project has helped reduce supplier emissions by 48%—that’s a huge achievement. What advice would you give to other companies looking to tackle their supply chain carbon footprint?

A3: The Zero Carbon Project is a cornerstone of our SBTi Corporate Net Zero-aligned strategy. I am excited and proud of the fact that we are on track to slash 50% of the operational emissions of our top 1,000 suppliers by the end of this year! To achieve this, we are “drinking our own wine,” using the same Schneider Electric supply chain decarbonization service that we offer to our customers across industries.

Better yet, it doesn’t have to be a single-company initiative! Industry-specific supply chain consortia are a fantastic way to pool resources and accelerate impact. In this vein, Schneider Electric services are the engine behind the Energize program for the pharmaceutical and healthcare sector and the Catalyze program for the semiconductor industry. The iMasons Climate Accord has begun work on developing a potential program for digital infrastructure. Join us there to learn more!

Getting back to the question, I have two pieces of advice:

1. Don’t get distracted by the desire for perfect data from suppliers. Not taking reduction actions because you have data gaps is like refusing to eat well and exercise to lose weight just because you don’t have a scale at home. Start enabling suppliers to prioritize operational efficiency and shift to carbon-free energy sources now. In parallel, help them build the capability to measure and report.

2. Treat this as a journey. The entire effort is a necessary ingredient for your Net Zero ambition. The more mature our suppliers are, the stronger their contribution will be! So the focus needs to be on how you can support them on the journey.

Q4: Data center owners are increasingly looking to tackle their scope 3 footprint, which is often larger than their operational emissions, especially for those who are procuring carbon-free energy. What is Schneider doing to help address this?

A4: When we look at a hyperscaler’s scope 3 footprint, it’s almost entirely upstream (embodied carbon) from materials like cement and steel, and from equipment such as mechanical, electrical, IT, storage, and networking systems.

At Schneider, we’re thinking about more than performance in isolation. Our broad portfolio, deep ecosystem partnerships, and ambitious decarbonization goals allow us to take a wide-angle lens, aiming for longevity and reduced environmental impact of all solutions.

Each new product starts with the previous generation’s Environmental Product Declaration (EPD), a standardized, third-party-verified document quantifying all lifecycle impacts. From there, we define environmental design goals and achieve them by using less material, using lower-impact materials, designing for circularity (longevity, serviceability, etc.), and increasing efficiency.

This in turn is supercharged by Schneider Electric’s:

• SBTi-aligned decarbonization goals: Net Zero ready in our operations and -25% end-to-end by 2030,

• supply chain decarbonization program (discussed previously), and

• broader efforts to support critical mass in grid decarbonization.

Many of our customers have bold 2030 scope 3 targets. Thanks to this broad approach, we can co-develop tailored plans to draw down embodied carbon of our business with them on defined timelines.

Q5: As our industry builds ever faster, and data centers become much more power dense to support AI workloads, what can data center design engineers and operators do to both find the power they need as quickly as possible, and stay on track with their sustainability goals?

A5: The power demands of our industry are indeed daunting. The International Energy Agency (IEA) projects that data center energy usage will double by 2030. Applying for new loads with utilities and grid operators was a matter of course only a couple of years ago, but it is now a source of serious uncertainty and risk as data center operators look to site new projects.

The good news is that there are major intersections between solutions for power availability and those for sustainability. First, it’s helpful to remember that solar, wind, and storage are not just the cheapest new sources of power you can build in just about every market around the world, but also the quickest to deploy. Policy support for these technologies will not only help advance us toward a Net Zero future; it will also help ensure that we have plentiful power for our growing industry, and that energy costs are kept under control. At Schneider, we are helping our customers secure renewable power around the world through our Renewable Energy and Climate Advisory Services.

Data center companies are also investing aggressively in onsite power solutions, either to provide bridge power until utility power is available or to serve as permanent prime power that obviates the need to find grid capacity. Currently, methane/natural gas turbines and engines appropriate for data center loads are experiencing extended lead times, often of five years or more. Many players in the industry are investing in newer technologies, including fuel cells, co-located wind, solar, and storage, and modular nuclear reactors, both to pursue lower emissions and to continue to build. Schneider is working with our customers to design and deploy microgrids across the globe, integrating a variety of distributed energy resources and managing both onsite loads and interaction with the grid through our industry-leading controls.

Inside Arm’s Drive to Advance AI’s Data Center Transformation

As AI fuels a $7 trillion infrastructure boom, Arm’s Mohamed Awad reveals how efficiency, custom silicon, and ecosystem-first design are reshaping hyperscalers and powering the gigawatt era.

Equinix & Solidigm on the Real Cost of AI Infrastructure Demands

Equinix’s Glenn Dekhayser and Solidigm’s Scott Shadley discuss how power, cooling, and cost considerations are causing enterprises to embrace co-location among their AI infrastructure strategies.

5 Fast Facts: Vigilent’s AI for Cooling Plant Optimization

We sat down with Dr. Cliff Federspiel, founder, president & CTO at Vigilent, to talk about compute efficiency in the AI era—specifically, how smarter cooling optimization can keep SLAs tight as rack densities rise. From sensing to predictive control, Cliff explains why letting an on-prem AI continuously tune the cooling plant beats static setpoints, helping operators protect reliability while cutting energy and carbon.

The company builds an on-prem, vendor-agnostic AI control layer for data-center cooling. A sensor network and machine learning generate an Influence Map® of how each AHU affects rack inlet temperatures; the system then adjusts fans and unit states only where needed. Vigilent integrates with BMS/DCIM and also monitors and controls chillers, which allows for optimization across the entire cooling plant. Guardrails and fail-safes—including snap-to-full-cooling—ensure resilience while delivering measurable efficiency gains.

Q1: Vigilent has been applying AI/ML to cooling optimization for 17 years—well before today’s wave of AI-driven data center demand. How has this early market entry shaped your leadership position as operators now face power densities and cooling challenges beyond the limits of traditional air cooling?

A1: Data centers are highly variable in how they’re configured and operated. There are different cooling technologies from different vendors. Control strategies also vary, for example temperature vs. pressure. Facility layouts differ too: raised floors vs. slab, and varying types of containment. As you say, Vigilent has been around for a long time, and that means we’ve seen all of these configurations and learned how to optimize cooling in each case. Years ago, we scratched our heads when seeing something new, but by now we’ve pretty much seen it all, including the complexities associated with optimizing across the air-side and chiller plant.

Increasing IT power densities just add another layer of complexity to the cooling challenge, and we’ve got the experience and gray hairs, and software and engineering talent, needed to deal with it.

Q2: Most traditional cooling systems rely on static setpoints, while Vigilent uses machine learning to understand the influence of each cooling unit on IT equipment across a facility. How does this dynamic approach transform cooling efficiency compared to conventional methods, and what measurable outcomes—like SLA compliance, energy use, or equipment longevity—can operators expect?

A2: Machine learning enables Vigilent’s AI to empirically understand exactly what’s going on in a data center. If a fan is ramped up or down, or a cooling unit is turned on or off, where will temperatures go up, and where will they go down? Machine learning allows the AI to create a predictive model, basically knowing in advance the effects of any actions it takes. This enables the AI to deliver very high SLA compliance along with a number of other benefits. One colocation operator went from about 94% SLA compliance to 99.96% compliance. In the same data hall, this operator reduced PUE by 10% and energy use and carbon emissions by 32%. Since cooling is only used when and where it’s needed, there has been a big reduction in wear and tear, which means their cooling infrastructure will last longer and need fewer replacement parts. They are now using the AI as a competitive differentiator vs. other colocation operators.
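To put the SLA figures above in perspective, the sketch below converts the two compliance percentages into time spent out of compliance. It assumes compliance is measured as a share of operating time over a full year, which the interview does not state, and it treats "about 94%" as exactly 94%. The function names are hypothetical.

```python
HOURS_PER_YEAR = 365 * 24  # assumption: a non-leap year of continuous operation


def noncompliant_hours(compliance_pct: float,
                       hours: float = HOURS_PER_YEAR) -> float:
    """Hours out of compliance, assuming compliance is a share of time."""
    if not 0.0 <= compliance_pct <= 100.0:
        raise ValueError("compliance_pct must be between 0 and 100")
    return hours * (100.0 - compliance_pct) / 100.0


def reduction_factor(before_pct: float, after_pct: float) -> float:
    """How many times smaller the non-compliant share became."""
    after_gap = 100.0 - after_pct
    if after_gap <= 0:
        raise ValueError("after_pct must be below 100 to compute a ratio")
    return (100.0 - before_pct) / after_gap


if __name__ == "__main__":
    before, after = 94.0, 99.96  # compliance figures quoted in the text
    print(f"Before: {noncompliant_hours(before):.1f} h/year out of compliance")
    print(f"After:  {noncompliant_hours(after):.1f} h/year out of compliance")
    print(f"Reduction: about {reduction_factor(before, after):.0f}x")
```

Seen this way, a gain of roughly six percentage points corresponds to a reduction of about two orders of magnitude in the non-compliant share.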

Q3: With over 1,000 deployments across 35 countries and partnerships with companies like Schneider Electric, NTT, and Siemens, how does Vigilent’s global footprint enhance your AI models, and what unique advantages does this breadth of operational data provide to new customers, particularly those entering high-density AI computing environments?

A3: To address the diversity in data center designs and operations, Vigilent has developed platform capabilities that complement our core AI technology. We integrate with any type of cooling infrastructure, whether it be air cooling, liquid cooling, or the chiller plant, and also with BMS and DCIM systems plus other assets like power equipment. We also rapidly deliver bespoke capabilities with a tool called Vigilent Studio. And we have an information layer in our platform called Vigilent Insights, which uses the data captured and generated by Vigilent’s AI to provide facility staff with guidance about how to improve resilience or operate more efficiently.

Our strategic partners are global leaders in providing infrastructure and services to data center operators. This has positioned us well for the increased densities we’re seeing now. For example, Schneider Electric acquired the liquid cooling company Motivair and collaborates with NVIDIA on designs for AI data centers.

Q4: As rack densities push past 100 kW and liquid or hybrid cooling strategies become mainstream, where do you see AI-driven optimization playing the biggest role? How can it accelerate both sustainability and carbon reduction goals for next-generation infrastructure?

A4: Joint optimization of the air-side and water-side of the cooling plant will be increasingly important as rack densities rise and liquid cooling is used to remove some, but in most cases, not all, of the IT heat load. Higher densities shorten ride-through times in the event of a cooling failure, and hybrid cooling increases the complexity of the cooling plant. There is plenty of research showing that AI-driven vehicles are safer and more fuel-efficient than human-driven vehicles. Similarly, AI-driven optimization not only improves the efficiency and sustainability of complex data centers, but it also improves their reliability.

Q5: For operators considering AI-driven cooling optimization, what are the top three factors they should prioritize in evaluating solutions? And where can readers learn more about Vigilent’s approach to dynamic cooling management?

A5: People can be understandably nervous about letting AI control data center cooling, just as people are nervous getting into a self-driving car. But with proper safeguards, they can ensure data center resilience and be rewarded with significant benefits. What are those safeguards?

• First, make sure the AI operates within the premises, within the corporate firewall. This will avoid security risks associated with cloud-based applications.

• Second, make sure the AI has guardrails that protect against hallucinations, and fail-safes that ensure full cooling if there is ever a problem with the AI.

• Third, make sure the AI is proven. Has it been deployed in other mission-critical environments? How many? What were the results?

Learn more about Vigilent.

Clearing AI’s Costly Bottlenecks with Cornelis Networks

CEO Lisa Spelman explains how tackling hidden inefficiencies in AI infrastructure can drive enterprise adoption, boost performance, and spark a new wave of innovation. Check out Cornelis Networks.

Synopsys Accelerates Chip Design with AI Copilot Release

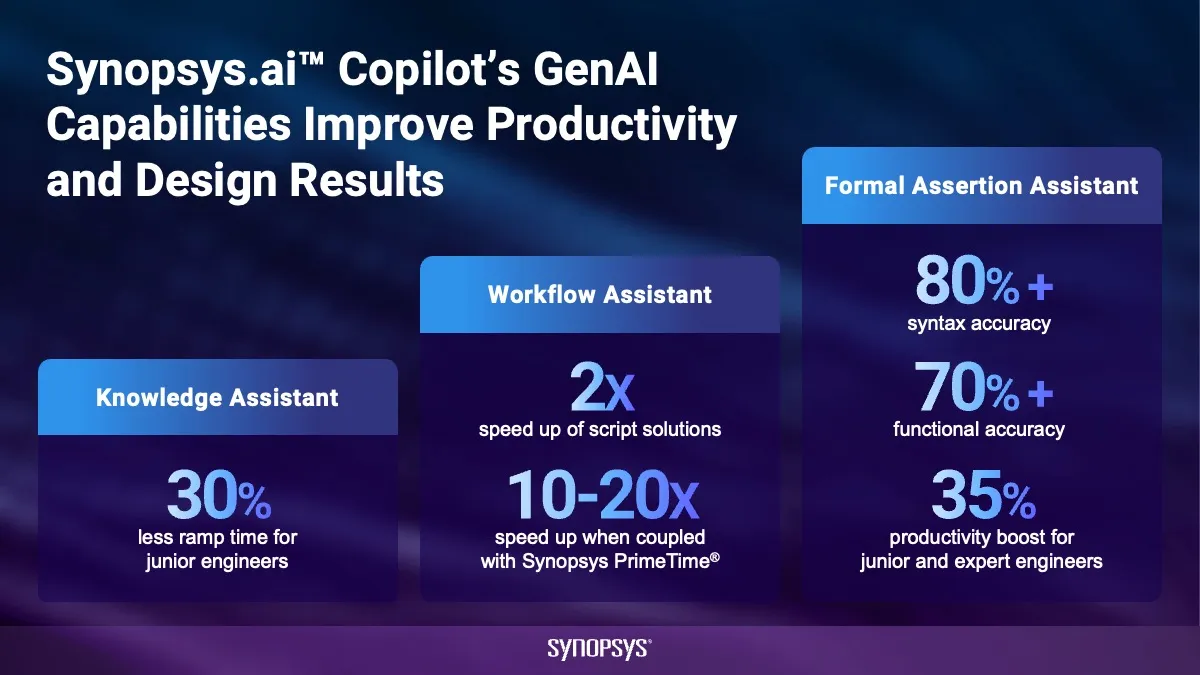

This week, electronic design automation (EDA) leader Synopsys unveiled expansions to its Synopsys.ai Copilot generative AI capabilities, promising to transform workflows for semiconductor engineering teams, enabling them to take on more complex designs on accelerated timelines.

Synopsys reports that the new generative AI (Gen AI) capabilities turn workflows that previously consumed days into tasks completed in mere hours, and processes that took hours into operations finished in minutes. Early adopters are already reporting extraordinary gains: 30% faster ramp time for early-career engineers using the knowledge assistant, and a 35% boost in engineering productivity for both early-career and expert engineers in formal verification workflows using the formal assertion assistant.

At the heart of this transformation lies Synopsys.ai Copilot’s dual approach: assistive and creative AI capabilities. The assistive features focus on knowledge management and workflow optimization, helping engineers to navigate complex documentation, generate scripts, and guide newcomers through the labyrinthine world of chip design. The creative capabilities venture into more ambitious territory, automatically generating formal assertions and register-transfer level (RTL) code with greater than 80% syntax accuracy and more than 70% functional accuracy.

This AI expansion gains additional significance when viewed through the lens of Synopsys’ recently completed acquisition of Ansys. The integration is already paying off with the recent introduction of Ansys Engineering Copilot and updates to Ansys SimAI, extending AI capabilities deep into simulation and analysis workflows.

In addition, Synopsys is looking beyond current generative AI applications toward what it calls AgentEngineer technology for chip design. Developed in collaboration with Microsoft, this represents a progression toward increasingly autonomous design systems. The company envisions a five-level evolution: from step-level actions (L2) to complex multi-agent operations (L3), dynamic flow optimization with adaptive learning (L4), and ultimately autonomous decision making (L5).

The first prototype offers a glimpse of a future where AI agents don’t just assist engineers but actively participate in the design process. As Microsoft’s Aseem Datar notes, “Together, we are not just optimizing existing workflows—we are introducing a new paradigm to advance engineering innovation and productivity for next-generation chip designs.”

The TechArena Take

In an industry where time-to-market can determine billion-dollar market positions, Synopsys has just handed its customers a significant advantage. The timing is particularly crucial as the industry grapples with a combination of design complexity and workforce shortages. Advanced AI chips require sophisticated design methodologies, while simultaneously, the pool of experienced semiconductor engineers remains constrained.

Synopsys’ AI capabilities directly address this paradox by accelerating novice engineers’ learning curves while amplifying expert productivity. The real test will be execution at scale, but the early results suggest meaningful progress toward AI becoming a more integral part of the semiconductor design process.

Cracking the Code on Storage Efficiency with Solidigm

Explore myths, metrics, and strategies shaping the future of energy-efficient data centers with Solidigm’s Scott Shadley, from smarter drives to sustainability-ready architectures.

Inside Data-Centric Strategy with Equinix and Solidigm

Equinix’s Glenn Dekhayser and Solidigm’s Scott Shadley join TechArena to unpack hybrid multicloud, AI-driven workloads, and what defines a resilient, data-centric data center strategy.

Hyperscalers Weigh In on Immersion Cooling’s Future

As AI drives power demands sky-high, hyperscale leaders share opportunities, obstacles, and the urgent path forward for immersion cooling adoption.

Antillion’s Alistair Bradbrook on Designing Edge Tech That Lasts

Alistair Bradbrook, founder and COO of Antillion, has spent decades wrestling with one central challenge: getting the right information to the right people at the right time—no matter the environment. From early exposure to Phase I clinical trials at his father’s pharmaceutical company to leading edge-AI hardware design, Bradbrook’s career is a study in curiosity, iteration, and purposeful design.

In this conversation, he shares how Antillion approaches technology, leadership, and research/testing at the edge.

Q: You helped found Antillion with a mission to bring cutting-edge performance to the edge. What was the spark that told you this company needed to exist?

A: There wasn’t one epiphany—it was a series of moments over decades. My interest in collecting data at the source started when I was 12 or 13. I worked with my father in his business, which was a pharmaceutical company that did Phase I trials, testing drugs in humans for the first time. The challenge in that business had always been about collecting data—like heart rate, ECG, and all those metrics that are key to understanding the effect of drugs on humans—quickly enough to aggregate them so decisions could be made.

This was ~35 years ago, so the idea of putting high-performance computing at the edge was unimaginable. We were working with the home computers of the day—not exactly capable edge devices. But the challenge was the same as today: collect data close to its source, aggregate it quickly, and empower people to make decisions in real time.

That fascination stayed with me—whether in healthcare, environmental sensing, or defense—and eventually led me to Antillion. I’d grown frustrated with existing products and felt that if no one else was building the kind of capable, usable, and non-intimidating technology I envisioned, then we should do it ourselves.

Q: Antillion’s products blend engineering rigor with industrial design aesthetics. What does “good design” mean to you in the context of edge technology?

A: My industrial design lead and I start pretty much every conversation with a few factors that are really critical. The object we’re building needs to not feel alien. It needs to not feel so brand new that it’s not relatable and people don’t understand how to use it. So, coming up with designs that are not alienating is key.

We also think a lot about portability—not just size, weight, and power (SWaP) in the defense sense, but true portability. Can one person move and operate it easily? Does its form factor match its purpose? We’ve built devices that, in hindsight, didn’t meet that standard—they were too big for their capability or too small to be useful. That balance matters.

We also iterate quickly, moving from sketch to 3D design to a first physical prototype, because some flaws only reveal themselves when you can hold and use the object. Perfection is unattainable, but each release gets closer.

Q: Running a fast-moving company at the frontier of hardware and edge AI is no small feat. What have you learned about leadership in unpredictable environments?

A: My style is collaborative and passion-driven. I’ll never ask someone to do something I wouldn’t do myself. In a ~30-person company, I can stay connected with everyone, but as we grow, that culture will rely on managers carrying it forward.

I want people who have genuine interest in what we’re building—not just a nine-to-five mindset. The best moments are when someone takes an idea I’ve shared, runs with it, and comes back with not only what I asked for, but something I hadn’t even considered. That’s where the magic happens.

Q: Your R&D approach starts with research-first thinking. How does that influence your company culture?

A: I’m naturally inquisitive. Every new idea starts with research—often me digging into the “why” before handing it to engineering or marketing to explore further.

We won’t build something just to compete unless we see a way to make it meaningfully better. If I can’t see that potential, I park the idea until I can. If I do see it, we’ll prototype quickly, test functionality, and only then think about aesthetics and packaging.

This approach encourages the team to think critically, test early, and be comfortable with iteration.

Q: ANTEX (Antillion’s field research activities) tests products in the wild, not just in the lab. Can you share an example where this approach paid off?

A: One example was a small device designed to extend radio range in mountainous terrain. We tested it in the Alps—hiking, throwing it in the snow, getting it damp and cold, using it the way it would actually be used.

It passed the environmental tests, but more importantly, we learned how people actually carry, deploy, and treat the equipment. That kind of insight simply can’t be replicated in a lab.

Q: As AI moves from cloud to edge, how do you see the role of field-deployed systems changing?

A: I’m still not sure how much of the current push for AI at the edge is driven by genuine need versus commercial opportunity. There are clear cases where edge AI is essential—especially when you need faster decisions or want to limit the data sent upstream—but I think the technology may be a little ahead of widespread operational readiness.

The real opportunity isn’t “AI” as a buzzword—it’s making better decisions closer to where data is created, in ways that either empower local operators or optimize system efficiency.

Q: Antillion operates in high-stakes environments where failure isn’t an option. How do you build resilience into your team?

A: We don’t really talk about “failure.” We invest heavily in early-stage research and iteration to reduce the chance of failure, but when it does happen, it’s rarely black and white. We treat it as another step toward solving the problem. Resilience comes from seeing it as a shared responsibility—between our team, our products, and our customers—to mitigate and learn from setbacks.

Q: When people look at Antillion 10 years from now, what do you hope they say?

A: I’d love for one or more of our products to still be in use, still delivering on—and maybe exceeding—their original purpose. Longevity is the ultimate proof of value. If our technology continues to serve customers in ways we couldn’t predict at launch, I’ll consider that a success.

Powering AI: Solidigm’s Vision for the Future of Storage

Industry leader Scott Shadley reveals how Solidigm’s innovations in SSDs, partnerships, and architecture are reshaping data centers to meet the rising demands of AI, edge, and enterprise workloads.

MLPerf Automotive v0.5: A New Benchmark for AI in Vehicles

As AI becomes more tightly integrated into applications such as robotics, manufacturing automation, and autonomous vehicles, the need for industry-specific performance benchmarks becomes increasingly important. Today, MLCommons announced it is rising to the challenge of vertically oriented benchmarking with the release of MLPerf Automotive v0.5.

This new benchmark suite provides a trove of data for the automotive industry as its members evaluate AI systems destined for safety-critical vehicle applications. The release establishes the first standardized performance baseline for automotive AI workloads, which will help procurement decision makers in the automotive supply chain.

Cross-Industry Collaboration Drives Innovation

The benchmark emerged from a collaboration between MLCommons and the Autonomous Vehicle Compute Consortium (AVCC). It brings together technical expertise from organizations spanning the AI and automotive manufacturing ecosystems, including Ambarella, Arm, Bosch, C-Tuning Foundation, CeCaS, Cognata, Motional, NVIDIA, Qualcomm, Red Hat, Samsung, Siemens EDA, UC Davis, and ZF Group.

This collaborative approach reflects the complexity of modern automotive AI systems, which must integrate everything from silicon-level optimizations to safety-critical software stacks. The benchmark addresses this reality by measuring complete system performance rather than isolated component capabilities.

“As vehicles become increasingly intelligent through AI integration, every millisecond counts when it comes to safety,” said Kasper Mecklenburg, Automotive Working Group co-chair and principal autonomous driving solution engineer, Automotive Business, Arm. “That’s why latency and determinism are paramount for automotive systems, and why public, transparent benchmarks are crucial in providing Tier 1s and OEMs with the guidance they need to ensure AI-defined vehicles are truly up to the task.”

Addressing Real-World Automotive Demands

MLPerf Automotive v0.5 introduces three core performance tests: 2D object recognition, 2D segmentation, and 3D object recognition. The tests use high-resolution, 8-megapixel imagery that reflects real-world camera systems.

“Many of the key scenarios for AI in automotive environments relate to safety, both inside and outside of a car or truck,” said James Goel, Automotive Working Group co-chair. “AI systems can train on 2-D images to be able to detect objects in a car’s blind spot or to implement adaptive cruise control….In addition, 3-D imagery is critical for training and testing collision avoidance systems, whether assisting a human driver or as part of a fully automated vehicle.”

The benchmark implements two distinct measurement scenarios designed for automotive contexts. The “single stream” scenario measures raw performance and throughput for applications like highway vehicle tracking. The “constant stream” scenario addresses mission-critical functions where AI systems must process data at fixed intervals, such as collision detection systems.
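
The difference between the two scenarios can be made concrete with a small simulation. This is a sketch under stated assumptions, not the official MLCommons rules or tooling: the function names are hypothetical, per-query processing times are supplied as inputs rather than measured, and a query is treated as "missed" if it finishes more than one interval after it arrived.

```python
from typing import List, Tuple


def single_stream(service_times_ms: List[float]) -> Tuple[float, float]:
    """Back-to-back queries: return (mean latency in ms, throughput in queries/s)."""
    total_ms = sum(service_times_ms)
    mean_ms = total_ms / len(service_times_ms)
    return mean_ms, 1000.0 * len(service_times_ms) / total_ms


def constant_stream_misses(service_times_ms: List[float], interval_ms: float) -> int:
    """Queries arrive every interval_ms and are processed in arrival order.

    Counts queries that finish more than interval_ms after they arrived
    (an assumed deadline, chosen for illustration).
    """
    free_at = 0.0  # time at which the system finishes its current work
    misses = 0
    for i, service in enumerate(service_times_ms):
        arrival = i * interval_ms
        start = max(arrival, free_at)  # wait if the previous query is still running
        free_at = start + service
        if free_at - arrival > interval_ms:
            misses += 1
    return misses


times = [20.0, 20.0, 50.0, 20.0]          # hypothetical per-frame processing times
print(single_stream(times))                # mean latency looks comfortable
print(constant_stream_misses(times, 33.0)) # but one slow frame delays the next too
```

With these made-up numbers the mean latency (27.5 ms) is below the 33 ms frame interval, yet two frames miss their deadline, because the one slow frame also delays the frame queued behind it. That is the kind of behavior a fixed-interval scenario exposes and an average hides.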

Setting the Foundation for Industry Evolution

The initial submission round included entries from NVIDIA and GATEOverflow, establishing baseline performance data for development systems (evaluation systems not inside a production vehicle) across the closed and open benchmarking divisions. The closed division enforces strict rules to enable direct apples-to-apples comparisons between systems. The open division allows more flexibility in implementation approaches, showcasing cutting-edge techniques.

The benchmark’s impact extends beyond simple performance comparison. By standardizing measurement approaches, it promises to streamline the notoriously complex automotive procurement process, in which original equipment manufacturers (OEMs) have traditionally had to compare many suppliers with little standardization to guide them.

The TechArena Take

The race to implement AI in automotive just shifted into a new gear. MLPerf Automotive v0.5 creates the first neutral ground with transparent, safety-focused metrics that matter to vehicle manufacturers. Now there’s a common measuring stick, with results that can drive real procurement decisions across the global automotive market.

For OEMs, this benchmark suite eliminates the guesswork from multi-million-dollar platform decisions. When choosing between competing AI systems for next-generation vehicles, they finally have standardized, reproducible data to base their decisions on.

We expect the existence of these standardized benchmarks to accelerate automotive AI innovation cycles. When performance gaps become visible through standardized benchmarks, engineering teams move faster to close them. The result: better, safer AI systems reaching production vehicles sooner.

Inside Entertainment’s Storage Revolution with Dell Technologies

In highly collaborative industries like media and entertainment, time isn’t just money; it’s opportunity. Giving your animators, designers, and visual effects artists more time means they have more space to coordinate and develop better creative outcomes. And when you have hundreds of collaborators, saving each one just a few minutes every hour can dramatically increase the amount of time spent on creative endeavors instead of, for example, waiting for software to load.

I recently had the opportunity to explore how storage innovation is enhancing collaborative workflows in the media and entertainment industry with Alex Timbs, Senior Business Development Manager of Media and Entertainment at Dell Technologies, and Scott Shadley, Leadership Marketing Director at Solidigm. During our Data Insights episode, it became clear that changes in content production workflows from pre-production to final edits are causing a fundamental shift in how storage supports content creation, moving flash storage from “nice to have” to essential for modern production pipelines.

Alex brought a unique perspective to our conversation, having spent 15 and a half years at Animal Logic (now Netflix Animation) before joining Dell. His experience as the company scaled from 80 to over 1,000 people globally provided compelling real-world context for understanding storage evolution in creative environments.

Alex saw firsthand the “serendipitous performance improvements” that emerge when organizations transition to flash storage: saved minutes add up to hours of freed-up creative time, with gains that go far beyond what traditional metrics might predict. At Dell, he has worked with customers who achieved the same. He cited a recent Dell film studio customer who achieved 100x performance improvements: not 100% gains, but workflows that are literally 100 times faster.

The need for faster storage has recently been accelerated by AI and real-time workloads, which lean heavily on the video random access memory (VRAM) of graphics processing units (GPUs). Where 24GB of VRAM used to be sufficient, today’s workloads often demand 96GB or more. To keep these GPUs fed, VRAM must be filled and flushed at extreme speeds, making high-performance flash storage no longer a luxury but a necessity.

Scott emphasized how storage has transformed from an afterthought to a critical performance enabler. The concurrent access patterns required by modern workflows—where multiple users need simultaneous access to large files alongside their associated metadata—can only be efficiently handled by flash technology. Existing spinning HDD storage simply cannot deliver the random access performance required for today’s collaborative, high-resolution content creation environments.

Dell’s AI Factory serves as a robust foundation for media and entertainment organizations striving to lead amid surging data growth, new content formats, and adoption of AI-powered workflows. The platform uniquely combines validated, full-stack solutions, enabling companies to start small and scale incrementally, directly addressing the sector’s dual mandates of technological advancement and financial discipline.

At its core, Dell AI Factory leverages the PowerScale family: from the cost-effective F210, optimized for studio or departmental use, to the high-density, high-performance F910 designed for the most demanding enterprise-scale operations. This architecture empowers customers to only pay for what they need today, with the confidence they can scale both performance and capacity linearly as their needs evolve, eliminating the risks of overprovisioning or stranded investment.

The result is a unified platform that streamlines collaborative workflows (including editing, visual effects, and broadcast), consolidates data silos, and supports both on-premises and multi-cloud deployment, all with high security and efficiency. Multiple industry-leading media organizations already rely on PowerScale for everything from 4K/8K post-production to real-time virtual production and generative AI–driven analytics. Dell’s integrated data reduction, metadata solutions, and cyber protection further drive down operational costs, while the modular “grow as you go” model enables ongoing financial prudence. This makes the AI Factory a trusted partner: future-ready, validated by top global brands, backed by deep ISV partnerships, and proven to accelerate creative delivery while protecting the bottom line.

The edge computing dimension adds another layer of complexity and opportunity. A modern film production might have 10 cameras that are capable of capturing resolutions up to 17K, and the crew will want to start working with that immediately. Alex described in-camera visual effects (ICVFX) scenarios where directors give real-time creative feedback, viewing final-quality visual effects directly on on-set monitors. This surge in edge computing for ICVFX pushes the need for high-performance storage that can operate in demanding production environments, all while delivering the rock-solid reliability that tight shooting schedules require.

Interestingly, Alex compared today’s transformation to the shift from analog film to digital photography. Just as digital cameras delivered instant feedback and removed the high cost of mistakes tied to film processing, modern workflows in content production combine real-time creative feedback with minimal risk. This immediacy allows teams to iterate more often, experiment more boldly, and ultimately achieve stronger creative outcomes by removing traditional bottlenecks.

Solidigm’s collaborative approach resonates strongly with this philosophy. Rather than pushing customers toward the highest-performance solutions regardless of need, Scott described how their solutions lab and upcoming AI lab allow customers to test workloads before making commitments. This “try-before-you-buy” model helps organizations right-size their storage investments while ensuring they can achieve their performance objectives.

Looking ahead, both experts see storage demands continuing to accelerate. Organizations working in 4K today need to prepare for native 8K workflows tomorrow, requiring storage architectures that can scale both performance and capacity over multi-year timeframes.

The TechArena Take

The convergence of AI, real-time workflows, and edge computing is fundamentally reshaping storage requirements across industries, with media and entertainment serving as the proving ground for technologies that will eventually transform other verticals. As Alex noted, the future belongs to organizations that can make the most informed real-time decisions possible, and that capability fundamentally depends on having the right storage foundation in place. Dell and Solidigm’s partnership demonstrates how thoughtful collaboration can deliver solutions that scale from individual creators to global production companies.

For more insights on Dell’s storage solutions for media and entertainment, visit their website www.delltechnologies.com/powerscale or connect with Alex Timbs on LinkedIn. Learn more about Solidigm’s AI-focused storage solutions at solidigm.com/ai or reach out via LinkedIn to Scott Shadley.

Optum’s AI Health Care Vision: Privacy-First Innovation

The use of AI in health care promises a remarkable transformation. For an industry facing chronic staffing shortages against increasing demand, the potential for always-on support for care providers and an ability to move toward proactive, predictive care systems would literally save lives. My recent discussion with Dr. Rohith Vangalla, lead software engineer at Optum, revealed how AI has the potential to reshape everything from infrastructure architecture to clinical workflows, and why privacy-first design has become the cornerstone of scalable health care AI.

During our conversation ahead of the upcoming AI Infrastructure Summit, Rohith shared insights from his unique background, which includes backend development, aviation (he’s also a licensed helicopter pilot), and academic research. These diverse experiences have shaped his perspective that AI must focus on creating tools that make health care “smarter, faster, and more human-centric.”

The regulatory landscape in health care presents unique challenges that many industries don’t face. As Rohith emphasized, “A bad model doesn’t just mean poor performance. It literally costs lives.” Rather than viewing regulations as obstacles, he sees them as essential safety rails that prevent innovation from going off track. The real danger, he argued, lies in under-regulation that could allow biased or opaque models into clinical care, leading to misdiagnosis and eroding trust in health care systems entirely.

With trust acting as a crucial cornerstone in health care AI delivery, privacy-first architecture has emerged as an essential element to new solutions. Rohith highlighted how federated learning enables hospitals and rural clinics to train shared models without moving patient data off their servers, maintaining local data control while harnessing collective intelligence. When combined with zero-trust frameworks that verify every access request, and confidential computing that keeps data encrypted even during processing, these technologies create infrastructure that doesn’t sacrifice privacy for performance.
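
To illustrate the federated learning idea described above, here is a minimal sketch of federated averaging on a toy linear model. It is not Optum's system: the model, the function names, the learning rate, and the data are all hypothetical, and real deployments add protections such as encrypted aggregation that are omitted here. The point is only that each site sends model weights to the coordinator, never its records.

```python
from typing import List, Tuple

Weights = Tuple[float, float]       # (slope, intercept) of y = w * x + b
Records = List[Tuple[float, float]]  # private (x, y) pairs held by one site


def local_update(weights: Weights, data: Records,
                 lr: float = 0.1, epochs: int = 20) -> Weights:
    """Run gradient descent on one site's private data; only weights are returned."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def federated_average(updates: List[Weights], sizes: List[int]) -> Weights:
    """Combine site updates, weighting each by how many records it trained on."""
    total = sum(sizes)
    w = sum(u[0] * n for u, n in zip(updates, sizes)) / total
    b = sum(u[1] * n for u, n in zip(updates, sizes)) / total
    return w, b


def train(sites: List[Records], rounds: int = 50) -> Weights:
    """Coordinator loop: broadcast weights, collect local updates, average them."""
    global_weights: Weights = (0.0, 0.0)
    for _ in range(rounds):
        updates = [local_update(global_weights, data) for data in sites]
        global_weights = federated_average(updates, [len(d) for d in sites])
    return global_weights


# Two hypothetical sites whose data follows y = 2x + 1.
hospital = [(2.0, 5.0), (3.0, 7.0), (1.0, 3.0)]
clinic = [(0.0, 1.0), (1.0, 3.0)]
print(train([hospital, clinic]))  # approaches (2.0, 1.0)
```

In this sketch the `train` function never touches `hospital` or `clinic` directly except to pass each list to its own `local_update` call, which stands in for computation running on that site's servers.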

The conversation revealed how these architectural strategies are opening doors for international collaboration that wouldn’t have been possible otherwise. Rather than slowing down innovation, privacy-first design is actually accelerating it by enabling secure data sharing across previously isolated health care systems.

Real-world impact is already visible across multiple health care domains. AI can highlight tiny anomalies on X-rays that experienced radiologists might miss, reducing diagnostic errors and accelerating treatment. Voice-enabled documentation frees physicians to spend more time connecting with patients. And on the operational side, AI-powered call centers could route patients to appropriate specialists in seconds, eliminating anxiety-inducing hold times.

Looking ahead, Rohith identified the most exciting frontier as the shift from reactive to proactive care. Predictive analytics can now identify early risk factors for conditions like heart failure or sepsis before symptoms appear, enabling clinicians to intervene before patients require emergency care. This capability becomes even more powerful when considering underserved areas. A rural clinic without a cardiologist, for example, could leverage AI-powered tools to support general practitioners in making critical diagnoses.

The infrastructure evolution supporting these advances focuses on efficiency and accessibility rather than raw computational power. Technologies for fine-tuning large language models (LLMs) enable organizations with limited resources to customize powerful models without enormous infrastructure investments. Rohith shared a compelling example from a university hackathon where students created a lightweight AI system that could run directly in ambulances, helping paramedics triage patients en route based on vitals and symptoms without requiring cloud connectivity.

At his upcoming AI Infrastructure Summit presentation, Rohith will address the critical balance between privacy and performance when choosing cloud, on-premises, or hybrid deployments. While cloud offerings provide speed, scalability, and cost optimization, on-premises solutions offer greater control and data residency, which are crucial factors in health care. Hybrid architectures often hit the sweet spot by keeping sensitive data local while offloading heavy compute workloads to the cloud.

The TechArena Take

Rohith’s vision for health care AI represents an industry focused on practical, ethical solutions that prioritize patient outcomes. His emphasis on privacy-first architecture, responsible AI development, and proactive care models demonstrates how thoughtful engineering combined with regulatory compliance can drive meaningful innovation.

The future Rohith envisions, where AI serves as a non-judgmental health and wellness companion working in the background to ensure people feel seen, supported, and safe, reflects the true potential of AI in health care. It’s not about the technology itself, but about how that technology can bridge gaps in access, improve care quality, and ultimately save lives through early intervention and predictive insights.

Connect with Rohith on LinkedIn to continue the conversation about AI infrastructure in health care. Learn more about Optum’s AI initiatives at the Optum Marketplace, where you can find the latest articles and trials on health care AI innovation.

HPE Discover: SSDs Are Rewriting Enterprise Storage

Surveying 250 IT pros, we found 29% already run SSDs beyond performance tiers, 81% would migrate when TCO wins, and storage innovation is a top lever to free power and space across the data center.

‘Open to Work’: The Quickening of AI & the Future of Jobs

From Intel’s layoffs to stealth automation, AI is reshaping work at a pace that outstrips human adaptation—driving record stress, uneven gains, and a scramble to reskill before the next downturn hits.

Dell’s AI Data Innovation: When Storage Takes Command

In the not-so-distant past, data center storage was somewhat of an afterthought. You needed a place to gather data; you needed it to be reliable; and you needed it to be economical. And that’s pretty much where the conversation ended. Now in the era of AI workloads, storage is taking center stage for the critical role it plays in data activation. Having the right storage solutions in the right place provides the flexibility, efficiency, and security to feed AI at scale.

I recently had the opportunity to discuss this transformation with Saif Aly, senior product marketing manager at Dell, and Scott Shadley, leadership marketing director at Solidigm, and to explore how enterprise storage requirements are evolving in response to AI-driven workloads and data-intensive applications. During our TechArena Data Insights episode, it became clear that storage has evolved into the critical foundation enabling AI success.

The AI workload revolution has created unprecedented demands on storage infrastructure. As Saif explained, these workloads require sustained throughput, low latency, and massive scale simultaneously. The challenge extends beyond simple performance. Enterprises face data fragmentation across edge, core, and cloud environments, creating operational complexity that can lead to vendor lock-in and underutilized graphics processing unit (GPU) resources.

Dell’s response centers on their AI Data Platform, built on the principle that modern storage must support the entire data lifecycle. The PowerScale platform serves as the foundation, delivering what Saif described as unmatched performance improvements: 220% faster data ingestion and 99% faster data retrieval compared to previous generations. The introduction of MetadataIQ further accelerates search and querying capabilities, directly supporting AI workload requirements.

Scott emphasized how customer conversations have evolved beyond traditional capacity discussions to focus on “time to first data”—how quickly organizations can access information when they need it. In AI application workloads, different data types require varying levels of accessibility and performance characteristics. The challenge lies in understanding what data needs to sit directly adjacent to GPUs versus what can be retrieved from more distant storage tiers.

The discussion revealed how inference workloads, particularly retrieval-augmented generation (RAG) architectures, create unique storage demands. These systems require large datasets to be readily accessible for real-time referencing while simultaneously managing active data processing next to compute resources. Success depends on optimizing the balance between high-performance local storage and efficient data movement from archive locations.

While flash storage dominates high-performance applications, both experts acknowledged that hard disk drives (HDDs) retain value for cold and warm datasets. The key insight: not all data is equal, and successful architectures blend flash-based solid-state drives (SSDs) and HDD storage within unified namespaces to balance performance and cost considerations.

The conversation highlighted remarkable capacity evolution, with Saif recounting his amazement at holding Solidigm’s 122 TB drive, a device containing massive data volumes in a small form factor. This density revolution, progressing from 30 TB to 60 TB to 122 TB drives just in the last year, enables dramatic improvements in rack space efficiency, power consumption, and cooling costs while maintaining the throughput AI workloads demand.
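
To make the density point concrete, here is a rough back-of-the-envelope sketch. The 1 PB target is an assumption chosen purely for illustration, and the calculation uses nominal drive capacities in decimal terabytes while ignoring redundancy, formatting overhead, and spare capacity.

```python
import math

def drives_needed(target_tb: float, drive_tb: float) -> int:
    """Number of drives required to reach a raw capacity target."""
    return math.ceil(target_tb / drive_tb)

# Assumed target for illustration: 1 PB of raw capacity (1 PB = 1,000 TB).
TARGET_TB = 1000

for drive_tb in (30, 60, 122):
    count = drives_needed(TARGET_TB, drive_tb)
    print(f"{drive_tb} TB drives needed for 1 PB raw: {count}")
```

Under these assumptions, the same raw petabyte drops from 34 drives to 17 to 9 across the three generations, which is where the rack space, power, and cooling savings come from.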

Scott connected this capacity evolution to practical customer needs, explaining how optimization now focuses on the right bandwidth, density, and time-to-data characteristics rather than simply maximum speed. As storage capacity per device increases, the focus shifts to infrastructure optimization that delivers customer value through improved total cost of ownership and operational efficiency.

Real-world impact emerged through customer examples Saif shared. Kennedy Miller Mitchell, the studio behind the Mad Max franchise, used PowerScale to enable pre-visualization of entire scenes before filming. That capability allows directors to iterate creatively and make real-time decisions. Subaru leveraged the platform to manage exponentially growing data volumes, handling 1,000 times more files than previously possible and directly improving their AI-driven driver-assistance technology accuracy.

Looking ahead, both experts see storage demands continuing to accelerate, driven by AI’s exponential data growth and evolving workload requirements. As Saif noted, “the data explosion is not going to stop,” with AI both consuming and creating massive amounts of data. The distributed nature of modern computing—spanning edge, core, and cloud environments—requires storage solutions that provide consistent experiences and seamless data mobility across all locations.

The TechArena Take

The convergence of AI workloads, massive data growth, and distributed computing architectures is fundamentally reshaping enterprise storage from a cost center to a strategic enabler. Dell and Solidigm’s partnership demonstrates how thoughtful collaboration can deliver solutions that scale from individual creators to global enterprises while addressing the critical balance between performance, capacity, and cost efficiency. As storage continues to assert its place as a foundation of modern workloads, organizations that invest in flexible, high-performance architectures today will be best positioned to capitalize on tomorrow’s AI-driven opportunities.

For more insights on Dell’s enterprise storage solutions, visit Dell.com/PowerScale or connect with Saif Aly on LinkedIn. Learn more about Solidigm’s AI-focused storage innovations at solidigm.com/AI or reach out via LinkedIn to Scott Shadley.

Lightwave Logic’s Robert Blum on Polymer Optics for AI

Allyson Klein and Robert Blum of Lightwave Logic unpack how electro-optic polymers, paired with silicon photonics, lower power and boost density on the road to AI-fueled 400G-per-lane optics—with a 2027 volume ramp in sight.

TechArena’s ‘In the Arena’ Podcast Wins a Stevie® Award

TechArena’s flagship interview series earned a 2025 Stevie® Award in the International Business Awards® annual contest, recognizing authentic, executive-level conversations on AI, data centers, edge, and sustainability.

The In the Arena podcast took home recognition in the highly competitive “Shows – Technology” category. The win acknowledges a simple idea that has guided the series from day one: put real innovators in the spotlight and make complex technology understandable for decision-makers. It’s a gratifying milestone for a show that has quietly grown into a go-to forum for leaders shaping the infrastructure of the AI era.

Now in its 22nd year, the IBAs are widely regarded as one of the world’s premier business awards programs, drawing thousands of entries from organizations of all sizes and across industries. Winners are selected by the average scores of more than 250 global executives, with this year’s judging taking place from May through July.

In the Arena is hosted by industry veteran Allyson Klein—TechArena’s founder and principal—and produced by the TechArena editorial team. Across three seasons, the show has welcomed executives and founders from companies representing more than $9 trillion in market capitalization—including Microsoft, NVIDIA, Google, and Meta—alongside standout startups pushing the edges of compute, storage, networking, and energy. The conversations are deliberately accessible without sacrificing depth, aiming to surface the real decisions behind AI deployments, data center architectures, and sustainability initiatives.

Judges called out the podcast’s high production quality, editorial clarity, and guest caliber, noting that the series “bridges executive insight with emerging innovation.” Several praised the show’s consistent focus on AI and sustainability, and highlighted its effort to elevate underrepresented voices in tech leadership—an intentional part of the booking strategy since the series’ inception.

For TechArena, the recognition is as much about community as it is about content.

“This recognition celebrates the innovators who’ve delivered insights across our 176 episodes and our audience, which values the conversation as technology accelerates in the era of AI,” Klein said.

Beyond the accolades, the show’s impact shows up in the themes it consistently returns to: the operational realities of AI at scale; the fast-changing power and cooling profiles behind GPU clusters; the role of high-capacity flash and data orchestration in breaking bottlenecks; the promise (and limits) of optical I/O; the emergence of agentic workflows in semiconductor design; and the practical steps organizations can take to reduce environmental impact while improving performance. The result is a library designed for CTOs, architects, and product leaders who need both strategic direction and applied lessons, not just highlight reels.

The win also comes at a moment when enterprise leaders are hungry for grounded dialogue. AI adoption has accelerated, but success still hinges on fundamentals: data placement, latency, reliability, energy, and cost. In the Arena aims to be a steady companion through that transition—candid, technical when it matters, and always human.

Looking ahead, the team is lining up episodes leading into upcoming conferences including Yotta, AI Infra, OCP Global and SC’25.

To everyone who’s listened, shared an episode, or joined us behind the mic: thank you. And to the judges—thank you for recognizing a show built on curiosity, clarity, and respect for the people doing the work.

Privacy-First AI in Healthcare: Optum’s Dr. Rohith Vangalla

From federated learning and zero-trust to confidential computing, Dr. Rohith Vangalla shares a practitioner’s playbook for explainable, scalable AI that moves healthcare from reactive to proactive.

Permission Debuts a Digital Mini-Me for the Agentic Era

For every headline celebrating agentic AI’s potential to revolutionize business, there’s a data privacy lawsuit quietly working its way through the courts—a reminder that innovation has outpaced consent infrastructure. This week, Permission announced Permission Agent, a system designed to broker high-quality human data with verifiable consent and contributor rewards.

“AI is only as good as the data it’s trained on, and the best data comes directly from people—with their permission,” said Charlie Silver, CEO of Permission. “Permission Agent is the missing bridge between individuals and AI systems, enabling direct, compliant, and mutually beneficial data exchange at scale.”

How Permission Agent Works

Permission Agent operates as a persistent, identity-tied “digital mini-me.” It collects only user-approved signals (e.g., intent, preferences, context) and attaches usage rights and consent metadata to each record. Buyers receive structured datasets with provenance and audit trails so they can prove lawful basis and honor revocation. Contributors are compensated in $ASK, which Permission has made omnichain via LayerZero’s OFT standard, allowing movement across supported chains without wrapped tokens.
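
As a rough illustration of what attaching usage rights and consent metadata to each record could look like, consider the minimal sketch below. It is not Permission's schema or API: the `ConsentedRecord` class, its field names, and the example use labels are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import FrozenSet, Optional

@dataclass
class ConsentedRecord:
    """Hypothetical record shape: a signal plus the consent that governs it."""
    contributor_id: str
    signal_type: str              # e.g. "intent", "preferences", "context"
    value: str
    allowed_uses: FrozenSet[str]  # usage rights granted by the contributor
    collected_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )
    revoked_at: Optional[datetime] = None

    def revoke(self) -> None:
        """Mark consent as withdrawn from this moment on."""
        self.revoked_at = datetime.now(timezone.utc)

    def permits(self, use: str) -> bool:
        """A use is allowed only if it was granted and consent is not revoked."""
        return self.revoked_at is None and use in self.allowed_uses

record = ConsentedRecord(
    contributor_id="contributor-001",
    signal_type="preferences",
    value="prefers electric vehicles",
    allowed_uses=frozenset({"personalization"}),
)
print(record.permits("personalization"))  # granted use
print(record.permits("model_training"))   # never granted
record.revoke()
print(record.permits("personalization"))  # revoked, so no longer allowed
```

The point of the shape is that the consent check travels with the data, so a buyer can evaluate it at the moment of use rather than only at the moment of purchase.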

Why This Matters Now

Enterprises racing into agentic architectures are discovering their identity and governance foundations weren’t built for autonomous actors. Without machine-readable consent and revocation, agents can overstep policies and contracts, raising legal exposure as author and media cases proceed and as regulations (from GDPR to the EU AI Act) tighten expectations for transparency and consent.

Market Context

Permission isn’t the first to reward data contributors, but it’s one of the few aiming squarely at consented human signals for AI rather than a single vertical. Where Brave compensates attention and projects like Hivemapper/DIMO target maps and vehicle telematics, Permission’s pitch is a portable, auditable consent layer and marketplace that personalization teams and AI builders can safely use.

Permission Agent is in early access for enterprises and individual contributors. AI organizations can request sample datasets; consumers can join the waitlist.

A Buyer’s Checklist for Evaluating Consent-First AI Data Platforms

For enterprises considering purchasing permissioned data for AI, here are some due diligence suggestions:

- Start with provenance and consent semantics. Require a documented consent-scope model tied to specific data elements and enforceable purpose limitation, plus a true right to withdraw at the row, feature, and embedding level—complete with deletion that propagates to vector indexes and models.

- Treat agent identity and guardrails as a first-class security domain: autonomous agents should have unique credentials, least-privilege, time-bounded scopes, step-up authentication for sensitive actions, and human-in-the-loop approvals.

- For audit and forensics, require an immutable chain of custody that captures when and where each datum was collected, the consent state at capture and at use, and every downstream touchpoint, with machine-readable deletion receipts confirming propagation to feature stores, embedding indexes, caches, and backups.

- Scrutinize reward mechanics and compliance: how contributor rewards are calculated and distributed across chains; how custody and transfers are secured; and how KYC/AML, tax reporting (e.g., 1099-DA in the U.S.), consumer-protection, dispute-resolution, and contributor communications are handled.
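
One common way to approximate the tamper-evident chain of custody described in the checklist above is a hash-chained log, in which each entry commits to the one before it. The sketch below is a simplified illustration with hypothetical event fields, not a description of any vendor's implementation; a production system would also need signing, secure storage, and trusted timestamps.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry

def _entry_hash(prev_hash: str, event: Dict[str, Any]) -> str:
    """Hash the event together with the previous entry's hash."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append_event(chain: List[Dict[str, Any]], event: Dict[str, Any]) -> None:
    """Append an event, linking it to the hash of the prior entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    chain.append(
        {"prev": prev_hash, "event": event, "hash": _entry_hash(prev_hash, event)}
    )

def verify(chain: List[Dict[str, Any]]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True

log: List[Dict[str, Any]] = []
append_event(log, {"action": "collected", "record": "r-1", "consent": "granted"})
append_event(log, {"action": "used", "record": "r-1", "purpose": "personalization"})
append_event(log, {"action": "deleted", "record": "r-1", "target": "embedding index"})
print(verify(log))  # intact chain

log[0]["event"]["consent"] = "revoked"  # simulate after-the-fact tampering
print(verify(log))  # tampering is detected
```

A deletion receipt in this model is simply another chained event, which is what lets an auditor confirm both that deletion happened and that the record of it was not rewritten later.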

The TechArena Take

Agentic AI doesn’t scale without machine-consumable consent. Permission’s move is notable for putting the individual at the center of a permissioned data supply chain aimed at training and personalization—and for bundling compensation and auditability from the start.

Two execution risks will determine impact:

- Adoption density: Quality requires sustained, diverse contributors and buyer demand, plus SDKs that make capture, revocation, and downstream enforcement trivial across RAG, fine-tuning, and personalization stacks.

- Enforceable revocation: Recording consent isn’t enough. Buyers will expect deletions to propagate quickly to feature stores, embeddings, caches, and logs.

Bottom line: If Permission proves dataset quality, scalable revocation, and low-friction integration, it could become the default vendor for “human signals with receipts” in agentic pipelines—good for builders, and overdue for the people whose data fuels the system.

Want to learn more? Check out www.permission.ai/.

Verizon Business’ Nikhil Tyagi on Edge AI Innovation

Verizon Business’ Nikhil Tyagi shares insights on scaling AI at the edge—from small language models and multimodal experiences to infrastructure challenges and adaptive inference. Want to learn more about the AI Infra Summit and see Nikhil Tyagi live? Find out more here.

Google’s Vision for Data Center Storage with OCP L.O.C.K.

The data center industry faces an ongoing challenge: how to securely reuse storage devices when decommissioning them without compromising data integrity. My recent Great Debate with Amber Huffman and Jeff Andersen from Google revealed not just the scope of this challenge, but how the Open Compute Project’s Layered Open-Source Cryptographic Key-management (OCP L.O.C.K.) initiative could reshape how the industry approaches storage security and sustainability.

During our discussion, Amber and Jeff painted a picture of an industry with a dilemma. Everyone would like to get longer lives out of storage devices. But to date, there hasn’t been a solution that still meets the top priority of protecting users’ data. As Amber explained, in the second-hand market, even encrypted drives face potential threats from nation-state actors with substantial resources, and evolving technology could eventually break older encryption algorithms.

In current practice, organizations either physically destroy drives or perform time-consuming multi-pass overwrites. Destruction, while secure, creates significant operational inefficiencies and environmental waste; overwrites take a long time and are failure-prone.

OCP L.O.C.K., currently at version 0.85 of its specification and available for review, offers a new alternative. It is a comprehensive project to deliver an open implementation at CHIPS Alliance that provides encryption key management services to storage drives and hosts. It builds on the established Caliptra open-source hardware root of trust, also implemented at CHIPS Alliance, and will be integrated into Caliptra 2.1.

OCP L.O.C.K. improves on traditional data security methods in several ways. At its core, OCP L.O.C.K. ensures that only trusted, verified components can access the encryption keys that protect data on drives. The system creates multiple layers of key management, so when a drive is provisioned with OCP L.O.C.K., cloud service providers can trust that data remains inaccessible without the proper access credentials. And when that drive needs to be decommissioned, OCP L.O.C.K. attests that the process has been completed successfully.
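
The general idea behind layered keys and cryptographic erase can be shown with a toy model. To be clear, the sketch below is not the OCP L.O.C.K. design or the Caliptra API: the `ToyDrive` class, its methods, and the HMAC-based derivation are assumptions made for illustration only. It shows a media key that depends on both a secret held inside the drive and a credential supplied by the host, so that destroying the drive-side secret leaves the data key unrecoverable.

```python
import hashlib
import hmac
import secrets
from typing import Optional

class ToyDrive:
    """Toy model only: a media key derived from two independent secrets."""

    def __init__(self) -> None:
        # Secret that never leaves the drive (hypothetical stand-in for
        # key material protected by a hardware root of trust).
        self._drive_secret: Optional[bytes] = secrets.token_bytes(32)

    def media_key(self, host_access_key: bytes) -> bytes:
        """Derive the data-encryption key from the drive secret and host key."""
        if self._drive_secret is None:
            raise PermissionError("drive secret erased; media key cannot be derived")
        return hmac.new(self._drive_secret, host_access_key, hashlib.sha256).digest()

    def decommission(self) -> bool:
        """Erase the drive secret and report whether the erase took effect."""
        self._drive_secret = None
        return self._drive_secret is None

drive = ToyDrive()
host_key = secrets.token_bytes(32)

key_before = drive.media_key(host_key)
print(key_before == drive.media_key(host_key))  # same inputs, same key

print(drive.decommission())  # erase reported as complete
try:
    drive.media_key(host_key)
except PermissionError as err:
    print(err)  # even the correct host key no longer unlocks the data
```

In this simplified picture, decommissioning does not require overwriting every block: once one layer of key material is gone, the ciphertext left on the media is no longer decryptable, and the drive can report that the erase step completed.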