Ai4 2025: Agents, RAG, and the Enterprise AI Reality Check

The Ai4 conference kicked off today at the MGM Grand in Las Vegas, drawing an expected 8,000+ attendees for three days of keynotes, 600+ speakers across 50 tracks, and a 250-plus-vendor expo aimed squarely at applied, enterprise AI. Organizers touted a sharpened focus on agentic systems, governance, and real-world deployments.

Opening day set the tone with two back-to-back arena sessions: a fireside chat on AI’s impact on classrooms and childhood with AFT president Randi Weingarten, followed by Tengyu Ma, Chief AI Scientist at MongoDB, on “RAG in 2025: State of the Art and the Road Forward.”

What’s new this year

Ai4’s program stretches across 19 stages and 50 themed tracks, including Generative AI, Agentic Systems, AI Policy, a reimagined AI Research Summit, and a “Beginner’s Summit” to onboard first-timers. Exhibit-hall action will formally begin Tuesday morning and run alongside daily receptions.

Tuesday’s opening main stage will feature Shirin Ghaffary of Bloomberg News moderating a fireside chat with Geoffrey Hinton, widely known as the “godfather of AI.” The talk is titled “AI, Ethics, and the Future of Humanity.” The day will also highlight a slate of sector tracks, live demos across the show floor, and a social-impact spotlight with Colossal Biosciences’ Ben Lamm discussing de-extinction and AI in a fireside format.

Wednesday’s keynotes will lean into the future of perception and agents. Fei-Fei Li will dive into world models, spatial intelligence, and human-centered AI, followed by a Cisco fireside where President & CPO Jeetu Patel will explore what it will take to safely catalyze an “agentic AI revolution.”

For buyers: where the rubber meets the road

If you’re attending Ai4 to evaluate products, the expo floor is positioned as more than a showcase. Expect hands-on demos spanning AI applications & agents, data platforms, security/governance, and cloud infrastructure—plus brand activations.

Signals to watch

With launches and briefings from governance vendors and platform players, expect concrete approaches to policy, observability, and controls for autonomous workflows.

Ma’s keynote framed how retrieval augments, rather than competes with, fine-tuning and long-context—important for enterprises balancing cost, latency, and source-of-truth requirements.

With Fei-Fei Li headlining, spatial intelligence and world modeling will continue to pick up steam as the path beyond text-only systems—especially for robotics and simulation-heavy industries.

The TechArena Take

Ai4’s program reads like a referendum on agentic AI at enterprise scale. Three practical threads stand out for IT and cloud architects:

- Control planes for agents will define winners. The next six months won’t be about the flashiest copilots; they’ll be about who ships the cleanest hooks for policy, identity, data-scope, and human-in-the-loop stops across multi-agent workflows. If you can’t prove guardrails, you won’t get production sign-off.

- Data gravity beats model gravity. Ma’s framing of RAG vs. fine-tuning vs. long-context mirrors what we’re hearing from large shops: push reasoning to the edge of your governed data layer; keep PII and contracts in-bounds; and reserve heavyweight fine-tunes for workloads that truly demand them.

- Infra is the quiet story. As agents expand from chat to autonomous task execution, winning stacks will hinge on event-driven integration, vector/search performance, observability, and low-latency networking—plus storage patterns that keep embeddings and history auditable.

Storage Is the Catalyst Revolutionizing Enterprise AI

The enterprise AI landscape is undergoing a fundamental transformation. While organizations have focused heavily on graphics processing unit (GPU) compute power and model sophistication, a critical infrastructure component has emerged as the new performance differentiator: storage. The Supermicro Open Storage Summit, running from August 12 to 28 with online sessions from leading solutions providers, promises to reveal how innovative storage strategies are delivering breakthrough performance improvements that could reshape your AI deployment economics.

The Hidden Performance Multiplier in AI Infrastructure

As organizations scale from AI experimentation to production deployment, they’re discovering that inference workloads demand different storage characteristics than training pipelines. The data tells a compelling story: enterprises deploying solid state drive (SSD) storage solutions are seeing 10x to 20x throughput improvements, 4,000x improvements in input/output operations per second (IOPS) scaling, and up to 40% total cost of ownership (TCO) reductions compared with traditional storage solutions.

These aren’t theoretical gains. Real-world implementations for retrieval-augmented generation (RAG) workloads have demonstrated that storage optimization with SSDs can deliver 70% increases in queries per second while simultaneously reducing memory footprint by 50%. For enterprises struggling with the economics of AI deployment, these performance multipliers represent an opportunity to maximize return on investment.

Two Critical Sessions You Can’t Afford to Miss

The Supermicro Open Storage Summit expands on these opportunities with two must-attend sessions that tackle the most pressing storage considerations facing enterprise AI deployments today.

Storage to Enable Inference at Scale (August 19, 10:00 AM PT) brings together industry leaders from Solidigm, Supermicro, NVIDIA, Cloudian, and Hammerspace to explore how new storage protocols and distributed inference frameworks are enabling large-scale inference processing. This session will reveal how organizations are moving beyond traditional storage approaches to deploy validated infrastructure optimized for GPUs that unlocks real-time performance at scale.

Enterprise AI Using RAG (August 27, 10:00 AM PT) dives deep into RAG, one of the most critical enterprise AI use cases. With experts from Solidigm, Supermicro, NVIDIA, VAST Data, Graid Technology, and Voltage Park, this session addresses how enterprises can operationalize generative AI securely and efficiently while maintaining proximity to their most valuable data assets.

The SSD Revolution: Beyond Traditional Storage Thinking

One of the most compelling insights emerging from enterprise AI deployments challenges conventional storage wisdom. Solidigm’s recent breakthrough work, which will be discussed in the upcoming sessions, demonstrates that strategically offloading data from memory to high-performance SSDs doesn’t just reduce costs: it actually improves performance in many scenarios.

The company’s approach involves moving model weights and RAG database components from expensive dynamic random-access memory (DRAM) to optimized SSDs, achieving better performance at lower cost. In one demonstration involving a 100-million-vector dataset, this approach delivered 57% less DRAM usage while maintaining or even improving query performance. The economic implications are significant, because enterprises can run complex models on GPUs that would otherwise lack sufficient onboard memory.
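To make the idea of keeping vector data on storage rather than in memory concrete, here is a minimal sketch. It is not Solidigm’s method, which the text above does not describe in detail; it is a plain linear scan over a flat file of 32-bit floats, reading a few vectors at a time so that only one small chunk is ever resident in memory. The file layout, the function names, and the tiny 4-dimensional vectors are all illustrative assumptions. Production systems would use an approximate-nearest-neighbor index rather than a full scan.

```python
import array
import os
import tempfile

DIM = 4  # illustrative vector width (assumption)
FLOAT_BYTES = array.array("f").itemsize  # size of one 32-bit float


def write_vectors(path, vectors):
    """Store vectors back to back as 32-bit floats in a flat file."""
    with open(path, "wb") as f:
        for vec in vectors:
            array.array("f", vec).tofile(f)


def best_match(path, query, dim=DIM, chunk_vectors=2):
    """Scan on-disk vectors chunk by chunk; return (index, dot-product score)."""
    best_idx, best_score = -1, float("-inf")
    chunk_bytes = chunk_vectors * dim * FLOAT_BYTES
    idx = 0
    with open(path, "rb") as f:
        while True:
            raw = f.read(chunk_bytes)  # only this chunk is held in memory
            if not raw:
                break
            chunk = array.array("f")
            chunk.frombytes(raw)
            for start in range(0, len(chunk), dim):
                vec = chunk[start:start + dim]
                score = sum(q * v for q, v in zip(query, vec))
                if score > best_score:
                    best_idx, best_score = idx, score
                idx += 1
    return best_idx, best_score


# Hypothetical toy dataset standing in for a real embedding store.
vectors = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.5, 0.5, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
]

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "vectors.f32")
    write_vectors(path, vectors)
    match = best_match(path, [0.0, 0.9, 0.1, 0.0])

print(match)
```

The trade-off this illustrates is the one the paragraph describes: memory use is bounded by the chunk size rather than the dataset size, and query speed then depends on how fast the storage device can stream or randomly read the vectors.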

From Data Center Footprint to Power Efficiency: The Complete TCO Story

The storage optimization story extends far beyond raw performance metrics. In the upcoming sessions, Solidigm will also discuss how cutting-edge storage solutions are demonstrating dramatic improvements in TCO across the entire infrastructure stack.

Take a practical example: a 50-petabyte dataset deployment with 12 NVIDIA H100 systems. Traditional HDD-based approaches require nine racks consuming 54 kilowatts. Deploy high-density 122TB SSDs, and that footprint shrinks to a single rack, with up to a 90% power reduction and a 50% increase in available GPU footprint.
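The arithmetic behind that example can be checked with a short sketch. It uses only the figures quoted above (50 PB, 122TB drives, nine racks, 54 kW, up to 90% power reduction). Two things are assumptions: decimal units (1 PB = 1,000 TB), and that the 90% reduction applies to the 54 kW baseline. The drive count is a raw figure that ignores redundancy, spares, and formatting overhead.

```python
import math

# Figures quoted in the example above.
DATASET_PB = 50
DRIVE_TB = 122
HDD_RACKS = 9
HDD_POWER_KW = 54.0
MAX_POWER_REDUCTION = 0.90  # "up to 90%"

# Assumption: decimal units, 1 PB = 1,000 TB.
TB_PER_PB = 1000


def drives_needed(dataset_pb, drive_tb):
    """Raw drive count to hold the dataset, ignoring redundancy and overhead."""
    return math.ceil(dataset_pb * TB_PER_PB / drive_tb)


def power_after_reduction(baseline_kw, reduction):
    """Power draw remaining after a fractional reduction from the baseline."""
    return baseline_kw * (1.0 - reduction)


ssd_drives = drives_needed(DATASET_PB, DRIVE_TB)
hdd_kw_per_rack = HDD_POWER_KW / HDD_RACKS
ssd_power_kw = power_after_reduction(HDD_POWER_KW, MAX_POWER_REDUCTION)

print(f"122TB SSDs needed (raw): {ssd_drives}")
print(f"HDD power per rack: {hdd_kw_per_rack:.1f} kW")
print(f"Best-case SSD power: {ssd_power_kw:.1f} kW")
```

Under those assumptions the dataset fits on roughly 410 drives, and the best-case power draw falls from 54 kW to about 5.4 kW.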

These efficiency gains matter more than ever as enterprises grapple with data center space constraints, cooling challenges, and escalating power costs.

The TechArena Take: The Storage Revolution Is Here

Organizations that leverage cutting-edge storage optimization strategies are positioning themselves for sustainable competitive advantage. While competitors struggle with infrastructure costs and performance limitations, early adopters are achieving superior AI outcomes at lower total cost of ownership.

The ability to deploy more sophisticated models, process larger datasets, and deliver faster inference responses directly translates to better customer experiences and operational efficiency.

The window for competitive advantage is narrowing rapidly. As these storage optimization techniques become mainstream, the organizations that implement them first will establish performance and cost advantages that become increasingly difficult for competitors to match.

Register Now

The Supermicro Open Storage Summit provides an opportunity to learn directly from teams of industry leaders who are defining the future of AI infrastructure. With sessions featuring experts representing all layers of the stack, you’ll gain access to the collective expertise of the companies driving AI infrastructure innovation. The summit’s focus on real-world implementations, demonstrated performance improvements, and practical deployment strategies makes it essential viewing for any organization serious about scaling AI effectively.

Don’t let storage bottlenecks limit your AI ambitions. Register below today and discover how strategic storage optimization can transform your enterprise AI performance while dramatically improving your deployment economics.

Storage to Enable Inference at Scale | August 19, 10:00 AM PT

Enterprise AI Using RAG | August 27, 10:00 AM PT

FMS 2025: Honoring Jim Pappas, Driving AI Innovation

I still remember walking through the bustling electronics markets of Tokyo and Hong Kong with Jim Pappas, marveling at the incredible diversity of devices and form factors surrounding us. As my manager early in my Intel career, Jim had a unique way of opening my eyes to the bigger picture—showing how the foundational work we were doing in semiconductor and standards development was sparking creativity and innovation across the globe.

This week, as the Future of Memory and Storage (FMS) conference concluded in Santa Clara, I was thrilled to see Jim receive the event’s most prestigious recognition: the 2025 Lifetime Achievement Award. As someone who witnessed firsthand his passion for the innovation that standards-based technology makes possible, I felt that watching the industry honor his decades of foundational contributions was a full-circle moment.

Jim has had a tremendous impact on the industry throughout the trajectory of his career. Just imagine a world without a USB standard for a second...or without PCI and all its variants. These technologies that Jim and his collaborators helped define have formed a foundation for compute innovation. I was recently at a Tech Field Day event where I listened to the delegates sharing their Jim Pappas stories, how each of them had been impacted by his leadership over the years. One thing I was lucky enough to know is that beyond his technical brilliance, Jim’s approach to management was transformational. For me personally, he enabled me to take risks, provided incredible autonomy, and helped accelerate my career and set me up for success. To FMS: Well done on this recognition. To Jim: thanks for all that you did to propel a young Allyson forward. Your impact informs my work every day.

AI Takes Center Stage at FMS 2025

The conference that honored Jim’s legacy also marked a pivotal moment for the memory and storage industry, as AI dominated the conversations in Santa Clara. In fact, FMS 2025 featured AI in more than 60% of its keynote presentations and expert panel sessions—a clear signal that AI workloads are fundamentally reshaping the entire storage industry.

“Artificial intelligence is no longer just part of the conversation—it is the conversation,” said Tom Coughlin, Conference Chair of FMS. The three-day event brought together industry leaders to explore how innovations in DRAM, NAND, CXL, and computational storage are revolutionizing AI inference and training at scale.

Honoring Three Decades of Standards Leadership

Jim Pappas’s recognition with the Lifetime Achievement Award represents more than individual accomplishment; it celebrates the foundational standards work that enables the entire industry to thrive. His journey began in 1991 with the establishment of the PCI-SIG and the PCI standard, work that later evolved into defining the PCI Express (PCIe) standard that remains foundational to computing platforms today.

After joining Intel in 1994, Jim collaborated with seven companies, including IBM and Microsoft, to create the hugely successful USB standard. His recent contributions include launching SNIA’s Persistent Memory Technology Initiative, chairing the Compute Express Link (CXL) Consortium, driving formation of Universal Chiplet Interconnect Express (UCIe), and serving as President of Ultra Accelerator Link (UALink) for AI scale-up architectures.

“For over 30 years, Jim Pappas has played a pivotal role in creating a number of the most important standards and industry organizations which have been critical in the dramatic growth of the memory and storage industries,” Coughlin noted during the award presentation.

Having worked directly with Jim, I can attest that his influence extends far beyond technical standards. He understands that true innovation happens through collaboration and ecosystem building—principles that shaped not just technologies like USB and PCI, but entire generations of technologists who learned from his example.

Record-Breaking Innovation Recognition

FMS 2025 concluded with the presentation of its Best of Show Awards, with the program receiving a record-breaking number of nominations in its 19th year. The awards showcased the breadth of innovation across memory and storage technologies, particularly highlighting solutions addressing AI infrastructure challenges.

Celebrating Women in Technology

AMD’s Rita Gupta earned the 2025 SuperWomen of FMS Award, sponsored by Hammerspace and Pure Storage. As a Fellow in AMD’s Server System Architecture team and CXL End-End Architect, Gupta leads advanced CXL memory system architectures for current and next-generation EPYC platforms including Genoa, Turin, and Venice.

Her impact extends beyond AMD as co-chair of the CXL Consortium Memory Systems Workgroup, where she’s helped shape industry standards by authoring JEDEC CMC01 and JESD325 specifications and contributing significantly to CXL 2.0 and 3.0 development. Gupta’s work exemplifies the collaborative, standards-driven approach that Jim Pappas championed throughout his career.

Innovation Across the Storage Spectrum

The Best of Show Awards highlighted breakthrough innovations across multiple categories:

- Pure Storage earned recognition with DirectFlash QLC winning the Most Innovative Hyperscaler Implementation award, showcasing leadership in enterprise flash storage optimized for large-scale deployments. The technology represents a significant advancement in QLC NAND deployment for enterprise workloads requiring massive capacity and cost efficiency.

- Solidigm won the Most Innovative Technology award for its Liquid Cooled Hot Swappable NVMe SSD in the SSD Technology category, addressing critical thermal management challenges in high-performance AI and HPC environments. This innovation enables continuous operation at peak performance levels while maintaining system reliability through advanced cooling integration.

- VAST Data secured the Most Innovative Artificial Intelligence (AI) Application award for its collaboration with the NHL® in the Media and Entertainment Solution category, highlighting the intersection of AI and entertainment technology. The platform demonstrates how AI-driven storage solutions can transform real-time sports analytics and fan engagement experiences.

- Verge.IO claimed the Most Innovative Startup Company award for VergeIQ in the Virtualization IT Infrastructure category, demonstrating the continued evolution of virtualized infrastructure solutions. Their platform showcases how next-generation virtualization can simplify data center operations while reducing infrastructure complexity.

Additional notable winners included Micron for 1-Gamma Node LPDDR5X LPDRAM, Samsung for PM1763 16-Channel PCIe Gen6 SSD, KIOXIA for LC9 Series 245.76 TB SSD with BiCS FLASH generation 8 Memory, and Western Digital for Advanced Rare Earth Material Capture Program.

Standards as Innovation Enablers

A significant theme throughout FMS 2025 was the critical role of industry standards in enabling the AI revolution. Multiple awards recognized standards organizations, including the CXL Consortium for CXL 3.X Specifications, UALink Consortium for UALink 200G 1.0 Specification, and various SNIA technical work groups.

These standards ensure interoperability and scalability as the industry races to meet exponentially growing AI workload demands. The emphasis on standardization reflects industry maturation and recognition that collaborative approaches—the kind Jim pioneered with USB and PCI—remain essential for addressing complex technical challenges.

The TechArena Take

As FMS 2025 concluded, it became clear that the memory and storage industry has firmly embraced its role as a foundation for the AI revolution. The convergence of advanced memory technologies, innovative storage architectures, and industry-wide standardization efforts positions the sector for continued rapid growth as delivering data across the AI pipeline becomes critical to more and more organizations.

The record-breaking attendance and award nominations demonstrate the vitality and innovation driving the industry forward. With AI workloads continuing to evolve and scale, the memory and storage ecosystem will remain at the forefront of enabling next-generation computing capabilities.

Reflecting on Jim Pappas’s recognition and the broader innovations showcased at FMS 2025, I’m reminded of those walks through Tokyo electronics markets years ago. Jim’s vision of how foundational standards could spark global creativity and innovation has proven remarkably prescient. Today’s AI revolution builds directly on the collaborative, standards-driven approaches he championed: USB ports powering development workstations, PCI Express connecting GPUs and accelerators, and newer standards like CXL enabling the memory architectures that make modern AI possible. With so much debate over how standards can keep pace with AI innovation, I think it’s worth remembering how we all benefit from this collaborative innovation, and not being swayed by a perceived need for stovepiped custom designs for bespoke deployments.

My final takeaway is how memory and storage are climbing into the center of the AI conversation. Without innovation in this space, costly GPUs can sit idle waiting for data, costing organizations wasted cycles and lost opportunity. FMS is part of the solution. As Coughlin noted, “FMS is the place where the entire ecosystem meets to solve these challenges head-on.” The 2025 event proved that this industry, built on foundations laid by pioneers like Jim Pappas, continues to rise and meet each new technological moment with collaborative innovation and unflinching determination.

Dell on Storage Innovation in the AI-Driven Enterprise

Dell and Solidigm leaders explore how modern storage—flash, SSDs, and flexible architectures—enables AI, accelerates performance, and helps enterprises manage data across edge to cloud.

Insights with sayTEC on Sovereign IT Infrastructure Revolution

Fragmented approaches to security and IT solutions have frustrated the private and public sector for decades, creating a need for costly integrations while still leaving vulnerabilities. My recent Data Insights interview with Jeniece Wnorowski, director of industry expert programs at Solidigm, and Bora Güzey, senior IT consultant at sayTEC, revealed how organizations are finally solving this problem as they demand unified, security-first solutions that eliminate the complexity of these legacy approaches to IT architectures.

During our conversation, Bora provided an in-depth look at how sayTEC is pioneering sovereign IT infrastructure by fundamentally reimagining how security, access, and storage work together. This transformation begins with recognizing that traditional IT models treat these critical components as separate, siloed systems — an approach that increases risk, cost, and administrative overhead while leaving organizations vulnerable to evolving cyber threats.

The Unified Security-First Approach

Bora highlighted a key differentiator that sets sayTEC apart from conventional solutions: their holistic approach to IT security. sayTEC has built a unified platform where access control, data protection, and system performance are integrated from the ground up. This security-first architecture is based on zero trust and includes built-in regulatory compliance. The combination ensures that protection isn’t an add-on but is embedded across every layer of the system, delivering what Bora described as “military-grade security without compromising performance or cost efficiency.”

The company’s hyperconverged infrastructure (HCI) platform combines compute, S3 object storage, backup, and secure remote access into a single integrated system. Thanks to partnerships with companies like Solidigm and Virtuozzo, sayTEC can deliver impressive performance metrics — S3 storage speeds of up to 150 gigabytes per second and seamless scaling up to 200 petabytes, all with zero downtime.

Zero Trust and Sovereign Data Handling

As enterprises grapple with increasingly sophisticated cyber threats, Bora addressed how sayTEC’s zero trust architecture goes beyond basic implementations. Their sayTRUST VPSC (Virtual Private Secure Communication) technology actively monitors the full communication path, blocking unauthorized traffic before it even enters the tunnel. The system deploys pre-tunnel verification, token-based access control, and layered encryption, including perfect forward secrecy, to create what they call a “darknet environment” for secure communications.

For sovereign data handling — a critical concern for government and enterprise customers dealing with sensitive information — sayTEC’s systems ensure full control over where and how data is stored and accessed. This resonates particularly strongly with organizations dealing with critical infrastructure or sensitive personal data, where sovereignty and adaptability are paramount.

Scalability Without Compromise

One of the most impressive aspects of sayTEC’s solution is their promise of dynamic scaling without system downtime. Bora explained how their modular architecture allows customers to start with as few as three nodes and scale up to hundreds without interrupting operations. This is achieved through distributed workloads, erasure coding for redundancy, and live data migration capabilities.
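For readers unfamiliar with erasure coding, the sketch below shows the simplest possible form: a single XOR parity shard that lets any one lost data shard be rebuilt from the survivors. This is an illustration of the general technique only. The interview does not describe which scheme sayTEC uses, and production systems typically rely on codes such as Reed-Solomon that tolerate multiple simultaneous losses. The shard contents and function names are hypothetical.

```python
from functools import reduce


def xor_bytes(a, b):
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def make_parity(shards):
    """Parity shard: the XOR of all data shards (shards must be equal length)."""
    return reduce(xor_bytes, shards)


def recover_missing(surviving_shards, parity):
    """Rebuild the single missing shard by XOR-ing the parity with the survivors."""
    return reduce(xor_bytes, surviving_shards, parity)


# Three equal-length data shards, imagined as living on three different nodes.
data_shards = [b"node", b"data", b"safe"]
parity = make_parity(data_shards)

# Simulate losing the node that held shard 1.
lost_index = 1
survivors = [s for i, s in enumerate(data_shards) if i != lost_index]
rebuilt = recover_missing(survivors, parity)

print(rebuilt)
```

Because the parity shard costs only one extra shard of capacity, this kind of redundancy is cheaper than full replication, which is the usual reason distributed storage platforms favor erasure coding at scale.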

For organizations facing rapid growth or stringent regulatory demands, this means no painful transitions or migrations. They can grow their infrastructure in real time while maintaining full compliance, business continuity, and budget predictability.

Strategic Technology Partnerships

Bora also emphasized the importance of strategic partnerships in delivering exceptional value to customers. The company’s research and development collaboration with Solidigm enables them to leverage high-performance NVMe drives that dramatically reduce latency while optimizing energy efficiency. These partnerships have allowed sayTEC to reduce infrastructure costs by over 50%, accelerate deployment times, and deliver a return on investment, often within just 12 months.

Market Traction and Future Roadmap

sayTEC’s solutions are particularly well-suited for sectors where security, compliance, and scalability are non-negotiable. The company has seen strong demand in finance, public sector, defense, and health care — industries that deal with sensitive data and face constant regulatory scrutiny. In addition, their simplified deployment model and competitive cost structure are increasingly attracting medium-sized enterprises looking for secure, future-proof IT systems without requiring large in-house expertise.

Looking ahead, Bora outlined an ambitious roadmap that includes hyperconverged infrastructure with GPU computing for AI and machine learning workloads, enhanced zero trust for mobile environments, privileged access management integration, and plans to double S3 storage acceleration to 300 gigabytes per second. The company also plans to expand compute support to 256 cores per node and to scale up to one petabyte per node.

The TechArena Take

In the rapidly evolving landscape of enterprise IT security, sayTEC’s approach represents a significant departure from traditional fragmented architectures. By delivering a truly unified, security-first platform that combines infrastructure, access, and storage into a single system, they’re addressing fundamental challenges that have plagued enterprise IT for decades.

The company’s focus on plug-and-play systems that simplify complexity while delivering military-grade security positions them well for the growing demand for sovereign IT solutions, particularly in Europe, where data sovereignty regulations are becoming increasingly stringent.

Check out sayTEC’s full range of solutions at www.saytec.eu. To connect with Bora and learn more about their sovereign IT infrastructure approach, you can reach out via LinkedIn or email for direct inquiries and demo opportunities.

MLPerf Storage v2.0 Eclipses Records in AI Benchmarking

MLCommons today announced results for its MLPerf Storage v2.0 benchmark, setting new records with over 200 performance results from 26 organizations. The results provide a trove of new data for AI trainers looking to make informed storage decisions and avoid bottlenecks in machine learning (ML) workloads.

The dramatic surge in participation compared to the v1.0 benchmark signals how critical storage has become for AI training systems as they scale to billions of parameters and clusters reach hundreds of thousands of accelerators. Companies ranging from tech giants to specialized storage providers submitted results, representing seven different countries in what officials called unprecedented global engagement.

“The MLPerf Storage benchmark has set new records for an MLPerf benchmark, both for the number of organizations participating and the total number of submissions,” said David Kanter, Head of MLPerf at MLCommons. “The AI community clearly sees the importance of our work in publishing accurate, reliable, unbiased performance data on storage systems, and it has stepped up globally to be a part of it.”

A total of 26 organizations submitted results: Alluxio, Argonne National Lab, DDN, ExponTech, FarmGPU, H3C, Hammerspace, HPE, JNIST/Huawei, Juicedata, Kingston, KIOXIA, Lightbits Labs, MangoBoost, Micron, Nutanix, Oracle, Quanta Computer, Samsung, Sandisk, Simplyblock, TTA, UBIX, IBM, WDC, and YanRong.

Benchmark Suite Tests Real-World AI Training Scenarios

The MLPerf Storage benchmarks focus on testing a storage system’s ability to keep pace with accelerators, either graphics processing units (GPUs) or application-specific integrated circuits (ASICs). Among other metrics, the suite measures whether the storage system can maintain accelerator utilization levels above 90% across different ML workloads.

The v2.0 results reveal storage systems now simultaneously support roughly twice the number of accelerators compared to the previous benchmark round, a critical improvement as training clusters continue to grow to meet demand.

The suite evaluates how well storage systems handle the data demands of actual AI training without requiring organizations to run full training jobs. The benchmarks work by simulating the “think time” of accelerators, the processing periods when they’re computing rather than reading or writing data. This approach generates realistic storage access patterns while testing whether storage systems can maintain the required performance levels to keep accelerators fed with data across different system configurations.
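The think-time idea can be illustrated with a small model. The sketch below is not the MLPerf Storage implementation; it uses a virtual clock instead of real sleeps and I/O, assumes one batch is prefetched while the previous one is being processed, and uses made-up timings. It shows how utilization drops below the 90% level once storage takes longer to deliver a batch than the accelerator takes to process one.

```python
UTILIZATION_TARGET = 0.90  # threshold cited for the benchmark


def simulated_utilization(think_time_s, read_time_s, steps):
    """Fraction of simulated wall-clock time the accelerator spends computing.

    think_time_s: simulated compute time per batch (the "think time").
    read_time_s:  time storage needs to deliver one batch.
    Assumes one-batch-deep prefetch: the next read starts as soon as the
    current batch is handed to the accelerator.
    """
    clock = 0.0
    busy = 0.0
    batch_ready = read_time_s  # the first read starts at t = 0
    for _ in range(steps):
        clock = max(clock, batch_ready)     # stall if the batch is not ready
        batch_ready = clock + read_time_s   # kick off the next read
        clock += think_time_s               # accelerator "thinks"
        busy += think_time_s
    return busy / clock


# Hypothetical timings: 100 ms of compute per batch in both cases.
fast_storage = simulated_utilization(0.100, 0.050, steps=1000)
slow_storage = simulated_utilization(0.100, 0.120, steps=1000)

print(f"fast storage: {fast_storage:.3f}, meets target: {fast_storage >= UTILIZATION_TARGET}")
print(f"slow storage: {slow_storage:.3f}, meets target: {slow_storage >= UTILIZATION_TARGET}")
```

In this model, storage that delivers a batch in half the think time keeps the accelerator almost fully busy, while storage that is 20% slower than the think time leaves it waiting on every step.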

The v2.0 suite carries over three core workloads from v1.0 that represent common AI applications: 3D U-Net for medical image segmentation, ResNet-50 for image classification, and parameter prediction for scientific computing in cosmology.

New Checkpoint Benchmark Tests Address “Chronic Issue” in AI Training

The v2.0 suite introduces new tests to meet a harsh mathematical reality of AI training: in a 100,000-accelerator cluster running at full utilization for extended periods, failures can occur every 30 minutes. In a theoretical million-accelerator system, that’s a failure every three minutes.
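Those two figures are consistent with a simple scaling rule: if failures are independent and equally likely on every accelerator, the expected time between failures anywhere in the cluster shrinks in proportion to cluster size. A minimal sketch under that assumption (the function names are illustrative, and real failure behavior is rarely this uniform):

```python
MINUTES_PER_YEAR = 60 * 24 * 365


def per_accelerator_mtbf_minutes(cluster_size, cluster_interval_min):
    """Implied mean time between failures for one accelerator."""
    return cluster_size * cluster_interval_min


def cluster_failure_interval_minutes(cluster_size, device_mtbf_min):
    """Expected time between failures anywhere in a cluster of this size."""
    return device_mtbf_min / cluster_size


# Start from the figure quoted above: 100,000 accelerators, one failure per 30 minutes.
device_mtbf = per_accelerator_mtbf_minutes(100_000, 30.0)
device_mtbf_years = device_mtbf / MINUTES_PER_YEAR

# Scale the same per-device reliability to a million accelerators.
interval_1m = cluster_failure_interval_minutes(1_000_000, device_mtbf)

print(f"Implied per-accelerator MTBF: {device_mtbf_years:.1f} years")
print(f"Failure interval at 1,000,000 accelerators: {interval_1m:.1f} minutes")
```

Seen this way, each accelerator is individually quite reliable, failing once every several years on average; it is the sheer number of them that turns failures into a routine event.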

The new checkpointing tests address this challenge head-on. Regular checkpoints (saved snapshots of training progress) are essential to mitigate the effects of accelerator failures. To optimize the use of these checkpoints, however, AI trainers require accurate data on the scale and performance of storage systems. The MLPerf Storage v2.0 checkpoint benchmark provides that data.

More information on checkpointing and the design of the benchmarks can be found in a blog post by Wes Vaske, a member of the MLPerf Storage working group.

Technical Diversity Reflects Industry Innovation

The submissions showcase remarkable diversity in approaches to high-performance AI storage. The v2.0 results include 6 local storage solutions, 2 systems using in-storage accelerators, 13 software-defined solutions, 12 block systems, 16 on-premises shared storage solutions, and 2 object stores.

This technical variety reflects what MLPerf Storage working group co-chair Oana Balmau called innovation driven by necessity. “Everything is scaling up: models, parameters, training datasets, clusters, and accelerators,” she said. “It’s no surprise to see that storage system providers are innovating to support ever larger scale systems.”

Major Players Showcase Enterprise-Grade Solutions

Enterprise storage leaders demonstrated significant advances in supporting massive AI training clusters.

DDN’s AI400X3 appliance achieved over 110 GiB/s sustained read throughput while supporting up to 640 simulated H100 GPUs on ResNet-50, representing a 2x performance improvement over the previous generation.

HPE submitted results for its Cray Supercomputing Storage Systems E2000. The E2000 more than doubles I/O performance compared to previous generations and powers six of the world’s 10 fastest supercomputers, demonstrating proven scalability at unprecedented computational scales.

IBM showcased real-world performance with its Storage Scale system, which delivered 656.7 GiB/s read bandwidth for the massive Llama 3 1T model—equivalent to loading the entire trillion-parameter model in approximately 23 seconds—while simultaneously supporting mixed production workloads.
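As a sanity check on those two numbers, bandwidth multiplied by time gives the amount of data implied by the claim. The sketch below uses only the 656.7 GiB/s and 23-second figures quoted above; the resulting size is a back-of-the-envelope inference, not a number published in the results.

```python
GIB_PER_TIB = 1024

READ_BANDWIDTH_GIB_S = 656.7  # quoted read bandwidth
LOAD_TIME_S = 23              # quoted approximate load time


def data_moved_gib(bandwidth_gib_s, seconds):
    """Data transferred at a sustained bandwidth over a given time."""
    return bandwidth_gib_s * seconds


def load_time_s(size_gib, bandwidth_gib_s):
    """Time to read a given amount of data at a sustained bandwidth."""
    return size_gib / bandwidth_gib_s


implied_gib = data_moved_gib(READ_BANDWIDTH_GIB_S, LOAD_TIME_S)
implied_tib = implied_gib / GIB_PER_TIB

print(f"Implied data read: {implied_gib:,.1f} GiB (about {implied_tib:.2f} TiB)")
print(f"Round trip: {load_time_s(implied_gib, READ_BANDWIDTH_GIB_S):.0f} s")
```

The two quoted figures together imply a read of roughly 15 TiB.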

Quanta Cloud Technology (QCT) demonstrated the effectiveness of thoughtful system design through its QuantaGrid D54X-1U server platform, testing configurations with both Solidigm D7-PS1010 NVMe SSDs for low-latency metadata operations and D5-P5336 NVMe SSDs for high-capacity streaming read throughput.

The TechArena Take

When you’re running million-dollar training jobs that can fail every few minutes, storage is mission-critical infrastructure. The overall improvement in the number of accelerators that storage systems can support and record participation numbers reveal an ecosystem that’s taking storage seriously as a potential bottleneck to AI training efficiency.

We’re also excited to see the diversity of approaches represented in these results. Six different storage architectures, spanning everything from local NVMe to object stores, suggest there’s no single “right” answer yet. The industry is still experimenting, which means significant performance gains are likely still on the table. We’ll be watching for those gains in the next benchmark round.

The complete MLPerf Storage v2.0 results are available at MLCommons.org.

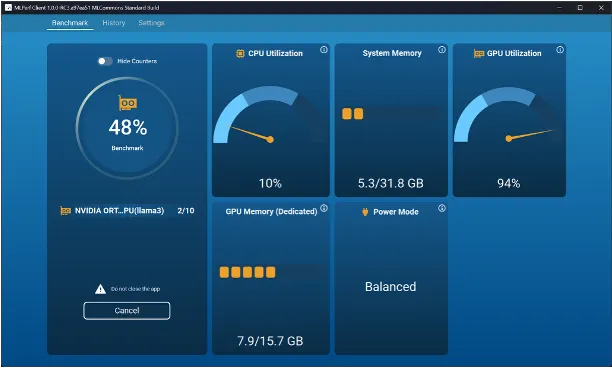

MLCommons Raises the Bar for AI PCs with MLPerf Client v1.0

As the AI PC market moves from hype to real deployment, MLCommons has released a critical piece of infrastructure: MLPerf Client v1.0, the first benchmark specifically designed to measure the performance of large language models (LLMs) on PCs and client-class systems.

The release marks a major milestone in the effort to bring standardized, transparent AI performance metrics to the fast-emerging AI PC market, MLCommons officials said.

It’s a move that couldn’t be more timely. From developers building AI-first applications to enterprises deploying productivity tools powered by on-device inference, there’s a growing need for standardized, vendor-neutral performance metrics that reflect real-world usage. MLPerf Client v1.0 delivers just that.

What’s New in MLPerf Client v1.0?

MLPerf Client v1.0 introduces a broader and deeper evaluation suite than its predecessor. Here’s what stands out.

Expanded LLM support:

- Llama 2 7B Chat

- Llama 3.1 8B Instruct

- Phi 3.5 Mini Instruct

- Phi 4 Reasoning 14B (experimental)

New prompt categories:

- Structured prompts for code analysis

- Long-context summarization (roughly 4,000- and 8,000-token inputs)

Wider hardware support:

- AMD NPUs & GPUs via ONNX + Ryzen AI SDK

- Intel NPUs & GPUs via OpenVINO and Windows ML

- NVIDIA GPUs via DirectML and llama.cpp w/ CUDA

- Qualcomm NPUs & CPUs via Genie + QAIRT SDK

- Apple Mac GPUs via MLX and Metal

Benchmarking made easy:

- GUI with real-time compute/memory readouts, historical results, and CSV exports

- CLI for automation, scripting, and regression testing
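To illustrate the regression-testing use case mentioned above, here is a minimal sketch that compares two CSV exports and flags throughput drops. The column names (`model`, `tokens_per_second`), the sample rows, and the 5% tolerance are all hypothetical; the actual MLPerf Client export schema is not described in this article and may differ.

```python
import csv
import io

def load_scores(csv_text, key="model", metric="tokens_per_second"):
    """Parse a CSV export into {model: score}. Column names are assumed."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row[key]: float(row[metric]) for row in reader}

def find_regressions(baseline_csv, candidate_csv, tolerance=0.05):
    """Return {model: fractional_drop} for models slower than the tolerance allows."""
    base = load_scores(baseline_csv)
    cand = load_scores(candidate_csv)
    regressions = {}
    for model, old in base.items():
        new = cand.get(model)
        if new is None:
            continue  # model missing from the candidate run; skip it
        drop = (old - new) / old
        if drop > tolerance:
            regressions[model] = round(drop, 3)
    return regressions

# Made-up illustrative numbers, not benchmark results.
BASELINE = """model,tokens_per_second
llama-3.1-8b-instruct,20.0
phi-3.5-mini-instruct,40.0
"""
CANDIDATE = """model,tokens_per_second
llama-3.1-8b-instruct,17.0
phi-3.5-mini-instruct,39.5
"""

result = find_regressions(BASELINE, CANDIDATE)
print(result)
```

A check like this could run after each driver or SDK update, with the tolerance tuned to the run-to-run noise observed on a given machine.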

With participation from AMD, Intel, Microsoft, NVIDIA, Qualcomm, and top PC OEMs, this version represents one of the broadest industry collaborations yet in the AI PC space.

The TechArena Take

The AI PC conversation just got real. MLPerf Client v1.0 gives the industry a common language to talk about performance—not just raw inference speed, but usability across context lengths, structured prompts, and compute environments that look more like real end-user conditions.

It’s especially important in an ecosystem full of proprietary benchmarks and marketing-led performance claims. For OEMs and chipmakers racing to stake out territory in the AI PC era, this is a reality check.

But the bigger picture is this: AI workloads are going local. And that means we need tools that reflect how AI is actually used on devices with power, memory, and thermal constraints. MLPerf Client v1.0 answers that call with open, standardized, and scriptable benchmarks—all the ingredients needed to build trust across the ecosystem.

As AI PC adoption ramps, expect MLPerf Client to play a foundational role—not just in performance reviews, but in how next-gen silicon, SDKs, and even software experiences are shaped.

Download MLPerf Client v1.0: mlcommons.org/benchmarks/client.

Dell Leads the Entertainment Industry’s Storage Revolution

Dell and Solidigm explore how flash storage is transforming creative pipelines—from real-time rendering to AI-enhanced production—enabling faster workflows and better business outcomes.

Hypertec Shrinks the Data Center with Immersion-Born Servers

I recently sat down with Solidigm’s Jeniece Wnorowski and Mohan Potheri, principal solutions architect at Hypertec, to unpack how immersion cooling is reshaping data-center economics for AI and high-performance computing (HPC). During our discussion, it became clear that the biggest constraint on AI progress isn’t silicon — it’s keeping that silicon cool. Hypertec, founded in 1984 and now shipping over 100,000 servers a year to customers in more than 80 countries, has spent four decades learning how to squeeze more compute into less space without breaking the power budget, an experience that set the stage for our conversation.

Mohan painted a sobering picture of an industry straining under the weight of its own momentum. AI, HPC, and edge-computing workloads have pushed power and cooling demand to record highs just as sustainability-focused goals demand lower energy footprints. Operators face a conflicting mandate: deploy clusters faster than ever, but do so with tighter efficiency targets and, in many sites, within real-estate footprints that can’t grow any further. Space-constrained facilities must find ways to condense more compute while still meeting aggressive thermal budgets, all without blowing out capital or operating expenses. These pressures, he said, turn traditional air-cooled data centers into bottlenecks the moment racks tip into multi-kilowatt territory.

Hypertec’s answer is to start with liquid rather than retrofit for it. The company’s single-phase "immersion-born" servers live permanently in dielectric fluid, eliminating fans and chillers and cutting cooling power by roughly 50% while driving site-level power usage effectiveness (PUE) down to about 1.03.

Because every component is designed for submersion from day one, the servers avoid material-compatibility problems that plague air-cooled hardware dipped into tanks after the fact, and they let central processing units (CPUs) and graphics processing units (GPUs) sustain 90-95% of peak clocks instead of throttling under heat. A 10-megawatt deployment that would normally sprawl across 100,000 square feet collapses into roughly a tenth of that footprint, and Hypertec’s field data shows hardware lasting up to 60% longer thanks to the vibration-free, contaminant-free bath.
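The PUE and footprint figures above can be put in perspective with a short calculation. PUE is total facility power divided by IT equipment power, so the overhead is the IT load times (PUE - 1). The sketch assumes the "10-megawatt deployment" refers to 10 MW of IT load and that "a tenth" of 100,000 square feet means 10,000 square feet; neither assumption is confirmed by the source.

```python
def overhead_mw(it_power_mw: float, pue: float) -> float:
    """Non-IT power (cooling, distribution losses) implied by a given PUE."""
    return it_power_mw * (pue - 1.0)

def density_w_per_sqft(it_power_mw: float, floor_sqft: float) -> float:
    """Average IT power density over the floor area, in watts per square foot."""
    return it_power_mw * 1_000_000 / floor_sqft

IT_LOAD_MW = 10.0  # assumption: the 10 MW figure is IT load, not total facility power

immersion_overhead = overhead_mw(IT_LOAD_MW, 1.03)             # about 0.3 MW
air_density = density_w_per_sqft(IT_LOAD_MW, 100_000)          # 100 W per sq ft
immersion_density = density_w_per_sqft(IT_LOAD_MW, 10_000)     # 1,000 W per sq ft

print(f"Overhead at PUE 1.03: {immersion_overhead:.2f} MW")
print(f"Density: {air_density:.0f} -> {immersion_density:.0f} W/sq ft")
```

Read this way, a PUE of 1.03 leaves only about 0.3 MW of overhead on a 10 MW IT load, and the smaller footprint corresponds to a tenfold increase in average power density.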

Tanks roll in pre-assembled, set up in under 10 minutes, and fill with fluid in less than half an hour, giving operators a shortcut from loading dock to AI production. Add immersion-ready storage nodes that put as much as two petabytes beside the compute they feed, plus 800 Gigabit-per-second networking, and Hypertec delivers a dense, sustainable, and rapidly deployable platform that sidesteps the very constraints throttling its air-cooled peers.

Before we wrapped, Mohan shifted the spotlight to storage—the quiet partner that can still slow an otherwise cutting-edge system. He explained that if data can’t reach the processors quickly, even the fastest GPUs and CPUs end up waiting. To avoid that pinch-point, Hypertec extends its immersion approach to storage as well, placing dense drive enclosures in the same fluid bath and on the same high-throughput fabric as the compute nodes. By treating cooling, compute, and data as one integrated stack, the company keeps every component working in sync and lays a cleaner path to future scale.

The TechArena Take

What’s the TechArena take? Together, these solutions make a compelling argument: immersion isn’t a niche experiment but a practical response to AI’s insatiable appetite for watts, racks, and real estate. Hypertec’s immersion-born solutions show how vendors can rethink server design to meet that challenge head-on—reducing energy, shrinking footprints, extending equipment life, and freeing budgets to buy more compute instead of more chillers.

Listen to the full conversation here to learn how immersion cooling is quickly moving from “interesting” to inevitable.

The Lamb Lays Down with the Lion to Avoid Being Eaten by the Wolf

Earlier this month, European automotive original equipment manufacturer (OEM) leaders BMW, Mercedes-Benz, and VW announced an agreement to collaborate in the development of an open-source shared software platform for electric vehicles (EVs). This unlikely collection of competitors has decided to join forces to stave off the heated competition from the Chinese automotive EV OEMs.

In a recent interview updating my predictions for the automotive market for 2025, I highlighted the point that BYD, a Chinese automotive OEM, today ships more EVs annually than Tesla. It’s not just BYD’s momentum alone that has the German OEMs concerned; the Chinese EV market is exploding as evidenced by the more than 100 new EVs that were introduced by various Chinese OEMs at this year’s Shanghai International automotive show. In short, China is dominating the “new energy” vehicle market segment, leading to the German OEMs taking drastic measures to stave off the stiff competition.

In principle, collaboration among the major OEMs to address a global competitive threat makes sense. In practice, achieving this objective can prove to be tricky, if not impossible. While some of the key underpinnings for success are in place—such as the collaboration focusing on areas that don’t establish a vehicle’s brand or differentiated value—as recently as two years ago, 10 of the major Japanese automotive OEMs formed a similar consortium with a similar set of objectives and constraints, only to disband this effort after just six months.

The 10 “J OEMs,” named so in recognition of the 10 Japanese automotive OEMs that banded together to address global competition, collectively came to the realization that establishing a common hardware platform was not tenable because of the very different market segments the different OEMs addressed, which spanned from very low-end to very high-end.

While one of the key motivations of the software defined vehicle (SDV) is to abstract away the underlying hardware such that high-end vs. low-end hardware platforms appear similar in nature, it’s not always clear what software features and capabilities establish brand identity and differentiation—especially given the nascent nature of this evolving market.

To that end, the smartphone is often cited as a good illustration of the concept of a software defined platform and a good parallel to the SDV. Today’s smartphone operating system, which addresses a wide range of underlying hardware combinations, raises the question: what are the critical differences between an Apple iPhone and a Samsung Galaxy? Is it the software, or is it the hardware? I believe the answer is yes... it’s both. So my prediction, unfortunately, is that developing a universal software platform that provides significant industry momentum while allowing different OEMs to retain differentiation and brand identity will prove to be a bridge too far.

It’s been said that the best way to determine if a strategy will succeed is to execute that strategy. In this case, however, there have already been multiple industry-wide collaborations that have spectacularly failed as the industry grapples with this new world order of self-driving and new energy cars with an ever-increasing number of new entrants and business models that stand to challenge, if not eliminate, the long-term incumbents.

That said, there are also parallel efforts to the recently announced initiative by the German OEMs, including the SOAFEE SIG (Scalable Open Architecture for Embedded Edge special interest group), an industry-led initiative focused on defining a new open-standards-based architecture for SDVs. Similarly, a key goal of SOAFEE is to enable software to be developed and deployed across different hardware platforms, simplifying development and reducing the need for platform-specific code.

SOAFEE is a collaborative effort involving worldwide automakers, semiconductor companies, software providers, and cloud technology leaders, and it was established in 2021. It’s unclear how the efforts of SOAFEE compare to those of the recently announced German OEM initiative, but again, time will tell.

And to muddy the waters just a little more, the Autonomous Vehicle Computing Consortium (AVCC), which also comprises many industry-wide automotive OEMs and solutions providers, is also focused on establishing open-source solutions to help accelerate the development and deployment of SDVs. How these all differ from one another is a task left for the reader.

As the title of this blog states—the lamb lays down with the lion to avoid being eaten by the wolf. The competition is fierce, and there are too many irons in too many fires with significant R&D investments that will ultimately lead to spectacular losses. It’s a brave new world in the automotive industry, and while the industry seems to recognize as much, there don’t appear to be many clear or sound strategies to navigate this evolving landscape.

AI Chip Design Hits Crossroads in Agentic Era

As AI continues its meteoric rise, the technologies enabling that growth – compute, memory, networking, and chip architecture – are being stretched to their limits. While the pace of innovation is accelerating to address these limits, the way AI is applied in workflows also continues to evolve as we move from reinforcement learning to generative AI and now agentic AI. During Gen AI Week, I had the distinct honor of moderating a panel of silicon industry heavyweights to explore how the next wave of chip design is evolving to meet the challenges of large-scale AI deployment.

Our discussion underscored a common theme: the age of agentic AI is upon us and it will fundamentally reshape how chips are architected, manufactured, and even conceived.

Joining me on stage were:

- Harish Bharadwaj, VP of marketing in the ASIC product division at Broadcom

- Bob Brennan, VP of customer solutions engineering at Intel Foundry

- Kelvin Low, VP of sales at Samsung Foundry

- John Koeter, SVP at Synopsys, leading IP development

Why AI Is Reshaping Semiconductor Design

The panel kicked off with a look at the current state of chip design and why AI is creating unprecedented pressure on silicon teams. As AI models double in size nearly every year, chipmakers must accelerate the speed and the intelligence of their design processes.

Kelvin Low of Samsung Foundry pointed to the growing complexity of IP subsystems. He noted that the industry is moving beyond traditional chipmaking, focusing instead on delivering pre-optimized IP subsystems and full-stack solutions tailored to specific AI workloads.

John Koeter echoed that sentiment, highlighting how today’s hyperscalers are pushing for 5–10x improvements in silicon performance, at a time when Moore’s Law and Dennard scaling are plateauing. He emphasized that the semiconductor industry is at an inflection point, where traditional scaling is no longer enough and entirely new approaches, like multi-die design and agentic AI, are needed. Design workflows, he said, need to be re-engineered from the ground up.

Compute, Memory, and the Architecture Bottleneck

While GPUs have dominated headlines, the panelists emphasized that AI infrastructure relies on a broader constellation of compute and memory technologies. Brennan outlined two parallel trends: going big with monolithic training chips and going out by scaling across multiple smaller units.

He also introduced the idea of compute density versus capacity, especially when it comes to high-bandwidth memory (HBM). He stressed that while HBM plays a key role in performance, it also introduces significant power challenges – accessing these memory stacks alone can consume hundreds of watts.

Low explained how the industry is working on custom HBM stacks optimized for specific workloads, with next-gen configurations offering lower power and higher integration thanks to base dies built on a logic process instead of a DRAM process.

“Power is everything,” he said. “We just do not have enough power to fit into the data center. So wherever possible to reduce power, we can do that.”

Data Movement and Networking: From Bottleneck to Co-Architect

As AI clusters grow from 20,000 to 100,000+ compute nodes, network infrastructure is becoming a primary design constraint.

Harish Bharadwaj explained that AI workloads are pushing data movement beyond traditional thresholds. He noted that AI cluster-level bandwidth is growing up to 10x in a single year, driving the need for far more scalable and efficient network infrastructure.

John Koeter added that networking is no longer just a back-end concern; it has become a critical co-architect of overall system performance. Koeter expanded on the evolution of standards.

“The time between standards used to be three to four years, and that’s been accelerating to 2 years to 18 months,” he said. “And the question is, ‘Why?’ And that’s across the board – memory interface, PCI Express...even good old USB. The interface standards are accelerating. And the reason is because you can pack an enormous amount of compute units onto a chip, but you have to be able to transfer data on and off that chip very, very efficiently.”

Enter Agentic AI: Reinventing the Engineering Workflow

One of the most exciting – and existential – topics of the panel was the rise of agentic AI, or the use of autonomous software agents in chip design workflows.

Koeter explained that AI is transforming not just what engineers design, but how they design. He described a future where networks of autonomous agents assist with key stages of chip development – prompting teams to completely rethink and rebuild traditional engineering workflows from the ground up.

From macro placement to RTL generation, panelists said agentic AI is beginning to automate and optimize historically manual tasks. Brennan noted that although silicon engineering lacks the vast open-source data available in software, AI tools are already producing meaningful speedups.

“What used to take weeks now takes hours,” Bharadwaj said.

Still, the panelists agreed: AI won’t replace chip designers, but designers who use AI will replace those who don’t.

Workforce Implications: New Roles, New Challenges

Agentic AI is also reshaping how teams are structured and trained.

Brennan pointed to workforce challenges, citing predictions that the industry could be short a million engineers in agentic AI by 2030.

“The cool kids are no longer going into silicon,” he said. “They’re going into algorithms and software.”

The panelists called for a shift in training and team structure, with junior engineers gaining AI-augmented capabilities once reserved for veterans. But challenges remain – particularly around proprietary data security and best practices that haven’t caught up with the tech.

Measuring the Payoff

When asked how teams are quantifying productivity gains, Bharadwaj was clear: it’s about pace. He noted that companies are under intense pressure to launch new xPUs annually, and that technologies like agentic computing may play a crucial role in helping the industry keep pace.

Koeter offered a final perspective.

“I tell my team all the time… there’s two types of design engineers in the future: ones that lean in and embrace agentic AI with all their hearts, and dodos and dinosaurs,” he said. “I’m like, don’t be a dodo. You gotta lean in.”

The TechArena Take

AI is no longer just a workload. It’s a force reshaping the silicon landscape. From custom memory to co-architected networks, and agentic workflows to workforce transformation, this panel revealed the full-stack rethink underway as the industry races toward a trillion-dollar AI economy.

Exploring AI and an Edge Computing Reality Check with OnLogic

The edge computing landscape stands at an intersection of practical necessity and AI transformation. My recent Fireside Chat with Hunter Golden, senior product manager at OnLogic, revealed just how far the reality of what is needed differs from the hype. As organizations grapple with deploying AI at the edge, Hunter explained how right-sizing edge investments yields the best return.

During our discussion, Hunter explained that OnLogic has more than two decades of experience in industrial and edge computing, dating back long before AI became the driving force. OnLogic’s computers have long run applications behind the scenes in our daily lives, from amusement park kiosks to flight information screens to robots working in warehouses and even harvesting crops. But the onset of AI and an increase in automation opportunities have fundamentally shifted the compute density requirements at the edge while the physical footprint remains largely static.

Hunter emphasized a critical misconception plaguing enterprises looking to deploy AI at the edge: the belief that AI requires massive cloud infrastructure or discrete GPUs. As he explained, “both training and inference can easily occur at the edge” with lower-than-expected compute requirements, noting that even his “not very powerful laptop” could run DeepSeek.

We explored the balance between performance, power, and cost that defines successful edge AI deployment, and how hardware selection and the workload objective are completely intertwined. For example, for computer vision, sizing up the workload includes understanding the number of video streams, resolution requirements, model size, and target frame rates. Once that is understood, organizations can spec appropriate hardware rather than defaulting to expensive, overpowered solutions.
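The sizing exercise described above can be sketched as a small calculator. Everything here is illustrative: the `VisionWorkload` class, the per-frame compute cost, the 50% utilization factor, and the sample camera numbers are hypothetical and do not come from OnLogic.

```python
from dataclasses import dataclass

@dataclass
class VisionWorkload:
    streams: int                   # number of concurrent video streams
    width: int                     # frame width in pixels
    height: int                    # frame height in pixels
    target_fps: float              # frames per second to process, per stream
    model_gflops_per_frame: float  # hypothetical compute cost of one inference

    def frames_per_second(self) -> float:
        """Total inferences per second across all streams."""
        return self.streams * self.target_fps

    def pixels_per_second(self) -> float:
        """Raw pixel rate the decode and preprocessing path must handle."""
        return self.frames_per_second() * self.width * self.height

    def required_tflops(self, utilization: float = 0.5) -> float:
        """Peak TFLOPS needed, assuming hardware only reaches `utilization` of peak."""
        return self.frames_per_second() * self.model_gflops_per_frame / 1000 / utilization

# Made-up example: eight 1080p cameras at 15 fps with a 10 GFLOP-per-frame model.
workload = VisionWorkload(streams=8, width=1920, height=1080,
                          target_fps=15, model_gflops_per_frame=10.0)

print(f"{workload.frames_per_second():.0f} inferences/s, "
      f"{workload.pixels_per_second() / 1e6:.0f} Mpixel/s, "
      f"~{workload.required_tflops():.1f} TFLOPS peak")
```

In this made-up case the requirement lands at a few TFLOPS, which is the kind of result that would argue for modest hardware rather than a large discrete GPU. Real sizing would also need to account for memory, decode capacity, and numeric precision.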

The conversation also highlighted three key advantages of edge AI deployments that can get overlooked in cloud-focused discussions:

- Achieving lower latency, with benefits that are immediate and measurable in edge deployments

- Maintaining data sovereignty, which is critical in medical applications and other use cases where organizations need to own their own data

- Bypassing network reliability concerns, with edge deployments allowing applications to continue to function even if a network goes down

Hunter’s insights into IT modernization revealed a sector dealing with diverse transformation paths. Some companies are just connecting programmable logic controller (PLC) data to operational technology networks, while others are deploying autonomous mobile robots for material handling. The key is understanding both short-term objectives and long-term roadmaps so you can spec the right hardware and don’t have to rip and replace later on.

Looking toward future infrastructure needs, Hunter underlined the importance of guaranteed lifecycles and scalable architectures. OnLogic’s commitment to five-year lifecycles from launch addresses a common pain point where prototype hardware becomes unavailable by deployment time. The company’s commitment to life cycle transparency when embarking on multi-year projects with customers helps enterprises know they’ll have the right hardware when they get to deployment.

What’s the TechArena take? As organizations like OnLogic continue to balance innovation with practical constraints, we’re witnessing the emergence of edge AI that prioritizes efficiency, reliability, and cost-effectiveness without sacrificing the transformative potential of AI solutions. The real breakthrough is in the thoughtful matching of workload requirements to appropriate infrastructure, supported by partners who understand both the technical challenges and the business realities of edge deployment.

Listen to the full Fireside Chat for more from our conversation. Connect with Hunter Golden on LinkedIn and explore OnLogic’s Ultimate Edge Server Selection Checklist here.

Synopsys Finalizes $35B Ansys Deal for Design Dominance

The semiconductor industry is facing rapid changes and major shifts. One of them, previously announced, is finalized as of today: Synopsys, a leader in electronic design automation (EDA), has acquired Ansys, a giant in simulation and analysis software. The blockbuster deal, valued at approximately $35 billion in cash and stock, aims to create an undisputed leader in “silicon to systems” design solutions.

The acquisition brings together two titans of the engineering software world. Synopsys’ foundational tools for chip design will be combined with Ansys’ broad portfolio that simulates how those chips and entire products will perform in the real world. This fusion is designed to address the soaring complexity driven by AI, widespread silicon proliferation, and software-defined systems.

Synopsys’ President and CEO Sassine Ghazi released a video message regarding the acquisition today, calling it an “exciting day” for Synopsys employees, customers, and engineering innovators everywhere.

“We have completed the acquisition of Ansys,” he said in a blog post, “…a transaction that combines leaders in silicon design, IP, and simulation and analysis to create the leader in engineering solutions from silicon to systems.

“Together, we will maximize the capabilities of engineering teams broadly, enabling them to rapidly innovate AI-powered products.”

The move was a “logical next step” to the seven-year partnership between the companies, Ghazi said.

Ajei Gopal, President and CEO of Ansys, echoed the sentiment, stating, “This transformative combination brings together each company’s highly complementary capabilities to meet the evolving needs of today’s engineers and give them unprecedented insight into the performance of their products.”

Key Strategic Drivers

- Unifying Design and Physics: The deal directly addresses the growing need to merge the world of electronics (the chip) with physics (the system it operates in). This allows for a more comprehensive and predictive design process for everything from cars to satellites.

- Massive Market Expansion: Synopsys is now positioned to win in an expanded $31 billion total addressable market (TAM). This also provides a stronger foothold in high-growth adjacent markets like automotive, aerospace, and industrial manufacturing, where Ansys has a major presence.

- Significant Financial Synergies: The acquisition boosts Synopsys’ strong financial position and outlook with expanded margins and greater free cash flow generation, enabling rapid deleveraging, according to the companies.

The TechArena Take

Synopsys’ acquisition of Ansys is more than just a massive financial transaction; it’s a bold declaration about the future of engineering and product design. The traditional walls between chip design, software development, and physical system analysis are crumbling, and Synopsys is betting the house on owning the entire, integrated workflow. In an era where AI-powered smart devices are becoming ubiquitous, the ability to create a “digital twin” — a perfect virtual replica of a product that can be tested before it’s built — is no longer a luxury, it’s a necessity.

This move is a direct challenge to competitors like Cadence and Siemens EDA. By creating a one-stop-shop for engineering everything from the transistor to the final system, Synopsys is aiming to build a deeply entrenched platform that is difficult to displace. It’s a classic vertical integration play for the digital age, locking down the foundational blueprint of modern technology.

The ultimate test, however, will be execution. Integrating two massive companies with distinct cultures and complex software portfolios is a monumental task. The companies’ deal website addresses this point, describing the two as having complementary cultures of innovation, with the formal acquisition building on eight years of strategic partnership to “drive the fusion of electronics and physics, augmented with AI.”

The promise of a “seamlessly integrated” platform is powerful, but delivering on it will be the true measure of success. The race to own the end-to-end design chain is on, and Synopsys just made a decisive, multi-billion-dollar move.

Immersion Cooling: Revolutionizing AI & Edge Computing

Allyson Klein and Jeniece Wnorowski welcome Mohan Potheri of Hypertec to explore how immersion cooling slashes energy use, shrinks data-center footprints, and powers sustainable, high-density AI, HPC, and edge solutions on this Data Insights episode. Find the audio-only podcast here.

Ansys + Rohde & Schwarz: Hardware Meets Virtual Cities

I recently had the delightful opportunity to moderate a fireside chat with technologists from Ansys and Rohde & Schwarz about how the convergence of simulation, test and measurement is fundamentally changing how 5G and 6G radio systems are developed and validated.

The conversation centered on a groundbreaking collaboration that enables developers to virtually replicate any installation site, bringing real-world RF environments directly into the laboratory for early, reliable validation. This applies not only to outdoor urban, rural, or mobile networks but also to indoor and mixed outdoor-indoor installations for private networks in locations like factories, warehouses, and hangars.

The chat featured Shawn Carpenter, Ansys Program Director for 5G/6G and Space, Andreas Roessler, Rohde & Schwarz Technology Manager, and Jayraj Nair, Ansys Field CTO – high-tech.

The Challenge: From Simple Antennas to Complex Systems of Systems

Shawn discussed how dramatically antenna design has evolved, reflecting on how single-band antennas were used in 2G and 3G systems.

“Today, as we unwrap the new spectrum allocations for 5G and explore millimeter wave, there's a wide number of channels and spectrum that we have to accommodate,” he said.

The complexity doesn't stop at frequency bands. Modern 5G/6G systems must handle multiband operations, manage thermal characteristics that can detune antennas, and incorporate sophisticated spatial diversity techniques. As Jayraj described, we’ve reached an era in which validating wireless systems is akin to reengineering a plane while it's midair with paying customers aboard.

The Innovation: Digital Twins Meet Hardware Testing

Ansys and Rohde & Schwarz bring together two traditionally separate worlds: simulation and test and measurement. Their solution creates highly accurate virtual environments – digital twins of real cities – complete with five-centimeter resolution models that capture everything from street furniture to window frames and trees.

Here's where it gets interesting: the system can model electromagnetic wave propagation through these virtual environments in real time, capturing the complex interactions that occur as signals bounce off buildings, reflect from surfaces, and encounter moving objects. These channel characteristics are then fed into Rohde & Schwarz's signal generators, creating authentic RF conditions that real devices can be tested against in the lab.
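To make "channel characteristics" concrete, the sketch below applies a textbook tapped-delay-line multipath model to a signal: each propagation path contributes a delayed, scaled, and phase-shifted copy. This is a generic illustration only, not the Ansys solver or the Rohde & Schwarz signal-generator interface, and the tap values are invented.

```python
import cmath

def apply_multipath(signal, taps):
    """Sum delayed, complex-weighted copies of `signal`.

    taps: list of (delay_in_samples, complex_gain), one entry per propagation path.
    """
    max_delay = max(delay for delay, _ in taps)
    out = [0j] * (len(signal) + max_delay)
    for delay, gain in taps:
        for n, sample in enumerate(signal):
            out[n + delay] += gain * sample
    return out

# Hypothetical two-path channel: a direct path plus one reflection that arrives
# three samples later at half amplitude with a 180-degree phase flip.
taps = [
    (0, 1.0 + 0j),
    (3, 0.5 * cmath.exp(1j * cmath.pi)),
]

impulse = [1.0, 0.0, 0.0, 0.0]
response = apply_multipath(impulse, taps)

for n, value in enumerate(response):
    print(n, f"{abs(value):.2f}")
```

Feeding an impulse through the function returns the channel's impulse response, which is the kind of per-path description a channel emulator needs. A real digital-twin workflow would derive many time-varying taps from ray tracing rather than hard-coding two.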

“You could do a virtual representation of where you want to deploy a digital twin, use the Ansys tool to do the channel modeling, put it into test measurement equipment, and optimize machine learning algorithms for that particular channel representation,” Andreas said.

Five Key Takeaways from the Future of Wireless Validation

- Novel Customization: Teams can now replicate any environment they choose without leaving the lab. This means testing scenarios that would be impossible or prohibitively expensive to recreate in the real world.

- AI-Driven Optimization: The platform supports the development and validation of machine learning-based signal processing algorithms that could improve channel performance by up to 3 dB (roughly a factor of two in linear terms), effectively doubling capacity and maximizing spectrum efficiency.

- Hardware-in-the-Loop Validation: Unlike pure simulation, this approach tests real hardware against synthetically generated, but physically accurate channel conditions. This bridges the gap between theoretical performance and real-world deployment.

- Future-proofing for 6G: With 6G expected to support everything from immersive applications to integrated sensing and communication, having a flexible validation platform that can model any scenario becomes essential. The system can also simulate unmanned aircraft system (UAS) communications at an altitude of 400 feet, where interference patterns differ dramatically from ground-based devices.

- Real-Time Network Optimization: The technology could enable future 6G networks to adapt in real-time. Networks could collect operational data, retrain their algorithms for site-specific optimization, and validate changes before deployment – all autonomously.
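The 3 dB figure in the takeaways above is easier to interpret with the decibel arithmetic written out. The sketch converts a dB gain to a linear power ratio and, as one possible point of reference, evaluates the Shannon spectral-efficiency bound before and after the gain. The 10 dB starting SNR is an arbitrary assumption, and how a gain translates into network capacity depends on the operating point and on how capacity is defined.

```python
import math

def db_to_linear(db: float) -> float:
    """Convert a power gain in decibels to a linear ratio."""
    return 10 ** (db / 10)

def shannon_bps_per_hz(snr_linear: float) -> float:
    """Shannon bound on spectral efficiency for a single link, in bit/s/Hz."""
    return math.log2(1 + snr_linear)

gain = db_to_linear(3.0)        # about 1.995, i.e. roughly double the power ratio

# Assumption: a link starting at 10 dB SNR, chosen only for illustration.
snr_before = db_to_linear(10.0)
snr_after = snr_before * gain

se_before = shannon_bps_per_hz(snr_before)
se_after = shannon_bps_per_hz(snr_after)

print(f"3 dB = x{gain:.3f} in linear power")
print(f"Spectral efficiency bound: {se_before:.2f} -> {se_after:.2f} bit/s/Hz")
```

The first result confirms that 3 dB is very nearly a factor of two in power. The second shows that, for a single link at this example SNR, the Shannon bound rises by well under a factor of two, so "doubling" is best read in terms of link budget rather than per-link throughput.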

The Broader Impact: Transforming Development Cycles

What resonates with me most is how this innovation addresses a fundamental challenge in modern technology development: the growing complexity of systems paired with shrinking validation timelines.

By enabling comprehensive testing in controlled laboratory environments, this approach could accelerate time-to-market while improving reliability. Companies using similar simulation-driven approaches have realized up to 3x acceleration in development time and cost reductions of up to 60%.

The technology also opens new possibilities for regulatory compliance and public safety validation. Shawn mentioned exploring how base station signals might interact with aircraft radar altimeters – critical safety research that can be conducted safely in simulation before any real-world testing.

Looking Ahead: The Era of Adaptive Networks

The most exciting aspect of this development isn't just what it enables today, but what it makes possible for tomorrow. Andreas hinted at a future where 6G networks could continuously optimize themselves.

“You could collect data in your network, take that data and retrain that default model and do a site-specific adaptation,” he said.

Imagine networks that automatically adapt their signal processing algorithms based on changing environments, all validated through digital twin technology before implementation.

The TechArena Take

As I reflect on this conversation, I'm reminded of how often the most transformative innovations come from combining existing technologies in novel ways. The marriage of high-fidelity simulation and hardware testing from Ansys and Rohde & Schwarz represents a breakthrough that could fundamentally change how we develop, validate, and deploy wireless systems.

The implications extend far beyond telecommunications. Any industry deploying complex RF systems – from automotive radar to IoT networks – could benefit from this approach. As we stand on the brink of the 6G era, with its promise of supporting everything from autonomous vehicles to immersive reality applications, having the tools to validate these systems thoroughly before deployment becomes essential.

The future of wireless technology isn't just about faster speeds or lower latency – it's about creating systems that adapt to our ever-changing world.

Agentic AI in Engineering: From Vision to Workforce Transformation

Earlier this year, I shared two stories that signaled a profound shift underway in the world of silicon design.

In March, during Synopsys’ annual user group conference, the company laid out a bold roadmap for agentic AI: a vision in which autonomous AI agents assist human engineers and become co-designers of the most complex compute systems on Earth. Weeks later, at the TSMC Technology Symposium, Synopsys announced a set of certified AI-driven design flows for the A16 and N2P nodes, tightening the loop between angstrom-era process technology and AI-native tools.

These developments underscore that AI isn’t just changing how we design chips – it’s changing who the designers are.

That message came into sharp focus during a recent panel I moderated with leaders from Microsoft, Arm, Marvell, Sandisk, and NYU. Held in conjunction with the Design Automation Conference, the panel featured an early-model multi-agent RTL design demo – code-based and powered by Synopsys tools that are in the proof-of-concept phase. But what struck me most wasn’t the code. It was the conversation that followed, centered around three questions that will shape engineering leadership in the agentic era:

1. What happens when every engineer becomes a manager of agents, from both a technology and leadership perspective?

2. What does it mean when a junior designer skips straight to system-level orchestration?

3. How do we reimagine engineering teams when a 10-person squad can operate at the velocity of 100 engineers today?

From Inspiration to Integration

Synopsys and Microsoft kicked off the panel with a prototype demo using early models of the multi-agent platform in testing, showcasing a fully autonomous flow that generated, validated, fixed, and revalidated RTL for a complex product design. Utilizing real code with Synopsys tools in the back end, this example demonstrated how capabilities come together.

This accessibility speaks to a major inflection point for engineers, and it was the drawing card for a packed house at the executive discussion. And while the demo ran autonomously, the team emphasized the importance of human-in-the-loop integration in real-world deployments. The agents are being designed to collaborate with engineers and help them move faster to market.
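To make the shape of that flow concrete, here is a minimal Python sketch of a generate, validate, fix, and revalidate loop that ends with a human sign-off. It is a toy under stated assumptions, not the Synopsys or Microsoft implementation: every name in it (Candidate, generate, validate, fix, run_flow) is hypothetical, and the "agents" are stubs that stand in for real tools.

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    """A design draft plus the issues still open against it (hypothetical)."""
    source: str
    issues: list = field(default_factory=list)


def generate(spec: str) -> Candidate:
    # Stub generator agent: returns a draft with two seeded issues.
    return Candidate(
        source=f"// draft for {spec}",
        issues=["lint: unused signal", "sim: reset mismatch"],
    )


def validate(candidate: Candidate) -> list:
    # Stub validator agent: reports whatever issues are still open.
    return list(candidate.issues)


def fix(candidate: Candidate, issue: str) -> Candidate:
    # Stub fixer agent: resolves one issue and records what it changed.
    remaining = [i for i in candidate.issues if i != issue]
    return Candidate(candidate.source + f"\n// fixed: {issue}", remaining)


def run_flow(spec: str, max_rounds: int = 5):
    """Loop until validation passes or the round budget runs out."""
    candidate = generate(spec)
    for round_number in range(1, max_rounds + 1):
        issues = validate(candidate)
        if not issues:
            # Clean validation still ends with a person signing off.
            return candidate, round_number, "ready for human review"
        candidate = fix(candidate, issues[0])
    # A fix that has not been revalidated is never treated as clean.
    return candidate, max_rounds, "escalate to engineer"


draft, rounds, status = run_flow("fifo controller")
print(rounds, status)  # prints: 3 ready for human review
```

The design choice worth noticing is that neither exit path lets the agents approve their own work: a clean run is handed to a person for review, and a run that exhausts its budget is escalated.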

Engineers as Agent Managers

That collaborative theme echoed throughout the panel, and each panelist stressed that human engineers will still hold the baton for silicon delivery. Bill Chappell, CTO of Microsoft’s strategic missions and technology, offered one of the most striking observations of the night on this topic.

“Everybody is now a senior dev – because you now have 100,000 virtual workers working for you, and you have to have that instinct to know when things are going wrong and be able to sign off on that,” he said. “And so, the ability to manage all of the things that are going to be able to be done is going to be the hardest thing.”

It’s a compelling redefinition of engineering. In the past, career progression often meant expanding from focus on one element of a chip to multi-sub-system and then full chip architecture. In the agentic age, it might mean graduating from writing simple instructions to orchestrating teams of specialized AI collaborators across complex designs.

Aman Joshi, vice president of design enablement and automation at Sandisk, explained it this way:

“Our...post-production test people always get this data that is very old. They're like, ‘Hey, your RTL doesn't match the documentation,’ and (in testing these early models), you can actually dive deep into the RTL and extract the information,” he said. “So you’re finding lots of very useful cases in that sense. So very productive, and also not only productive, very accurate, and also catching some of these problems.”

In practice, that means that AI has the potential to accelerate verification, improve documentation, and even reduce onboarding time for junior engineers. But it also demands a new kind of vigilance.

“It's very tempting today, with all these agentic things, you have an agent that...parses a…report, figures out the critical path, then generates the histogram, puts it into a slide, (and) sends it out in an email,” said Soumya Banerjee, senior vice president of ASIC design, CAD and methodology at Marvell Semiconductor. “But the worry there is, if the engineers stop thinking about those reports and don't look at it, what are they going to miss? And I don't think we are at that robustness level today to sign off on it.”

Building Teams for the Agentic Era

This comes with a key conclusion: the integration of agentic tools must transform how engineering leaders build organizations and develop the skills of early-career staff. Panelists from Microsoft and Arm emphasized a shift from centralized Centers of Excellence to cross-functional teams in which every engineer is expected to prototype, validate, and own more of the stack.

“There's a foundational shift in the shape of teams,” said Microsoft’s Chappell. “The PM role has foundationally changed.”

This shift demands both technical upskilling and a cultural willingness to evolve. Several panelists described senior engineers who’ve gone from writing every line of CAD code to overseeing the generation and validation of that code in real time as they’ve been testing these tools. They pointed to the fact that agentic automation redefines engineering jobs in a way that many engineers may not be prepared for because they are used to writing code themselves.

Panelists expressed clear concerns about skill atrophy, loss of engineering intuition, and the risk of over-automation. But the consensus was clear: organizations that prepare their teams for orchestration – not just execution – will be the ones that thrive and scale their design delivery.

Productivity: Tool or Trap?

As often happens when engineers congregate, the conversation shifted to how to measure the productivity gains delivered by agentic AI on engineering teams over time. While several companies projected 20–30% productivity gains, some leaders warned of “agentic sandbagging,” in which team members could underreport impact to protect future headcount. It’s also a question of how leaders use their engineering talent to reach further vs. simply reduce staff size.

“I will say it's a true cultural test for a company,” Chappell said. “Given (a projected) 30% more productivity across the board, what do you do with that? If you reduce your workforce, that's admitting that you don't know how to start new things. How well you can actually get into new fields and start new areas is going to be a true test.”

Others agreed that AI is not a replacement for the workforce, but a scaling mechanism. Teams will need to deliver more customized silicon, with smaller, more nimble teams, and ultimately customers benefit with more choice of solutions in the market.

“...More and more, we’re seeing in the marketplace that people want...a custom solution to their needs, and chip organizations will not scale if everything becomes custom,” said Kevork Kechichian, executive vice president of solutions engineering at Arm. “You can make that customization almost incremental on R&D teams and chip teams. That's where I see the value coming in, where you deliver something to a partner that seems custom to them, but you're benefiting from the scaling and all the tools that you put in place.”

From Roadmap to Reality

Synopsys’ roadmap targets L1 capabilities by late 2025 and early access to L2/L3 capabilities – such as autonomous static analysis agents, e.g. Lint agents – also by year's end.

These tools aren’t just changing how chips are built. They’re changing how engineering is taught, led, and imagined.

“Curiosity and confidence is the only thing that matters in the education process,” Chappell said. “That is what we need to be teaching. You don't really care what you’re learning – it's how you learn. You own the system. The system doesn't own you.”

The TechArena Take

This panel delivered more than a status check. It gave us a metric for readiness – both technical and organizational. Agentic AI is moving from whiteboard to workflow. Engineers are becoming orchestrators. And leaders are being called to reimagine how teams learn, structure, and scale. The brain trust on the panel, and in the room, reflected how urgent and important this topic is to the silicon arena. It also served as a case study for broader implications across job categories, one that I hope is treated with the same forethought these engineering leaders showed.

From my vantage point, this is the most exciting and consequential moment in engineering since the rise of EDA. And like all meaningful revolutions, it’s not about the tools – it’s about the people, the trust we build, and the futures we’re willing to imagine. I suggested that we hold another panel next year at DAC to gauge progress, and I can’t wait to hear how engineering teams advance with these powerful tools.

As I said onstage: It’s time to go invent the future.

Stanford’s Daniel Wu on Trust, Agents & the Future of AI

The AI landscape is evolving at breakneck speed, and my recent Fireside Chat with Stanford’s Daniel Wu revealed just how transformative this moment truly is. As we prepare for the AI Infra Summit, where Daniel will deliver a keynote, his insights illuminate an industry balancing unprecedented innovation with the critical need for trust and responsible deployment.

During our discussion, Daniel painted a picture of Stanford’s AI Professional Program that mirrors the broader democratization of AI knowledge. What began in 2019 as a single technical course has expanded into seven comprehensive courses serving physicians, executives, teachers, and product managers alongside software engineers – reflecting AI’s expanding reach across every sector.

Daniel emphasized four major trends reshaping the AI landscape. First is agentic AI, which he called “the clear star of the moment.” We’re witnessing a shift toward autonomous systems capable of reasoning, planning, and executing complex tasks. Markets and Markets projects the agentic AI market will grow from $13.8 billion this year to over $140 billion by 2032, a 40% compound annual growth rate.

The second trend, embodied AI, represents the physical manifestation of these intelligent systems. Companies like Tesla with Optimus and Figure AI are developing humanoid robots for warehouses, factories, and homes. Daniel noted that 2025 is positioned as the first year of mass production for industrial robots, with the World Economic Forum suggesting billions could be operating globally by 2040.

Supporting these advances is multimodal AI, which enables systems to process text, images, audio, and video simultaneously. This capability is critical for AI to operate in real-world complexity, with the market expected to leap from $2.5 billion in 2025 to over $42 billion by 2034.
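The growth rates implied by these market projections can be checked with the standard compound annual growth rate formula. The Python sketch below is illustrative only. It assumes that "this year" in the agentic AI projection means 2025, and the function name is made up for the example.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a span of years."""
    return (end_value / start_value) ** (1 / years) - 1


# Agentic AI: $13.8B (assumed to be 2025) to $140B by 2032.
agentic = cagr(13.8, 140.0, 2032 - 2025)

# Multimodal AI: $2.5B in 2025 to $42B by 2034.
multimodal = cagr(2.5, 42.0, 2034 - 2025)

print(f"Agentic AI implied CAGR: {agentic:.1%}")
print(f"Multimodal AI implied CAGR: {multimodal:.1%}")
```

Under those assumptions the agentic AI figures imply growth of roughly 39% a year, in line with the quoted 40% given that the 2032 figure is "over" $140 billion, and the multimodal figures imply roughly 37% a year.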

Perhaps most importantly, Daniel highlighted the trend toward trustworthy AI. A KPMG study revealed that 44% of US workers admit to using AI improperly at work, while only 41% are willing to trust AI systems. As Daniel said, “Building trust and building robust, ethical and reliable systems is not just about a trend. It’s an absolute necessity for any of the technology to realize its true potential. That’s also a core part of what I'm passionate about, and what I will be touching on in my keynote this year.”

When we explored AI’s most impactful applications, Daniel identified four transformative areas where AI is accelerating discovery at unprecedented scales.

- Science: AI can create a new era of accelerating discovery, with McKinsey suggesting AI could effectively double the pace of R&D.

- Health care: AI opens up new use cases like analyzing a person’s genetic makeup and lifestyle to recommend tailored treatments.