VAST Data Soars with Industry Heavyweights

With new industry collaborations, VAST Data accelerates their ambition as the AI data platform.

VAST Data has been on a tear. If you haven’t been following this company, you’ve missed a great story about transformation from storage platform to insightful data control for the AI era. Today VAST unveiled multiple industry collaborations that fill in details of their strategy and showcase VAST’s bold direction to re-define data storage and oversight from cloud to edge.

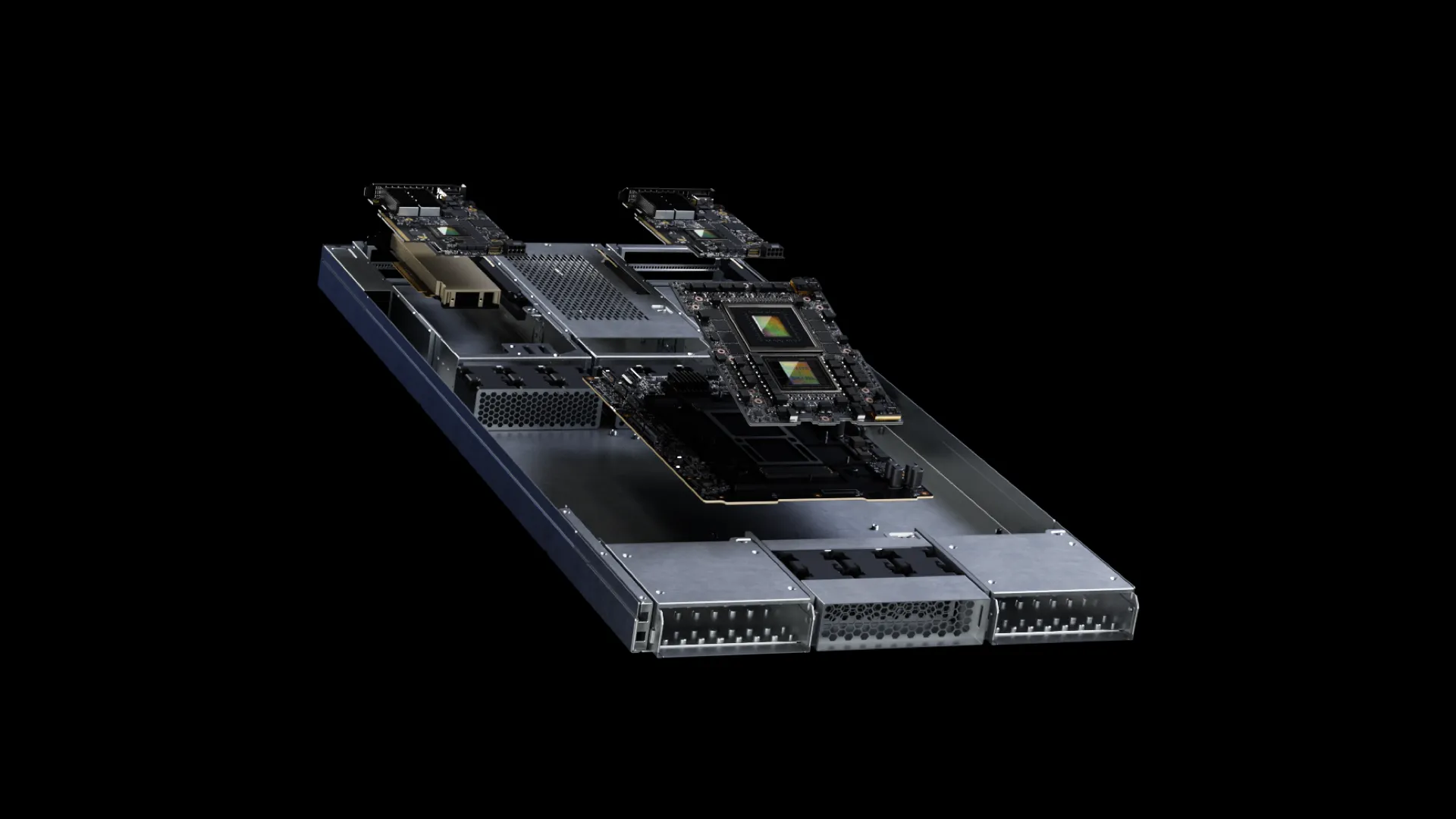

Let’s unpack today’s news. We’ll start with an announcement on the new VAST Data AI platform featuring NVIDIA DPUs. This is a fundamentally different take on data, one that redefines platform architecture as a powerful GPU platform complemented by BlueField DPUs, each running a containerized version of the VAST OS that embeds storage and database processing directly into the GPU platform.

What you’ll notice is missing is any mention of x86 architecture, a design choice that eliminates the concept of the CPU head node. VAST points to the increased efficiency of this design, claiming a power utilization and footprint reduction of up to 70% and a “net energy consumption” savings of up to 5% vs. previous platforms featuring VAST distributed data services and NVIDIA silicon.

VAST is also leaning into the core capabilities delivered with this platform: increased QoS through direct reads and writes to shared namespaces across the cluster, enhanced zero-trust security through use of industry standard NFS, SMB, S3 and Apache Arrow service delivery, and the addition of native block storage to complement historic file and object storage.

So where do you get this platform? Enter collaboration announcement number two, a comprehensive collaboration with Supermicro to fuel system delivery for the AI factory. Here, the two companies point to delivering platforms that scale to exabyte level clusters. VAST and Supermicro promise support for the entire data pipeline from data prep to data training with VAST’s DataBase and DataStore solutions. Supermicro is known for swiftly getting innovative platforms to market, so I’m excited to see what they actually deliver to support this bold move for both companies.

What’s TechArena’s take? The AI training game is moving from GPU-centric to GPU-native, with new architectural frameworks fueling these massive clusters. VAST Data’s history in high performance computing and their DASE (disaggregated shared everything) architecture place them as a central player for organizations looking to integrate distributed data into AI training. AI is the killer app of the era, forcing re-definition of the fundamental constructs that have fueled computing for the last thirty years, and this magnitude of disruption will reshape the infrastructure industry. Based on recent history and today’s announcements, VAST Data is positioning itself well as a disruptive force. I can’t wait to see more from the company in 2024.

The Demand for Memory Innovation to Fuel ML with Netflix

TechArena host Allyson Klein interviews Netflix’s Tejas Chopra about how Netflix’s recommendation engines require memory innovation across performance and efficiency in advance of his keynote at MemCon 2024 later this month.

Advancement of the Network with Roy Chua

TechArena host Allyson Klein chats with Avidthink Principal Roy Chua about advancement of the network across 5G, edge, AI integration and more as the two share insights from MWC24.

Exploring the Leading Edge of Private Wireless Security with CTOne

TechArena host Allyson Klein chats with Hideyuki Tsugane, Vice President of Business Development at CTOne, about his organization’s vision of private wireless security and the status of private 5G deployments as his company readies for a hockey stick of growth.

MemCon 2024: Memory Innovation Sits at the Heart of AI Delivery

When you consider the massive investment in industry innovation based on AI, it doesn’t take too long to realize that cutting edge AI models are being constrained by their underlying infrastructure. While processor and accelerator advancements garner the lion’s share of the headlines, a key bottleneck to consider is the memory hierarchy. The faster data can be delivered for AI training, the faster a new large language model can be delivering inference to fuel a new workload. While servers continue to scale the amount of standard DRAM capacity - notably AMD’s industry leading 6TB of memory capacity across a dozen channels - the leading edge is seeking lower latency and more alternatives than standard configurations. In fact, cloud service providers have pointed to memory as one of the key infrastructure gaps facing AI training moving forward.

This industry challenge is why MemCon is a must attend event on my 2024 roadmap and one that the TechArena is delighted to sponsor. Pulling together some of the leading data center operators and the leading edge of memory innovation, MemCon features two days of discussions on the requirements for memory, the latest memory innovations, and how the industry should work together to bridge the gap for the insatiable demand represented by AI workloads. Highlights of this year’s event include an opening keynote from Microsoft’s Zaid Kahn, who also spoke at last year’s event. Since that time Zaid has become the chair of the Open Compute Project and continued his vocal evangelism for infrastructure innovation, including in the memory arena. Joining Zaid from the operator perspective are speakers from Netflix, EY, Oracle, Shell, Roche, Berkeley Research Lab and Los Alamos National Labs. They’ll be joined by speakers from across the industry and from the industry consortiums shaping the standards that will fuel future memory innovation. Collectively, they will highlight the intersection between high performance computing and AI cluster development as well as broader scale opportunities with increased memory capacity, tiered memory with CXL, and more.

Whether you’re in the memory arena, run a data center and feel the pain of memory bound workloads, or are delivering platforms to the market and need to keep pace with the latest silicon advancements, prioritize your schedule to attend MemCon this year. As a media sponsor, we’re happy to deliver a registration discount code TECHARENA15 for 15% off registration. And if you’re going to be at the show, please reach out to meet up or even be on the TechArena podcast.

Re-architecting Patient Care with Physia

TechArena host Allyson Klein chats with Physia about their generative AI based patient care platform and how they aim to create a new AI + doctor model to improve patient care and transform the medical industry.

Accelerating Simulated Design to Fuel Innovative 5G and Edge Use Cases with Ansys

TechArena host Allyson Klein chats with Ansys Chief Technologist Christophe Bianchi about his company’s mission to deliver design simulation across industries, how the communications arena represents an opportunity from silicon to systems of systems, and how AI is accelerating capabilities in Ansys solutions.

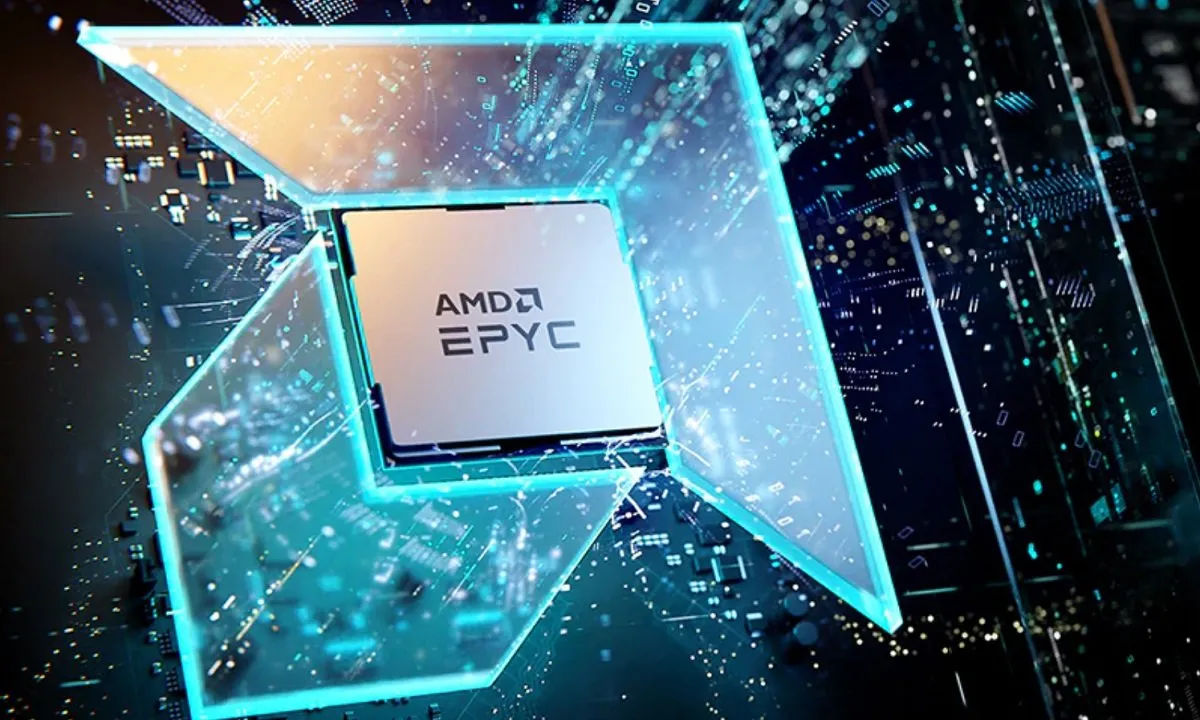

AMD EPYC Dials Up Network and Edge Performance

I kicked off my Mobile World Congress reporting today with a fascinating interview with AMD’s Kumaran Siva on his company’s strategy for network, the intelligent edge, and AI. Kumaran leads AMD market development across the strategic industry segments where EPYC processors shine, and I was keen to get his views on 5G deployment progress and the key use cases and technology developments that will be featured at MWC this week.

At this year’s congress, all eyes are on the edge and specifically progress in adoption of VRAN solutions. These solutions require high performance and energy efficiency, especially in Europe where operators have been hit hard with spiked energy costs. At last year’s MWC, initial implementations of VRAN, representing the final frontier of network virtualization, were one of the hottest topics.

In our interview, Kumaran was quick to highlight AMD’s progress in 5G and increasing customer interest in EPYC CPUs for deployment success. Those who have followed the TechArena know that I’m somewhat obsessed with the efficiency and flexibility of chiplet architectures, so it may come as no surprise that AMD’s chiplet design enabled them to speed Siena processors to market, delivering the performance, efficiency, and security dialed in for this market. Kumaran confirmed that the Siena series has garnered operator attention by delivering low latency, high bandwidth connectivity at the edge. Kumaran went further, stating that he sees more uptick in edge deployments due, in part, to the need for high speed connectivity to fuel AI inference at the edge.

What is AMD’s approach in network and telecommunications? It starts with deep collaboration with both partners and operators. Kumaran called out AMD’s partner-centric approach as something uniquely prioritized here at AMD vs. other stops on his career journey, and a central driver of the company’s continued gains in market share with their EPYC product line. As 5G continues to proliferate, I’d expect to see AMD continue to make inroads, especially where single core performance and performance efficiency are required for workload delivery.

As for Kumaran, he’s interested to see the industry conversation on AI in Barcelona and the transformative juggernaut that continues to drive change in the comms arena. While AI will influence 5G workload evolution, its true force will be felt in 6G, where AMD plans to be deeply engaged in standards finalization.

To learn more about AMD’s engagement in the telecommunications arena, check out our interview, and to learn more about AMD’s 8004 series processors and the entire AMD EPYC processor lineup, visit https://www.amd.com/en/processors/epyc-server-cpu-family

Using an AI Powered Nose to Detect Fires Before They Start with Dryad Networks

TechArena host Allyson Klein chats with Carsten Brinkschulte, CEO of Dryad Networks, about his company’s mesh network solution aimed at detecting forest fires before ignition, how his team tapped IoT and AI technology to develop their solution, and his aim to deploy hundreds of thousands of sensors in forests across the globe in 2024.

It’s a GPU World for High Performance RAN

Whenever I talk to Rajesh Gadiyar, VP of Engineering for Telco and Edge at NVIDIA, I walk away with better insights about the state of the network arena and where GPUs fit into the future of network deployments. Rajesh is the type of technologist who beams when talking about new industry innovation or when sharing how a particular challenge has been overcome by engineering advancements, and you can’t help but catch the wave of excitement when talking to him.

I was lucky enough to catch up with him in advance of MWC to hear about the latest advancements NVIDIA has made in the network. It’s been about a half dozen years since NVIDIA made its ambitions known in this arena, coming to MWC with claims of GPU superiority for the RAN. At the time, many dismissed the move as mere TAM expansion aspirations for the GPU vendor. Of course, the world’s changed a lot in that time, and NVIDIA’s Aerial platform has emerged as a serious contender for virtualized RAN infrastructure. Late last year, NVIDIA unveiled the world’s first GPU-Accelerated 5G Open RAN solution with NTT DOCOMO, pointing to TCO improvements of 30%, network design utilization improvement of 50%, and a halving of power consumption as compared to NTT’s legacy solution.

While these advancements are impressive and certainly give NVIDIA a claim to legitimacy in the space, the topic on everyone’s lips in Barcelona this week is the infusion of AI into the network. AI is being discussed as a way to deliver improved service agility, drive deeper service and network automation, and transform how networks operate overall. Operators are clamoring to integrate LLMs into everything from customer support to billing functions while seeking AI solutions to drive more efficiency into the network and help unlock the ROI from incredible investments in 5G network infrastructure. Looking to the future, those who steer 6G standards efforts are seeing seamless AI integration as a core pursuit within many standards workgroups. And with this, the path for NVIDIA’s deeper engagement in the network becomes clearer, as AI-transformed workloads immediately become at least candidates for GPU acceleration.

In our interview, Rajesh assured me that NVIDIA plans to be at the heart of 6G standardization efforts as they work to support their growing ecosystem with the Aerial SDK and cloud support to fuel broad innovation. Watch this space for more information about advancements of NVIDIA in the network, and if you want to learn more about Aerial and what NVIDIA’s intentions are for the future of the network, attend GTC 2024 next month, where Rajesh and his team will be on hand to share the latest updates.

Human Creativity, AI and the Future of Film with Artefacto’s Anna Giralt Gris

TechArena host Allyson Klein chats with Artefacto’s Anna Giralt Gris about her views on the future of film and the impact that AI will make in re-shaping one of humanity’s most creative mediums.

Exploring the Intelligent Middle Mile with Ribbon Communications

TechArena host Allyson Klein chats with Ribbon Communications’ Jonathan Homa and David Stokes about the progress of 5G proliferation and the importance of the adoption of the intelligent middle mile in reaping full benefit from 5G services.

Architecting the Future of the Network with Samsung’s Yue Wang

TechArena host Allyson Klein chats with Samsung’s Head of 6G Advanced Network Research and one of the industry’s top women in 6G, Yue Wang, about her vision for the next generation communications standard, what technical forces are shaping its progress, and how the industry stands to capitalize on its fruition.

Riding the Visual Edge with Varnish

TechArena host Allyson Klein chats with Varnish CTO Frank Miller about his company’s innovation in delivering media at the edge.

Dynamic Spectrum Allocation and the Future of Digital Services with DGS CEO Fernando Murias

TechArena host Allyson Klein delves deep into dynamic spectrum allocation and new government policy that opens up innovation in how spectrum is accessed for all with DGS CEO Fernando Murias.

NVIDIA Extends AI Supremacy in the Network

TechArena host Allyson Klein chats with NVIDIA VP Rajesh Gadiyar about how his company is riding the AI wave into the heart of the network and edge with innovative platforms like the company’s powerful Aerial VRAN stack.

Cellnex Pushes Cross Border 5G Limits with 5GMED

One of the best aspects of Mobile World Congress is uncovering interesting use cases and deployments of the latest technology. That’s why I was so excited to talk to Cellnex’s Jose Lopez Luque about his company’s engagement with the EU-funded 5GMED project. 5GMED is testing resilient connection across borders for low latency, high bandwidth service delivery. Cellnex is a leading tower provider and a core participant in 5GMED, focused on the France-Spain border.

Why is this a focus point? 5G’s full promise delivers increased performance at the edge for services like autonomous driving or in-vehicle infotainment. Those of us who travel internationally are all too familiar with the text message that comes from crossing a border, naming the new carrier that is providing service, and the small lag in connectivity that precedes its arrival. It’s this small lag, a tolerable nuisance for text and talk connections, that becomes a deal breaker for more demanding applications like video transcoding at the edge. If you happen to be watching a streaming movie as a passenger on a train or in a car crossing a national border, you’re likely going to experience jitter at best or a dropped service at worst. When you consider the low latency requirements of real time autonomous control of vehicles, you can see that even the smallest lag in connectivity can represent potential disaster.

This is what makes 5GMED so exciting as explained by Jose. With companies working together, these most demanding of network edge services are being put to the test. Cellnex is in a unique position to deliver core capability in this space as borders are likely rural environments without a tremendous amount of dedicated operator connections. A tower operator like Cellnex can deliver shared bandwidth to multiple providers delivering the connectivity and performance users need while keeping costs manageable for all providers.

With the successful delivery of 5GMED, we can expect broad proliferation of 5G access points across European borders in the near future opening the door for things like approval of autonomous vehicles in the EU. To learn more about what Cellnex is delivering in this space, check out our interview, and to learn more about 5GMED, visit the group’s website.

AMD Extends Network Performance and Efficiency Leadership at MWC24

TechArena host Allyson Klein chats with AMD’s Kumaran Siva about his company’s gaining momentum in telco network and edge deployments fueled by EPYC processors and the Siena products uniquely designed for these environments.

5GMED’s Cross Border Service Resiliency with Cellnex’s Jose Lopez Luque

TechArena host Allyson Klein chats with Cellnex’s Jose Lopez Luque about the EU-funded 5GMED project testing service resiliency across borders to fuel low latency use cases like autonomous driving, and how his company is poised to deliver efficient and scalable services for broad deployments in 2024.

Curving Towards a Network Future at #MWC24

The great architect and artist Antoni Gaudi once said, “There are no straight lines or sharp corners in nature. Therefore, buildings must have no straight lines or sharp corners.” I love Gaudi’s architecture: the elegance of curves, the simplicity of the statement that the world is not linear but nuanced and circuitous. As we descend on Gaudi’s city of Barcelona, one that is literally littered with many of his master works and infused by his spirit wherever one turns, his inspiration can be felt in this present moment in the communications arena.

I’ve been attending Mobile World Congress for a decade and have seen the evolution of network virtualization and the promise of 5G. We’ve discussed software defined everything, the breaking of stovepipe infrastructure, and the slicing of networks. And yet, just like a Gaudi exterior, we find ourselves curving towards a destination that we can’t quite reach. The true promise of 5G has been less than fully realized, and certain promises of the standard, including full virtualization of the RAN, delivery of private 5G, and even cloud native network provisioning and automation, remain far from ubiquitous deployment. Telecom operators, who have collectively invested billions in 5G infrastructure, are still seeking the revenue streams that will deliver the ROI they’d aimed for in building out this technology.

The question remains: will 2024 be the year that we finally see 5G hit broad scale across all of its promise? Will enterprises push through to private 5G deployments, will RAN finally be virtualized, and will cloud native NFV finally be deployed across all network services? And the million-dollar question…will AI be the disruptive force to spark this final act for 5G supremacy?

The TechArena will be engaging with companies from across the communications and edge landscape to search for progress, evaluate industry players for breakout stars, and gain proof of the hoped for promise. We’ll look at some pretty ridiculously cool use cases featured in Barcelona, we’ll delve deep into the possible future with 6G, and we’ll sketch out our views on the state of the network and edge in a tsunami of blog posts and podcast interviews befitting the king of communications conferences. And hopefully, we’ll find some time to pay homage to Gaudi himself. Watch this space for the latest from Barcelona, and if you’ll be at MWC24 next week, please connect with us!

MemryX Delivers AI Performance at the Edge

TechArena host Allyson Klein talks to MemryX VP of Product and Business Development Roger Peene about how his company is transforming AI at the edge with their silicon and how we’re sitting in an AI revolution.

MemryX Making a Case for AI Acceleration at the Edge

Yesterday at AIFD4, I wrote about Intel’s latest musings on right sized silicon for AI inference and how the company positions Xeon processors vs. GPU alternatives. This was the latest of many publications on this platform regarding the renaissance of silicon innovation in the AI arena, and one that I expect will be a feature throughout 2024 and beyond. One company that I’ve wanted to talk to in this space is MemryX, a startup founded at the University of Michigan and positioned squarely in delivering AI acceleration of visual processing at the edge.

Why is this important? Once again, we come back to data gravity. While massive AI clusters in the cloud may be the heavyweight champs of AI, the movement of data for AI inference is oftentimes impractical, expensive or downright unfeasible given latency considerations. Instead, solutions require fast inference at the edge that can be delivered within the constraints of a vast landscape of edge environments. This calls for solutions that offer both performance and watt-sipping energy consumption.

MemryX has designed a solution that fits that bill. I caught up with VP of Product and Business Development Roger Peene, and he described a solution that is up to 100X more efficient than a CPU and 10X more efficient per watt than a GPU. What impresses me about MemryX is that they know what they want to be! They are squarely focused on a high-demand workload in the rapidly advancing edge infrastructure market. And unlike many startups in this space who talk a great PowerPoint game, they have actual silicon! In fact, they received their first silicon late last year and have it up and running in labs today, with customers to follow in the near future. I’m delighted to see the move to real product delivery in a space so desperate for solution alternatives to fuel customer demand, and I can’t wait to hear more from Roger and the team as solutions reach expected customer trials and deployments in the months ahead.

VAST Data Upends Storage in the AI Era

The heart of AI is utilization of data, and since the first kilobit of data was stored, we’ve been challenged with movement of data to the right place at the right time. As we’ve entered the AI Era, the concept of a data pipeline has evolved to describe the steps of preparing data for AI training. Without this prep, training will be flawed at best. The data pipeline itself is not sexy work. Think of it as gathering the right ingredients before one cooks a delicious meal. And like much in life, the hard work is necessary to get to that sublime experience. Organizations are challenged with data gathering and prep at scale by the hard limits of traditional storage solutions and distributed data locations.

Enter VAST Data. The team at VAST recognized this opportunity in the creation of their massively scalable NAS solution that provides intelligence injection and oversight for both structured and unstructured data. First gaining traction in the HPC arena whose architectural foundations have formed the structure for AI training clusters, it makes natural sense that VAST would offer the same value for the speed, scale, and structure that these clusters require. And they have the data pipeline down cold…see this image from their AIFD4 presentation, one of the clearest depictions of the AI pipeline that I’ve seen.

What makes them really stand out to me is what they’ve delivered to manage data across distributed environments with VAST Data Spaces, which helps enterprises get their arms around their data on prem, in the cloud, and at the edge.

I first engaged with the Vastronauts at SC 22 at the launch of the TechArena, and there’s a reason I started this wonderful journey with their inclusion. What VAST is building represents the future of how we’re going to compute, and their ability to arrange, organize and compile data in the way organizations want is just the beginning of the VAST Data story. The team and their partners are driving innovation on the VAST Data platform at a ridiculous pace, and I expect to be covering a lot more in this domain in 2024. If they aren’t already, place VAST Data on your must watch list, and if you’re struggling with your data pipeline, check out their solutions.

Intel Modestly Lays its Case for AI

Today at #AIFD4 is Intel Day! Color me excited to hear about how the folks at Intel are pursuing AI workloads in 2024 given the acute innovation across the silicon arena in this space. Disclaimer: for readers unaware of my background, I spent 22 years at Intel, including ownership of Xeon and AI marketing, so I have a special place in my heart for this groundbreaking platform and its role in cloud to edge performance. And I don’t think it’s a surprise to anyone that Intel has been taking it on the chin in the AI arena over the past few years. They’ve lost core CPU performance leadership to rival AMD, while NVIDIA at the same time has claimed AI silicon supremacy with their GPUs delivering the training required to unleash LLMs like ChatGPT.

Revenues and profit have suffered, and many are talking about the era of CPU centric computing coming to an end in the era of AI. At the TechArena, we’ve written extensively about the budding silicon industry developing GPU alternatives for both AI training and inference and across model sizes, power constraints, and locations from cloud to edge. So…what is Intel doing to right the ship?

Today Ro Shah, Xeon AI Director at Intel, led the charge with a discussion of where CPUs perform best and where accelerators (presumably including Intel’s own offerings) would be better tools for the job. He described an LLM size of 20B+ parameters as the magical divide in this CPU-versus-accelerator decision and outlined the evolution of AI acceleration embedded on Xeon processors over the years, including the most recent integration of Intel AMX technology for INT8 and BF16. The result was a shockingly modest positioning from the market leader on where the CPU will perform. Many of the delegates responded to this modesty with follow up questions…because there must be more, right? With accelerators being developed in the “CPU zone” and targeting power sipping TDPs, we are left wondering whether the magical divide is an accurate depiction of the market and where it’s going. And does it leave some of the real value that is Intel on the table?

The world today still largely runs on x86, and IT departments are ridiculously comfortable deploying Xeon processors, even today. That’s what you get when you’ve delivered consistently in market for decades. As we see the proliferation of AI extend from the sophistication of large cloud environments, and the engineers who live there that build AI models at breakfast, to the mid-market, organizations need an easy button for AI inference and Intel is uniquely poised to offer this to customers. They’ve delivered the investment in integrated AI acceleration and tools like OpenVINO to get initial AI solutions off the ground, and in so doing garner continued customer loyalty. They are also delivering Intel Core platforms for client and winning the perception game of AI optimization on these platforms which are integrating more and more inference over time.

I would like to see them claim more here, and I’m looking forward to seeing more from them as they continue to deliver both advancements in the core CPU and acceleration elements of their portfolio. I’m also excited to hear more from the other players in the industry on how accelerators will target the inference arena, how NVIDIA will leverage GPU supremacy for broader workload targets, and how AMD will leverage its performance to gain ground in broad market deployments. 2024 is going to be an exciting ride! Watch this space.

Google Cloud Talks AI Trends at AIFD4

Google knows a bit about AI. After all, their data stores include every search ever done since the birth of their search engine, and they’ve been on the cutting edge of AI integration across their business from the earliest days of machine and deep learning integration. Today’s speakers from Google Cloud introduced their talk by positing that AI is the next platform shift…from internet to mobile…and now AI. It’s an interesting analogy coming from a foundational force of the Internet age, and they leaned into this, pointing to the acceleration of generative AI and how many of the innovators in this realm are choosing Google Cloud as their foundation. They’re taking advantage of Google Gemini, the company’s foundational AI model that works across the Google-verse and was introduced to market at the end of last year. Yesterday, Google delivered Gemma, an open model based on the same technology as Gemini and delivered by the team at DeepMind. It’s fantastic to see Google take this step, and it is consistent with their history of open sourcing some of their best engineering to the benefit of all (Kubernetes comes to mind as a disruptive open source innovation delivered in a similar manner).

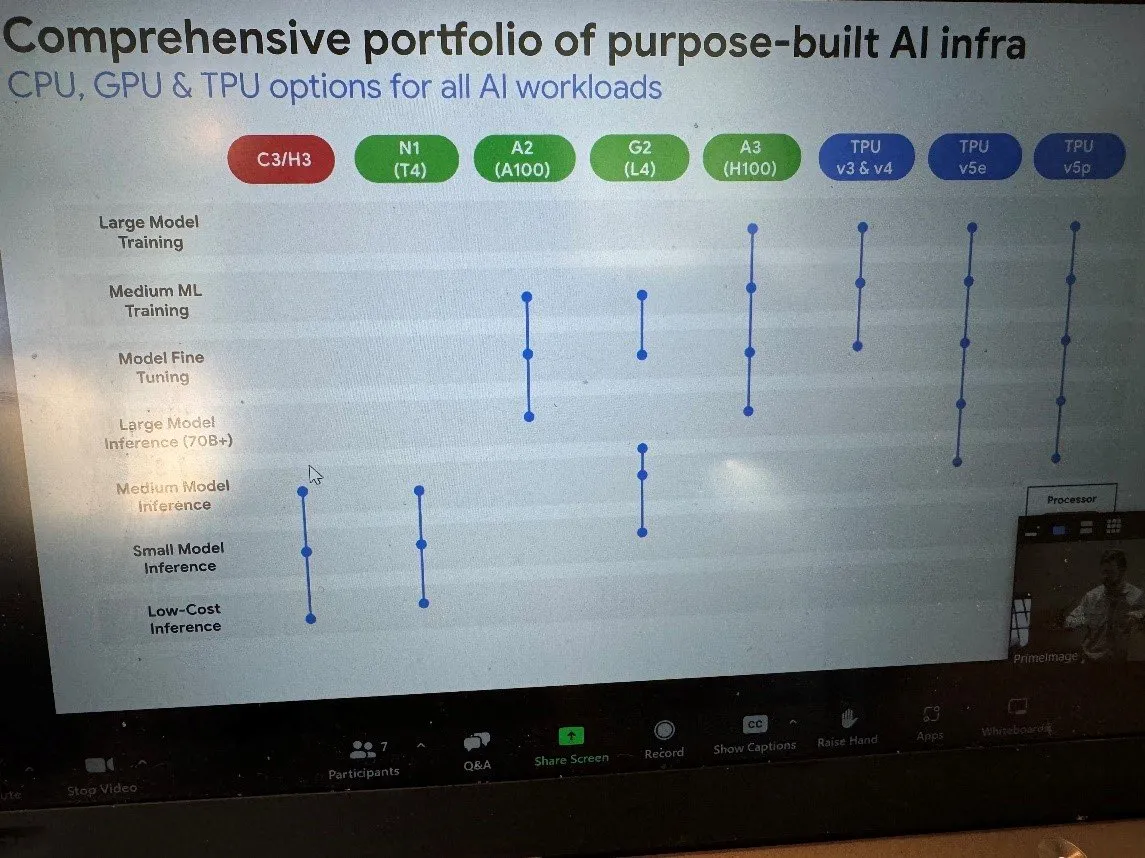

How is Gemma going to roll out? There’s a natural integration with Kubernetes that supports scaling up to 50K TPU chips and 15K nodes per cluster and that extends support for both training and inference for a broad AI ecosystem. And while Google is talking during #Intel day at #AIFD4, they did introduce a graph showcasing their extensive support across major silicon environments.

Google went further, calling out that a lot of AI still runs on CPU and noting that Intel’s AMX technology extends the capability of CPU performance for different AI models. Model size is the consideration that will fuel platform choice across CPU/GPU/TPU, with Google aligned with Intel’s positioning of smaller models being the natural domain for CPU deployment. Where do Google’s own TPUs play? Google positions them at the other extreme for very large models supported by PyTorch efforts within the company. Now in their fifth generation (they’ve been delivering TPU custom chips since 2018), TPUs are available to customers on the Google Cloud platform. This delivery fits with the frenzy of AI silicon development and showcases Google’s end game in this space. I’d expect to see more emphasis here from all large cloud players who prioritize their own silicon delivery with their own AI training models, and as this landscape evolves I wonder if we’ll continue to see a multi-architecture support slide or one that more fully leads with bespoke custom silicon offerings.
