How Security Tech has Evolved to Make Us Safer (or not) Since 9/11

“I.N.T.E.L.L.I.G.E.N.C.E. is down! I repeat, we have no I.N.T.E.L.L.I.G.E.N.C.E.!”

- Lisa, Team America: World Police*

Numbers and dates sometimes seem to take on an oversized significance. One could posit that the random patterns AI sometimes finds in a stream are the equivalent of the random patterns burned into the human noggins by repetition, creating reputation. This could lead to a topic for another time: does human bias lead to implicit programmatic bias by accident? Only the ones and zeroes know, and those got buried in the compiler eons ago in computer years.

Anyway, some date combinations evoke visceral reactions among subsets of the populace. Drop a 12/25 into a Western Culture discussion, and the twinkly lights and a sudden introduction of forestry into the household take mental hold. In China, mention of 8/8 sends the wedding planner industry into a tizzy. Some dates are less about happiness. In former days, a mention of 12/7 (though they called it December 7th back then) brought out the somber reactions of those who remembered when our nation was lured into war by the attack on Pearl Harbor. Though fading, that pattern still evokes a response.

These days, the date of today’s publication, 9/11, seems to resonate in the minds of many. It’s fresh in the cultural mind, and the global implications of the day a bit more than two decades ago keep washing ashore like some geopolitical flotsam. Among us, the tech weenies, a lot of the response drifted to the feeling that technology needed to create change to protect the innocent. We’re only accidental technocrats, after all. Two subjects drift to mind, proving that patterns evoke patterns.

One that we can address later in detail was the realization that redundancy and continuity involved more than putting the backup tapes in the basement of the same building. The needed push of number-crunching close to the trading floor (someone wrote about distance and latency recently, can’t remember where…) was fighting the pull of real-estate values and commute times. One firm’s bright techie idea of building some redundancy across the Hudson in New Jersey led to copycats that did the same. That migration alone likely shortened the closure of the largest markets to six calendar days from the months it would have otherwise taken.

On another vector, though, the fairly new power the computer industry was feeling resonated off the political firewalls: “We can use technology to make us all safer against global threats.” The bright engineering minds educated in the 30-year trough of relative international peace saw the opportunity for sea change. Even as we said it, the creep of horror at what technology as a tool might unleash sowed the seeds of its own inertia.

Yer Humble Author (YHA) remembers not long after the infamous date visiting one of the largest database vendors in the world with a shiny new concept server. Said system would throw all of its 32 threads across eight processors at a then-incredible 8TB of DRAM. It was 20 years ago, you stand on the shoulders of ants, friends. Rhetorically, YHA asked the team of think-biggers what Homeland Security could do with an 8TB in-memory database. The conversation that followed was interesting, and a little scary, though the implications seemed to point to the positive…if you squinted.

Now that a quick trip to the open-source Gits and the virtual card swipe at Amazon provides everyone similar capability, how has technology made the globe safer against the shadowy baddies? Well, many of the apparent changes in safety and security seem to be fractional, in the diddly over squat range. Technology purchasing in the hopes of a more secure globe certainly absorbs the tax dollars of many, but our tools don’t appear to have created that vital change that moves us into the realm of 60’s Star Trek unity. Some of us want the transporter, airports really suck post-9/11.

And therein lies the conundrum. Technology is a tool. The redundant tools that saved the backsides of the finance industry aren’t all that different from those analytics tools that get tossed at global metadata for security. And yet, the conflicting thought processes – political of all stripes, apolitical liberty, the need for consensus of committee, trying to explain all this to leadership who stopped at algebra – grind some tools to a nub of inefficiency. The tool is not to blame, it’s the users. Accidental technocrats, untie (sic).

Hope should never be lost where technology is available. The leaps of innovation repeatedly outperform the bloat of human decision making. And maybe the human bias projecting into machine bias is a re-reflection of the same human bias. We’d probably just feel better on some digitally-significant days if we didn’t always stand in our own way.

*(Seriously, that might have been the only quote from the movie that could be used in a family-friendly space. But now that you’re at the end, you know that’s the only movie we could have used.)

Ayar Labs' Mark Wade on Optical I/O Boosting AI Performance

Mark Wade, CEO of Ayar Labs, explains how optical I/O technology is enhancing AI infrastructure, improving data movement, reducing bottlenecks, and driving efficiency in large-scale AI systems.

Targeting the Next Unicorn with Hitachi Ventures’ Gayathri Radhakrishnan

Gayathri Radhakrishnan knows how to spot differentiated value and understands well the difference between innovative technology and well-designed PowerPoint.

As a partner at Hitachi Ventures with a deep pedigree in venture capital, she’s a savvy expert in valuation of what comes next. This is why I was so excited to catch up with her at this week’s AIHW & Edge AI Summit in the Valley. The AIHW conference is many things: a landscape of silicon innovation, a trend source for AI model development, and also a hub for venture deals in the making as startups showcase their new tech and Silicon Valley investors evaluate their offerings. I wanted to check in with Gayathri on the state of the AI market, what areas of innovation will be garnering venture investment in the future, and how she sees the landscape solidifying as we enter the second half of the decade.

Hitachi Ventures has made a few moves of late in the AI space, most recently announcing investment in Archetype AI, Ema, Strikeready and Trustwise. Their portfolio shows an acute focus on mindful investment in use cases that will drive real enterprise value, fitting with Hitachi’s overarching stable approach to the tech arena. Gayathri shared that evaluation for real innovation is nothing new, harkening back to the cloud era rife with startups that were delivering virtualization solutions and branding them cloud. AI is no different. There may be fantastic analytics solutions under development, but ferreting out what is truly using machine learning, what is truly automated, is the key. That metric, Gayathri explains, is based on what layer of the stack is in play and what unique value is required for that layer of innovation to disrupt in the market.

Gayathri and her team are also surveying the infrastructure landscape for innovation in efficient delivery of computing to fuel AI. With US data centers forecast to consume 9% of the world’s energy by 2030 alone, it’s no surprise that this focus on efficiency is a priority. She underscored the push and pull in the market between customer demand for more efficient IT and industry engineering investment to deliver. This starts at the boardroom and C-level and trickles down to IT vendor conversations, influencing both disruptive tech evaluation and potential M&A moves by large infrastructure and cloud players on startup infrastructure solutions.

All of this innovation and investment is ultimately focused on delivering value to the enterprise. Gayathri stressed Hitachi Ventures’ broader purview across industrial markets and the need for enterprises to adopt this new powerful technology responsibly, with focus on safety and reliability required for the solutions enabled by its use in the marketplace. While she sees continued advancement of large AI models and breakthrough technologies like AI agents, smaller model integration into reliable use cases will be a feature for 2025.

If you’re at AIHW Summit this week, connect with Gayathri to learn more about her perspectives and be sure to check out her panel, Industry Landscape Overview: Funding Trends & Emerging Business Models in Generative AI Start-Ups, & Enterprise Adoption Patterns, featuring insights from Applied Ventures, Silicon Catalyst Ventures, and NGP Capital.

Ellison Delivers Vision for Enterprise Adoption of AI

It’s Oracle CloudWorld week, and I was excited to hear from industry legend, Larry Ellison, on his view of the future of computing and the AI era. Oracle holds a distinct view of the enterprise and adoption of technology, so there are likely very few people who can project enterprise adoption curves better than Larry.

The keynote did not disappoint. Larry emphasized Oracle’s vision of AI for the enterprise, where practical AI solutions are designed to address real business needs. He highlighted the integration of generative AI across Oracle’s cloud applications, with tools supporting functions like finance, HR, marketing, and customer service.

This was less the splash of the OpenAI crowd and more meat and potatoes for grizzled IT veterans. Larry emphasized that Oracle’s AI focus is on transforming business processes, not just hype-driven tools, and delivering AI-driven automation at scale.

Larry also spotlighted Oracle Cloud Infrastructure (OCI) as a trusted platform, built to handle sovereign cloud needs and ensure secure, region-specific data processing. This commitment to AI readiness has positioned Oracle as a trusted provider for industries like healthcare, finance, and government. Oracle’s approach underscores its reliability and adaptability in helping enterprises deploy AI efficiently, combining performance with the security enterprises demand.

One of the most compelling aspects of Larry’s speech was the focus on vector search, a method Oracle uses to process large volumes of unstructured data, seamlessly embedding AI into database environments like Oracle Database 23ai. This ensures that enterprises don’t need to move their data to leverage AI — AI can operate directly within the database environment, optimizing workflows for speed and scalability.
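For readers who want a feel for what vector search does under the hood, here is a minimal sketch that ranks a few documents by cosine similarity to a query vector. The embeddings, the document names, and the `top_k` helper are all invented for illustration. This is not Oracle's API, and a production database would do this work inside the engine with purpose-built indexes rather than in application code.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


def top_k(query, documents, k=2):
    """Return the k (name, score) pairs most similar to the query."""
    scored = [(name, cosine_similarity(query, vec))
              for name, vec in documents.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical 3-dimensional embeddings; real ones have hundreds of dimensions.
DOCUMENTS = {
    "invoice_policy": [0.9, 0.1, 0.0],
    "hr_handbook": [0.1, 0.9, 0.1],
    "expense_report_faq": [0.8, 0.2, 0.1],
}

if __name__ == "__main__":
    query = [1.0, 0.0, 0.0]  # stands in for an embedded finance question
    for name, score in top_k(query, DOCUMENTS):
        print(f"{name}: {score:.3f}")
```

The two finance-flavored documents rank ahead of the HR handbook because their vectors point in nearly the same direction as the query, which is the whole trick: similarity of meaning becomes closeness of vectors.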

Oracle’s generative AI technology is geared to simplify complex tasks, automate workflows, and enhance decision-making. For example, Oracle Fusion Applications integrate AI for tasks like project proposal generation, strategy development, and content summarization, tailored to support business functions directly.

One thing I thought was interesting was Oracle’s ambition to follow a similar path with AI as it did with the cloud — building a trusted ecosystem that delivers real value for enterprise applications. Larry noted that while Oracle’s focus isn't on flashy AI tools for consumers, its enterprise-first strategy offers the reliability and scale required for mission-critical applications, very similar to its path with delivery of cloud services that met specific enterprise requirements.

What’s TechArena’s take? I think of a lot of companies before Oracle in terms of pure AI innovation. Those born on the cloud who lean forward into the tip of innovation will likely deliver the most impactful transformative tech in the near term. But…for those meat-and-potatoes workload enhancements with AI automation and agent control, I think Oracle will make an outsized impact on adoption. Time will tell if Oracle can once again re-imagine itself for this latest era of computing and maintain its central role in enterprise application and service delivery.

AI Innovation, Energy Efficiency & Future Trends with Neeraj Kumar

Neeraj Kumar, Chief Data Scientist at PNNL, discusses AI's role in scientific discovery, energy-efficient computing, and collaboration with Micron to advance memory systems for AI and high-performance computing.

With Lemony, Uptime Industries Tackles AI at the Edge

I believe that AI at the edge will start dominating AI chatter in 2025 as enterprises large and small seek to integrate generative AI tools into operational applications.

This is what we think of at the TechArena as the second wave of gen AI, the first being the training of behemoth models by brute force of massive AI clusters in the largest clouds on the planet. While the hyperscalers continue on their quest for AGI supremacy, the rest of us are looking for real world integration into business operations to ramp the efficiency and insight at our fingertips with this powerful technology. Of course, whenever we discuss enterprise adoption, we need to consider words like reliability, trust, security and more. Hallucinations and other risks of overly careless AI adoption can mean brand reputation loss or worse.

The team at Uptime Industries has designed a solution to enable smaller businesses and work teams to harness the power of generative AI for unique workloads with that trust and reliability in mind.

Meet Lemony, a secure, on-premise generative AI platform that delivers an all-in-a-box solution for on-prem deployment at the edge. A single Lemony box will run you $499 for up to five users, and boxes can scale to address larger team requirements. What’s best, your data, combined with generative AI model power, can work in tandem in a transparent and secure fashion, to capture the application advancements you need to fuel your business.

After reading about Lemony, I’m intrigued to learn more! I love innovative solutions that turn the heady and complicated side of new tech innovation into a consumable tool that even companies the size of TechArena can use. I love the focus on data security and on-prem deployment for organizations that don’t want to compromise on data location or control. I love the speed at which this company has been moving as well, going from formation in March of 2023 to seed round in January of 2024, complete with a core team of experts from across the companies you’d expect to help make them a success.

Frankly, I have a lot of questions for the Uptime Industries team, which I’ll get answered at next week’s AIHW and Edge Summit. The Uptime Industries team will be showcasing their wares at the event. While most of the companies assembled will be discussing the latest innovations to pave the path to AGI, Uptime Industries will be discussing how those of us who aren’t running the world’s largest data centers can tap AI’s power to transform our businesses. Watch this space for more next week.

Unilever Delivering Customer Insight, Efficiency with AI Adoption

Arun Nandi, VP of Global Data and Analytics at Unilever, knows his way around data and how to apply it for positive business outcomes.

In my recent conversation with Arun in advance of this week’s AIHW and Edge AI Summit, Arun shared how his company is leveraging AI and data analytics to enhance business operations, drive sustainability, foster innovation and more.

Arun’s insights centered on the transformative potential of AI in improving corporate decision-making, optimizing supply chains, and refining product offerings. What’s better, Arun believes all of this can be delivered while maintaining a focus on environmental responsibility.

AI and Analytics Sit at the Core of Innovation

Unilever is one of the world's largest consumer goods companies. They handle vast amounts of data daily from across their business portfolio, ranging from information on supply chain logistics to culled insights on consumer preferences. Arun sees AI and advanced analytics as crucial in handling this data to extract meaningful insights that inform decisions at all levels of the organization, and he considers this extraction critical for competitive advantage in today’s market.

From product development to marketing strategies, AI is being used to refine and predict consumer needs, ensuring that Unilever can keep ahead in an ever-changing market.

Arun shared some details about customer behavior modeling taken on by businesses like his. By analyzing consumer behavior data, organizations can personalize marketing and product recommendations, providing a more tailored and relevant experience for customers. This not only improves customer satisfaction, but also increases loyalty and retention, and this is widely deployed today utilizing existing analytics and AI capabilities. With new generative AI models, the ability to forecast consumer behavior will only grow more accurate and impactful.

Sustainability Through Data

Our interview also covered the challenge with efficient IT to fuel AI, and Arun highlighted that this is a core value at Unilever. In fact, AI is playing a pivotal role in helping the company achieve its environmental goals. Arun shared that Unilever is committed to reducing its carbon footprint and creating more eco-friendly products. Through data analytics, the company can identify areas where it can reduce waste, optimize resource use, and create sustainable products without compromising on quality or performance.

For example, AI helps Unilever track the environmental impact of its supply chain, ensuring that the company can source raw materials more responsibly and efficiently. AI also allows the company to forecast demand more accurately, reducing the chances of overproduction and excess waste. These efforts contribute to Unilever’s broader mission of achieving net-zero emissions and promoting a circular economy. And, they are part of a larger trend in corporate use of the powerful technology to enhance energy use and implement sustainable business practices.

The Future of Enterprise Data Architecture

Arun also shared his vision for the future of enterprise data architecture: one where AI and machine learning become even more integral, and where continued investment in AI advancement is required to stay ahead of the curve. This includes building robust data infrastructures that can handle the increasing complexity and volume of data Unilever manages while ensuring privacy and security for consumers.

One of the challenges that Arun touched upon is the need for a cultural shift within organizations to embrace AI and data-driven decision-making fully. He stressed the importance of upskilling employees and creating a data-driven mindset across all departments to ensure the successful integration of AI technologies. This requires new avenues of collaboration, not just across Unilever business groups, but across the industry.

The solution for this collaboration is new partnerships between businesses, academia, and governments to drive innovation and tackle global challenges such as climate change and resource scarcity. By sharing data and working together, companies can amplify their positive impact on the world and achieve shared sustainability goals.

What’s the TechArena take? Our conversation with Arun was a fantastic reminder that AI has been implemented in IT organizations for years, automating critical functions in relation to advanced analytics. These powerful tools are opening new business opportunity and driving efficiency to corporate processes. While much of the chatter on AI is focused on advancement of large language models, the enterprise is happily deploying core AI-enabled applications across business functions with an eye to improve these functions with new model capability. As we look ahead to 2025 and expected deployments of gen AI in the enterprise, they likely will be built atop what’s already been done in many organizations.

Our second take? We see an important trendline on AI’s positive impact to overall corporate sustainability efforts and mindful use of energy and resources. Expect more stories from corporations in the months ahead on this theme as companies look to offset the energy consumption required for deploying these models from data center to edge.

If you’re at AIHW Summit this week, be sure to check out Emerging Architectures for Applications Using LLMs, the Transition to LLM Agents, featuring Arun alongside experts from Stanford, Union.ai and Nava Ventures as well as his talk, Revolutionizing Language Models: Innovative Designs in Database Layers for Retrieval Augmented Generation, both within Tuesday’s lineup.

The Evolution of AI in ADAS 2.0

One of the most compelling factors driving autonomous vehicles is the reduction of fatalities and accidents. More than 90% of all car accidents are caused by human failures. Self-driving cars play a crucial role in realizing the industry vision of “zero accidents.” Beyond addressing the high power consumption typically associated with the high-performance AI computing required to achieve “just” Level 3 ADAS (the power goes up considerably more for L4 and L5), the fully self-driving car must address some additional, really hard challenges: it needs to be able to learn, see, think, and then act. And it must do so flawlessly in a wide range of weather, road, and lighting conditions, and in a wide range of traffic scenarios.

For the vehicle to “see” (perception) and ultimately understand its surroundings, the vehicle employs a mix of different sensors, including cameras, radar, and LIDAR (light detection and ranging). While controversy exists in the industry regarding the need for LIDAR, reduced price points and learnings from many millions of miles of trials are leading to broader industry adoption. Different types of sensors are employed to offset the limitations inherent to any one sensor. For example, cameras don’t see well in the dark, so by combining the LIDAR sensor (which works well at night) with the camera sensor into a composite image, the vehicle can see at night. Unlike cameras and LIDAR, radar is not affected by fog. When radar is combined with the other sensors, the vehicle can “see” in a wider range of driving conditions. The combining of data from the different sensors is known as sensor fusion.

The AI processing performance required for sensor fusion is relatively modest when compared to the AI performance required for perception. The Convolutional Neural Network (CNN), which is typically employed in sensor fusion, is a form of deep learning neural network commonly used in computer vision. While the AI processing required to address some aspects of sensor fusion may be lightweight, addressing other aspects of sensor fusion is quite complex.

To illustrate that point, today there are vehicles on the road that employ 11 cameras, 1 long-range radar, and 1 LIDAR, in addition to 12 ultrasonic sensors. It’s important to note this collection of sensors and sensor types is employed just to achieve Level 2+ ADAS. Successfully creating a composite image through the fusion of this large number of disparate sensors is a very challenging task. Not only does the aggregated data rate of all these different sensors present significant dataflow and data processing challenges, but the cameras typically have different resolutions and frame rates and operate asynchronously. These challenges are further compounded when fusing the LIDAR and radar images with the camera images.
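One small piece of that asynchrony problem can be sketched in code: before two streams can be fused, samples captured at different rates have to be paired up in time. The sketch below pairs each LIDAR sweep with the nearest camera frame and discards pairs that are too far apart. The timestamps, the `max_skew` tolerance, and the function names are illustrative assumptions, not values from any production stack.

```python
from bisect import bisect_left


def nearest_index(timestamps, t):
    """Index of the value in sorted `timestamps` that is closest to t."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    before, after = timestamps[i - 1], timestamps[i]
    # Ties go to the earlier sample.
    return i if (after - t) < (t - before) else i - 1


def align(reference, other, max_skew):
    """Pair each reference timestamp with the closest `other` timestamp.

    Pairs whose time gap exceeds max_skew are dropped rather than fused.
    """
    pairs = []
    for t in reference:
        j = nearest_index(other, t)
        if abs(other[j] - t) <= max_skew:
            pairs.append((t, other[j]))
    return pairs


# Hypothetical streams, timestamps in milliseconds.
camera_ms = [0, 33, 67, 100, 133]  # roughly 30 frames per second
lidar_ms = [5, 105, 205]           # roughly 10 sweeps per second

if __name__ == "__main__":
    print(align(lidar_ms, camera_ms, max_skew=10))
    # -> [(5, 0), (105, 100)]; the sweep at 205 ms has no camera frame close enough
```

Real systems go further (hardware time sync, interpolation, motion compensation), but even this toy shows why mismatched frame rates make fusion harder than simply stacking images.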

Four approaches to sensor fusion commonly employed to yield a composite image include:

- Early fusion

- Mid-level fusion

- Sequential fusion

- Late fusion

Each approach describes the point at which the fusion of the different sensors occurs within the fusion signal processing chain. Early fusion typically delivers higher precision at the expense of completeness of scene coverage, whereas late fusion offers more coverage of the environment at the expense of precision. Mid-level and sequential fusion offer more balanced trade-offs between coverage and precision. Once fusion has been completed, the composite image is sent to the perception engine, which is responsible for making sense of the fused image.
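The difference between the two ends of that spectrum comes down to where the merge happens. Here is a deliberately tiny sketch, assuming each sensor produces a per-cell evidence score between 0 and 1 and that a simple threshold stands in for a real detector. The scores, the threshold, and the averaging and OR rules are all made up for illustration; production fusion uses learned models, not arithmetic this simple.

```python
def detect(scores, threshold=0.5):
    """Toy detector: flag every cell whose score meets the threshold."""
    return [s >= threshold for s in scores]


def early_fusion(camera, lidar, threshold=0.5):
    """Merge raw per-cell scores first, then run a single detector."""
    merged = [(c + l) / 2 for c, l in zip(camera, lidar)]
    return detect(merged, threshold)


def late_fusion(camera, lidar, threshold=0.5):
    """Run a detector per sensor, then merge the decisions (logical OR)."""
    cam_hits = detect(camera, threshold)
    lidar_hits = detect(lidar, threshold)
    return [c or l for c, l in zip(cam_hits, lidar_hits)]


# Hypothetical evidence scores for four cells along the road ahead.
camera = [0.9, 0.6, 0.1, 0.2]
lidar = [0.8, 0.1, 0.7, 0.2]

if __name__ == "__main__":
    print(early_fusion(camera, lidar))  # [True, False, False, False]
    print(late_fusion(camera, lidar))   # [True, True, True, False]
```

In this toy, early fusion only flags the cell where both sensors agree strongly, while late fusion flags anything either sensor saw, which loosely mirrors the precision-versus-coverage trade-off described above.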

As shown, the autonomy workload consists of 4 major tasks: perception, prediction, planning, and control. Level 4 autonomy, where a vehicle can operate in self-driving mode with minimal human interaction, requires autonomous driving tasks (e.g. scene understanding, motion detection, image inference tasks, etc.) to be executed continuously with significant consideration for functional safety. While the above signal chain shows a linear signal flow from left to right, functional safety considerations drive the need for redundant signal paths, leading to even further complexity both to the overall signal chain architecture and the underlying computations.
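To make the four tasks and the idea of redundant signal paths a little more concrete, here is a heavily simplified sketch. Every name, number, and rule in it is hypothetical: perception just reads a dictionary, prediction assumes constant velocity, and the "brake if the two paths disagree" fallback is one illustrative policy, not a statement of what any functional safety standard requires.

```python
from dataclasses import dataclass


@dataclass
class Obstacle:
    distance_m: float         # gap to the object ahead
    closing_speed_mps: float  # positive when the gap is shrinking


def perceive(fused_frame):
    """Perception: turn a fused frame (here a plain dict) into an obstacle."""
    return Obstacle(fused_frame["distance_m"], fused_frame["closing_speed_mps"])


def predict(obstacle, horizon_s=2.0):
    """Prediction: constant-velocity estimate of the gap after horizon_s."""
    return obstacle.distance_m - obstacle.closing_speed_mps * horizon_s


def plan(predicted_gap_m, safe_gap_m=10.0):
    """Planning: choose a manoeuvre from the predicted gap."""
    return "brake" if predicted_gap_m < safe_gap_m else "cruise"


def control(manoeuvre):
    """Control: map the manoeuvre to an actuator command."""
    commands = {
        "brake": {"throttle": 0.0, "brake": 0.6},
        "cruise": {"throttle": 0.3, "brake": 0.0},
    }
    return commands[manoeuvre]


def drive_step(primary_frame, backup_frame):
    """Run two redundant paths and fall back to braking if they disagree."""
    decisions = [plan(predict(perceive(frame)))
                 for frame in (primary_frame, backup_frame)]
    manoeuvre = decisions[0] if decisions[0] == decisions[1] else "brake"
    return control(manoeuvre)


if __name__ == "__main__":
    clear = {"distance_m": 50.0, "closing_speed_mps": 5.0}
    close = {"distance_m": 20.0, "closing_speed_mps": 8.0}
    print(drive_step(clear, clear))  # both paths agree: cruise
    print(drive_step(clear, close))  # paths disagree: brake
```

Even at this scale, duplicating the path doubles the compute and adds a comparison step, which hints at why redundancy adds so much complexity to the real signal chain.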

Except for the motion control block, deep-learning neural networks are employed across the entire ADAS signal chain. Each block has different demands regarding the required AI computing performance and the neural nets that are employed. There is an ever-growing population of neural networks that tend to differ in structure, network connectivity, and their target end application while offering different trade-offs in complexity, accuracy, and performance. What they all have in common is that the networks mirror the human brain (hence the name neural networks) in their approach, solving a very different class of problems than the ones traditional CPUs have handled for many generations. This field is rapidly evolving and hit its stride when research led to the development of neural networks that could perform object detection and recognition more accurately than a human. That is why the application of AI in autonomous systems is very logical.

The Convolutional Neural Network (CNN), which was widely employed in ADAS perception for object detection, was quickly displaced by the advent of region-based CNNs (RCNNs). RCNNs marked a significant improvement in perception performance by focusing primarily on a region of interest within the image rather than the entire image. However, this brought a corresponding increase in AI computational demands. Since then, newer models, including Single Shot Multibox Detector (SSD) and "You Only Look Once" (YOLO), which deliver even better efficiency and improved accuracy for object detection, have gained industry momentum.

Most recently, vision transformers, which borrow from neural network concepts used in natural language processing (NLP; think Siri), are beginning to emerge as a leading neural network for image classification. Vision transformers surpass the performance of Recurrent Neural Networks (RNNs) in accuracy; however, this comes at the expense of extended training times, a topic we haven’t discussed yet. Just like learning how to ride a bike, there is a training phase required before you can ride any distance without training wheels. Networks need to be trained to be able to accurately detect and recognize objects in the vehicle’s surroundings.

As another benefit, vision transformers are also less sensitive to visual occlusion. Here again, with this more advanced and accurate neural network, there is an increased cost associated with the AI computing performance required to achieve similar frames-per-second performance when compared to earlier networks.
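A bit of back-of-the-envelope arithmetic shows where some of that extra cost comes from. A vision transformer cuts the image into fixed-size patches and, in its attention step, compares every patch with every other patch, so the work grows with the square of the patch count. The image sizes and the 16-pixel patch below are illustrative choices, not figures from any particular ADAS design, and the pair count ignores layers, heads, and everything else a real model adds.

```python
def patch_count(height, width, patch):
    """Number of non-overlapping square patches that tile the image."""
    if height % patch or width % patch:
        raise ValueError("image dimensions must be multiples of the patch size")
    return (height // patch) * (width // patch)


def attention_pairs(tokens):
    """Patch-to-patch comparisons in one self-attention pass."""
    return tokens * tokens


if __name__ == "__main__":
    for side in (224, 448):
        tokens = patch_count(side, side, patch=16)
        print(f"{side}x{side}: {tokens} patches, "
              f"{attention_pairs(tokens)} comparisons")
    # 224x224: 196 patches, 38416 comparisons
    # 448x448: 784 patches, 614656 comparisons
```

Doubling the image side quadruples the patches and multiplies the comparisons by sixteen, which is one reason higher-resolution camera feeds get expensive quickly with this class of network.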

Suffice it to say, it’s awe-inspiring to witness the almost breakneck pace at which the automotive industry is embracing state-of-the-art technologies, including neural networks, that in some cases are not quite off the drawing board yet but are being embraced ultimately to improve the safety of automotive transportation.

Introducing TechArena 2.0

As someone who has spent a quarter of a century immersed in the technology sector, I’ve witnessed seismic shifts in how this disruptive force has changed how we work, communicate, and innovate. But never have I seen a moment quite like this.

The convergence of AI, cloud and edge computing and advanced network technology is reshaping the world around us at a pace that’s sometimes difficult to comprehend, let alone keep up with. We are at a critical juncture in human history, where technology doesn’t just support daily life — it defines it. IT leaders are being called to quickly harness this technology to fuel massive organization transformation or be left behind. And industry technologists are being challenged to innovate every element of computing to deliver to this north star of opportunity.

We are all challenged to keep up with the pace of innovation and change. We need connection with those at the center of the storm and guidance about how to harness new opportunities ahead. Innovators have stories to tell about their unique delivery of technology that will advance AI’s influence on our industries and society, will make hyperscalers’ quest for AGI more attainable, and will tip the industry landscape to deliver new market introduction.

That’s why I’m thrilled to announce the launch of TechArena 2.0, an enhanced media platform designed to fill today’s tech news and information void and connect the architects of technology deployment with the engineering gurus driving its creation. This isn’t just another tech blog or news site; it’s an unparalleled hub of innovator connection dedicated to bringing you in-depth coverage and expert perspectives on the topics that matter most: AI, data centers, edge computing, networking, and compute efficiency. Our foundation is based on the premise that direct access to those driving industry innovation is your best bet for charting a successful course in next-generation technology adoption. Our content features direct access to the brightest minds in the tech landscape, with an arena filled with tech titans representing more than $9T in market capitalization and scrappy startup CEOs and founders sharing their disruptive visions for the future.

Why Now? Because Timing is Everything.

The truth is, tech storytelling has not evolved fast enough to keep pace with the innovations reshaping our world. Traditional media often falls into the trap of either overwhelming audiences with jargon or caring more about winners, losers and earnings, and less about sound guidance on deployment strategies. In this landscape, TechArena is stepping up to do what no other platform is doing: combining tech domain expertise with a clear-eyed, authentic approach that connects innovators directly with the audiences they care about. Our new platform features exclusive interviews, technology deep dives, and expert analysis — delivered in a way that cuts through the noise and speaks to the heart of what IT organizations really need to track.

Unmatched Expertise, Unfiltered Voices

We’re bringing together an editorial team that isn’t just reporting on the industry — they are the industry. From seasoned technologists to data center architects, our contributors bring unparalleled expertise and a deep understanding of the solutions that are redefining our world. This isn’t content created from the sidelines pontificating about what may happen; it’s first-person perspectives from those who have lived in the arena itself and know the stakes of tech decision making.

I started TechArena in 2022 with a vision for a hub for tech innovation in mind. Today, we move closer to that vision’s realization, and I couldn’t be prouder of the entire TechArena team with its delivery. In order to tell this story authentically, I’ve brought in tech content expert Rachel Horton – a longtime tech editor and journalist – to lead our editorial, and Chad Koontz, a digital swami with years of tech sector expertise, to head our digital practice – because I believe our job is not done until content published is delivered to its intended audience every time. These seasoned leaders will help ensure that the TechArena platform hums with valuable stories that educate our audience and influence technology deployments.

Covering the Critical Issues in Compute Efficiency

In a time when energy use is as much a technological challenge as it is an ethical imperative, our platform will also focus heavily on how innovations in AI, data center, edge, and networking can drive more efficient solutions. We know that the future of technology cannot exist in isolation from the future of our planet, and we actually believe the tech sector will play a leading role in helping mitigate our climate crisis ahead. TechArena will continue to explore the intersection of tech and energy utilization with the urgency and depth that the topic demands, and we welcome stories from innovators focused on efficient and sustainable technology across the cloud to edge landscape.

Building a Network for You

Our goal is not just to inform, but to engage. We’re building a community of like-minded professionals, engineers, and business leaders who are passionate about tech and pushing the boundaries of what’s possible. Through live online discussions, community forums, and face-to-face network engagements at industry events, we’ll offer you more than just articles — we’ll provide engagements for meaningful connection and collaboration that can help reshape your business opportunity.

Join Us on This Journey

TechArena’s vision will be fully realized with your collaboration, whether that be engaging in consumption of our content, contributing your voice to our platform, or connecting with us to drive content creation. We want to hear from you. Thank you for being a part of the TechArena community, and I look forward to having you join us on this journey as we continue to shine a light on the voices of innovation.

Allyson Klein

Principal and Founder, TechArena


Exploring AI Innovation with Hitachi Ventures' Gayathri Radhakrishnan

Guest Gayathri “G” Radhakrishnan, Partner at Hitachi Ventures, joins host Allyson Klein on the eve of the AIHW and Edge Summit to discuss innovation in the AI space, future adoption of AI, and more.

Privacy, AI, and the Future of Cloud Computing with Rita Kozlov

Join Allyson Klein and Jeniece Wnorowski as they chat with Rita Kozlov from Cloudflare about their innovative cloud solutions, AI integration, and commitment to privacy and sustainability.


3 Key Trends We're Seeking from AIHW and Edge Summit Next Week

To say that the TechArena team is excited for next week's AIHW and Edge Summit is an understatement. With 2024 being completely dedicated to all things AI, this event is a fantastic opportunity to check in on disruptive innovation to fuel the next wave of AI buildouts from hyperscale to the enterprise.

And with industry conversations focused on supply chain constraints of critical technology like GPUs and HBM, it's clear to see that innovation is required to keep up with customer demand and introduce more choice of solutions into the market. We will be coming at you with a deluge of content featuring bright stars in the AI hardware development arena which we recently kicked off with our conversation with Arun Nandi from Unilever. Here's what we will be tracking at the event next week:

Will NVIDIA GPUs Finally Face Real AI Competition in 2025?

The year has been filled with stories of NVIDIA AI Factories packed with Blackwell platforms and broad deployments of GPU clusters scaling to 100,000 nodes. While the extreme shortages of GPUs of the first half of the year have eased, the cost and power requirements to fuel these performance engines are eye-opening. AMD, the event's primary sponsor, has delivered the MI300X accelerator, a viable performance alternative for many AI models that is gaining traction in the market. We'll also be talking to many silicon startups that are delivering unique solutions for AI and trying to capture some of the growing logic TAM in this space. The real question is whether the large players will move off of NVIDIA for any of these alternatives or simply continue to advance their internal designs, and whether the real market for new entrants in AI processors lies in the next layer of cloud providers, the enterprise data center, and the edge.

Will 2025 Usher in AI Fabric Innovation?

While much of the industry attention has been focused on performance drivers of compute acceleration, the AI fabric cannot be ignored. This is a two-part target for us. First, we are keen to check in on viable competition to InfiniBand for offering the latency and scale required for AI training clusters. Today, NVIDIA also has a corner on this market with its tech acquired from Mellanox. While last year saw the announcement of the Ultra Ethernet Consortium and a flurry of new specification development to bring Ethernet closer to the capabilities of InfiniBand, we may see other technologies emerge at the conference delivering an alternative for high-end fabric capability. The second target is a look at fabric connectivity and the age-old question of transition to optical. Copper continues to gasp out a life within the data center, but its limitations and power costs are putting increased pressure on providers to migrate more of their connectivity to optical. We'll be checking in with optical providers for the latest innovations in this space to see if 2025 is the year that optical will finally take over.

What About All That Power?

While the allure of AI is unquestioned, at the rate we're moving we may actually tap out the planet's resources before we reach the age of AGI. Fundamental change to design principles, including more efficient hardware innovation within every element of the data center, is required. We will be looking to the vendor community to bring news of advancements on energy efficiency, embedded carbon, and circularity of designs; talking to operators at the show about how their organizations are tackling the challenge of power delivery and management, new cooling technology options, and more; and checking in with venture capital leaders to see how the energy quotient will help shape investments moving forward.

Got more things you'd like covered from next week's show? Please connect with us to share. And if you're going to be at the Summit and would like to connect, please hit me up on LinkedIn.

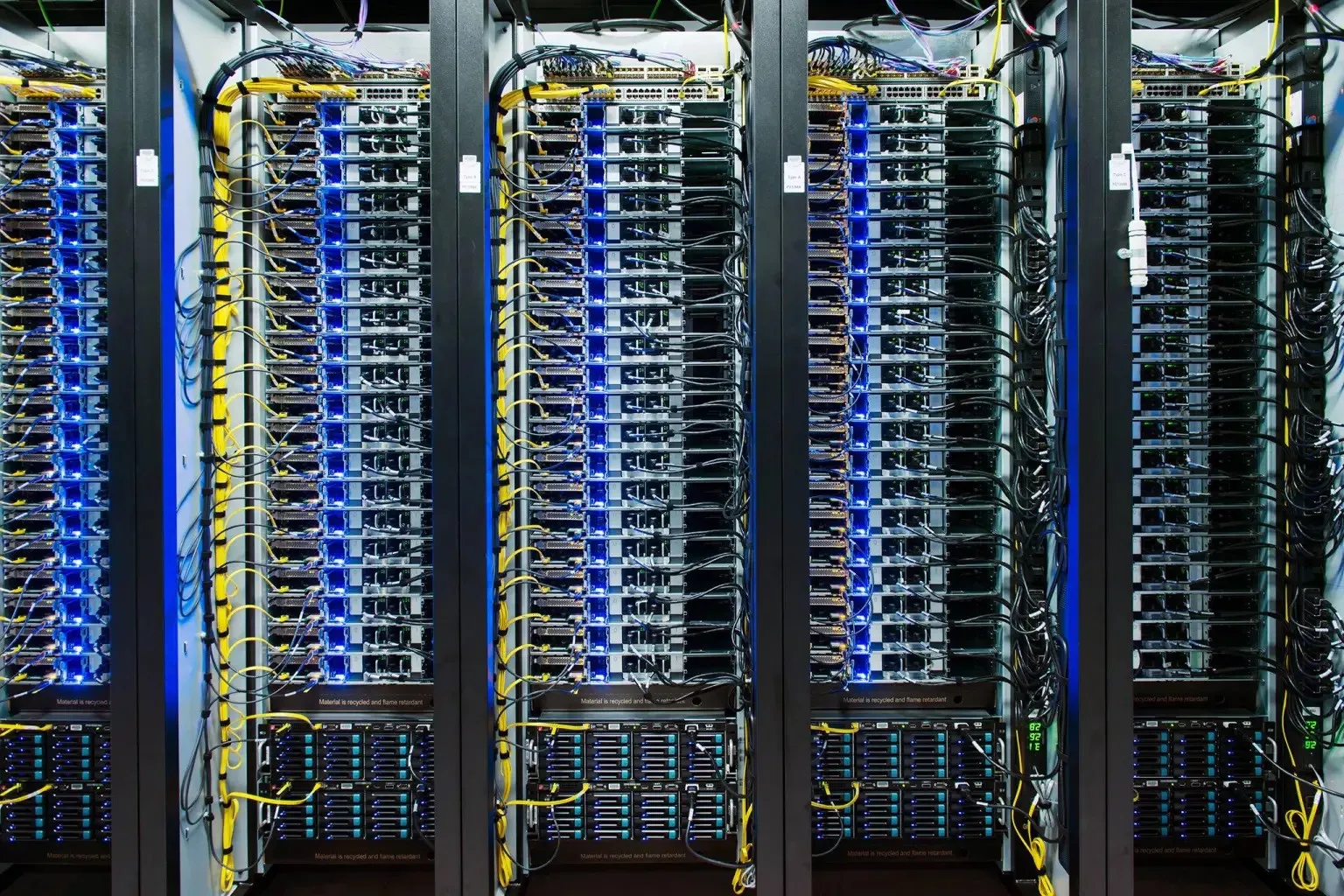

OPEN Innovation to Face Off Data Center Challenges

I’ve been active with hardware infrastructure since the Windows NT era, when Novell with SFT Level III and Pentium Pro were all the rage and networks were built of coax cables running token ring. (Yes, I’m that old.)

Generations and generations of technology innovation later, the data center industry is bumping up against the amount of energy available worldwide, and cooling and space are challenging to deal with in any data center. We must pivot. It’s essential that we re-think how we design, run, operate and optimize data centers.

Since my days at Intel Corporation, I’ve had a front seat to technology transitions and adoption of innovation in the compute, network and storage space. Data centers are at the heart of digital transformation, which is touching almost every industry and person on the planet, all the way from the massive hyperscale data centers to the tier-2 cloud service providers, co-location partners and enterprises. We’ve built an enormous and diverse ecosystem of industry players around data centers that covers any conceivable topic, from financial investments and insurance down to network and power equipment and connector specifications. All these industry players work hand in hand, based on strict standards and protocols, to ensure smooth data center operations.

The data center space has always been a hotbed of innovation, and the continuous digitization of society will continue this trend for some time to come. The demand for capacity is likely to soar, but it’s challenged by the availability of locations to build data centers, the cost and available supply of energy, and the skilled staff needed to build and maintain these critical facilities, to name a few. At the same time, data centers are under increasing scrutiny and regulation. And the mass adoption of AI is driving an increased set of demands for the data center, at a speed we’ve not seen before.

Because of these competing dynamics, it’s an industry that naturally fosters innovation to overcome these challenges. And over time, the business of building and running a data center has been standardized, with modular data centers filled with 19-inch racks and x86 infrastructure building blocks complemented with adequate cooling, fiber and power networks. We industrialized it to a level where we have a good understanding of the business model, the available solutions, the leading indicators and how to align supply and demand.

However, the complexities described above are quickly compounding as demand outstrips capacity and the supply of affordable energy is no longer limitless. The requirements are rising to a level that is unsustainable. In addition, we all want to be sustainable, but the speed of innovation is driving new technology adoption at an increased pace.

When we consider innovating the data center ecosystem, it’s key to get as many creative ideas as possible from a wide range of players so we can filter and fine-tune to find the best solution. The OPEN innovation model is a great way to do that. I believe this mechanism will be pivotal for this industry as we innovate our way out of the data center infrastructure challenges we are facing.

Luckily, the data center space is no stranger to OPEN. A lot of the innovative services delivered out of data centers are based (in part) on open-source software. OPEN is an innovation engine, I was once told, and I continue to see this statement being validated in our industry. I’m going to abstract a few things here to a high level to give a few examples.

Let’s take a look at some of the most recent technological adoptions that affected data centers:

- HPC matured because it leveraged standardized x86 compute and Ethernet networks with an open-source operating system + tools. It was a cheap alternative to the big scale-up & proprietary HPC solutions.

- Once we complemented those ingredients with ADSL, fiber and 3G as ubiquitous and affordable last-mile connectivity for companies and homes, and storage costs dropped, in part due to open-source solutions leveraging storage in a new way, we got cloud computing. We now had a cheap & reliable alternative to local storage.

- Big Data was a combination of relatively cheap storage and compute that allowed us to analyze vast data lakes of information, and many open-source tools, such as data science workbenches, were developed and evolved to assist us in this analysis.

- Now add the open-source frameworks and tools for AI to the mix above and we’ve arrived in the age of artificial intelligence.

As you can see, open source had a hand in all these major tipping points. And while software is an innovation engine, it needs hardware to deliver on its promise.

What if we could take the power of OPEN innovation and apply it to the data center IT infrastructure space?

The Open Compute Project does exactly that. It takes this OPEN approach so common in software development and applies this framework to the development of future data center IT infrastructure. This community-led effort spans many different domains, allowing all the different ecosystem players to come together and align on innovation efforts in the data center space. From the concrete used to build data centers down to the chiplets on the board, there is a sub-project anyone can join to consume, contribute or learn about the latest developments.

Another exciting thing about OCP is its origin. It was initiated by Meta (formerly Facebook), which in 2011 foresaw the need to redesign IT infrastructure. Given its scale and complexity, it’s only natural the company had this foresight. But that scale also drove the innovation coming out of OCP. The hyperscaler’s engineering team had the manpower, skills, experience and resources to come up with a different form factor and power delivery design, which still fits the much-loved 19-inch rack outer dimensions but offers a 21-inch inner width. This way there is more physical real estate to house components and move sufficient air through the unit for cooling. And by centralizing power distribution for the whole rack via a busbar, a saving of up to 45% in power consumption can be achieved per rack! These are just some examples of the innovation that Meta brought to OCP, and there are many more.

Many companies soon joined the ranks to consume, contribute to or adopt OCP in their offerings. The most recent one was NVIDIA, whose DGX infrastructure now leverages OCP for power and liquid cooling purposes. Both Jensen Huang and Mark Zuckerberg even commented on the flexibility and cost savings of OCP in a keynote during SIGGRAPH 2024.

Unknown to many, a number of OCP innovations are most likely already in your existing infrastructure. For example, OCP3 NICs are widely adopted by many traditional vendors in their current product designs and have even become the de facto standard. The recent redesign of motherboards into a more modular form, known as the Data Center Modular Hardware System (DC-MHS for short), is also making its way into a number of new products entering the market. Examples are the recently announced Dell PowerEdge R670 CSP Edition and R770 CSP Edition servers and the Supermicro X14 servers.

Open is an innovation engine, and the OCP Foundation offers a community for all the IT infrastructure industry players to align and build actual solutions to face the challenges this industry is up against. It’s an exciting community that is gaining momentum and one to watch or, even better, participate in!

Revolutionizing RAID: Graid's Supreme Solution for Modern Data Challenges

Allyson Klein and Jeniece Wnorowski chat with Kelley Osburn of Graid about SupremeRAID™ and its role in tackling high-performance storage challenges in data-driven environments.


3 Exec Insights on Cultivating Successful Industry Partnerships

There can be an attitude that people who are partner managers are not as sensitized to achieving revenue, developing products and services or the challenges of taking them to market. Don’t ever believe that industry partnerships have little real effect on company revenues or market successes. The people in those roles must know their own corporate business and their partners' business drivers for anything of consequence to be accomplished. Let me walk you through the “why” behind that claim.

Everyone has a boss with authority to “fire” them.

I will start with the argument I’ve heard many times: “I can’t wait until I save up enough money so I can run my own company, no one can fire me!” Unless you are wealthy enough to pour money into a company that can’t cover its operating costs, your customers are your boss. They get to vote with their wallets and “fire” you by not buying from you at all.

A Board of Directors has “bosses”: shareholders, stakeholders and regulators. Shareholders can “fire” directors in a few ways: hostile takeovers, purchasing enough stock to have a voting seat on the board and then voting everyone else off the board, or dramatically selling off the company’s stock from their investment portfolios. There are three primary duties for BOD members: Duty of Care (diligence in being informed and involved), Duty of Loyalty (prioritizing the corporation’s best interests along with those of its shareholders) and Duty of Obedience (ensuring personal and corporate actions comply with local, state and federal regulations).

CEOs have a boss – The Board of Directors. Two top failures by a director in exercising their fiduciary responsibilities are failing to oversee management and making decisions without adequate information. The implication is that a good director prioritizes preventative involvement with a CEO.

It is not “just business.”

Humans are social/relational – even engineers! Regardless of the title or level, humans all have similar fears, hopes and ambitions. In many cases, the fear of failure or being exposed as an imposter is highest in the C-suite compared to the working level.

Everyone has goals set by their “bosses” that advance personal success within the company or cause personal loss of capital with their management chain. Are you approaching a given partnership as if more than your goals matter?

Ask yourself if there is a way to help increase your counterparts’ chances of success while executing the work you want to accomplish with them. Understand the business they are in, how they are working to improve their profitability, and their brand value to their customers. Know whether your success results in their success – at both an individual level and a corporate level.

Do the math. Literally.

Over a decade ago I was responsible for executing a previously negotiated agreement between my company execs and a critical partner’s executives. My counterpart and I started hashing out how to adhere to the agreement while advancing the goals each company had for the partnership.

Just one tiny innocuous comment from my GM caused me to dig a little deeper after some puzzling behavior from my counterpart at our partner: “Don’t worry – they make so much more money with us; they have no interest in stalling our partnership in favor of other actions.”

I did the math. I had honestly hoped to calculate how much more money they would make from our partnership so I could present that in ongoing negotiations. Instead, I confirmed they did NOT make more money in our partnership as written. This explained why my counterpart, who like me was part of the pre-close negotiations, was so uncomfortable with the suggestions I made. Inadvertently, I was asking that individual to expend significant capital with their management chain, who were accountable to the CEO, who was accountable to their BOD, to shareholders, on down the line.

As much as my counterpart appreciated me as a person and valued the way I collaborated, they were unable to execute against many of the items my company hoped for. An action from my counterpart that either hurt or simply failed to advance their company’s overall business interests would ultimately be unwound.

Concluding thoughts

Industry partnerships are some of the most rewarding experiences I have had, because the sense of joint accomplishment in executing something that moved everyone’s business forward has very few equals. To take it to the next level, be a student of your own company’s business as well as your partner’s. You would be truly astounded at how often these simple concepts are overlooked, and surprised by what you discover when applying them to your own situation.

AI, Analytics, and Innovation: Insights from Unilever’s Arun Nandi

Arun Nandi of Unilever joins host Allyson Klein to discuss AI's role in modern data analytics, the importance of sustainable innovation, and the future of enterprise data architecture.

Data Center in the AI Era with Jen Huffstetler

Join Allyson Klein as she welcomes former colleague and industry innovator Jen Huffstetler. Jen shares her extensive experience driving advancements from client devices to the data center, including groundbreaking technologies like Centrino and 3D packaging.

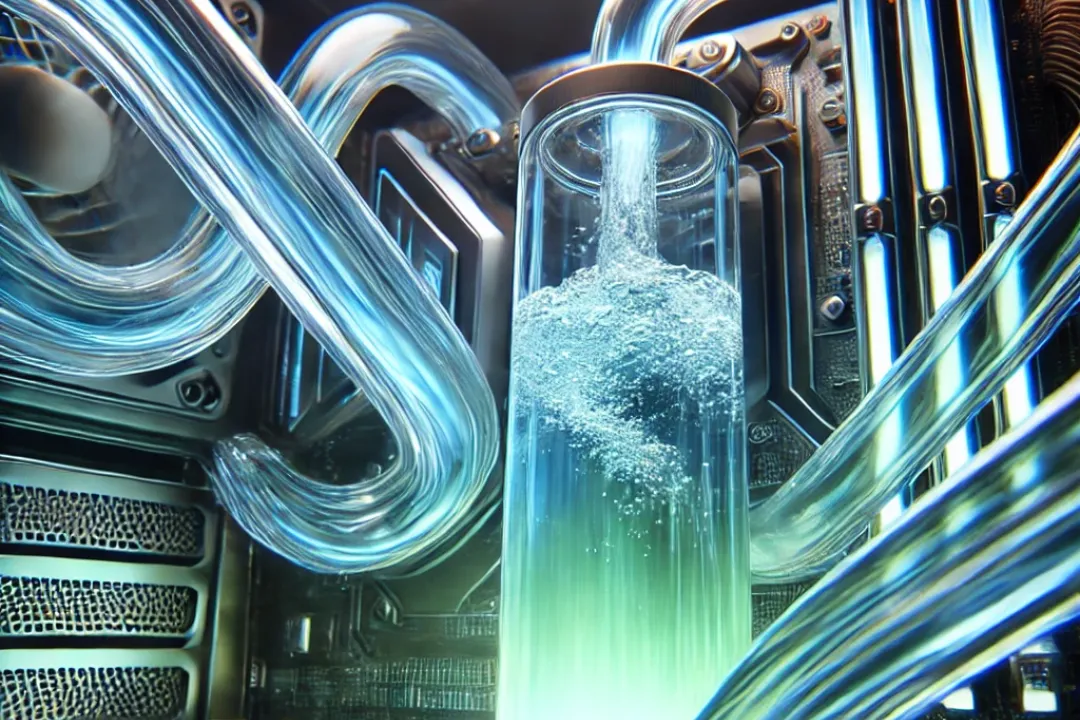

Iceotope Delivers Great Chemistry for Cool Data Centers

With GPU-driven AI training ruling the moment, we have finally reached the tipping point at which liquid cooling overtakes air-cooled data center infrastructure for many environments. Consider, for a moment, that NVIDIA Blackwell-based racks are drawing from 60kW to 120kW per rack, a dramatic shift from the historic 5-10kW per rack delivered to fuel general purpose applications. When you extrapolate that power across football fields of racks for a hyperscale training cluster, you realize that there’s a LOT of heat to extract. The debate has quickly shifted from air vs liquid to what type of liquid to utilize, opening the door for market disruption and new player entry.
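To put rough numbers on that extrapolation, here is a minimal Python sketch. The per-rack figures come from the paragraph above; the 1,000-rack cluster size is a made-up illustration rather than a figure from any operator, and the sketch assumes essentially all electrical power drawn by a rack ends up as heat that has to be removed.

```python
# Per-rack power ranges in kW, taken from the paragraph above.
LEGACY_KW = (5, 10)
BLACKWELL_KW = (60, 120)

# Hypothetical cluster size, chosen only for illustration.
RACKS = 1_000


def cluster_heat_mw(racks: int, kw_per_rack: float) -> float:
    """Heat load in MW, assuming all rack power becomes heat."""
    return racks * kw_per_rack / 1_000


if __name__ == "__main__":
    for label, (low, high) in (("general purpose", LEGACY_KW),
                               ("Blackwell-based", BLACKWELL_KW)):
        print(f"{label}: {cluster_heat_mw(RACKS, low):.0f} to "
              f"{cluster_heat_mw(RACKS, high):.0f} MW across {RACKS} racks")
```

Under those assumptions the same number of racks goes from 5 to 10 MW of heat to 60 to 120 MW, a 12x jump at both ends of the range.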

This is why I was so excited to talk to Dr. Kelley Mullick, vice president of technology advancement at Iceotope. Kelley joined Iceotope, a Sheffield, England-based immersion cooling startup, last year, bringing with her a technology leadership pedigree and the notable achievement of having delivered the first industry liquid cooling warranty while at Intel in 2022. Her PhD in chemical engineering and lengthy engagement in industry standards work places her squarely in the middle of liquid cooling advancement.

So why liquid cooling? Kelley confirmed that AI is the primary driver for urgency in transition to liquid cooling due to its serial computing nature, but also stated that broader commitments to sustainability have driven hyperscalers to consider liquid alternatives. She outlined the three alternatives in play in the liquid market: cold plate, tank immersion and precision liquid cooling. While all are more effective and efficient than air, each alternative offers different advantages for consideration. Cold plate has the advantage that it has been widely deployed in HPC environments and utilizes air to cool the parts of the chassis that liquid plates do not directly target, supporting retrofit opportunities for existing infrastructure. Tank immersion delivers a solution where heat can be captured for secondary usage, but its weight requires reinforcement of existing data center tile flooring, likely limiting it to greenfield buildouts. Finally, precision liquid cooling is somewhat of a hybrid, offering the advantages of immersion cooling with alternative chemistries to water, along with similarities to cold plate, and allowing deployment in existing vertical racks.

If this complexity weren’t enough, there’s also the topic of chemistry, and it’s here that Kelley really lit up. To start, the options for liquid cooling are water (used in cold plate designs) and dielectric fluid (used in cold plate, immersion, and precision designs). Dielectric fluids are composed of hydrocarbon or fluorinated hydrocarbon fluid, with most vendors targeting hydrocarbon options because of their non-toxic composition and ability to be recycled. For two-phase cooling solutions, however, only fluorinated hydrocarbon solutions can be used, introducing toxic chemicals into the data center and representing increased challenges from a circularity perspective.

Iceotope is delivering a pretty special chemistry within this landscape. Kelley explained that its solutions are delivering precision cooling at up to 1500 watts with a thermal resistance of 0.037 K/W, on par with fluorinated solutions but with a sustainable and environmentally friendly chemistry. This technology is delivered in adaptable form factors including racks, power shelves and more, enabling customers to deploy across data center and edge environments. Kelley also noted that different types of infrastructure from GPUs and CPUs to storage JBODs can be submerged in dielectric fluid. Iceotope has done extensive testing of material compatibility to ensure customer deployments will keep cool without reliability erosion.
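For readers who want to see what those two figures imply together, here is a small Python sketch of the standard steady-state relationship, temperature rise equals power times thermal resistance. The 1500 watts and 0.037 kelvin per watt come from the paragraph above; the 40 °C coolant temperature is purely a hypothetical input for illustration, not an Iceotope specification.

```python
def temperature_rise_k(power_w: float, r_th_k_per_w: float) -> float:
    """Steady-state temperature rise across a thermal resistance: dT = P * R."""
    return power_w * r_th_k_per_w


POWER_W = 1500          # from the article
R_TH_K_PER_W = 0.037    # from the article
COOLANT_C = 40.0        # hypothetical coolant temperature, for illustration only

if __name__ == "__main__":
    rise = temperature_rise_k(POWER_W, R_TH_K_PER_W)
    print(f"Rise above coolant: {rise:.1f} K")
    print(f"Device temperature with {COOLANT_C:.0f} C coolant: {COOLANT_C + rise:.1f} C")
```

In other words, at full load the cooled device would sit roughly 55 K above whatever the coolant temperature happens to be.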

What’s the TechArena take? We were delighted that we were able to feature this story on our Data Insights series sponsored by Solidigm as cooling is critical to delivery of the data pipeline. Iceotope is delivering disruptive technology in this space, and I expect to hear much more about their solutions as we head into the OCP Summit this fall. If liquid cooling is not on your radar today…put it on your radar. With hyperscalers moving rapidly to liquid alternatives, we expect solutions to scale to meet edge requirements and broader scale AI configurations in data centers. To learn more, check out the interview and visit Iceotope’s site.

It’s No Coincidence that Wait-and-See Rhymes with Latency

Why Wait? Sometimes That’s the Only Choice

“All good things arrive unto them that wait - and don't die in the meantime.”

- Mark Twain

Memory is always a tricky thing. And we’re not just talking about trying to find the TV remote for five minutes only to finally discover that it’s in the fridge next to the five-pack. Don’t judge until you’ve done your 1.6 km in a certain pair of size 14’s, thank you. Anyway, Your Humble Author (YHA) remembers many a discussion on system memory, cache topologies, drive characteristics, and all those other fun things that are associated with an engineer’s most-dreaded, four-letter “L” word: latency. During one particular discussion with a senior software VP at a large OS company, he opined, “All processor architectures wait equally fast.” So, so true.

Pretty much every one of those computer architectures arranges the logic units to operate on a series of data registers. We promise this is going somewhere… and we’re also simplifying a lot so everyone can grab a bone and pick. Data registers load and store from memory. Some architectures will also allow for direct memory operations. But which memory? This is where waiting comes into play.

The following analogy has been changed to protect the innocent, but YHA thanks the smart people for the concept. Let’s say the desired memory is stored in the nearest cache (with typical latencies in the one nanosecond range these days). Let’s also say that acquisition is the equivalent of finding the TV remote again next to the three-pack. Easy. Processor architectures typically have a series of larger caches or on-chip memories these days, followed by DRAM on the motherboard. It could take a walk to the neighbor’s house for a quick wave while opening the fridge in the garage to accomplish that particular DRAM “fetch.”

If that particular data happens to be stored on some of the latest and best solid-state memory on a coherent bus, the equivalent would be a short drive to the local convenience store. Leave the remote at home, please. A slightly older SSD configuration – or a poor driver routine (processor people always blame the software) – could mean a slightly longer trip to the grocery store in town. The average AI cluster data transfer across the mesh would involve stopping for a sit-down dinner before heading home. That’s a lot of work for one individual piece of data, all while the particular system thread is waiting.

One more. The best network topologies in the datacenter these days usually guarantee a maximum of 5 milliseconds latency from any server to your register. THE COMPANY where YHA used to work offered the promise of an 8-week sabbatical. Were that entire sabbatical devoted to the acquisition of one beverage unit, we’d be in the ballpark of the latency we’re discussing. That sucker better not be an over-hopped IPA. And what’s the system thread doing that whole time? To use the Yiddish: bupkis.
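For anyone who wants to check the sabbatical math, here is a minimal Python sketch of the analogy. It stretches time so that a one-nanosecond cache hit takes one human second, then applies the same stretch to the other tiers. Only the 1 ns cache and 5 ms network figures come from the text above; the DRAM and SSD entries are assumed round numbers added purely for illustration.

```python
# Latencies in nanoseconds. Entries marked "assumed" are NOT from the article.
LATENCY_NS = {
    "nearest cache (article)": 1,
    "DRAM (assumed)": 100,
    "SSD read (assumed)": 100_000,
    "datacenter network worst case (article)": 5_000_000,
}

# Scaling rule for the analogy: 1 ns of machine time = 1 s of human time.
HUMAN_SECONDS_PER_NS = 1


def humanize(seconds: float) -> str:
    """Express a duration in the largest unit that fits."""
    for unit, size in (("weeks", 604_800), ("days", 86_400),
                       ("hours", 3_600), ("minutes", 60)):
        if seconds >= size:
            return f"{seconds / size:.1f} {unit}"
    return f"{seconds:.0f} seconds"


if __name__ == "__main__":
    for tier, ns in LATENCY_NS.items():
        print(f"{tier}: {humanize(ns * HUMAN_SECONDS_PER_NS)}")
```

With that stretch, the 5 millisecond worst case works out to a bit over eight weeks, which is where the sabbatical comparison lands.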

Clearly, those decades of work between hardware and software geeks on multi-threading, data-ordering, data promotion, appropriate memory and SSD sizing, (keep going, it was decades) were all pretty vital. The modern datacenter rack topology is now largely being driven by collaborative, hive-mind organizations like the Open Compute Project (OCP). And even with all that effort, many of those cores are still essentially thirsting for that next drink of data. There’s a very cogent person a few TechArena articles away from this one that noted it can be very disappointing to pay $1B for some AI hardware only to see $500M of work happen. These are not inconsequential decisions.

So what to do? First, don’t panic (towel and exploding planet optional). There are plenty of resources out there that can help optimize whatever you’re building. Look for similar organizations in your region or technology circle and ask questions; many will be very open if they’re not competing with you, some even if they are. Look to organizations like OCP as a guide for setting a proper configuration. Also, your software providers likely have a much better view of configurations that best run their work, mostly because of those decades of work. Finally, look to cloud options, since your provider will then be taking the risks.

And keep the analogy going. Maybe in the flat world of data analytics we’ll collectively find out what data fetch has us all going to the moon and back. By the way, is anyone else thirsty? And where the heck is the remote?

Sometimes We Wonder What Intelligence is Artificial

“If a machine is expected to be infallible, it cannot also be intelligent.”

- Alan Turing

“Shall we play a game?”

- Joshua, WarGames

Total aside that will become common in this space. We’ve been out camping to celebrate our 28th anniversary. How the heck she’s tolerated me that long is a story in itself, THE COMPANY only stood me for 26.5!

We were short on a bottle for the pre-dinner drink. Thirty minutes to the closest liquor store (relax, we needed groceries, too), and we found a Rebel 100 (Lux Row Distillers, Bardstown, KY). It’s made using a traditional wheated recipe. The correspondent and the lovely bride both have a fondness for the grassiness wheat adds, and there’s a little beyond-bourbon sweetness that you normally don’t get in a 100 proof taste. The smokiness might come from the barrel, or it might be that the AQI is well into the 200 range because half of Oregon is on fire. Anyway, cheers.

Your Humble Author (YHA) has a fascination with the stock market, mostly because compound interest provides to us, the willfully underemployed. One particular amusement these days is watching all the little Whos in Whoville screaming, “But we have AI!” when being dragged to the dust speck boiler of earnings results. First off, a quarter of the time it’s not AI, stop lying to your marketing department. We get that Machine Learning is esoteric and hard to explain, but it also generates results you can audit. Another quarter involves using someone else’s chatbot to produce not very meaningful results. That remaining half? The development department was looking for some “me time” and figured that a 60% accuracy rate was better than the dartboard they usually get from upper management, so they did an implementation hoping to get Friday afternoons off. There’s some good, but there’s a lot of not good.

AI has been around for a long time; the Turing Test is named after a really smart guy who passed in the 1950’s, after all. Some of us would probably say that the current rush on AI is due to the accumulation of data, but that’s a little misleading. There’s always been data sloshing around the world. It’s just that very little of it was in the right place, and it certainly was not in the right structure to be scrutinized. If some government-funded project wanted to study global temperatures, it had to do the work of getting the data from every ledger to one computer storage spot, in one format, with some serious programmers to write serious code to produce meaningful results.

The PC/Internet revolution of the 90’s started the ball rolling on creating a “place” for everything. After a couple decades of upper management demanding that everything go up in the cloud, we finally solved the access problem, but not the structure one. If that now bigger government-funded project wanted to take a look now, it’s not only got the old temperature data, but access to a billion backyard weather stations, all the articles about the prior art, and a serious amount of social media memes vamping on a repetitive Nelly tune. So now it’s down to figuring out how to access all the slosh in that giant container.

A more relevant aside than usual: YHA remembers being at an IBM Conference a few years back where a Watson executive told the audience that they were tracking potential flu epidemic outbreaks in part by monitoring Twitter for people posting the equivalent of, “I feel like crud today.” They were finding outbreaks about ten days faster than the CDC, which was tracking its standard measures like hospital data and pharmacy orders. So this AI stuff CAN work if you know what you’re doing.

Anyway, back when we were all calling it Big Data ten years ago, the structure problem was still a limiting factor in the analytics discussion. We were all about the V’s: Volume, Variety, Velocity. The real winning formula in analytics at that point was addressing the Variety by referencing more and more data types in their natural form, which eliminated the need for costly (money and time) Extract/Transform/Load (ETL) routines. We were right back to those serious programmers, serious code, meaningful if directional results.

Artificial Intelligence is a fundamentally lazy approach to the data problem. Lest you think that’s a condemnation, any good strategist will tell you that the best strategies are the lazy ones; the hard work is in the tactics. The basic approach of AI is to throw it at all the data and see what patterns it finds. Structure is less of an issue when your algos are seeking repetition independent of prior relevance. So all those companies claiming that AI will solve your problems are likely correct in the long term. Except…

All you data nerds have been thinking: “Dude, you forgot a V a couple paragraphs ago.” You’d be correct. As the data in the cloud continued to grow, we added Veracity to the equation. The sober geeks out there are still sifting the data before they attack it with AI, knowing that garbage in still produces garbage out. Those less experienced, or less aware that tactics are where the hard work hits, are likely to slow their actual decision processes by getting inconsistent results. If you’d like a practical example, hit up any chatbot or AI search engine with a conspiracy theory that goes against your grain, and discover the chaos it creates.
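For the sober geeks who want to see what "sifting before you attack it with AI" might look like, here is a minimal Python sketch using the backyard weather station example from earlier. Everything in it is an assumption made for illustration: the `Reading` record, the `sift` helper, the station names, and the temperature bounds are hypothetical, and real Veracity checks would go well beyond dropping duplicates and impossible values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    """One hypothetical backyard weather station report."""
    station_id: str
    timestamp: str   # ISO 8601 string, kept simple for the sketch
    temp_c: float


# Loose plausibility bounds chosen for illustration only.
MIN_TEMP_C = -90.0
MAX_TEMP_C = 60.0


def sift(readings):
    """Drop exact duplicates and implausible temperatures, preserving order."""
    seen = set()
    clean = []
    for r in readings:
        if r in seen:
            continue  # same station, time, and value already recorded
        seen.add(r)
        if not (MIN_TEMP_C <= r.temp_c <= MAX_TEMP_C):
            continue  # garbage in; keep it from becoming garbage out
        clean.append(r)
    return clean


raw = [
    Reading("backyard-001", "2024-07-01T12:00", 24.5),
    Reading("backyard-001", "2024-07-01T12:00", 24.5),   # duplicate upload
    Reading("backyard-002", "2024-07-01T12:00", 999.0),  # sensor glitch
    Reading("backyard-003", "2024-07-01T12:00", 23.9),
]
clean = sift(raw)
print(len(raw), "readings in,", len(clean), "readings kept")
```

The point is less the specific rules than the order of operations: the cheap, auditable filter runs first, and only what survives goes on to the pattern hunting.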

So financial results won’t actually just come at the behest of, “We have AI!” They’ll come when companies build in the tools and training to sort the data and make the sober decisions. We’ll all be able to see this shake out in front of us, even as the new types of data hitting the cloud create a need to do it all over again in a more complex way. As always when dealing with your own personal compounding, caveat emptor, and happy hunting.

Taboola Taps AI to MicroTarget Advertising

In a recent episode of the TechArena Data Insights series, my co-host Jeniece Wnorowski and I had an insightful conversation with Ariel Pisetzky, the Vice President of Information Technology and Cyber at Taboola, about the transformative impact of data and AI on ad placement. Our discussion revealed how these advanced technologies are redefining the advertising landscape, making ad placements more efficient and targeted to business objectives.

The Shift to AI-Driven Advertising

Ariel emphasized that at Taboola, the mission is to "connect people with content they may like but never knew existed." This mission is powered by sophisticated algorithms that analyze user behavior, preferences, and context to deliver highly personalized content. Ariel noted, "Our systems are designed to process enormous amounts of data in real-time to understand user intent and deliver the most relevant ads." As someone whose business in part is driving micro-targeted paid media, I was delighted to learn from Ariel about what he and the team at Taboola are delivering.

I was also delighted to hear that AI is at the core of Taboola's strategy for ad placement. By utilizing machine learning models, Taboola can predict which ads are most likely to engage individual users. Ariel explained, "Our AI systems analyze vast amounts of data in real-time to understand user intent and preferences. This allows us to serve ads that are not only relevant but also engaging."

These AI algorithms take into account various factors, including browsing history, time of day, and even the type of device being used, ensuring that ads are placed in the optimal context, thus maximizing user interaction.

Dynamic and Contextual Ad Placements

One of the key innovations we discussed with Ariel is Taboola's approach to dynamic and contextual ad placements. Traditional ad placement strategies often rely on static parameters, but Taboola's AI-driven platform can adapt in real-time. For instance, if a user frequently reads tech blogs in the evening, Taboola's system might prioritize tech-related ads during that time frame.

Ariel highlighted this capability, stating, "Dynamic ad placements allow us to adjust the content based on immediate user context. This not only improves the user experience but also enhances ad performance for our clients."
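To make the tech-blogs-in-the-evening example concrete, here is a toy Python sketch of time-of-day prioritization. It is not Taboola's system or API; the function names (`hour_bucket`, `build_profile`, `rank_ads`), the three-bucket day, and the sample history are all hypothetical, and a production platform would rely on learned models and many more signals than a simple count.

```python
from collections import Counter


def hour_bucket(hour):
    """Map an hour (0-23) to a coarse part of day; overnight counts as evening."""
    if 5 <= hour < 12:
        return "morning"
    if 12 <= hour < 18:
        return "afternoon"
    return "evening"


def build_profile(history):
    """Count how often the user read each topic in each part of day.

    history is a list of (hour, topic) pairs.
    """
    return Counter((hour_bucket(h), topic) for h, topic in history)


def rank_ads(ad_topics, profile, current_hour):
    """Order ad topics by how often the user read that topic at this time of day."""
    bucket = hour_bucket(current_hour)
    # Counter returns 0 for unseen (bucket, topic) pairs, so unknown topics sort last.
    return sorted(ad_topics, key=lambda topic: profile[(bucket, topic)], reverse=True)


# Hypothetical reading history: tech in the evening, news in the morning.
history = [(20, "tech"), (21, "tech"), (22, "tech"),
           (8, "news"), (9, "news"), (20, "sports")]
profile = build_profile(history)

evening = rank_ads(["news", "sports", "tech"], profile, current_hour=21)
morning = rank_ads(["news", "sports", "tech"], profile, current_hour=8)
print("evening:", evening)
print("morning:", morning)
```

The same inventory of ads comes back in a different order depending on the hour, which is the contrast with static placement parameters.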

Predictive analytics is another area where Taboola excels. By analyzing historical data and user behavior patterns, the platform can forecast future actions and preferences. This predictive power enables advertisers to stay ahead of trends and tailor their campaigns accordingly.

As Ariel mentioned, "Predictive analytics gives us a glimpse into what users might be interested in next. This foresight is invaluable for creating timely and relevant ad campaigns that capture user interest before it peaks."

Optimized Platforms Deliver to Taboola’s Vision

In our discussion, Ariel highlighted the collaboration between Taboola and Solidigm, focusing on how their combined efforts are enhancing data management and AI capabilities. Ariel mentioned that Solidigm’s advanced data storage solutions play a crucial role in supporting Taboola's AI infrastructure. He noted, "Solidigm's innovations in data storage technology have allowed us to manage and process vast amounts of data more efficiently, which is essential for our AI-driven ad placement systems."

Ariel further explained that the high performance and reliability of Solidigm's storage solutions ensure that Taboola's AI models can access and analyze data in real-time, leading to more accurate and timely ad placements. "With Solidigm, we're able to scale our operations and maintain high performance even as our data needs grow," he added. This partnership exemplifies how cutting-edge storage technology can support the demanding requirements of modern AI applications, enabling more effective and personalized advertising strategies.

Challenges and Ethical Considerations

Despite the collective advancements Taboola has made to its platform, Ariel acknowledged the challenges and ethical considerations involved in using AI and data for ad placement. Privacy concerns and data security are paramount, and Taboola is committed to maintaining high standards in these areas. "We are constantly evolving our practices to ensure user data is handled with the utmost care and transparency," he emphasized.

Looking ahead, Taboola plans to further integrate AI capabilities to refine ad placements. The company is exploring the use of deep learning models to enhance content recommendations and improve ad targeting accuracy. Ariel shared, "Our goal is to push the boundaries of what's possible with AI, making our ad placements smarter and more intuitive." I, for one, cannot wait to see what is on the horizon as Taboola continues to spearhead innovation in this important arena for marketers.

For more insights from this episode, you can listen to the full conversation on TechArena's podcast.

About Solidigm:

Data storage requirements are evolving rapidly with the explosion of the AI era, and it's important to find the right partner that can provide the flexibility and breadth for each specific AI application. Solidigm is a global leader in innovative NAND flash storage solutions with a comprehensive portfolio of SSD products based on SLC, TLC, and QLC technologies. Headquartered in Rancho Cordova, California, Solidigm operates as a standalone U.S. subsidiary of SK hynix with offices across 13 locations worldwide.

Exploring Advanced Internet Monitoring Solutions with Mehdi Daoudi of Catchpoint Systems

In this Tech Arena episode, Allyson Klein interviews Mehdi Daoudi, CEO of Catchpoint, on internet monitoring, observability innovations, and AI's impact on automation. Discover their latest tools enhancing performance.

Catching Up with Urban Machine and AI Fueled Construction Reclamation

For those who listen to the TechArena podcast, you may recall an episode featuring Urban Machine, a leading startup in the construction reclamation space whose solution creatively combines advanced robotics and AI to “clean” salvaged lumber and ready it for a second use in new building construction. When you consider that up to 25% of landfill is derived from construction materials, it is apparent why solutions that embrace circularity are being rapidly adopted. Urban Machine has garnered a lion’s share of attention in this arena, with accolades at SXSW and, more recently, being named a top 10 startup to know by Climate Insider. Urban Machine also collected recognition from the US Green Building Council California with a 2024 Mighty Materials Award, underscoring the power of reclaimed materials to green construction practices.

We were curious to check in with Urban Machine on the progress it has made in delivering this breakthrough tech to construction sites. The eager engineers at the company report that they are hard at work readying their first production machine for onsite delivery to customers. Check out this video to see the latest in deployment advancement. I wouldn’t be surprised at all to see this solution garner more than its unfair share of the >$20 million in US EPA funding for clean manufacturing materials.

Want to learn more about Urban Machine? Check out my podcast with Co-Founder and CTO Andrew Gillies as he describes how the machine has come into being, the technologies behind being able to pinpoint fastener locations in wood, pry fasteners out with minimal wood damage, and deliver a safe product back into construction sites, and how developers can engage with the team to get multiple generations out of lumber.

Advanced Packaging Innovations in the Semiconductor Industry with Chee Ping Lee of Lam Research

In this podcast, learn about the challenges of silicon advancement, the importance of advanced packaging, and how Lam is driving breakthroughs to support the future of AI and chiplet ecosystems.
