Empowering Innovation with Solidigm

Explore the cutting edge of computing from data center to edge, including solutions that unlock the AI pipeline, all backed by Solidigm's leading SSD portfolio.

Cracking the Code on Storage Efficiency with Solidigm

Explore myths, metrics, and strategies shaping the future of energy-efficient data centers with Solidigm’s Scott Shadley, from smarter drives to sustainability-ready architectures.

Inside Data-Centric Strategy with Equinix and Solidigm

Equinix’s Glenn Dekhayser and Solidigm’s Scott Shadley join TechArena to unpack hybrid multicloud, AI-driven workloads, and what defines a resilient, data-centric data center strategy.

Powering AI: Solidigm’s Vision for the Future of Storage

Industry leader Scott Shadley reveals how Solidigm’s innovations in SSDs, partnerships, and architecture are reshaping data centers to meet the rising demands of AI, edge, and enterprise workloads.

Inside Entertainment’s Storage Revolution with Dell Technologies

In highly collaborative industries like media and entertainment, time isn’t just money; it’s opportunity. Giving your animators, designers, and visual effects artists more time means they have more space to coordinate and develop better creative outcomes. And when you have hundreds of collaborators, saving each one just a few minutes every hour adds up to a substantial amount of time spent on creative endeavors instead of, for example, waiting for software to load.

I recently had the opportunity to explore how storage innovation is enhancing collaborative workflows in the media and entertainment industry with Alex Timbs, Senior Business Development Manager of Media and Entertainment at Dell Technologies, and Scott Shadley, Leadership Marketing Director at Solidigm. During our Data Insights episode, it became clear that changes in content production workflows from pre-production to final edits are causing a fundamental shift in how storage supports content creation, moving flash storage from “nice to have” to essential for modern production pipelines.

Alex brought a unique perspective to our conversation, having spent 15 and a half years at Animal Logic (now Netflix Animation) before joining Dell. His experience as the company scaled from 80 to over 1,000 people globally provided compelling real-world context for understanding storage evolution in creative environments.

Alex saw firsthand the “serendipitous performance improvements” that emerge when organizations transition to flash storage: saved minutes add up to hours of freed-up creative time, and the gains go far beyond what traditional metrics might predict. At Dell, he has worked with customers who achieved this as well. He cited a recent Dell film studio customer who achieved 100x performance improvements: not 100% gains, but workflows that are literally 100 times faster.

The need for faster storage has recently been accelerated by AI and real-time workloads that run on graphics processing units (GPUs). Where 24GB of video random access memory (VRAM) used to be sufficient, today’s workloads often demand 96GB or more. To keep these GPUs fed, VRAM must be filled and flushed at extreme speeds, making high-performance flash storage no longer a luxury but an absolute necessity.

Scott emphasized how storage has transformed from an afterthought to a critical performance enabler. The concurrent access patterns required by modern workflows—where multiple users need simultaneous access to large files alongside their associated metadata—can only be efficiently handled by flash technology. Existing spinning HDD storage simply cannot deliver the random access performance required for today’s collaborative, high-resolution content creation environments.

Dell’s AI Factory serves as a robust foundation for media and entertainment organizations striving to lead amid surging data growth, new content formats, and adoption of AI-powered workflows. The platform uniquely combines validated, full-stack solutions, enabling companies to start small and scale incrementally, directly addressing the sector’s dual mandates of technological advancement and financial discipline.

At its core, Dell AI Factory leverages the PowerScale family: from the cost-effective F210, optimized for studio or departmental use, to the high-density, high-performance F910 designed for the most demanding enterprise-scale operations. This architecture empowers customers to only pay for what they need today, with the confidence they can scale both performance and capacity linearly as their needs evolve, eliminating the risks of overprovisioning or stranded investment.

The result is a unified platform that streamlines collaborative workflows (including editing, visual effects, and broadcast), consolidates data silos, and supports both on-premises and multi-cloud deployment, all with high security and efficiency. Multiple industry-leading media organizations already rely on PowerScale for everything from 4K/8K post-production to real-time virtual production and generative AI–driven analytics. Dell’s integrated data reduction, metadata solutions, and cyber protection further drive down operational costs, while the modular “grow as you go” model enables ongoing financial prudence. This makes the AI Factory a trusted partner: future-ready, validated by top global brands, backed by deep ISV partnerships, and proven to accelerate creative delivery while protecting the bottom line.

The edge computing dimension adds another layer of complexity and opportunity. A modern film production might have 10 cameras that are capable of capturing resolutions up to 17K, and the crew will want to start working with that immediately. Alex described in-camera visual effects (ICVFX) scenarios where directors give real-time creative feedback, viewing final-quality visual effects directly on on-set monitors. This surge in edge computing for ICVFX pushes the need for high-performance storage that can operate in demanding production environments, all while delivering the rock-solid reliability that tight shooting schedules require.

Interestingly, Alex compared today’s transformation to the shift from analog film to digital photography. Just as digital cameras delivered instant feedback and removed the high cost of mistakes tied to film processing, modern workflows in content production combine real-time creative feedback with minimal risk. This immediacy allows teams to iterate more often, experiment more boldly, and ultimately achieve stronger creative outcomes by removing traditional bottlenecks.

Solidigm’s collaborative approach resonates strongly with this philosophy. Rather than pushing customers toward the highest-performance solutions regardless of need, Scott described how their solutions lab and upcoming AI lab allow customers to test workloads before making commitments. This “try-before-you-buy” model helps organizations right-size their storage investments while ensuring they can achieve their performance objectives.

Looking ahead, both experts see storage demands continuing to accelerate. Organizations working in 4K today need to prepare for native 8K workflows tomorrow, requiring storage architectures that can scale both performance and capacity over multi-year timeframes.

The TechArena Take

The convergence of AI, real-time workflows, and edge computing is fundamentally reshaping storage requirements across industries, with media and entertainment serving as the proving ground for technologies that will eventually transform other verticals. As Alex noted, the future belongs to organizations that can make the most informed real-time decisions possible, and that capability fundamentally depends on having the right storage foundation in place. Dell and Solidigm’s partnership demonstrates how thoughtful collaboration can deliver solutions that scale from individual creators to global production companies.

For more insights on Dell’s storage solutions for media and entertainment, visit their website www.delltechnologies.com/powerscale or connect with Alex Timbs on LinkedIn. Learn more about Solidigm’s AI-focused storage solutions at solidigm.com/ai or reach out via LinkedIn to Scott Shadley.

Dell’s AI Data Innovation: When Storage Takes Command

In the not-so-distant past, data center storage was somewhat of an afterthought. You needed a place to gather data; you needed it to be reliable; and you needed it to be economical. And that’s pretty much where the conversation ended. Now in the era of AI workloads, storage is taking center stage for the critical role it plays in data activation. Having the right storage solutions in the right place provides the flexibility, efficiency, and security to feed AI at scale.

I recently had the opportunity to discuss this transformation with Saif Aly, senior product marketing manager at Dell, and Scott Shadley, leadership marketing director at Solidigm, and to explore how enterprise storage requirements are evolving in response to AI-driven workloads and data-intensive applications. During our TechArena Data Insights episode, it became clear that storage has evolved into the critical foundation enabling AI success.

The AI workload revolution has created unprecedented demands on storage infrastructure. As Saif explained, these workloads require sustained throughput, low latency, and massive scale simultaneously. The challenge extends beyond simple performance. Enterprises face data fragmentation across edge, core, and cloud environments, creating operational complexity that can lead to vendor lock-in and underutilized graphics processing unit (GPU) resources.

Dell’s response centers on their AI Data Platform, built on the principle that modern storage must support the entire data lifecycle. The PowerScale platform serves as the foundation, delivering what Saif described as unmatched performance improvements: 220% faster data ingestion and 99% faster data retrieval compared to previous generations. The introduction of MetadataIQ further accelerates search and querying capabilities, directly supporting AI workload requirements.

Scott emphasized how customer conversations have evolved beyond traditional capacity discussions to focus on “time to first data”—how quickly organizations can access information when they need it. In AI application workloads, different data types require varying levels of accessibility and performance characteristics. The challenge lies in understanding what data needs to sit directly adjacent to GPUs versus what can be retrieved from more distant storage tiers.

The discussion revealed how inference workloads, particularly retrieval-augmented generation (RAG) architectures, create unique storage demands. These systems require large datasets to be readily accessible for real-time referencing while simultaneously managing active data processing next to compute resources. Success depends on optimizing the balance between high-performance local storage and efficient data movement from archive locations.

While flash storage dominates high-performance applications, both experts acknowledged that hard disk drives (HDDs) retain value for cold and warm datasets. The key insight: not all data is equal, and successful architectures blend flash-based solid-state drives (SSDs) and HDD storage within unified namespaces to balance performance and cost considerations.

The conversation highlighted remarkable capacity evolution, with Saif recounting his amazement at holding Solidigm’s 122 TB drive, a device containing massive data volumes in a small form factor. This density revolution, progressing from 30 TB to 60 TB to 122 TB drives just in the last year, enables dramatic improvements in rack space efficiency, power consumption, and cooling costs while maintaining the throughput AI workloads demand.

Scott connected this capacity evolution to practical customer needs, explaining how optimization now focuses on the right bandwidth, density, and time-to-data characteristics rather than simply maximum speed. As storage capacity per device increases, the focus shifts to infrastructure optimization that delivers customer value through improved total cost of ownership and operational efficiency.

Real-world impact emerged through customer examples Saif shared. Kennedy Miller Mitchell, the studio behind the Mad Max franchise, used PowerScale to enable pre-visualization of entire scenes before filming. That capability allows directors to iterate creatively and make real-time decisions. Subaru leveraged the platform to manage exponentially growing data volumes, handling 1,000 times more files than previously possible and directly improving their AI-driven driver-assistance technology accuracy.

Looking ahead, both experts see storage demands continuing to accelerate, driven by AI’s exponential data growth and evolving workload requirements. As Saif noted, “the data explosion is not going to stop,” with AI both consuming and creating massive amounts of data. The distributed nature of modern computing—spanning edge, core, and cloud environments—requires storage solutions that provide consistent experiences and seamless data mobility across all locations.

The TechArena Take

The convergence of AI workloads, massive data growth, and distributed computing architectures is fundamentally reshaping enterprise storage from a cost center to a strategic enabler. Dell and Solidigm’s partnership demonstrates how thoughtful collaboration can deliver solutions that scale from individual creators to global enterprises while addressing the critical balance between performance, capacity, and cost efficiency. As storage continues to assert its place as a foundation of modern workloads, organizations that invest in flexible, high-performance architectures today will be best positioned to capitalize on tomorrow’s AI-driven opportunities.

For more insights on Dell’s enterprise storage solutions, visit Dell.com/PowerScale or connect with Saif Aly on LinkedIn. Learn more about Solidigm’s AI-focused storage innovations at solidigm.com/AI or reach out via LinkedIn to Scott Shadley.

Storage Is the Catalyst Revolutionizing Enterprise AI

The enterprise AI landscape is undergoing a fundamental transformation. While organizations have focused heavily on graphics processing unit (GPU) compute power and model sophistication, a critical infrastructure component has emerged as the new performance differentiator: storage. The Supermicro Open Storage Summit, running from August 12 to 28 with online sessions from leading solutions providers, promises to reveal how innovative storage strategies are delivering breakthrough performance improvements that could reshape your AI deployment economics.

The Hidden Performance Multiplier in AI Infrastructure

As organizations scale from AI experimentation to production deployment, they’re discovering that inference workloads demand different storage characteristics than training pipelines. The data tells a compelling story: enterprises deploying solid-state drive (SSD) storage solutions are seeing 10x to 20x throughput improvements, 4,000x input/output operations per second (IOPS) scaling improvements, and up to 40% total cost of ownership (TCO) reductions compared with traditional storage solutions.

These aren’t theoretical gains. Real-world implementations for retrieval-augmented generation (RAG) workloads have demonstrated that storage optimization with SSDs can deliver 70% increases in queries per second while simultaneously reducing memory footprint by 50%. For enterprises struggling with the economics of AI deployment, these performance multipliers represent an opportunity to maximize return on investment.

Two Critical Sessions You Can’t Afford to Miss

The Supermicro Open Storage Summit expands on these opportunities with two must-attend sessions that tackle the most pressing storage considerations facing enterprise AI deployments today.

Storage to Enable Inference at Scale (August 19, 10:00 AM PT) brings together industry leaders from Solidigm, Supermicro, NVIDIA, Cloudian, and Hammerspace to explore how new storage protocols and distributed inference frameworks are enabling large-scale inference processing. This session will reveal how organizations are moving beyond traditional storage approaches to deploy validated infrastructure optimized for GPUs that unlocks real-time performance at scale.

Enterprise AI Using RAG (August 27, 10:00 AM PT) dives deep into RAG, one of the most critical enterprise AI use cases. With experts from Solidigm, Supermicro, NVIDIA, VAST Data, Graid Technology, and Voltage Park, this session addresses how enterprises can operationalize generative AI securely and efficiently while maintaining proximity to their most valuable data assets.

The SSD Revolution: Beyond Traditional Storage Thinking

One of the most compelling insights emerging from enterprise AI deployments challenges conventional storage wisdom. Solidigm’s recent breakthrough work, which will be discussed in the upcoming sessions, demonstrates that strategically offloading data from memory to high-performance SSDs doesn’t just reduce costs: it actually improves performance in many scenarios.

The company’s innovative approach involves moving model weights and RAG database components from expensive dynamic random-access memory (DRAM) to optimized SSDs, achieving better performance at lower cost. In one demonstration involving a 100 million vector dataset, this approach delivered 57% less DRAM usage while maintaining or even improving query performance. The economic implications are huge, as enterprises can run complex models on GPUs that would otherwise lack sufficient onboard memory.

From Data Center Footprint to Power Efficiency: The Complete TCO Story

The storage optimization story extends far beyond raw performance metrics. In the upcoming sessions, Solidigm will also discuss how cutting-edge storage solutions are demonstrating dramatic improvements in TCO across the entire infrastructure stack.

Take a practical example: a 50-petabyte dataset deployment with 12 NVIDIA H100 systems. Traditional HDD-based approaches require nine racks consuming 54 kilowatts. Deploy high-density 122TB SSDs, and that footprint shrinks to a single rack, with up to 90% power reduction and a 50% increase in available GPU footprint.
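To make the scale of that example concrete, the quoted figures can be run through some back-of-the-envelope arithmetic. The sketch below is illustrative only: it assumes decimal units (1 PB = 1,000 TB) and counts raw capacity with no allowance for redundancy or spare drives, and the function names are hypothetical. It does not attempt to reproduce the 50% GPU footprint figure, which depends on layout details not given in the text.

```python
import math

# Figures quoted in the example above.
DATASET_PB = 50               # dataset size, petabytes
SSD_TB = 122                  # capacity of one high-density SSD, terabytes
HDD_RACKS = 9                 # racks in the HDD-based design
HDD_POWER_KW = 54             # power drawn by those racks, kilowatts
SSD_RACKS = 1                 # racks in the SSD-based design
MAX_POWER_REDUCTION_PCT = 90  # "up to 90%" power reduction

# Assumption: decimal units, raw capacity only (no redundancy overhead).
TB_PER_PB = 1000


def drives_needed(dataset_pb: int, drive_tb: int) -> int:
    """Minimum number of drives whose raw capacity covers the dataset."""
    return math.ceil(dataset_pb * TB_PER_PB / drive_tb)


def power_after_reduction(baseline_kw: float, reduction_pct: float) -> float:
    """Power remaining after cutting the baseline by reduction_pct percent."""
    return baseline_kw * (100 - reduction_pct) / 100


if __name__ == "__main__":
    print("SSDs needed (raw capacity):", drives_needed(DATASET_PB, SSD_TB))
    print("Racks freed:", HDD_RACKS - SSD_RACKS)
    print("Power at the full 90% reduction (kW):",
          power_after_reduction(HDD_POWER_KW, MAX_POWER_REDUCTION_PCT))
```

Under these assumptions, roughly 410 drives cover the dataset, eight racks are freed, and the best-case power draw is about a tenth of the HDD baseline. A real deployment would add drives for data protection, so the true count would be higher.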

These efficiency gains matter more than ever as enterprises grapple with data center space constraints, cooling challenges, and escalating power costs.

The TechArena Take: The Storage Revolution Is Here

Organizations that leverage cutting-edge storage optimization strategies are positioning themselves for sustainable competitive advantage. While competitors struggle with infrastructure costs and performance limitations, early adopters are achieving superior AI outcomes at lower total cost of ownership.

The ability to deploy more sophisticated models, process larger datasets, and deliver faster inference responses directly translates to better customer experiences and operational efficiency.

The window for competitive advantage is narrowing rapidly. As these storage optimization techniques become mainstream, the organizations that implement them first will establish performance and cost advantages that become increasingly difficult for competitors to match.

Register Now

The Supermicro Open Storage Summit provides an opportunity to learn directly from teams of industry leaders who are defining the future of AI infrastructure. With sessions featuring experts representing all layers of the stack, you’ll gain access to the collective expertise of the companies driving AI infrastructure innovation. The summit’s focus on real-world implementations, demonstrated performance improvements, and practical deployment strategies makes it essential viewing for any organization serious about scaling AI effectively.

Don’t let storage bottlenecks limit your AI ambitions. Register below today and discover how strategic storage optimization can transform your enterprise AI performance while dramatically improving your deployment economics.

Storage to Enable Inference at Scale | August 19, 10:00 AM PT

Enterprise AI Using RAG | August 27, 10:00 AM PT

Dell on Storage Innovation in the AI-Driven Enterprise

Dell and Solidigm leaders explore how modern storage—flash, SSDs, and flexible architectures—enables AI, accelerates performance, and helps enterprises manage data across edge to cloud.

Insights with sayTEC on Sovereign IT Infrastructure Revolution

Fragmented approaches to security and IT solutions have frustrated the private and public sector for decades, creating a need for costly integrations while still leaving vulnerabilities. My recent Data Insights interview with Jeniece Wnorowski, director of industry expert programs at Solidigm, and Bora Güzey, senior IT consultant at sayTEC, revealed how organizations are finally solving this problem as they demand unified, security-first solutions that eliminate the complexity of these legacy approaches to IT architectures.

During our conversation, Bora provided an in-depth look at how sayTEC is pioneering sovereign IT infrastructure by fundamentally reimagining how security, access, and storage work together. This transformation begins with recognizing that traditional IT models treat these critical components as separate, siloed systems — an approach that increases risk, cost, and administrative overhead while leaving organizations vulnerable to evolving cyber threats.

The Unified Security-First Approach

Bora highlighted a key differentiator that sets sayTEC apart from conventional solutions: their holistic approach to IT security. sayTEC has built a unified platform where access control, data protection, and system performance are integrated from the ground up. This security-first architecture is based on zero trust and includes built-in regulatory compliance. The combination ensures that protection isn’t an add-on but is embedded across every layer of the system, delivering what Bora described as “military-grade security without compromising performance or cost efficiency.”

The company’s hyperconverged infrastructure (HCI) platform combines compute, S3 object storage, backup, and secure remote access into a single integrated system. Thanks to partnerships with companies like Solidigm and Virtuozzo, sayTEC can deliver impressive performance metrics — S3 storage speeds of up to 150 gigabytes per second and seamless scaling up to 200 petabytes, all with zero downtime.

Zero Trust and Sovereign Data Handling

As enterprises grapple with increasingly sophisticated cyber threats, Bora addressed how sayTEC’s zero trust architecture goes beyond basic implementations. Their sayTRUST VPSC (Virtual Private Secure Communication) technology actively monitors the full communication path, blocking unauthorized traffic before it even enters the tunnel. The system deploys pre-tunnel verification, token-based access control, and layered encryption, including perfect forward secrecy, to create what they call a “darknet environment” for secure communications.

For sovereign data handling — a critical concern for government and enterprise customers dealing with sensitive information — sayTEC’s systems ensure full control over where and how data is stored and accessed. This resonates particularly strongly with organizations dealing with critical infrastructure or sensitive personal data, where sovereignty and adaptability are paramount.

Scalability Without Compromise

One of the most impressive aspects of sayTEC’s solution is their promise of dynamic scaling without system downtime. Bora explained how their modular architecture allows customers to start with as few as three nodes and scale up to hundreds without interrupting operations. This is achieved through distributed workloads, erasure coding for redundancy, and live data migration capabilities.

For organizations facing rapid growth or stringent regulatory demands, this means no painful transitions or migrations. They can grow their infrastructure in real time while maintaining full compliance, business continuity, and budget predictability.
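For readers unfamiliar with erasure coding, the idea can be illustrated with the simplest possible scheme: a single XOR parity shard spread across three nodes. This is a teaching sketch, not a description of sayTEC's implementation, which the interview did not detail. Production systems typically use stronger codes such as Reed-Solomon that tolerate multiple simultaneous failures. All names below are hypothetical.

```python
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    if len(a) != len(b):
        raise ValueError("shards must be the same length")
    return bytes(x ^ y for x, y in zip(a, b))


def make_parity(data_shards: list[bytes]) -> bytes:
    """Parity shard: the XOR of all data shards."""
    return reduce(xor_bytes, data_shards)


def recover_missing(surviving_shards: list[bytes]) -> bytes:
    """Rebuild the single missing shard by XOR-ing every surviving shard,
    data and parity alike."""
    return reduce(xor_bytes, surviving_shards)


if __name__ == "__main__":
    # Hypothetical three-node layout: two data shards plus one parity shard.
    node_a = b"frame-01"
    node_b = b"frame-02"
    node_c = make_parity([node_a, node_b])

    # Simulate losing node_b and rebuilding it from the other two nodes.
    rebuilt = recover_missing([node_a, node_c])
    print("node_b recovered:", rebuilt == node_b)
```

With this layout, any one of the three nodes can fail and its contents can be rebuilt from the other two, at the cost of storing one extra shard rather than a full copy of the data.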

Strategic Technology Partnerships

Bora also emphasized the importance of strategic partnerships in delivering exceptional value to customers. The company’s research and development collaboration with Solidigm enables them to leverage high-performance NVMe drives that dramatically reduce latency while optimizing energy efficiency. These partnerships have allowed sayTEC to reduce infrastructure costs by over 50%, accelerate deployment times, and deliver a return on investment, often within just 12 months.

Market Traction and Future Roadmap

sayTEC’s solutions are particularly well-suited for sectors where security, compliance, and scalability are non-negotiable. The company has seen strong demand in finance, public sector, defense, and health care — industries that deal with sensitive data and face constant regulatory scrutiny. In addition, their simplified deployment model and competitive cost structure are increasingly attracting medium-sized enterprises looking for secure, future-proof IT systems without requiring large in-house expertise.

Looking ahead, Bora outlined an ambitious roadmap that includes hyperconverged infrastructure with GPU computing for AI and machine learning workloads, enhanced zero trust for mobile environments, privileged access management integration, and plans to double S3 storage acceleration to 300 gigabytes per second. The company also plans to expand compute power support to 256 cores per node and to scale up to one petabyte per node.

The TechArena Take

In the rapidly evolving landscape of enterprise IT security, sayTEC’s approach represents a significant departure from traditional fragmented architectures. By delivering a truly unified, security-first platform that combines infrastructure, access, and storage into a single system, they’re addressing fundamental challenges that have plagued enterprise IT for decades.

The company’s focus on plug-and-play systems that simplify complexity while delivering military-grade security positions them well for the growing demand for sovereign IT solutions, particularly in Europe, where data sovereignty regulations are becoming increasingly stringent.

Check out sayTEC’s full range of solutions at www.saytec.eu. To connect with Bora and learn more about their sovereign IT infrastructure approach, you can reach out via LinkedIn or email for direct inquiries and demo opportunities.

Dell Leads the Entertainment Industry’s Storage Revolution

Dell and Solidigm explore how flash storage is transforming creative pipelines—from real-time rendering to AI-enhanced production—enabling faster workflows and better business outcomes.

Hypertec Shrinks the Data Center with Immersion-Born Servers

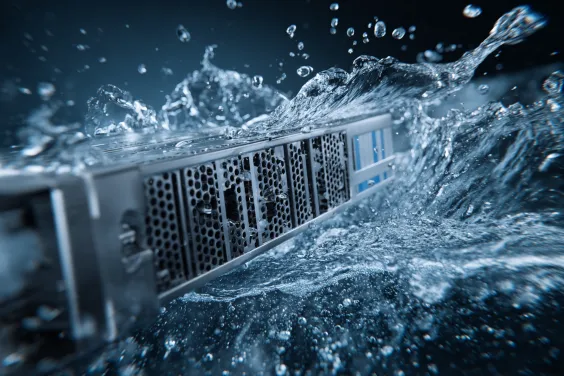

I recently sat down with Solidigm’s Jeniece Wnorowski and Mohan Potheri, principal solutions architect at Hypertec, to unpack how immersion cooling is reshaping data-center economics for AI and high-performance computing (HPC). During our discussion, it became clear that the biggest constraint on AI progress isn’t silicon — it’s keeping that silicon cool. Hypertec, founded in 1984 and now shipping over 100,000 servers a year to customers in more than 80 countries, has spent four decades learning how to squeeze more compute into less space without breaking the power budget, an experience that set the stage for our conversation.

Mohan painted a sobering picture of an industry straining under the weight of its own momentum. AI, HPC, and edge-computing workloads have pushed power and cooling demand to record highs just as sustainability-focused goals demand lower energy footprints. Operators face a conflicting mandate: deploy clusters faster than ever, but do so with tighter efficiency targets and, in many sites, within real-estate footprints that can’t grow any further. Space-constrained facilities must find ways to condense more compute while still meeting aggressive thermal budgets, all without blowing out capital or operating expenses. These pressures, he said, turn traditional air-cooled data centers into bottlenecks the moment racks tip into multi-kilowatt territory.

Hypertec’s answer is to start with liquid rather than retrofit for it. The company’s single-phase "immersion-born" servers live permanently in dielectric fluid, eliminating fans and chillers and cutting cooling power by roughly 50% while driving site-level power usage effectiveness (PUE) down to about 1.03.

Because every component is designed for submersion from day one, the servers avoid material-compatibility problems that plague air-cooled hardware dipped into tanks after the fact, and they let central processing units (CPUs) and graphics processing units (GPUs) sustain 90-95% of peak clocks instead of throttling under heat. A 10-megawatt deployment that would normally sprawl across 100,000 square feet collapses into roughly a tenth of that footprint, and Hypertec’s field data shows hardware lasting up to 60% longer thanks to the vibration-free, contaminant-free bath.
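The PUE and footprint figures above can be turned into rough numbers. PUE is the ratio of total facility power to IT equipment power, so a PUE of 1.03 implies about 3% overhead on top of the IT load. The sketch below assumes the 10-megawatt figure refers to IT load (the text does not say) and uses hypothetical function names.

```python
def facility_power_kw(it_power_kw: float, pue: float) -> float:
    """Total facility power implied by an IT load and a PUE value."""
    return it_power_kw * pue


def overhead_kw(it_power_kw: float, pue: float) -> float:
    """Non-IT power (cooling, power distribution, etc.) implied by the PUE."""
    return facility_power_kw(it_power_kw, pue) - it_power_kw


def power_density_w_per_sqft(power_kw: float, area_sqft: float) -> float:
    """Power density in watts per square foot."""
    return power_kw * 1000 / area_sqft


if __name__ == "__main__":
    IT_LOAD_KW = 10_000        # assumption: the 10 MW quoted is IT load
    PUE = 1.03                 # figure quoted in the text
    AIR_AREA_SQFT = 100_000    # conventional footprint quoted in the text
    IMMERSION_AREA_SQFT = AIR_AREA_SQFT / 10  # "roughly a tenth"

    print("Overhead at PUE 1.03 (kW):", round(overhead_kw(IT_LOAD_KW, PUE), 1))
    print("Density, conventional (W/sq ft):",
          power_density_w_per_sqft(IT_LOAD_KW, AIR_AREA_SQFT))
    print("Density, immersion (W/sq ft):",
          power_density_w_per_sqft(IT_LOAD_KW, IMMERSION_AREA_SQFT))
```

On these assumptions, the facility spends roughly 300 kW on everything other than IT equipment, and shrinking the footprint to a tenth raises power density tenfold, from about 100 to about 1,000 watts per square foot.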

Tanks roll in pre-assembled, set up in under 10 minutes, and fill with fluid in less than half an hour, giving operators a shortcut from loading dock to AI production. Add immersion-ready storage nodes that put as much as two petabytes beside the compute they feed, plus 800 gigabit-per-second networking, and Hypertec delivers a dense, sustainable, and rapidly deployable platform that sidesteps the very constraints throttling its air-cooled peers.

Before we wrapped, Mohan shifted the spotlight to storage—the quiet partner that can still slow an otherwise cutting-edge system. He explained that if data can’t reach the processors quickly, even the fastest GPUs and CPUs end up waiting. To avoid that pinch-point, Hypertec extends its immersion approach to storage as well, placing dense drive enclosures in the same fluid bath and on the same high-throughput fabric as the compute nodes. By treating cooling, compute, and data as one integrated stack, the company keeps every component working in sync and lays a cleaner path to future scale.

The TechArena Take

What’s the TechArena take? Together, these solutions make a compelling argument: immersion isn’t a niche experiment but a practical response to AI’s insatiable appetite for watts, racks, and real estate. Hypertec’s immersion-born solutions show how vendors can rethink server design to meet that challenge head-on—reducing energy, shrinking footprints, extending equipment life, and freeing budgets to buy more compute instead of more chillers.

Listen to the full conversation here to learn how immersion cooling is quickly moving from “interesting” to inevitable.

Immersion Cooling: Revolutionizing AI & Edge Computing

Allyson Klein and Jeniece Wnorowski welcome Mohan Potheri of Hypertec to explore how immersion cooling slashes energy use, shrinks data-center footprints, and powers sustainable, high-density AI, HPC, and edge solutions on this Data Insights episode. Find the audio-only podcast here.

SayTEC Powers Scalable, Secure, Sovereign IT Infrastructure

SayTEC redefines IT with a zero trust, hyper-converged platform delivering sovereign cloud, seamless scalability, and military-grade security for critical industries.

Antillion Brings Data Center Performance to the Tactical Edge

Antillion is a UK-based technology company focused on edge outcomes that brings together diverse talent to deliver innovative, user-centric solutions through collaborative partnerships with customers.

The company emphasizes research and development, maintaining cutting-edge capabilities at their London-area facility equipped with CNC machines, 3D printers, and quality assurance systems, while their team of engineers and system designers create visually stunning, functional products that blend modern design with advanced technology. Antillion's mission centers on simplifying complexity and addressing genuine user needs through products that provide immediate positive experiences, supported by comprehensive training, expert on-site assistance, and a commitment to exceptional craftsmanship that drives both trust and user satisfaction.

We caught up with Alistair Bradbrook, Antillion's founder and COO, to learn how they utilize storage technologies to bring tactical data center performance to the most challenging edge environments.

1) What are some of the key challenges your customers face related to generating, storing and processing high volumes of data?

At Antillion, we build for customers operating where real-world demands push technology to its limits. These are environments that are harsh, unpredictable, and often disconnected — where you can’t count on infrastructure, power, or even a network signal.

The challenges? They’re complex and relentless.

First, there’s the environment itself. We’re talking about kit that needs to survive inside armoured vehicles filled with dust or bolted to poles in sub-zero Arctic winds. These aren’t lab conditions — this is real-world brutality where traditional IT just can’t cope.

Then there’s SWaP – size, weight, and power. We design platforms that can be carried in a rucksack or strapped inside a vehicle. Performance is still expected — but within incredibly tight physical and power constraints. That’s a huge design challenge.

Connectivity’s another big one. Our customers often operate in degraded or disconnected networks. There’s no cloud to rely on. So, everything — from data ingestion to processing and decision-making — needs to happen at the point of contact. Locally. Instantly.

The operational tempo is relentless too. When you’re in a mission-critical situation, latency isn’t an inconvenience — it’s a risk. Insight has to be real-time. You’re talking about sensor data flowing into compute, through analysis, and into an actionable decision — in seconds or less.

And lastly, usability under pressure. These systems aren’t being deployed by sysadmins — it could be soldiers, field engineers, or emergency responders. There’s no time for training manuals. It just needs to work — fast, reliably, and intuitively. That’s what we focus on delivering.

2) How are you helping to address these challenges with the products and services you provide?

We’ve designed our PACE platforms from the ground up to bring data centre-grade capability to the edge — without compromising on performance, durability, or usability.

For us, it always starts with form and function. We don’t design for static data centre environments — we design for rooftops, vehicle interiors, trenches, and backpacks. Half-width, short-depth, modular hardware that’s built to fit real-world environments. That’s the basis of the PACE design language.

On the compute side, we’re integrating serious horsepower. We’re talking AMD EPYC, Intel Xeon, and even ARM — plus accelerators from NVIDIA and others — packed into ruggedized, sealed, and IP-rated platforms that can be mounted in a vehicle or carried by hand. These systems aren’t just tough — they’re powerful.

Storage is a big part of the picture, too. Solidigm’s EDSFF SSDs have been a game-changer for us. We now offer systems that support the 122TB D5-P5336 from Solidigm — and they’re holding up brilliantly in high-vibration, high-temperature scenarios. Whether it’s a wearable system or something mounted, we can keep massive volumes of data local, reliable, and fast.

Then there’s the design philosophy. We obsess over usability — intuitive deployment, straightforward servicing, and no-nonsense operation. These systems have to work for people in the field, under pressure. The goal is always the same: the tech disappears, the mission stays front and centre.

Whether it’s the ultra-portable PACE AIR or the fully ruggedized PACE FRONTIER, we’re not just making edge compute possible — we’re making it powerful, deployable, and trusted in the toughest environments.

3) How has the shift to high-density SSDs impacted your ability to handle massive-scale workloads, particularly in industries like military, industrial and/or security?

It’s hard to overstate the impact that high-density SSDs, especially Solidigm’s 15.36TB E1.S and 122TB E1.L drives, have had on what we can achieve at the edge.

Before, storage was a compromise. If you wanted a compact system, you had to accept limited capacity. Not anymore. Now, even our smallest PACE units — like the A211 — can handle massive mission datasets: multi-stream 4K ISR, full platform telemetry, and raw AI training data. And they can do it right where the data’s generated.

The NVMe performance is a huge enabler. We’re not waiting for data to move — we’re running real-time analytics, AI inference, and sensor fusion right there in the field. That’s crucial when you’re working in denied or degraded networks where the cloud just isn’t an option.

The efficiency is game-changing too — more capacity per watt, per millimetre, and per kilogram. That means smaller, lighter platforms that don’t sacrifice on performance or endurance — exactly what’s needed in vehicle, wearable, or airborne deployments.

From a reliability standpoint, Solidigm’s been rock solid. Across hundreds of deployed drives in some truly hostile environments, we’ve seen zero failures. That kind of trust is critical in military and security deployments — we don’t get second chances in the field.

Fewer drives mean fewer cables, faster builds, and simpler logistics. For our customers, that translates directly into reduced operational burden, easier maintenance, and faster time to deployment.

To put it simply: these drives are what let us bring data centre-class storage to the edge — and make it rugged, mobile, and mission-ready.
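To make the "fewer drives" point concrete, here is a minimal sketch of the arithmetic, using only the two capacities quoted in this answer. The 1 PB target and the function name `drives_needed` are illustrative assumptions, and the calculation counts raw capacity only, ignoring redundancy, over-provisioning, and filesystem overhead.

```python
import math

# Drive capacities quoted in the interview, in terabytes.
DRIVE_CAPACITY_TB = {"15.36TB E1.S": 15.36, "122TB E1.L": 122.0}

# Hypothetical target: 1 PB (1,000 TB) of raw capacity.
TARGET_TB = 1000.0


def drives_needed(target_tb: float, drive_tb: float) -> int:
    """Smallest whole number of drives whose raw capacity covers target_tb."""
    return math.ceil(target_tb / drive_tb)


for name, capacity_tb in DRIVE_CAPACITY_TB.items():
    print(f"{name}: {drives_needed(TARGET_TB, capacity_tb)} drives for {TARGET_TB:.0f} TB")
```

Under these assumptions, the same raw capacity takes 66 of the smaller drives but only 9 of the larger ones, which is where the savings in cabling, build time, and logistics come from.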

4) Can you elaborate on the role of high-capacity SSDs in enabling energy efficiency and sustainability within your data centers?

We don’t build traditional data centres — our philosophy is all about disaggregation and decentralisation. We’re taking compute out into the world, wherever the mission demands it. High-capacity SSDs are critical to making that model both sustainable and efficient.

For starters, SSDs give us a much better performance-per-watt ratio than spinning discs. That means more compute and more storage for less power — which is essential when you're relying on batteries or field generators. In remote, mobile deployments, every watt counts.

They also run cooler. That sounds simple, but it makes a huge difference in our sealed, rugged systems like PACE Frontier. We don’t have the luxury of big fans or data centre HVAC — SSDs let us keep things thermally efficient without adding complexity or extra energy overhead.

Another big factor is data movement — or rather, the lack of it. Because we can store and process petabytes locally on the edge, we’re not constantly pushing data back to central infrastructure. That dramatically reduces energy consumption, especially across constrained or expensive networks.

There’s also the sustainability of the platforms themselves. SSD durability helps extend hardware life. Combine that with our Evergreen program — where we upgrade and refresh existing systems instead of replacing them — and you’re looking at a far longer lifecycle. That means less waste, fewer shipments, and a smaller overall footprint.

It’s not just about energy efficiency — it’s about operational sustainability. We’re building systems that last longer, use less, and deliver more — wherever they’re deployed.

5) What opportunities do these advanced storage solutions unlock for your clients in terms of real-time analytics, data accessibility, and scalability?

QLC drives have been a game-changer for what our customers can achieve in the field. They’ve opened up entirely new mission profiles — especially for defense, security, and industrial applications — by enabling us to deliver massive storage and lightning-fast performance in incredibly compact, rugged formats.

We’re now running AI and analytics right on the edge, on systems the size of a lunchbox. Clients are deploying models for things like object detection, anomaly spotting, and pattern recognition — and doing it in real time, exactly where the data’s being generated. There’s no need to wait for upload or connectivity — the insight happens there and then.

That’s crucial in DIL environments (Disconnected, Intermittent, or Limited networks). With these QLC drives, the data stays local and accessible even when comms are down. It’s not just about speed; it’s about continuity and control. For our customers, that kind of autonomy is often mission-critical.

What’s more, QLC drive density means we can scale up without scaling out. Using Solidigm’s E1.S or E1.L modules, our customers can multiply their storage without changing the physical footprint — same chassis, same power draw, just more capability. That’s especially important when size and weight are tightly constrained.

This tech also helps us move faster. Build and provisioning times are down by up to 30%, which gets systems into the field quicker. In operational terms, that can be the difference between acting now and reacting too late.

And perhaps most exciting — we’re enabling entirely new types of missions. Cybersecurity at the edge, autonomous platforms, predictive maintenance using AI — these just weren’t feasible before. Now they are, thanks to the performance and resilience these QLC drives bring.

As we expand the PACE portfolio with the latest high-core-count processors, greater memory, and high-capacity Solidigm storage, we’re developing more powerful and mission-specific platforms for the far edge. Each one is true to our design-first ethos and built to deliver more compute, more capability, and more outcomes wherever the mission takes them.

Hypertec and Solidigm Dive Into Liquid-Cooled AI Compute

We sat down with David Lim, senior director of marketing for Hypertec, to learn more about infrastructure that is purpose-built for AI and HPC workloads.

Founded in 1984, Hypertec is an award-winning global technology provider offering a wide range of cutting-edge products and services with a strong emphasis on sustainability. Trusted by industry leaders, Hypertec serves clients in over 80 countries worldwide. The company has earned international recognition for its sustainability leadership and innovative manufacturing practices.

1. Hypertec has recently partnered with Solidigm to showcase immersion-born servers at Data Center World. Can you elaborate on the objectives of this collaboration and the specific solutions you're presenting?

At the heart of this collaboration is a pretty simple idea: the demands on data centers are growing fast, from AI training and real-time analytics to ultra-low latency use cases like streaming and remote surgery. So we teamed up with Solidigm to show how you can handle that kind of pressure with infrastructure that’s built for it.

We're bringing our immersion-born TRIDENT servers, which are designed from day one to run submerged in liquid for better cooling and higher density. Paired with Solidigm’s SSDs, we’re showing what it looks like when compute and data access move faster, run cooler, and scale smarter, whether it’s a massive AI cluster or compute deployed in a hospital or at a telecom edge site.

2. Immersion cooling is gaining traction in data centers, especially for HPC and AI workloads. How do Hypertec's immersion-born servers, integrated with Solidigm's SSD technology, enhance performance and scalability for these demanding applications?

The magic really happens when you combine immersion cooling with fast, reliable storage. Immersion lets us push performance limits. We’re running CPUs and GPUs at peak power without worrying about throttling or overheating. That’s critical for workloads that don’t stop, like training large AI models or running inference in real time at the edge.

Now, add Solidigm’s SSDs, and you’ve got the speed to feed those compute engines. Whether it's a 4K video being streamed from a CDN node, or a radiology scan being pulled up instantly in a hospital, fast I/O makes all the difference. The system doesn’t just run, it flies, and it keeps doing so consistently under load.

3. Given the rapid evolution of AI and high-performance computing, what challenges do data centers face today, and how does the Hypertec-Solidigm partnership address these issues?

Data centers are under immense pressure as AI and high-performance computing (HPC) workloads grow exponentially. These applications demand significant computational power, leading to increased energy consumption and heat generation. Traditional air-cooling methods are struggling to keep up, especially as power densities rise. For instance, average power densities have more than doubled in just two years, reaching 17 kilowatts (kW) per rack, and are expected to rise to as high as 30 kW by 2027.

Moreover, the massive data volumes processed by AI applications require storage solutions that can handle high throughput with low latency. Traditional storage systems often become bottlenecks, hindering overall system performance. Additionally, the increasing energy consumption raises concerns about the environmental impact of data centers. Projections suggest that data centers could consume up to 9% of the United States' electricity by 2030, more than twice their current usage.

To address these challenges, Hypertec and Solidigm have collaborated to develop integrated solutions. Immersion cooling’s more efficient heat dissipation allows higher power densities, enabling more compute resources in the same physical space while reducing reliance on traditional air-cooling systems. Solidigm's SSDs are designed for high throughput and low latency, addressing data bottlenecks in AI applications. Their high-capacity SSDs enable data centers to reduce the number of physical drives, decrease footprint, reduce power consumption, and simplify maintenance. Together, these technologies offer a scalable, energy-efficient, and high-performance infrastructure solution tailored for the demands of modern AI and HPC workloads.
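The link between rack power density and physical footprint can be sketched with simple arithmetic. The two densities below are the figures quoted in this answer (17 kW per rack today, up to 30 kW by 2027). The 1 MW IT load and the function name `racks_needed` are illustrative assumptions, not figures from Hypertec or Solidigm.

```python
import math

# Hypothetical total IT load to be housed, in kilowatts (1 MW).
IT_LOAD_KW = 1000.0

# Rack power densities quoted in the answer above, in kW per rack.
DENSITIES_KW_PER_RACK = (17.0, 30.0)


def racks_needed(it_load_kw: float, density_kw_per_rack: float) -> int:
    """Smallest whole number of racks that can host the given IT load."""
    return math.ceil(it_load_kw / density_kw_per_rack)


for density in DENSITIES_KW_PER_RACK:
    print(f"{density:.0f} kW/rack: {racks_needed(IT_LOAD_KW, density)} racks")
```

Under these assumptions, the same load needs 59 racks at 17 kW per rack but only 34 at 30 kW per rack, provided the cooling can actually remove that much heat from each rack.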

4. Sustainability is a critical concern in modern IT infrastructure. How does the integration of Solidigm's SSDs into Hypertec's solutions contribute to energy efficiency and a reduced environmental footprint?

It’s one thing to build fast infrastructure; it’s another to build smart, efficient infrastructure. That’s where we’re focused. Immersion cooling is incredibly efficient: by removing air cooling, we cut out a huge portion of the power bill. And Solidigm’s SSDs pull their weight too, with lower power draw and high capacity, so we can do more with fewer drives.

The result? You get the performance you’re looking for with lower carbon cost. And for customers in healthcare, finance, and telecom, who are all under pressure to hit sustainability goals, this isn’t a nice-to-have: it’s table stakes.

5. Looking ahead, how do Hypertec and Solidigm plan to evolve their partnership to meet the future demands of data centers, particularly concerning emerging technologies and workloads?

This is just the beginning. We’re already aligning today around what’s next: support for Gen5 and CXL, AI at the edge, and liquid-cooled storage, all the building blocks of future-ready infrastructure.

Think about what’s coming: AI models that run in real time on the edge of a 5G network. Robotic surgeries assisted by AI, where latency is measured in milliseconds. High-frequency trading platforms that need zero delay. These are not sci-fi anymore, they’re live today. And we’re building the compute backbone that makes them possible, scalable, and sustainable.

Peak:AIO Optimizes AI Infrastructure to Scale Enterprise Adoption

The tech world is evolving rapidly, and few advancements capture attention quite like the transformative shift in AI infrastructure. At the recent GTC conference, one such innovation that stood out was Peak:AIO’s approach to scaling AI technology. We caught up with Scott Shadley, director of leadership narrative and evangelist at Solidigm, and Roger Cummings, CEO and founder of Peak:AIO, to discuss Peak:AIO’s vision for more intelligent data placement and workload management.

So, what makes this shift significant? Last year, the focus was on simply throwing more hardware at the problem, with rows of GPUs and racks of servers as the go-to solution. However, as we learned from Roger, this approach is evolving. The conversation is no longer about just adding more hardware — it’s about optimizing and refining what’s already in place. This year, the GTC conference revealed a deeper, more solution-oriented approach, where innovation is driven by making the underlying technology not only simpler, but also more efficient for enterprises to adopt and scale.

One of Peak:AIO’s strategies is to focus on maximizing the efficiency of each individual node. By optimizing performance, space and energy efficiency, Peak:AIO is ensuring that each node in an AI infrastructure can deliver six times the performance while maintaining a smaller physical footprint. This efficiency is essential as AI continues to grow more complex and demanding. As Roger aptly pointed out, enterprises can’t afford to let performance bottlenecks slow down innovation, especially as the lifecycle of AI moves from data collection to training and, ultimately, to inference.

This approach doesn’t just apply to large-scale data centers. It’s also vital at the edge, where AI workloads are increasingly being processed closer to the data they need. The role of intelligent storage solutions like those Peak:AIO offers is pivotal in ensuring that data can move efficiently within these distributed environments. By creating dense, high-performance nodes in a 2U frame, Peak:AIO allows businesses to bring AI intelligence closer to the data. This is a game-changer for customers who need the ability to process more data without compromising on speed or efficiency.

One of the most exciting aspects of Peak:AIO’s forward-looking strategy is its focus on AI lifecycle optimization. AI workloads require intelligent data placement and provisioning to ensure that they are always delivered where and when they are needed most. By offering GPU-as-a-service capabilities and prioritizing performance optimization, Peak:AIO is putting businesses in a position to get more out of their existing infrastructure. The result is more cost-effective, efficient and intelligent AI solutions that are scalable as businesses grow and evolve.

So, what’s the TechArena take? As we look to the future, it’s clear that Peak:AIO is setting the stage for a new era in AI infrastructure. The company’s continued focus on solving performance bottlenecks, optimizing data placement and scaling AI infrastructure is poised to have a lasting impact on how enterprises implement and scale AI technology. For businesses seeking to push the boundaries of AI innovation, Peak:AIO’s solutions offer the intelligent infrastructure required to stay ahead in an increasingly competitive landscape.

For more information about Peak:AIO’s cutting-edge solutions, visit their website at www.peakaio.com or connect with Roger Cummings on LinkedIn. See the related video here.

TechArena Report: 2025 Storage Demands Call for a Change

As AI drives explosive data growth, next-gen SSDs deliver the speed, density, and efficiency to outpace HDDs—reshaping storage strategy for tomorrow’s data-centric data centers.

Insights on AI and Data Management from Intercontinental Exchange

In today’s rapidly advancing tech landscape, optimizing infrastructure to handle massive data sets has become more crucial than ever. One noteworthy story emerging from NVIDIA GTC is how Intercontinental Exchange (ICE) is tackling the growing complexity of data management, AI implementation and storage optimization across its vast network of financial exchanges, data services and mortgage technologies. We sat down with Anand Pradhan, the head of the AI Center of Excellence at ICE, and Roger Corell, senior director of leadership marketing at Solidigm, to discuss how ICE is using technology to stay ahead of the curve.

ICE, known for operating the New York Stock Exchange, processes over 700 billion transactions daily. With such massive volumes of data, building and maintaining an optimized, highly redundant infrastructure is essential. It’s not just about the network and servers — the flow of data through these systems makes storage a critical focus in ICE’s technology strategy.

Anand explained that ICE handles around 10 to 12 terabytes of data every single day with nanosecond granularity. This data, crucial for tracking financial trades, must be stored and accessed at lightning speeds. With millions of trades, real-time analysis and fraud prevention are key, which means both data retrieval and storage processes must be supercharged for efficiency.
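For a rough sense of scale, the daily volume Anand cites can be converted into an average sustained write rate. This is a back-of-the-envelope sketch only: it assumes decimal units (1 TB = 1,000,000 MB) and an even spread across 24 hours, which real trading traffic does not follow, so peak rates would be considerably higher. The function name is illustrative.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def average_mb_per_second(tb_per_day: float) -> float:
    """Average sustained rate in MB/s for a daily volume given in decimal TB."""
    return tb_per_day * 1_000_000 / SECONDS_PER_DAY


# Daily volumes quoted in the article: roughly 10 to 12 TB.
for tb_per_day in (10, 12):
    rate = average_mb_per_second(tb_per_day)
    print(f"{tb_per_day} TB/day is about {rate:.1f} MB/s on average")
```

Averaged over a full day, this works out to roughly 116 to 139 MB/s. That suggests the difficulty is less the average rate than bursts, fine-grained timestamps, and fast retrieval for analysis.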

One of the biggest challenges is the sheer volume of data and the input-output bottlenecks that arise when reading and writing to storage systems. To solve this, Anand’s team works closely with the InfraSolutions architecture team to fine-tune the storage infrastructure, ensuring that it scales easily, remains flexible and is resilient to failure. This involves rigorous testing and investment in systems that allow for fast, uninterrupted data access, while minimizing latency and maximizing performance.

But Anand’s insights extend beyond just infrastructure; he also highlighted how AI is shaping the company’s approach to data aggregation. At ICE, AI models are primarily used for processing unstructured data, such as images of real estate properties. The AI extracts valuable insights from these photos, identifying key artifacts, such as doors, kitchens or even the color of a room. With real estate photos pouring in from across the U.S., this AI-driven data processing is a massive undertaking. AI models are deployed at scale to make sense of the raw data, which is then converted into structured, usable information for the company’s real estate services.

As ICE’s AI adoption grows, so too does its need for an optimized storage solution. The storage systems of the future, Anand noted, need to accommodate millions of files — whether flat files, images or video data — and ensure they can be accessed quickly. As more and more workloads move to the AI space, fast access to large datasets and the ability to scale storage seamlessly are becoming essential. This is where storage systems that can horizontally scale, offer fast write speeds and support massive volumes of data will stand out.

Looking ahead, ICE’s evolving use of AI and machine learning is transforming its infrastructure and redefining what modern storage systems must deliver. What’s the TechArena take? With growing demands for speed, scale and real-time access, ICE’s journey offers a clear example of how AI is driving a fundamental shift across the industry. As adoption accelerates, organizations at the forefront of tech will need to rethink their approach to storage — those that do will be best positioned to gain a lasting competitive edge.

To learn more about ICE, visit www.ice.com, or find Anand and ICE on LinkedIn.

Dell’s Project Lightning: A Game-Changer for AI-Optimized Storage

At GTC, all things AI took center stage, and one thing that grabbed attendees’ attention was Dell’s latest innovation for AI computing, Project Lightning. We sat down with Scott Shadley, director of leadership narrative and evangelist at Solidigm, and Rob Hunsaker, director of engineering technologies at Dell, for a conversation that offered valuable insights into how Dell’s storage solutions are evolving to meet the growing demands of the AI market.

For those following AI developments, it’s clear that the performance demands of AI workloads are shifting. Traditional file systems are no longer sufficient to handle the immense data volumes and speed requirements of modern AI applications. Enter Project Lightning, Dell’s next-generation parallel file system, designed from the ground up to be the fastest solution in its market segment.

What sets Project Lightning apart is its ability to address extreme performance needs in AI environments. As Rob explained, the project was announced last year at Dell Technologies World and is specifically optimized for AI use cases. This new file system offers unmatched speed and efficiency, which is essential as AI workloads continue to grow in complexity and scale. By leveraging Dell’s own intellectual property, Project Lightning represents a significant leap forward in storage technology, making it a game-changer for industries relying on AI.

This new addition to the PowerScale family of storage solutions isn’t just about speed. It’s about ensuring that storage solutions can scale with the growing demands of AI. Dell’s approach is rooted in the idea that data is the most critical asset in any enterprise, and having the right tools to manage and store that data is key to enabling AI’s potential. As Rob highlighted, Dell is working to ensure that all of its storage products are prepared for the future, with a strong focus on making data easily accessible and manageable.

One of the highlights of the conversation was Dell’s broader vision for data storage. Rather than simply providing individual storage products, Dell’s focus is on offering complete solutions that address the full spectrum of customer needs. The Dell Data Lakehouse, for example, is a powerful tool designed to unify storage, PowerEdge and software features into a comprehensive solution. This platform is designed to support AI applications by providing a reliable and scalable data management system that can handle the vast amounts of unstructured data AI processes require.

Throughout the discussion, it became clear that Dell’s role in the AI ecosystem goes beyond just providing storage solutions — it’s about creating a seamless environment where data can be used effectively to drive innovation. As Rob pointed out, the enterprise sector is just beginning to fully embrace AI, and Dell is committed to helping them navigate that transition. By ensuring that storage products can meet the needs of AI applications, Dell is positioning itself as an essential player in the future of enterprise AI.

In an industry where storage often takes a backseat to more glamorous technologies like GPUs and inference engines, it was refreshing to hear a conversation that highlighted the importance of reliable, high-performance storage. After all, as Rob noted, if the storage fails, the data is lost, and with it, the entire AI workload.

The partnership between Dell and Solidigm, which is known for its high-capacity quad-level cell (QLC) drives, further demonstrates the importance of resilient, high-performance storage. The TechArena take? By working together, Dell and Solidigm are able to provide a robust storage infrastructure capable of supporting the intense demands of AI environments, ensuring that customers’ data is safe and accessible.

To learn more about Dell’s cutting-edge storage solutions and their ongoing advancements in AI, visit the Dell InfoHub (infohub.delltechnologies.com) or check out their Unstructured Data Quick Tips blog for the latest updates.

OVHcloud Innovates Cloud Infrastructure with AI, Sustainable Tech

At this year’s CloudFest, we caught up with Chris Ward, senior sales account manager at Solidigm, and Guillaume Gojard, product director at OVHcloud, to dive deeper into OVHcloud’s unique infrastructure strategy, its evolving role in AI and its long-standing commitment to sustainable innovation. Amid the cloud announcements and industry buzz, OVHcloud stood out for how it’s quietly reshaping the cloud landscape — one custom-built server and water-cooled data center at a time.

At a time when cloud providers are often defined by how they manage their hyperscale partnerships, OVHcloud stands apart by owning its full value chain. The company builds its own servers at facilities in both Europe and North America, operates more than 40 data centers worldwide and manages its own global fiber network. This level of integration isn’t just about control — it enables OVHcloud to deliver a price-performance ratio that resonates, particularly in today’s AI-hungry world.

And AI is everywhere. “It’s in every mouth,” Guillaume said, capturing the sentiment that defined the expo floor this year. For OVHcloud, this isn’t about scrambling to catch up. It’s about expanding what they started years ago. The company has been offering GPU-based compute since 2017, and it recently began rolling out ready-to-use large language models (LLMs) and AI endpoints — giving developers a practical starting point to integrate generative models into their stack. OVHcloud is pairing its AI push with an upcoming data platform designed to streamline how customers manage and leverage data inside complex workflows.

But the conversation didn’t stop at AI. Another topic discussed was OVHcloud’s long-standing use of water cooling, which is now getting mainstream attention. “And for example, water cooling with one glass of water, we can cool down one server for 10 hours of use,” Guillaume explained, noting that the company’s approach uses seven times less water than the industry average. That’s not a gimmick — it’s industrial innovation rooted in sustainability. It’s also a reminder that OVHcloud isn’t jumping on trends — they’re often ahead of them.

A large part of that innovation comes through partnerships. In this case, OVHcloud’s collaboration with Solidigm has allowed the company to push high-performance storage capabilities in its high-grade servers. “Blazing fast data access on our NVMe storage capacity, which is really great, because this is what the market demands,” Guillaume explained. For demanding use cases like real-time analytics, that speed translates directly into customer value. More importantly, the partnership gives OVHcloud the flexibility to respond to shifting demands — something Guillaume said has been smooth and responsive from day one.

Looking ahead, OVHcloud’s eye is on quantum computing. OVHcloud is supporting startups and even has a quantum computer. The company is also quietly building a quantum-friendly cloud platform, positioning itself to support ecosystems that will, like AI, demand entirely new infrastructure paradigms.

The TechArena take? In an industry full of noise, OVHcloud’s approach is refreshingly holistic. From LLM toolkits to next-gen cooling to the quantum horizon, it’s not just about where things are now — it’s about where they’re headed.

M2M and Solidigm Lead Industry Trends in AI and Cloud Computing

At CloudFest 2025, Khilna Chandaria, operations manager at Solidigm, caught up with Charlie Hacker, sales and marketing director at M2M Direct, and TechArena to chat about the latest trends in cloud computing and the innovations that are reshaping the industry. The conversation highlighted the growing importance of AI, the rising demand for high-capacity storage and the key attributes businesses are seeking in their cloud solutions. As cloud computing continues to evolve, M2M’s consultative distribution approach is helping organizations adapt to these changes, particularly through their collaboration with Solidigm.

The shift toward AI-driven cloud computing was one of the key topics of discussion.

“Cloud computing is shifting to an AI-based model, both for private and public clouds,” Charlie shared. This shift is reshaping how cloud environments are used, enabling businesses to handle larger amounts of data with greater efficiency and scalability.

As cloud adoption accelerates, businesses are looking for solutions that offer flexibility, scalability and competitive pricing. “Flexibility and price, price or flexibility, either or,” Charlie explained, emphasizing the trade-offs many organizations are grappling with when choosing their cloud partners. But these aren’t the only important factors. Scalability is also essential, as businesses must be able to expand their storage and processing capabilities as their needs grow.

Security is another major consideration in today’s cloud landscape. With more data being transferred across private and public clouds, keeping that data secure is a top priority. As businesses increasingly rely on cloud solutions to store and process sensitive information, they must prioritize robust security measures that can protect against cyber threats, ensuring both compliance and the integrity of their data.

One of the most pressing needs in cloud computing today is high-capacity data storage. As AI and other data-intensive technologies continue to advance, the demand for larger, faster storage solutions is growing at a rapid pace. “Size, size, size, size,” Charlie remarked, stressing how critical storage capacity has become in the face of AI’s massive data requirements. Solidigm’s quad-level cell (QLC) products, offering up to 122 terabytes of capacity, are meeting this demand head-on. With such large-scale storage solutions, businesses can manage and process enormous datasets efficiently, without sacrificing speed or performance.

M2M’s role in this evolving landscape is to provide not just products, but consultative services that help businesses navigate the complexities of cloud migrations and data center upgrades. “We’re like a sales arm for Solidigm, and Solidigm is a sales arm for us,” Charlie explained.

What’s the TechArena take? Collaborations like this one between M2M and Solidigm ensure that cloud infrastructure providers can deliver the right products at the right time, supplying on-demand solutions that are essential for businesses undergoing significant infrastructure changes.

For those interested in staying updated on the latest advancements in cloud computing and AI, Charlie encouraged viewers to follow M2M on LinkedIn and visit their website (m2m-enterprise.com) for the latest product offerings and updates.

Oracle Unlocks Telecom’s Future With AI-Driven Network Automation

At TechArena, we’ve been closely following the transformative potential of AI in various industries, and after recently sitting down for a conversation with Solidigm’s Jeniece Wnorowski and Oracle’s Andrew De La Torre, one thing is clear: AI is revolutionizing how the telecommunications industry operates. From network management to customer service, AI is enabling telcos to optimize their operations and pave the way for the future of digital connectivity.

During our chat, Andrew explained how Oracle is at the forefront of AI-driven network automation, particularly in integrating AI with telecom infrastructure. As 5G networks continue to expand and evolve, the need for more efficient, scalable and autonomous systems becomes even more apparent.

The Shift Toward Autonomous Networks

Telcos are undergoing a massive migration from traditional telecom networks to autonomous ones, using the move to 5G to fuel new capabilities that speed and simplify network management. Andrew described how the integration of AI Ops is essential to unlocking the full potential of these self-healing, self-optimizing networks. While it may sound like something from a sci-fi novel, the idea of a network that can monitor, troubleshoot and repair itself with minimal human intervention is becoming a reality.

This vision of an autonomous network is not just about improving efficiency; it’s about enabling telecom companies to deliver services more nimbly and improve service reliability at a fraction of the cost. Oracle’s focus on integrating AI capabilities into every layer of the telco stack — from cloud native infrastructure to front office applications — demonstrates the company’s commitment to transforming the industry.

AI Ops: A New Framework for Telecom Transformation

So, what is AI Ops? At its root, it is a framework designed to automate telecom network functions, which is essential for handling the complexity of 5G networks while minimizing manual intervention. Andrew explained that the key to building autonomous networks is the integration of cloud-native applications, data aggregation and AI analytics. By combining these elements, Oracle helps telecom providers to make data-driven decisions that improve performance and reduce operational costs.

For example, Oracle’s AI models can analyze vast amounts of network data to identify issues before they become critical. This predictive capability allows for proactive troubleshooting and service optimization, which ultimately leads to improved service uptime. With the rise of 5G, increased use of edge computing, growth in IoT and the resultant increased demand for data, this type of automation is becoming less of a luxury, and more of a necessity.
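To make the idea of catching issues before they become critical a little more concrete, here is a minimal sketch of one simple, generic technique: a rolling z-score over a stream of network measurements. This is not Oracle’s model or API. The function name `flag_anomalies`, the window size, the threshold and the latency readings are all hypothetical, chosen purely for illustration.

```python
from collections import deque
from statistics import mean, stdev


def flag_anomalies(samples, window=5, threshold=3.0):
    """Return indices of samples that deviate sharply from the recent baseline.

    Illustrative rolling z-score only; not Oracle's method.
    """
    recent = deque(maxlen=window)  # sliding window of recent, normal-looking samples
    flagged = []
    for i, value in enumerate(samples):
        if len(recent) == window:
            baseline = mean(recent)
            spread = stdev(recent)
            # Skip the check when the window is perfectly flat (spread of zero).
            if spread > 0 and abs(value - baseline) / spread > threshold:
                flagged.append(i)
                continue  # keep the outlier out of the baseline
        recent.append(value)
    return flagged


# Hypothetical latency readings in milliseconds, with one sharp jump at index 6.
latency_ms = [20.0, 21.0, 19.5, 20.5, 20.2, 20.1, 48.0, 20.3]
print(flag_anomalies(latency_ms))
```

In this sketch, only the sharp jump in the sample data is flagged. A production system would work across many correlated signals and far richer models, but the principle of comparing live data against a learned baseline is the same.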

Generative AI vs. Traditional Machine Learning: What’s in Play for Telco?

One of the most insightful moments of the conversation came when Andrew addressed the role of different types of AI technologies in telco. While generative AI is making headlines, he emphasized that AI in telco is a diverse toolkit, with applications ranging from robotic process automation to advanced machine learning techniques.

Andrew pointed out that the telco industry is unique in its complexity, which is why specialized AI models tailored to telco use cases are so essential. AI’s ability to handle large-scale, repetitive tasks — such as network monitoring and optimization — while also supporting more advanced functions, such as natural language processing, is key to driving digital transformation.

The Human Element in AI-Driven Network Automation

Of course, the integration of AI into telco networks doesn’t come without its challenges. Andrew noted that the industry’s slow adoption of automation is partly due to deeply ingrained legacy infrastructure and attitudes. Networks are essential to national economies, and the cautious pace of change is understandable. However, Oracle is actively helping operators to navigate this transition.

Through tools such as automated test repositories and software solutions, Oracle is enabling telco providers to adopt a cloud-native mindset and deploy DevOps-style operations. The human element remains essential in this journey — operators need the right training and support to manage these new AI-driven systems effectively.

The Road Ahead: A More Connected, Autonomous World

In the grand scheme of things, AI-driven network automation is setting the stage for a future where telcos can deliver faster, more reliable and more cost-effective services. By embracing AI Ops, Oracle is helping its clients build the autonomous networks of tomorrow: networks that promise to be smarter, faster and more responsive to the needs of both consumers and businesses.

At TechArena, we’re always on the lookout for stories that highlight innovation and transformation in the tech industry. We’re hot on the trail of AI Ops and its control of the IT infrastructure that will accelerate broader application of AI. The insights Andrew shared on Oracle’s innovation demonstrate that AI Ops has survived the trough of disillusionment and is moving into real-world deployment. The capabilities at stake will make telcos more nimble and give them a potential edge for long-term financial prosperity. We’re delighted to see it, as fast and ubiquitous networks are at the core of broad technology innovation.

Check out the full podcast.

To learn more about Oracle, visit their website at oracle.com.

Solidigm Spotlights AI Storage and Liquid Cooling at OCP

From breakthrough 122TB SSDs to the industry’s first liquid-cooled storage, Solidigm’s Avi Shetty unpacks how storage is powering AI workloads from hyperscale to neo-cloud.

Supermicro and Solidigm: Revolutionizing Storage for AI Workloads

NVIDIA’s GTC is always a hotspot for innovation. This year, two industry leaders, Supermicro and Solidigm, brought cutting-edge solutions designed for the next wave of AI workloads for cloud service providers (CSPs) and hyperscalers. From high-density storage to powerful cooling solutions, their collaboration is shaping the future of data centers. Wendell Wenjen, director of storage market development at Supermicro, and Shirish Bhargava, of Solidigm’s global field sales, sat down with TechArena to discuss how their partnership is addressing the growing demands of AI and what lies ahead for next-generation data center infrastructure.

Supermicro, known for pushing the envelope in server technology, showcased its latest systems for powering AI applications. Central to the presentation was the NVIDIA HGX B300 NVL16, featuring the powerful NVIDIA Blackwell Ultra GPUs, Supermicro’s most advanced GPU solutions to date. These high-performance servers were designed with AI in mind, supporting the growing demand for deep learning and large-scale data processing. Supermicro also introduced a range of storage solutions optimized for AI workloads.

Another exciting announcement was the launch of a new 1U Petascale storage system, equipped with the NVIDIA Grace CPU. This dual-die, high-performance processor delivers impressive power efficiency, ideal for the ever-growing demands of AI. For those seeking even more storage efficiency, Supermicro unveiled a JBOF system powered by NVIDIA’s BlueField-3 DPU, which supports demanding storage workloads with lower power usage.

However, it wasn’t just Supermicro’s hardware that drew attention at GTC. Solidigm, a leader in enterprise solid-state drive (SSD) solutions, brought its own innovative technology to the table. The Solidigm D5 P5336 SSD, a 122 TB powerhouse, was also on display, offering extreme storage density that is essential for AI training and large-scale data management in all form factors. This SSD is part of Solidigm’s broader portfolio designed for AI, providing the kind of performance and capacity needed to handle massive datasets with low latency.

But what makes this collaboration truly stand out is the shared commitment to pushing the boundaries of storage capacity and efficiency. Supermicro and Solidigm’s joint focus on reducing data center footprint while maximizing storage capacity is a game-changer. By using Solidigm’s 122 TB quad-level cell (QLC) SSDs, Supermicro is able to pack three petabytes of storage into a 2U server, a dramatic leap from the previous one-petabyte systems. This compact, high-capacity solution not only optimizes data center space, but also reduces power consumption, making it a win-win for enterprises and service providers alike.
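The density claim is easy to sanity-check with back-of-the-envelope arithmetic. The sketch below uses the 122 TB per-drive figure and the three-petabyte target cited above. The article does not state how many drives the 2U server holds, so the 24-drive figure is a hypothetical assumption, as are the function names and the use of decimal units (1 PB = 1,000 TB).

```python
import math

DRIVE_TB = 122     # per-drive capacity cited in the article
TARGET_PB = 3      # capacity per 2U server cited in the article
TB_PER_PB = 1000   # assumption: decimal units, as drive capacities are usually quoted


def drives_needed(target_pb, drive_tb):
    """Smallest whole number of drives whose raw capacity meets the target."""
    return math.ceil(target_pb * TB_PER_PB / drive_tb)


def raw_capacity_pb(drive_count, drive_tb):
    """Raw (pre-RAID, pre-filesystem) capacity in petabytes."""
    return drive_count * drive_tb / TB_PER_PB


# Drives required to reach a full 3 PB of raw capacity.
print(drives_needed(TARGET_PB, DRIVE_TB))

# Raw capacity of a hypothetical 24-bay configuration (bay count is an assumption).
print(raw_capacity_pb(24, DRIVE_TB))
```

Under these assumptions, a 24-drive configuration lands just under three petabytes of raw capacity, which is consistent with the rounded figure quoted here. Usable capacity after data protection and formatting overhead would be lower.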

In an industry where cooling and power efficiency are critical, Supermicro’s approach to liquid cooling for high-performance GPUs stood out. Some of today’s most powerful GPUs can consume over 1,000 watts, making liquid cooling a necessity for keeping systems running at optimal temperatures. Supermicro’s comprehensive liquid cooling solution — ranging from cold plates for CPUs and GPUs to large outdoor cooling towers — ensures that even the most demanding AI systems stay cool, efficient and reliable.

So, what’s the TechArena take? This partnership between Supermicro and Solidigm is a testament to the importance of collaboration in driving innovation. Both companies have long been at the forefront of their respective fields, and their combined efforts are delivering practical, high-performance solutions to address the challenges of modern AI workloads.

For those looking to learn more, Supermicro offers an abundance of resources on their website (supermicro.com) and social media channels (X, LinkedIn and YouTube).

Solidigm & Supermicro Bring AI Storage to New Heights

From 122TB QLC SSDs to rack-scale liquid cooling, Solidigm and Supermicro are redefining high-density, power-efficient AI infrastructure—scaling storage to 3PB in just 2U of rack space.

Optimizing AI Compute Value with OVHcloud’s Custom Infrastructure

At CloudFest 2025, OVHcloud shared insights on AI’s expanding role in cloud infrastructure, the benefits of custom-built servers, and how their global network is optimizing performance and efficiency.