VAST Data’s $30B Moment Comes as Its AI Architecture Bet Pays Off

VAST Data announced today that it has closed a Series F financing round of approximately $1 billion in primary and secondary capital. The round values the AI operating system company at $30 billion, more than triple its $9.1 billion Series E valuation from late 2023. Drive Capital led the round, with Access Industries as co-lead and participation from new and existing investors such as Fidelity Management & Research Company, NEA, and NVIDIA.

This headline number is backed by strong reported financials. VAST has surpassed $4 billion in cumulative bookings and ended its most recent fiscal year with more than $500 million in committed annual recurring revenue, alongside positive operating margin and free cash flow.

In a blog post accompanying the announcement, VAST Data Co-Founder Jeff Denworth said, “What excites investors about VAST is our unprecedented mix of growth and profitability, demonstrating to the world that a radically disruptive product and focused team can break fundamental business tradeoffs.” The company’s Rule of X score (calculated as its revenue growth rate plus its last-twelve-months free cash flow margin) is 228%, more than five times the 40% benchmark typically considered healthy.
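Written out, the definition given above and the comparison to the 40% benchmark look like this. The symbols $g_{\text{rev}}$ (revenue growth rate) and $m_{\text{FCF, LTM}}$ (trailing free cash flow margin) are our notation; the article does not break the 228% into its two components.

```latex
\text{Score} = g_{\text{rev}} + m_{\text{FCF, LTM}}, \qquad
\frac{228\%}{40\%} = 5.7
```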

So why take the investment? Denworth cites two reasons:

- The investment is a “signal to the world” of the strength of worldwide adoption of VAST’s AI Operating System.

- The capital will support the company’s scaling ambitions and ability to make investments that can accelerate market adoption.

The Architecture Bet Is Paying Off

VAST’s business story traces back to a technical decision made in 2016: designing a distributed systems architecture known as DASE (for disaggregated shared everything) from scratch, specifically for the parallelism demands of deep learning. From that foundation, VAST has created a full-stack computing platform for deep learning, including the VAST DataStore, DataBase, DataEngine, and DataSpace. Earlier this year, the company announced new capabilities for the agentic AI era to build a “thinking machine,” or a system that governs, evaluates, and improves on AI pipelines automatically. VAST reports that today the AI factories that it supports have over 1 billion CUDA cores or over 1 million tensor cores, all accessing a single VAST data platform.

The TechArena Take

As Denworth explicitly said, this funding round is a market signal. The company’s valuation, its Rule of X score, and its underlying financials are proof that the company’s vision can translate into reality. The DASE architecture bet, made in a seemingly distant past when Sam Altman and Elon Musk were working side-by-side at OpenAI, is now paying off 10 years on as enterprises discover that legacy data infrastructure simply cannot keep pace with agentic AI demands. The company seems to have arrived at this moment with exactly the right product.

The open question is what VAST Data’s competitors can offer. As Denworth noted in his blog, the company occupies an unusual position: while it competes with companies up and down the data stack, it has no direct competitor other than hyperscalers, which combine many separate services to build an equivalent of VAST’s unified platform. For now, that position is difficult to replicate quickly. Unified architectures are not assembled overnight, and VAST’s decade-long head start shows in both the product and the financials. The gap may not last forever, but VAST’s financial strength gives it the runway to keep widening it.

Read more from VAST in their press release.

Allyson Klein on Building Market Advantage in the AI Race

The tech landscape is accelerating faster than traditional consulting was designed to handle. As trillions of dollars flood into AI infrastructure and organizations race to define their positions in a shifting ecosystem, the distance between strategy and execution has become one of the costliest gaps in business.

With the recent launch of the TechArena Advisory, we are featuring a series of 5 Fast Facts Q&As to highlight the operators bringing C-suite-grade intelligence to this new function. We recently sat down with our Founder and CEO, Allyson Klein, who built TechArena on a conviction that has only sharpened with time: in a world redefining what intelligence means, human connection still matters. In this edition of our Q&A series, she discusses the collapse of traditional consulting models, the most acute pressure points facing business leaders right now, and what it means to drive disproportionate growth for clients.

Q1: What has changed in the tech landscape that made the Advisory a priority for you?

The pace changed. What used to be multi-year design cycles and simpler paths to market has given way to a frenetic pace of innovation to serve the demand for AI. Organizations are racing to deploy trillions of dollars of capital equipment, making consequential decisions faster than at any point in history.

The pressure revealed that traditional consultancy models built on outside-in analysis were not designed for this moment. Outside-in frameworks with no clear integration path simply do not hold up when the stakes are this high and the clock is moving this fast. The Advisory practice is a direct response to that gap. We bring operators who have lived in these environments, made these calls, and steered the foundational companies that architected the modern tech stack.

Q2: What does your experience bring to this moment?

I spent my career at the friction point where plans meet P&L, in some of the most demanding environments in tech. I drove data center and edge marketing at Intel and led marketing and communications at Micron. Both roles put me at the table where decisions were made, where go-to-market battles were won or lost, and where the story you told about your technology determined not only your product’s success but also the industry’s trajectory.

When I founded TechArena in 2022, I carried all of that forward. We have collaborated with over 100 leading technology companies, helping them claim market advantage in a landscape that was not waiting for anyone to catch up. Our work crystallized my thinking about what businesses actually need right now: operating experience from someone who has sat in your seat and can help you move forward with confidence.

Q3: What challenges are business leaders facing that align with your practice areas?

The pressure is simultaneous and everywhere. Silicon design cycles are accelerating, and data center buildouts that once took five years are happening in 18 months. Leaders are being asked to get product strategy, competitive positioning, go-to-market, and financial governance right, all at once.

The executives I talk to are not short on ambition or know-how. They are short on the right kind of counsel, someone who has navigated this specific terrain at scale and can step in immediately, assess the situation, and turn potential into real business value. That kind of advisor changes the equation in ways that static analysis cannot replicate.

Q4: Are there key areas that you see most pertinent right now?

Go-to-market is probably the most acute pain point. Companies are launching products into markets that are still being defined, competing for mindshare with dozens of well-funded players, trying to build routes to market that did not exist two years ago. Getting that right matters enormously for where a company lands in the ecosystem hierarchy.

Competitive narrative is close behind. In a landscape where technical differentiation is hard to sustain, the story you tell about your position in the value chain can be the deciding factor in whether customers, partners, and investors align behind you.

Organizational readiness is moving up fast on the list too. Companies that scaled aggressively in recent years are now restructuring for AI-native operations. Leadership development and cultural transformation are real operational challenges, not soft-skills exercises, and that is an area where our advisors bring a depth of experience that is hard to find anywhere else.

Q5: What are the outcomes you're targeting to drive?

The advisors we have brought together have grown multi-billion dollar businesses and led organizations through the defining technology inflections of the last two decades. I can’t wait to see the impact that these proven operators can deliver to drive disproportionate growth for our clients.

Early results are already proving the model. For example, Axelera AI came to us with a specific market opportunity. The Advisory team researched their position, helped frame the opportunity clearly, and delivered an action plan they could execute. That is exactly what we are built to deliver, and it is the standard every engagement will be measured against.

If the thought of accelerating your team’s ambitions resonates with you, come check us out.

Innovaccer Reframes AI Governance as Healthcare’s Accelerator

Back in 2016, the “godfather of AI” Geoffrey Hinton made a bold prediction: stop training radiologists immediately, because deep learning would render them obsolete within five years. A decade on, that prediction has not come to pass. Radiologists remain in high demand, a reminder of how much accuracy and safety matter in medicine and of the unique challenges of adopting AI in this field.

My recent conversation with Tapan Shah, AI Architect at Innovaccer and Agentic AI Work Group Lead at the Coalition for Health AI (CHAI), joined by our Data Insights co-host Jeniece Wnorowski from Solidigm, shed light on some of the challenges of creating scalable AI systems for healthcare. Tapan’s role involves building AI systems and agents that operate in real healthcare environments and in enterprise systems that affect patient and provider outcomes.

In Tapan’s view, the hardest problem in healthcare AI is not creating the right models or algorithms but designing the surrounding system from the ground up.

The Gap Between Pilot and Production

Tapan opened with an example that cuts to the heart of the challenge. An AI clinical note generator built for a cardiology practice may work great in a pilot and then stumble when deployed for other disciplines like oncology or orthopedics, or even a different practice running a different electronic health record (EHR) system. Even when the underlying model remains the same, the results can be vastly different based on the medical discipline.

“Scaling AI into enterprise healthcare is less of an AI problem and more of a system design problem,” Tapan said. “The real problem here is whether in real-world situations, an AI agent being developed has the right level of access and the capability to create sufficiently transparent and explainable recommendations that even a skeptical clinician can accept.”

From Building Models to Agents

In the past decade, the healthcare AI industry has undergone a seismic shift from building predictive models to building agents. Historically, validating an AI system was relatively straightforward: train a model, measure accuracy on a holdout set, and deploy. This has been successfully validated in cases like early tumor detection, says Tapan.

Agents are a fundamentally different beast. They pull data from multiple sources, invoke various tools, and combine these inputs to perform complex tasks. Often there is no single source of truth, and clinicians can interpret the same data differently. Data can be missing, or certain users may lack access to certain tools or software. In this setting, the challenge becomes ensuring that the agent behaves safely and predictably, even in situations it has not encountered before.

And because sensitive data is being handled, safeguards need to be built into the system from the start. For instance, a cardiology clinical note generator should not have access to a patient’s psychiatric records.
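One common way to enforce a safeguard like keeping a cardiology note generator away from psychiatric records is a deny-by-default scope attached to each agent and applied at the data-access layer. The sketch below is our illustration, not Innovaccer’s implementation; the record categories, `AgentScope`, and `visible_records` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    patient_id: str
    category: str  # hypothetical labels such as "cardiology" or "psychiatry"
    text: str


@dataclass(frozen=True)
class AgentScope:
    agent_name: str
    allowed_categories: frozenset[str]


def visible_records(scope: AgentScope, records: list[Record], patient_id: str) -> list[Record]:
    """Deny by default: return only the patient's records in categories granted to this agent."""
    return [
        r for r in records
        if r.patient_id == patient_id and r.category in scope.allowed_categories
    ]


# Hypothetical scope for a cardiology note generator.
cardio_notes = AgentScope("cardiology-note-generator", frozenset({"cardiology", "labs", "medications"}))

chart = [
    Record("p1", "cardiology", "Echo report"),
    Record("p1", "psychiatry", "Therapy session notes"),
    Record("p1", "labs", "Troponin result"),
]

for r in visible_records(cardio_notes, chart, "p1"):
    print(r.category)  # psychiatry is never returned to this agent
```

Filtering before data reaches the model, rather than instructing the model to ignore out-of-scope records, means no prompt can talk the agent into reading something it was never handed.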

Governance as Enablement, not Constraint

When the topic turned to governance, Tapan pushed back against the assumption that governance is primarily about controls and restrictions.

“AI governance is not a constraint, it’s enablement,” he said, comparing a good governance framework to a constitution: it can be used as a binding document, or it can serve as the foundation for doing genuinely useful things, based on how you build and use it.

He illustrated this with a scenario in which an authorization agent’s auto-approval rate jumped from 70% to 90%. Effective governance would mean detecting this shift, reviewing the agent’s complete decision graph, and identifying the root cause. A successful governance model would make it possible to reach that decision in minutes rather than weeks.
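The first step in that scenario, detecting the shift, can be sketched as a simple statistical check, assuming the agent’s decisions are counted over a historical baseline window and a recent window. The two-proportion z-test is our stand-in choice, not a method Tapan described; the function name, the counts, and the alert threshold of 3 are hypothetical.

```python
import math


def approval_rate_shift(baseline_approved: int, baseline_total: int,
                        recent_approved: int, recent_total: int,
                        z_threshold: float = 3.0) -> tuple[float, bool]:
    """Two-proportion z-test: has the auto-approval rate moved more than chance would explain?"""
    p_baseline = baseline_approved / baseline_total
    p_recent = recent_approved / recent_total
    # Pooled rate under the null hypothesis that nothing changed.
    pooled = (baseline_approved + recent_approved) / (baseline_total + recent_total)
    se = math.sqrt(pooled * (1 - pooled) * (1 / baseline_total + 1 / recent_total))
    z = (p_recent - p_baseline) / se
    return z, abs(z) > z_threshold


# Hypothetical counts: 70% auto-approved historically, 90% in the latest window.
z, alert = approval_rate_shift(700, 1000, 180, 200)
print(f"z = {z:.1f}, alert = {alert}")  # z = 5.8, alert = True
```

An alert like this only opens the investigation; reviewing the decision graph and finding the root cause is still the human-led part of the workflow.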

Clinical Consequences

The thorniest issue in the conversation was accountability, especially as AI agents take on decisions with both clinical and administrative consequences. Tapan was candid: there is no perfect solution yet. Legal frameworks are still catching up to the question of what it means for an AI agent to make a consequential decision.

Innovaccer’s current approach is to make sure that there is comprehensive logging of every AI decision, granular access control for agents, and human oversight with the ability to override. For all clinical use cases, and many administrative ones, a human remains in the loop, able to review and reverse any AI-generated decision. As legal and governance frameworks evolve, these foundations will provide the structure to adapt.
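Two of those foundations, logging every AI decision and letting a human review and reverse it, can be sketched as a small audit log. This is our illustration only; `Decision`, `DecisionLog`, the status names, and the sample values are hypothetical and not Innovaccer’s API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Decision:
    decision_id: str
    agent: str
    action: str
    rationale: str
    status: str = "pending_review"
    # Append-only trail of (UTC timestamp, actor, event) tuples.
    history: list[tuple[str, str, str]] = field(default_factory=list)


class DecisionLog:
    """Records every agent decision and every human review; history entries are never removed."""

    def __init__(self) -> None:
        self._decisions: dict[str, Decision] = {}

    @staticmethod
    def _now() -> str:
        return datetime.now(timezone.utc).isoformat()

    def record(self, decision: Decision) -> None:
        decision.history.append((self._now(), decision.agent, f"proposed: {decision.action}"))
        self._decisions[decision.decision_id] = decision

    def review(self, decision_id: str, reviewer: str, approve: bool, note: str = "") -> Decision:
        """A human confirms or reverses the agent's decision; both outcomes are logged."""
        d = self._decisions[decision_id]
        d.status = "approved" if approve else "overridden"
        event = f"{d.status}: {note}" if note else d.status
        d.history.append((self._now(), reviewer, event))
        return d


log = DecisionLog()
log.record(Decision("d-001", "prior-auth-agent", "approve imaging request", "criteria matched"))
d = log.review("d-001", "reviewer-1", approve=False, note="missing clinical history")
print(d.status)  # overridden
for _, actor, event in d.history:
    print(actor, event)
```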

Long-term Value

When asked about measuring long-term strategic value, Tapan pointed to two holy grails: improved patient and provider outcomes. Treatment authorizations are a good example of where AI intervention can help, he explained.

“There are cases where it can take upwards of two to three weeks for a prior authorization for a procedure, that leads to delay in care,” he said. “If we can bring that down to, let’s say, a day, less than a day, even a few minutes, it actually impacts patient outcomes and cost of care.”

On the provider side, freeing clinicians from administrative burdens allows them to spend more of their time caring for patients, reducing burnout and stress.

And because healthcare AI serves multiple stakeholders including operations, compliance and clinical teams, a scalable solution would need to be designed with solid system design principles, with observability, tracing, and monitoring built in right from the very beginning.

The TechArena Take

Innovaccer’s approach demonstrates the challenges in building a successful system that can work across multiple specialties in real-life hospital scenarios. As integrating AI in healthcare has shifted from building models to building agents, the hardest problem to solve isn’t technical performance, but rather ensuring safety, accountability, and governance.

Tapan’s framing that governance should be treated as enablement, not constraint, feels like an important mindset shift for leaders trying to move beyond the pilot stage. By helping to reduce authorization times and administrative burden, AI can help provide long-term benefits such as better patient care and provider experience.

If you’re interested in learning more, check out the full podcast. In addition, the Department of Health and Human Services recently published updated guidelines for AI, and the CHAI and Innovaccer websites provide useful guidance on the use of agentic AI in healthcare settings.

MLPerf Inference 6.0 Sets New Records Across an Expanded Suite

Last week, MLCommons released results for MLPerf Inference v6.0, setting new records as the benchmarking suite expands to keep pace with the diversity and scale of real-world AI deployments. Showcasing improved performance, new benchmarks for both data center and edge systems, and unprecedented system scale, the tests come at an opportune time for technology decision-makers facing pressure to move models into production.

The Biggest Update in MLPerf Inference Yet

The Inference v6.0 suite included 11 benchmarks for data centers and eight for edge. Five of the 11 data center tests were either new or substantially updated in v6.0, a rate of change that reflects just how fast the AI model landscape is shifting. Here’s what’s new:

- GPT-OSS 120B: A new benchmark for OpenAI’s open-weight, 117B-parameter mixture-of-experts reasoning model, targeting mathematics, scientific reasoning, and code

- Text-to-video: The suite’s first generative video benchmark, using Wan 2.2

- Vision-language model (VLM): A new multimodal benchmark using Qwen3 VL 235B and Shopify’s product catalog dataset

- DLRMv3: A modernized recommender benchmark built on Meta’s HSTU model, reflecting the shift to sequential recommendation architectures

- DeepSeek-R1 (updated): Expanded with a tighter-latency interactive scenario and support for speculative decoding

Lambda tested on the new GPT-OSS 120B benchmark as part of its first-ever Open Division submission, an effort that went beyond standard software tuning into algorithm-level research. The company explored smarter token routing across experts in the mixture-of-experts architecture, selectively directing tokens to the second-best expert when the top choice becomes overloaded.
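The article does not include Lambda’s routing code, so the following is only a generic illustration of the idea as described: capacity-limited top-1 routing in which a token falls back to its second-best expert when its first choice is full. The function name, capacity value, and router scores are hypothetical, and production mixture-of-experts kernels do this in batched GPU code rather than a Python loop.

```python
def route_with_fallback(scores: list[list[float]], capacity: int) -> list[int]:
    """Top-1 routing with a per-expert capacity; overflow tokens try their second-best expert.

    scores[t][e] is the router score for token t and expert e.
    Returns the chosen expert per token, or -1 if both choices are full.
    """
    num_experts = len(scores[0])
    load = [0] * num_experts
    assignment: list[int] = []
    for token_scores in scores:
        ranked = sorted(range(num_experts), key=lambda e: token_scores[e], reverse=True)
        choice = -1
        for e in ranked[:2]:  # best expert first, then second-best
            if load[e] < capacity:
                choice = e
                load[e] += 1
                break
        assignment.append(choice)
    return assignment


# Hypothetical router scores for 4 tokens over 3 experts; expert 0 is every token's favorite.
scores = [
    [0.9, 0.6, 0.1],
    [0.8, 0.1, 0.7],
    [0.7, 0.5, 0.2],
    [0.6, 0.3, 0.4],
]
print(route_with_fallback(scores, capacity=2))  # [0, 0, 1, 2]
```

The `capacity` knob is where the trade-off Li describes shows up: a higher capacity keeps more tokens on their first-choice expert, favoring quality, while a lower one spreads load more evenly, favoring throughput.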

"There's a basic trade-off between the quality of the result and the load balancing of the system," said Chuan Li, Lambda's chief scientific officer. "If we can tune that trade-off well enough, you can still meet an upper quality standard but get even better throughput."

The approach points to a dimension of inference optimization that many teams overlook. Hardware improves with each generation. Software stacks mature every six months. But algorithm-level creativity on top of both can unlock performance gains that off-the-shelf tuning leaves on the table.

Beyond the data center updates, the suite introduced a new YOLOv11 benchmark for edge, updating the edge object detection benchmark to current industry practice. In a sign of strong interest, 30 submissions were received for this test, the most of any in the edge category.

Multi-Node Inference Scales Up

One of the most interesting trends from the v6.0 data is the rapid growth of large-scale, multi-node system submissions over the last year. The v5.0 release last April included just two multi-node submissions. That number climbed to 10 in v5.1, and further to 13 in v6.0. The largest system submitted in this round spanned 72 nodes and 288 accelerators, quadrupling the node count of the largest system from the prior two rounds.
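The numbers above imply a few derived figures, worked out here only from what the article states: the average accelerator count per node in the largest system, the size of the previous record-holder implied by “quadrupling,” and the growth in multi-node submissions across the three rounds.

```latex
\frac{288\ \text{accelerators}}{72\ \text{nodes}} = 4\ \text{accelerators per node (average)}, \qquad
\frac{72\ \text{nodes}}{4} = 18\ \text{nodes (largest system in v5.0 and v5.1)}, \qquad
\frac{13}{2} = 6.5\times\ \text{growth in multi-node submissions, v5.0 to v6.0}
```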

The shift reflects where enterprise AI deployments are heading. As more AI applications move into production at scale, the demand for large, distributed inference systems is growing as well. This complexity introduces technical challenges, and multi-node benchmarks are better suited to demonstrate system performance under such conditions.

24 Organizations, Three New Entrants

The v6.0 submission roster grew to 24 participating organizations, including first-time submitters Inventec Corporation, Netweb Technologies India, and Stevens Institute of Technology. The full list spans hyperscalers, cloud providers, OEMs, and independent software vendors, making the dataset especially useful for procurement analysis.

Lambda was the only AI-native cloud provider to publish results for both inference and training on NVIDIA's Blackwell Ultra platform, benchmarking on both a single-node GB300 system and the rack-scale NVL72. The company treats benchmarking not as a marketing exercise but as an operational checkpoint. "We literally see this benchmark as a part of our new product introduction pipeline," Li said. "Before we offer this product to our customer, we need the product to be benchmarked."

That positioning carries weight for procurement teams evaluating cloud providers. Lambda is platform-neutral, with no proprietary silicon to promote, which gives it a clear incentive to pursue transparent, reproducible results. The company publishes its benchmark code as an open-source repository so customers can verify performance on their own infrastructure.

The TechArena Take

By adding reasoning models, text-to-video, vision-language, and modernized recommender workloads in a single release, MLCommons is tracking the speed at which the AI workload landscape is changing. Two of the new benchmarks arrived through direct collaboration with industry practitioners: Shopify contributed the VLM dataset using real product catalog data, and Meta drove the updated DLRM model based on its sequential recommendation architecture. That kind of industry partnership keeps the benchmarks grounded in production reality rather than academic abstraction.

For procurement teams, these updates offer practical benefits beyond the headline numbers. Decision-makers can dig into which organizations are submitting on the new benchmarks, how their results scale across node counts, and where software and algorithm optimizations are driving as much lift as hardware. Lambda's Open Division submission is a good example. It demonstrated that creative approaches to expert routing can push throughput higher without sacrificing output quality, the kind of insight that matters when you're sizing infrastructure for production inference.

Looking ahead, Li pointed to the upcoming MLPerf Endpoint format as a significant evolution. Rather than reporting a single throughput number per system, the new format will present a trade-off curve between latency and throughput, giving customers a way to evaluate systems against their specific service-level requirements. That shift would make the benchmarks more directly actionable for organizations balancing real-time responsiveness against batch processing efficiency.
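One way to read a latency-throughput trade-off curve like the one Li describes: keep only the Pareto-optimal operating points, then pick the highest-throughput point that still meets your latency target. This is our sketch only; the article does not describe the MLPerf Endpoint format’s actual metrics or report layout, and the field names, the p99 latency metric, and the numbers below are hypothetical.

```python
from typing import NamedTuple, Optional


class OperatingPoint(NamedTuple):
    p99_latency_ms: float  # hypothetical latency metric
    tokens_per_s: float    # hypothetical throughput metric


def pareto_frontier(points: list[OperatingPoint]) -> list[OperatingPoint]:
    """Keep points that no other point beats on both latency (lower) and throughput (higher)."""
    frontier: list[OperatingPoint] = []
    best_tp = float("-inf")
    # Walk from lowest to highest latency; a point survives only if it raises throughput.
    for p in sorted(points, key=lambda p: (p.p99_latency_ms, -p.tokens_per_s)):
        if p.tokens_per_s > best_tp:
            frontier.append(p)
            best_tp = p.tokens_per_s
    return frontier


def best_under_slo(points: list[OperatingPoint], latency_slo_ms: float) -> Optional[OperatingPoint]:
    """Highest-throughput point that still meets the latency service-level objective."""
    eligible = [p for p in points if p.p99_latency_ms <= latency_slo_ms]
    return max(eligible, key=lambda p: p.tokens_per_s, default=None)


# Hypothetical measurements for one system at increasing load levels.
curve = [
    OperatingPoint(40, 1_000),
    OperatingPoint(60, 2_500),
    OperatingPoint(80, 2_200),   # dominated: slower and lower throughput than the 60 ms point
    OperatingPoint(120, 4_000),
    OperatingPoint(250, 6_000),
]
print(pareto_frontier(curve))
print(best_under_slo(curve, latency_slo_ms=100))  # OperatingPoint(p99_latency_ms=60, tokens_per_s=2500)
```

An interactive chat service with a tight latency target and a batch summarization job could select very different points on the same curve, which is the kind of decision a single throughput number hides.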

As AI infrastructure decisions get larger and more consequential, MLPerf remains the go-to industry resource where competing systems can be compared on a level playing field. That kind of transparency is not just useful. It is essential.

Closing the Gap Between AI and Business Value with Jeni Barovian

The AI era is generating investment on a scale that previous technology cycles never approached. The central question facing business leaders has shifted from whether to invest to how to convert that investment into lasting competitive advantage. TechArena Advisory breaks from the traditional consulting model by bringing C-suite operators to the table who have grown multi-billion-dollar businesses from the inside out and understand what it takes to turn investment into value.

Advisor Co-Founder Jeni Barovian has navigated technology waves that reshaped entire industries, from networking and edge computing to data center platforms and AI silicon, holding product and P&L leadership roles at Intel and Altera. What distinguishes her is not just technical depth but a discipline around equipping organizations to drive business outcomes. In this edition of our Q&A series, she discusses the gap between AI investment and realized value, the three areas where that gap most often surfaces, and what it takes to help organizations leverage AI to create scalable competitive edge.

Q1: What has changed in the tech landscape that made the Advisory a priority for you?

Throughout my career, I’ve worked through several major technology waves — the internet, mobility, and cloud. As disruptive as those were, AI is different.

The pace of change is orders of magnitude faster, and the scale of investment is unprecedented. According to Goldman Sachs, companies spent more than $400 billion on AI infrastructure in 2025 alone — data centers, GPUs, platforms, and the talent to run them. The firm projects that number will swell to $500 billion in 2026. Now, leadership teams across the value chain are under pressure to turn that investment into real economic value.

At the same time, AI is reshaping how companies operate and how work gets done. Nearly all knowledge worker roles will be affected by AI-driven workforce transformation.

This moment requires a different kind of leadership and guidance. The winners won’t just deploy AI — they’ll translate it into productivity, new revenue streams, and lasting competitive advantage.

Q2: What does your experience bring to this moment?

I’ve spent my career building and scaling complex product portfolios and businesses — networking, communications, edge computing, and data center platforms. That work sits right at the intersection of infrastructure, product strategy, and business outcomes.

My superpower is connecting technology decisions to business impact by equipping and empowering teams to act. That includes navigating some of the most complex forms of organizational change — businesses scaling through major technology transitions, and M&A from both sides of the table. When you’ve led through those conditions, you develop a sharper instinct for where strategy actually holds under pressure and where it doesn’t.

In this moment, companies are moving incredibly fast, but speed alone doesn’t create value. AI can streamline development, analysis, and execution across nearly every function — engineering, product management, marketing, operations. But without clear strategic direction, you get a lot of noise and homogeneity.

What organizations need right now are experienced operators who understand how to turn new technology into differentiated products, stronger go-to-market strategies, and measurable business results.

AI can accelerate everything, but only strategy turns that acceleration into value, and proven operators can bring that strategic judgment and clarity.

Q3: What challenges are business leaders facing that align with your practice areas?

The most common challenge I see right now is a gap between AI investment and realized value. Companies have invested heavily in infrastructure and tools, but many leaders are still figuring out how to translate that capability into real business impact. That challenge typically shows up in three areas where I spend most of my time advising.

First is product and technology strategy — identifying where technology actually creates differentiated value rather than just adding features.

Second is P&L optimization — ensuring new capabilities are built and sold in ways that generate revenue growth, and the organization is equipped to operate with maximum efficiency. That calculus increasingly includes sustainability: in my experience, companies that treat environmental requirements as a financial lever — not as a compliance exercise — tend to build more resilient P&Ls.

Third is organizational execution — aligning teams, workflows, and decision-making so the company can move at the pace the technology now allows. That often extends to governance — ensuring boards and senior leadership have clear accountability structures for transformation commitments, not just results.

My background running product organizations for multi-billion-dollar businesses bridges the gap between technology ambition and operational reality.

Q4: Are there key areas that you see most pertinent right now?

Three areas stand out to me right now:

First, turning AI infrastructure into economic value. Companies have already made enormous investments in compute, data platforms, and tooling. The question now is where and how that translates into top-line growth, operational velocity, and competitive advantage.

Next, product differentiation in an AI-accelerated world. When everyone has access to similar tools and models, the real differentiator becomes strategy — how companies apply AI to meet customers where they’re at today, and solve meaningful problems.

Finally, organizational adaptation. AI is changing how work gets done across nearly every function, and leaders need to rethink processes, decision-making, and team structures to take full advantage of it. The leaders who get this right invest as seriously in their people as in their platforms — because AI amplifies human capability, it doesn’t replace it.

These three shifts — infrastructure, products, and people — will determine who leads the next wave of technology.

Q5: What are the outcomes you’re targeting to drive?

I focus on helping companies accelerate their path to business impact.

Most leadership teams already know what they want to achieve. Where they get stuck is the gap between a compelling strategic vision and the organizational readiness to execute on it. I’ve sat on both sides of that gap — as an operator accountable for delivering results under pressure, and as someone who has led businesses through the kind of structural change where that gap can widen fast if you let it.

In working with leadership teams, my goal is to help them identify where technology can create the most meaningful value — whether that’s new revenue streams, faster innovation cycles, improved operational efficiency, or stronger market positioning.

I help translate strategy into execution so teams can move quickly and confidently. That’s when transformation becomes real.

Why Most Data Loss Starts With a Simple Email

When leaders think about cybersecurity incidents, they often picture highly sophisticated attacks launched by external adversaries using advanced tools and malware. These scenarios dominate headlines and executive discussions. In reality, many of the most serious data exposure incidents do not begin with complex technical breaches. They begin with a routine human action inside the organization.

An employee forwards a document to a personal email account to continue working after hours. A team member shares internal files with a partner to move a project forward more quickly. A departing employee emails themselves information they believe they helped create.

Individually, these actions may seem harmless. Collectively, they represent one of the most common ways sensitive information leaves organizations today. What makes this risk especially challenging is that it rarely resembles a traditional security incident at the outset.

For leaders, this is a critical blind spot.

The Everyday Nature of Data Loss

Email remains one of the most widely used tools in organizations. It enables collaboration, supports distributed teams, and connects employees with partners and customers. Because email is deeply embedded in daily work, it is often viewed as a productivity tool rather than a potential risk vector.

Research consistently shows that human actions play a major role in data exposure incidents. The Verizon Data Breach Investigations Report highlights that human error and misuse remain significant contributors across industries. Many of these incidents involve employees unintentionally sending information to the wrong recipient or sharing sensitive files outside the organization.

These actions are rarely malicious. In most cases, employees are simply trying to work more efficiently. The leadership challenge lies in recognizing that routine decisions can carry serious consequences.

Once sensitive information leaves the organization through email, it can quickly spread beyond control. When data reaches personal accounts, unmanaged devices, or external parties, recovering it becomes extremely difficult.

Why This Risk Often Goes Unnoticed

Email-driven data loss is frequently underestimated because it does not trigger the same alerts as malware or system intrusions. The activity often appears legitimate: an authorized employee sends an email from a corporate account, and the content may not contain obvious indicators that automated tools detect. This creates a dangerous gap between intent and impact.

Traditional security tools were designed primarily to identify overtly malicious activity, such as unauthorized access or suspicious software. They are far less effective at detecting subtle behaviors that lead to data loss through normal communication channels.
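To make the point about context rather than content concrete, here is a toy rule that flags an otherwise ordinary message based on where it is going and how its attachments are labeled. This is our illustration, not a feature of any product or report the article mentions; the domain lists, sensitivity labels, and `risk_flags` are hypothetical, and real data loss prevention tools combine many more signals.

```python
from dataclasses import dataclass

# Hypothetical policy inputs; a real deployment would load these from configuration.
CORPORATE_DOMAINS = {"example.com"}
APPROVED_PARTNER_DOMAINS = {"partner.example.org"}
PERSONAL_DOMAINS = {"gmail.com", "outlook.com", "yahoo.com"}  # illustrative, not exhaustive
SENSITIVE_LABELS = {"confidential", "restricted"}


@dataclass
class OutboundEmail:
    sender: str
    recipients: list[str]
    attachment_labels: list[str]  # sensitivity labels, assumed to come from an upstream classifier


def risk_flags(msg: OutboundEmail) -> list[str]:
    """Flag a legitimate-looking send based on context: attachment label plus destination."""
    if not SENSITIVE_LABELS.intersection(label.lower() for label in msg.attachment_labels):
        return []
    flags = []
    for rcpt in msg.recipients:
        domain = rcpt.lower().rpartition("@")[2]
        if domain in CORPORATE_DOMAINS or domain in APPROVED_PARTNER_DOMAINS:
            continue
        if domain in PERSONAL_DOMAINS:
            flags.append(f"sensitive attachment to personal account: {rcpt}")
        else:
            flags.append(f"sensitive attachment to unapproved external domain: {rcpt}")
    return flags


after_hours = OutboundEmail("j.doe@example.com", ["jdoe.home@gmail.com"], ["Confidential"])
partner_share = OutboundEmail("j.doe@example.com", ["pm@partner.example.org"], ["confidential"])
print(risk_flags(after_hours))    # ['sensitive attachment to personal account: jdoe.home@gmail.com']
print(risk_flags(partner_share))  # []
```

A flag like this is arguably most useful as a prompt to the sender (for example, asking whether a file labeled confidential should really go to a personal address), which fits the article’s emphasis on guiding behavior rather than only blocking it.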

As a result, organizations often discover these exposures only after information has already left their environment. Research from the Ponemon Institute (Cost of Insider Risks Global Report 2023) shows that insider-related incidents, including accidental data sharing, continue to grow in both frequency and cost, and often take longer to detect because they occur through legitimate access paths.

For leadership teams, this means the greatest risk does not always come from external attackers. It often comes from ordinary actions that blend seamlessly into everyday work.

The Human Dimension of Information Security

Addressing this challenge requires leaders to move beyond a purely technical view of security and examine how information is actually used inside the organization. Security controls may define how data should move, but daily work determines how it truly flows.

Modern employees operate in highly connected environments. Remote work, hybrid teams, and constant collaboration with external partners allow information to move across devices, platforms, and organizations faster than ever before. At the same time, many organizations maintain strict data policies that were designed for more controlled environments and do not always align with how work is performed today.

When policies feel disconnected from real workflows, employees often adopt informal workarounds to stay productive. Email frequently becomes the bridge between systems, devices, and teams. Documents are forwarded to personal accounts to continue work after hours, shared with external collaborators to accelerate projects, or moved between platforms that are not fully integrated.

Here too, intent is rarely malicious: employees are usually trying to solve problems and move work forward. Yet these everyday decisions can unintentionally expose sensitive information outside the organization’s control.

This reality highlights an important shift in modern cybersecurity thinking. The most significant risks do not always originate from sophisticated external threats. They often emerge from normal human behavior operating within complex systems. Organizations that recognize this dynamic begin to design security strategies that guide behavior, support safe collaboration, and align protection with how people actually work.

The Leadership Opportunity

Recognizing the human element of data protection creates an opportunity for more effective leadership. Rather than focusing solely on preventing mistakes, organizations should aim to make secure behavior the easiest option. This requires clear communication, supportive technology, and a culture that values responsible information sharing.

Effective leadership approaches typically include:

- Clear expectations about what information requires special handling and why it matters

- Practical collaboration tools that support real workflows and reduce risky workarounds

- Education that connects data protection to business impact, customer trust, and regulatory obligations

- Open communication between security teams and business leaders to align controls with operational needs

When employees understand both the risks and the reasons behind security practices, they are more likely to follow them.

Moving From Reaction to Prevention

Historically, many organizations addressed data exposure only after discovering that sensitive information had already been shared externally. This reactive approach forces security teams to respond after damage may already have occurred.

Modern organizations are shifting toward proactive awareness. By understanding how information typically flows across the organization, leaders can spot unusual patterns earlier and intervene before significant exposure occurs.
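To make "understanding how information typically flows" concrete, here is a minimal sketch of per-user baselining, assuming a hypothetical daily metric (count of external emails with attachments per user) that a mail gateway could export. Each user is compared against their own history, and days far above that baseline are flagged for review. The function name, metric, and thresholds are illustrative assumptions, not any specific product's method.

```python
from statistics import mean, stdev


def flag_unusual_senders(history, today, z_threshold=3.0, min_days=5):
    """Flag users whose external-send count today is far above their own baseline.

    history: dict of user -> list of past daily counts (hypothetical metric:
             external emails with attachments per day)
    today:   dict of user -> today's count
    Returns a list of (user, z_score) tuples for flagged users.
    """
    flagged = []
    for user, count in today.items():
        past = history.get(user, [])
        if len(past) < min_days:
            continue  # not enough history to form a meaningful baseline
        mu = mean(past)
        sigma = stdev(past)
        # Floor the spread at 1.0 so very steady senders do not produce huge z-scores
        z = (count - mu) / max(sigma, 1.0)
        if z >= z_threshold:
            flagged.append((user, round(z, 1)))
    return flagged


history = {"alice": [2, 3, 2, 4, 3], "bob": [10, 12, 11, 9, 10]}
print(flag_unusual_senders(history, {"alice": 25, "bob": 11}))
```

Comparing each person with their own history, rather than with a company-wide average, keeps high-volume roles such as sales from being flagged simply for doing their normal job.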

Just as importantly, prevention strategies can guide employees at the moment of decision. Contextual warnings, reminders, or policy prompts can encourage employees to pause before sending sensitive information outside the organization.

These small interventions can significantly reduce risk without hindering productivity.

Leadership Responsibility

Email-driven data loss highlights a broader truth about cybersecurity leadership. Security challenges are not solved by technology alone. They are shaped by people, processes, and culture.

Executives set the tone for how seriously information protection is taken. Managers influence how teams collaborate and share data. Employees ultimately determine how information moves through daily workflows. Organizations that successfully protect sensitive information recognize this shared responsibility. They invest not only in security tools, but also in awareness, communication, and leadership engagement.

The goal is not to eliminate human involvement in data handling; that is neither realistic nor desirable. The goal is to guide behavior in ways that support both productivity and protection. In many organizations, the next data exposure will not begin with a complex cyberattack. It will begin with a simple email. Leaders who recognize this reality are far better positioned to prevent it.

Betterworks Uses ‘Horizontal Intelligence’ to Connect HR Silos

The moments that help define an employee's trajectory, including performance reviews and manager feedback, are too consequential to get wrong. AI promises to help managers be better prepared for these important conversations by presenting clear insights that draw from the sea of daily work data. But it can only deliver when it is trusted on all sides.

In my recent conversation with Maher Hanafi, senior vice president of engineering at Betterworks, and Solidigm’s Jeniece Wnorowski, we discussed what it takes to turn AI’s potential into a trusted and valued enterprise solution.

Beyond the Static HR Database

Betterworks describes itself as a talent and performance management platform for global enterprise customers, but Maher is quick to distinguish it from traditional HR software. Where legacy tools function as administrative record-keepers by tracking history, storing documents, and managing lists, Betterworks aims to orient its platform around the flow of work.

“We were looking at the data from a performance lens,” Maher explained. “We’re trying to enable anything that helps go beyond just tracking history…to focus more on the flow of work.” For large enterprises with complex organizational structures spanning multiple regions, that means helping individuals, managers, and business units connect their daily efforts to company-wide goals, a capability that only becomes more valuable, and more technically demanding, as AI matures.

AI as Horizontal Intelligence

Maher offered the useful frame of thinking about AI as enabling “horizontal intelligence.” Before AI, Betterworks’ modules — goals, feedback, one-on-one meetings, talent and skills — operated as largely separate domains. Generative AI has made it possible to interconnect those domains in ways that weren’t previously practical.

“With AI today, it’s just way easier to interconnect all of these,” he said. “I think SaaS products and SaaS platforms will be built as more of an interconnected set of layers that will break the silos between different components and features.”

In practical terms, this means a manager preparing for a one-on-one meeting can receive and review AI-generated insights drawn from an employee’s recent goals, feedback, and performance history before a conversation, rather than manually pulling together and examining months’ worth of data.
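The cross-module gathering step behind such a prep brief can be sketched as follows. This is a hypothetical data model, not Betterworks' implementation: records from separate modules (goals, feedback, one-on-ones) are filtered to one employee and a time window, then grouped by module, with each item keeping a source identifier so anything a generative model later summarizes can be traced back to where it came from.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Record:
    source_id: str  # hypothetical identifier so each item can be cited later
    module: str     # e.g. "goals", "feedback", "one_on_one"
    employee: str
    when: date
    text: str


def build_prep_brief(records, employee, since, per_module=3):
    """Collect the most recent items per module for one employee since a given date."""
    by_module = defaultdict(list)
    for r in records:
        if r.employee == employee and r.when >= since:
            by_module[r.module].append(r)
    brief = {}
    for module, items in sorted(by_module.items()):
        items.sort(key=lambda r: r.when, reverse=True)  # newest first
        brief[module] = [(r.when.isoformat(), r.text, r.source_id) for r in items[:per_module]]
    return brief


records = [
    Record("g1", "goals", "ana", date(2024, 5, 1), "Ship onboarding revamp"),
    Record("f1", "feedback", "ana", date(2024, 6, 2), "Strong cross-team demo"),
    Record("f0", "feedback", "ana", date(2023, 1, 1), "Older note outside the window"),
]
print(build_prep_brief(records, "ana", since=date(2024, 1, 1)))
```

In a real system the resulting brief would be passed to a model for summarization; keeping the source identifiers attached is what lets the manager check each insight against the underlying record.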

Responsible AI as a Design Principle

When AI provides insights that can influence such important conversations, it’s paramount that all parties can trust the system’s output. Operating in this environment, Betterworks has emphasized responsible AI guided by two principles in particular: transparency and explainability. Transparency means the system can show users what sources it drew on to generate a response. Explainability means users can understand why the AI made a particular suggestion. With this foundation, managers who give feedback based on information the AI provides can do so with confidence in the underlying insights.

“We are trying to use AI as a way to really get you as a better individual, better member of the organization and contributing to the big picture versus having AI take control,” Maher said. “You should be in the driver’s seat. AI is just there to help you and be a co-pilot, nothing else.”

Advice for Technology Leaders

As the conversation turned to broader lessons, Maher offered practical guidance for engineering and technology leaders navigating AI adoption inside enterprise organizations.

His first recommendation is simply to stay informed without becoming overwhelmed. “AI is moving very fast…. Picking the one out of the haystack is very challenging,” he said. To manage that, he created what he calls an AI Engineering Lab at Betterworks, a structured environment where engineers can explore tools and run experiments rather than wait for top-down mandates on which technology to adopt.

He also urged leaders to take the financial dimension seriously. “There was a huge risk of AI taking too much money without achieving ROI,” he said. “Turning into someone who cares more about the financial aspect and looking at costs on a frequent basis…was a huge success.” In his view, senior technology leaders increasingly need to think with some of the rigor of a chief financial officer when it comes to managing AI infrastructure spend.

Finally, he pointed to the value of frameworks. His own AI maturity framework and a flywheel model focused on planning, building, and optimizing AI systems have helped keep the team oriented even as the technology underneath them continues to shift.

The TechArena Take

Maher’s perspective reflects a measured but substantive view of what AI can deliver in enterprise software, one grounded in the realities of compliance-heavy industries and the organizational complexity of global customers. Rather than positioning AI as a transformation layer bolted onto an existing product, Betterworks has committed to rebuilding the platform’s foundations to make intelligence a native capability. For technology decision makers evaluating AI-powered SaaS in regulated environments, the Betterworks story offers a useful model.

Learn more about Betterworks at betterworks.com, and watch our full podcast episode.

Navigating the Human Side of the AI Race with Dana Bos

Technical tools exist for almost every problem, but the human side of change frequently lags behind.

Managers today serve as the pressure valve for their organizations, navigating hybrid teams and AI disruption while trying to keep people engaged. Senior teams often struggle to provide clear organizational direction to prevent burnout and retain key talent during these disruptive times.

TechArena Advisory provides a high-impact alternative to traditional consultants by offering the strategic blueprints of operators who have already scaled global businesses. Dana Bos brings expertise at the intersection of people, strategy, and operations to help our clients move faster and with far less friction. She shares her insights on building manager readiness and shaping everyday ways of working that keep teams connected.

Q1: What has changed in the tech landscape that made the Advisory a priority for you?

The ground has shifted under tech leaders’ feet, regardless of the sector or the size of their company. AI has moved from “interesting experiment” to “core to our strategy” almost overnight. Products, roles, and expectations are shifting in months, not years.

Many companies are trying to respond with teams and management systems that are already stretched. I’m seeing a lot of good intentions but not a lot of support for the people who actually have to make these changes. Managers are at times improvising without clear organizational direction, employees are fatigued by constant pivots and mixed messages, and systemic change management is getting overlooked. I want to help leaders at all organizational levels respond to and meet this seminal moment in a way that drives sustainable momentum and attracts and retains talent.

Q2: What does your experience bring to this moment?

My expertise sits at the intersection of strategy, people, and day-to-day operations. Leaders are under immense pressure to deliver results, navigate new tech, and retain their key people. If you put an A player in a C system, the system will win every time. The talent market is extremely competitive right now – you need an operating model that drives speed and value while also creating a culture that invigorates your talent and makes them want to stick around.

I help leaders translate high-stakes challenges into actionable strategies their teams can execute, building leadership at every level so success doesn’t rest on a few heroic leaders. We build the skills that power the operating model with consistent, concrete behaviors, productive conversations, and repeatable routines.

When it comes to change management, I work with leaders to translate the initiative and goals into a plan that focuses on what will change day to day: what managers say on Monday, what teams feel in the next all-hands, and how this lands for the talent you need to keep. In a moment when AI and constant change are colliding with real human limits, that grounded, people-centered focus keeps execution on track and results sustainable.

Q3: What challenges are business leaders facing that align with your practice areas?

The first big challenge I see is a highly competitive talent landscape. I also see companies recognizing the need to change their strategy or positioning and working hard to get the rest of the company on board and moving with them. Finally, leaders are trying to roll out internal AI-enabled workflows while their people are anxious, confused, or quietly resisting. The tools are there, but the human side of change is lagging. As work becomes more distributed and automated, it’s easy for trust, candor, and accountability to erode.

The need for increased manager capability has never been more pivotal. Many leaders were promoted for technical excellence and now find themselves running hybrid teams, navigating AI, and trying to keep people engaged as the strategy and the work shift, which requires a very different skillset from technical delivery.

My work sits right at those intersections: structuring organizational growth and change in a way people can easily follow, building strong managers who can run healthy, high-performing teams, and shaping everyday ways of working that keep people connected and willing to speak up.

Q4: Are there key areas that you see most pertinent right now?

Right now, I see a few areas where getting it right has an outsized impact. The first is how leaders talk about and model the use of AI internally, and how they talk about the company’s products and services intersecting with the markets during this disruptive time. If senior teams are vague or inconsistent, everyone else feels it and fills in the gaps with fear.

The second is manager readiness. Managers are the pressure valve for almost everything right now, and if they don’t have the skills and support to lead through change and uncertainty, you will see it in burnout, rework, and loss of talent. That includes how they run meetings, how they handle tension, and how they coach people who are worried or skeptical.

The third is the quality of cross-functional collaboration. With work and teams dispersed, botched handoffs and misunderstandings are expensive. The basics (how decisions are made, how conflict is handled, how wins and misses are talked about) either build trust and ownership or quietly undermine them.

When these areas are neglected, even strong strategies stall. When they’re addressed, organizations move faster and with far less friction.

Q5: What are the outcomes you’re targeting to drive?

I help organizations bridge the gap between strategic goals and daily execution. By developing leaders who communicate with clarity and managers who foster collaborative, AI-empowered teams, I help you build a culture where high performance and engagement coexist. Over time, this manifests as cleaner execution on priorities, better retention of the key players, and an organizational resilience that turns change into a competitive advantage rather than a source of friction.

Scaling Innovation from Silicon to Solutions with Raejeanne Skillern

The tech landscape is shifting at a breakneck pace, pushing the boundaries of traditional infrastructure and demanding a new level of strategic agility. As AI labs and hyperscalers reshape the competitive environment, there is an urgent need for leaders who can translate the complex data center and cloud ecosystem into differentiated narratives and modernized go-to-market strategies.

Following the recent launch of the TechArena Advisory, we are excited to highlight the exceptional operators bringing C-suite-grade strategic intelligence within reach of organizations at every stage of growth. The Advisory represents our commitment to providing a high-impact alternative to traditional consultants, offering the strategic blueprints of those who have already built and scaled multi-billion-dollar businesses.

Raejeanne Skillern has spent her career navigating these shifts, scaling organizations and driving disproportionate value for global enterprises. With 30 years of executive leadership spanning silicon to solutions, she understands the precision required to move from “chaos to strategy” in the AI race. To help our audience get to know the experts behind the Advisory, we are continuing our “5 Fast Facts” Q&A series. In this edition, Skillern discusses the pressing need for adaptability, the importance of strong partnerships in the neocloud era, and her personal commitment to stepping into the arena alongside the next generation of business leaders.

Q1: What has changed in the tech landscape that made the Advisory a priority for you?

The explosion of AI, specifically LLMs and agents, has pushed data center and cloud expertise into the center of every industry. Companies must rapidly evolve their business and marketing strategies to take advantage of the trillions of dollars’ worth of market opportunity being created. The ecosystem is rapidly expanding, with neoclouds, AI labs, and thousands of startups joining the hardware providers and hyperscalers in this AI race.

I see an urgent need for experts who can distill this chaos into a clear strategy that drives real business acceleration.

Q2: What does your experience bring to this moment?

As a 30-year student of and executive within the cloud, data center and AI technology industry spanning silicon to solutions, I have an extensive track record in building and scaling technology-based businesses. My proven success in owning and growing multi-billion-dollar P&Ls has earned me a reputation as a change agent, navigating the complex tech landscape to drive disproportionate growth for companies.

Q3: What challenges are business leaders facing that align with your practice areas?

Leaders are currently caught between two fires: the need for immediate resource efficiency and the need for extreme flexibility to keep up with AI innovation. They must optimize or customize their products and roadmaps for resource feasibility while staying agile enough to keep pace with market adoption. They must modernize global go-to-market execution to provide intelligent, connected workflows across the marketing and sales teams, leveraging personalized campaigns that meet customers along their unique journeys. Many companies may also be working with hyperscale cloud providers and silicon technology companies in new ways and need to evolve their selling, partnership, and engagement strategies to build strong partnerships with the emerging leaders in this space.

Q4: Are there key areas that you see most pertinent right now?

Helping startups scale, modernizing global go-to-market execution, breaking through with differentiated corporate leadership narratives in a noisy arena, and pressure testing business strategy for competitive strength, end user value, and adaptability to a rapidly moving industry.

Q5: What are the outcomes you’re targeting to drive?

Throughout my career, I have always appreciated when business leaders, board members, and technology experts have partnered with me to break down my challenges and ideate with me on how I can improve myself, my organization, and my business. I want to be that support structure for growing businesses. I want to be their right hand, stepping into the arena with them whether setting a business, product, or marketing strategy, positioning an organization to scale for growth, or translating the complex data center, AI, and cloud ecosystem to modernize sales and partnerships.

Arm Steps into the Silicon Spotlight with AGI CPU

In a strategic move that alters its long-standing business model, Arm this week unveiled the Arm AGI CPU, its first-ever production silicon product designed for data center AI infrastructure.

While the company has spent 35 years providing the blueprints for others to build upon, the AGI CPU represents Arm’s first direct entry into the commercial silicon market.

Developed in collaboration with Meta and enabled by Synopsys’ full-stack design portfolio, the AGI CPU is aimed at a burgeoning category of agentic AI workloads. These workloads, in which AI models reason, plan, and execute tasks autonomously, require high levels of scalar performance and memory throughput.

The Design and Verification Framework

Bringing a 136-core, 3nm processor to market as a first-time silicon vendor required a comprehensive design infrastructure. Arm utilized Synopsys’ end-to-end portfolio, spanning electronic design automation (EDA), silicon-proven interface IP, and hardware-assisted verification (HAV).

The technical workflow leveraged Synopsys’ EDA solutions to manage the complexity of advanced process nodes. These tools supported synthesis, power integrity analysis, and signoff timing, which were necessary to meet the performance-per-watt targets specified for next-generation AI environments.

To manage data movement, Arm integrated Synopsys’ interface IP solutions. These components act as the critical communication links within the SoC, facilitating high-speed data transfer between the CPU cores and external memory or accelerators. By using pre-validated IP, Arm aimed to reduce the inherent risks associated with first-pass silicon.

Verification played a central role in the development timeline. Using the Synopsys ZeBu Server 5 emulation system and HAPS prototyping platforms, Arm’s engineering teams were able to validate system functionality and software compatibility months before the physical chips returned from the foundry. This “shift-left” strategy is a standard industry practice to ensure that hardware and software are ready for deployment simultaneously.

Perspectives from the Partnership

Mohamed Awad, executive vice president of the Cloud AI Business Unit at Arm, noted the collaborative nature of the project.

“The Arm AGI CPU reflects the strength of our SoC design and the effectiveness of our collaboration with Synopsys,” he said. “Their design, IP, and verification solutions supported the development and validation of our breakthrough performance-per-watt chip for next-generation AI infrastructure.”

Technical Specifications and Market Positioning

The AGI CPU features up to 136 Arm Neoverse V3 cores per socket, operating within a 300-watt thermal design power (TDP). Built on TSMC’s 3nm process, the chip utilizes a dual-chiplet architecture. It supports 12 channels of DDR5 memory at speeds up to 8800 MT/s, providing approximately 825 GB/s of aggregate bandwidth. For I/O, the processor includes 96 lanes of PCIe Gen 6 and native CXL 3.0 support for memory expansion.
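As a sanity check on the memory figure, theoretical peak DRAM bandwidth is channels × transfer rate × bytes per transfer. The sketch below assumes a 64-bit (8-byte) data path per DDR5 channel, excluding ECC bits; that width is our assumption, not a detail from Arm’s announcement. Twelve channels at 8800 MT/s work out to 844.8 GB/s of theoretical peak, so the reported figure of approximately 825 GB/s is in the same range; we do not know exactly how Arm derived its number. The same arithmetic gives a rough per-core share of bandwidth and of the 300-watt TDP.

```python
def peak_dram_bandwidth_gbs(channels, mega_transfers_per_s, bytes_per_transfer=8):
    """Theoretical peak bandwidth in GB/s (decimal gigabytes).

    Assumes each channel moves bytes_per_transfer bytes per transfer
    (8 bytes = 64-bit data path, ECC excluded).
    """
    # MT/s x bytes = MB/s; divide by 1000 to get GB/s
    return channels * mega_transfers_per_s * bytes_per_transfer / 1000


cores = 136
tdp_watts = 300
peak = peak_dram_bandwidth_gbs(channels=12, mega_transfers_per_s=8800)

print(f"Theoretical peak: {peak:.1f} GB/s")
print(f"Per core: {peak / cores:.2f} GB/s of bandwidth, {tdp_watts / cores:.2f} W of TDP")
```

These are ceilings rather than measurements; sustained bandwidth on real workloads is typically lower than the theoretical peak.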

Arm’s internal data suggests that the AGI CPU can provide a 2x increase in performance-per-rack compared to current x86 platforms. By targeting “agentic” workloads, Arm is positioning itself to handle the coordination and data-orchestration tasks that sit alongside dedicated AI accelerators like GPUs.

The TechArena Take

Arm’s shift from an IP architect to a merchant silicon provider is technically impressive, but it creates a delicate situation with its existing licensees. Companies like NVIDIA, AMD, and Intel, who all license Arm IP, now find themselves competing directly with their technology provider in the data center. Arm will need to manage these relationships carefully to avoid appearing to favor its own silicon over the IP it sells to others.

The 300W TDP for a 136-core part is a clear attempt to challenge x86 dominance in power-constrained data centers. In an era where power availability is the primary bottleneck for AI scaling, Arm’s decision to focus on performance-per-watt is a pragmatic entry strategy. However, the true test will be real-world software optimization and how effectively the AGI CPU handles the “unstructured” nature of agentic AI compared to established general-purpose processors.

Forward-Looking Branding

The naming of the AGI CPU is a bold marketing move. While the chip is designed to support the infrastructure for autonomous AI agents, the term AGI (Artificial General Intelligence) remains a theoretical milestone in the research community. By tethering its first chip to the most hyped acronym in tech, Arm is signaling its long-term intent, though the industry will likely judge the silicon on its IPC and latency metrics rather than its nomenclature.

Synopsys as the Industry Glue

This launch reinforces Synopsys’ position as the necessary scaffolding for the custom silicon era. Whether it is a hyperscaler like Meta or a traditional IP house like Arm, the move toward specialized silicon is increasingly dependent on a unified full-stack design flow. For Synopsys, enabling a first-time silicon vendor to hit 3nm targets is a strong proof of concept for its “HAV-to-Silicon” methodology.

Globeholder AI Unveils Thinking Lab for High-Stakes Decisions

This morning in Paris and Riyadh, Globeholder AI officially pulled back the curtain on its Thinking Lab, a platform that signals a fundamental shift in the AI trajectory. While the tech world has spent the last few years obsessed with the creative (and often hallucinatory) capabilities of large language models (LLMs), Globeholder is betting on a different flavor of intelligence: Type-2 Reasoning for the physical world.

Beyond the Text: The Case for Physical Grounding

The core thesis of Globeholder, led by co-founders Milene Göknur Jubin, PhD, and Eren Ünlü, PhD, is refreshingly blunt: "The world is not made of text."

Most AI systems today rely on fast pattern recognition, what cognitive scientists call Type-1 reasoning. These systems excel at predicting the next word in a sentence, but they stumble when asked to authorize a $2.1 billion investment in North Sea offshore wind farms. Why? Because energy systems, infrastructure networks, and climate patterns aren’t linguistic constructs; they are governed by physics, regulation, and logistical constraints.

Globeholder’s Thinking Lab is designed to bridge this gap by acting as a "sovereign, computational software environment" where AI agents operate like scientific teams. Rather than providing a probabilistic guess, the platform deconstructs complex questions into physical components, runs simulations, and stress-tests assumptions.

The Architecture of "Type-2" Intelligence

From a deep tech perspective, the Thinking Lab’s architecture is its most compelling feature. Built on a modular, partner-enabled framework, it functions as an operating system for physical-world intelligence.

Key technical pillars include:

- Scientific AI Agents: Operating within autonomous laboratories, these agents generate hypotheses and analyze observational and simulation data.

- Planetary Representation Layer: This layer integrates satellite imagery, geospatial data, and geo-transformer architectures to create a "world model."

- Multi-Signal Data Fusion: The engine combines observational, simulation, and operational data to deliver insights grounded in reality.
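The announcement does not say how Globeholder combines its signals. As a generic illustration of what multi-signal fusion can mean, the sketch below uses inverse-variance weighting, a standard textbook technique that we have swapped in for explanation only: each source (say, an observational estimate and a simulation estimate of the same quantity) is weighted by how certain it is. It assumes the estimates are unbiased with independent errors; the function name and example numbers are hypothetical.

```python
def inverse_variance_fuse(estimates):
    """Fuse independent, unbiased estimates of one quantity.

    estimates: list of (value, variance) pairs, one per signal source.
    Returns (fused_value, fused_variance). Lower-variance sources get more weight.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    weights = []
    for _, variance in estimates:
        if variance <= 0:
            raise ValueError("variances must be positive")
        weights.append(1.0 / variance)
    total = sum(weights)
    fused = sum(w * value for w, (value, _) in zip(weights, estimates)) / total
    # The fused variance is never larger than the smallest input variance
    return fused, 1.0 / total


# Hypothetical example: observational estimate (8.0, variance 1.0)
# and simulation estimate (11.0, variance 4.0) of the same quantity
print(inverse_variance_fuse([(8.0, 1.0), (11.0, 4.0)]))
```

The result leans toward the more certain source and carries a combined uncertainty, which is the kind of grounded, quantified answer the fusion layer is described as aiming for.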

The platform’s 6-step workflow, moving from question decomposition to auditable decision delivery, aims to replace the months-long manual analysis typically performed by high-priced consulting firms with transparent, empirical answers delivered in minutes.

A Heavy-Hitting Ecosystem

Globeholder isn’t going it alone. The startup is part of the NVIDIA Inception program and has deeply integrated its tech with NVIDIA’s Earth-2 and Cosmos models for large-scale weather and climate modeling. On the infrastructure side, the platform is deployed on AWS, ensuring the performance and resilience required for what they call "sovereign-grade decision-making."

The TechArena Take

The most striking revelation in the Thinking Lab release isn’t the AI itself, but how it intends to dismantle the traditional "trust-by-proxy" model of strategic consulting.

Globeholder’s competitive differentiation makes a compelling case for why the current status quo is failing high-stakes industries:

- The Velocity Gap: While traditional consultants require six months to synthesize a report, and LLMs provide answers in seconds, Globeholder operates in the "minutes" sweet spot. This suggests a move away from static, manual updates toward real-time learning that can keep pace with volatile physical systems.

- From Visualization to Investigation: Most traditional GIS (Geographic Information Systems) are limited to maps, offering visual data but lacking deep reasoning. Globeholder shifts the focus from simple spatial visualization to "Type-2" investigative reasoning grounded in the physical world.

- The Death of the Hallucination: LLMs are notorious for pattern matching and hallucinations because they lack empirical grounding. By enforcing evidence-bound chains and a full audit trail, Globeholder provides the glass-box transparency that regulated industries, like energy and finance, require to move beyond text-based predictions.

- Cross-Domain Synthesis: Perhaps the most significant advantage is the ability to perform cross-domain synthesis. Traditional methods often trap data in single-domain silos. Thinking Lab is designed to reason across atmospheric science, infrastructure fragility, and fiscal consequences simultaneously.
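To illustrate what "evidence-bound chains and a full audit trail" could look like in practice, here is a minimal sketch of a hypothetical design, not Globeholder’s implementation: every reasoning step must cite at least one registered evidence item, and each log entry stores the SHA-256 hash of the previous entry, so any later edit to the chain is detectable on verification. The class name, evidence IDs, and log format are assumptions for illustration.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditTrail:
    """Append-only, hash-chained log where every step must cite known evidence."""

    def __init__(self, evidence_ids):
        self.evidence_ids = set(evidence_ids)
        self.entries = []

    @staticmethod
    def _digest(payload):
        # Canonical JSON (sorted keys) so the same payload always hashes the same way
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode("utf-8")).hexdigest()

    def add_step(self, claim, cites):
        missing = [c for c in cites if c not in self.evidence_ids]
        if not cites or missing:
            raise ValueError(f"step must cite registered evidence; unknown: {missing}")
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        payload = {"claim": claim, "cites": sorted(cites), "prev": prev}
        self.entries.append({**payload, "hash": self._digest(payload)})

    def verify(self):
        """Recompute the chain; return False if any entry was altered or reordered."""
        prev = GENESIS
        for entry in self.entries:
            payload = {"claim": entry["claim"], "cites": entry["cites"], "prev": entry["prev"]}
            if entry["prev"] != prev or entry["hash"] != self._digest(payload):
                return False
            prev = entry["hash"]
        return True


trail = AuditTrail({"sat-2024-07", "sim-run-12"})
trail.add_step("Wind resource at candidate sites is above the regional median", ["sat-2024-07"])
trail.add_step("Projected output supports the capacity target", ["sim-run-12", "sat-2024-07"])
print(trail.verify())
```

Refusing uncited steps enforces the "evidence-bound" part, while the hash chain provides tamper evidence for the audit trail; neither guarantees the evidence itself is correct.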

Scaling Innovation into Enterprise Growth with Lakecia Gunter

Following this week's launch of the TechArena Advisory, we are excited to highlight the exceptional operators who are now bringing C-suite-grade strategic intelligence within reach of organizations at every stage of growth. While TechArena’s foundation is built on media and tech domain marketing, the Advisory represents our commitment to providing a high-impact alternative to expensive traditional consultants. We believe that in an era of rapid disruption, organizations don’t just need advice, they need the strategic blueprints of those who have already scaled multi-billion-dollar businesses.

To help our audience get to know the experts behind the Advisory, we are launching “5 Fast Facts,” a twice-weekly Q&A series. Our first featured advisor is Lakecia Gunter, an enterprise growth architect with a career defined by leading global teams and mastering the intersection of technology and revenue. Below, Lakecia shares her perspective on the widening gap between tech ambition and business execution.

Q1: What has changed in the tech landscape that made the Advisory a priority for you?

We’re at a moment where technology disruption is moving faster than most organizations can operationalize. AI, platform ecosystems, and digital infrastructure are redefining how companies compete, but many leaders are realizing that adopting technology and scaling it into enterprise growth are two very different things.

I see a widening gap across industries between technology ambition and business execution. Companies are investing heavily in AI and digital capabilities, but many are still figuring out how to connect those investments to revenue growth, ecosystem expansion, and long-term competitive advantage.

After decades of leading global teams responsible for multi-billion-dollar businesses, partner ecosystems, and product platforms, I’ve seen firsthand how technology becomes growth, or fails to.

This moment makes advisory work a priority because leaders need more than technical insight. They need guidance from operators who understand how to translate innovation into enterprise scale, revenue expansion, and lasting market leadership.

Q2: What does your experience bring to this moment?

My experience sits at the intersection of technology innovation and enterprise growth.

Throughout my career, I’ve led global organizations responsible for multi-billion-dollar revenue streams, large partner ecosystems, and enterprise transformation initiatives. At Microsoft, I helped lead strategy and technical engagement for one of the world’s largest partner ecosystems. Earlier roles included direct P&L responsibility for global business units and helping scale new technology platforms into global markets.

What this brings to the moment is the perspective of an enterprise growth architect, someone who understands how technology strategy, revenue models, partner ecosystems, and organizational alignment all work together.

My superpower is helping leadership teams turn emerging technology opportunities into scalable business growth. That means aligning strategy, ecosystems, and operating models so innovation doesn’t stay in pilot mode but drives real market impact.

Q3: What challenges are business leaders facing that align with your practice areas?

Many business leaders today are navigating a difficult balancing act: investing aggressively in new technologies while ensuring those investments translate into real enterprise value.

Three challenges consistently surface in my work.

The first is AI and digital transformation execution. Organizations are experimenting with AI, but many struggle to operationalize it for measurable growth or operational efficiency.

The second is ecosystem monetization. Innovation increasingly happens through platforms and partnerships, yet many companies have not fully developed the strategies required to activate partner ecosystems as engines of growth.

The third is aligning technology investment with revenue outcomes. Digital transformation programs often focus on tools and infrastructure without clearly tying them to market expansion, customer value, or competitive differentiation.

My work helps leadership teams connect these dots, aligning technology strategy, partner ecosystems, and operating models to unlock scalable enterprise growth.

Q4: Are there key areas that you see most pertinent right now?

Three areas stand out as particularly critical for technology and business leaders today.

The first is AI strategy and governance. As AI moves into core business operations, organizations must balance speed of innovation with responsible deployment, security, and regulatory oversight.

The second is platform and ecosystem strategy. The most successful companies today are not building in isolation; they are architecting ecosystems. Leaders who understand how to activate partners, developers, and platforms will scale innovation far faster than those operating alone.

The third is enterprise growth architecture: ensuring that technology investments are tied to clear revenue models, market expansion opportunities, and long-term strategic positioning.

Organizations that master these three disciplines will be the ones that convert technological disruption into sustained competitive advantage.

Q5: What are the outcomes you’re targeting to drive?

My work centers on one core objective: helping organizations turn technology disruption into enterprise growth.

First, I work with leadership teams to build clear growth architectures, linking AI strategy, platform investments, and ecosystem partnerships directly to revenue expansion and market opportunity.

Second, I help organizations activate partner ecosystems as growth multipliers. When companies synchronize the right partners, platforms, and developer communities, they dramatically accelerate innovation and customer reach.

Third, I support leaders in creating operating models that scale transformation, ensuring that new technologies move beyond pilot programs into enterprise-wide impact.

Ultimately, the goal is simple: help companies move from experimentation to execution, ensuring investments in AI and digital platforms translate into measurable growth, stronger market positioning, and long-term competitive advantage.

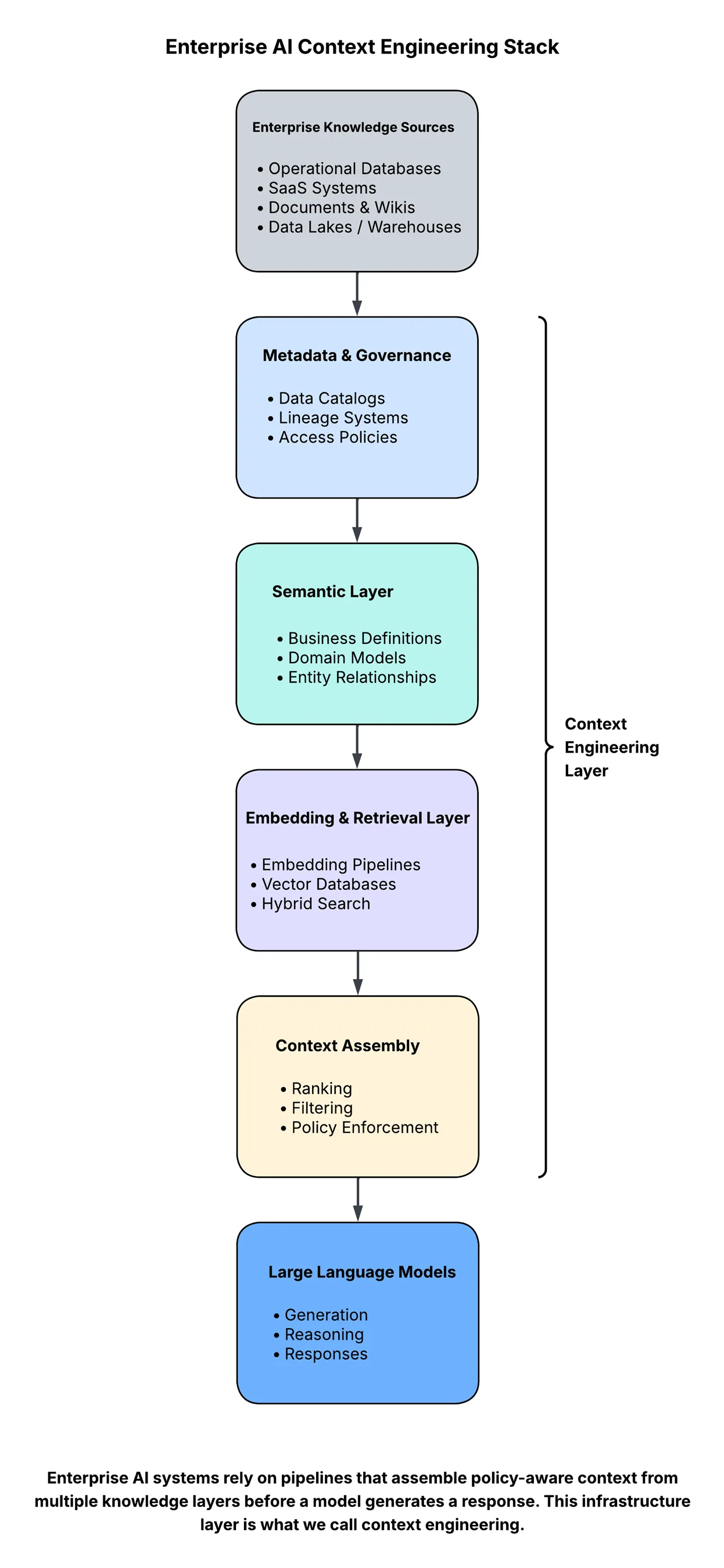

Beyond the Prompt: The Rise of Context Engineering

Last year, a company deployed an internal AI assistant to help employees navigate thousands of internal documents. The system worked beautifully in testing. Then a product manager asked a simple question about the company's policy for handling customer data in Europe.

The assistant confidently returned an answer citing an internal policy document.

There was only one problem. The document had been replaced six months earlier. The AI wasn’t hallucinating. It was reasoning correctly. But on the wrong context.

This is becoming one of the most common failure modes in enterprise AI. Not because models are weak, but because the information surrounding them is poorly engineered. In other words, the biggest problem in enterprise AI is no longer the model. It’s the context.

And solving that problem is giving rise to a new discipline that many organizations didn’t plan for: context engineering.

The Real Bottleneck in Enterprise AI

For the past two years, the AI conversation has revolved around model capability: Which model is larger? Which model is faster? Which model performs best on benchmarks?

But once organizations begin deploying AI inside real systems, they quickly discover something uncomfortable: Most enterprise AI failures are not model failures. They are context failures.

Models are powerful reasoning engines, but they are fundamentally blind to the organizations they operate within. They do not understand internal documentation, product terminology, operational workflows, or governance policies unless that information is explicitly provided to them. And that information rarely exists in one place. It is scattered across data lakes, SaaS platforms, documentation systems, and operational databases. Retrieving the right knowledge at the right moment is not a trivial problem. It is an infrastructure problem.

From Prompt Engineering to Context Engineering

The first wave of generative AI adoption emphasized prompt engineering. Teams experimented with prompt templates, formatting tricks, and system instructions to improve model outputs. While prompts matter, they are ultimately just instructions.

The quality of an AI system’s response depends far more on what the model sees than on how the question is phrased. Enterprise AI systems increasingly rely on pipelines that retrieve and assemble relevant knowledge before a model generates its response.

This architecture typically involves:

- retrieving documents or records from enterprise systems

- ranking and filtering relevant information

- enforcing access policies

- injecting the resulting context into the model prompt

The shift from crafting prompts to assembling context is the foundation of context engineering.
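The four steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design. Simple word overlap stands in for vector search, and the `Document` type, its group-based ACL, and every function name are hypothetical. The sketch applies the permission filter before anything is scored, following the principle that context pipelines must enforce access controls before retrieving sensitive information.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset  # hypothetical ACL: groups permitted to read this document


def tokenize(text: str) -> set[str]:
    """Lowercase word tokens; a crude stand-in for an embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def enforce_access(corpus: list[Document], user_groups: set[str]) -> list[Document]:
    """Drop documents the caller may not read, before anything is scored."""
    return [doc for doc in corpus if doc.allowed_groups & user_groups]


def retrieve(query: str, corpus: list[Document]) -> list[tuple[float, Document]]:
    """Score documents by the fraction of query terms they contain (stand-in for vector search)."""
    q_terms = tokenize(query)
    scored = []
    for doc in corpus:
        overlap = len(q_terms & tokenize(doc.text))
        if overlap:
            scored.append((overlap / len(q_terms), doc))
    return scored


def rank_and_filter(scored: list[tuple[float, Document]], top_k: int = 3,
                    min_score: float = 0.2) -> list[Document]:
    """Keep the highest-scoring documents above a relevance threshold."""
    kept = sorted((pair for pair in scored if pair[0] >= min_score),
                  key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in kept[:top_k]]


def inject(question: str, docs: list[Document]) -> str:
    """Assemble the final prompt, tagging each passage with its source ID."""
    context = "\n".join(f"[{doc.doc_id}] {doc.text}" for doc in docs)
    return (
        "Answer using only the sources below and cite their IDs.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


def assemble_context(question: str, corpus: list[Document], user_groups: set[str]) -> str:
    permitted = enforce_access(corpus, user_groups)
    ranked = rank_and_filter(retrieve(question, permitted))
    return inject(question, ranked)


# Hypothetical sample corpus.
corpus = [
    Document("policy-eu-v2", "EU customer data handling policy, version 2", frozenset({"all-staff"})),
    Document("hr-salaries", "Salary bands for EU staff", frozenset({"hr"})),
    Document("cafeteria", "This week's cafeteria menu", frozenset({"all-staff"})),
]
prompt = assemble_context("What is the policy for customer data in Europe?", corpus, {"all-staff"})
print(prompt)
```

Tagging each passage with its source ID inside the prompt is what later lets an answer be traced back to a specific document.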

Why Context Is Harder Than It Looks

Most prototypes hide the true difficulty of context management. A small document corpus is embedded into a vector database. Retrieval works well. The AI assistant appears intelligent. But enterprise deployments introduce realities that prototypes ignore, such as:

Freshness: Knowledge changes constantly. If embeddings are not refreshed regularly, AI systems begin reasoning on outdated information.

Permissions: Enterprise data is rarely universally accessible. Context pipelines must enforce access controls before retrieving sensitive information.

Semantic inconsistency: Different systems often describe the same concepts in different ways. Retrieval pipelines must reconcile these differences.

Traceability: Organizations must know where an AI-generated answer originated, especially in regulated industries.
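One way to address freshness is to store a content hash next to each indexed document and, on a schedule, compare the index against the systems of record. The sketch below works under that assumption. The hashing approach, the `plan_refresh` helper, and the sample policy texts are all hypothetical. Pay attention to the `delete-retired` case. If a replaced document's vectors are never removed, the assistant keeps citing a policy that no longer exists. Returning an explicit action for each document also produces a small audit trail of every change made to the index.

```python
import hashlib


def content_hash(text: str) -> str:
    """Fingerprint of the exact text that was (or would be) embedded."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_refresh(sources: dict[str, str], index: dict[str, str]) -> dict[str, str]:
    """Diff live sources against the vector index.

    sources: doc_id -> current text in the system of record
    index:   doc_id -> content_hash of the text that was embedded
    Returns doc_id -> action, so every change to the index is explainable.
    """
    actions = {}
    for doc_id, text in sources.items():
        if doc_id not in index:
            actions[doc_id] = "embed-new"
        elif index[doc_id] != content_hash(text):
            actions[doc_id] = "re-embed-changed"
    for doc_id in index:
        if doc_id not in sources:
            actions[doc_id] = "delete-retired"  # stale vectors would otherwise keep answering
    return actions


# Hypothetical systems of record and index state.
sources = {
    "eu-data-policy-v3": "Customer data for EU residents stays in EU regions.",
    "travel-policy": "Book economy for flights under six hours.",
    "security-faq": "Rotate credentials every 90 days.",
}
index = {
    "eu-data-policy-v2": content_hash("Customer data for EU residents may be replicated globally."),
    "travel-policy": content_hash("Book economy for flights under four hours."),
    "security-faq": content_hash("Rotate credentials every 90 days."),
}
actions = plan_refresh(sources, index)
print(actions)
# {'eu-data-policy-v3': 'embed-new', 'travel-policy': 're-embed-changed', 'eu-data-policy-v2': 'delete-retired'}
```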

These challenges are not failures of the model. They are failures of context infrastructure. And they are becoming the defining challenge of enterprise AI.

The Architecture of Context Pipelines

To solve these problems, enterprises are quietly building a new layer of infrastructure designed to assemble context for AI systems.

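At a high level, the architecture stacks the stages described above into layers. Enterprise sources feed a retrieval layer. Ranking and filtering then narrow the candidates, and a policy layer removes anything the requester is not entitled to see. Finally, an assembly layer injects the surviving context into the model prompt.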

Every layer introduces challenges that look suspiciously familiar to data engineering teams:

- schema evolution

- metadata management

- governance enforcement

- observability and monitoring

- pipeline reliability

In other words, the infrastructure supporting AI applications increasingly resembles the infrastructure powering modern data platforms.
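In a context pipeline, observability and reliability work much as they do in any data pipeline. The sketch below assumes stages that take a list in and return a list out. For each stage it records item counts and latency. That way, a sudden jump in dropped items (for example, after an upstream schema change) can surface before users notice degraded answers. `PipelineRun`, `StageRecord`, and the two example stages are hypothetical names used only for illustration.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class StageRecord:
    stage: str
    items_in: int
    items_out: int
    seconds: float


@dataclass
class PipelineRun:
    """Collects one lineage/metrics record per stage of a single pipeline run."""
    records: list[StageRecord] = field(default_factory=list)

    def apply(self, name: str, fn: Callable[[list], list], items: list) -> list:
        """Run one stage and record its input/output counts and wall-clock time."""
        start = time.perf_counter()
        out = fn(items)
        self.records.append(StageRecord(name, len(items), len(out), time.perf_counter() - start))
        return out

    def dropped(self) -> dict[str, int]:
        """Items removed per stage; a sudden jump often points to an upstream change."""
        return {r.stage: r.items_in - r.items_out for r in self.records}


def drop_empty(chunks: list[str]) -> list[str]:
    return [c for c in chunks if c.strip()]


def dedupe(chunks: list[str]) -> list[str]:
    return list(dict.fromkeys(chunks))  # keeps the first occurrence, preserves order


run = PipelineRun()
raw = ["EU policy v3", "", "Travel policy", "EU policy v3", "   "]
chunks = run.apply("drop-empty", drop_empty, raw)
chunks = run.apply("dedupe", dedupe, chunks)
print(chunks)         # ['EU policy v3', 'Travel policy']
print(run.dropped())  # {'drop-empty': 2, 'dedupe': 1}
```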

Why Your Data Team Is Now Your AI Team

One of the more surprising consequences of this shift is how it changes organizational roles. Many companies began their AI journeys assuming the key hires would be machine learning engineers or prompt engineers.

But as deployments mature, organizations are discovering something else. The teams solving the hardest problems in AI are often data platform teams.

Why? Because the quality of an AI system’s answers depends heavily on how well enterprise knowledge is structured, governed, and delivered at runtime.

This requires capabilities such as:

- building reliable data pipelines

- managing metadata and lineage

- maintaining embedding refresh pipelines

- enforcing data governance policies

- monitoring knowledge freshness and retrieval quality
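A common way to monitor retrieval quality is to score the retriever against a small, hand-labeled set of queries whose correct source documents are already known. The sketch below assumes such a golden set exists and uses recall@k, which is one common metric among several. The `search` callable, the canned results, and the 0.8 alert threshold are all hypothetical.

```python
from statistics import mean
from typing import Callable

ALERT_THRESHOLD = 0.8  # hypothetical service-level target for mean recall@k


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the known-relevant documents that appear in the top k results."""
    if not relevant:
        raise ValueError("every evaluation query needs at least one relevant document")
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def evaluate(search: Callable[[str], list[str]], golden_set: dict[str, set[str]], k: int = 5) -> float:
    """Mean recall@k of a search function over a hand-labeled golden set."""
    return mean(recall_at_k(search(query), relevant, k) for query, relevant in golden_set.items())


# Canned results standing in for a real retriever.
canned_results = {
    "eu customer data policy": ["eu-data-policy-v3", "travel-policy"],
    "how often to rotate credentials": ["travel-policy", "eu-data-policy-v3"],
}
golden_set = {
    "eu customer data policy": {"eu-data-policy-v3"},
    "how often to rotate credentials": {"security-faq"},
}

score = evaluate(canned_results.__getitem__, golden_set, k=2)
print(score)  # 0.5
if score < ALERT_THRESHOLD:
    print(f"Retrieval quality alert: mean recall@2 = {score:.2f}")
```

Running this on a schedule, and logging the result next to the index-refresh job, makes a silent drop in retrieval quality visible.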

These are not new problems. They are data engineering problems that are now appearing inside AI systems, which leads to a somewhat uncomfortable observation: Many enterprises are spending millions optimizing model selection while investing almost nothing in the infrastructure that determines what the model actually sees.

The Emergence of Context Platforms

Most enterprise data platforms were designed for human consumption. Dashboards, data catalogs, and analytics systems assume a human analyst interpreting the results.

AI systems operate differently. They require knowledge that is structured, policy-aware, and retrievable in milliseconds. This shift is pushing organizations toward what might be described as context platforms, infrastructure designed specifically to deliver curated knowledge to AI systems.

These platforms combine elements of:

- data lakes and lakehouses

- metadata and lineage systems

- semantic layers

- vector retrieval infrastructure

- governance and policy engines

Together, these components form the operational backbone of reliable AI systems.
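The semantic-layer component can start as something quite modest: a shared vocabulary that maps each system's terms onto canonical ones before text is indexed or queried. This tackles the semantic-inconsistency problem directly. The mapping below is a hypothetical example. A production semantic layer would usually carry much more, such as entity and metric definitions.

```python
import re

# Hypothetical shared vocabulary: source-system terms -> canonical term (keys lowercase).
CANONICAL_TERMS = {
    "client": "customer",
    "account holder": "customer",
    "cust": "customer",
    "european union": "eu",
    "europe": "eu",
}


def build_normalizer(vocabulary: dict[str, str]):
    """Compile one regex that rewrites any known term to its canonical form."""
    # Longest phrases first, so "european union" wins over "europe" at the same position.
    alternatives = sorted(vocabulary, key=len, reverse=True)
    pattern = re.compile(r"\b(" + "|".join(map(re.escape, alternatives)) + r")\b")

    def normalize(text: str) -> str:
        # Whole-word matches only; each match is replaced exactly once (no chained rewrites).
        return pattern.sub(lambda m: vocabulary[m.group(1)], text.lower())

    return normalize


normalize = build_normalizer(CANONICAL_TERMS)

# Two systems describing the same concept end up with the same normalized text.
crm_text = normalize("Client data stored in the EU")
billing_text = normalize("Account holder data stored in the European Union")
print(crm_text)      # customer data stored in the eu
print(billing_text)  # customer data stored in the eu
```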

The Next Competitive Advantage in AI

Most organizations will eventually have access to similar models. The real differentiator will not be model access. It will be context quality.

Organizations that engineer context effectively will build AI systems that are:

- more accurate

- more explainable

- aligned with enterprise policies

- capable of evolving with the business

Organizations that neglect this layer will continue to experience AI systems that appear intelligent in demos but behave unpredictably in production.

The Infrastructure Beneath Intelligence

Artificial intelligence often appears to operate at the level of reasoning and language. But beneath every reliable AI system lies something less glamorous: pipelines, metadata systems, governance frameworks, and retrieval infrastructure.

In other words, intelligence at scale still depends on infrastructure. The companies that understand this shift earliest will build AI systems that work. Everyone else will keep searching for better models to solve a problem that was never about the model in the first place.

Where AI Inference Hits the Memory Wall

ZeroPoint’s Nilesh Shah explores why data movement, compression, and memory bandwidth now shape AI inference performance, and where heterogeneous systems and quantum may fit next.

TechArena Launches Advisory Practice Led by Former AWS, Intel, & Microsoft C-Suite Executives

PORTLAND, OR & NEW YORK, NY – March 24, 2026 – TechArena announced the formation of a new Advisory practice today, adding former C-suite and executive operators from Intel, AWS, Microsoft, Micron, Flex, and Altera to TechArena's leadership. Advisors bring deep expertise across cloud, AI, data center, and edge computing. They have a track record of defining foundational technology, scaling routes to market, and driving business growth through leadership positions at silicon, infrastructure, and cloud service companies. Collectively, they have built $10+ billion businesses, navigated complex value chains, and ushered in major technology inflections including cloud computing, 5G networks, and AI data center services.

This announcement, made during the Xcelerated Compute Show in New York, comes as a response to the existential challenges businesses face in navigating today’s accelerated technology landscape as operators race to deploy trillions of dollars of capital equipment to fuel AI’s advancement. Organizations are moving faster through more uncertainty than at any point in history. Traditional consulting models weren’t designed for this. Outside-in frameworks with no clear integration path simply don’t hold up under that kind of pressure. The Advisory practice brings operator experience from the world’s largest technology companies to help solve the most pressing challenges facing industry leaders today.