Empowering Innovation with Solidigm

Explore the cutting edge of computing, from the data center to the edge, including solutions that unlock the AI pipeline, all backed by Solidigm's leading SSD portfolio.

PEAK:AIO Optimizes AI Infrastructure to Scale Enterprise Adoption

Insights on AI and Data Management from Intercontinental Exchange

Immersion, Not Hype: Midas on Liquid Cooling at OCP

Midas Immersion Cooling CEO Scott Sickmiller joins a Data Insights episode at OCP 2025 to demystify single-phase immersion, natural vs. forced convection, and what it takes to do liquid cooling at AI scale.

Solidigm Breaks Down Siloed Thinking About AI Infrastructure

No data center component is an island. While the industry conversation around AI infrastructure has focused heavily on graphics processing units (GPUs), a more fundamental truth is emerging: peak performance requires optimizing every component in the stack to work together. In fact, attempting to optimize any single element without considering the broader system inevitably limits what’s possible.

I recently had the opportunity to explore this interconnected reality with Solidigm’s Ace Stryker, product marketing director of AI infrastructure, and Jeniece Wnorowski, director of industry expert programs, to understand how storage requirements are evolving. During our conversation, it became clear that the most significant breakthroughs in AI infrastructure are coming from rethinking how data flows through the entire AI pipeline.

AI’s Expanding Diversity Shapes Storage Requirements

Ace started our conversation by emphasizing that we are still only a few years into AI becoming a prominent cultural force, and that its diverse potential has yet to be fully uncovered. As AI-enabled workloads diversify with new models, tools, and solution stacks, the requirements for hardware, including storage, are diversifying as well. Truly understanding these requirements, however, means looking beyond storage specifications alone. “From a storage perspective, we’re really concerned about how the storage in an AI cluster interacts with the memory to deliver optimized outcomes,” Ace said. “Storage does not do the job on its own.”

The challenge extends beyond simple read-write speeds. Modern AI systems require careful orchestration between storage layers, host dynamic random-access memory (DRAM), and high-bandwidth memory on GPUs. Understanding how data moves between these memory tiers has become essential for IT architects planning next-generation infrastructure. As Ace noted, attempting to optimize storage in isolation limits the ability to understand what’s actually happening in the AI pipeline.

Challenging Conventional Wisdom

This focus on the interaction between memory and storage has led to research with surprising outcomes. Solidigm recently worked with Metrum AI to examine what happens when significant amounts of AI data are strategically moved from memory onto solid-state drives (SSDs) in ways not typically considered before.

The companies fed video of a busy traffic intersection into an analysis pipeline that generated embeddings, built a retrieval-augmented generation (RAG) database, and then produced a report on what happened in the video, with suggestions for safety improvements. By offloading RAG data and inactive model weights from memory to SSDs, they achieved a 57% reduction in DRAM usage for a 100-million-vector dataset. More surprisingly, queries per second increased by 50% compared with keeping the data in memory, thanks to more efficient indexing algorithms in the SSD offload approach.

The implications extend beyond cost savings. The research demonstrated running the 70-billion-parameter Llama 3.3 model on an NVIDIA L40S GPU, a combination that would normally exceed the GPU’s memory capacity. For organizations looking to repurpose legacy hardware or deploy AI in power-limited edge environments, this opens new possibilities for hardware previously considered inadequate for modern AI-enhanced workloads.
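A rough sizing sketch helps show why these results matter. It assumes 16-bit (FP16/BF16) model weights and 768-dimensional float32 embeddings; the study's actual embedding dimension and precision are not stated here, so treat those values as hypothetical. It also ignores index structures, KV cache, and activations, so real footprints are larger. The one hard number used is the L40S's 48 GB of GPU memory.

```python
# Back-of-the-envelope memory footprints (decimal GB). Parameters are hypothetical.

BYTES_PER_GB = 10**9
L40S_MEMORY_GB = 48  # NVIDIA L40S ships with 48 GB of GPU memory


def vector_store_gb(num_vectors: int, dims: int, bytes_per_value: int = 4) -> float:
    """Raw size of an embedding table, ignoring any index overhead."""
    return num_vectors * dims * bytes_per_value / BYTES_PER_GB


def model_weights_gb(num_params: float, bytes_per_param: float = 2) -> float:
    """Size of the weights alone (2 bytes/param = 16-bit); excludes KV cache and activations."""
    return num_params * bytes_per_param / BYTES_PER_GB


# Assumption: 768-dim float32 embeddings for the 100-million-vector dataset.
vectors = vector_store_gb(100_000_000, 768)
# Assumption: ~70e9 parameters stored at 16-bit precision.
weights = model_weights_gb(70e9)

print(f"100M x 768-dim float32 vectors: {vectors:.1f} GB of raw data")
print(f"70B-parameter weights at 16-bit: {weights:.1f} GB vs {L40S_MEMORY_GB} GB on an L40S")
print(f"Weights that cannot fit in GPU memory: {weights - L40S_MEMORY_GB:.1f} GB")
```

Under these assumptions, neither the vectors nor the full weights fit comfortably in host DRAM budgets or GPU memory, which is the gap SSD offload targets.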

The Density Revolution

While performance optimization captures headlines, capacity evolution tells an equally compelling story. Solidigm’s 122-terabyte (TB) drives, roughly the size of a deck of cards, represent just one milestone in a rapid progression that has seen capacities jump from 30TB to 60TB to 122TB in a single year. The company has announced plans for 256TB drives, and as Ace said, “It’s not too long before you’re going to see Solidigm and others aiming at a petabyte in a single device, which was unfathomable even five years ago.”

These density improvements deliver practical benefits across the infrastructure stack. Higher capacity per drive means fewer physical devices required, reducing rack space requirements, power consumption, and cooling costs while maintaining the throughput AI-enabled workloads demand.
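To make the "fewer physical devices" point concrete, here is a small sketch that counts how many drives each capacity point needs to reach a hypothetical 1 PB of raw storage. It ignores RAID or erasure-coding overhead and treats 256TB as an announced rather than shipping capacity.

```python
import math

# Capacities mentioned in the text, in decimal TB; 256 TB is announced, not shipping.
CAPACITIES_TB = [30, 60, 122, 256]
TARGET_TB = 1000  # hypothetical target: 1 PB of raw capacity


def drives_needed(target_tb: float, drive_tb: float) -> int:
    """Minimum whole drives to reach a raw capacity target (no redundancy overhead)."""
    return math.ceil(target_tb / drive_tb)


for cap in CAPACITIES_TB:
    print(f"{cap:>4} TB drives: {drives_needed(TARGET_TB, cap)} drives per PB")
```

Each drive removed from the bill of materials also removes its slot, its share of server and enclosure power, and its cooling load, which is where the rack-level savings come from.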

The Partnership Imperative

Throughout the conversation, Ace repeatedly returned to collaboration as Solidigm’s core operating principle. The company’s logo, an interlocking “S” design, symbolizes partnerships fitting together to solve complex problems. That principle is reflected in the company’s approach across the ecosystem, from working with NVIDIA on thermal solutions to collaborating with software orchestration leaders and cloud service providers.

This partnership focus acknowledges a fundamental reality: storage optimization happens within a broader system context involving networking, software orchestration, and compute resources. Solutions that work in isolation rarely deliver optimal outcomes at scale.

The TechArena Take

As AI-enhanced workloads continue their exponential growth, the organizations that understand storage as a strategic enabler rather than a commodity component will gain sustainable advantages. Solidigm’s research demonstrates that intelligent storage strategies can unlock performance improvements while simultaneously reducing costs and expanding deployment possibilities. For IT architects planning next-generation AI infrastructure, the message is clear: look beyond the GPU specifications and examine how data moves through your entire system. The gains, not only in efficiency but in overall capability, may surprise you.

Learn more about Solidigm’s AI-focused storage innovations at solidigm.com or connect with Ace Stryker on LinkedIn.

Data Insights: AI in Healthcare with Dr. Rohith Vangalla

In this episode of Data Insights, host Allyson Klein and co-host Jeniece Wnorowski sit down with Dr. Rohith Vangalla of Optum to discuss the future of AI in healthcare.

Castrol on Liquid Cooling: Additives, Filters, Failure Modes

From hyperscale direct-to-chip to micron-level realities: Darren Burgess (Castrol) explains dielectric fluids, additive packs, particle risks, and how OCP standards keep large deployments on track.

PEAK:AIO on Smarter Storage for AI—Data Insights @ OCP

From OCP in San Jose, PEAK:AIO’s Roger Cummings explains how workload-aware file systems, richer memory tiers, and capturing intelligence at the edge reduce cost and complexity.

How Equinix & Solidigm See Evolving Workloads Reshaping IT

Once defined by monolithic architectures and predictable workloads, today’s enterprise data center strategies are shaped by the explosive rise of AI, the realities of hybrid multicloud, and the mounting pressure of regulatory and efficiency demands. I recently spoke with Glenn Dekhayser, global principal technologist at Equinix, and Scott Shadley, leadership marketing director at Solidigm, who shared their perspectives on how enterprises are adapting, and what it will take to succeed in the years ahead.

The conversation began with an important insight on data center infrastructure from Glenn, who noted that AI has “10x’d” hybrid multicloud architectures. As he explained, organizations are grappling with where to deploy AI workloads—cloud, GPU-as-a-service, on-premises, or edge. As those workloads move to production, they’re driving a fundamental shift toward dense power solutions and liquid cooling as enterprises seek to control costs and performance.

But the real transformation is in how organizations think about data itself. A “data-centric” strategy, while complex in execution, comes down to a simple idea. “Whatever you’re doing, creating value starts with your data,” said Glenn. For enterprises trying to extract new value streams from data, that means workloads now come to the data, rather than the reverse. Enterprises are creating entire data marts to reflect the fact that data no longer has a one-to-one relationship with applications; instead, multiple applications access shared datasets.

This centralized data approach addresses the reality that while workloads are relatively easy to deploy and orchestrate, datasets carry constraints: they’re slow to move, require governance, and face compliance and sovereignty requirements. In response to these challenges, Glenn said he counsels customers to create an “authoritative core,” one copy of active datasets on equipment you control in locations you can access.

This core, of course, must be balanced with the ability to project data where it needs to be for optimal governance, compliance, cost, and performance. That could be in public clouds, at the edge, or in the core data center. Many enterprises, however, “are starting to realize they’re not necessarily set up to take advantage of all those places,” Glenn said. “And if their data architecture...[isn’t] ready to accommodate this mobility, they’re going to find themselves at a competitive disadvantage.”

As organizations work to upgrade their data architecture to avoid such a disadvantage, data center infrastructure technology advancements are keeping pace. Scott explained how storage technology in particular is evolving to meet the needs of new data center strategies. Large-capacity drives help enterprises keep data close and store more while using less power, while performance drives enable fast work with the data at hand, so storage doesn’t become a bottleneck. As he summarized, “A modern architecture of flash tiering, flash plus hard drives, is becoming even more and more valuable.”
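One way to picture "flash plus hard drives" tiering is a placement policy that routes data by how often it is touched. The sketch below is a minimal illustration with hypothetical thresholds and dataset names; it is not an Equinix or Solidigm tool, and real policies also weigh latency targets, capacity, and cost.

```python
from dataclasses import dataclass

# Hypothetical thresholds (reads per day) separating hot, warm, and cold data.
HOT_READS = 10_000
WARM_READS = 100


@dataclass
class Dataset:
    name: str
    reads_per_day: int  # how often the data is accessed


def place(ds: Dataset) -> str:
    """Assign a dataset to a storage tier based only on access frequency."""
    if ds.reads_per_day >= HOT_READS:
        return "performance SSD"
    if ds.reads_per_day >= WARM_READS:
        return "high-capacity SSD"
    return "HDD"


datasets = [
    Dataset("training-shards", 50_000),
    Dataset("feature-store", 2_000),
    Dataset("audit-archive", 3),
]
for ds in datasets:
    print(f"{ds.name}: {place(ds)}")
```

Keeping the policy as a single function makes it easy to revisit thresholds as workloads shift, which fits the "architected for change" theme below.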

When asked about the one question enterprise data center managers should consider that they weren’t thinking about five years ago, both experts converged on a theme: architectural flexibility for unknown future requirements. Scott emphasized not just focusing on capital expenses, but considering operational expenses and building systems with five-year operational efficiency in mind. Glenn emphasized the unknown, saying he often asks organizations how they are “architected for change” in a world where the next transformational service provider could emerge from completely unexpected origins.

The conversation culminated in an analogy: if the best time to plant a tree was 50 years ago, the second-best time is now. For enterprises sitting on large datasets in public cloud environments, the cost and complexity of data mobility only increase with time. For organizations that aren’t yet “architected for change,” the time to remedy that is now, not in one to two years when their data lake will be even larger, and therefore more difficult and more expensive to move.

The TechArena Take

The enterprise data center conversation has evolved from optimizing known workloads to architecting for unknowable futures. Equinix and Solidigm’s insights reveal that success increasingly depends on maintaining data sovereignty while preserving access to innovation. Organizations that establish authoritative data cores with agile connectivity to diverse service ecosystems today will be positioned to capitalize on tomorrow’s transformational opportunities, whatever form they may take.

OCP, Power Grids & Open Racks — with Dr. Andrew Chien

From OCP Summit San Jose, Allyson Klein and co-host Jeniece Wnorowski interview Dr. Andrew Chien (UChicago & Argonne) on grid interconnects, rack-scale standards, and how openness speeds innovation.

Kelley Mullick of Avayla on Rack-Scale Design & Hybrid Cooling

From OCP Summit 2025, Kelley Mullick joins Allyson Klein and co-host Jeniece Wnorowski for a Data Insights episode on rack-scale design, hybrid cooling (incl. immersion heat recapture), and open standards.

MLPerf’s Breakout Year: Benchmarking Expands with AI

As AI spreads into every corner of the technology ecosystem, the industry is under mounting pressure to measure real performance consistently and transparently. Whether it's hyperscale training runs, edge deployments, or domain-specific AI applications like automotive, the need for shared benchmarks is coming into sharper focus.

At the AI Infra Summit in Santa Clara, Jeniece Wnorowski and I sat down with David Kanter, founder of MLCommons and head of MLPerf, for a Data Insights conversation that captured a pivotal moment for the benchmarking community. 2025 has been, as Kanter described it, “the summer of MLPerf.”

Benchmarking Matures as AI Expands

“This has actually been a really breakout year for us,” Kanter said. “...In the last couple months, I was jotting an e-mail down to the team and I was describing it as the summer of MLPerf.”

The momentum is tangible. He went on to describe new initiatives that reflect how AI adoption is spreading into new domains.

“We talked about MLPerf Storage. We also have MLPerf Automotive, which came out very recently, [and] MLPerf Client. And so, part of this is, as we’re seeing AI being adopted in more and more places, we have to come in and help fill those gaps. Storage was sort of us working with some storage folks to spot some coming challenges and...automotive was sort of in our response to the automotive folks saying, ‘You know, OK, we are going to be using more AI, we’re making more intelligent vehicles, we need to get our hands around this.’”

These expansions reflect the growing role of benchmarking beyond traditional training and inference in hyperscale environments. Storage and automotive, in particular, highlight the diversification of AI workloads across industries.

Still in “Benchmarking Grade School”

Kanter offered a candid perspective on MLPerf’s age compared to other well-established benchmarks.

“Some of the most established benchmarking organizations that we look up to are 30 years old and they’ve been honing their craft,” he said. “We’re seven years old...We’re still in grade school. The babies of benchmarks.”

That self-awareness underscores the pace at which MLPerf is evolving. Unlike older benchmarks that matured slowly over decades, MLPerf operates in a landscape where new accelerators, models, and deployment modalities emerge every quarter.

“Just keeping a pace of things is both exciting, a little stressful, and a bit of a challenge,” he said.

Building Community Through Standards

Kanter emphasized that MLCommons isn’t just about benchmarking—it’s a volunteer-driven community.

“I always say to anyone...if any of these resonate with you, please show up. We are a community of volunteers...It came together with a bunch of folks who just saw a problem and said we all want to solve it together.”

Beyond MLPerf, he stressed that MLCommons works on many other projects, spanning data, AI risk and reliability, research, and foundational datasets and schemas.

“How do we standardize making data accessible to AI?” he said. “How do we make AI more reliable and responsive to what humans want?”

That openness has been key to MLPerf’s rise as the de facto performance yardstick across the AI ecosystem. Submissions from vendors large and small now shape how the industry evaluates real-world performance.

Why Benchmarks Matter Now

As enterprises wrestle with where to deploy AI—in centralized facilities, at the edge, or in hybrid environments—benchmarks like MLPerf give them objective tools to evaluate trade-offs. They’re also increasingly relevant for sustainability strategies, allowing organizations to understand performance per watt or per dollar across platforms.

In an era of rapid infrastructure buildout and diversification, shared benchmarks provide a common language for vendors, operators, and developers alike.

The TechArena Take

Benchmarking is becoming a strategic force in AI infrastructure. What started as a niche performance measurement initiative has grown into a foundational layer that shapes how chips are designed, systems are built, and deployments are optimized.

David Kanter’s reflections highlight both the rapid maturation of MLPerf and the youthful dynamism of a community racing to keep up with AI’s evolution. As AI spreads into storage systems, automotive environments, and edge devices, the role of shared, open benchmarks will only deepen.

The bottom line: In the AI era, you can’t scale what you can’t measure. And MLCommons is ensuring the industry has the tools—and the community—to do just that.

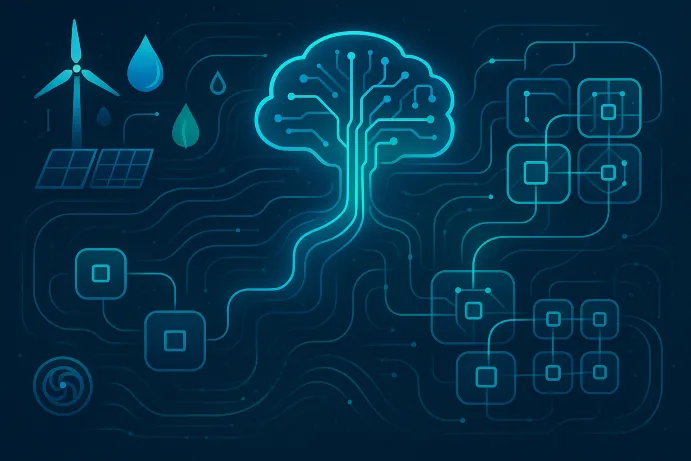

Inside Iceotope’s Mission to Cool AI Sustainably

As AI workloads push data centers to their physical and environmental limits, the industry is waking up to a hard truth: today’s cooling methods can’t sustain tomorrow’s compute demands. The rise of multi-megawatt racks, unprecedented water consumption, and surging energy use are forcing operators to rethink infrastructure from the ground up.

At the 2025 AI Infra Summit in Santa Clara, Jeniece Wnorowski and I sat down with Jonathan Ballon, CEO of Iceotope, for a Data Insights interview on why it’s imperative to consider sustainability in AI infrastructure buildout, and how Iceotope is redefining cooling technology both at the edge and in the data center.

The Efficiency Imperative

AI’s rapid growth has triggered exponential increases in power and water consumption. Traditional air cooling is struggling to keep up with the thermal profiles of modern AI accelerators, while water-intensive cooling towers and evaporative systems are under scrutiny for their environmental impact.

“When you look at the amount of resources that are being consumed right now—whether it’s power or water—it’s unsustainable,” Ballon said.

This urgency was one of the key factors that drew him to lead Iceotope. The company’s data center liquid cooling technologies use 96% less water than conventional systems and reduce cooling power requirements by up to 80%. Its edge AI solutions can be deployed in almost any environment without requiring facility water or dry chillers.
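To see what an "up to 80%" cut in cooling power can mean at facility level, the sketch below computes power usage effectiveness (PUE, total facility power divided by IT power) before and after. The 1 MW IT load, 400 kW cooling load, and 100 kW of other overhead are hypothetical, and the 80% figure is treated as a best case.

```python
# Hypothetical facility: 1 MW of IT load, 400 kW of cooling, 100 kW of other overhead.
IT_KW, COOLING_KW, OTHER_KW = 1000.0, 400.0, 100.0
REDUCTION = 0.80  # the "up to 80%" cooling-power reduction quoted above, as a best case


def pue(it_kw: float, cooling_kw: float, other_overhead_kw: float) -> float:
    """Power usage effectiveness: total facility power divided by IT power."""
    return (it_kw + cooling_kw + other_overhead_kw) / it_kw


baseline = pue(IT_KW, COOLING_KW, OTHER_KW)
improved = pue(IT_KW, COOLING_KW * (1 - REDUCTION), OTHER_KW)

print(f"PUE {baseline:.2f} -> {improved:.2f}")
print(f"Facility power saved: {COOLING_KW * REDUCTION:.0f} kW")
```

The same IT work runs on several hundred fewer kilowatts in this example, which is why cooling efficiency shows up directly in operating cost.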

Those kinds of efficiency gains aren’t just good for the planet—they’re essential for the economics of deploying AI workloads. As data center campuses scale into the gigawatt range and high-throughput edge applications that require low latency continue to grow, sustainable cooling is becoming both a moral and a financial imperative.

Rethinking Infrastructure Without Forklifts

For enterprises, the efficiency conversation is tied closely to infrastructure planning. Many organizations are not starting from scratch—they’re retrofitting existing facilities or deploying AI workloads incrementally.

“I think enterprises need to think carefully about how they’re going to invest in new infrastructure,” Ballon explained. “What we’re seeing is enterprises that are looking for retrofit capability that doesn’t require forklift upgrades so that they can adopt liquid cooling gradually rather than having to do...forklift changes either in their existing infrastructure or in their new builds. Looking for technology that allows you to do that is super important. Otherwise, they could be making major infrastructure changes that could be outdated in three to five years, which could be a major risk.”

That message resonates with enterprise IT leaders who must balance innovation with capital discipline. Liquid cooling solutions that integrate into existing footprints without major overhauls offer a pragmatic path forward.

Lessons from Hyperscalers

It’s tempting for enterprises to look at hyperscalers or “neo-clouds” as models for AI infrastructure strategy. But Ballon cautioned against simply trying to emulate the giants.

Instead, he suggests that enterprises use hyperscaler deployments as reference points while tailoring their strategies to their own operational realities. For many organizations, that means exploring modular, flexible solutions that enable growth over time rather than attempting to leap directly to hyperscale patterns.

New Edge Deployment Models

One of the most intriguing threads of the conversation centered on non-traditional deployment locations. Ballon and the hosts discussed the idea of deploying AI infrastructure in underused commercial spaces, like vacant office buildings, as part of an edge strategy.

While a hyperscaler wouldn’t take over an office suite to build something below its usual scale, a retailer might, he said. As enterprises look for ways to bring AI closer to where data is generated, creative use of available real estate could accelerate edge deployments, particularly if efficient, compact, and quiet cooling solutions make it viable. Iceotope’s latest product line focuses on edge solutions that don’t require access to facility water or dry chillers, and their fan-free, quiet operation makes them well suited to office environments.

A Legacy-Driven Mission

For Ballon, Iceotope’s mission goes beyond engineering.

“I’m at the stage of my career where I think about my kids, I think about the environment, and what legacy I want to leave behind,” he said.

By dramatically reducing water and energy use, Iceotope aims to make the environmental footprint of AI infrastructure compatible with a sustainable future. It’s a vision that blends technical innovation with a long-term view of responsibility—one increasingly shared across the data center industry.

The TechArena Take

Cooling is no longer an operational afterthought—it’s becoming a strategic lever for sustainable AI growth. Iceotope’s approach demonstrates how innovations in liquid cooling can enable enterprises to meet surging compute demands while dramatically cutting environmental impact.

The big insight from Ballon’s conversation: enterprises don’t need to mimic hyperscalers to succeed in the AI era. By focusing on retrofit-friendly technologies and purpose-built designs, they can chart their own path—one that balances performance, cost, and environmental stewardship.

As AI infrastructure spreads beyond massive campuses into distributed edge locations, energy-efficient and water-saving cooling solutions will shape the future of data center design. Iceotope is positioning itself squarely at the intersection of sustainability and innovation.

Giving Storage Strategy a Reboot for Efficiency with Solidigm

Modern storage infrastructure presents a complex balancing act. As solid-state drives (SSDs) evolve to provide performance levels demanded by artificial intelligence (AI) workloads, power consumption has grown alongside speed, prompting a necessary evolution in how organizations evaluate and optimize their storage investments.

During a recent TechArena Data Insights episode, I spoke about this phenomenon with Jeniece Wnorowski, director of industry expert programs at Solidigm, and Scott Shadley, director of leadership narratives at Solidigm. Our conversation revealed the complex factors affecting storage efficiency, and key areas organizations need to consider when undertaking efforts to optimize their systems.

Redefining Storage Efficiency with Modern Metrics

To set the stage for our conversation about storage efficiency, Scott noted that in his work with customers and partners, what’s critical is “understanding how we manage budgets. And those budgets include power budgets and all the other aspects of building an efficient data center.”

Considering how finite resources are allocated has become increasingly important as modern flash-based storage products are deployed in architectures that demand unprecedented performance. These demands have led SSDs to draw more power than anyone expected, even though they were designed to be both fast and power efficient.

The challenge, in fact, lies not in the technology but in the metrics used to match storage solutions to use case requirements. As system demands increase, new measures are necessary to make architecture and procurement decisions. “We’ve always used the same metric, dollar per gigabyte,” Scott explained. “There’s a lot of new metrics that we’re focused on today, like watts per terabyte or terabytes per input/output operations per second…so we’ve evolved the ecosystem to talk through what a modern infrastructure looks like.” These measurements provide a more accurate picture of total system efficiency and shift decisions away from simply picking the fastest or biggest drive and toward choosing the right storage solution for the job.
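The sketch below computes dollars per gigabyte, watts per terabyte, and IOPS per terabyte (the inverse view of the terabytes-per-IOPS ratio Scott mentions) for two drives. The specs are hypothetical round numbers chosen only to illustrate the trade-off, not Solidigm product data.

```python
from dataclasses import dataclass


@dataclass
class Drive:
    name: str
    capacity_tb: float
    price_usd: float
    active_watts: float
    random_read_iops: int

    def dollars_per_gb(self) -> float:
        """Classic cost metric (decimal: 1 TB = 1000 GB)."""
        return self.price_usd / (self.capacity_tb * 1000)

    def watts_per_tb(self) -> float:
        """Power drawn per terabyte stored."""
        return self.active_watts / self.capacity_tb

    def iops_per_tb(self) -> float:
        """Random-read performance available per terabyte stored."""
        return self.random_read_iops / self.capacity_tb


# Hypothetical specs for illustration only.
drives = [
    Drive("performance", capacity_tb=8, price_usd=1200, active_watts=25, random_read_iops=1_500_000),
    Drive("capacity", capacity_tb=120, price_usd=9600, active_watts=25, random_read_iops=900_000),
]
for d in drives:
    print(f"{d.name}: ${d.dollars_per_gb():.3f}/GB, "
          f"{d.watts_per_tb():.2f} W/TB, {d.iops_per_tb():,.0f} IOPS/TB")
```

In this example the capacity drive wins on $/GB and W/TB while the performance drive wins on IOPS/TB, so no single metric picks the "best" drive without knowing the workload.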

Engineering Efficiency in Every Part of SSDs

While SSDs have always been rooted in efficiency, Solidigm is actively working to improve storage efficiency even further. For example, the company has worked with standards bodies and partners to optimize idle times. “These power states that we can put drives in make sure that they make the most of the power available to them. They have fast on, fast off, and things that you just can’t do with other aspects of storage infrastructure,” Scott explained.

The architectural innovations extend beyond power states to fundamental design choices. For example, Scott detailed how Solidigm has long focused on optimizing the design of SSDs’ controllers, which can draw significant power if designed inefficiently. For ultra-high-capacity drives like their 122TB models, they’ve worked within the architecture and firmware design to keep only necessary components active as needed, which becomes critical when hundreds of drives populate enterprise racks.
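Why idle behavior matters at rack scale can be shown with simple duty-cycle arithmetic. This sketch models only the general idea of low-power idle states, not Solidigm's firmware; the drive count, wattages, and 40% active fraction are hypothetical.

```python
def rack_storage_watts(num_drives: int, active_w: float, idle_w: float,
                       active_fraction: float) -> float:
    """Average storage power for a rack, given the fraction of time each drive is busy."""
    return num_drives * (active_fraction * active_w + (1 - active_fraction) * idle_w)


# Hypothetical rack: 300 drives, 25 W when active, 5 W in a low-power idle state, busy 40% of the time.
DRIVES, ACTIVE_W, IDLE_W, BUSY = 300, 25.0, 5.0, 0.4

always_on = rack_storage_watts(DRIVES, ACTIVE_W, ACTIVE_W, BUSY)  # never drops to a lower state
with_idle = rack_storage_watts(DRIVES, ACTIVE_W, IDLE_W, BUSY)
print(f"{always_on:.0f} W -> {with_idle:.0f} W ({always_on - with_idle:.0f} W saved per rack)")
```

A few watts per drive is small on its own, but multiplied across hundreds of drives it becomes a meaningful share of a rack's power budget.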

Tackling System-Level Optimization for Maximum Benefit

Beyond the drives themselves, holistic system changes are critical to optimizing efficiency. Scott emphasized that modernization efforts must address both hardware and software components to realize systems’ full potential. Our discussion revealed a particularly intriguing challenge on the software side: legacy code optimization. Many applications originally designed for spinning media include built-in wait times, which become counterproductive with SSD deployment. These unnecessary delays waste power because systems continue drawing energy while waiting for data that has already arrived.
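The legacy wait-time problem can be sketched in a few lines. Here a simulated device (a hypothetical stand-in, not a real driver API) completes a read after about 1 ms. The HDD-era pattern sleeps a fixed interval regardless, while the completion-based pattern returns as soon as the device signals that data has arrived. The 50 ms fixed delay is an assumption chosen for illustration.

```python
import threading
import time

FIXED_DELAY_S = 0.05  # hypothetical legacy pause sized for spinning-disk latency


def fake_ssd_read(callback) -> None:
    """Simulated device: completes a read after ~1 ms and passes the data to a callback."""
    threading.Timer(0.001, callback, args=(b"block-data",)).start()


def legacy_read() -> bytes:
    """HDD-era pattern: issue the read, then sleep a fixed interval before using the result."""
    result = {}
    fake_ssd_read(lambda data: result.setdefault("data", data))
    time.sleep(FIXED_DELAY_S)  # waits the full interval even though the data arrived long ago
    return result["data"]


def completion_read() -> bytes:
    """Completion-based pattern: block only until the device signals the read is done."""
    result = {}
    done = threading.Event()

    def on_complete(data: bytes) -> None:
        result["data"] = data
        done.set()

    fake_ssd_read(on_complete)
    if not done.wait(timeout=1.0):
        raise TimeoutError("read did not complete")
    return result["data"]


for fn in (legacy_read, completion_read):
    start = time.perf_counter()
    fn()
    print(f"{fn.__name__}: {(time.perf_counter() - start) * 1000:.1f} ms")
```

Both functions return the same data; the difference is the idle time the legacy version adds to every I/O, during which the system keeps drawing power.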

Taking that challenge of comprehensive improvement a step further, Scott pointed out that drives are just one component of a larger system that must be considered. “It’s not about the drive,” he said. “It’s about the rack, and what you can do with the rack to make that rack more efficient.” A partnership with Ocient, which builds rack infrastructure that reduces the required physical footprint, shows the benefits of this approach. A smaller footprint means fewer servers and lower rack-level power, which translate into real reductions in total cost of ownership.

For organizations beginning efficiency overhauls, Scott recommended focusing on three key areas: software infrastructure optimization to eliminate unnecessary wait times, right-sizing storage performance to actual requirements rather than perceived needs, and leveraging portfolio diversity to match specific use cases with appropriate storage technologies. “Don’t just buy the fastest things, and even sometimes the biggest one isn’t what you need. We’ve got the portfolio to help you make yourself the most efficient system that can also scale,” he said.

The TechArena Take

The evolution of storage efficiency reflects a broader maturation in how enterprises approach infrastructure optimization. While IT teams wrestle with rising power consumption from high-performance storage, Solidigm’s focus on comprehensive efficiency demonstrates that the solution lies in addressing a complex web of factors. Companies prepared not only to adopt efficient, modern drives but also to update their purchasing metrics and trade piecemeal optimization strategies for a true systems-thinking approach will see the greatest benefits as workload demands continue to accelerate.

To learn more about Solidigm’s approach to efficiency and storage, connect with Scott Shadley on LinkedIn or explore Solidigm’s efficiency solutions at solidigm.com.

Rewriting the Data Center Playbook for AI with Solidigm

Reliable. Economical. Even predictable. Those were the markers by which enterprises historically measured storage, like a necessary utility. The explosion of AI workloads, however, is forcing a complete reimagining of infrastructure architecture. The challenge is no longer having enough capacity. It’s orchestrating an intricate dance between compute, memory, and storage while managing a critical fourth element: power.

I explored this transformation with Scott Shadley, director of leadership narrative at Solidigm, and Jeniece Wnorowski, director of industry expert programs at Solidigm, during a recent TechArena Data Insights episode. Our conversation revealed how storage companies are evolving from component suppliers into strategic infrastructure partners, and why the traditional approach to data center planning is becoming obsolete.

Finding the Right Balance as Everything Gets Bigger and Faster

Scott emphasized a critical reality that many organizations are grappling with. Modern infrastructures, whether supporting AI workloads or enterprise applications, require exponentially more capacity and performance to handle data at unprecedented scale. But this data explosion is squeezed between two opposing forces: finite data center space and steadily escalating power costs. “As everything gets faster and everything gets bigger, it starts to consume more power,” Scott said.

With these factors in tension, treating compute, memory, and storage as separate architectural components is no longer sustainable. “We spent a lot of time in the industry talking about compute, memory, and storage as three unique architectures,” Scott explained. “We’ve really gotten past that, and it’s now starting to look at how do we really do a better job of load balancing everything.”

Beyond Component Sales: The Strategic Partnership Evolution

With this shift, Solidigm is fundamentally redefining its role in the marketplace, becoming an infrastructure enabler and planning partner to its customers. The company offers deep expertise not just in storage technology, but in the workloads that storage enables. Scott noted that their team includes experts who have become more AI specialists than storage specialists, a strategic decision that enables them to anticipate infrastructure needs rather than simply react to them.

Scott explained that their approach has moved far beyond product specifications and benchmarks: “It’s not about pitching slides. It’s not about ‘buy this because it’s the next big thing.’ It’s really about having those conversations with folks to make sure they understand their need, we understand their needs, and they understand the solutions available.”

Engineering for Tomorrow’s Workloads Today

With this expanded role, Scott emphasized that successful infrastructure planning requires thinking beyond “what’s next” to the “next-next” scenarios: “The current products are amazing, and where we think they’re going to go is great. But if we’re not already thinking about next-to-next type of thing—and we’re not even talking 5-year, we’re talking 10-year roadmaps—you’re never going to be able to design the products fast enough.”

This forward-looking approach is particularly critical as emerging trends like edge computing, software-defined infrastructure, and composable architectures create divergent yet complementary demands. Edge deployments might require everything from ultra-compact drives for space-constrained environments to massive 122TB drives for high-density applications. Each scenario demands different architectural approaches while maintaining consistent reliability and integration characteristics.

The Economics of Future-Proofing

Long-term thinking also affects another facet of decision making: price. “I’m probably going to make a few people mad…but CapEx is not the issue,” Scott said. “I mean, everybody says, ‘I’ve got to spend as little as possible on CapEx,’ but CapEx is for today.”

Scott argues that infrastructure decisions made today will determine operational costs for years to come, and that maximizing performance per watt, optimizing rack density, and minimizing cooling requirements can deliver operational savings that far exceed any difference in upfront hardware cost. These decisions affect not just costs but capabilities: today’s investments and architectural choices determine whether organizations will be positioned to capitalize on emerging opportunities or be constrained by infrastructure limitations.

The TechArena Take

Solidigm’s expanding role as an infrastructure partner reflects a broader industry transformation where success depends on understanding workloads as much as hardware specifications. The company’s emphasis on long-term roadmap alignment, customer collaboration, and holistic system optimization demonstrates how technology companies can create sustainable competitive advantages in rapidly evolving markets.

The most compelling aspect of Solidigm’s approach is the recognition that tomorrow’s data center challenges won’t be solved by simply making components faster or denser. Success will depend on architecting solutions that balance performance, power efficiency, and operational flexibility while anticipating workload requirements that haven’t yet fully emerged. Organizations that understand these dynamics, and that invest in understanding workloads rather than just selling products, will be best positioned to navigate the infrastructure complexities ahead.

Connect with Scott Shadley on LinkedIn or explore Solidigm’s AI-focused solutions at solidigm.com/AI to continue the conversation about future-proofing your data center infrastructure.

AI in Finance: Governance, Compliance, and Innovation

Anusha Nerella joins hosts Allyson Klein and Jeniece Wnorowski to explore responsible AI in financial services, emphasizing compliance, collaboration, and ROI-driven adoption strategies.

AI, Flash, and Data Protection: Scality’s Vision with Paul Speciale

Scality CMO Paul Speciale joins Data Insights to discuss the future of storage—AI-driven resilience, the rise of all-flash deployments, and why object storage is becoming central to enterprise strategy.

Valvoline Brings Liquid Cooling Power to AI Data Centers

From racing oils to data center immersion cooling, Valvoline is reimagining thermal management for AI-scale workloads. Learn how they’re driving density, efficiency, and sustainability forward.

Reinventing RAID for AI: Xinnor and Solidigm on Storage’s Future

This Data Insights episode unpacks how Xinnor’s software-defined RAID for NVMe and Solidigm’s QLC SSDs tackle AI infrastructure challenges—reducing rebuild times, improving reliability, and maximizing GPU efficiency.

WEKA on AI Data Centers: A New Infrastructure Playbook

In this episode, Allyson Klein, Scott Shadley, and Jeniece Wnorowski (Solidigm) talk with Val Bercovici (WEKA) about aligning hardware and software, scaling AI productivity, and building next-gen data centers.

Hybrid AI at Scale: Celestica’s Playbook

From AI Infra Summit, Celestica’s Matt Roman unpacks the shift to hybrid and on-prem AI, why sovereignty and security matter, and how silicon, power, cooling, and racks come together to deliver scalable AI infrastructure.

Cooling the AI Heat: JetCool’s Strategy for Data Centers

Discover how JetCool’s proprietary liquid cooling is solving AI’s toughest heat challenges—keeping data centers efficient as workloads and power densities skyrocket.

Solidigm on Building Future-Ready AI Storage

Solidigm’s Ace Stryker joins Allyson Klein and Jeniece Wnorowski on Data Insights to explore how partnerships and innovation are reshaping storage for the AI era.

MLCommons: Setting the Standard for AI Performance

From storage to automotive, MLPerf is evolving with industry needs. Hear David Kanter explain how community-driven benchmarking is enabling reliable and scalable AI deployment.

Dell Highlights Four Shifts Powering the AI Storage Revolution

Dell outlines how flash-first design, unified namespaces, and validated architectures are reshaping storage into a strategic enabler of enterprise AI success.

Rafay’s Haseeb Budhani on Building the Future of AI Infrastructure

Haseeb Budhani, Co-Founder of Rafay, shares how his team is helping enterprises scale AI infrastructure across the globe, and why he believes we’re still in the early innings of adoption.

From AI Infra: AI Strategy, Security, & Human Impact with Daniel Wu

Direct from AI Infra 2025, AI Expert & Author Daniel Wu shares how organizations build trustworthy systems—bridging academia and industry with governance and security for lasting impact.

Equinix & Solidigm on the Real Cost of AI Infrastructure Demands

Equinix’s Glenn Dekhayser and Solidigm’s Scott Shadley discuss how power, cooling, and cost considerations are causing enterprises to embrace colocation as part of their AI infrastructure strategies.
