Arm Joins OCP Board, Contributes Chiplet Architecture Spec

Today, Arm took a trailblazing step toward its bold vision to innovate the future of converged AI data centers.

During the Open Compute Project (OCP) Foundation’s 2025 Global Summit, the OCP Foundation announced the company as the latest board of directors member, underscoring the relative importance of Arm processors to the future of hyperscale and AI data centers.

Arm also made a splash, announcing a new contribution to the OCP ecosystem: a vendor-neutral Foundation Chiplet System Architecture (FCSA), with plans to drive further innovation up the compute stack.

“Every AI data center today is chasing densification—packing as much compute as possible into every rack,” said Eddie Ramirez, vice president of go-to-market for infrastructure at Arm. “That means we’re talking about racks that consume the equivalent power of 100 homes. Efficiency isn’t just an advantage anymore; it’s a survival requirement.”

With its new FCSA spec, Arm is doubling down on openness, efficiency, and co-design across the semiconductor supply chain, driving toward establishing vendor-neutral chiplet interoperability. The company also unveiled new growth in its Arm Total Design ecosystem, which has tripled in size since its 2023 launch. Together, these moves underscore Arm's strategy to drive innovation as the data center industry shifts from commodity servers to “rack-level systems and large-scale clusters designed specifically for AI.”

“We’ve reached a point where we’re not just bringing our standards into OCP,” Ramirez added. “We’re bringing the entire Arm Total Design ecosystem.”

From Efficient Cores to Efficient Racks

Arm’s contribution to OCP comes at a pivotal moment. Across the industry, power-hungry AI workloads are reshaping data center design—from servers to racks to entire campuses. The latest AI systems push rack-level power densities to previously unthinkable levels, multiplying power consumption tenfold, and redefining the physics of deployment.

That message plays directly to Arm’s long-standing strength: energy-efficient computing. Arm’s low-power architecture has already enabled hyperscalers like AWS, Google, and Microsoft to deliver significant total cost of ownership (TCO) advantages in the cloud. Now, as AI demand accelerates, those same principles are being applied to massive, heterogeneous data center systems where every watt counts.

Redefining Integration: From Boards to Packages

While OCP has historically focused on modularity at the server level, Arm sees a shift happening inside processor composition itself. AI accelerators now combine compute, networking, and memory into highly integrated System-on-Chips (SoCs) composed of multiple chiplets—discrete dies that can be mixed and matched to optimize performance, cost, and power.

This evolution demands a new kind of open standard, Ramirez said.

“The integration point is moving from the server board to the silicon package,” he said. “AI SoCs now use chiplets for HBM memory, compute, IO, NPUs, and more—all married together. The Foundation Chiplet System Architecture enables that same modularity and interoperability at the silicon level.”

By contributing FCSA to OCP, Arm aims to enable companies to develop interoperable chiplets that can be reused across multiple products—expanding opportunities for smaller design houses and accelerating the overall pace of semiconductor innovation.

Arm Total Design: Scaling the Ecosystem

FCSA builds on momentum from Arm Total Design, a global collaboration launched two years ago to bring foundries, design houses, IP vendors, and manufacturers together to shorten design cycles and reduce development costs.

The ecosystem now includes 36 members, with 10 new additions debuting at OCP—among them Astera Labs, Credo, Eliyan, Marvell, Alchip, ASE, CoAsia, Insyde Software, Rebellions, and VIA NEXT. These companies span the AI SoC value chain, from IO chiplets and interconnect technology to advanced 3D packaging and die-to-die communication.

That infusion of collaboration is designed to create what Ramirez called a “mix-and-match” future where companies can specialize in a single chiplet and integrate it seamlessly into others’ designs through common frameworks and interfaces.

Driving Open, Sustainable AI Infrastructure

Beyond silicon innovation, Arm’s engagement with OCP reflects a broader sustainability and openness agenda. With AI driving exponential energy consumption, efficiency has become inseparable from environmental responsibility.

“The old approach of multiplying power per rack by ten is simply not sustainable,” Ramirez said. “We need to deliver performance gains through efficiency, not escalation.”

He emphasized that Arm’s OCP participation will prioritize vendor-neutral, open ecosystems and collaboration, including among traditional competitors from the x86 ecosystem. The company’s leadership role in OCP’s chiplet work group aims to expand participation from both large and small players, strengthening the global chiplet supply chain.

Looking Ahead: Beyond Training to Inference

While much of the AI infrastructure discussion centers on massive training clusters, Arm is also looking toward the next frontier: inference. Ramirez noted that future OCP efforts will increasingly focus on inference workloads closer to the edge, where latency, efficiency, and scalability drive different architectural requirements than the mega-racks built for model training.

These dual tracks—AI training and inference—mirror the broader compute evolution from cloud to edge, and from monolithic design to modular intelligence.

The TechArena Take

Even five years ago, thinking of Arm as an OCP board member was beyond believable. Arm’s ascendency as a critical player in hyperscale and AI infrastructure has been swift and impressive. The FCSA contribution to OCP could mark a pivotal shift in how the semiconductor industry collaborates moving forward, further underscoring Arm’s relative influence. By opening chiplet design to vendor-neutral standards, Arm is moving the ecosystem closer to the “plug-and-play” era of heterogeneous computing—one where silicon innovation can scale as fluidly as software.

As rack-level AI architectures push power and complexity to their limits, Arm’s strategy—anchored in efficiency, interoperability, and co-design—positions it at the heart of the industry’s most urgent transformation.

Check out Arm’s post for more information.

AMI on Open Firmware for the AI-Native Data Center

AMI CEO Sanjoy Maity joins In the Arena to unpack the company's shift to open source firmware, OCP contributions, OpenBMC hardening, and the rack-scale future—cooling, power, telemetry, and RAS built for AI.

MLPerf’s Breakout Year: Benchmarking Expands with AI

As AI spreads into every corner of the technology ecosystem, the industry is under mounting pressure to measure real performance consistently and transparently. Whether it's hyperscale training runs, edge deployments, or domain-specific AI applications like automotive, the need for shared benchmarks is coming into sharper focus.

At the AI Infra Conference in Santa Clara, Jeniece Wnorowski and I sat down with David Kanter, Founder of MLCommons and Head of MLPerf, for a Data Insights conversation that captured a pivotal moment for the benchmarking community. 2025 has been, as Kanter described it, “the summer of MLPerf.”

Benchmarking Matures as AI Expands

“This has actually been a really breakout year for us,” Kanter said. “...In the last couple months, I was jotting an e-mail down to the team and I was describing it as the summer of MLPerf.”

The momentum is tangible. He went on to describe new initiatives that reflect how AI adoption is spreading into new domains.

“We talked about MLPerf Storage. We also have MLPerf Automotive, which came out very recently, [and] MLPerf Client. And so, part of this is, as we’re seeing AI being adopted in more and more places, we have to come in and help fill those gaps. Storage was sort of us working with some storage folks to spot some coming challenges and...automotive was sort of in our response to the automotive folks saying, ‘You know, OK, we are going to be using more AI, we’re making more intelligent vehicles, we need to get our hands around this.’”

These expansions reflect the growing role of benchmarking beyond traditional training and inference in hyperscale environments. Storage and automotive, in particular, highlight the diversification of AI workloads across industries.

Still in “Benchmarking Grade School”

Kanter offered a candid perspective on MLPerf’s age compared to other well-established benchmarks.

“Some of the most established benchmarking organizations that we look up to are 30 years old and they’ve been honing their craft,” he said. “We’re seven years old...We’re still in grade school. The babies of benchmarks.”

That self-awareness underscores the pace at which MLPerf is evolving. Unlike existing benchmarks that matured slowly over decades, MLPerf operates in a landscape where new accelerators, models, and deployment modalities emerge every quarter.

“Just keeping a pace of things is both exciting, a little stressful, and a bit of a challenge,” he said.

Building Community Through Standards

Kanter emphasized that MLCommons isn’t just about benchmarking—it’s a volunteer-driven community.

“I always say to anyone...if any of these resonate with you, please show up. We are a community of volunteers...It came together with a bunch of folks who just saw a problem and said we all want to solve it together.”

Beyond MLPerf, he stressed that MLCommons works on a ton of projects around data including AI risk and reliability, research, and foundational datasets and schemas.

“How do we standardize making data accessible to AI?” he said. “How do we make AI more reliable and responsive to what humans want?”

That openness has been key to MLPerf’s rise as the de facto performance yardstick across the AI ecosystem. Submissions from vendors large and small now shape how the industry evaluates real-world performance.

Why Benchmarks Matter Now

As enterprises wrestle with where to deploy AI—in centralized facilities, at the edge, or in hybrid environments—benchmarks like MLPerf give them objective tools to evaluate trade-offs. They’re also increasingly relevant for sustainability strategies, allowing organizations to understand performance per watt or per dollar across platforms.

In an era of rapid infrastructure buildout and diversification, shared benchmarks provide a common language for vendors, operators, and developers alike.

TechArena Take

Benchmarking is becoming a strategic force in AI infrastructure. What started as a niche performance measurement initiative has grown into a foundational layer that shapes how chips are designed, systems are built, and deployments are optimized.

David Kanter’s reflections highlight both the rapid maturity of MLPerf and the youthful dynamism of a community racing to keep up with AI’s evolution. As AI spreads into storage systems, automotive environments, and edge devices, the role of shared, open benchmarks will only deepen.

The bottom line: In the AI era, you can’t scale what you can’t measure. And MLCommons is ensuring the industry has the tools—and the community—to do just that.

CelLink Flex Harnesses Enable the AI Power Revolution

To keep up with AI’s relentless pace, companies are innovating on every aspect of technology inside the data center, from silicon, storage, network, and compute systems to the power and cooling technologies that support data center facilities. This innovation extends to system power delivery, where major efforts are being made to re-architect foundational power delivery technologies from the ground up.

The Problem: Power Constraints in the AI Era

Hyperscale operators are now planning gigawatt-class data centers designed to consume more than 1 billion watts of power. At the rack level, individual GPUs frequently draw more than 1,000 watts, compared to CPUs that average a third of that, and multi-GPU servers can easily top 8,000 watts per node. As a result, racks that once operated comfortably at 10–20 kilowatts are being pushed toward megawatt-class racks.

Legacy facilities, sized for lower-density server racks, struggle to manage the heat and stable power delivery for GPU-dense configurations that can surge from idle to peak in milliseconds. Distribution losses remain stubborn: roughly 10% of total data center power is lost in delivery and conversion. The net effect: power delivery is becoming a critical limiting factor to scaling AI compute.

The AI Factory Owner’s Pain Points

Data Center operators know the struggle:

- Cable trays hit limits fast. Bulky copper cables consume space and block airflow.

- Rear-of-rack congestion slows liquid-cooling retrofits. Bend radius and routing constraints make every change a headache.

- Commissioning drags. Crews battle heavy conductors, torque bulky terminations, and chase faults in overcrowded terminations.

- Losses remain stubborn. Roughly 10% of data center power vanishes in distribution and conversion.

- Safety and sustainability risks escalate. Every kilogram of copper is embodied carbon today and represents potential e-waste tomorrow.

In short, today’s power delivery methods are colliding with tomorrow’s density—and that friction shows up as schedule risk, safety risk, and escalating OpEx. This isn’t a problem of inefficiency; it’s a problem of scalability. The rack-level power model built for CPU loads has collapsed under GPU surges.

This surge is stressing infrastructure in several ways:

- Distribution loss: Every extra power conversion stage bleeds watts; long copper runs add resistive power loss, forcing overprovisioning and increasing cooling requirements.

- Physical bulk: Heavy gauge round wire conductors are hard to route, consume white space, constrict airflow, and cap rack density.

- Installation risk: Bulky terminations increase touchpoints and invite human error in assembly.

Rethinking Power Delivery in Alignment with OCP’s Core Tenets

Meeting AI demand isn’t about adding thicker cables or more copper layers in a printed circuit board—it requires a fundamentally new power delivery model. We need solutions that are lighter, denser, more efficient, and built for automation, so operators can deploy faster, pack more compute per unit footprint, and cut distribution losses.

CelLink’s solutions advance the Open Compute Project Foundation’s core tenets of Efficiency, Impact, Scalability, Openness, and Sustainability.

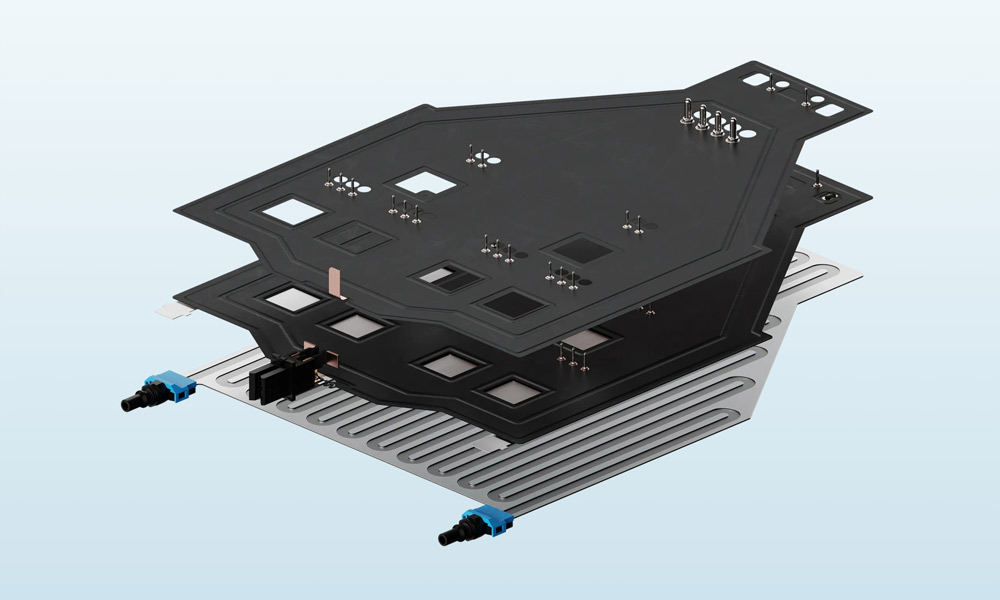

CelLink’s Breakthrough Solution

CelLink already has a track record of driving power delivery innovations in the automotive industry through the deployment of over three million flex harnesses into electric vehicles on the road, and we also have a presence in industrial, aerospace, and power storage applications. We’re now turning our attention to the largest power delivery challenge in the world today: Flex harness technology that redefines what’s possible in data center power delivery. Our solutions aim to address present-day core challenges of power delivery, while also unlocking robotic assembly for systems and removing manual wire terminations and the errors and delays that come with them.

Across the industry, designs are moving toward 1 megawatt IT racks supported by liquid cooling. CelLink’s flex harnesses, already capable of carrying 1 megawatt per harness in the automotive industry, align with that trajectory by freeing rear-of-rack space for cooling hardware, simplifying tight cable routing in high-density racks, and enabling automation-first builds that keep pace with liquid-cooled deployments. Our POC demonstration at the OCP Summit will showcase the integration of liquid cooling directly into power delivery.

Potential benefits for AI factory owners include:

- Greater Compute Density: Thinner, patterned-to-shape cabling means more room for compute hardware per rack.

- Lower material use: Less conductor mass translates to a reduced environmental footprint.

- Compatible with automation: Robotic assembly and a software-defined, reproducible conductor footprint streamlines buildouts, eliminates manual error, and improves field reliability.

- Faster construction: Lighter, patterned-to-shape harnesses and robotic terminations compress install, QA, and rework time cycles.

- Efficiency gains: Backside liquid cooling of vertical power VRMs keeps VRMs operating at high efficiency, reducing waste heat by tens to hundreds of watts per server.

- Future-proofs infrastructure: Positions operators to handle the surge toward gigawatt campuses and megawatt-class racks without being choked by legacy cabling constraints.

What’s Next

With energy consumption projected to double by 2030, solving power delivery is not optional. CelLink’s innovation represents more than a clever engineering tweak; it signals a revolution in power delivery. By replacing bulky round wire cabling with a flat alternative, server manufacturers gain a clear path to building AI factories sustainably and at speed.

The industry has reimagined compute, networking, and cooling. Now it’s time to reimagine power delivery. Check out this tech brief for more details on CelLink’s solutions and connect with CelLink in the Innovation Village at the OCP Summit in San Jose, October 13-16, to learn more.

Inside Iceotope’s Mission to Cool AI Sustainably

As AI workloads push data centers to their physical and environmental limits, the industry is waking up to a hard truth: today’s cooling methods can’t sustain tomorrow’s compute demands. The rise of multi-megawatt racks, unprecedented water consumption, and surging energy use are forcing operators to rethink infrastructure from the ground up.

At the 2025 AI Infra Summit in Santa Clara, Jeniece Wnorowski and I sat down with Jonathan Ballon, CEO of Iceotope, for a Data Insights interview on why it’s imperative to consider sustainability in AI infrastructure buildout—and how Iceotope is redefining cooling technology both at the edge and in the datacenter.

The Efficiency Imperative

AI’s rapid growth has triggered exponential increases in power and water consumption. Traditional air cooling is struggling to keep up with the thermal profiles of modern AI accelerators, while water-intensive cooling towers and evaporative systems are under scrutiny for their environmental impact.

“When you look at the amount of resources that are being consumed right now—whether it’s power or water—it’s unsustainable,” Ballon said.

This urgency was one of the key factors that drew him to lead Iceotope. The company’s datacenter liquid cooling technologies use 96% less water than conventional systems and reduce cooling power requirements by up to 80%. Their edge AI solutions can be deployed in almost any environment, without the use of existing facility water or dry chillers.

Those kinds of efficiency gains aren’t just good for the planet—they’re essential for the economics of deploying AI workloads. As data center campuses scale into the gigawatt range and high-throughput edge applications that require low latency continue to grow, sustainable cooling is becoming both a moral and a financial imperative.

Rethinking Infrastructure Without Forklifts

For enterprises, the efficiency conversation is tied closely to infrastructure planning. Many organizations are not starting from scratch—they’re retrofitting existing facilities or deploying AI workloads incrementally.

“I think enterprises need to think carefully about how they’re going to invest in new infrastructure,” Ballon explained. “What we’re seeing is enterprises that are looking for retrofit capability that doesn’t require forklift upgrades so that they can adopt liquid cooling gradually rather than having to do...forklift changes either in their existing infrastructure or in their new builds. Looking for technology that allows you to do that is super important. Otherwise, they could be making major infrastructure changes that could be outdated in three to five years, which could be a major risk.

That message resonates with enterprise IT leaders who must balance innovation with capital discipline. Liquid cooling solutions that integrate into existing footprints without major overhauls offer a pragmatic path forward.

Lessons from Hyperscalers

It’s tempting for enterprises to look at hyperscalers or “neo-clouds” as models for AI infrastructure strategy. But Ballon cautioned against simply trying to emulate the giants.

Instead, he suggests that enterprises use hyperscaler deployments as reference points while tailoring their strategies to their own operational realities. For many organizations, that means exploring modular, flexible solutions that enable growth over time rather than attempting to leap directly to hyperscale patterns.

New Edge Deployment Models

One of the most intriguing threads of the conversation centered on non-traditional deployment locations. Ballon and the hosts discussed the idea of deploying AI infrastructure in underused commercial spaces, like vacant office buildings, as part of an edge strategy.

While a hyperscaler wouldn’t take over an office suite to build something that isn’t at their usual scale, a retailer might, he said. As enterprises look for ways to bring AI closer to where data is generated, creative use of available real estate could accelerate edge deployments, particularly if efficient, compact, and quiet cooling solutions make it viable. Iceotope’s latest product line focuses on edge solutions that don't require access to facility water or dry chillers. Their fan-free, quiet operation makes them ideal for deployment in office environments.

A Legacy-Driven Mission

For Ballon, Iceotope’s mission goes beyond engineering.

“I’m at the stage of my career where I think about my kids, I think about the environment, and what legacy I want to leave behind,” he said.

By dramatically reducing water and energy use, Iceotope aims to make the environmental footprint of AI infrastructure compatible with a sustainable future. It’s a vision that blends technical innovation with a long-term view of responsibility—one increasingly shared across the data center industry.

The TechArena Take

Cooling is no longer an operational afterthought—it’s becoming a strategic lever for sustainable AI growth. Iceotope’s approach demonstrates how innovations in liquid cooling can enable enterprises to meet surging compute demands while dramatically cutting environmental impact.

The big insight from Ballon’s conversation: enterprises don’t need to mimic hyperscalers to succeed in the AI era. By focusing on retrofit-friendly technologies and purpose-built designs, they can chart their own path—one that balances performance, cost, and environmental stewardship.

As AI infrastructure spreads beyond massive campuses into distributed edge locations, energy efficient and water-saving cooling solutions will shape the future of data center design. Iceotope is positioning itself squarely at the intersection of sustainability and innovation.

Giving Storage Strategy a Reboot for Efficiency with Solidigm

Modern storage infrastructure presents a complex balancing act. As solid-state drives (SSDs) evolve to provide performance levels demanded by artificial intelligence (AI) workloads, power consumption has grown alongside speed, prompting a necessary evolution in how organizations evaluate and optimize their storage investments.

During a recent TechArena Data Insights episode, I spoke about this phenomenon with Jeniece Wnorowski, director of industry expert programs at Solidigm, and Scott Shadley, director of leadership narratives at Solidigm. Our conversation revealed the complex factors affecting storage efficiency, and key areas organizations need to consider when undertaking efforts to optimize their systems.

Redefining Storage Efficiency with Modern Metrics

To set the stage for our conversation about storage efficiency, Scott noted that in his work with customers and partners, what’s critical is “Understanding how we manage budgets. And those budgets include power budgets and all the other aspects of building an efficient data center,” he said.

Considering how finite resources are allocated has become increasingly important as modern flash-based storage products are being deployed in architectures that demand unprecedented performance levels. These demands have led SSDs to draw more power than ever expected, given they were designed to be both fast and power efficient.

The challenge, in fact, lies not in the technology, but in the metrics used to determine the best storage solution for use case requirements. As system demands increase, new measures are necessary to make architecture and procurement decisions. “We’ve always used the same metric, dollar per gigabyte,” Scott explained. “There’s a lot of new metrics that we’re focused on today, like watts per terabyte or terabytes per input/output operations per second…so we’ve evolved the ecosystem to talk through what a modern infrastructure looks like.” These measurements provide a more accurate picture of total system efficiency and help guide decisions from being about the fastest or the biggest drive to the right storage solution for the job.

Engineering Efficiency in Every Part of SSDs

While the legacy of SSDs is already rooted in efficiency, Solidigm is actively working on solutions to even further improve storage efficiency. For example, the company has worked with standards bodies and partners to optimize idle times. “These power states that we can put drives in make sure that they make the most of the power available to them. They have fast on, fast off, and things that you just can’t do with other aspects of storage infrastructure,” he explained

The architectural innovations extend beyond power states to fundamental design choices. For example, Scott detailed how Solidigm has long focused on optimizing the design of SSDs’ controllers, which can draw significant power if designed inefficiently. For ultra-high-capacity drives like their 122TB models, they’ve worked within the architecture and firmware design to keep only necessary components active as needed, which becomes critical when hundreds of drives populate enterprise racks.

Tackling System-Level Optimization for Maximum Benefit

Beyond the drives themselves, holistic system changes are critical to optimizing efficiency. Scott emphasized that modernization efforts must address both hardware and software components to realize systems’ full potential. Our discussion revealed a particularly intriguing challenge on the software side: legacy code optimization. Many applications originally designed for spinning media include built-in wait times, which become counterproductive with SSD deployment. These unnecessary delays waste power because systems continue drawing energy while waiting for data that has already arrived.

Taking that challenge of comprehensive improvement a step further, Scott pointed out that drives are just one component of a larger system that must be considered. “It’s not about the drive,” he said. “It’s about the rack, and what you can do with the rack to make that rack more efficient.” A partnership Ocient, which builds a rack infrastructure that reduces the physical footprint required, shows the benefits of this approach. Reducing the footprint reduces the server count and rack-level power, which then translates into true reductions in total cost of ownership.

For organizations beginning efficiency overhauls, Scott recommended focusing on three key areas: software infrastructure optimization to eliminate unnecessary wait times, right-sizing storage performance to actual requirements rather than perceived needs, and leveraging portfolio diversity to match specific use cases with appropriate storage technologies. “Don’t just buy the fastest things, and even sometimes the biggest one isn’t what you need. We’ve got the portfolio to help you make yourself the most efficient system that can also scale,” he said.

The TechArena Take

The evolution of storage efficiency reflects a broader maturation in how enterprises approach infrastructure optimization. While IT teams wrestle with rising power consumption from high-performance storage, Solidigm’s focus on comprehensive efficiency demonstrates that the solution lies in addressing a complex web of factors. The companies prepared to not only work with efficient, modern drives, but to update their purchasing decision metrics and set aside piecemeal optimization strategies for a true systems-thinking approach will see the greatest benefits as workload demands continue to accelerate.

To learn more about Solidigm’s approach to efficiency and storage, connect with Scott Shadley on LinkedIn or explore Solidigm’s efficiency solutions at solidigm.com.

Bhavnish Walia on AI, Risk, and Responsible Innovation

For Bhavnish Walia, innovation isn’t defined by how fast you move, but by how thoughtfully you build. His career—from Citibank to Amazon—has been about bridging two worlds: the creative urgency of fintech innovation and the rigor of AI governance. Today, he leads the deployment of machine learning models for financial crime prevention, anchoring technological advancement in transparency, fairness, and regulatory compliance, at Amazon.

Joining TechArena’s Voices of Innovation, Bhavnish shares how his views on innovation have matured from chasing novelty to engineering resilience. In this conversation, he reflects on separating hype from substance, fostering human-AI collaboration, and shaping technologies that create both impact and trust at scale.

Q1: Can you tell us a bit about your journey in tech?

A1: I started at Citibank, in the Risk Management group, working on credit card portfolios and customer risk analytics. I then moved into a product role, spearheading the marketplace launch of the high-end airline credit card, a fintech project that had me craft the user experience, reward redemption flows, develop a risk management framework and mobile app enhancements, all with the goal of driving engagement and customer retention.

After that, I worked at Amazon in Risk and Compliance, and as my work progressed, I focused on leveraging AI and machine learning to prevent and detect financial crimes. I am now responsible for rolling out AI/ML models used in Anti–Money Laundering (AML) detection, fraud detection, and automation in KYC, making sure these systems work efficiently, as well as be Responsible AI–compliant, which complies with transparency, fairness, and regulatory standards.

In short, my career has been a journey from innovation in financial products to AI-powered risk management, blending fintech, compliance, and ethical AI.

Q2: How do you define “innovation” in today’s rapidly evolving tech landscape? Has your definition changed over the years?

A2: To me, innovation today is no longer about making something new, it’s about making something reliable, scalable, and responsible. In a time when technology is running faster than regulation, true innovation happens when we design the correct guardrails, release with confidence, and stabilize when they scale.

Earlier in my career, I viewed innovation primarily as novelty and efficiency, finding new ways to solve business problems or improve customer experience. But with time, especially working on risk management and AI, I came to develop a different understanding. I began to appreciate that innovation is about the balance between creativity and responsibility.

Q3: When you’re evaluating new ideas or technologies, what's your framework for separating genuine innovation from hype?

A3: When I think about new technology, I always work through the problem-first approach. The key question I ask is: Which problem is this technology solving, and is this problem meaningful enough to matter at scale?

Then I have three points in consideration:

- Scalability: Can the solution be scaled and implemented on a sustainable basis in different environments?

- Human impact: Does it fundamentally change how humans operate, make decisions, or access opportunities?

- Democratization of knowledge: Can it expand access to information, intelligence, or empowerment, not just for a few, but across industries and communities?

I like to see innovation as substantive and enduring.

Q4: How do you see the relationship between AI advancement and human creativity evolving? Are they competitors or collaborators?

A4: I view AI and human creativity as collaborators, not competitors. AI is highly competent in dealing with repetitive, administrative work that often consumes valuable manual time and energy. By automating these, AI frees this time and energy for higher-order thinking, strategic decision-making, and creative exploration. The future, I believe, is one that belongs to the concept of augmented creativity, in which human and AI work in tandem to design, innovate, and solve problems quickly and more ethically than either could alone.

Q5: What's a book, podcast, or idea that fundamentally changed how you think about technology or business?

A5: I've listened to the Pivot podcast hosted by Kara Swisher and Scott Galloway, for the last five years, and that has had a significant impact on the way I think about technology, leadership, and corporate accountability.

The show goes beyond market trends or valuations; it dives into the human and ethical dimensions of innovation, from the impact of social media on mental health to the need for accountability in Big Tech.

It’s made me more conscious of how we can integrate social good and responsible growth into product and AI development, to build systems that don’t just scale profits, but also support well-being, inclusion, and trust. That’s a philosophy I try to apply every day in my work at the intersection of AI, risk, and governance.

Q6: When you’re facing a particularly complex problem, what’s your go-to method for finding clarity?

A6: When I’m faced with a complex problem, my first step is to write it down; breaking it into smaller, structured components helps me see patterns and dependencies more clearly. From there, I turn to my network of peers and mentors to get diverse perspectives. I often ask myself, “Who should I talk to next who might have solved something similar?”

These conversations usually lead to valuable resources: books, podcasts, or frameworks that help me connect the dots. I’ve found that clarity often emerges not from isolation, but through curiosity, structured thinking, and collective insight.

Q7: Outside of technology, what hobby or interest gives you the most inspiration for your professional work?

A7: Outside of technology, I find a lot of inspiration through mentorship and community engagement. I enjoy mentoring recent graduates, helping them navigate career goals, understand the corporate landscape, and build confidence in their professional paths. It’s incredibly rewarding, and I often learn as much from their fresh perspectives as they do from my experience.

I also love participating in hackathons and startup events, listening to early-stage pitches, and volunteering with organizations that bring innovators together.

Q8: What excites you most about joining the TechArena community, and what do you hope our audience will take away from your insights?

A8: What excites me most about joining the TechArena community is the opportunity to connect with people from diverse industries and backgrounds who are all shaping the future of technology in their own ways. It’s a space where practitioners can share real-world experiences, challenges, and lessons learned beyond just theory.

I hope the audience walks away with a deeper understanding of how emerging technologies are being practically deployed inside organizations, how problems are being solved at scale, and how we can collectively bridge the gap between innovation and impact.

Q9: If you could have dinner with any innovator from history, who would it be and what would you ask them?

A9: I’d choose Steve Jobs. I recently read his biography and was fascinated by how he blended design, technology, and emotion to create experiences that changed how we live.

If I could have dinner with him, I’d ask:

“If you were building something today purely to help humanity, what would it be?”

and

“What technology he would create that could bring people together?”

That question captures what excites me most about the future of innovation: using technology to deepen connection and empathy, not division.

Cornelis Networks on Turning Constraints Into Strategic Advantage

Cornelis CEO Lisa Spelman joins Allyson Klein to explore how focus, agility, and culture can turn resource constraints into a strategic edge in the fast-moving AI infrastructure market.

Rewriting the Data Center Playbook for AI with Solidigm

Reliable. Economical. Even predictable. Those were the markers by which enterprises historically measured storage, like a necessary utility. The explosion of AI workloads, however, is forcing a complete reimagining of infrastructure architecture. The challenge is no longer having enough capacity. It’s orchestrating an intricate dance between compute, memory, and storage while managing a critical fourth element: power.

I recently had the opportunity to explore this transformation with Scott Shadley, director of leadership narrative at Solidigm, and Jeniece Wnorowski, director of industry expert programs at Solidigm, during a recent TechArena Data Insights episode. Their conversation revealed how storage companies are evolving from component suppliers to strategic infrastructure partners, and why the traditional approach to data center planning is becoming obsolete.

Finding the Right Balance as Everything Gets Bigger and Faster

Scott emphasized a critical reality that many organizations are desperately grappling with. Modern infrastructures, whether supporting AI workloads or enterprise applications, are requiring exponentially more capacity and performance to support data at unprecedented scales. But this data explosion is happening between two contrasting forces: the constraints of finite data center space, and unconstrained escalating power costs. “As everything gets faster and everything gets bigger, it starts to consume more power,” Scott said.

With these factors in tension, treating compute, memory, and storage as separate architectural components is no longer sustainable. “We spent a lot of time in the industry talking about compute, memory, and storage as three unique architectures,” Scott explained. “We’ve really gotten past that, and it’s now starting to look at how do we really do a better job of load balancing everything.”

Beyond Component Sales: The Strategic Partnership Evolution

With this shift, Solidigm is fundamentally redefining its role in the marketplace, becoming an infrastructure enabler and planning partner to its customers. The company offers deep expertise not just in storage technology, but in the workloads that storage enables. Scott noted that their team includes experts who have become more AI specialists than storage specialists, a strategic decision that enables them to anticipate infrastructure needs rather than simply react to them.

Scott explained that their approach has moved far beyond product specifications and benchmarks: “It’s not about pitching slides. It’s not about ‘buy this because it’s the next big thing.’ It’s really about having those conversations with folks to make sure they understand their need, we understand their needs, and they understand the solutions available.”

Engineering for Tomorrow’s Workloads Today

With this expanded role, Scott emphasized that successful infrastructure planning requires thinking beyond “what’s next” to the “next-next” scenarios: “The current products are amazing, and where we think they’re going to go is great. But if we’re not already thinking about next-to-next type of thing—and we’re not even talking 5-year, we’re talking 10-year roadmaps—you’re never going to be able to design the products fast enough.”

This forward-looking approach is particularly critical as emerging trends like edge computing, software-defined infrastructure, and composable architectures create divergent yet complementary demands. Edge deployments might require everything from ultra-compact drives for space-constrained environments to massive 122TB drives for high-density applications. Each scenario demands different architectural approaches while maintaining consistent reliability and integration characteristics.

The Economics of Future-Proofing

Long-term thinking also affects another facet of decision making: price. “I’m probably going to make a few people mad…but CapEx is not the issue,” Scott said. “I mean, everybody says, ‘I’ve got to spend as little as possible on CapEx,’ but CapEx is for today.”

Scott argues that infrastructure decisions made today will determine operational costs, and that the ability to maximize performance per watt, optimize rack density, and minimize cooling requirements can deliver operational savings that far exceed initial hardware cost differences. And these decisions don’t just affect costs, but capabilities. Today’s investments and architectural decisions determine whether organizations will be positioned to capitalize on emerging opportunities or constrained by infrastructure limitations.

The TechArena Take

Solidigm’s expanding role as an infrastructure partner reflects a broader industry transformation where success depends on understanding workloads as much as hardware specifications. The company’s emphasis on long-term roadmap alignment, customer collaboration, and holistic system optimization demonstrates how technology companies can create sustainable competitive advantages in rapidly evolving markets.

The most compelling aspect of their approach is the recognition that tomorrow’s data center challenges won’t be solved by simply making components faster or denser. Success will depend on architecting solutions that balance performance, power efficiency, and operational flexibility while anticipating workload requirements that haven’t yet fully emerged. Organizations that understand these dynamics, and that invest in understanding workloads rather than just selling products, will be best positioned to navigate the infrastructure complexities ahead.

Connect with Scott Shadley on LinkedIn or explore Solidigm’s AI-focused solutions at solidigm.com/AI to continue the conversation about future-proofing your data center infrastructure.

Solidigm Redefines Measuring Storage Efficiency for AI Workloads

As AI workloads devour data center power budgets, storage efficiency and optimization have become fundamental design requirements. In this conversation, I sat down with Solidigm’s Dave Sierra to unpack how storage teams should be thinking about watts, racks, and wear in 2025.

Dave, who is part of Solidigm’s Data Center Solutions Marketing team, walks us through the real crossover point between HDD and QLC flash for AI workloads, the architectural choices delivering measurable efficiency gains today, and why the metrics that mattered last year are being replaced by new KPIs tied directly to GPU utilization and power consumption.

Q1: Dave, since our 2024 discussion, what’s changed in data center compute efficiency? If you had to pick one KPI storage teams should optimize in 2025 (e.g., watts per TB, PB per rack, GB/s per GPU), what is it—and why?

I think last year it was an idea but now it’s a fact—there isn’t enough energy to go around for AI. Accounting for every watt consumed in the data center is now a design imperative. To that end one major efficiency story in 2025 is the mainstreaming of liquid cooling technology in the AI data center. With upcoming GPU systems exceeding 150kW per rack, liquid cooling is not only becoming essential for effective heat management, but it can also improve overall data center energy efficiency by 15% or more. Storage efficiency metrics will vary depending on usage, but in compute scenarios the best measure of energy efficiency will be throughput per watt (GB/s per W). These workloads—particularly training—involve massive sequential data transfers for loading datasets, checkpoints, and model updates, where high bandwidth directly correlates with GPU utilization and overall system performance.

Q2: AI pipelines stress storage differently across pre-training, fine-tuning, and RAG. How do you advise customers to place hot/warm/cold data across TLC and QLC tiers so GPUs stay fed without over-provisioning compute or power?

It’s apparent that AI and analytics are making data warmer. Managing more frequent data access across TLC and QLC centers around striking a balance between performance, capacity, and efficiency. For pre-training, where speed and throughput are critical, you’d place the hot data—like training batches—on TLC to ensure GPUs are never starved for data, while larger but still warm datasets can sit on QLC to save power and space. Use TLC for fine-tuning, which deals with smaller, targeted datasets, while QLC takes care of archived checkpoints or referenced data. And for RAG workloads, where latency is king, you’d want your most accessed knowledge corpora on TLC while QLC stores the bulk of less-frequently accessed but potentially relevant data. This tiered approach ensures smooth data flow without over-provisioning power or compute, keeping AI infrastructure efficient and scalable.

Q3: On the architecture side, which choices have delivered the biggest real-world efficiency gains: EDSFF (E1/E3) density, NVMe-oF disaggregation, QLC consolidation, or firmware-level power management? If you can, quantify in servers removed, watts saved, or racks reclaimed.

To some extent all these factors can work together to improve efficiency in a modern data center. But a recent Solidigm and VAST Data paper highlights how QLC SSD consolidation paired with E1.L density is revolutionizing data center efficiency, especially compared to traditional nearline HDDs. The study shows that high-density QLC SSDs, such as the D5-P5336 in E1.L form factor, help to reduce storage-based power consumption by an impressive 77% and reclaim 90% of the physical rack space compared to legacy HDD infrastructures. This consolidation eliminates the need for multi-tiered storage systems, offering a unified flash architecture with unmatched scalability and efficiency, ideal for AI, ML, and other data-intensive workloads. For those scaling to multi-petabyte or even exabyte levels, this approach exemplifies the best path forward for reducing costs, energy, and infrastructure overhead.

Q4: Write amplification and endurance are top of mind for AI data lakes and vector indexes. How are innovations like storage acceleration software, smarter caching, or autonomous power states reducing both power draw and wear—and what proof points should operators ask vendors to show?

Solidigm’s Cloud Storage Acceleration Layer (CSAL) is an example of a game-changer for AI data lakes and vector indexes. CSAL minimizes write amplification factor (WAF) to near 1.0 and improves QLC endurance through smart data management and efficient write handling. This is free-to-use software designed to deliver smarter caching to prioritize key data and autonomous power states for Solidigm SSDs. These innovations lower wear and power costs while boosting performance and reduce energy draw during idle times. Operators should ask for proof points like reductions in write amplification factor (WAF), watts per terabyte, and cache hit rates to validate how these features translate into real-world efficiency gains.

Q5: Operators are optimizing for power, space, and service time. From a purely operational lens, where do you see the HDD-to-flash crossover in 2025 for AI and analytics if the goal is maximum throughput per watt and petabytes per rack?

There’s a couple of factors at play here, not the least of which is a pronounced and well-documented nearline HDD supply shortage. This supply situation alone has directed more attention to high-capacity SSDs for AI and analytics. When it comes to meeting performance and density needs, our 122TB SSD delivers more than 20x the throughput and nearly 10x the petabytes per rack compared to a 30TB HDD. These advantages translate directly into real world cost benefits, whether it be reduced energy consumption, far fewer racks to manage, or less space to fill with HVAC and power infrastructure. AI customers are increasingly motivated to have the QLC SSD crossover conversation, and as they do we see an audience that’s now much more receptive to a TCO-driven analysis versus legacy storage.

Rafay Systems: Building the Foundations of AI Infrastructure

At this year’s AI Infra Summit in Santa Clara, Allyson Klein and Jeniece Wnorowski sat down with Haseeb Budhani, CEO and co-founder of Rafay Systems, to explore how enterprises and neoclouds are approaching the dawn of the AI era. With data centers scaling at unprecedented speeds and global companies investing hundreds of millions of dollars in infrastructure, Rafay is positioning itself as an enabler of the next wave of AI-powered transformation.

The Early Innings of AI Infrastructure

Despite the frenetic pace of AI adoption, Budhani describes the industry as still in the “early innings.” For all the progress in building cloud platforms and high-performance compute clusters, most organizations are only beginning their AI journeys.

“We keep meeting neoclouds and enterprises globally who are just starting their AI journey,” Budhani said. “We’re working with customers who have invested hundreds of millions of dollars to deploy infrastructure. As they embark on AI initiatives, Team Rafay expects to move forward with them as they scale.”

That perspective is crucial: while the headlines are dominated by hyperscale AI factories and trillion-parameter models, the broader ecosystem is just warming up. Enterprises and service providers around the world are building out the foundational layers that will support AI for decades to come.

From Kubernetes to AI Factories

Rafay’s story began seven years ago with a clear focus: simplifying Kubernetes management at enterprise scale. As containerization took hold, organizations needed a way to automate lifecycle management, security, and compliance across distributed infrastructure. Rafay delivered a platform that reduced operational friction while enabling developers to move faster.

Fast forward to today, and the same operational pain points are amplified in AI environments. Training and inference workloads are complex, infrastructure is highly distributed, and data sovereignty concerns add new layers of complexity. Rafay has expanded its platform to help enterprises operationalize AI infrastructure, just as it helped them manage cloud-native applications.

Budhani is quick to emphasize that the need for operational excellence has never been greater.

“Nobody is getting a lot of sleep in our company right now,” he joked. “But what a great time to be working on a problem like this.”

Building Long-Term AI Infrastructure Partnerships Around the Globe

One of the most striking elements of Rafay’s journey is its global reach. Budhani described partnerships with large systems integrators and companies investing heavily in emerging markets, including a recent engagement in Africa where a customer committed hundreds of millions of dollars to infrastructure built on Rafay’s platform.

For these organizations, Rafay provides more than a software solution—it acts as a trusted partner helping them modernize. The engagements are long-term by design, with customers relying on Rafay for three to five years of growth.

This underscores a broader truth about the AI era: it is not a short-term trend but a structural shift in how compute is built, delivered, and consumed. Companies like Rafay are helping neoclouds and enterprises accelerate this transition without being overwhelmed by operational complexity.

Optimism in a Transformative Time

Throughout the conversation, Budhani’s enthusiasm was unmistakable. He acknowledged the long hours and the stress of building differentiated offerings in such a competitive space, but framed it as a privilege.

“We’re very blessed to be where we are at this point in time, with the solution that we have,” he said.

That optimism resonates deeply in an industry defined by both enormous promise and huge challenges. Scaling infrastructure to meet AI’s demands is not a trivial exercise. It requires rethinking everything from chip design to power delivery. Rafay’s success is a reminder that operational platforms are as essential to AI’s future as GPUs and memory bandwidth.

Looking Ahead

As Rafay enters its eighth year, the company’s trajectory is tied to the continued expansion of AI infrastructure worldwide. Enterprises are no longer content with proofs of concept. They are building production AI systems that demand reliability, scalability, and governance. Rafay is positioning itself as the operational glue that makes this possible.

When asked where Rafay’s customers will be in five years, Budhani didn’t hesitate: “Bigger, of course. We want them to be successful. This journey is just starting.”

TechArena Take

Rafay Systems’ success highlights the critical role of operational excellence in making AI scale real. The takeaway: GPUs may grab the headlines, but the future of AI factories will be defined just as much by the orchestration platforms and operational frameworks that keep the lights on. Companies like Rafay are proving that operational resilience is not an afterthought; it is the foundation of AI’s next chapter.

Anusha Nerella: Innovation Is Responsibility in Motion

Q1: Can you tell us a bit about your journey in tech?

A1: My journey in tech has been a blend of engineering rigor and the pursuit of responsible innovation. Starting as a software developer, I quickly realized that my passion wasn’t just writing code; it was architecting systems that could scale, adapt, and solve problems at a societal level. Over the years, I’ve led transformation projects in global financial institutions, modernized legacy systems into cloud-native architectures, and pioneered AI-driven frameworks in compliance and trading. Today, my work sits at the intersection of AI, fintech, and responsible automation, where technology must not only be powerful but also trustworthy.

Q2: Looking back at your career path, what's been the most unexpected turn that ended up shaping who you are today?

A2: The most unexpected turn was stepping into regulatory automation and compliance projects. I originally envisioned my career in pure software engineering and trading platforms, but working on systems where finance, regulation, and AI converge showed me how deeply technology impacts trust and accountability. That pivot shaped my philosophy: true innovation in fintech isn’t just about speed or efficiency, it’s about designing systems society can rely on.

Q3: How do you define “innovation” in today’s rapidly evolving tech landscape? Has your definition changed over the years?

A3: For me, innovation today is responsibility in motion. A decade ago, I would have defined it as solving problems faster with better technology. But now, I see innovation as the ability to anticipate risks, build responsibly, and scale solutions that balance human needs with machine intelligence. It’s less about shiny prototypes and more about architectures that endure.

Q4: What’s one emerging technology or trend that you believe is flying under the radar but will have significant impact in the next 2–3 years?

A4: I believe small language models (SLMs) and neuromorphic computing are underestimated. Everyone is focused on massive LLMs, but the future of enterprise adoption will come from smaller, energy-efficient, explainable systems that can run locally. These will transform compliance, fraud detection, and risk-aware trading areas where accountability matters as much as intelligence.

Q5: When you’re evaluating new ideas or technologies, what's your framework for separating genuine innovation from hype?

A5: I ask three questions:

- Does it solve a real, painful problem for enterprises?

- Can it scale responsibly without introducing hidden risks?

- Does it leave the system more explainable, not less?

If a technology only checks the first box but fails the other two, it’s usually hype. Genuine innovation leaves behind resilience, not fragility.

Q6: What’s the biggest misconception you encounter about innovation in the tech industry?

That innovation is about disruption. I see it differently; real innovation is continuity with accountability. The industry glorifies “breaking things fast,” but in domains like finance or healthcare, that mindset is reckless. The misconception is that speed equals innovation. In reality, responsible scaling is the truest form of innovation.

Q7: How do you see the relationship between AI advancement and human creativity evolving? Are they competitors or collaborators?

A7: Collaborators. AI accelerates patterns, but humans bring context, empathy, and judgment. I see AI as an amplifier of human creativity rather than its competitor. For example, in fintech, AI can spot anomalies, but only humans can decide what regulatory or ethical stance should follow. The future isn’t AI replacing creativity; it’s AI creating more space for human imagination to flourish.

Q8: If you could solve one major challenge facing the tech industry today, what would it be and why?

A8: I would solve the challenge of AI accountability at scale. We’ve proven that we can build powerful AI systems, but we haven’t solved how to make them explainable, ethical, and sustainable. Solving accountability would unlock adoption across finance, healthcare, and government, while protecting against systemic risks.

Q9: What’s a book, podcast, or idea that fundamentally changed how you think about technology or business?

A9: The concept of “antifragility” by Nassim Nicholas Taleb profoundly influenced me. Systems shouldn’t just survive stress, they should improve under it. That idea shaped how I approach fintech architecture: designing systems not just to withstand volatility, but to learn and adapt from it.

Q10: When you’re facing a particularly complex problem, what’s your go-to method for finding clarity?

A10: I rely on mind-mapping with AI augmentation. Visual mapping helps break a complex challenge into dependencies and highlights blind spots. Then I use AI copilots to simulate “what-if” scenarios. That combination – human clarity plus machine-driven insight has been invaluable in solving challenges in trading system design and regulatory automation.

Q11: Outside of technology, what hobby or interest gives you the most inspiration for your professional work?

A11: I find inspiration in podcasting and storytelling. I run conversations that explore how women can lead in AI and fintech. Those dialogues remind me that technology isn’t just about systems, it’s about voices, inclusion, and empowerment. Storytelling keeps me grounded in the human impact behind every technical decision.

Q12: What excites you most about joining the TechArena community, and what do you hope our audience will take away from your insights?

A12: I’m excited about the chance to co-create the future narrative of technology not just where AI and fintech are headed, but how we build responsibly together. I hope the audience walks away with this message: innovation is not about doing more; it’s about doing it right. And when we embed responsibility into design, we create technologies that endure beyond hype cycles.

Q13: If you could have dinner with any innovator from history, who would it be and what would you ask them?

A13: I would choose Alan Turing. I’d ask him: “If you could see the state of AI today, would you believe we’re living up to its potential or simply creating faster machines without deeper intelligence?” I think his answer would push us to rethink how we measure progress in computing.

AMD Lands OpenAI Deal, Challenging NVIDIA’s AI Dominance

In our recent piece, “AMD Challenges Goliath with MI355, Doubles Down on Open Innovation,” we explored how AMD is positioning its latest silicon and software stack to take aim at NVIDIA’s entrenched dominance in AI compute. This week, AMD followed that narrative with a decisive market moment: OpenAI announced a multi-year strategic partnership with the chipmaker to deploy up to 6 gigawatts (GW) of AMD GPU capacity—one of the largest single commitments to AMD’s Instinct platform to date.

The deal includes a stock warrant giving OpenAI the option to purchase up to 160 million AMD shares, or roughly 10% of the company’s outstanding stock if fully vested and exercised, tying the two companies together financially as well as technologically.

The announcement sent AMD’s stock surging by 23–24% in premarket trading and signaled a seismic shift in the competitive dynamics of AI infrastructure. With OpenAI on board as a strategic partner, AMD isn’t just challenging Goliath—it’s putting points on the board against NVIDIA in the hyperscale market, while giving OpenAI a crucial hedge against reliance on a single silicon supplier.

“This partnership with AMD reflects our commitment to building a resilient and efficient compute foundation for the next generation of AI systems,” OpenAI CEO Sam Altman said in a statement. “AMD’s roadmap gives us a path to scale quickly while optimizing for performance and power efficiency.”

A Multi-Generation Partnership Anchored in Scale

Under the terms of the agreement, OpenAI will begin deploying 1 GW of AMD’s Instinct MI450 GPUs in the second half of 2026, ramping up to 6 GW over multiple product generations. This will place AMD among OpenAI’s largest infrastructure suppliers, marking the company’s most significant public win in the hyperscale AI compute space to date.

AMD has been aggressively positioning its Instinct accelerator line as a credible alternative to NVIDIA’s H100 and B100 GPUs, emphasizing high-bandwidth memory, open software stacks, and deep system integration. While the green giant still dominates the training and inference markets, especially in large language model (LLM) workloads, AMD’s recent MI300 and MI450 series have begun to gain traction among hyperscalers and national labs.

The inclusion of a stock warrant is a notable twist. If OpenAI exercises its right to purchase shares, it could become one of AMD’s largest single shareholders. This kind of equity-linked partnership has precedent in the semiconductor industry; for example, foundry customers often take strategic stakes to secure capacity, but it’s unusual in the GPU space and signals long-term alignment.

OpenAI’s Infrastructure Ambitions Are Accelerating

The AMD deal comes amid OpenAI’s aggressive push to expand its compute footprint. The company recently unveiled five new U.S. sites for its Stargate initiative, a sprawling AI infrastructure program backed by Oracle and SoftBank that aims to bring 10 GW of AI data center capacity online. OpenAI is reportedly exploring new financing mechanisms, including debt, to keep pace with escalating chip and power requirements.

Parallel to the AMD announcement, OpenAI announced its 10 GW strategic partnership with NVIDIA, underscoring that it intends to work with multiple suppliers. NVIDIA remains a “preferred compute and networking partner,” but the addition of AMD gives OpenAI crucial supply chain resilience in an industry where access to cutting-edge GPUs can make or break deployment timelines.

This diversification also reflects the growing complexity of AI workloads. As models become larger and more agentic, infrastructure teams are looking to optimize not just raw performance but energy efficiency, total cost of ownership (TCO), and time-to-train—areas where competition between GPU vendors is intensifying.

AMD’s Big Moment

For AMD, this is a landmark win. Despite strong products, the company has struggled to gain meaningful share in the AI accelerator market dominated by NVIDIA’s CUDA ecosystem. Landing OpenAI—arguably the single most influential AI customer in the world—is a validation of AMD’s technology roadmap and an opportunity to prove its platforms at massive scale.

AMD will need to deliver. Running multi-gigawatt data centers is not just about chip performance; it requires mature software stacks, reliable supply chains, and deep co-engineering. To that end, AMD and OpenAI announced plans to collaborate on joint hardware–software optimization efforts, including compiler tuning, runtime integration, and distributed training frameworks tailored for AMD GPUs.

The scale of the deal could also have ripple effects across the broader ecosystem. Hyperscalers, enterprises, and AI startups looking for alternatives to NVIDIA may view OpenAI’s endorsement as a signal that AMD’s platform is enterprise-ready. Meanwhile, the financial structure of the partnership gives AMD upside potential if OpenAI’s deployments meet expectations, and could set a precedent for future chip supply deals in an era defined by unprecedented demand.

TechArena Take

OpenAI’s partnership with AMD is more than just a procurement deal; it’s a strategic realignment in the AI infrastructure landscape. For OpenAI, diversifying compute suppliers isn’t optional—it’s essential to sustain the kind of exponential scaling its roadmap demands. By aligning financially with AMD through a warrant structure, OpenAI is locking in both capacity and influence.

For AMD, this is the company’s “prove it” moment. Landing OpenAI gives AMD the platform to challenge NVIDIA's hegemony, but success will depend on execution across silicon, software, and systems integration. If AMD delivers, it could accelerate a long-awaited shift toward a more competitive and distributed AI hardware ecosystem—one that could benefit hyperscalers and enterprises alike.

For the broader industry, this partnership underscores how strategic compute supply has become in the AI era. Chips are moving from commodities to core competitive differentiators, shaping product timelines, corporate valuations and market power.

If OpenAI’s bet pays off, it could reshape the GPU market for years to come.

Daniel Wu on Scaling AI with Trust and Strategy

Artificial intelligence (AI) has moved from lab curiosity to enterprise necessity in less than a generation. Few people embody that transition better than Daniel Wu—an educator, executive, and author who has spent two decades on the frontlines of technology, with the last 10 years squarely focused on enterprise AI.

In a recent Data Insights conversation with TechArena host Allyson Klein and co-host Jeniece Wnorowski, he shared why AI captured his focus, how data management has evolved to keep pace, and why building trustworthy systems matters just as much as building powerful ones.

A Career at the Crossroads of Technology and Impact

Wu’s journey into AI emerged from his early background as a technologist and engineer. Over time, he saw the transformative power of AI firsthand and shifted his career toward enterprise AI strategy.

“I truly believe in the transformative power of AI,” he said. “As a technologist, I’m fascinated by the capabilities. But as a leader, I’m equally focused on the impact this technology brings and how to channel it for the betterment of humanity.”

That sense of dual responsibility—celebrating innovation while addressing risk—has guided Wu’s professional path. Alongside his executive leadership roles, he has also served as a core staff member for Stanford University’s AI Professional Program since 2019 and co-authored books on agentic AI and AI security.

Scaling AI Beyond Pilots

Enterprise leaders often struggle with the jump from proof-of-concept projects to scaled AI deployments. Wu emphasized that the biggest challenge isn’t just adoption, but the frameworks that allow AI to grow sustainably inside organizations.

“Innovation has to move beyond building powerful prototypes,” he explained. “What matters is creating robust frameworks that can scale while remaining trustworthy.”

That includes governance, alignment with business strategy, and ensuring transparency around how AI systems are trained, deployed, and measured. Without those safeguards, enterprises risk building tools that work in isolation but fail in the broader organizational or societal context.

From Three-Tiered Hierarchies to AI-Driven Pipelines

Wu also addressed one of the core infrastructure shifts behind modern AI: the transformation of data management. Where organizations once relied on a three-tiered storage hierarchy—hot, warm, and cold—today’s AI workloads demand more fluid, distributed pipelines.

“Data is no longer static,” Wu said. “We’ve moved toward AI-fueled pipelines that are dynamic, distributed, and responsive.”

This change is driven by the requirements of generative and agentic AI, which rely on constant access to diverse data sources. For enterprises, this means rethinking everything from storage architectures to governance models, ensuring that pipelines are not only efficient but also secure and compliant.

Building Trustworthy Systems

Trust emerged as a recurring theme throughout the conversation. For Wu, trust isn’t a vague ideal; it’s a measurable outcome of careful design and leadership.

“The scale and power of AI means its impact can be immense,” he said. “I’m particularly concerned about the lack of understanding of what AI can do and the implications of misuse. That’s why my focus has been on frameworks for building trustworthy systems.”

Trust, in this context, spans multiple dimensions: transparency in decision-making, accountability when errors occur, and assurance that systems serve human values rather than undermine them.

Bridging Academia and Industry

Wu is passionate about closing the gap between academia and enterprise adoption. His work in academia puts him in daily contact with both researchers pushing AI’s technical boundaries and practitioners trying to translate those breakthroughs into business outcomes.

“Academia generates incredible innovation,” he said. “But enterprises need practical frameworks to adopt and scale those innovations responsibly. Bridging that gap is where real progress happens.”

His books and teaching efforts aim to equip professionals with the literacy needed to understand complex topics like agentic AI, where autonomous agents collaborate to solve problems, and AI security, which covers everything from adversarial attacks to data privacy.

Why AI, Why Now

When asked why he chose to focus so deeply on AI, Wu’s response underscored both urgency and opportunity.

“The transformative potential is here today,” he said. “This is the moment where we can shape how AI is developed and deployed. If we don’t act thoughtfully now, the consequences could be profound. But if we do it right, the benefits for humanity will be extraordinary.”

TechArena Take

Daniel Wu’s insights remind us that the AI era isn’t defined solely by model benchmarks or GPU density—it’s defined by leadership, frameworks, and trust.

Enterprises face a dual challenge: to scale AI quickly enough to remain competitive, and to do so responsibly enough to sustain trust among employees, customers, and society at large. Wu’s career illustrates how those priorities are not mutually exclusive. In fact, they’re inseparable.

As AI continues to evolve, from generative to agentic and beyond, the organizations that thrive will be those that balance ambition with responsibility.

Cirrascale CEO on Defining Compute Efficiency

As organizations push more workloads into inference and AI-driven applications, compute efficiency is moving to the top of every buyer’s checklist.

We sat down with Cirrascale CEO Dave Driggers to walk through the practical yardsticks they use when evaluating performance, the trade-offs behind scheduling and accelerator selection, and the engineering choices that sustain efficiency even at high rack densities.

Check out the Q&A below to learn how Cirrascale defines compute efficiency in business terms, what levers they pull to optimize GPU utilization, how storage tiers and data movement policies keep costs predictable, and the apples-to-apples tests Driggers recommends for validating provider claims.

Q1: How do you define “compute efficiency” in business terms for Cirrascale customers—what simple yardsticks (e.g., performance delivered per dollar or sustained GPU utilization during runs) actually tell you they’re getting efficient compute?

A1: We measure actual job performance and build a TCO model based upon using different accelerators (including GPUs) to determine the most cost-efficient platform for the customer to use.

Q2: From the provider side, what are the two biggest levers you pull to raise effective GPU utilization—guiding customers to the right accelerator, improving scheduling/pooling to cut idle time, or removing storage/network bottlenecks? A quick example of impact would help.

A2: We run the actual workload on different accelerators and measure the relative performance. We then compare the costs of both the hardware and the operating cost to run them. With that data we create a "total cost of ownership" (TCO). With our Inference as a Service offering we also look at the actual time the workloads need to run. Is it real time or batch? That determines the scheduling needed.

Q3: Inference at scale often loses efficiency when data movement gets expensive or slow. What have you done around storage tiers and data-movement policies to keep GPUs fed and the bill predictable?

A3: We do not charge for Ingress or Egress of data, so the bill is very predictable. We offer multiple tiers of storage to best match the performance requirements.

Q4: Rack densities are rising fast. Without getting into plumbing, how are you planning for 100–150 kW racks so compute efficiency doesn’t drop to thermal throttling or queue delays? What’s one decision that materially changed outcomes?

A4: All of our racks support water to the rack. For densities higher than 75kW/rack, we leverage Direct Liquid to Chip (DLC) and additional water to air cooling at the rack level like using RDHX doors.

Q5: Your Inference Cloud uses token-based pricing. How does that map to customers’ efficiency goals versus GPU-hour billing, and when does it meaningfully lower total cost to serve?

A5: We offer both token-based pricing with our inference as a service offering and GPU-hour billing on our dedicated inference offerings. The token-based pricing is typically a better deal for customers that are not using the servers 24/7 whereas the dedicated inference is better for folks using the GPUs continuously.

Arm’s AI Infrastructure Advantage: Efficiency Meets Innovation

The semiconductor industry has undergone a dramatic transformation over the past decade, shifting from commodity hardware approaches to purpose-built silicon designed around specific data center architectures. At the heart of this revolution sits Arm, whose 35-year legacy in efficiency-first design has positioned it perfectly for the artificial intelligence (AI) era’s demanding performance and power requirements.

I recently sat down with Mohamed Awad, senior vice president and general manager of Infrastructure Business at Arm, we and discussed how the company is enabling partners to build the next generation of AI-optimized systems while addressing the massive scale challenges facing the industry.

The Infrastructure Paradigm Shift

The cloud industry’s evolution from cobbled-together commodity hardware to purpose-built systems reflects broader changes in how organizations approach infrastructure. As Mohamed explained, the traditional approach of building data centers around available silicon has inverted entirely. Today’s hyperscalers design silicon around their data center architectures and specific workload requirements.

This shift has been accelerated by AI’s exponential growth. With projections of $6-7 trillion in AI infrastructure investment by 2030, and training models like GPT-4 requiring petabytes of data, the industry has taken to creating “AI factories”—full racks where networking, compute, and acceleration are designed as integrated systems to optimize both performance and efficiency.

Efficiency at Gigawatt Scale Drives Arm Adoption

The scale challenges caused by this transformation are staggering. Data centers are entering the gigawatt era in power consumption, making efficiency non-negotiable, and the cumulative effect of small gains can be massive.

“When you’re talking about a 500-watt CPU, pulling 20% or 30% of the power out may not seem like a lot, but when you start multiplying that across an entire data center, that means a lot more AI you can fit into those platforms,” Mohamed explained.

This efficiency advantage plays one part in explaining Arm’s growing share in the hyperscale computing market. Amazon Web Services (AWS) has already shipped 50% Arm-based compute over the past two years, and other major cloud service providers are following suit. The company forecasts that half of all compute shipped to top hyperscalers in 2025 will be Arm-based, a remarkable transformation given its previous focus on mobile and embedded applications. This growth stems from as hyperscalers recognizing the total cost of ownership benefits and performance-per-watt advantages that Arm-based solutions deliver.

Momentum Accelerates with Software Optimization Primacy