Scaleway Makes the Case for a Cloud Strategy Built on Control

There’s a long-held idea in enterprise technology that people who make safe, conventional procurement decisions stay employed. Today, that logic is playing out as procurement teams and IT leaders choose dominant global cloud platforms not because they’ve done a thorough evaluation, but because it feels like the default.

Solidigm’s Ace Stryker and I recently sat down with Albane Bruyas, chief operating officer of Scaleway, who made a compelling case that the “safe” choice may come with unexpected risks, and that having a backup plan is critical. With 25 years of cloud experience behind it, Scaleway is working to demonstrate that a homegrown European complement to the global hyperscalers is essential for organizations that care about control, cost transparency, and long-term resilience.

More Than Sovereign: The Case for Full-Stack Control

Scaleway describes itself as both a sovereign European cloud provider and a neocloud built for AI infrastructure. For Albane, however, the word “sovereign” only gets you so far.

“What is very interesting for our clients is that we sell full autonomy,” she said. “We have control on all the value chain. This is what makes the pure difference for our clients.”

That control extends across software, hardware, network transit, and pricing. Scaleway operates within a major French telecommunications conglomerate, which gives the company ownership over connectivity that most cloud providers must outsource. The result, Albane argues, is a level of transparency and pricing stability that organizations cannot get from vendors who depend on third-party components throughout their stack.

Sustainability is also woven into this argument. Because Scaleway built its data centers, primarily in France, it has been able to optimize for energy efficiency. That includes tracking and reducing carbon footprint at the hardware level, a capability made more precise by the company’s participation in the Open Compute Project.

A Procurement Argument Rooted in Risk

Before joining Scaleway, Bruyas worked in industrial procurement, and she brings that lens directly to conversations with enterprise buyers. In industry, she notes, you always ensure you have at least two suppliers for physical goods. Her message is straightforward: operating differently with your digital suppliers doesn’t eliminate risk. It invites it.

“In the digital world, people are just forgetting that their principal strategic supplier is a unique supplier they cannot move out from,” she said. “There is no solution if there is a crash. There is no solution if different prices come out. No solution if there is an external government that asks for data. You have no choice.”

Her advice to organizations anchored to a single hyperscaler is practical, not confrontational: “You will never be fired because you choose one of the scalers. So it’s just like, test an alternative. You need to have one, and you will be happily surprised,” she said.

In a similar spirit, Scaleway’s active participation in the Open Compute Project is not simply a technical preference; it is a supply chain hedge. By building on open hardware standards, the company can source components from multiple vendors, reducing dependence on any single manufacturer and creating competitive pricing leverage.

“If you have the most open hardware you can, then you have the capacity to buy from different suppliers,” Albane explained. “If you have the capacity to buy from different suppliers, you can have a better price, and you can have more capacity because you can go to different places.”

Building for an Agentic AI World

As AI workloads shift from simple inference queries toward longer, more complex agentic workflows, the infrastructure requirements change substantially. More context, more memory, tighter latency budgets, and greater demand for diverse compute options are all part of that transition.

Scaleway’s strategy is, once again, to embrace sourcing from different providers. The company offers multiple GPU types, is actively collaborating with next-generation chip designers, and maintains capacity for CPU-based inference where appropriate. Albane noted that Scaleway has a history of being early to emerging architectures, having been among the first providers to offer Arm-based servers.

“We want to continue to be really at this level of technology where we can put something in place that nobody has,” she said.

The TechArena Take

Scaleway occupies an unusual position in the cloud market: mature enough to offer a full public cloud catalog, yet structured in a way that gives it operational visibility and pricing control that most providers lack. Albane makes a credible case that full-stack ownership is not just a differentiator in the marketing sense but a concrete operational advantage, particularly for organizations that need cost predictability, data residency assurance, and a genuine second-source option. Enterprise buyers that prioritize control and transparency can benefit by making a strategic choice to diversify their cloud strategy before vendor dependency becomes a liability.

TechArena Advisory: A New Playbook for the AI Inflection

TechArena advisors Allyson Klein, Jeni Barovian, Lakecia Gunter and Laura St. John discuss how AI is reshaping leadership strategy, infrastructure economics, and go-to-market decisions.

Knowledge Freshness: The Missing Discipline in Enterprise AI

An enterprise AI assistant was asked to summarize the company’s “top customers” for a planning discussion.

The answer looked reasonable. It pulled from customer records, account notes, renewal history, and internal summaries. It ranked familiar names, explained why each account mattered, and sounded confident enough to move the conversation forward.

A year earlier, “top customer” mostly meant revenue. More recently, the business had started using the phrase to include retention risk. The source systems still had current records. The retrieval system still found relevant material. Yet the AI system was reasoning against an older business meaning.

That is one of the hardest freshness problems in enterprise AI. The words remain the same while the meaning underneath them changes. A pipeline freshness dashboard will not catch it. A re-indexing job may not fix it. The system can look healthy while the knowledge it depends on is quietly aging.

For years, enterprises have built mature operating models around data freshness. They know how to measure whether a table loaded, whether a record arrived, whether a dashboard refreshed, and whether a pipeline missed its service level agreement. But AI systems depend on something broader than data freshness. They depend on knowledge freshness.

Most organizations have not built an operating discipline for that yet.

Data Freshness Is Not Knowledge Freshness

Data freshness answers a familiar question: did the latest data arrive?

Knowledge freshness asks a harder question: is the information an AI system is using still valid, authoritative, and safe to apply in this context?

Those are not the same problem. A customer table can refresh every hour while the definition of a customer segment changes once a quarter. A policy document can be updated in the source repository while an older version remains available in search. A benefits summary can be rewritten, but an AI system may still retrieve a synthesized FAQ based on the previous version. A field can be technically current while the business meaning attached to that field has shifted.

Traditional data platforms were designed to catch the first category of problems. Did the job run? Did the file arrive? Did the record count change? Did the dashboard refresh? These checks still matter. They are the foundation of operational data reliability. But they do not fully answer whether enterprise knowledge is current enough for AI reasoning.

That distinction matters because AI systems do not simply display information. They summarize it, combine it, interpret it, and use it to influence action. When stale knowledge enters that chain, the failure may not look like a broken pipeline. It may look like a confident answer that is almost right.

The Many Ways Knowledge Goes Stale

The most obvious form of knowledge freshness failure is source and index staleness. A source document changes, but the representation used by the retrieval system still reflects the older version. To the user, the AI system appears to be drawing from the right source. Underneath, it may be reasoning from an outdated snapshot of that source.

A related but more dangerous problem is source supersession. In this case, the new version exists, but the old version remains retrievable alongside it. The system now has access to two versions of the truth and no reliable way to know which one is authoritative. This is not just a refresh problem. It is a lifecycle problem.

Policy currency creates another layer of risk. A rule may change in a governance system, legal memo, compliance note, or operating procedure, but the retrieval layer may not understand that the change affects which answers are safe to generate. The AI system may produce an answer that was acceptable last quarter but risky today.

Then there is derived knowledge decay. Many organizations create summaries, FAQs, playbooks, training notes, and synthesized guidance from source material. These artifacts are useful because they simplify complexity. But once AI systems begin retrieving them, they become part of the knowledge supply chain. If they are not refreshed when the source changes, they can quietly become stale while still sounding polished and authoritative.

The hardest form is semantic drift.

Semantic drift happens when the words stay the same but the meaning changes. “Active customer,” “qualified lead,” “priority incident,” “approved vendor,” and “high risk” may carry different meanings across teams, quarters, products, or regulatory contexts. A re-indexing job will not catch that. A pipeline freshness dashboard will not flag it. The document may not even be outdated in the traditional sense.

This is where knowledge freshness stops being a retrieval-augmented generation (RAG) operations problem. A system can retrieve the right document and still apply the wrong interpretation. It can cite a current source and still miss the fact that the business context around that source has changed. A grounded answer can still carry yesterday’s assumptions.

That is why knowledge freshness cannot be reduced to refreshing embeddings more often. Refreshing indexes matters, but it only solves the simplest version of the problem. Reliable enterprise AI needs to know which sources are authoritative, which interpretations have expired, which policies have changed, and when familiar terms no longer mean what they used to mean.

The Cost Shows Up as Trust Delay

When knowledge freshness is unmanaged, the cost rarely appears as a clean system failure. It appears as hesitation.

A user reads an AI-generated answer and wonders whether the source is current. A manager asks whether the recommendation reflects the latest policy. A risk team wants to know whether the system used an outdated interpretation. An engineering team has to reconstruct which documents, summaries, indexes, and rules contributed to the output.

This is where knowledge freshness connects directly to time-to-trust. The longer it takes to prove knowledge is current, the longer it takes to use the output. The answer may arrive in seconds, but confidence may take much longer.

That delay weakens adoption. Users stop trusting the assistant for anything that matters. Leaders hesitate to embed AI into operational workflows. Review teams add manual checks. Engineers spend more time explaining outputs than improving the system.

The root cause is often misdiagnosed as model quality. The model may be reasoning well against knowledge the organization stopped governing.

Toward a Knowledge Freshness Discipline

Knowledge freshness needs to become part of the AI operating model.

That starts with ownership and authority. Critical knowledge sources need accountable owners, not just storage locations. Someone has to know which policy is authoritative, which summary is derived, which glossary definition is current, and which artifact should no longer be retrieved. AI systems should not treat every source as equal. A current policy should outrank an old FAQ. A governed definition should outrank a project note. A source-of-record field should outrank a copied spreadsheet.

Freshness also needs observability. Organizations should be able to see when knowledge was last validated, when derived content was last synchronized, when embeddings were last generated, and whether older versions remain retrievable. These checks should not require a manual investigation every time a user questions an answer.

The strongest concept is a decay budget.

A decay budget defines how long a piece of knowledge is allowed to remain trusted before it must be refreshed, revalidated, downgraded, or expired. Not all knowledge ages at the same speed. A pricing rule may need a short decay budget. A security policy may need an even shorter one. A historical architecture decision may remain useful for years, but only if it is clearly marked as historical.

Instead of asking whether knowledge is simply present, teams can ask how long it should remain trusted without review. That question changes the operating model. It turns freshness from a background assumption into an explicit design decision.

The Next Reliability Layer

Enterprise AI has made knowledge operational in a new way.

Documents, policies, summaries, definitions, and business rules are no longer just reference material for humans. They are inputs into systems that summarize, recommend, classify, and decide. Once knowledge becomes machine-consumed, its lifecycle needs the same seriousness that enterprises already bring to data pipelines.

Reliable AI platforms will not just retrieve knowledge. They will know whether it is still valid enough to use.

That requires more than faster indexing or better prompts. It requires ownership, authority, observability, and explicit decay rules. It requires treating knowledge as something that changes, ages, and expires.

Enterprises already learned this lesson with data. Freshness became measurable because stale data broke dashboards, reports, and decisions. AI is now forcing the same lesson at the knowledge layer.

We measure data freshness in minutes. We measure knowledge freshness in vibes.

Nebius on Why AI Infrastructure Is More Than GPUs

Hitesh Kumar explores AI infrastructure design, GPU clusters, networking, storage, cooling, and scalability. Learn how modern AI systems are evolving beyond GPUs to full-stack architecture.

Komprise Helps Enterprises Escape the Storage Price Trap

Enterprise storage has long had a potential pain point with growing unstructured data, but most organizations have tolerated the ballooning storage needs of their documents, video files, images, sensor outputs, project archives, and more because the cost of inaction seemed manageable.

That calculus has changed. The surge in AI workloads has triggered an acute shortage of high-performance memory chips, the same components that underpin enterprise storage hardware. As AI infrastructure competes for that supply, vendors have passed the cost along quickly, leaving organizations to urgently search for new solutions.

Recently I sat down with Solidigm’s Jeniece Wnorowski and Krishna Subramanian, co-founder and chief operating officer of Komprise, about how enterprises can better handle unstructured data, which makes up roughly 90% of all data generated worldwide. We explored how a problem that has been simmering for years has suddenly jumped to the top of to-handle lists for enterprise IT leaders.

The Budget Shock No One Planned For

The numbers are striking. “Just in the first few months of this year, most storage companies raised their prices by anywhere from 30% to about 75%,” Krishna noted. For IT leaders already managing double-digit data growth, that is a significant jolt to infrastructure budgets that were built around very different assumptions. Organizations are being asked to absorb substantially more data while spending far more per unit of capacity, with little room to maneuver.

The problem is compounded by poor visibility. Most enterprise IT teams know they are sitting on large volumes of cold data, files that are no longer actively used but continue to consume expensive primary storage. What they typically lack is the precision to act on it. “You can’t manage what you don’t know,” Krishna said. “Most IT leaders, I think the problem is they don’t know really what the issues are with their unstructured data.”

Unstructured data is sprawling by nature, generated across different users, applications, and systems with no consistent format to make analysis straightforward. Knowing the general shape of a problem is different from knowing which specific data can be safely moved, where it lives across on-premises and cloud environments, and what the real savings opportunity looks like.

Reclaiming Capacity Without Buying More

Komprise designed its Flash Stretch Assessment to close exactly this gap. Available to qualified customers with at least 500 TB of data for no charge, the service analyzes storage environments across both on-premises and cloud infrastructure to produce a concrete picture of data activity, growth patterns, and cost distribution by user, application, and data type.

The goal is to identify cold data that can be moved to lower-cost storage tiers, freeing up primary capacity without requiring new hardware purchases at today’s elevated prices. “We’re freeing up all that space, but you can now put new data onto it,” Krishna said. “You’re kind of reclaiming existing capacity without having to buy at these exorbitant prices.”

When organizations act on the assessment, the financial impact can be significant. Unstructured data accounts for roughly 30% of the average IT storage budget, and that figure encompasses not just primary storage but also backup and disaster recovery copies. Komprise reports that customers who tier their cold data can reduce storage and backup costs for their unstructured data by around 80%.

Transparent Access, Not a Trade-Off

A predictable concern with any tiering strategy is disruption. Moving data to lower-cost storage only delivers value if users and applications can still reach it without friction. Komprise addresses this through its patented Transparent Move Technology, which relocates data to the cloud or another tier while leaving a dynamic link in its original location.

“It looks like the X-ray image is still local,” Krishna explained. “Your applications can still open it, but when they go to open it, we stream that data from the cloud instead of it sitting locally. It’s transparent to users and applications so they don’t see any change.”

For data that follows cyclical patterns, such as project archives that go dormant for months before becoming relevant again, the platform supports bulk recall that restores entire datasets on demand, giving IT teams flexibility to handle the rhythms of how enterprise data actually gets used.

The TechArena Take

The current storage crisis is not a temporary disruption that patient organizations can wait out. Memory chip shortages tied to AI demand will likely ease, but the need to optimize your existing resources instead of planning to scale to meet increasing demand alone is likely the new normal.

The current pressure is forcing a discipline that should have existed all along: Many enterprises have been storing unstructured data without meaningful visibility into what they have, how fast it is growing, or what it is actually costing them. That was always inefficient. The difference now is that the inefficiency has become expensive enough to demand attention. Organizations ready to engage with decisions about what data to keep, where to keep it, and how to organize will be best positioned in the race to improve AI performance, slow infrastructure spending, and increase operational agility.

To learn more, listen to the full podcast or visit komprise.com.

Synopsys and Samsung Foundry Tighten the 2nm AI Design Loop

Synopsys and Samsung Foundry are sharpening the toolchain that turns advanced node ambition into shipped silicon.

At SAFE Forum 2026 today, Synopsys announced fresh collaborations across production-ready AI-powered design flows, certified multiphysics signoff, faster test, expanded IP, and a 3D design platform being validated on Samsung Foundry’s Hybrid Copper Bonding technology. The common thread: customers building AI accelerators and multi-die systems need fewer surprises, shorter cycles, and silicon they can stand behind.

In his SAFE Forum keynote, Synopsys President and CEO Sassine Ghazi pointed at the structural problem every chip team now wrestles with. Engineering complexity is compounding, cycle times are tightening, and costs keep climbing. Ghazi argued the answer lies in fusing AI automation and multiphysics intelligence across the full design and manufacturing flow. Today’s news reads as proof points for that thesis.

Production-Ready 2nm Flows and Smarter Signoff

Start with the front end. Synopsys’ AI-powered digital and analog flows are now production-ready on Samsung Foundry’s third-generation 2nm class process. Fusion Compiler on the third-gen 2nm node delivers measurable power and performance gains over second-generation 2nm, validated with customers and shaped by years of design technology co-optimization (DTCO).

For teams charting a migration from 4nm or 3nm to 2nm, the “production-ready” label carries weight: lower migration risk and a faster route to taped-out parts. That matters more in 2026 than it did even 18 months ago, as hyperscalers and AI-first silicon teams chase 2nm capacity across multiple foundries at once.

Signoff gets a sharper edge too. New PrimeShield capabilities, including Process Sensitivity Analysis and PVT Explorer, support design-specific optimization and engineering change order decisions late in the flow. Drawing on silicon feedback from 2nm class processes, Synopsys reports up to a 2.7% frequency improvement within 5% leakage current degradation when compared to the previous generation of PrimeShield software.

Totem-SC, newly certified for electromigration and IR drop analysis on second-generation 2nm and 4nm class nodes, gives designers a stronger handle on power integrity at the edge of what physics allows.

Test is where the math gets compelling. Synopsys TestMAX, paired with AI-assisted automatic test pattern generation through TSO.ai, cuts test patterns and test cycles up to 20% with fault coverage held steady, validated on silicon at Samsung Foundry. Physically aware tests and failure diagnosis at the die and multi-die level shorten the loop between bring-up and root cause. For SoC and multi-die designs at AI infrastructure volumes, a 20% test reduction translates directly into lower COGS and faster ramp.

The most strategic beat sits in 3D. Synopsys 3DIC Compiler is currently being validated on a 2nm class Samsung Foundry Hybrid Copper Bonding (HCB) 3D test chip. The platform unifies planning, implementation, and multiphysics analysis so design teams can co-optimize across compute, memory, and advanced packaging in one environment. HCB is one of the most consequential interconnect technologies coming to volume manufacturing for AI accelerators. Pairing it with an AI-driven 3D design platform pushes multi-die design out of manual margin stacking and into an automated, unified flow.

Closing the announcement, Synopsys reiterated the depth of its IP portfolio on Samsung Foundry processes, running across advanced nodes including 14nm, 8nm, 5nm, 4nm, and second-generation 2nm, as well as reaching into automotive nodes at 5nm and 2nm class processes. The interface IP roster reads like a roadmap for what AI and edge platforms will need over the next 24 months: UCIe, PCIe 7.0, 112G/224G, MIPI, LPDDR6, DDR5 MRDIMM Gen2, and USB4.

“Close alignment across design, test, and manufacturing are critical to the success of AI and multi-die designs on advanced nodes,” said Hyung-Ock Kim, vice president and head of the Foundry Design Technology Team at Samsung Electronics.

Ravi Subramanian, Chief Product Management Officer at Synopsys, said the work translates years of DTCO and silicon learning into customer-ready enablement.

TechArena Take

As hyperscalers and AI-first silicon teams race to lock down 2nm allocation, the bottleneck isn't just wafer capacity, it's design velocity. Silicon builders cannot afford to let next-generation architectures sit in optimization limbo. By tying together EDA, IP, test, and advanced HCB packaging into a single integrated feedback loop, Synopsys and Samsung Foundry are tackling the real friction point: engineering risk.

For enterprise executives, this reduces the risk of migrating to leading-edge nodes. For the broader market, it signals that Samsung Foundry is aggressively building out the comprehensive ecosystem required to be a viable, high-volume alternative for advanced AI accelerators. The architecture wins of the next 24 months won't just go to the best node; they will go to the most cohesive ecosystem.

TeraWulf & Schneider Electric Beat the AI Time-to-Power Clock

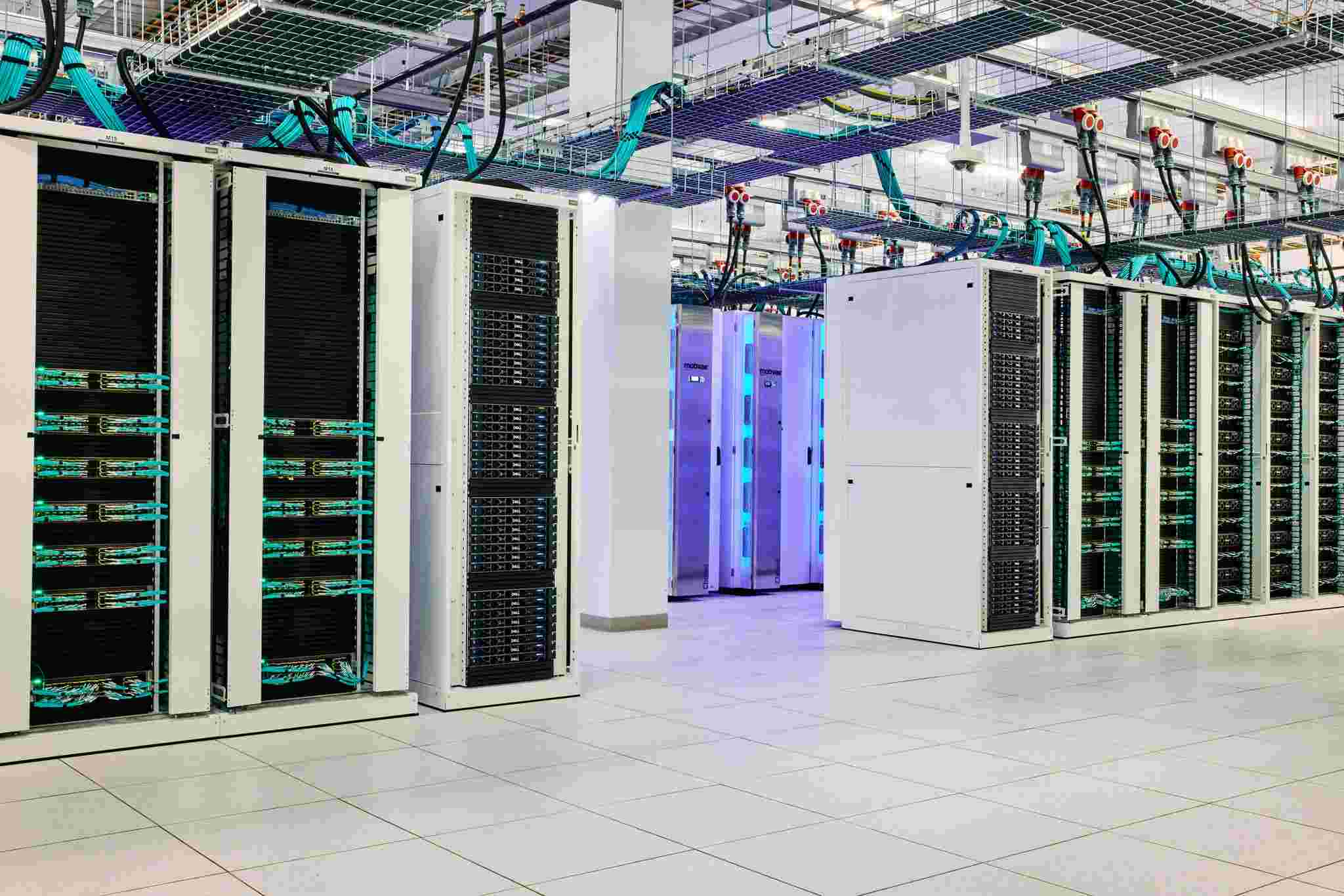

Schneider Electric, Motivair by Schneider Electric, and TeraWulf today announced the phased delivery of more than $290 million in AI infrastructure for TeraWulf’s rapidly expanding Lake Mariner data campus, a 180-acre development at the heart of a retired 1,800-acre coal plant that stopped operating in 2020.

Located in Barker, New York, the site houses about 1 million square feet of capacity, which will translate to 750 megawatts of AI and HPC compute once fully developed. About 360 megawatts of Lake Mariner’s capacity is powered and running today. The site never sleeps. It is a 24/7 operation.

“As demand for AI infrastructure accelerates, time to power has become a defining constraint on growth,” said Manish Kumar, executive vice president of Secure Power and Data Centers at Schneider Electric. “Our partnership with TeraWulf establishes a strategic blueprint for pairing on-site power, AI-enabled automation, advanced liquid cooling, and digital intelligence at a legacy industrial site.”

Touring Lake Mariner

I visited the sprawling Lake Mariner campus last week alongside 30 other journalists from around the globe. We wore reflective yellow vests, hard hats, and protective goggles as we navigated uneven terrain between two partially constructed, mirror-image data center buildings named CB4 and CB5, each one about the size of two Costcos glued together.

Beholding these behemoths, one might never guess that CB4’s first steel pipe went up on January 1, and CB5’s first steel pipe went up on April 1.

Wind off Lake Ontario kicked up dust along the rocky pathway between the buildings. It was 55 degrees and sunny, cranes towering above, tractors scooting here and there, steel beams reaching skyward. Thick, multicolored electrical cables jutted up from the ground, situated in tidy rectangular rows. Metal chillers coated the rooftops and mazes of thick white piping covered the walls, pipes that will deliver closed-loop liquid cooling to the data halls. The first hall in CB4 is expected to be complete and operational in July; the first in CB5 is planned to power on in October or November.

Having started as a power generation site, Lake Mariner was reclaimed for bitcoin mining and then for AI and HPC compute. The site employs 10 or 15 workers who have been there for decades, as well as children and grandchildren of former coal plant employees, said Sean Farrell, TeraWulf’s chief operating officer.

Unprecedented capital investment continues to pour into HPC and AI data center infrastructure, with aggregate global spending across the full value chain projected to hit roughly $765 billion for 2026 alone.

“The pacesetter at the moment is NVIDIA,” said Marc Garner, who leads Schneider Electric’s cloud and service provider business. “It’s really all about tokenization. How do we take data and systems and turn them into AI output?”

AI workloads will consume 35 to 36 percent of all compute deployed by 2030, Garner said. Schneider tracks roughly 150 neoclouds operating globally, a category that has scaled rapidly since 2023. “The speed at which this is being built out is amazing. Scale at speed. These are the things driving the industry.”

Not far from the bitcoin-hall-turned-café, a sad, beige and brown structure still stands on the site, an old coal power plant turned power substation, only now its connections hook into a regional grid whose mix runs about 89 percent zero-carbon, drawing heavily from hydropower at Niagara Falls and nuclear plants across upstate New York. The original 207-million-gallon water intake that once cooled the coal turbines sits idle. Lake Mariner does not need it.

Our media tour began in an oblong-shaped building that, until a couple of years ago, was filled with rows of 15,000 bitcoin miners. The space has since been repurposed as a café for the 1,800-plus site workers.

We walked down hallways and peeked into CB1. Through one door, a roomy data hall packed with 16 megawatts of AMD MI300 GPUs quietly operated. “That’s 2,000 pounds of racks,” Farrell said.

The CB1 capacity is leased by Core42, the cloud and sovereign AI subsidiary of Abu Dhabi-based G42, whose American chip access was unlocked through a Microsoft-brokered investment that locked the company into the U.S. technology stack. The arrangement is a working example of what the industry has started calling sovereign AI on managed soil. The UAE gets dedicated, nationally controlled compute. The U.S. keeps Abu Dhabi's spending inside American chips, American jurisdiction, and American export controls. Lake Mariner is one of the places where those two priorities converge.

A short walk down an adjacent corridor opens into the Wulf Den, a 25,000-square-foot, two-megawatt building that started its life as a bitcoin proof of concept and now runs high-density IT workloads cooled by Motivair rear-door heat exchangers.

Fluidstack, the campus’s largest tenant by a wide margin, is backed by Google. Google has guaranteed roughly $3.2 billion of Fluidstack’s leases at Lake Mariner and holds warrants equivalent to about 14 percent of TeraWulf’s shares. CB4 and CB5, the two newest and largest buildings on the site, are custom-built for Fluidstack workloads. Both will run Google TPUs, cooled by Motivair direct-to-chip systems through the closed-loop network piped along the rooftops.

TeraWulf operates roughly 50,000 bitcoin miners across its portfolio of five sites, three gigawatts in total. Lake Mariner is the flagship. Sites in Kentucky, Maryland, and Texas round out the footprint.

The pivot from mining to AI was an exercise in arithmetic. Bitcoin ROI ran roughly a million dollars per megawatt with a 33 to 40 percent yield against cost, an economic structure that demanded constant capital refresh as miner generations turned over. AI and HPC tenants sign long-term leases. They bring anchor revenue. They underwrite the kind of buildout that turns a 25,000-square-foot bitcoin shed into a campus the size of a small city. “We are a colocation provider with power, water, and fiber,” Farrell said. The tenant brings the compute. TeraWulf brings everything around it.

What $290 Million Looks Like

The partnership covers the full electromechanical stack. Schneider Electric is supplying Galaxy VX uninterruptible power systems and Galaxy lithium-ion battery cabinets, integrated into sidecar buildings adjacent to each data center. Motivair contributes Coolant Distribution Units, in-rack manifolds, ChilledDoors, and rear-door heat exchangers. NetShelter racks and enclosures hold the IT equipment. EcoStruxure IT Data Center Expert ties the monitoring and digital intelligence layer together.

Each CB-series building will host four data halls of about 33,000 square feet apiece, plus a two-story administrative wing. CB4 alone will deliver more than 160 megawatts of IT capacity for Fluidstack across its four halls, roughly 40 megawatts each. The design target is 75 degrees Fahrenheit at the rack. Roughly 70 percent of the heat is removed through water at the chip, and the remaining 30 percent is pulled through the room as residual heat from networking, storage, and adjacent equipment.

The plumbing alone tells the story. Pipes the size of grown men feed each building. Each circular cable bloom rising from the ground holds sixteen separate five-inch and two-inch power runs, each pre-positioned for the tenant’s rack layout. Conduit and copper rise in waves of color: orange, blue, yellow, white, gray. “Our responsibility is for everything going into the building,” Farrell said. “Everything beyond that is the responsibility of the tenant.”

Inside the completed sidecar at CB1, Schneider Electric’s lithium-ion battery cabinets sit in neat formation, ready to carry the data center’s load for up to fifteen minutes if grid power drops on either the A or B feed. Across the way, a near-identical room holds racks of Dell servers fronted with Motivair ChilledDoors. A guide flicked the overhead lights off, and the room filled with soft blue light from the running equipment. The reaction was immediate, audible, a chorus of small sounds from journalists who have seen plenty of data halls but never one quite this lit.

Past the sidecar, the group climbed into the unfinished administrative space of CB4. The walls were raw. The conduit waited. Down the corridor, the first data hall opened onto bare concrete and steel framing. Farrell waved a hand across the empty volume. “This room will be full of Motivair cabinets,” he said. The same building will hold four halls of similar dimension. Data hall four will hand its tenant a customized rack layout designed around their workload before the first GPU arrives.

The economics are not subtle. Building a megawatt of AI compute capacity at Lake Mariner runs $7.5 to $10 million. Eight hundred electricians work the site at any given moment. Sixteen hundred subcontractors carry stickers from past TeraWulf and Somerset projects on their hard hats. “It’s like flair from Office Space,” one of them said.

The biggest bottleneck, Farrell said, is electricians.

The Race the Industry is Running

Manish Kumar’s keynote at the Schneider Electric Global Media Event in Buffalo framed the moment as the fifth great industrial transformation, on par with the arrival of steam, electricity, factory automation, and the digital era. The earlier transitions took generations. This one is moving in fiscal quarters. A rack housed 10 to 15 kilowatts a few years ago. Today’s high-density racks run above 100. NVIDIA’s roadmap points toward a megawatt per rack inside the decade. The monitoring footprint has expanded in step. Schneider used to instrument a data center with about 10,000 data points. The current generation runs into the millions, monitored and acted on by software increasingly infused with AI agents that can pre-empt failures before a human technician would notice the symptom.

The shape of an AI factory follows the math. Bigger footprints. Higher densities. Hotter chips. Liquid loops where air handlers once stood. Power architectures shifting toward 800 volts at the rack to solve a physical problem, not an efficiency problem, because the cable count needed to feed a megawatt rack at current voltages will not fit. None of this was on a slide three years ago. All of it is on the slab at Lake Mariner now.

“AI has fundamentally changed the data center build equation,” said Gary Lamona, Schneider Electric’s vice president of strategic accounts. “It is an arms race to compete today. Who can bring that compute to market fastest?” Average labor costs across U.S. data center construction have climbed 30 percent year over year. The supply of skilled mechanical service technicians has tightened in step with electricians. Schneider’s pitch in this environment is simplification. The company estimates it supplies roughly 90 percent of the infrastructure that goes into a modern AI data center. Customers consolidate vendors, shorten supply chains, and trade dozens of bilateral procurement relationships for a single technology partner.

Cooling, Water, and the Myth that Needs Busting

Of all the slides shown in Buffalo last week, the most newsworthy may have been the ones the audience did not see coming. Tuan Huang, who leads innovation for Motivair and Schneider’s broader cooling business, walked the room through a case study his team had not previously published.

The setup was simple. Take three data center architectures: a traditional air-cooled facility, a current generation AI data center, and a near-future AI data center built around NVIDIA’s Vera Rubin platform. Run the same 100-megawatt workload through each. Compare the results in Dallas and in Paris.

The Dallas numbers landed hard. A traditional air-cooled 100-megawatt data center consumes more than 400,000 cubic meters of water per year. That is the equivalent of 966 households’ annual usage, or 161 Olympic swimming pools. A current-generation AI data center cooled with evaporative towers consumes 382,000 cubic meters. A Vera Rubin data center built around closed-loop liquid cooling drops the number to 197,000 cubic meters, equivalent to 474 households’ annual consumption.

The Paris numbers, run against a milder climate, tell the same story in smaller print: 80,000 cubic meters for traditional, 108,000 for current AI, 51,000 for Vera Rubin.

Tuan’s framing cut through the headline anxiety that has dogged the industry for a year. “Liquid cooling to the chip is required,” he said. “Water consumption to cool a data center is a choice. It’s a technology choice. And it’s a geographical choice.”

He reached for an analogy his family and friends could follow. Air-cooled data centers are old Volkswagen Beetles, engines hot and exhaust pouring into the air. Liquid-cooled data centers are modern cars with radiators. The radiator transfers heat. No water leaves the loop. “Zero water is needed to cool a car today,” he said. “That’s the same for AI data centers.”

The case study went further. Operators can choose to evaporate water at the cooling tower to push efficiency higher, or they can reject heat through dry coolers and high-efficiency chillers and consume almost no water. In Dallas, the no-water option costs about 5 percent more in energy consumption. In Paris, the difference vanishes. The mechanical efficiency of an AI data center, even one that uses some water, runs 70 percent better than a traditional air-cooled site at the cooling equipment level. The total facility power efficiency improves by 11 percent. That 11 percent flows directly back to AI workloads.

Lake Mariner runs the no-water playbook by design. “We do not use any water during normal operations,” Farrell said. “We have a closed-loop cooling system.” The fluid inside each loop carries a 30 percent glycol blend and a corrosion inhibitor. Each CB-series building holds roughly 300,000 gallons of charged fluid. Once filled, the loop runs without replenishment for 10 to 15 years. The bitcoin operation on the property uses a similar closed-loop strategy, and the legacy coal-era water intake remains untouched.

The cooling architecture matters beyond water. Motivair’s CDUs, in-rack manifolds, and rear-door heat exchangers were originally engineered to cool supercomputers, and the company’s installed base now includes six of the world’s ten fastest. Aurora, Frontier, and El Capitan all rely on Motivair technology. El Capitan still holds the top spot.

What Comes Next

By the time the second half of 2026 closes, CB4 and CB5 will both have data halls online and the first Google TPUs landing inside. TeraWulf has begun the engineering work to push the campus from 750 megawatts toward a full gigawatt of capacity.

AES is building an 800-acre solar farm adjacent to the site that will connect into the same substation and feed both the campus and the broader grid. The team is already exploring heat reuse opportunities. The roads on the property carry the names of TeraWulf operators and former coal plant workers.

Manish Kumar’s framing of the moment lingered after I left Buffalo. AI demands more, he said, and Schneider delivers. He meant infrastructure. He could have meant time, capital, electricians, water, and patience. The Lake Mariner project bundles all of it onto a single piece of land that has now hosted three eras of American industry without going dark in between.

A coal plant powered a region for decades. A bitcoin operation kept the lights on through a cryptocurrency boom and bust. An AI factory is rising now on the same earth, cooled by closed loops, fed by hydropower, monitored by software that did not exist when the original turbines spun their first revolution.

The next chapter of American AI infrastructure will not be built on greenfield. It will be built on sites like this one, where the grid interconnection is already paid for, the workforce is already trained, the community already understands what a heavy industrial neighbor looks like. Lake Mariner is the proof of concept. The clock is the constraint.

Rich Whitmore on Motivair’s Rise: An American Dream, Made in Buffalo

“As Jensen Huang of NVIDIA put it, it was a match made in heaven,” said Rich Whitmore, President and CEO of Motivair by Schneider Electric, sitting across the table from me at a brewery in Buffalo, New York.

He was referring to Schneider Electric’s purchase of 75% of his liquid cooling company for $850M cash in February 2025. (The remaining 25% is set to follow in 2028.)

“We were under (Schneider Electric’s) watchful eye and we didn’t know it,” he added. “I think they had identified us as an important part of their product portfolio. They acquire best of breed, the elite. We were just kind of doing our thing and growing our business.”

Rich smiled, quick to pivot the credit for Motivair’s success to his father, its founder. Graham Whitmore emigrated from Europe to Buffalo in 1977 to open the U.S. factory of the cooling company that employed him. He founded Motivair eleven years later, in 1988. Its original focus was manufacturing industrial refrigeration and process cooling systems, but the firm evolved over the decades into a prominent global leader in high-efficiency liquid cooling and thermal management for AI and high-performance computing (HPC).

Today, Motivair by Schneider Electric’s technology is installed in six of the top 10 supercomputers in the world, including the three fastest in the U.S.: Aurora, Frontier, and El Capitan. El Capitan is currently the fastest supercomputer on Earth.

“It wasn’t really me, it was really my father,” Rich said, noting that Graham passed away in 2015. “He was like the American dream. Came over here with nothing, literally nothing, no family, no relatives, just he and my mother. They built this company from the ground up, no debt, never took a loan from the bank. They were not wealthy people. I grew up in a very humble household and I’m just privileged to still be connected to this business. I love what I do.”

A Media Tour

Earlier that day, Rich led a group of 31 journalists, including myself, on tours through Motivair by Schneider Electric’s headquarters and a manufacturing facility. We got an up-close-and-personal look at their chillers, their heat rejection technology, and we saw the end-to-end assembly and testing of one of their Coolant Distribution Units (CDUs), which reminded me of the size of a skinny vending machine. We also got a look at the inner workings and assembly of their ChilledDoor, a kind of door-slash-refrigeration unit that delivers scalable cooling directly at the rack level.

So there we were, Rich and me, sitting at a table on the second floor of a Buffalo wing joint-slash-brewery for a 30-minute interview after a long day of touring. All around us, other Schneider execs chatted with reporters from around the globe. Downstairs, tables full of journalists snacked on nachos and Buffalo wings, drank Diet Pepsi, and tap, tap, tapped on their keyboards, transferring their scribbled notes from the tour that day into their laptops.

Silicon Valley, Austin, and… Buffalo?

When Graham and his wife, Sylvia, settled in Buffalo in 1977, they quickly recognized that the city was rich in resources: sheet metal work, engineering, and thermal transfer.

“There’s several heat exchanger companies that are based here. But the best resource in this town is the people,” Rich said. “Hardworking people, salt of the earth, that we could not have built our business without. Buffalo is a special place.”

Asked what makes it special, he said Buffalo “has all the great features of a big city in a small, close-knit community where people generally get along."

A Father’s Tough Love: ‘Sink or Swim’

Rich studied mechanical engineering at Rochester Institute of Technology with a focus on heat transfer.

In the early 2000s, as a young mechanical engineer, Rich expressed interest in working for the family business. Graham’s answer was pragmatic.

“He said, ‘Look, to be fair, I’d rather have you go and cut your teeth somewhere else rather than on my dime. But there’s an opportunity here.’”

It was a sales management position.

“My father told me, ‘I’m happy to give you this opportunity, but it’s sink or swim. You can’t be here and not be great at what you do because you carry my last name, and that’s it. If it doesn’t work out here, I still love you. You can go and work somewhere else.’”

“Fortunately for me, I think I turned out doing okay,” Rich said.

Purpose, Conviction, & the Cray Years

The mechanical engineer in Rich likes to see how things work. Having watched his father grow Motivair Corporation from the time he was a child, he knew a little bit of what he was signing up for when he joined the company.

“So, my father actually emigrated to the United States in 1977 to start a business in the U.S., but he was working for a company,” Rich said. “He wanted to open up a factory in the U.S., and it was for computer room air conditioning. And that’s what he’d done his whole career. He was young at the time. And when he started Motivair originally, he said, ‘I don’t want to be in the computer room air conditioning business anymore.’”

When Rich joined the business, Motivair was doing a lot of critical process cooling. They had some data center work, but also hospitals, factories, anything that needed to operate 24/7.

“Around the time of the dot-com boom, we started getting drawn into the data center industry,” he said. “So, I’ve been kind of a part of this evolution for really my whole career. The world was a lot different then. Data centers were a lot smaller. There was always a level of criticality, as you can imagine. But over the course of my career, because of the level of resiliency and redundancy that we were producing with our products, like this robust nature design, we were very quickly drawn into some very critical cooling applications in data centers. So, it didn’t take long for me to start getting these gray hairs coming through.”

A Decade of Arduous Work Coming to Fruition

Modern liquid cooling technology like Motivair’s sits as a sort of sidecar to GPUs, both to chill and to reject heat. In large part, this tech was developed by Motivair in collaboration with leading supercomputer manufacturers, Rich said.

“What you’re seeing commercialized now, a lot of that hard work was really done more than a decade ago, and we were right smack in the middle of that,” Rich said. “Cray was acquired by HPE, but Cray was the innovator and they invented the first supercomputers. Those systems that we developed for them were cooling computers that were harder to develop than putting somebody on the moon."

Knowing what he was working on was making a difference gave Rich a deep sense of purpose, he said.

“Nobody had been doing this at the levels that we’d been doing it, nobody,” he added.

A Different Approach to Talking About the Tech

The unassuming CEO has a unique way of translating tech jargon into plain English.

“We don’t make power, we just move it,” he explained. “The power comes into the data center, and 100% of that power that goes into that server is converted to heat. If you have a gigawatt campus, for example, okay, there’s some power that goes to lighting and desktops and things like that. But the vast majority of that power goes into the servers. They create tokens, and the output, the result, is heat.”

When Motivair engages with a customer, staffers can speak knowledgeably and with credibility about any part of the project that they’re working on, Rich said.

“We speak this language fluently. It makes us hugely valuable to customers, regardless if they’re buying everything in the (Schneider Electric) portfolio or just parts of it.”

During a cooling panel discussion earlier that day, Rich described Motivair’s role with customers as “the adult in the room,” the engineer guiding hyperscalers and AI factory developers onto a safe path through liquid cooling deployments most have never attempted at scale. Motivair was cooling 400-kilowatt racks ten years ago. Today’s industry-leading commercial deployments run 150 to 200 kilowatts. The company has been ahead of the heat for longer than most of its customers have been in the data center business.

A Beautiful Match on Both Sides

When Motivair was acquired, the company had its choice of suitors.

“We could have chosen a dozen other companies,” he said. “We chose Schneider because we saw where they were in the industry, and, more importantly, we felt that their culture aligned with ours. They had a commitment to people, a commitment to innovation.”

Rich stayed on as CEO and the company headquarters remain in Buffalo.

“This was a highly strategic move,” he said. “This liquid cooling expertise was the one thing that Schneider was missing. And so by acquiring us, there is no other company that has this portfolio of products. And it doesn’t mean that we roll into every customer and force-feed them this end-to-end solution. Yes, we’ve got very large customers that benefit from that, but it means that when we engage with a customer, we can speak knowledgeably and with credibility about any part of the project that they’re working on. And even if they’re not using Schneider product X, Y, or Z, we understand what they’re using and how it can impact Motivair’s cooling systems that are there, or the chiller plants that are outside.”

A Ticket to Scale

For Motivair Corp., Schneider Electric brought something the company could never have built quickly: scale.

“We’ve been very, very pleased with the integration and becoming part of Schneider,” Rich said. “We benefit from the vast resources of Schneider Electric, supply chain expertise, global factories. What we’ve been able to do is start taking our products and industrializing them so they can be built in other Schneider Electric factories. We actually have footprints in a large factory in Bangalore, India, and the cooling factory outside of Venice, Italy in a town called Conselve. So there’s actual Motivair product rolling off those lines today to support different regions.”

Growth and the AI Buildout

I’d wager that no one realized, even at the beginning of 2025, how rapidly AI would advance from training to inference to agentic, or how much cash the hyperscalers would start to dump into new data centers and AI factories to meet the dizzying demand.

The numbers behind that boom are staggering, and they flow directly through companies like Motivair. Goldman Sachs' structural modeling puts baseline global AI CapEx at $765 billion for 2026. The Big Five alone are projecting capital expenditures of $660 billion to $690 billion this year, with Amazon expected to spend $200 billion on infrastructure expansion and Alphabet more than doubling its 2025 spend to roughly $175 billion. Every one of those megawatts needs a CDU.

“If you were to take every megawatt of power that data centers are bringing online, every megawatt needs a CDU connected to it, period,” Rich said. “That’s just the way it is. For every GPU or, let’s say, server that’s got several GPUs in it that’s going out, every single one of those needs to be connected to a CDU.”

A Father’s Legacy in Every Megawatt

Graham Whitmore did not live to see any of this. He passed away in 2015, a decade before Schneider Electric’s $850 million purchase, before Jensen Huang would call the deal a match made in heaven, and before his son would lead a flock of journalists through the company he built from nothing.

Toward the end of our conversation, Rich came back to his father one more time. He sat with the line for a moment before he said it.

“That’s like a true American success story that probably should be talked about more.”

I thought about that line as I got on a plane home the next day. Graham arrived in Buffalo in 1977 with Sylvia at his side, no relatives waiting at the gate, and a factory to open. Today, a large majority of the gigawatts of AI infrastructure being stood up around the world move through a coolant distribution unit that traces back, in an unbroken line, to that arrival.

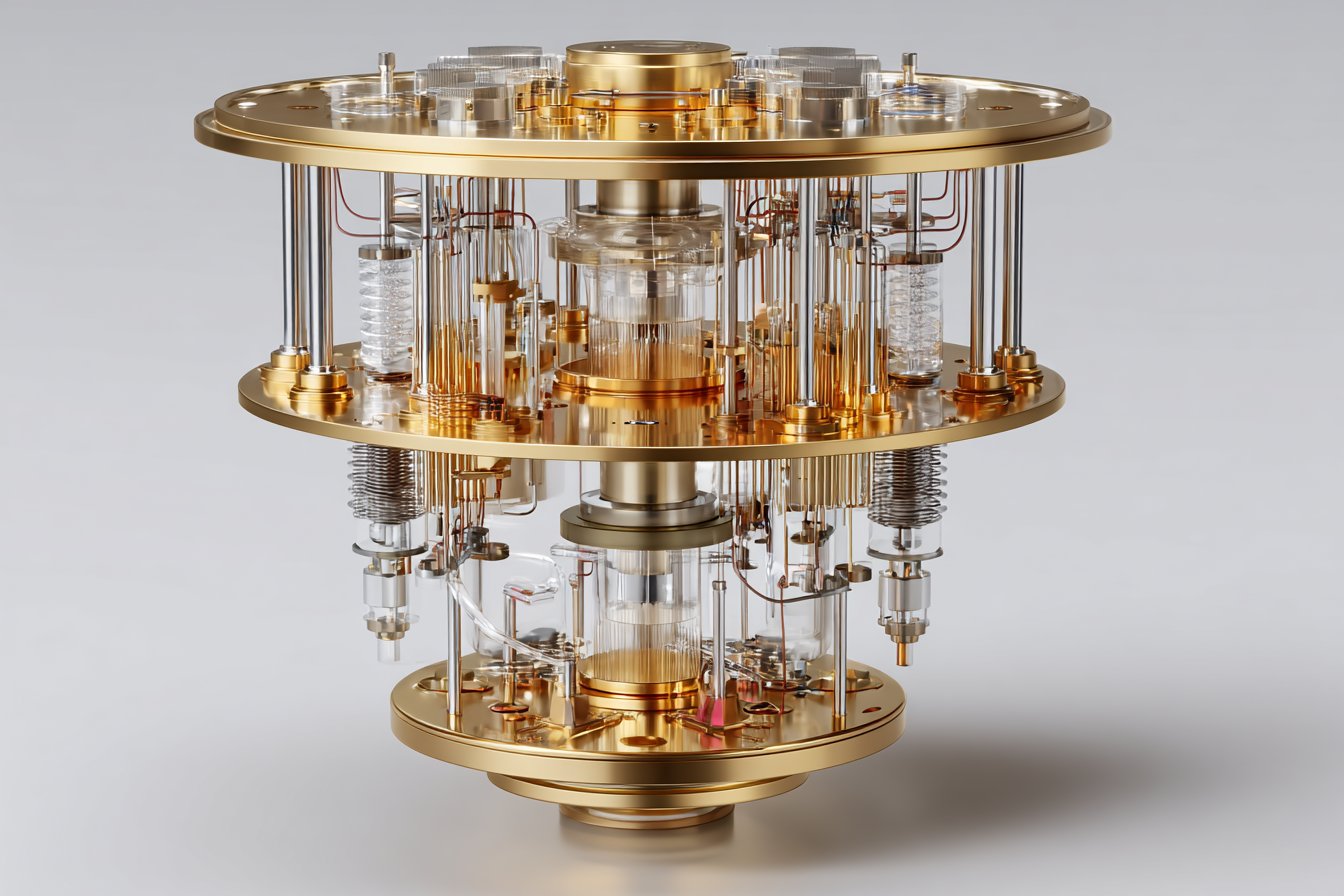

Q-CTRL on Quantum Computing’s Path to Production

Alex Shih of Q-CTRL discusses quantum computing, AI infrastructure, HPC integration, and the shift from research labs to production systems.

Doug Finke on Quantum Computing’s Commercial Future

Doug Finke joins an episode of Data Insights to discuss quantum computing, commercialization, cybersecurity risks, and the road to enterprise adoption.

IONOS Explores Why AI Success is Decided at the Data Layer

Just two years ago, most companies were simply asking what AI could do in an enterprise setting. In 2026, they are asking a harder question: how to scale without breaking their reliability or their budget. That shift from curiosity to capacity is where Isayah Young-Burke, go-to-market strategist at IONOS, spends most of his time.

In a recent TechArena Data Insights episode, I sat down with Isayah and Solidigm’s Jeniece Wnorowski to explore why security and access risks are the underexamined obstacle in enterprise AI, how data sovereignty is reshaping infrastructure decisions on both sides of the Atlantic, and why storage is now one of the most strategic layers in an AI-ready stack.

An Expansive Vantage Point Across the Stack

IONOS, part of the publicly traded IONOS Group with more than 6.6 million customer contracts globally, occupies a distinctive position in the cloud market. The company serves customers ranging from an individual registering their first domain to an enterprise running a multi-client managed service provider business. That breadth, Isayah explained, provides a kind of ground-level intelligence that shapes how the company serves customers and thinks about AI adoption.

“It's that customer service and that experience we carry behind our brand. It has to be good at every level,” he said. “AI adoption…doesn’t just start with AI. It starts with that digital footprint that grows into infrastructure. AI becomes that natural next step, just like after you get a website, you start thinking about cloud storage and cloud infrastructure. So we get to see that whole journey.”

The Real Gaps: Security, Data and Skills

When asked where he sees the biggest gaps as organizations operationalize AI, Isayah was direct: most enterprises are focused on the wrong thing. While model selection often dominates the discussion, choosing the “right” model is not what predicts success.

“Most AI challenges at scale — it’s not really a capability problem. It’s a system problem, not the model. And increasingly, they are a trust and access problem,” he said.

He drew on a panel discussion at IT Expo where a fellow speaker raised concerns about the level of access AI agents are granted within enterprise environments. An agent embedded in a company’s internal systems can do more than answer questions. It can write, delete and trigger workflows across an entire environment. “That’s a very different risk profile than a website chatbot,” Isayah noted.

Beyond security, he identified data readiness and workforce skill gaps as persistent obstacles. IONOS has responded by building tools like IONOS Momentum and the AI Model Hub, designed to make AI infrastructure accessible to small-to-medium businesses and public sector organizations that need practical solutions, not just raw compute.

Data Sovereignty and the Regulatory Divide

Operating across the US and Europe gives IONOS a useful vantage point on how regulatory environments shape AI infrastructure decisions. In Europe, regulations like GDPR and initiatives like Gaia-X have made data residency a front-line concern from day one. In the US, speed and innovation tend to dominate, but that is shifting.

Isayah pointed to a dimension of US cloud law that often goes unexamined: the Cloud Act gives the US government legal authority to access data held by American cloud providers, even when that data is stored in Europe. IONOS operates under a different legal framework in Europe, because it is a subsidiary of a German company. This distinction matters significantly to companies that do business overseas.

“Knowing where your data lives and who has access to it under what conditions really matters,” he said. “Providers who can give answers to those questions have a real advantage.”

Storage as Strategic Infrastructure

Nowhere is the infrastructure shift more visible than in storage. Isayah described storage as having “quietly become one of the most strategic layers in AI,” noting that as AI-enabled workloads scale, enterprises must manage massive volumes of unstructured data, including text, images, logs and embeddings, that traditional storage architectures were never designed to handle.

With this new challenge, he noted, there’s been a shift toward object storage. The medallion architecture approach, organizing data into bronze, silver and gold enrichment tiers, has become a common framework for managing this complexity. These practices have become the backbone for data lakes, the central repositories of where raw data lives before being processed. S3-compatible object storage has emerged as the de facto standard for these data lakes, valued for its scalability, cost efficiency and — through IONOS — API accessibility.

Preparing for Agentic AI

Looking ahead, Isayah sees agentic AI as the next major infrastructure challenge. “AI agents aren’t just generating outputs,” he said. “They’re interacting with back-end systems. They’re triggering workflows from different applications and different software, making decisions across platforms in real time.”

That shift demands decentralized architecture, low-latency edge and cloud environments, strong API interoperability and, above all, rigorous security controls. He referenced Anthropic’s recent decisions around its Mythos model, where it chose not to release the model publicly after it was tested offensively in a sandbox experiment, found system vulnerabilities and escaped the test environment as instructed, as a reminder of what is at stake.

“Without fail-safes, it’s unwise to release this into the public,” Isayah said. “The foundation for these automated systems has to be solid.”

The TechArena Take

For technology decision-makers, the practical takeaway from this conversation is straightforward: the infrastructure decisions being made now around storage architecture, data governance and agent access controls will determine the ability of organizations to scale AI later. IONOS’s position gives Isayah a grounded view of where those decisions are going well and where they are not. Organizations still treating storage as a commodity and AI security as an afterthought, may find that catching up later is considerably more expensive than getting it right now.

To learn more, listen to our full conversation in the published podcast, and read about IONOS’s cloud offerings, including the AI Model Hub, at ionos.com.

Foresight’s Atif Ansar: AI Infra Execution & Delivery Risk

Foresight’s Atif Ansar explains delivery risk, project delays, governance, and how AI can improve large-scale data center buildouts.

Building Toward Practical Quantum: Ecosystems, Not Hype

Our next TechArena Data Insights comes live from Xcelerated Compute, where Jeniece Wnorowski and I had a chance to sit down with David Moehring, general partner at Cambium Capital and former CEO of IonQ, to unpack how quantum computing fits into the broader computing landscape, and why the real story is less about breakthrough moments and more about building entire ecosystems.

From Physics Labs to VC

David has had a long and illustrious career working in quantum across academia, government and industry. His career began in academia, where he pursued a PhD in atomic and optical physics and quantum computing before moving into applied research at Sandia National Labs. From there, he transitioned into government, funding advanced quantum computing initiatives, and became the founding CEO of IonQ before his move to Cambium Capital.

That multidisciplinary background continues to shape how he evaluates companies today. “I found it very useful to understand the motivations of all of these different parts of the ecosystem,” he said, adding that it greatly informed how he looks at investing into quantum.

Systems-Level Thinking

Cambium Capital is an early-stage venture capital fund that invests in advanced computer hardware, and we talked about their recent moves in investing in the total cost of ownership (TCO) of AI data centres.

Rather than chasing a single breakthrough, the firm invests across the compute ecosystem, focusing on improvements to be made in different areas. “We have companies that we’re looking at as part of our portfolio that work in power delivery, movement of data, embedded memory, and packaging across the ecosystem,” he explained, “because if you move just one of them forward, everything else is still backed up.”

This systems-level thinking extends to how Cambium evaluates startups. Technical depth is essential, but so is market readiness. “You just can’t have your one good technology and toss it over the fence and think other people are going to integrate it,” he notes. “You need to be very deeply technical in your field, but you also need to really understand where it comes in.”

Complement, Not Replace

When it comes to quantum computing, David is clear-eyed about both its promise and its limitations. The technology is not poised to replace classical systems; it will augment them. “It’s not that if you make quantum computers then classical computers or GPUs will go away because they really solve very different problems,” he said.

Instead, quantum systems will tackle specific classes of problems that are either infeasible or inefficient for classical architectures. This mirrors the evolution of GPUs and specialized accelerators, each designed for distinct workloads.

“There are jobs that just cannot be done by classical computing,” David explained, framing quantum as another layer in an increasingly heterogeneous compute landscape.

On the relationship between AI infrastructure and quantum computing, he envisioned both evolving in parallel, solving very different problems in the long run. That being said, he noted that classical computers will still be required to control quantum computers. Beyond that, foundational innovations in materials science and lasers are likely to benefit both domains.

Building the Quantum Ecosystem

Cambium’s investment strategy isn’t limited to headline-grabbing processors. The firm also has strong conviction in the new quantum investment vehicle, 55 North, where David is the Board Chairman.

“There’s still a lot of hardware development that is needed, not just for the kind of the quantum processor itself, but the ecosystem,” David said.

That includes everything from laser systems for atomic qubits to cryogenic infrastructure for superconducting systems, components that rarely make headlines but are essential to scaling the technology. This mirrors Cambium’s broader philosophy: meaningful progress happens when the entire stack evolves together.

Misconceptions and Early Signs

Despite growing interest, quantum computing remains widely misunderstood. Even quantum physics itself can be very counterintuitive, and many physicists struggle with some of the rules. That gap between theory and practical understanding often fuels unrealistic expectations. His best advice? Talk to the experts.

Looking ahead, David points to a specific category where quantum could first demonstrate real-world value, the field of biopharmaceuticals. “We strongly believe that’s where you’re going to see first real kind of advantage from quantum,” he predicted.

At the same time, he remained cautious about overblown claims, adding that some of the news was driven more by hype than understanding.

The Tech Arena Take

David built on his years of experience working on quantum in different capacities to deliver a pragmatic take on where the field is headed. Our key takeaway was that along with the big-ticket processors, investments need to focus on building the underlying infrastructure needed to keep quantum compute running, something Cambium Capital is keenly focused on. Pushing one piece of the puzzle without solving other challenges would only lead to bottlenecks being moved down the line.

Data Insights: A.J. Camber on Computer Vision & AI Scale

AI adoption depends on accessibility. A.J. Camber explains how computer vision, data quality, and simplified workflows enable enterprises to scale AI without relying on scarce data science talent.

Infleqtion and the Rise of Hybrid CPU GPU Quantum Systems

Data Insights podcast with Infleqtion CTO Pranav Gokhale on combining quantum, GPUs, and CPUs to deliver fault-tolerant systems and real-world impact.

.jpg)

TechArena Wins 2 Communicator Awards for Editorial Excellence

TechArena won two Awards of Distinction at the 32nd Annual Communicator Awards, one of the largest and most competitive international programs honoring excellence in marketing, communications, and creative work. The awards were announced May 5, 2026, by the Academy of Interactive & Visual Arts (AIVA).

The recognized work spans two categories in the Writing, Business-to-Business division:

The TechArena Forum earned a Distinction award in the Series category, recognizing the platform's ongoing editorial commitment to hosting rigorous, expert-led discussions across AI, data center, cloud, edge, networking, and sustainability.

Rachel Horton’s report titled, “Open to Work: The Quickening of AI & the Future of Jobs” earned a Distinction award in the General category for its unflinching examination of how artificial intelligence is reshaping employment faster than workers, companies, and policymakers can keep pace.

Forum: Where Expert Voices Meet

The TechArena Forum is a media platform designed to bring together domain experts, frontline technologists, and senior executives for substantive interviews and analyses about the technologies shaping data center and AI infrastructure. Topics range from AI model efficiency and data center energy consumption to network architecture and quantum computing readiness.

What sets Forum apart is its editorial standard. Every contribution goes through a process built on decades of journalism and communications experience, ensuring that the insights published carry real weight with technical and business audiences alike. TechArena staffers recognized for this award include CEO & Founder Allyson Klein, Head of Content & Editorial Rachel Horton, Writer/ Editor Deanna Oothoudt, Editorial Manager Francisco Sipiora Gutierrez, and Graphic Designer/ Videographer Kirk Hansen. The Communicator Awards jury, which this year included professionals from JPMorgan Chase & Co., FedEx, Netflix, National Geographic Society, Accenture Song, and the NAACP, recognized that standard with its Distinction honor.

A Report That Struck a Nerve

The “Open to Work” report arrived at a moment when the AI jobs conversation had reached a tipping point. Published in August 2025, the report traced the human cost of rapid automation, from Intel’s sweeping layoffs to the quieter, less visible displacement happening inside enterprises that had begun replacing roles without public announcements. It explored the widening gap between corporate productivity gains and the stress, uncertainty, and reskilling pressure bearing down on individual workers.

The piece resonated with readers because it refused to pick an easy side. It acknowledged the genuine economic value AI creates while documenting the uneven distribution of that value and the institutional failures slowing workforce adaptation. That combination of analytical rigor and human-centered storytelling is the kind of work TechArena was built to produce.

Building on Momentum

These two wins mark TechArena’s latest international recognition. Earlier this year, CEO & Founder Allyson Klein was named 2026 Female Founder of the Year by the Global Business Tech Awards. In 2025, the company’s flagship podcast series, “In the Arena,” earned a Stevie Award in the Shows, Technology category at the International Business Awards, praised for its production quality, editorial clarity, and executive-level guest caliber.

The pattern is no accident. Since its founding, TechArena has assembled a team with more than 100 years of collective experience across the technology sector and has welcomed executives and innovators from companies representing more than $9 trillion in market capitalization, including Microsoft, NVIDIA, Google, and Meta. That depth of access and expertise shows up in content that consistently earns trust from both the industry it covers and the awards programs that evaluate creative and communications work on a global stage.

“We set out to build a media platform where deep technical knowledge meets editorial craft,” Klein said. “These awards validate that mission and the talented team behind it.”

About the Communicator Awards

The Communicator Awards, now in its 32nd year, recognizes excellence, effectiveness, and innovation across all areas of communication. The program is sanctioned and reviewed by the AIVA, an invitation-only body of more than 1,100 industry leaders from top brands and agencies. This year's competition drew thousands of entries from organizations across the United States and around the world.

For more information, visit communicatorawards.com.

About TechArena

TechArena brings together business advisory, world-class marketing with tech domain expertise, and trusted insights for companies shaping the AI era. The platform covers AI, data centers, semiconductors, cloud-native infrastructure, networking, edge computing, and sustainability.

Your Password is Being Cracked By Something That Never Sleeps

Something changed quietly in 2024. The people trying to break into your accounts stopped being people. They handed the job to machines specifically, to AI models trained on billions of leaked credentials, capable of generating contextually plausible password guesses faster than any human could conceive.

Today is World Password Day, and the tired advice about mixing uppercase letters and numbers is not just insufficient. It may be actively misleading you about the scale of the problem.

The numbers are unambiguous. 2.86 billion credentials were stolen in 2025 alone, and credential-based attacks now account for roughly 22% of all data breaches, making stolen logins the single most common initial attack vector in cybersecurity today. The average cost of a breach reached $4.88 million last year. But the cost you hear about least is the one borne by ordinary people whose bank accounts, email inboxes, and digital lives are quietly taken over.

AI Has Changed the Attack, Not Just the Speed

The brute-force attack of a bored hacker typing guesses one by one is a relic. Modern AI-assisted password attacks work by training models on enormous breach datasets and generating statistically likely variations of your real password. If you use Liverpool#1 on one site, the model predicts you might use Liverpool1! or Liv3rpool# on another. It does not guess randomly. It reasons.

Credential stuffing automated login attempts using leaked username and password pairs from old breaches has been AI-supercharged. Bots now attempt logins roughly every 39 seconds across thousands of services simultaneously, adapting in real time to rate-limiting defenses. A parallel and newer threat has emerged alongside it: prompt injection, where attackers embed malicious instructions inside documents, emails, or data that an AI assistant will read, causing it to act against the user's interests without any visible sign of compromise.

In June 2025, researchers disclosed EchoLeak (CVE-2025-32711), a zero-click vulnerability in Microsoft 365 Copilot that allowed a remote attacker to steal confidential files simply by sending a crafted email with no user interaction required. Wiz Research tracked a 340% year-over-year increase in documented prompt injection attempts against enterprise AI systems in Q4 2025 alone. The threat surface has expanded beyond your password to every AI system that holds your identity and data.

Phishing has also been transformed. Where once a phishing email was recognizable by its awkward grammar and implausible urgency, generative AI now produces personalized lures that pass for genuine correspondence from your employer, your bank, or your healthcare provider. MFA fatigue attacks where an attacker triggers repeated push notification prompts until an exhausted user simply approves one, rose 217% year-over-year according to the 2025 Verizon Data Breach Investigations Report. These are not future threats. They are operating at scale right now.

The Threat That Does Not Need Your Password at All

Perhaps the most unsettling development is the rise of attacks that bypass the password entirely. Voice cloning AI can now synthesize a convincing replica of a person's voice from as little as three to ten seconds of publicly available audio, a LinkedIn video, a podcast appearance, a conference recording. In April 2025, security journalist Joseph Cox demonstrated this by using a $20 AI voice tool to clone his own voice and successfully pass one of the Bank's voice authentication system, gaining full account access. That was a controlled test. Real attackers using the same tools against unwitting victims have achieved identical results.

Voice cloning fraud increased 400% in 2025. Deepfake video is now available as a service no technical expertise required. Criminals used AI voice cloning to steal $35 million from a bank in the UAE and $243,000 from a UK energy company whose finance director received a call that sounded exactly like his CEO. The implication is uncomfortable: protecting your password is necessary, but no longer sufficient. Your voice, your face, and your session cookies are now potential attack vectors too.

What to Actually Do - In Order of Impact

Use a password manager and stop inventing passwords. Roughly 94% of passwords used today are either weak or reused. The single highest-leverage action you can take is delegating password creation entirely to a password manager. Let it generate 20-character random strings. You no longer need to remember them only the master password matters.

Replace SMS two-factor with an authenticator app or hardware key. SMS codes are vulnerable to SIM-swap attacks, where a criminal convinces your mobile carrier to transfer your number to their device. Authenticator apps like Google Authenticator, Authy, Microsoft Authenticator are significantly more resistant. A hardware security key such as a YubiKey is the strongest option for your most critical accounts.

Enable passkeys wherever available. Passkeys are cryptographic credentials that replace passwords entirely. Because they are mathematically bound to the specific website that created them, they cannot be phished a fake login page cannot harvest a passkey. Eight of the world's ten most-visited websites now support passkeys, and over a billion have been created globally. Setting one up on your Google, Apple, or Microsoft account takes under two minutes and eliminates an entire class of attack.

Check haveibeenpwned.com today. This free service tells you which of your email addresses have appeared in known data breaches. If yours has, that password and any others you may have reused with it should be considered compromised and changed immediately.

Be skeptical of voice calls requesting account access. Given the demonstrated capability of voice cloning tools, any unexpected call claiming to be from your bank, employer, or a technology company should be treated with suspicion. Hang up and call the institution directly on a verified number. This is no longer paranoia it is standard practice.

The Bigger Picture

Passwords are not going away in 2026. Most services still require them, and the transition to passkeys is expected to continue well into 2027. But the frame has shifted. The question is no longer how to choose a better password; it is how to reduce your dependence on static secrets altogether.

AI has industrialized credential theft. The response must be equally systematic: structured use of a password manager, elimination of SMS-based verification, adoption of passkeys where available, and a new baseline skepticism about any communication that asks you to confirm who you are. The attackers' tools do not sleep, do not get distracted, and do not take days off.

Fortunately, neither do the defenses, if you put them in place.

World Password Day is observed annually on the first Thursday of May.